#!/usr/bin/env python3
# -*- coding: utf-8 -*-

"""

Created on Thu Jan 4 12:40:58 2018

@author: lisir

"""

from __future__ import print_function

from __future__ import absolute_import

from __future__ import division

import argparse

import sys

import input_data

import tensorflow as tf

mnist = input_data.read_data_sets("./MNIST_data", one_hot=True)

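# Network hyperparameters
learning_rate = 0.001
training_iters = 200000  # total number of training samples to process
batch_size = 128
display_step = 10  # log every 10 steps

# Network parameters
n_inputs = 28  # input size per time step (one 28-pixel image row)
n_steps = 28  # number of time steps (28 rows per image)
n_hidden_units = 128  # number of hidden units
n_classes = 10  # number of output classes (digits 0-9)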

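# Input placeholders
x = tf.placeholder(tf.float32, [None, n_steps, n_inputs])
y = tf.placeholder(tf.float32, [None, n_classes])
# Note: keep_prob is declared but never used by the graph below (there is no dropout op).
keep_prob = tf.placeholder(tf.float32)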

weights = {
    'in': tf.Variable(tf.random_normal([n_inputs, n_hidden_units])),
    'out': tf.Variable(tf.random_normal([n_hidden_units, n_classes])),
}

biases = {
    'in': tf.Variable(tf.constant(0.1, shape=[n_hidden_units, ])),
    'out': tf.Variable(tf.constant(0.1, shape=[n_classes, ])),
}

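def RNN(X, weights, biases):
    # Flatten (batch, n_steps, n_inputs) to (batch * n_steps, n_inputs)
    X = tf.reshape(X, [-1, n_inputs])
    # Project every time step into the hidden dimension
    X_in = tf.matmul(X, weights['in']) + biases['in']
    # Restore the time axis: (batch, n_steps, n_hidden_units)
    X_in = tf.reshape(X_in, [-1, n_steps, n_hidden_units])
    # Use a basic LSTM cell
    lstm_cell = tf.contrib.rnn.BasicLSTMCell(n_hidden_units, forget_bias=1.0, state_is_tuple=True)
    # Zero initial state; the LSTM state is a tuple (c_state, h_state).
    # It is built for exactly batch_size samples, so every batch fed to x must have that size.
    init_state = lstm_cell.zero_state(batch_size, dtype=tf.float32)
    # dynamic_rnn accepts X_in as (batch, steps, inputs) or, with time_major=True, (steps, batch, inputs)
    outputs, final_state = tf.nn.dynamic_rnn(lstm_cell, X_in, initial_state=init_state, time_major=False)
    # final_state[1] is h_state, the hidden output at the last time step
    results = tf.matmul(final_state[1], weights['out']) + biases['out']
    return results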

# Build the model

pred = RNN(x, weights, biases)

# Define the loss function and the training step

cost = tf.reduce_mean(tf.nn.softmax_cross_entropy_with_logits(logits=pred, labels=y))

optimizer = tf.train.AdamOptimizer(learning_rate).minimize(cost)

# Evaluate the model

correct_pred = tf.equal(tf.argmax(pred, 1), tf.argmax(y, 1))

accuracy = tf.reduce_mean(tf.cast(correct_pred, tf.float32))

# Initialize all variables

init = tf.global_variables_initializer()

# 开启一个训练

with tf.Session() as sess:

sess.run(init)

step = 0

# Keep training until reach max iterations

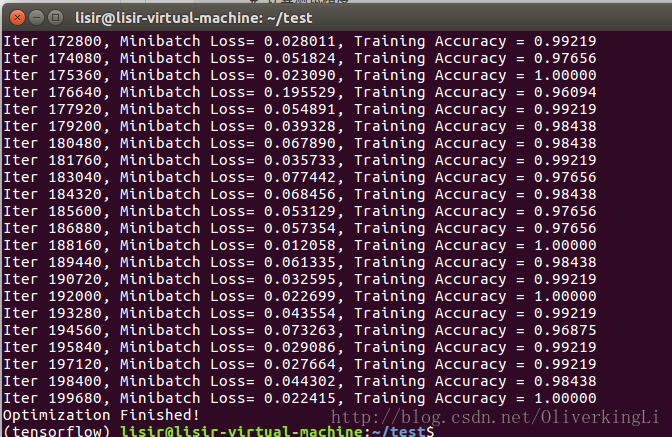

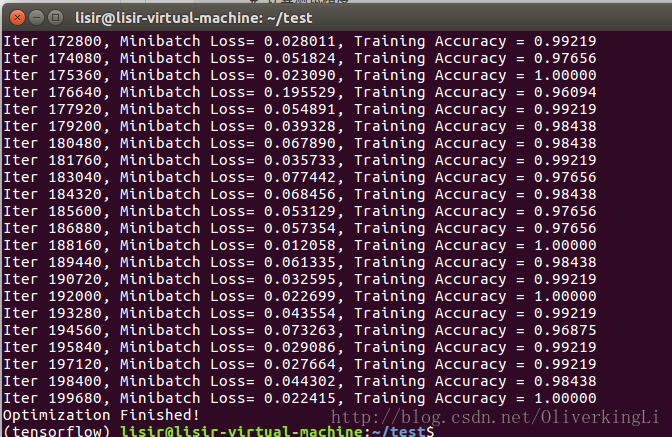

while step * batch_size < training_iters:

batch_xs, batch_ys = mnist.train.next_batch(batch_size)

batch_xs = batch_xs.reshape([batch_size, n_steps, n_inputs])

# 获取批数据

sess.run(optimizer, feed_dict={x: batch_xs, y: batch_ys})

if step % display_step == 0:

# 计算精度

acc = sess.run(accuracy, feed_dict={x: batch_xs, y: batch_ys, keep_prob: 1.})

# 计算损失值

loss = sess.run(cost, feed_dict={x: batch_xs, y: batch_ys, keep_prob: 1.})

print("Iter " + str(step*batch_size) + ", Minibatch Loss= " + "{:.6f}".format(loss) +

", Training Accuracy = " + "{:.5f}".format(acc))

step += 1

print("Optimization Finished!")

# 计算测试精度

print("Testing Accuracy:", sess.run(accuracy, feed_dict={x: mnist.test.images[:256],

y: mnist.test.labels[:256],

keep_prob: 0.5}))

print('**********************')

print("Testing Accuracy:", sess.run(accuracy, feed_dict={x: mnist.test.images[:256],

y: mnist.test.labels[:256],

keep_prob: 1.0}))
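
The script above treats each 28x28 image as a sequence of 28 rows and keeps training while `step * batch_size < training_iters`. The following is a minimal pure-Python sketch of those two ideas, with no TensorFlow required. The helper names `image_to_sequence`, `count_training_steps`, and `count_log_lines` are hypothetical and are not part of the original script; the constants are copied from it.

```python
import math

# Values copied from the script above.
N_STEPS = 28
N_INPUTS = 28
BATCH_SIZE = 128
TRAINING_ITERS = 200000
DISPLAY_STEP = 10


def image_to_sequence(flat_pixels, n_steps=N_STEPS, n_inputs=N_INPUTS):
    """Split a flat pixel list into n_steps rows of n_inputs values each.

    Hypothetical helper: mirrors what reshape([..., n_steps, n_inputs])
    does for a single image, so each row becomes one time step.
    """
    if len(flat_pixels) != n_steps * n_inputs:
        raise ValueError("expected %d pixels" % (n_steps * n_inputs))
    return [flat_pixels[t * n_inputs:(t + 1) * n_inputs] for t in range(n_steps)]


def count_training_steps(training_iters=TRAINING_ITERS, batch_size=BATCH_SIZE):
    """Number of passes through `while step * batch_size < training_iters`."""
    return math.ceil(training_iters / batch_size)


def count_log_lines(steps_run, display_step=DISPLAY_STEP):
    """Number of steps in range(steps_run) where step % display_step == 0."""
    return math.ceil(steps_run / display_step)


if __name__ == "__main__":
    sequence = image_to_sequence(list(range(N_STEPS * N_INPUTS)))
    print(len(sequence), len(sequence[0]))
    print(count_training_steps())
    print(count_log_lines(count_training_steps()))
```

With the values from the script, the loop runs 1563 times (the last step starts at sample 199936) and logs on 157 of those steps.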
