Creating an RDD from a collection

parallelize

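def parallelize[T](seq: Seq[T], numSlices: Int = defaultParallelism)(implicit arg0: ClassTag[T]): RDD[T]

Creates an RDD from a Seq collection.

- Parameter 1: the Seq collection; required.

- Parameter 2: the number of partitions; defaults to defaultParallelism, which is the number of CPU cores allocated to the application.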

scala> var rdd = sc.parallelize(1 to 10)

rdd: org.apache.spark.rdd.RDD[Int] = ParallelCollectionRDD[2] at parallelize at <console>:21

scala> rdd.collect

res3: Array[Int] = Array(1, 2, 3, 4, 5, 6, 7, 8, 9, 10)

scala> rdd.partitions.size

res4: Int = 15

// Create the RDD with 3 partitions

scala> var rdd2 = sc.parallelize(1 to 10,3)

rdd2: org.apache.spark.rdd.RDD[Int] = ParallelCollectionRDD[3] at parallelize at <console>:21

scala> rdd2.collect

res5: Array[Int] = Array(1, 2, 3, 4, 5, 6, 7, 8, 9, 10)

scala> rdd2.partitions.size

res6: Int = 3
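To see which elements end up in which partition, the session above can be reproduced as a small standalone program. This is a minimal sketch, assuming Spark is on the classpath and a `local[4]` master; the object name `ParallelizeSketch` and the helper `partitionContents` are hypothetical names introduced for illustration.

```scala
import org.apache.spark.{SparkConf, SparkContext}

object ParallelizeSketch {
  // Hypothetical helper: returns the elements held by each partition, in partition order.
  // glom() turns every partition into a single Array so the layout becomes visible.
  def partitionContents(sc: SparkContext, data: Seq[Int], numSlices: Int): Array[Array[Int]] =
    sc.parallelize(data, numSlices).glom().collect()

  def main(args: Array[String]): Unit = {
    // Assumption: a local master with 4 threads; on a cluster the master comes from spark-submit.
    val conf = new SparkConf().setAppName("parallelize-sketch").setMaster("local[4]")
    val sc = new SparkContext(conf)
    try {
      // Without numSlices, the partition count falls back to sc.defaultParallelism.
      val byDefault = sc.parallelize(1 to 10)
      println(s"default partitions: ${byDefault.partitions.length} " +
        s"(defaultParallelism = ${sc.defaultParallelism})")

      // With numSlices = 3, the ten elements are split across exactly three partitions.
      partitionContents(sc, 1 to 10, 3).zipWithIndex.foreach { case (elems, i) =>
        println(s"partition $i: ${elems.mkString(", ")}")
      }
    } finally {
      sc.stop()
    }
  }
}
```

On a cluster, the default partition count depends on the resources granted to the application, which is why the session above reports 15.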

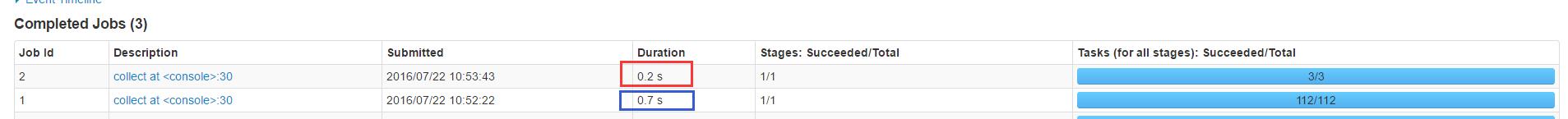

As the figure shows, when a small dataset is split across many cores, the total run time is actually longer than with fewer cores.
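A rough way to observe this overhead yourself is to time the same tiny job with different partition counts. The sketch below is only illustrative: it assumes a `local[4]` master, varies the number of partitions rather than the number of cores, and uses a hypothetical `timed` helper. Absolute timings depend heavily on the machine and the cluster, so no expected numbers are given.

```scala
import org.apache.spark.{SparkConf, SparkContext}

object PartitionOverheadSketch {
  // Hypothetical helper: evaluates `body` once and returns (result, elapsed milliseconds).
  def timed[A](body: => A): (A, Long) = {
    val start = System.nanoTime()
    val result = body
    (result, (System.nanoTime() - start) / 1000000L)
  }

  def main(args: Array[String]): Unit = {
    // Assumption: local master with 4 threads.
    val conf = new SparkConf().setAppName("partition-overhead-sketch").setMaster("local[4]")
    val sc = new SparkContext(conf)
    try {
      // Warm-up job so that one-time start-up costs do not distort the first measurement.
      sc.parallelize(1 to 10, 1).count()

      // Each partition becomes one task; for ten elements, scheduling cost dominates the work.
      for (n <- Seq(1, 4, 64)) {
        val (total, ms) = timed(sc.parallelize(1 to 10, n).count())
        println(s"partitions=$n count=$total elapsed=${ms}ms")
      }
    } finally {
      sc.stop()
    }
  }
}
```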

makeRDD

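def makeRDD[T](seq: Seq[T], numSlices: Int = defaultParallelism)(implicit arg0: ClassTag[T]): RDD[T]

This overload is used exactly like parallelize.

def makeRDD[T](seq: Seq[(T, Seq[String])])(implicit arg0: ClassTag[T]): RDD[T]

This overload lets you specify the preferredLocations of each partition: every (value, locations) pair in the Seq becomes one partition.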

scala> var collect = Seq((1 to 10,Seq("slaver1","slaver2")),(11 to 15, Seq("slaver3","slaver4")))

collect: Seq[(scala.collection.immutable.Range.Inclusive, Seq[String])] = List((Range(1, 2, 3, 4, 5, 6, 7, 8, 9, 10),List(slaver1, slaver2)), (Range(11, 12, 13, 14, 15),List(slaver3, slaver4)))

scala> var rdd = sc.makeRDD(collect)

rdd: org.apache.spark.rdd.RDD[scala.collection.immutable.Range.Inclusive] = ParallelCollectionRDD[12] at makeRDD at <console>:29

scala> rdd.partitions.size

res11: Int = 2

scala> rdd.preferredLocations(rdd.partitions(0))

res12: Seq[String] = List(slaver1, slaver2)

scala> rdd.preferredLocations(rdd.partitions(1))

res13: Seq[String] = List(slaver3, slaver4)

Creating an RDD from external storage

textFile

From an HDFS file

scala> var rdd = sc.textFile("hdfs:///qgzang/1.txt")

16/07/22 11:35:29 INFO storage.MemoryStore: Block broadcast_0 stored as values in memory (estimated size 86.5 KB, free 86.5 KB)

16/07/22 11:35:29 INFO storage.MemoryStore: Block broadcast_0_piece0 stored as bytes in memory (estimated size 19.6 KB, free 106.1 KB)

16/07/22 11:35:29 INFO storage.BlockManagerInfo: Added broadcast_0_piece0 in memory on 192.168.122.1:57099 (size: 19.6 KB, free: 511.5 MB)

16/07/22 11:35:29 INFO spark.SparkContext: Created broadcast 0 from textFile at <console>:27

rdd: org.apache.spark.rdd.RDD[String] = hdfs:///qgzang/1.txt MapPartitionsRDD[1] at textFile at <console>:27

scala> rdd.count()

res0: Long = 3

From a local file

scala> var rdd = sc.textFile("file:///home/bio/data3/data/qgzang/spark/qgzang/1.txt")

16/07/22 11:38:25 INFO storage.MemoryStore: Block broadcast_3 stored as values in memory (estimated size 229.6 KB, free 345.0 KB)

16/07/22 11:38:25 INFO storage.MemoryStore: Block broadcast_3_piece0 stored as bytes in memory (estimated size 19.6 KB, free 364.6 KB)

16/07/22 11:38:25 INFO storage.BlockManagerInfo: Added broadcast_3_piece0 in memory on 192.168.122.1:57099 (size: 19.6 KB, free: 511.5 MB)

16/07/22 11:38:25 INFO spark.SparkContext: Created broadcast 3 from textFile at <console>:27

rdd: org.apache.spark.rdd.RDD[String] = file:///home/bio/data3/data/qgzang/spark/qgzang/1.txt MapPartitionsRDD[3] at textFile at <console>:27

scala> rdd.count

16/07/22 11:38:31 INFO mapred.FileInputFormat: Total input paths to process : 1

16/07/22 11:38:31 INFO spark.SparkContext: Starting job: count at <console>:30

16/07/22 11:38:31 INFO scheduler.DAGScheduler: Got job 2 (count at <console>:30) with 2 output partitions

16/07/22 11:38:31 INFO scheduler.DAGScheduler: Final stage: ResultStage 2 (count at <console>:30)

16/07/22 11:38:31 INFO scheduler.DAGScheduler: Parents of final stage: List()

16/07/22 11:38:31 INFO scheduler.DAGScheduler: Missing parents: List()

16/07/22 11:38:31 INFO scheduler.DAGScheduler: Submitting ResultStage 2 (file:///home/bio/data3/data/qgzang/spark/qgzang/1.txt MapPartitionsRDD[3] at textFile at <console>:27), which has no missing parents

16/07/22 11:38:31 INFO storage.MemoryStore: Block broadcast_4 stored as values in memory (estimated size 3.0 KB, free 367.6 KB)

16/07/22 11:38:32 INFO storage.MemoryStore: Block broadcast_4_piece0 stored as bytes in memory (estimated size 1800.0 B, free 369.3 KB)

16/07/22 11:38:32 INFO storage.BlockManagerInfo: Added broadcast_4_piece0 in memory on 192.168.122.1:57099 (size: 1800.0 B, free: 511.5 MB)

16/07/22 11:38:32 INFO spark.SparkContext: Created broadcast 4 from broadcast at DAGScheduler.scala:1006

16/07/22 11:38:32 INFO scheduler.DAGScheduler: Submitting 2 missing tasks from ResultStage 2 (file:///home/bio/data3/data/qgzang/spark/qgzang/1.txt MapPartitionsRDD[3] at textFile at <console>:27)

16/07/22 11:38:32 INFO scheduler.TaskSchedulerImpl: Adding task set 2.0 with 2 tasks

16/07/22 11:38:32 INFO scheduler.TaskSetManager: Starting task 0.0 in stage 2.0 (TID 4, slaver4, partition 0,PROCESS_LOCAL, 2153 bytes)

16/07/22 11:38:32 INFO scheduler.TaskSetManager: Starting task 1.0 in stage 2.0 (TID 5, master, partition 1,PROCESS_LOCAL, 2153 bytes)

16/07/22 11:38:32 INFO storage.BlockManagerInfo: Added broadcast_4_piece0 in memory on master:58865 (size: 1800.0 B, free: 511.5 MB)

16/07/22 11:38:32 INFO storage.BlockManagerInfo: Added broadcast_3_piece0 in memory on master:58865 (size: 19.6 KB, free: 511.5 MB)

16/07/22 11:38:32 INFO scheduler.TaskSetManager: Finished task 1.0 in stage 2.0 (TID 5) in 213 ms on master (1/2)

16/07/22 11:38:32 INFO storage.BlockManagerInfo: Added broadcast_4_piece0 in memory on slaver4:47052 (size: 1800.0 B, free: 511.5 MB)

16/07/22 11:38:32 INFO storage.BlockManagerInfo: Added broadcast_3_piece0 in memory on slaver4:47052 (size: 19.6 KB, free: 511.5 MB)

16/07/22 11:38:33 INFO scheduler.TaskSetManager: Finished task 0.0 in stage 2.0 (TID 4) in 1074 ms on slaver4 (2/2)

16/07/22 11:38:33 INFO scheduler.DAGScheduler: ResultStage 2 (count at <console>:30) finished in 1.075 s

16/07/22 11:38:33 INFO scheduler.TaskSchedulerImpl: Removed TaskSet 2.0, whose tasks have all completed, from pool

16/07/22 11:38:33 INFO scheduler.DAGScheduler: Job 2 finished: count at <console>:30, took 1.093315 s

res2: Long = 3

Note that the local file path must exist on both the Driver and the Executor nodes.
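The same read can be tried outside the shell. This is a minimal sketch, assuming a `local[2]` master, where the Driver and the Executors share one machine and therefore all see the file; the object name `TextFileSketch`, the helper `countLines`, and the temporary file are illustrative. `textFile` also accepts an optional second argument, `minPartitions`.

```scala
import java.nio.charset.StandardCharsets
import java.nio.file.Files
import org.apache.spark.{SparkConf, SparkContext}

object TextFileSketch {
  // Hypothetical helper: counts the lines of a text file addressed by a URI
  // such as file:///... or hdfs:///...
  def countLines(sc: SparkContext, uri: String, minPartitions: Int): Long =
    sc.textFile(uri, minPartitions).count()

  def main(args: Array[String]): Unit = {
    // Write a three-line temporary file to stand in for 1.txt.
    val tmp = Files.createTempFile("textfile-sketch", ".txt")
    Files.write(tmp, "line one\nline two\nline three\n".getBytes(StandardCharsets.UTF_8))

    // Assumption: local mode, so the file is visible to the driver and to every task.
    val conf = new SparkConf().setAppName("textfile-sketch").setMaster("local[2]")
    val sc = new SparkContext(conf)
    try {
      // tmp.toUri yields a file:/// URI, matching the form used in the session above.
      println(countLines(sc, tmp.toUri.toString, 2))
    } finally {
      sc.stop()
      Files.deleteIfExists(tmp)
    }
  }
}
```

On a real cluster the file would have to be copied to the same path on every node, or placed on shared storage such as HDFS.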

- From other HDFS file formats
  - hadoopFile
  - sequenceFile
  - objectFile
  - newAPIHadoopFile

- From the Hadoop API
  - hadoopRDD
  - newAPIHadoopRDD
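A few of these methods can be exercised with simple write-then-read round trips. The sketch below is one possible illustration, assuming a `local[2]` master and a temporary local directory instead of HDFS; the object name and the three helper functions are hypothetical. It covers `objectFile`, `sequenceFile`, and `newAPIHadoopFile` only.

```scala
import java.nio.file.Files
import org.apache.hadoop.io.{LongWritable, Text}
import org.apache.hadoop.mapreduce.lib.input.TextInputFormat
import org.apache.spark.{SparkConf, SparkContext}

object OtherFormatsSketch {
  // objectFile reads back what saveAsObjectFile wrote (Java-serialized objects).
  def objectRoundTrip(sc: SparkContext, path: String): Seq[Int] = {
    sc.parallelize(1 to 10, 2).saveAsObjectFile(path)
    sc.objectFile[Int](path).collect().sorted.toSeq
  }

  // sequenceFile reads key/value pairs stored as a Hadoop SequenceFile.
  def sequenceRoundTrip(sc: SparkContext, path: String): Map[Int, String] = {
    sc.parallelize(Seq(1 -> "a", 2 -> "b"), 1).saveAsSequenceFile(path)
    sc.sequenceFile[Int, String](path).collect().toMap
  }

  // newAPIHadoopFile reads through an InputFormat from the org.apache.hadoop.mapreduce API.
  // Text objects are converted to String immediately, before anything is collected.
  def textViaNewApi(sc: SparkContext, path: String): Seq[String] = {
    sc.parallelize(Seq("x", "y", "z"), 1).saveAsTextFile(path)
    sc.newAPIHadoopFile[LongWritable, Text, TextInputFormat](path)
      .map { case (_, line) => line.toString }
      .collect()
      .toSeq
  }

  def main(args: Array[String]): Unit = {
    // Assumption: a local temp directory; output paths must not exist before saving.
    // The directory is left in place after the run.
    val dir = Files.createTempDirectory("other-formats-sketch")
    val conf = new SparkConf().setAppName("other-formats-sketch").setMaster("local[2]")
    val sc = new SparkContext(conf)
    try {
      println(objectRoundTrip(sc, dir.resolve("objects").toUri.toString))
      println(sequenceRoundTrip(sc, dir.resolve("pairs").toUri.toString))
      println(textViaNewApi(sc, dir.resolve("lines").toUri.toString))
    } finally {
      sc.stop()
    }
  }
}
```

`hadoopFile` is the counterpart of `newAPIHadoopFile` for the older `org.apache.hadoop.mapred` API, while `hadoopRDD` and `newAPIHadoopRDD` take a Hadoop configuration object instead of a path.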
