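The starting point of the Hadoop file API is the FileSystem class, an abstract class for interacting with a file system. Different concrete subclasses handle HDFS and the local file system.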

Getting a FileSystem object for the HDFS interface:

    Configuration conf = new Configuration();
    FileSystem hdfs = FileSystem.get(conf);
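To make the abstract-class idea concrete, here is a toy sketch of how a factory method can pick a concrete subclass from a URI scheme. It loosely mirrors the way FileSystem.get(conf) resolves the default file system URI (the fs.defaultFS property), but every class and method name below (ToyFileSystem, ToyDistributedFileSystem, ToyLocalFileSystem, describe) is hypothetical and is not part of Hadoop.

```java
import java.net.URI;
import java.util.Locale;

// Toy stand-in for the abstract-class pattern; these are NOT Hadoop classes.
abstract class ToyFileSystem {
    abstract String describe();

    // Choose a concrete subclass from the URI scheme.
    // A URI without a scheme is treated as local (an assumption of this sketch).
    static ToyFileSystem get(URI defaultFs) {
        String scheme = defaultFs.getScheme() == null
                ? "file"
                : defaultFs.getScheme().toLowerCase(Locale.ROOT);
        switch (scheme) {
            case "hdfs":
                return new ToyDistributedFileSystem(defaultFs);
            case "file":
                return new ToyLocalFileSystem();
            default:
                throw new IllegalArgumentException("No file system for scheme: " + scheme);
        }
    }
}

class ToyDistributedFileSystem extends ToyFileSystem {
    private final URI nameNode;

    ToyDistributedFileSystem(URI nameNode) {
        this.nameNode = nameNode;
    }

    @Override
    String describe() {
        return "distributed file system at " + nameNode.getAuthority();
    }
}

class ToyLocalFileSystem extends ToyFileSystem {
    @Override
    String describe() {
        return "local file system";
    }
}
```

Calling ToyFileSystem.get(URI.create("hdfs://node01:31000")) returns the distributed variant, while a file:/// URI returns the local one. Client code only ever sees the abstract type, which is the point of the design.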

Operating on HDFS directly from the command line:

    hadoop fs -copyFromLocal /home/lichen.txt hdfs://node01:31000/lichen

This copies the file from the local file system to HDFS.

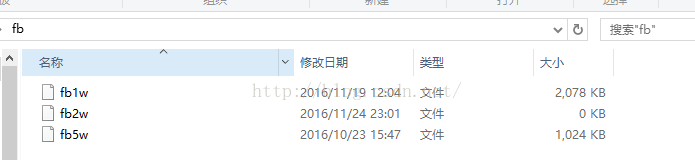

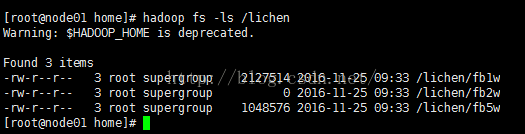

1. Merging local files into HDFS

    package com.HDFSMerge.test;

    import java.io.IOException;

    import org.apache.hadoop.conf.Configuration;
    import org.apache.hadoop.fs.FSDataInputStream;
    import org.apache.hadoop.fs.FSDataOutputStream;
    import org.apache.hadoop.fs.FileStatus;
    import org.apache.hadoop.fs.FileSystem;
    import org.apache.hadoop.fs.Path;

    public class PutMerge {

        public static void main(String[] args) throws IOException {
            Configuration conf = new Configuration();
            FileSystem hdfs = FileSystem.get(conf);        // default file system from the configuration
            FileSystem local = FileSystem.getLocal(conf);  // local file system

            Path inputDir = new Path(args[0]);  // local directory holding the small files
            Path hdfsFile = new Path(args[1]);  // merged output file on HDFS

            FileStatus[] inputFiles = local.listStatus(inputDir);

            // try-with-resources closes the output stream, which flushes the merged data to HDFS
            try (FSDataOutputStream out = hdfs.create(hdfsFile)) {
                for (FileStatus status : inputFiles) {
                    if (status.isDirectory()) {
                        continue;  // skip subdirectories; only files can be opened
                    }
                    System.out.println(status.getPath().getName());
                    try (FSDataInputStream in = local.open(status.getPath())) {
                        byte[] buffer = new byte[256];
                        int bytesRead;
                        // append the contents of this file to the output stream
                        while ((bytesRead = in.read(buffer)) > 0) {
                            out.write(buffer, 0, bytesRead);
                        }
                    }
                }
            }
        }
    }

This merges the three small files into one large file, lichen1.

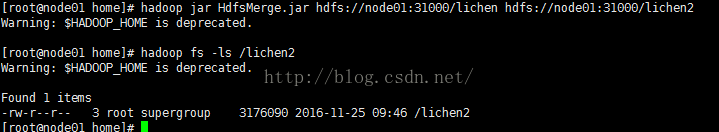
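The concatenation logic can be tried without a cluster. The following is a minimal sketch of the same merge step using only java.nio in place of the Hadoop FileSystem API. The class name LocalMergeSketch and its merge method are hypothetical, and sorting the inputs by name is a choice made for this sketch, not something PutMerge does. It assumes the target file lies outside the input directory.

```java
import java.io.IOException;
import java.io.OutputStream;
import java.nio.file.DirectoryStream;
import java.nio.file.Files;
import java.nio.file.Path;
import java.util.ArrayList;
import java.util.Collections;
import java.util.List;

// Hypothetical helper: a plain-Java analogue of the PutMerge loop.
class LocalMergeSketch {

    // Concatenates every regular file in inputDir into target, in path order.
    // Returns the number of bytes written.
    static long merge(Path inputDir, Path target) throws IOException {
        List<Path> inputs = new ArrayList<>();
        try (DirectoryStream<Path> entries = Files.newDirectoryStream(inputDir)) {
            for (Path entry : entries) {
                if (Files.isRegularFile(entry)) {
                    inputs.add(entry);  // subdirectories are skipped
                }
            }
        }
        Collections.sort(inputs);  // directory iteration order is unspecified

        long total = 0;
        try (OutputStream out = Files.newOutputStream(target)) {
            for (Path input : inputs) {
                total += Files.copy(input, out);  // stream the whole file into out
            }
        }  // closing the stream flushes the merged data
        return total;
    }
}
```

Swapping the java.nio calls for local.listStatus, local.open, and hdfs.create gives back the structure of PutMerge. The important detail in both versions is that the output stream is closed once all inputs have been written.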

2. Merging files in HDFS

    package com.HDFSMerge.test;

    import java.io.IOException;

    import org.apache.hadoop.conf.Configuration;
    import org.apache.hadoop.fs.FileUtil;
    import org.apache.hadoop.fs.Path;

    public class HdfsMerge {

        public static void main(String[] args) {
            Path src = new Path(args[0]);  // source path on HDFS
            Path dst = new Path(args[1]);  // merged output file
            Configuration conf = new Configuration();

            try {
                // FileUtil.copyMerge is available in Hadoop 2.x; it was removed in Hadoop 3.0.
                // deleteSource = false keeps the source; addString = null appends nothing after each file.
                FileUtil.copyMerge(src.getFileSystem(conf), src,
                        dst.getFileSystem(conf), dst, false, conf, null);
            } catch (IOException e) {
                e.printStackTrace();
            }
        }
    }

Note: lichen1 and lichen2 are both files, not directories.
