System environment

CentOS 7.9, IP address: 10.10.10.99

Workspace directory: /home/demo. All operations are performed in this directory.

1. Deploy Flink

1.1. Download flink-1.15.1-bin-scala_2.12 and extract it.

wget https://dlcdn.apache.org/flink/flink-1.15.1/flink-1.15.1-bin-scala_2.12.tgz
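The step above also calls for extracting the archive, which the wget line alone does not do. A minimal sketch, assuming the tarball sits in the current directory and unpacks to a top-level flink-1.15.1 directory (the path used later in this guide). extract_tgz is a hypothetical helper name, not a Flink tool:

```shell
# extract_tgz ARCHIVE DEST: unpack a .tgz archive into DEST, creating DEST if needed.
# (Hypothetical helper for this guide; plain "tar -xzf" does the same job.)
extract_tgz() {
  mkdir -p "$2" && tar -xzf "$1" -C "$2"
}

# Usage on the host from this guide, after the wget above has finished:
# extract_tgz flink-1.15.1-bin-scala_2.12.tgz /home/demo
```

The same helper can be reused for the Kafka tarball in section 2.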

1.2. Configure flink-conf.yaml (under /home/demo/flink-1.15.1/conf)

jobmanager.rpc.address: 10.10.10.99

jobmanager.rpc.port: 6123

jobmanager.bind-host: 0.0.0.0

jobmanager.memory.process.size: 1600m

taskmanager.bind-host: 0.0.0.0

taskmanager.host: 10.10.10.99

taskmanager.memory.process.size: 1728m

taskmanager.numberOfTaskSlots: 1

parallelism.default: 2

# The default file system scheme and authority.

#

# By default file paths without scheme are interpreted relative to the local

# root file system 'file:///'. Use this to override the default and interpret

# relative paths relative to a different file system,

# for example 'hdfs://mynamenode:12345'

#

# fs.default-scheme

#==============================================================================

# High Availability

#==============================================================================

# The high-availability mode. Possible options are 'NONE' or 'zookeeper'.

#

# high-availability: zookeeper

# The path where metadata for master recovery is persisted. While ZooKeeper stores

# the small ground truth for checkpoint and leader election, this location stores

# the larger objects, like persisted dataflow graphs.

#

# Must be a durable file system that is accessible from all nodes

# (like HDFS, S3, Ceph, nfs, ...)

#

# high-availability.storageDir: hdfs:///flink/ha/

# The list of ZooKeeper quorum peers that coordinate the high-availability

# setup. This must be a list of the form:

# "host1:clientPort,host2:clientPort,..." (default clientPort: 2181)

#

# high-availability.zookeeper.quorum: localhost:2181

# ACL options are based on https://zookeeper.apache.org/doc/r3.1.2/zookeeperProgrammers.html#sc_BuiltinACLSchemes

# It can be either "creator" (ZOO_CREATE_ALL_ACL) or "open" (ZOO_OPEN_ACL_UNSAFE)

# The default value is "open" and it can be changed to "creator" if ZK security is enabled

#

# high-availability.zookeeper.client.acl: open

#==============================================================================

# Fault tolerance and checkpointing

#==============================================================================

# The backend that will be used to store operator state checkpoints if

# checkpointing is enabled. Checkpointing is enabled when execution.checkpointing.interval > 0.

#

# Execution checkpointing related parameters. Please refer to CheckpointConfig and ExecutionCheckpointingOptions for more details.

#

# execution.checkpointing.interval: 3min

# execution.checkpointing.externalized-checkpoint-retention: [DELETE_ON_CANCELLATION, RETAIN_ON_CANCELLATION]

# execution.checkpointing.max-concurrent-checkpoints: 1

# execution.checkpointing.min-pause: 0

# execution.checkpointing.mode: [EXACTLY_ONCE, AT_LEAST_ONCE]

# execution.checkpointing.timeout: 10min

# execution.checkpointing.tolerable-failed-checkpoints: 0

# execution.checkpointing.unaligned: false

#

# Supported backends are 'hashmap', 'rocksdb', or the

# <class-name-of-factory>.

#

# state.backend: hashmap

# Directory for checkpoints filesystem, when using any of the default bundled

# state backends.

#

# state.checkpoints.dir: hdfs://namenode-host:port/flink-checkpoints

# Default target directory for savepoints, optional.

#

# state.savepoints.dir: hdfs://namenode-host:port/flink-savepoints

# Flag to enable/disable incremental checkpoints for backends that

# support incremental checkpoints (like the RocksDB state backend).

#

# state.backend.incremental: false

# The failover strategy, i.e., how the job computation recovers from task failures.

# Only restart tasks that may have been affected by the task failure, which typically includes

# downstream tasks and potentially upstream tasks if their produced data is no longer available for consumption.

jobmanager.execution.failover-strategy: region

#==============================================================================

# Rest & web frontend

#==============================================================================

# The port to which the REST client connects to. If rest.bind-port has

# not been specified, then the server will bind to this port as well.

#

rest.port: 8081

# The address to which the REST client will connect to

#

rest.address: 10.10.10.99

# Port range for the REST and web server to bind to.

#

#rest.bind-port: 8080-8090

# The address that the REST & web server binds to

# By default, this is localhost, which prevents the REST & web server from

# being able to communicate outside of the machine/container it is running on.

#

# To enable this, set the bind address to one that has access to outside-facing

# network interface, such as 0.0.0.0.

#

rest.bind-address: 0.0.0.0

# Flag to specify whether job submission is enabled from the web-based

# runtime monitor. Uncomment to disable.

web.submit.enable: true

# Flag to specify whether job cancellation is enabled from the web-based

# runtime monitor. Uncomment to disable.

web.cancel.enable: true

#==============================================================================

# Advanced

#==============================================================================

# Override the directories for temporary files. If not specified, the

# system-specific Java temporary directory (java.io.tmpdir property) is taken.

#

# For framework setups on Yarn, Flink will automatically pick up the

# containers' temp directories without any need for configuration.

#

# Add a delimited list for multiple directories, using the system directory

# delimiter (colon ':' on unix) or a comma, e.g.:

# /data1/tmp:/data2/tmp:/data3/tmp

#

# Note: Each directory entry is read from and written to by a different I/O

# thread. You can include the same directory multiple times in order to create

# multiple I/O threads against that directory. This is for example relevant for

# high-throughput RAIDs.

#

io.tmp.dirs: /home/demo/flink-1.15.1/tmp

# The classloading resolve order. Possible values are 'child-first' (Flink's default)

# and 'parent-first' (Java's default).

#

# Child first classloading allows users to use different dependency/library

# versions in their application than those in the classpath. Switching back

# to 'parent-first' may help with debugging dependency issues.

#

# classloader.resolve-order: child-first

# The amount of memory going to the network stack. These numbers usually need

# no tuning. Adjusting them may be necessary in case of an "Insufficient number

# of network buffers" error. The default min is 64MB, the default max is 1GB.

#

taskmanager.memory.network.fraction: 0.1

taskmanager.memory.network.min: 64mb

taskmanager.memory.network.max: 1gb

#==============================================================================

# Flink Cluster Security Configuration

#==============================================================================

# Kerberos authentication for various components - Hadoop, ZooKeeper, and connectors -

# may be enabled in four steps:

# 1. configure the local krb5.conf file

# 2. provide Kerberos credentials (either a keytab or a ticket cache w/ kinit)

# 3. make the credentials available to various JAAS login contexts

# 4. configure the connector to use JAAS/SASL

# The below configure how Kerberos credentials are provided. A keytab will be used instead of

# a ticket cache if the keytab path and principal are set.

# security.kerberos.login.use-ticket-cache: true

# security.kerberos.login.keytab: /path/to/kerberos/keytab

# security.kerberos.login.principal: flink-user

# The configuration below defines which JAAS login contexts

# security.kerberos.login.contexts: Client,KafkaClient

#==============================================================================

# ZK Security Configuration

#==============================================================================

# Below configurations are applicable if ZK ensemble is configured for security

# Override below configuration to provide custom ZK service name if configured

# zookeeper.sasl.service-name: zookeeper

# The configuration below must match one of the values set in "security.kerberos.login.contexts"

# zookeeper.sasl.login-context-name: Client

#==============================================================================

# HistoryServer

#==============================================================================

# The HistoryServer is started and stopped via bin/historyserver.sh (start|stop)

# Directory to upload completed jobs to. Add this directory to the list of

# monitored directories of the HistoryServer as well (see below).

jobmanager.archive.fs.dir: /home/demo/flink-1.15.1/completed-jobs

# The address under which the web-based HistoryServer listens.

historyserver.web.address: 0.0.0.0

# The port under which the web-based HistoryServer listens.

historyserver.web.port: 8082

# Comma separated list of directories to monitor for completed jobs.

historyserver.archive.fs.dir: /home/demo/flink-1.15.1/completed-jobs

# Interval in milliseconds for refreshing the monitored directories.

historyserver.archive.fs.refresh-interval: 10000
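The configuration above sets taskmanager.numberOfTaskSlots to 1 and parallelism.default to 2. Assuming the cluster runs a single TaskManager (an assumption, not stated in this guide), a job that relies on the default parallelism would ask for more slots than exist. The sketch below reads both values from the file and flags that case. conf_value and check_slots are hypothetical helper names, and the parsing only handles the flat "key: value" lines used here:

```shell
# conf_value FILE KEY: print the value of the first uncommented "KEY: value" line.
conf_value() {
  awk -v key="$2" 'index($0, key ":") == 1 { sub(/^[^:]*:[ \t]*/, ""); print; exit }' "$1"
}

# check_slots FILE: compare the slot count of one TaskManager with parallelism.default.
# Both settings fall back to 1 when absent, which matches Flink's defaults.
check_slots() {
  local slots
  local parallelism
  slots=$(conf_value "$1" taskmanager.numberOfTaskSlots)
  parallelism=$(conf_value "$1" parallelism.default)
  if [ "${slots:-1}" -lt "${parallelism:-1}" ]; then
    echo "WARN: parallelism.default=${parallelism:-1} exceeds ${slots:-1} slot(s) on a single TaskManager"
    return 1
  fi
  echo "OK: ${slots:-1} slot(s) cover parallelism.default=${parallelism:-1}"
}

# Usage on the host from this guide:
# check_slots /home/demo/flink-1.15.1/conf/flink-conf.yaml
```

If the check warns, either raise taskmanager.numberOfTaskSlots, lower parallelism.default, or set the parallelism explicitly in the job.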

1.3. Start the Flink cluster

/home/demo/flink-1.15.1/bin/start-cluster.sh

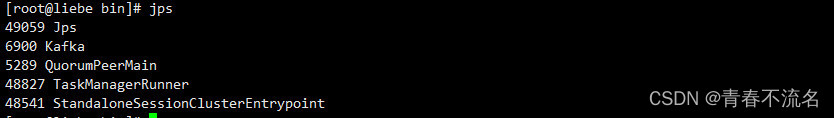

1.4. Run jps to confirm the Flink processes are running

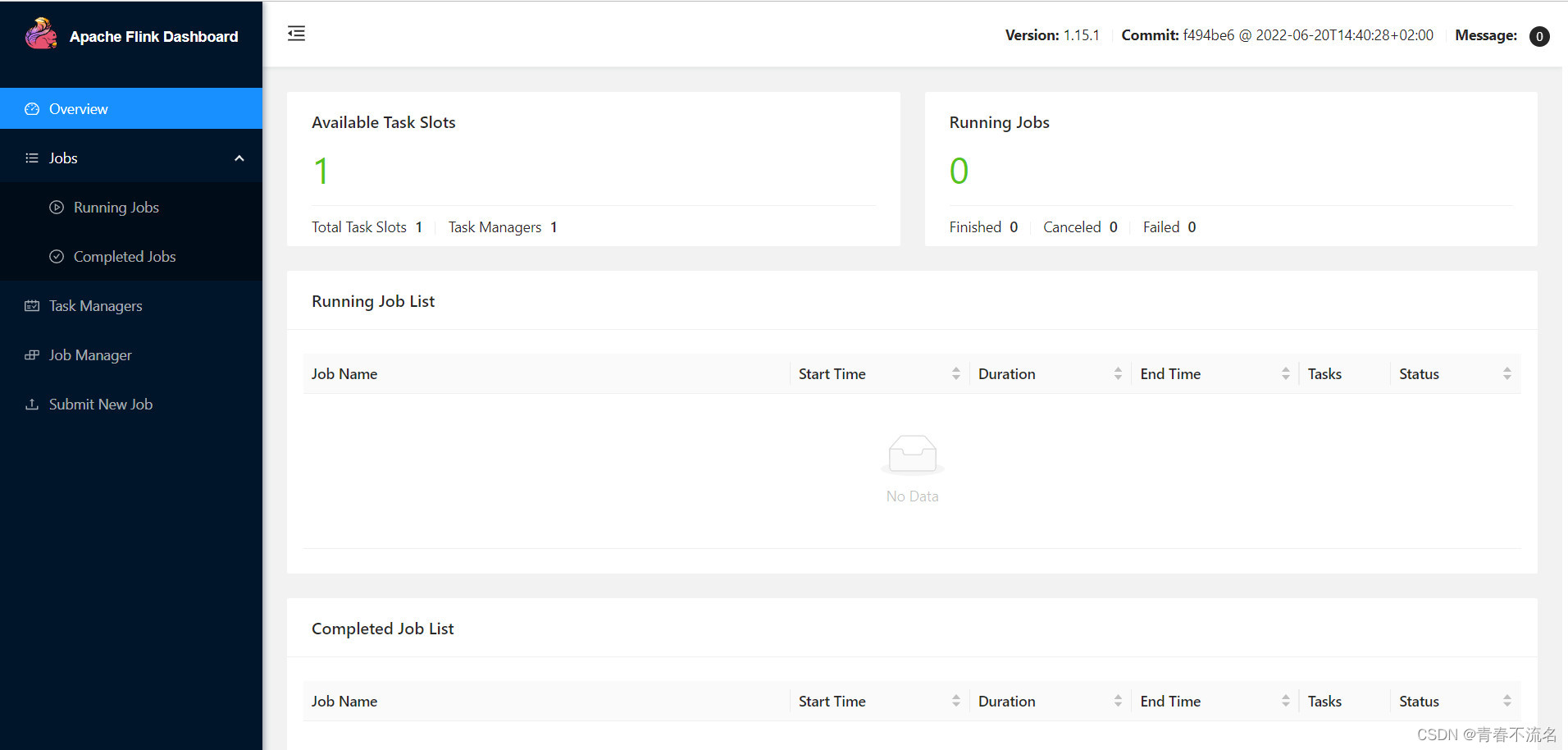

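1.5. Open the web UI at http://10.10.10.99:8081 (rest.address and rest.port from the configuration)

2. Deploy Kafka

2.1. Download kafka_2.12-3.2.0 and extract it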

wget https://dlcdn.apache.org/kafka/3.2.0/kafka_2.12-3.2.0.tgz

2.2. Configure server.properties

vim /home/demo/kafka_2.12-3.2.0/config/server.properties

Contents:

broker.id=0

listeners=PLAINTEXT://0.0.0.0:9092

advertised.listeners=PLAINTEXT://10.10.10.99:9092

num.network.threads=3

num.io.threads=8

socket.send.buffer.bytes=102400

socket.receive.buffer.bytes=102400

socket.request.max.bytes=104857600

log.dirs=/home/demo/kafka_2.12-3.2.0/kafka-logs

num.partitions=1

num.recovery.threads.per.data.dir=1

offsets.topic.replication.factor=1

transaction.state.log.replication.factor=1

transaction.state.log.min.isr=1

log.flush.interval.messages=10000

log.flush.interval.ms=1000

log.retention.hours=24

log.retention.bytes=1073741824

log.segment.bytes=1073741824

log.retention.check.interval.ms=300000

zookeeper.connect=localhost:2181

zookeeper.connection.timeout.ms=18000

group.initial.rebalance.delay.ms=0

2.3. Configure zookeeper.properties

vim /home/demo/kafka_2.12-3.2.0/config/zookeeper.properties

Contents:

dataDir=/home/demo/kafka_2.12-3.2.0/logs/zookeeper

clientPort=2181

maxClientCnxns=0

admin.enableServer=true

admin.serverPort=18080

2.4. Start Kafka

First start the ZooKeeper instance bundled with Kafka:

/home/demo/kafka_2.12-3.2.0/bin/zookeeper-server-start.sh -daemon /home/demo/kafka_2.12-3.2.0/config/zookeeper.properties

Then start the Kafka server:

/home/demo/kafka_2.12-3.2.0/bin/kafka-server-start.sh -daemon /home/demo/kafka_2.12-3.2.0/config/server.properties

2.5. Verify the listening ports

netstat -ntulp | grep 2181 # ZooKeeper

netstat -ntulp | grep 9092 # Kafka

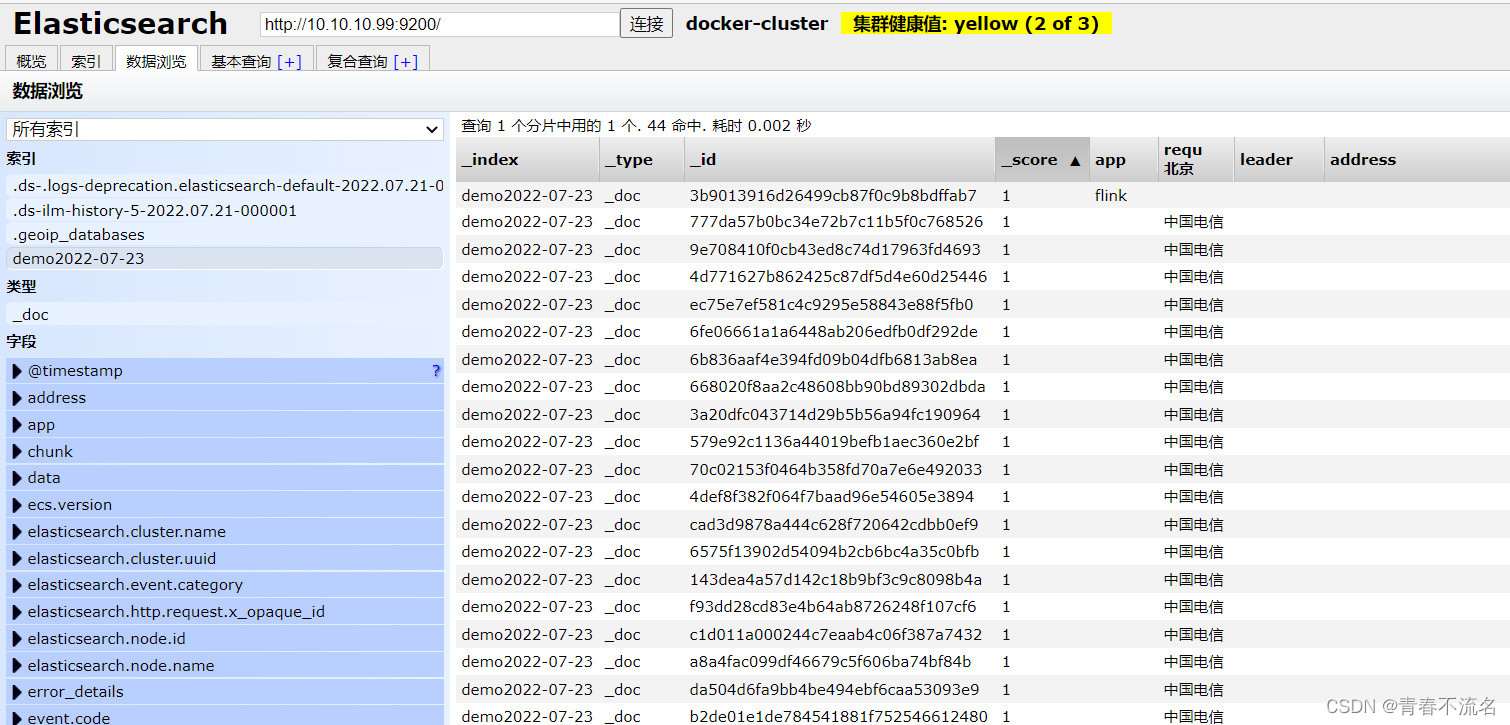
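A plain grep on the port number also matches longer port numbers and process IDs that happen to contain the same digits. The sketch below is a stricter variant that only accepts a LISTEN line whose address ends in the exact port. is_listening is a hypothetical helper name, and the ports are the clientPort from zookeeper.properties and the listener from server.properties:

```shell
# is_listening PORT: read "netstat -ntl" (or "ss -ntl") output on stdin and
# succeed only if a LISTEN line has an address field ending in ":PORT".
is_listening() {
  awk -v port="$1" '
    /LISTEN/ {
      for (i = 1; i <= NF; i++)
        if ($i ~ (":" port "$")) found = 1
    }
    END { exit !found }'
}

# Usage on the host from this guide:
# netstat -ntl | is_listening 2181 && echo "ZooKeeper is listening"
# netstat -ntl | is_listening 9092 && echo "Kafka is listening"
```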

3. Deploy Elasticsearch

3.1. Deploy ES with Docker using the following commands:

docker network create es

docker run -d --restart=unless-stopped --privileged --name elasticsearch --net es -v /home/demo/elasticsearch/data:/usr/share/elasticsearch/data -v /home/demo/elasticsearch/logs:/usr/share/elasticsearch/logs -p 9200:9200 -p 9300:9300 -e "discovery.type=single-node" elasticsearch:7.17.5

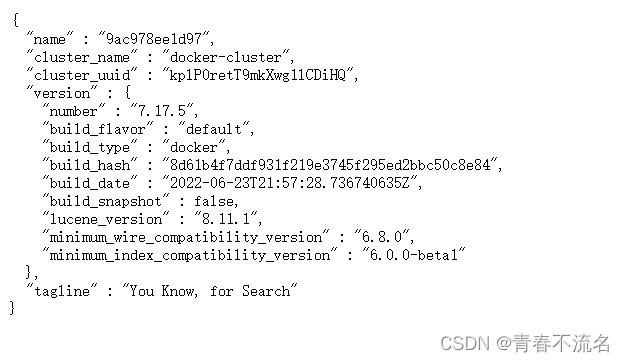

3.2. Open the HTTP endpoint on port 9200 (http://10.10.10.99:9200)

4. Write the program

https://download.csdn.net/download/TT1024167802/86248701

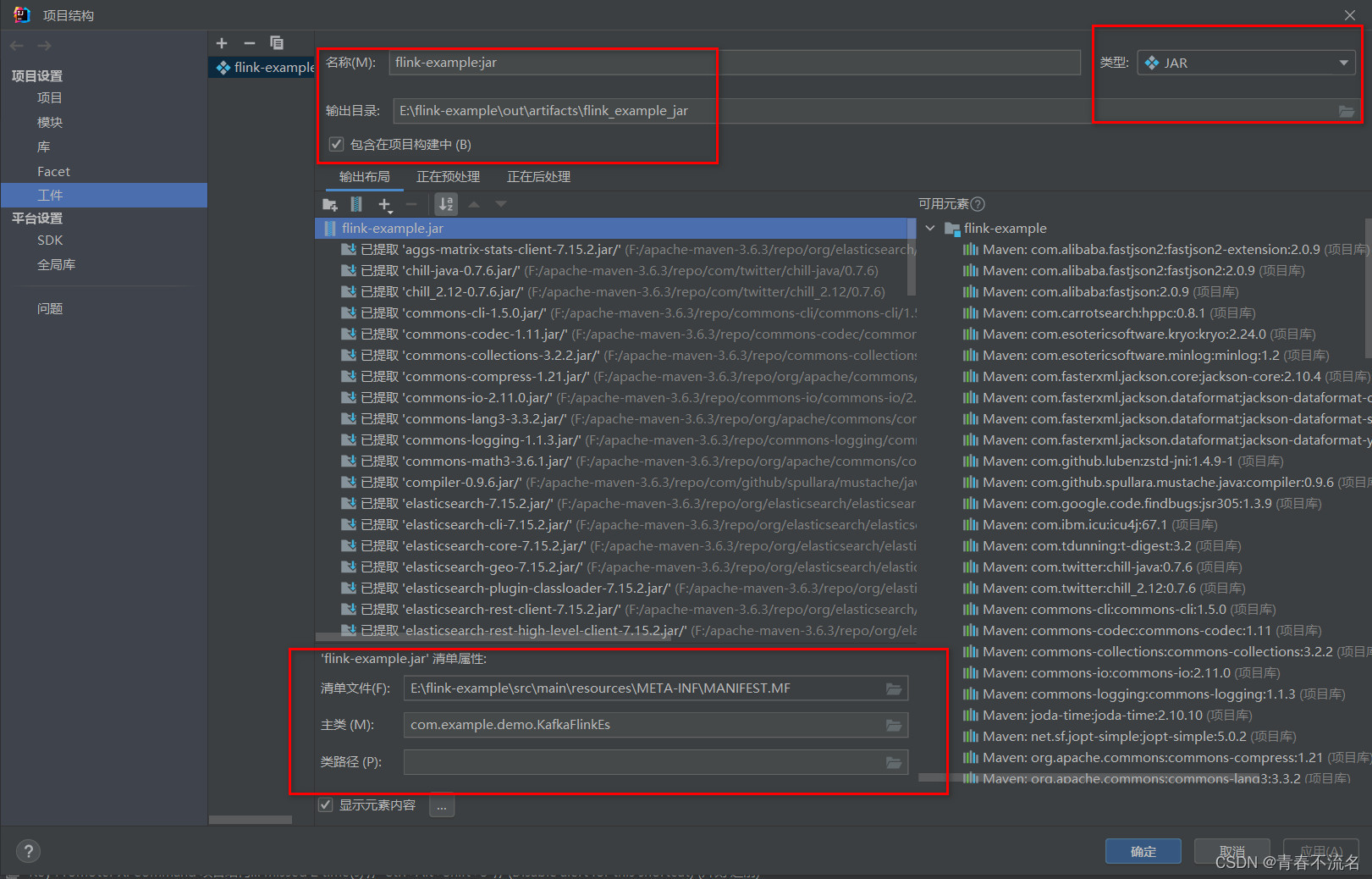

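5. Package an executable JAR with IDEA

5.1. Configure the build in IDEA

The demo is built with Maven. After several exports, running the JAR with java -jar failed with a "main class not found" error. After resolving the JAR conflicts with Maven Helper, it ran normally.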

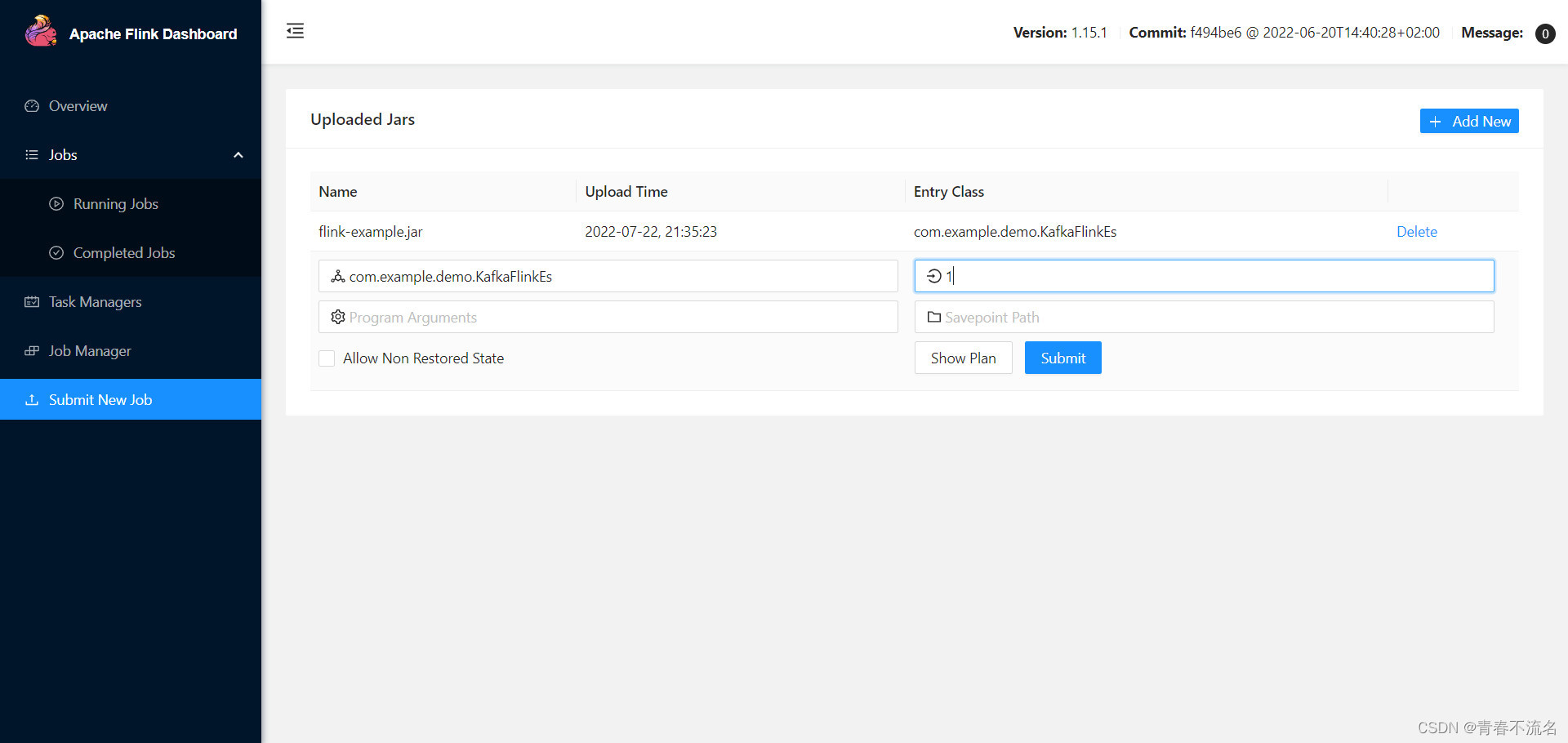
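When java -jar reports a missing main class, one quick diagnostic is to check whether the JAR's manifest names a Main-Class at all. This is only a sketch of that check and is not the fix described above, which was resolving dependency conflicts. manifest_main_class is a hypothetical helper name and demo.jar is a placeholder file name:

```shell
# manifest_main_class: read a MANIFEST.MF on stdin and print its Main-Class value.
# Exits non-zero when no Main-Class attribute is present.
# Limitation: a value wrapped onto a continuation line is not rejoined.
manifest_main_class() {
  tr -d '\r' | awk -F': ' '$1 == "Main-Class" { print $2; found = 1 } END { exit !found }'
}

# Usage (demo.jar is a placeholder for the exported artifact):
# unzip -p demo.jar META-INF/MANIFEST.MF | manifest_main_class
```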

6. Deploy the demo JAR and view the results

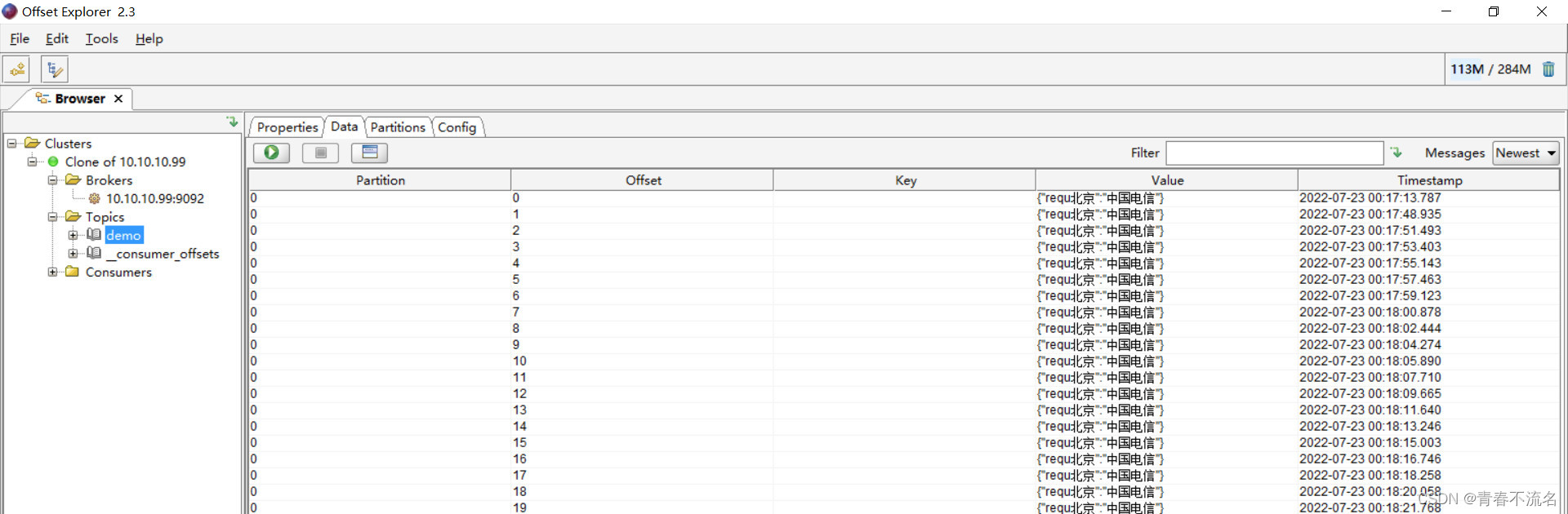

6.1. Kafka commands

/home/demo/kafka_2.12-3.2.0/bin/kafka-topics.sh --bootstrap-server 10.10.10.99:9092 --list

/home/demo/kafka_2.12-3.2.0/bin/kafka-topics.sh --bootstrap-server 10.10.10.99:9092 --describe

/home/demo/kafka_2.12-3.2.0/bin/kafka-topics.sh --bootstrap-server 10.10.10.99:9092 --create --partitions 1 --replication-factor 1 --topic demo

/home/demo/kafka_2.12-3.2.0/bin/kafka-console-consumer.sh --bootstrap-server 10.10.10.99:9092 --topic demo --from-beginning

/home/demo/kafka_2.12-3.2.0/bin/kafka-console-producer.sh --bootstrap-server 10.10.10.99:9092 --topic demo

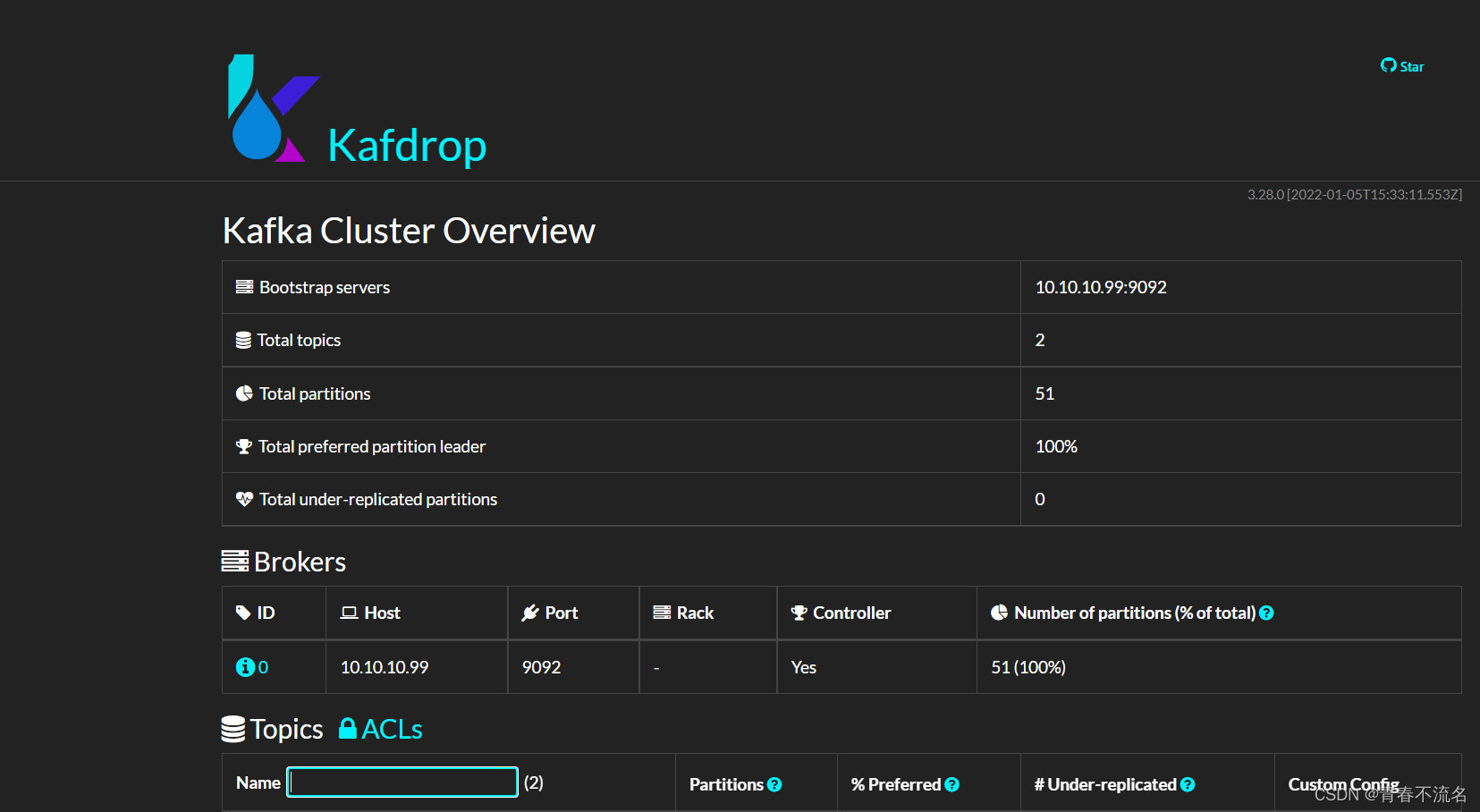
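The console producer and consumer above are interactive. For a scripted check, the two can be chained: pipe one line into the producer, then read one message back. The sketch below assumes the demo topic already exists. kafka_smoke_test is a hypothetical helper name, and KAFKA_HOME and BOOTSTRAP default to the paths and address used in this guide:

```shell
KAFKA_HOME="${KAFKA_HOME:-/home/demo/kafka_2.12-3.2.0}"
BOOTSTRAP="${BOOTSTRAP:-10.10.10.99:9092}"

# kafka_smoke_test TOPIC MESSAGE: send MESSAGE to TOPIC, then print one message
# read from the start of TOPIC. On a fresh topic that is the message just sent;
# on a topic with older data it is the oldest retained message.
kafka_smoke_test() {
  printf '%s\n' "$2" | "$KAFKA_HOME/bin/kafka-console-producer.sh" \
    --bootstrap-server "$BOOTSTRAP" --topic "$1"
  "$KAFKA_HOME/bin/kafka-console-consumer.sh" \
    --bootstrap-server "$BOOTSTRAP" --topic "$1" \
    --from-beginning --max-messages 1 --timeout-ms 10000
}

# Usage:
# kafka_smoke_test demo "hello from the smoke test"
```

The --timeout-ms option makes the consumer exit instead of waiting forever when nothing arrives, which keeps the check usable in scripts.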

6.2. Deploy a Kafka UI

docker run -p 8061:8080 -e KAFKA_CLUSTERS_0_NAME=local -e KAFKA_CLUSTERS_0_BOOTSTRAPSERVERS=10.10.10.99:9092 -d provectuslabs/kafka-ui:latest

docker run -d --rm -v /home/demo/protobuf_desc:/var/protobuf_desc -p 9000:9000 -e KAFKA_BROKERCONNECT=10.10.10.99:9092 -e JVM_OPTS="-Xms32M -Xmx64M" -e SERVER_SERVLET_CONTEXTPATH="/" -e CMD_ARGS="--message.format=PROTOBUF --protobufdesc.directory=/var/protobuf_desc" obsidiandynamics/kafdrop

To run Kafdrop from a packaged JAR instead:

java --add-opens=java.base/sun.nio.ch=ALL-UNNAMED -jar kafdrop.jar --kafka.brokerConnect=10.10.10.99:9092 --server.port=9000 --management.server.port=9002
