目录

一. 开题说明:

本人在完成HW1的过程中,发现存在以下困难:

1. 信息获取相对麻烦,不易于寻找相关资料

2. 可提供的有用信息比较零散,导致不能马上检索出关键

3. 部分高质量代码因博主个人收取费用,致使开源学习受限

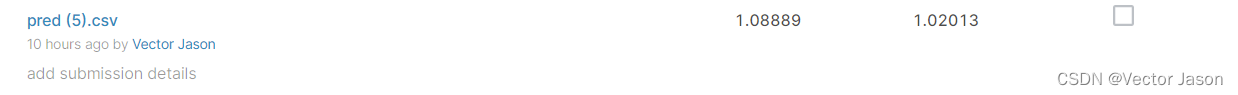

鉴于以上,在此进行开源分享,希望能更好地帮助大家同时加深自己的印象。以下是提交结果,其中得分分别来自于Private Score和Public Score(已过Strong Baseline)

二. 梗概:

通过Sample Code的运行,在Kaggle平台上可取得在Private和Public Score上分别为1.91281,1.89565的分数,本文在Sample Code的基础上,更进了Feature Selection,DNN,Optimizer以及learning rate,最终取得Private和Public Score上分别为1.08889,1.02013的分数。

三. 问题背景:

Based on the survey results of the past 5 days in specific states of the United States, the percentage of new positive cases on the fifth day is predicted. Through this training, we should achieve the following objectives:

- Solve a regression problem with deep neural networks (DNN).

- Understand basic DNN training tips.

- Familiarize yourself with PyTorch.

四. 模型建立:

1. 数据下载

P.S. 数据集能够通过pip指令下载,也能够从Kaggle平台上直接下载:

!gdown --id '1kLSW_-cW2Huj7bh84YTdimGBOJaODiOS' --output covid.train.csv

!gdown --id '1iiI5qROrAhZn-o4FPqsE97bMzDEFvIdg' --output covid.test.csv

2. 导入必要的包

# Numerical Operations

import math

import numpy as np

# Reading/Writing Data

import pandas as pd

import os

import csv

# For Progress Bar

from tqdm import tqdm

# Pytorch

import torch

import torch.nn as nn

from torch.utils.data import Dataset, DataLoader, random_split

# For plotting learning curve

from torch.utils.tensorboard import SummaryWriter

3. 定义函数

def same_seed(seed):

'''Fixes random number generator seeds for reproducibility.'''

torch.backends.cudnn.deterministic = True

torch.backends.cudnn.benchmark = False

np.random.seed(seed)

torch.manual_seed(seed)

if torch.cuda.is_available():

torch.cuda.manual_seed_all(seed)

def train_valid_split(data_set, valid_ratio, seed):

'''Split provided training data into training set and validation set'''

valid_set_size = int(valid_ratio * len(data_set))

train_set_size = len(data_set) - valid_set_size

train_set, valid_set = random_split(data_set, [train_set_size, valid_set_size], generator=torch.Generator().manual_seed(seed))

return np.array(train_set), np.array(valid_set)

def predict(test_loader, model, device):

model.eval() # Set your model to evaluation mode.

preds = []

for x in tqdm(test_loader):

x = x.to(device)

with torch.no_grad():

pred = model(x)

preds.append(pred.detach().cpu())

preds = torch.cat(preds, dim=0).numpy()

return preds

def trainer(train_loader, valid_loader, model, config, device):

criterion = nn.MSELoss(reduction='mean') # Define your loss function, do not modify this.

# Define your optimization algorithm.

# TODO: Please check https://pytorch.org/docs/stable/optim.html to get more available algorithms.

# TODO: L2 regularization (optimizer(weight decay...) or implement by your self).

optimizer = torch.optim.Adam(model.parameters(), lr=config['learning_rate'])

writer = SummaryWriter() # Writer of tensoboard.

if not os.path.isdir('./models'):

os.mkdir('./models') # Create directory of saving models.

n_epochs, best_loss, step, early_stop_count = config['n_epochs'], math.inf, 0, 0

for epoch in range(n_epochs):

model.train() # Set your model to train mode.

loss_record = []

# tqdm is a package to visualize your training progress.

train_pbar = tqdm(train_loader, position=0, leave=True)

for x, y in train_pbar:

optimizer.zero_grad() # Set gradient to zero.

x, y = x.to(device), y.to(device) # Move your data to device.

pred = model(x)

loss = criterion(pred, y)

loss.backward() # Compute gradient(backpropagation).

optimizer.step() # Update parameters.

step += 1

loss_record.append(loss.detach().item())

# Display current epoch number and loss on tqdm progress bar.

train_pbar.set_description(f'Epoch [{epoch+1}/{n_epochs}]')

train_pbar.set_postfix({'loss': loss.detach().item()})

mean_train_loss = sum(loss_record)/len(loss_record)

writer.add_scalar('Loss/train', mean_train_loss, step)

model.eval() # Set your model to evaluation mode.

loss_record = []

for x, y in valid_loader:

x, y = x.to(device), y.to(device)

with torch.no_grad():

pred = model(x)

loss = criterion(pred, y)

loss_record.append(loss.item())

mean_valid_loss = sum(loss_record)/len(loss_record)

print(f'Epoch [{epoch+1}/{n_epochs}]: Train loss: {mean_train_loss:.4f}, Valid loss: {mean_valid_loss:.4f}')

writer.add_scalar('Loss/valid', mean_valid_loss, step)

if mean_valid_loss < best_loss:

best_loss = mean_valid_loss

torch.save(model.state_dict(), config['save_path']) # Save your best model

print('Saving model with loss {:.3f}...'.format(best_loss))

early_stop_count = 0

else:

early_stop_count += 1

if early_stop_count >= config['early_stop']:

print('\nModel is not improving, so we halt the training session.')

return4. 定义类(Dataset以及DNN)

class COVID19Dataset(Dataset):

'''

x: Features.

y: Targets, if none, do prediction.

'''

def __init__(self, x, y=None):

if y is None:

self.y = y

else:

self.y = torch.FloatTensor(y)

self.x = torch.FloatTensor(x)

def __getitem__(self, idx):

if self.y is None:

return self.x[idx]

else:

return self.x[idx], self.y[idx]

def __len__(self):

return len(self.x)

class My_Model(nn.Module):

def __init__(self, input_dim):

super(My_Model, self).__init__()

# TODO: modify model's structure, be aware of dimensions.

self.layers = nn.Sequential(

nn.Linear(input_dim, 64),

nn.LeakyReLU(0.1),

nn.Linear(64, 32),

nn.LeakyReLU(0.1),

nn.Linear(32, 16),

nn.LeakyReLU(0.1),

nn.Linear(16, 8),

nn.LeakyReLU(0.1),

nn.Linear(8, 1),

)

def forward(self, x):

x = self.layers(x)

#x = nn.functional.dropout(x,p=0.01,training=self.training)

x = x.squeeze(1) # (B, 1) -> (B)

return x

from google.colab import drive

drive.mount('/content/drive')5. 特征选择

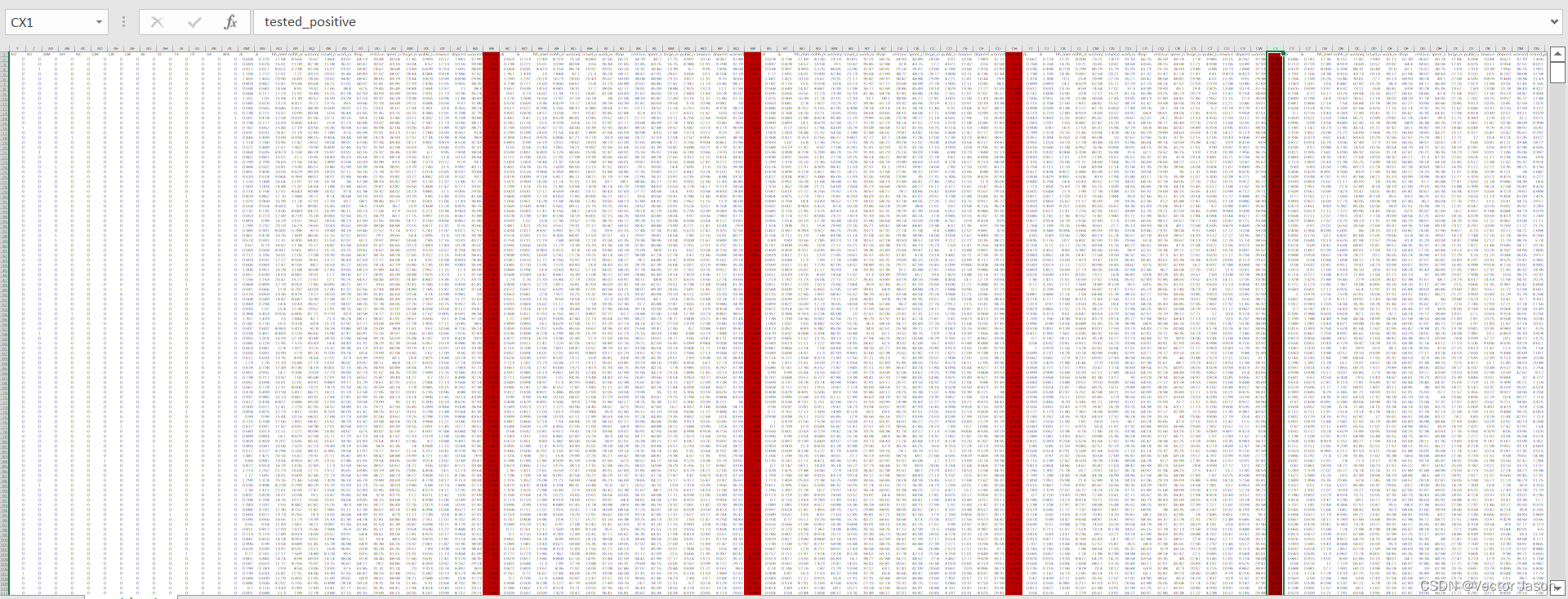

由于在测试集中需预测第五天的tested_postive,因此我选用了前四天的tested_positive作为feat_idx以增强数据之间的相关性。值得注意的是,在处理csv文件时需去除第一列(id对问题的解决无实际性意义)。

def select_feat(train_data, valid_data, test_data, select_all=True):

'''Selects useful features to perform regression'''

y_train, y_valid = train_data[:,-1], valid_data[:,-1]

raw_x_train, raw_x_valid, raw_x_test = train_data[:,:-1], valid_data[:,:-1], test_data

if select_all:

feat_idx = list(range(raw_x_train.shape[1]))

else:

feat_idx = [53, 69, 85, 101] # TODO: Select suitable feature columns.

return raw_x_train[:,feat_idx], raw_x_valid[:,feat_idx], raw_x_test[:,feat_idx], y_train, y_valid训练集covid.train.csv大致情况如下:

6. 定义超参数

device = 'cuda' if torch.cuda.is_available() else 'cpu'

config = {

'seed': 5201314, # Your seed number, you can pick your lucky number. :)

'select_all': False, # Whether to use all features.

'valid_ratio': 0.2, # validation_size = train_size * valid_ratio

'n_epochs': 3000, # Number of epochs.

'batch_size': 256,

'learning_rate': 1e-4,

'early_stop': 400, # If model has not improved for this many consecutive epochs, stop training.

'save_path': './models/model.ckpt' # Your model will be saved here.

}7. 定义DataLoader

从文件中读取数据并设置培训、验证和测试集。

# Set seed for reproducibility

same_seed(config['seed'])

# train_data size: 2699 x 118 (id + 37 states + 16 features x 5 days)

# test_data size: 1078 x 117 (without last day's positive rate)

train_data, test_data = pd.read_csv('./covid.train.csv').values, pd.read_csv('./covid.test.csv').values

train_data, valid_data = train_valid_split(train_data, config['valid_ratio'], config['seed'])

# Print out the data size.

print(f"""train_data size: {train_data.shape}

valid_data size: {valid_data.shape}

test_data size: {test_data.shape}""")

# Select features

x_train, x_valid, x_test, y_train, y_valid = select_feat(train_data, valid_data, test_data, config['select_all'])

# Print out the number of features.

print(f'number of features: {x_train.shape[1]}')

train_dataset, valid_dataset, test_dataset = COVID19Dataset(x_train, y_train), \

COVID19Dataset(x_valid, y_valid), \

COVID19Dataset(x_test)

# Pytorch data loader loads pytorch dataset into batches.

train_loader = DataLoader(train_dataset, batch_size=config['batch_size'], shuffle=True, pin_memory=True)

valid_loader = DataLoader(valid_dataset, batch_size=config['batch_size'], shuffle=True, pin_memory=True)

test_loader = DataLoader(test_dataset, batch_size=config['batch_size'], shuffle=False, pin_memory=True)8. 训练与预测

# Start Training !!!

model = My_Model(input_dim=x_train.shape[1]).to(device) # put your model and data on the same computation device.

trainer(train_loader, valid_loader, model, config, device)

# Start Predicting !!!

def save_pred(preds, file):

''' Save predictions to specified file '''

with open(file, 'w') as fp:

writer = csv.writer(fp)

writer.writerow(['id', 'tested_positive'])

for i, p in enumerate(preds):

writer.writerow([i, p])

model = My_Model(input_dim=x_train.shape[1]).to(device)

model.load_state_dict(torch.load(config['save_path']))

preds = predict(test_loader, model, device)

save_pred(preds, 'pred.csv') 五. 可视化分析

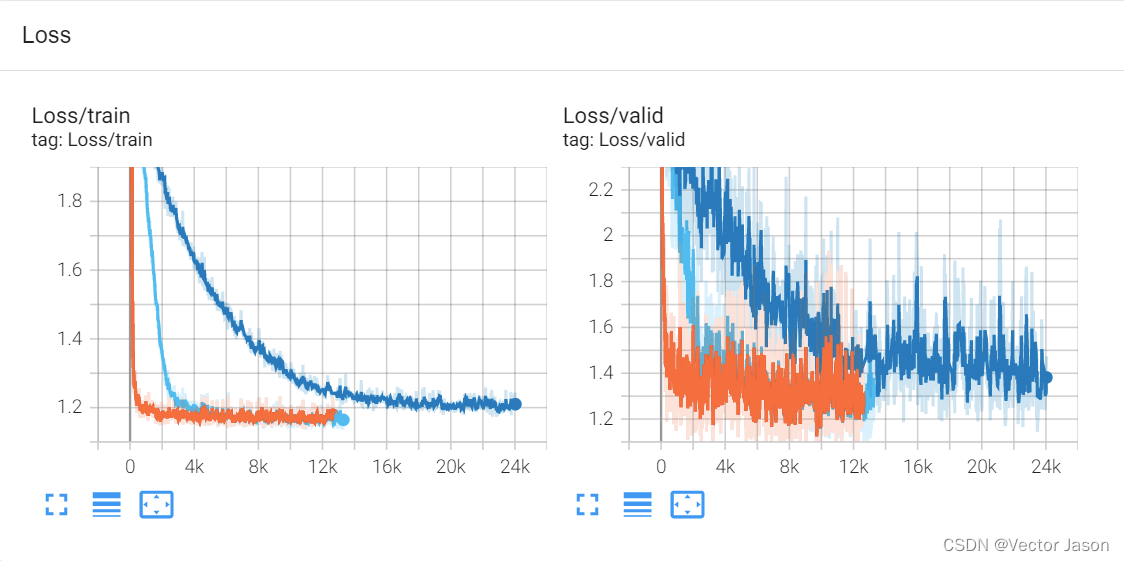

在深度学习框架中,有很多可视化的工具,其中,Tensorboard是一个工具,可以让您可视化训练进度。运行该配件时,需利用以下指令:

%reload_ext tensorboard

%tensorboard --logdir=./runs/ 训练过程Loss在Train和Valid的下降过程如下:

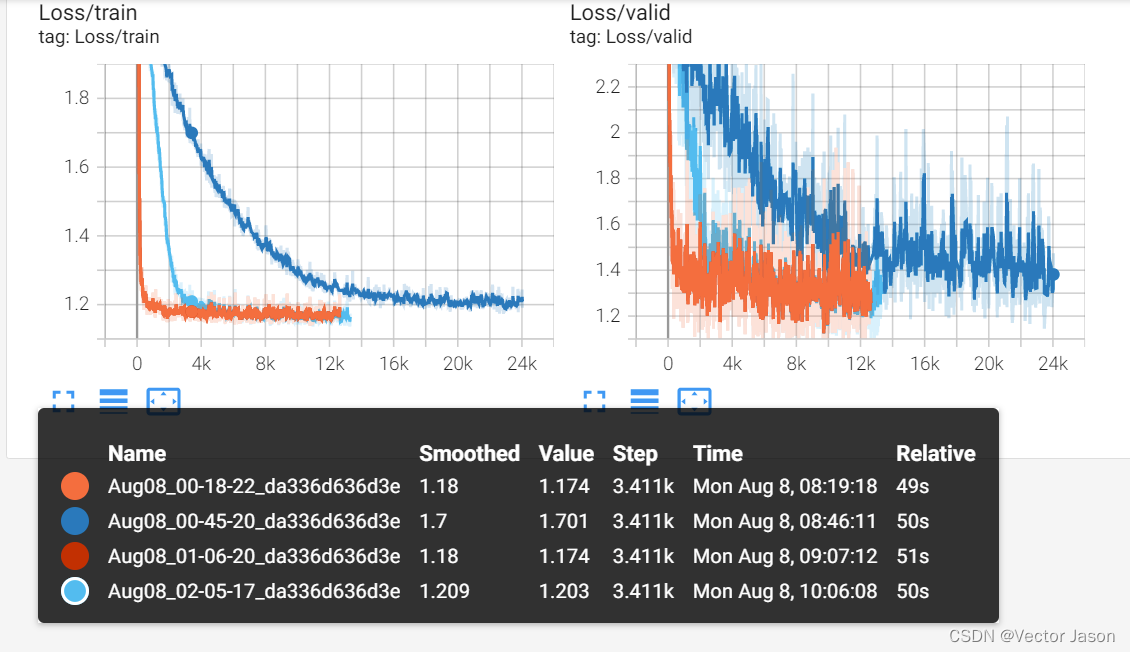

此外,可以在board上滑动鼠标进行数值的更进一步分析:

六. 优缺点分析与改进建议

优点:

1. 本文从相关性出发针对性地选取了前四天的tested_positive,同时利用优化后的全连接层完成了回归预测。

2. 对优化器进行了改进,利用Adam使Loss下降更加平稳,同时稍提高learning rate以增加Loss的收敛速度

缺点:

1. 由于能力受限,无法对整个数据集中的所有指标进行相关性分析

2. learning rate不能随着学习过程的变化进行动态更替,导致训练后期并不能收敛到一个较低的值,而是进行反复地上下波动

3. 未考虑是否存在过拟合情况

改进建议:

1. 查阅文献,可采用余弦退火的技巧对learning rate进行动态更新

2. 可利用sklearn中的特征选择方法对Feature Selection进行更改,同时可对各个指标进行归一化

3. 在DNN模型定义中加入L2正则化,Dropout等方法防止模型过拟合

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?