目录

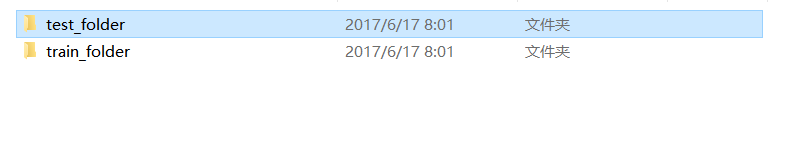

一、数据集准备

- Genki4k数据集下载

下载地址:https://inc.ucsd.edu/mplab/398.php - 划分数据集

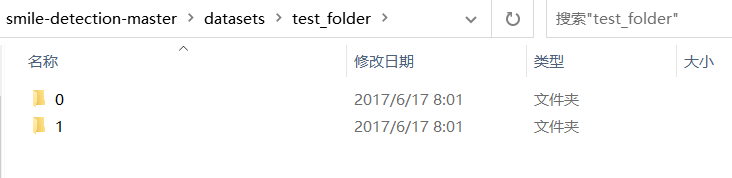

将Genki4k数据集分为测试集与训练集。

并按标签分开存放。

二、基于卷积神经网络训练模型

1. 构建模型

构建代码:

from keras import layers

from keras import models

model = models.Sequential()

model.add(layers.Conv2D(32, (3, 3), activation='relu',

input_shape=(150, 150, 3)))

model.add(layers.MaxPooling2D((2, 2)))

model.add(layers.Conv2D(64, (3, 3), activation='relu'))

model.add(layers.MaxPooling2D((2, 2)))

model.add(layers.Conv2D(128, (3, 3), activation='relu'))

model.add(layers.MaxPooling2D((2, 2)))

model.add(layers.Conv2D(128, (3, 3), activation='relu'))

model.add(layers.MaxPooling2D((2, 2)))

model.add(layers.Flatten())

model.add(layers.Dense(512, activation='relu'))

model.add(layers.Dense(1, activation='sigmoid'))

查看征图的尺寸是如何随着每一层变化的

model.summary()

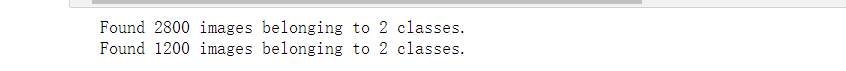

2. 图像数据预处理

from tensorflow.keras import optimizers

from tensorflow.keras.preprocessing.image import ImageDataGenerator

import os, shutil

datagen = ImageDataGenerator(

rotation_range=40,

width_shift_range=0.2,

height_shift_range=0.2,

shear_range=0.2,

zoom_range=0.2,

horizontal_flip=True,

fill_mode='nearest')

model.compile(loss='binary_crossentropy',

optimizer=optimizers.RMSprop(lr=1e-4),

metrics=['acc'])

base_dir = 'E:\\smile-detection-master\\datasets'

train_dir = os.path.join(base_dir, 'train_folder')

test_dir = os.path.join(base_dir, 'test_folder')

# All images will be rescaled by 1./255

# All images will be rescaled by 1./255

train_datagen = ImageDataGenerator(

rescale=1./255,

rotation_range=40,

width_shift_range=0.2,

height_shift_range=0.2,

shear_range=0.2,

zoom_range=0.2,

horizontal_flip=True,)

test_datagen = ImageDataGenerator(rescale=1./255)

train_generator = train_datagen.flow_from_directory(

# This is the target directory

train_dir,

# All images will be resized to 150x150

target_size=(150, 150),

batch_size=32,

# Since we use binary_crossentropy loss, we need binary labels

class_mode='binary')

test_generator = test_datagen.flow_from_directory(

test_dir,

target_size=(150, 150),

batch_size=32,

class_mode='binary')

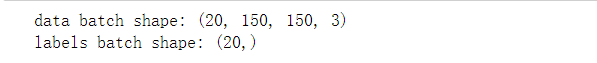

生成器的输出:

for data_batch, labels_batch in train_generator:

print('data batch shape:', data_batch.shape)

print('labels batch shape:', labels_batch.shape)

break

3. 训练

代码:

history = model.fit_generator(

train_generator,

steps_per_epoch=100,

epochs=100,

validation_data=test_generator,

validation_steps=50)

保存模型:

model.save('E:\\smile-detection-master\\models\\smiles_and_unsmiles_small_2.h5')

4. 绘制模型的损失图和准确性图像

acc = history.history['acc']

val_acc = history.history['val_acc']

loss = history.history['loss']

val_loss = history.history['val_loss']

epochs = range(len(acc))

plt.plot(epochs, acc, 'bo', label='Training acc')

plt.plot(epochs, val_acc, 'b', label='Validation acc')

plt.title('Training and validation accuracy')

plt.legend()

plt.figure()

plt.plot(epochs, loss, 'bo', label='Training loss')

plt.plot(epochs, val_loss, 'b', label='Validation loss')

plt.title('Training and validation loss')

plt.legend()

plt.show()

5. 使用该模型进行微笑识别

#检测视频或者摄像头中的人脸

import cv2

from keras.preprocessing import image

from keras.models import load_model

import numpy as np

import dlib

from PIL import Image

model = load_model('D:/smile1/smiles_and_unsmiles_small_2.h5')

detector = dlib.get_frontal_face_detector()

video=cv2.VideoCapture(0)

font = cv2.FONT_HERSHEY_SIMPLEX

def rec(img):

gray=cv2.cvtColor(img,cv2.COLOR_BGR2GRAY)

dets=detector(gray,1)

if dets is not None:

for face in dets:

left=face.left()

top=face.top()

right=face.right()

bottom=face.bottom()

cv2.rectangle(img,(left,top),(right,bottom),(0,255,0),2)

img1=cv2.resize(img[top:bottom,left:right],dsize=(150,150))

img1=cv2.cvtColor(img1,cv2.COLOR_BGR2RGB)

img1 = np.array(img1)/255.

img_tensor = img1.reshape(-1,150,150,3)

prediction =model.predict(img_tensor)

if prediction[0][0]>0.5:

result='smile'

else:

result='unsmile'

cv2.putText(img, result, (left,top), font, 2, (0, 255, 0), 2, cv2.LINE_AA)

cv2.imshow('Video', img)

while video.isOpened():

res, img_rd = video.read()

if not res:

break

rec(img_rd)

if cv2.waitKey(5) & 0xFF == ord('q'):

break

video.release()

cv2.destroyAllWindows()

这里采用上面训练出来的模型

smiles_and_unsmiles_small_1.h5

笑脸识别:

非笑脸识别:

三、使用OpenCV自带的微笑识别库

1.代码编写

import cv2

face_cascade = cv2.CascadeClassifier(cv2.data.haarcascades+'haarcascade_frontalface_default.xml')

eye_cascade = cv2.CascadeClassifier(cv2.data.haarcascades+'haarcascade_eye.xml')

smile_cascade = cv2.CascadeClassifier(cv2.data.haarcascades+'haarcascade_smile.xml')

# 调用摄像头摄像头

cap = cv2.VideoCapture(0)

while(True):

# 获取摄像头拍摄到的画面

ret, frame = cap.read()

faces = face_cascade.detectMultiScale(frame, 1.3, 2)

img = frame

for (x,y,w,h) in faces:

# 画出人脸框,颜色自己定义,画笔宽度微

img = cv2.rectangle(img,(x,y),(x+w,y+h),(255,225,0),2)

# 框选出人脸区域,在人脸区域而不是全图中进行人眼检测,节省计算资源

face_area = img[y:y+h, x:x+w]

## 人眼检测

# 用人眼级联分类器引擎在人脸区域进行人眼识别,返回的eyes为眼睛坐标列表

eyes = eye_cascade.detectMultiScale(face_area,1.3,10)

for (ex,ey,ew,eh) in eyes:

#画出人眼框,绿色,画笔宽度为1

cv2.rectangle(face_area,(ex,ey),(ex+ew,ey+eh),(0,255,0),1)

## 微笑检测

# 用微笑级联分类器引擎在人脸区域进行人眼识别,返回的eyes为眼睛坐标列表

smiles = smile_cascade.detectMultiScale(face_area,scaleFactor= 1.16,minNeighbors=65,minSize=(25, 25),flags=cv2.CASCADE_SCALE_IMAGE)

for (ex,ey,ew,eh) in smiles:

#画出微笑框,(BGR色彩体系),画笔宽度为1

cv2.rectangle(face_area,(ex,ey),(ex+ew,ey+eh),(0,0,255),1)

cv2.putText(img,'Smile',(x,y-7), 3, 1.2, (222, 0, 255), 2, cv2.LINE_AA)

# 实时展示效果画面

cv2.imshow('frame2',img)

# 每5毫秒监听一次键盘动作

if cv2.waitKey(5) & 0xFF == ord('q'):

break

# 最后,关闭所有窗口

cap.release()

cv2.destroyAllWindows()

2.运行测试:

笑脸识别:

非笑脸识别:

四、基于Dlib笑脸识别

1.代码编写

import sys

import dlib # 人脸识别的库dlib

import numpy as np # 数据处理的库numpy

import cv2 # 图像处理的库OpenCv

class face_emotion():

def __init__(self):

# 使用特征提取器get_frontal_face_detector

self.detector = dlib.get_frontal_face_detector()

# dlib的68点模型,使用作者训练好的特征预测器

self.predictor = dlib.shape_predictor(

"shape_predictor_68_face_landmarks.dat")

# 建cv2摄像头对象,这里使用电脑自带摄像头,如果接了外部摄像头,则自动切换到外部摄像头

self.cap = cv2.VideoCapture(0)

# 设置视频参数,propId设置的视频参数,value设置的参数值

self.cap.set(3, 480)

# 截图screenshoot的计数器

self.cnt = 0

def learning_face(self):

# 眉毛直线拟合数据缓冲

line_brow_x = []

line_brow_y = []

# cap.isOpened() 返回true/false 检查初始化是否成功

while (self.cap.isOpened()):

# cap.read()

# 返回两个值:

# 一个布尔值true/false,用来判断读取视频是否成功/是否到视频末尾

# 图像对象,图像的三维矩阵

flag, im_rd = self.cap.read()

# 每帧数据延时1ms,延时为0读取的是静态帧

k = cv2.waitKey(1)

# 取灰度

img_gray = cv2.cvtColor(im_rd, cv2.COLOR_RGB2GRAY)

# 使用人脸检测器检测每一帧图像中的人脸。并返回人脸数rects

faces = self.detector(img_gray, 0)

# 待会要显示在屏幕上的字体

font = cv2.FONT_HERSHEY_SIMPLEX

# 如果检测到人脸

if (len(faces) != 0):

# 对每个人脸都标出68个特征点

for i in range(len(faces)):

# enumerate方法同时返回数据对象的索引和数据,k为索引,d为faces中的对象

for k, d in enumerate(faces):

# 用红色矩形框出人脸

cv2.rectangle(im_rd, (d.left(), d.top()),

(d.right(), d.bottom()), (0, 0, 255))

# 计算人脸热别框边长

self.face_width = d.right() - d.left()

# 使用预测器得到68点数据的坐标

shape = self.predictor(im_rd, d)

# 圆圈显示每个特征点

for i in range(68):

cv2.circle(im_rd, (shape.part(i).x, shape.part(

i).y), 2, (222, 222, 0), -1, 8)

#cv2.putText(im_rd, str(i), (shape.part(i).x, shape.part(i).y), cv2.FONT_HERSHEY_SIMPLEX, 0.5,

# (255, 255, 255))

# 分析任意n点的位置关系来作为表情识别的依据

mouth_width = (shape.part(

54).x - shape.part(48).x) / self.face_width # 嘴巴咧开程度

mouth_higth = (shape.part(

66).y - shape.part(62).y) / self.face_width # 嘴巴张开程度

# 通过两个眉毛上的10个特征点,分析挑眉程度和皱眉程度

brow_sum = 0 # 高度之和

frown_sum = 0 # 两边眉毛距离之和

for j in range(17, 21):

brow_sum += (shape.part(j).y - d.top()) + \

(shape.part(j + 5).y - d.top())

frown_sum += shape.part(j + 5).x - shape.part(j).x

line_brow_x.append(shape.part(j).x)

line_brow_y.append(shape.part(j).y)

# self.brow_k, self.brow_d = self.fit_slr(line_brow_x, line_brow_y) # 计算眉毛的倾斜程度

tempx = np.array(line_brow_x)

tempy = np.array(line_brow_y)

z1 = np.polyfit(tempx, tempy, 1) # 拟合成一次直线

# 拟合出曲线的斜率和实际眉毛的倾斜方向是相反的

self.brow_k = -round(z1[0], 3)

brow_hight = (brow_sum / 10) / \

self.face_width # 眉毛高度占比

brow_width = (frown_sum / 5) / \

self.face_width # 眉毛距离占比

# 眼睛睁开程度

eye_sum = (shape.part(41).y - shape.part(37).y + shape.part(40).y - shape.part(38).y +

shape.part(47).y - shape.part(43).y + shape.part(46).y - shape.part(44).y)

eye_hight = (eye_sum / 4) / self.face_width

# 分情况讨论

# 张嘴,可能是开心或者惊讶

if round(mouth_higth >= 0.03):

if eye_hight >= 0.056:

cv2.putText(im_rd, "amazing", (d.left(), d.bottom() + 20), cv2.FONT_HERSHEY_SIMPLEX,

0.8,

(0, 0, 255), 2, 4)

else:

cv2.putText(im_rd, "smile", (d.left(), d.bottom() + 20), cv2.FONT_HERSHEY_SIMPLEX, 0.8,

(0, 0, 255), 2, 4)

# 没有张嘴,可能是正常和生气

else:

if self.brow_k <= -0.3:

cv2.putText(im_rd, "angry", (d.left(), d.bottom() + 20), cv2.FONT_HERSHEY_SIMPLEX, 0.8,

(0, 0, 255), 2, 4)

else:

cv2.putText(im_rd, "nosmile", (d.left(), d.bottom() + 20), cv2.FONT_HERSHEY_SIMPLEX, 0.8,

(0, 0, 255), 2, 4)

# 标出人脸数

cv2.putText(im_rd, "Faces: " + str(len(faces)),

(20, 50), font, 1, (0, 0, 255), 1, cv2.LINE_AA)

else:

# 没有检测到人脸

cv2.putText(im_rd, "No Face", (20, 50), font,

1, (0, 0, 255), 1, cv2.LINE_AA)

im_rd = cv2.putText(im_rd, "Q: quit", (20, 450),

font, 0.8, (0, 0, 255), 1, cv2.LINE_AA)

# 按下q键退出

if (k == ord('q')):

break

# 窗口显示

cv2.imshow("camera", im_rd)

# 释放摄像头

self.cap.release()

# 删除建立的窗口

cv2.destroyAllWindows()

if __name__ == "__main__":

my_face = face_emotion()

my_face.learning_face()

2. 运行测试

笑脸识别:

非笑脸识别:

五、总结

本文利用Genki4k人脸微笑数据集,分别通过搭建卷积神经网络模型、Dlib,以及OpenCV自带的微笑识别库,完成人脸实时的微笑识别,

六、参考

https://blog.csdn.net/lxzysx/article/details/107028257

https://blog.csdn.net/qq_41133375/article/details/107141898

7889

7889

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?