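I. VGGNet

- Uses a deeper network built from 3×3 filters and 2×2 pooling layers.

- Stacking several very small filters covers the same receptive field as a single large filter, while using fewer parameters.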

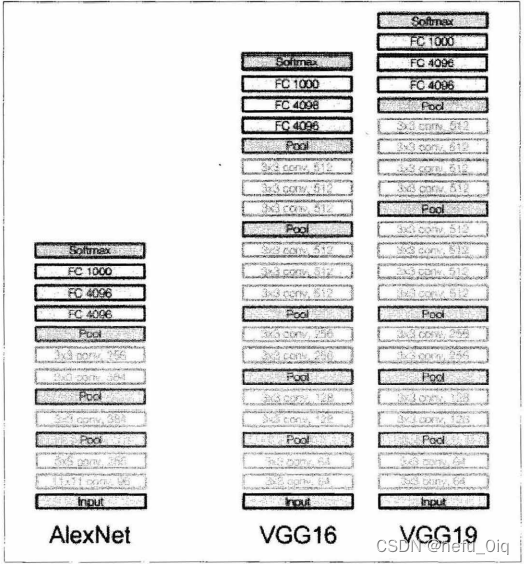
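The parameter saving from stacking small filters can be checked with a little arithmetic. The sketch below is only an illustration: the channel width `C = 64` and the helper names `conv_params` and `stacked_receptive_field` are assumptions made for this example, and biases are ignored.

```python
def conv_params(kernel_size: int, in_channels: int, out_channels: int) -> int:
    """Number of weights in one convolutional layer (biases ignored)."""
    return kernel_size * kernel_size * in_channels * out_channels


def stacked_receptive_field(kernel_size: int, num_layers: int) -> int:
    """Receptive field of `num_layers` stride-1 convolutions with the same kernel size."""
    return 1 + num_layers * (kernel_size - 1)


C = 64  # assumed number of input and output channels for every layer

# Two stacked 3x3 layers see a 5x5 region; three stacked 3x3 layers see 7x7.
rf_two = stacked_receptive_field(3, 2)
rf_three = stacked_receptive_field(3, 3)

two_3x3 = 2 * conv_params(3, C, C)
one_5x5 = conv_params(5, C, C)
three_3x3 = 3 * conv_params(3, C, C)
one_7x7 = conv_params(7, C, C)

print(f"receptive field {rf_two}x{rf_two}: two 3x3 = {two_3x3}, one 5x5 = {one_5x5}")
print(f"receptive field {rf_three}x{rf_three}: three 3x3 = {three_3x3}, one 7x7 = {one_7x7}")
```

With equal channel widths, two 3×3 layers need 18·C² weights against 25·C² for one 5×5 layer, and three 3×3 layers need 27·C² against 49·C² for one 7×7 layer. The stacked version also inserts an extra nonlinearity between the layers.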

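- Network architecture diagram

- The basic building block is two convolutional layers followed by one pooling layer, treated as a single unit (the deeper stages of VGG-16 use three convolutional layers per block).

- After the fully connected layers, Softmax is applied to turn the outputs into class probabilities.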
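The block pattern described above (a few 3×3 convolutions, one 2×2 pooling layer, and Softmax after the fully connected part) might be sketched in PyTorch as follows. `vgg_block` and `TinyVGG` are hypothetical names, and the channel widths, the 32×32 input size and the single linear layer are assumptions chosen to keep the example small. This is not the actual VGG-16 configuration.

```python
import torch
from torch import nn


def vgg_block(in_channels: int, out_channels: int, num_convs: int = 2) -> nn.Sequential:
    """Stack `num_convs` 3x3 convolutions, then halve the resolution with 2x2 max pooling."""
    layers = []
    for i in range(num_convs):
        layers.append(nn.Conv2d(in_channels if i == 0 else out_channels,
                                out_channels, kernel_size=3, padding=1))
        layers.append(nn.ReLU(inplace=True))
    layers.append(nn.MaxPool2d(kernel_size=2, stride=2))
    return nn.Sequential(*layers)


class TinyVGG(nn.Module):
    """Hypothetical two-block model; the real VGG networks are much deeper."""

    def __init__(self, num_classes: int = 10):
        super().__init__()
        self.features = nn.Sequential(vgg_block(3, 16), vgg_block(16, 32))
        # Assumes 32x32 inputs: two poolings leave an 8x8 feature map with 32 channels.
        self.classifier = nn.Linear(32 * 8 * 8, num_classes)

    def forward(self, x):
        x = self.features(x)
        x = torch.flatten(x, 1)
        logits = self.classifier(x)
        # Softmax over the class dimension turns logits into probabilities.
        return torch.softmax(logits, dim=1)


probs = TinyVGG()(torch.randn(4, 3, 32, 32))
print(probs.shape)  # one row of class probabilities per input image
```

When training with `nn.CrossEntropyLoss`, the model would normally return the raw logits instead, because that loss applies log-softmax internally.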

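II. GoogLeNet

- GoogLeNet is deeper than VGGNet, with 22 layers in total, yet it has fewer parameters than AlexNet. It drops the large fully connected layers in favor of global average pooling, leaving only a final linear classifier.

- GoogLeNet uses the Inception module as its building block.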

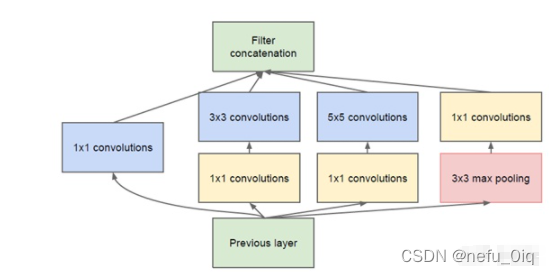

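- In earlier networks, each layer applies only one kind of operation, and the kernel size is fixed.

- In GoogLeNet, the Inception module runs several operations in parallel, so the network can learn for itself which ones to rely on and adapt better.

- Structure of Inception

1. Inception module with dimension reduction: 1×1 convolutional layers are placed in front of the expensive convolutions to reduce the number of input channels, which reduces the number of parameters.

- Overall network structure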
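To make the saving from the 1×1 reduction layer concrete, the following sketch compares the weight count of a 5×5 convolution applied directly with the same convolution preceded by a 1×1 reduction. The channel numbers (192 in, reduced to 16, 32 out) are illustrative assumptions, `weight_count` is a hypothetical helper, and biases are ignored.

```python
def weight_count(kernel_size: int, in_channels: int, out_channels: int) -> int:
    """Number of weights in one convolutional layer (biases ignored)."""
    return kernel_size * kernel_size * in_channels * out_channels


in_ch, reduced_ch, out_ch = 192, 16, 32  # assumed channel sizes for illustration

# Naive branch: 5x5 convolution straight on the 192-channel input.
direct = weight_count(5, in_ch, out_ch)

# Reduced branch: 1x1 convolution down to 16 channels, then the 5x5 convolution.
reduced = weight_count(1, in_ch, reduced_ch) + weight_count(5, reduced_ch, out_ch)

print(f"direct 5x5:        {direct} weights")
print(f"1x1 then 5x5:      {reduced} weights")
print(f"reduction factor:  {direct / reduced:.1f}x")
```

Under these assumptions the branch shrinks from 153,600 to 15,872 weights, because the expensive 5×5 kernel now operates on 16 channels instead of 192.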

- Code implementation

```python
import torch
from torch import nn
from torch.nn import functional as F


class BasicConv2d(nn.Module):
    """Convolution -> BatchNorm -> ReLU."""

    def __init__(self, in_channels, out_channels, **kwargs):
        super(BasicConv2d, self).__init__()
        # No conv bias: the BatchNorm that follows has its own shift term.
        self.conv = nn.Conv2d(in_channels, out_channels, bias=False, **kwargs)
        self.bn = nn.BatchNorm2d(out_channels, eps=0.001)

    def forward(self, x):
        x = self.conv(x)
        x = self.bn(x)
        return F.relu(x, inplace=True)


class Inception(nn.Module):
    def __init__(self, in_channels, pool_features):
        super(Inception, self).__init__()
        # Branch 1: plain 1x1 convolution
        self.branch1x1 = BasicConv2d(in_channels, 64, kernel_size=1)

        # Branch 2: 1x1 reduction, then 5x5 convolution
        self.branch5x5_1 = BasicConv2d(in_channels, 48, kernel_size=1)
        self.branch5x5_2 = BasicConv2d(48, 64, kernel_size=5, padding=2)

        # Branch 3: 1x1 reduction, then two stacked 3x3 convolutions
        self.branch3x3_1 = BasicConv2d(in_channels, 64, kernel_size=1)
        self.branch3x3_2 = BasicConv2d(64, 96, kernel_size=3, padding=1)
        self.branch3x3_3 = BasicConv2d(96, 96, kernel_size=3, padding=1)

        # Branch 4: average pooling (in forward), then 1x1 convolution
        self.branch_pool = BasicConv2d(in_channels, pool_features, kernel_size=1)

    def forward(self, x):
        branch1x1 = self.branch1x1(x)

        branch5x5 = self.branch5x5_1(x)
        branch5x5 = self.branch5x5_2(branch5x5)

        branch3x3 = self.branch3x3_1(x)
        branch3x3 = self.branch3x3_2(branch3x3)
        branch3x3 = self.branch3x3_3(branch3x3)

        branch_pool = F.avg_pool2d(x, kernel_size=3, stride=1, padding=1)
        branch_pool = self.branch_pool(branch_pool)

        outputs = [branch1x1, branch3x3, branch5x5, branch_pool]
        # Concatenate the branch outputs along the channel dimension.
        return torch.cat(outputs, 1)
```
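The padding values in the module above are chosen so that every branch keeps the input's height and width, which is what allows the branch outputs to be joined along the channel dimension, as Inception modules conventionally do. The pure-Python sketch below checks this with the standard output-size formula. The 28×28 input size and `pool_features = 32` are assumptions for illustration, and `out_size` is a hypothetical helper.

```python
def out_size(size: int, kernel_size: int, stride: int = 1, padding: int = 0) -> int:
    """Spatial output size of a convolution or pooling layer (floor mode)."""
    return (size + 2 * padding - kernel_size) // stride + 1


height = 28          # assumed input height (width behaves the same way)
pool_features = 32   # assumed value of the module's `pool_features` argument

# Each branch as a list of (kernel_size, padding) steps, plus its output channels.
branches = {
    "1x1":        ([(1, 0)], 64),
    "3x3 double": ([(1, 0), (3, 1), (3, 1)], 96),
    "5x5":        ([(1, 0), (5, 2)], 64),
    "pool":       ([(3, 1), (1, 0)], pool_features),
}

sizes = {}
for name, (steps, channels) in branches.items():
    size = height
    for kernel_size, padding in steps:
        size = out_size(size, kernel_size, stride=1, padding=padding)
    sizes[name] = size
    print(f"{name:10s} -> {channels:3d} channels, {size}x{size}")

# Channel count after joining the four branches along the channel dimension.
total_channels = sum(channels for _, channels in branches.values())
print("channels after concatenation:", total_channels)
```

With these assumptions every branch stays at 28×28, and the joined output has 64 + 96 + 64 + 32 = 256 channels regardless of `in_channels`.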
