Preface

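Hadoop 2.6.0 introduced a new feature: heterogeneous storage. The key word is "heterogeneous": the feature lets each storage medium play to its strengths according to its read/write characteristics. A natural use case is the storage of hot and cold data discussed in the previous article. Cold data can go on high-capacity media with modest read/write performance, such as ordinary disks. Hot data can go on SSDs for fast reads, at speeds that can reach ten or even a hundred times those of ordinary disks.

In other words, with heterogeneous storage in HDFS we no longer need two separate clusters for hot and cold data; a single cluster can hold both, which makes the feature very useful in practice. This article covers which storage types HDFS heterogeneous storage defines, what the storage policies are, and how HDFS makes heterogeneous storage work intelligently.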

Heterogeneous Storage Types

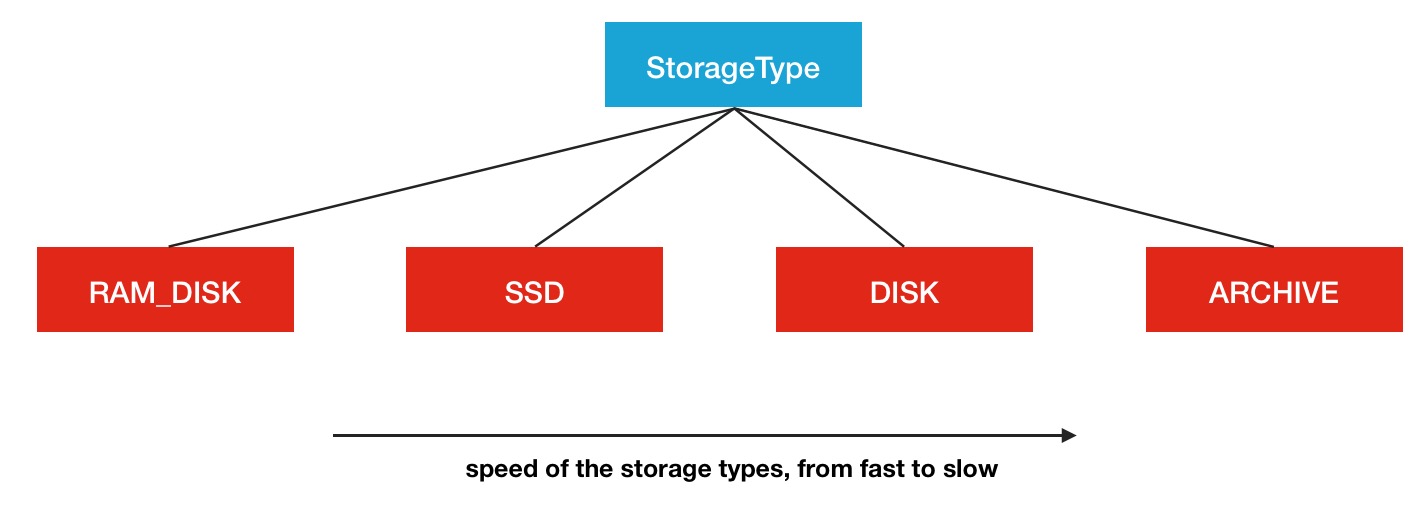

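The word "heterogeneous" has come up several times, so which types does heterogeneous storage actually comprise? Here are the storage types that HDFS declares: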

- RAM_DISK

- SSD

- DISK

- ARCHIVE

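HDFS defines these four types. SSD and DISK are self-explanatory, so let's look at the other two. RAM_DISK is simply memory. ARCHIVE does not refer to one particular medium; it mainly denotes high-density storage media, intended to absorb growth in data volume. The four types are defined in the StorageType class: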

    public enum StorageType {
      // sorted by the speed of the storage types, from fast to slow
      RAM_DISK(true),
      SSD(false),
      DISK(false),
      ARCHIVE(false);
      ...

The true or false next to each constant indicates whether the storage type is transient, that is, fleeting rather than persistent. In HDFS, a data directory whose storage type is not explicitly declared defaults to DISK.
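To make the transient flag and the DISK default concrete, here is a minimal, self-contained sketch. It is not the real HDFS class: `StorageTypeSketch`, its nested `Type` enum, and the `persistentTypes` helper are hypothetical names introduced only for illustration, modeled on the excerpt above.

```java
import java.util.ArrayList;
import java.util.List;

// Illustrative stand-in for HDFS's StorageType; all names here are hypothetical.
final class StorageTypeSketch {

  enum Type {
    // Ordered from fastest to slowest, as in the excerpt above.
    RAM_DISK(true),
    SSD(false),
    DISK(false),
    ARCHIVE(false);

    // Type assumed for a data directory that carries no explicit tag.
    static final Type DEFAULT = DISK;

    private final boolean isTransient;

    Type(boolean isTransient) {
      this.isTransient = isTransient;
    }

    boolean isTransient() {
      return isTransient;
    }
  }

  // Hypothetical helper: collect the types that are not transient.
  static List<Type> persistentTypes() {
    List<Type> result = new ArrayList<>();
    for (Type t : Type.values()) {
      if (!t.isTransient()) {
        result.add(t);
      }
    }
    return result;
  }

  public static void main(String[] args) {
    System.out.println("default: " + Type.DEFAULT);
    System.out.println("persistent: " + persistentTypes());
  }
}
```

In this sketch only RAM_DISK is flagged as transient, which matches the constructor arguments shown in the excerpt.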

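The class comment on StorageType states the default explicitly: "Defines the types of supported storage media. The default storage medium is assumed to be DISK."

A major performance difference among these four media is read/write speed, which decreases from the top of the list to the bottom. For hot data, then, memory or SSD is a good choice, while cold data is better placed on DISK or ARCHIVE media. This makes the StorageType assigned to the directories that hold hot and cold data very important.

How does HDFS know which storage medium backs each data directory in the cluster? It has to be declared explicitly in the configuration; HDFS does not detect it automatically. The local storage directories are set in the dfs.datanode.data.dir property, each prefixed with a storage type tag that declares which medium the directory uses, for example: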

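    [SSD]file:///grid/dn/ssd0

If a directory is not prefixed with one of the four tags [SSD], [DISK], [ARCHIVE], or [RAM_DISK], it defaults to DISK. The figure at the top of this section shows the structure of the storage media.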
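The tagging rule can be illustrated with a small sketch that splits a comma-separated dfs.datanode.data.dir value and resolves each entry to a storage type. This is an illustration under stated assumptions, not HDFS code: the class `DataDirTagSketch`, its `Type` and `Entry` types, the regular expression, and every directory other than `[SSD]file:///grid/dn/ssd0` are hypothetical.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Locale;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

// Illustrative sketch only; names and paths below are assumptions.
final class DataDirTagSketch {

  // Hypothetical stand-in for HDFS's StorageType enum.
  enum Type { RAM_DISK, SSD, DISK, ARCHIVE }

  // Hypothetical value class pairing a storage type with a directory.
  static final class Entry {
    final Type type;
    final String path;

    Entry(Type type, String path) {
      this.type = type;
      this.path = path;
    }

    @Override
    public String toString() {
      return "[" + type + "]" + path;
    }
  }

  // Assumed tag syntax: an optional "[TYPE]" prefix followed by the path.
  private static final Pattern TAGGED = Pattern.compile("^\\[(\\w*)\\](.+)$");

  // Resolve one entry; untagged or empty-tagged entries fall back to DISK.
  static Entry parse(String raw) {
    Matcher m = TAGGED.matcher(raw);
    Type type = Type.DISK;
    String path = raw;
    if (m.matches()) {
      String tag = m.group(1);
      path = m.group(2);
      if (!tag.isEmpty()) {
        // Throws IllegalArgumentException for a tag that is not a known type.
        type = Type.valueOf(tag.toUpperCase(Locale.ROOT));
      }
    }
    return new Entry(type, path);
  }

  // Split a comma-separated property value and resolve every non-empty entry.
  static List<Entry> parseAll(String value) {
    List<Entry> out = new ArrayList<>();
    for (String raw : value.split(",")) {
      String trimmed = raw.trim();
      if (!trimmed.isEmpty()) {
        out.add(parse(trimmed));
      }
    }
    return out;
  }

  public static void main(String[] args) {
    String value = "[SSD]file:///grid/dn/ssd0, [ARCHIVE]file:///grid/dn/archive0, file:///grid/dn/disk0";
    for (Entry e : parseAll(value)) {
      System.out.println(e);
    }
  }
}
```

The last entry in `main` carries no tag, so the sketch resolves it to DISK, which is the default behavior described above.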

How Heterogeneous Storage Works

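Having covered the storage media, we should look at how HDFS implements heterogeneous storage. The explanation here draws on parts of the HDFS source code. In summary, there are three points: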

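- Each DataNode reports the StorageType of its data directories to the NameNode through heartbeats.

- The NameNode then aggregates the reports and updates its record of the storage types available on each node in the cluster.

- A file to be replicated uses the storage policy set on it to ask the NameNode for DataNodes that have the matching storage type as candidate nodes.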

Seen this way, the underlying principle is not complicated. Let's trace the internal details step by step through the source code.
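Before turning to the real source, the three points above can be modeled in a few lines. This is a toy model under stated assumptions, not the HDFS implementation: `StorageReportSketch`, `ToyNameNode`, `handleHeartbeat`, `candidatesFor`, and the node names are all hypothetical, and real heartbeats and block placement involve far more state.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.EnumSet;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;
import java.util.Set;

// Toy model of the report / aggregate / select flow; all names are hypothetical.
final class StorageReportSketch {

  enum Type { RAM_DISK, SSD, DISK, ARCHIVE }

  // Toy NameNode: remembers which storage types each DataNode last reported.
  static final class ToyNameNode {
    private final Map<String, Set<Type>> typesByNode = new LinkedHashMap<>();

    // Points 1 and 2: a heartbeat carries the node's storage types and
    // replaces whatever was recorded for that node before.
    void handleHeartbeat(String dataNodeId, List<Type> reportedTypes) {
      Set<Type> types = EnumSet.noneOf(Type.class);
      types.addAll(reportedTypes);
      typesByNode.put(dataNodeId, types);
    }

    // Point 3: return the DataNodes that have at least one storage of the wanted type.
    List<String> candidatesFor(Type wanted) {
      List<String> out = new ArrayList<>();
      for (Map.Entry<String, Set<Type>> e : typesByNode.entrySet()) {
        if (e.getValue().contains(wanted)) {
          out.add(e.getKey());
        }
      }
      return out;
    }
  }

  public static void main(String[] args) {
    ToyNameNode nn = new ToyNameNode();
    nn.handleHeartbeat("dn1", Arrays.asList(Type.SSD, Type.DISK));
    nn.handleHeartbeat("dn2", Arrays.asList(Type.DISK));
    nn.handleHeartbeat("dn3", Arrays.asList(Type.ARCHIVE, Type.DISK));
    System.out.println("SSD candidates: " + nn.candidatesFor(Type.SSD));
    System.out.println("DISK candidates: " + nn.candidatesFor(Type.DISK));
  }
}
```

The sketch keeps only the latest report per node, so a node that loses a storage type stops being a candidate for it after its next heartbeat.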

DataNode Storage Directory Reporting

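First comes the parsing of the data storage directories and the heartbeat report. The FsDatasetImpl constructor parses the configured data directories into a collection of StorageLocation objects, each of which carries its StorageType.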

    /**
     * An FSDataset has a directory where it loads its data files.
     */
    FsDatasetImpl(DataNode datanode, DataStorage storage, Configuration conf
        ) throws IOException {
      ...
      String[] dataDirs = conf.getTrimmedStrings(DFSConfigKeys.DFS_DATANODE_DATA_DIR_KEY);
      Collection<StorageLocation> dataLocations = DataNode.getStorageLocations(conf);
      List<VolumeFailureInfo> volumeFailureInfos = getInitialVolumeFailureInfos(
          dataLocations, storage);
      ...

The actual work is done by DataNode's getStorageLocations method:

    public static List<StorageLocation> getStorageLocations(Configuration conf) {
      // Read the directory strings configured in dfs.datanode.data.dir
      Collection<String> rawLocations =
          conf.getTrimmedStringCollection(DFS_DATANODE_DATA_DIR_KEY);
      List<StorageLocation> locations =
          new ArrayList<StorageLocation>(rawLocations.size());

      for(String locationString : rawLocations) {
        final StorageLocation location;
        try {
          // Parse the string into the corresponding StorageLocation
          location = StorageLocation.parse(locationString);
        } catch (IOException ioe) {
          LOG.error("Failed to initialize storage directory " + locationString
              + ". Exception details: " + ioe);
          // Ignore the exception.
          continue;
        } catch (SecurityException se) {
          LOG.error("Failed to initialize storage directory " + locationString
              + ". Exception details: " + se);
          // Ignore the exception.
          continue;
        }

        // Add the parsed StorageLocation to the list
        locations.add(location);
      }

      return locations;
    }

What we care about most is how the configuration is parsed to yield the storage type. That happens in the following line:

    location = StorageLocation.parse(locationString);

Step into the StorageLocation class and look at its parse method:

    public static StorageLocation parse(String rawLocation)
        throws IOException, SecurityException {
      // Parse the string with a regular expression
      Matcher matcher = regex.matcher(rawLocation);
      StorageType storageType = StorageType.DEFAULT;
      String location = rawLocation;

      if (matcher.matches()) {
        String classString = matcher.group(1);
        location = matcher.group(2);
        if (!classString.isEmpty()) {
          storageType =
              StorageType.valueOf(StringUtils.toUpperCase(classString));
        }
      }

      return new StorageLocation(storageType, new Path(location).toUri());
    }

The StorageType.DEFAULT used here is DISK, as defined in StorageType:

    public static final StorageType DEFAULT = DISK;

The parsed storage directories and their storage types are later added to storageMap:

    private void addVolume(Collection<StorageLocation> dataLocations,
        Storage.StorageDirectory sd) throws IOException {
      final File dir = sd.getCurrentDir();
      final StorageType storageType =
          getStorageTypeFromLocations(dataLocations, sd.getRoot());

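This article introduced the heterogeneous storage feature of Hadoop HDFS, including the storage types RAM_DISK, SSD, DISK, and ARCHIVE, and how to declare a storage type through the dfs.datanode.data.dir configuration. Each DataNode reports its storage types to the NameNode through heartbeats, and HDFS selects the target storage medium according to the storage policy. BlockStoragePolicySuite provides several storage policies, such as Hot, Cold, and Warm, for optimizing the storage of hot and cold data. HDFS also provides command-line tools for setting and managing storage policies, which enables intelligent data migration.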
