代码:

package org.apache.hadoop.pr;

import java.io.IOException;

import java.util.Iterator;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.conf.Configured;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.IntWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapred.FileInputFormat;

import org.apache.hadoop.mapred.FileOutputFormat;

import org.apache.hadoop.mapred.JobClient;

import org.apache.hadoop.mapred.JobConf;

import org.apache.hadoop.mapred.KeyValueTextInputFormat;

import org.apache.hadoop.mapred.MapReduceBase;

import org.apache.hadoop.mapred.Mapper;

import org.apache.hadoop.mapred.OutputCollector;

import org.apache.hadoop.mapred.Reducer;

import org.apache.hadoop.mapred.Reporter;

import org.apache.hadoop.mapred.TextOutputFormat;

import org.apache.hadoop.util.Tool;

import org.apache.hadoop.util.ToolRunner;

public class CitationHistogram extends Configured implementsTool{

//Mapper类

publicstatic class MapClass extends MapReduceBase implementsMapper{

privatefinal static IntWritable uno = newIntWritable(1); //uno为1,final型

privateIntWritable citationCount = new IntWritable(); //实例化一个citationCount用来存传进来的value

public voidmap(Text key, Text value,

OutputCollector output,

Reporterreporter)

throwsIOException {

citationCount.set(Integer.parseInt(value.toString())); //把value值赋给citationCount

output.collect(citationCount, uno); //输出类型的键为citationCount即被引用的次数,值为1

}

}

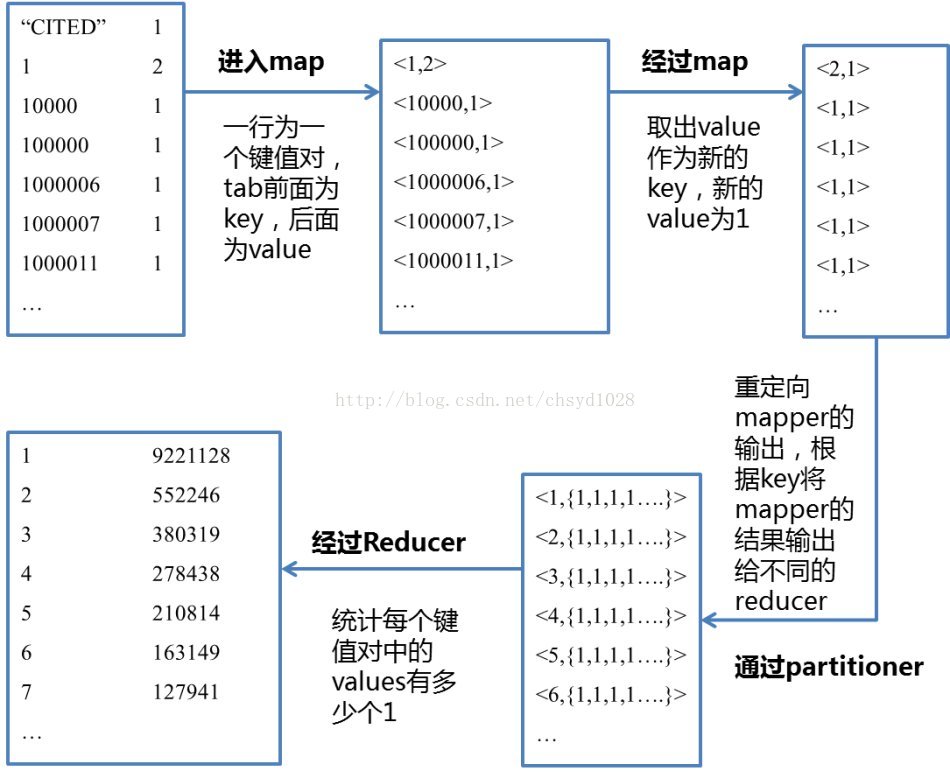

//mapper和reducer之间会有一个partitioner,重定向mapper的输出,根据key将mapper的结果输出给不同的reducer

//最后进reducer的时候形式就会是,而这个程序里的value都是1

//Reducer类

publicstatic class Reduce extends MapReduceBase implements Reducer{

public voidreduce(IntWritable key, Iterator values, OutputCollectoroutput,

Reporterreporter) throws IOException {

int count =0;

while(values.hasNext()){

count +=values.next().get(); //只要后面还有value,count就加一个value(也就是1)

}

output.collect(key, new IntWritable(count)); //最后的输出形式就是key和count值

}

}

public intrun(String[] args) throws Exception {

Configuration conf = getConf();

JobConf job= new JobConf(conf, CitationHistogram.class);

Path in =new Path(args[0]);

Path out =new Path(args[1]);

FileInputFormat.setInputPaths(job, in);

FileOutputFormat.setOutputPath(job, out);

job.setJobName("CitationHistogram");

job.setMapperClass(MapClass.class);

job.setReducerClass(Reduce.class);

job.setInputFormat(KeyValueTextInputFormat.class);

job.setOutputFormat(TextOutputFormat.class);

job.setOutputKeyClass(IntWritable.class);//因为上面Reducer中的K是IntWritable,这里必须保持一致

job.setOutputValueClass(IntWritable.class);//因为上面Reducer中的V都是IntWritable,这里必须保持一致

JobClient.runJob(job);

return0;

}

public static void main(String[] args) throwsException{

int res =ToolRunner.run(new Configuration(), new CitationHistogram(),args);

System.exit(res);

}

}

532

532

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?