What this program does:

(1) Reads a file

(2) Counts how many times each word occurs

1. Create words.txt and upload it to HDFS

Create the words.txt file and add some content:

vim words.txt

# Add comma-separated words (any words will do)

hadoop,hive,hbase

spark,flink,kafka

python,java,scala

sqoop,hello,world

sqoop,hello,world

sqoop,hello,world

sqoop,hello,world

sqoop,hello,world

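Upload the file to HDFS. The run command in step 4 reads /input1/words.txt, so /input1 must already exist as a directory; if it does not, create it first with hdfs dfs -mkdir -p /input1.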

hdfs dfs -put words.txt /input1

2. Write the code

package MapReduce;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.LongWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Job;

import org.apache.hadoop.mapreduce.Mapper;

import org.apache.hadoop.mapreduce.Reducer;

import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;

import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

import java.io.IOException;

/**

* Reads a file

* and counts how many times each word occurs

*/

public class Demo01WordCount {

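//map

/**

* Mapper<LongWritable,Text,Text,LongWritable>

* Mapper<type of the map input key, type of the map input value, type of the map output key, type of the map output value>

* The default InputFormat is TextInputFormat

* TextInputFormat: passes the byte offset of each line as the key and the line itself as the value to the map side

*/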

public static class MyMapper extends Mapper<LongWritable,Text,Text,LongWritable>{

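//Implement our own map logic

/**

*

* @param key the map input key (the byte offset of the line)

* @param value the map input value (one line of text)

* @param context the MapReduce context; map output is sent to the reduce side through it

* @throws IOException

* @throws InterruptedException

*/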

@Override

protected void map(LongWritable key, Text value, Mapper<LongWritable, Text, Text, LongWritable>.Context context) throws IOException, InterruptedException {

//Get one line of data

String line = value.toString();

//Split the line on commas; each piece is one word

String[] split = line.split(",");

for (String word : split) {

//Emit (word, 1) for every word

context.write(new Text(word),new LongWritable(1));

}

}

}

/**

* Reducer<Text,LongWritable,Text,LongWritable>

* Reducer<type of the map output key, type of the map output value, type of the reduce output key, type of the reduce output value>

*/

//reduce

public static class MyReducer extends Reducer<Text,LongWritable,Text,LongWritable>{

/**

*

* @param key a key from the map output after grouping (comparable to a GROUP BY on the map output key)

* @param values the collection of values that belong to this grouped key

* @param context the MapReduce context; reduce output is written through it and ends up on the HDFS cluster

* @throws IOException

* @throws InterruptedException

*/

@Override

protected void reduce(Text key, Iterable<LongWritable> values, Reducer<Text, LongWritable, Text, LongWritable>.Context context) throws IOException, InterruptedException {

//Accumulate in a long, matching LongWritable, so large counts cannot overflow an int

long sum = 0;

for (LongWritable value : values) {

sum += value.get();

}

context.write(key,new LongWritable(sum));

}

}

public static void main(String[] args) throws IOException, ClassNotFoundException, InterruptedException {

Configuration conf = new Configuration();

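// Set the separator between key and value in the final reduce output to #

// (this property name is deprecated; newer Hadoop releases call it mapreduce.output.textoutputformat.separator)

conf.set("mapred.textoutputformat.separator", "#");

// Any other configuration goes here

// Create a Job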

Job job = Job.getInstance(conf);

// Set the number of reduce tasks

job.setNumReduceTasks(2);

// Set the job name

job.setJobName("MyWordCountMapReduceApp");

// Set the class that runs the MapReduce job (Hadoop uses it to locate the jar)

job.setJarByClass(Demo01WordCount.class);

// Configure the map side

// Set the class that the map tasks run

job.setMapperClass(MyMapper.class);

// Set the type of the map output key

job.setMapOutputKeyClass(Text.class);

// Set the type of the map output value

job.setMapOutputValueClass(LongWritable.class);

// Configure the reduce side

// Set the class that the reduce tasks run

job.setReducerClass(MyReducer.class);

// Set the type of the reduce output key

job.setOutputKeyClass(Text.class);

// Set the type of the reduce output value

job.setOutputValueClass(LongWritable.class);

// Configure the input and output paths

// The first command-line argument is the input path, the second is the output path

FileInputFormat.addInputPath(job, new Path(args[0]));

FileOutputFormat.setOutputPath(job, new Path(args[1]));

// Submit the job, wait for it to finish, and exit with a non-zero status if it failed

System.exit(job.waitForCompletion(true) ? 0 : 1);

}

}
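
The comments in the class above describe the data flow: TextInputFormat hands each line to map with its byte offset as the key, map emits (word, 1) pairs, the framework groups the pairs by key, and reduce sums each group. The following is a minimal plain-Java sketch of that flow, meant only as an illustration that runs without a cluster. It does not use any Hadoop classes; the class name WordCountFlowSketch and all of its methods are hypothetical, and it assumes Unix line endings.

```java
import java.nio.charset.StandardCharsets;
import java.util.AbstractMap;
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

// Plain-Java illustration only; none of these names are Hadoop APIs.
class WordCountFlowSketch {

    // Imitates TextInputFormat: pairs every line with the byte offset at which it starts.
    static Map<Long, String> readLines(String content) {
        Map<Long, String> records = new LinkedHashMap<>();
        long offset = 0;
        for (String line : content.split("\n")) {
            records.put(offset, line);
            // +1 accounts for the '\n' terminator (assumes Unix line endings)
            offset += line.getBytes(StandardCharsets.UTF_8).length + 1;
        }
        return records;
    }

    // Map phase: emits (word, 1) for every comma-separated word; the offset key is not used.
    static List<Map.Entry<String, Long>> map(long offset, String line) {
        List<Map.Entry<String, Long>> out = new ArrayList<>();
        for (String word : line.split(",")) {
            out.add(new AbstractMap.SimpleEntry<>(word, 1L));
        }
        return out;
    }

    // Shuffle: groups the emitted values by key, with keys in sorted order.
    static Map<String, List<Long>> shuffle(List<Map.Entry<String, Long>> pairs) {
        Map<String, List<Long>> grouped = new TreeMap<>();
        for (Map.Entry<String, Long> pair : pairs) {
            grouped.computeIfAbsent(pair.getKey(), k -> new ArrayList<>()).add(pair.getValue());
        }
        return grouped;
    }

    // Reduce phase: sums all values that belong to one key.
    static long reduce(String key, Iterable<Long> values) {
        long sum = 0;
        for (long value : values) {
            sum += value;
        }
        return sum;
    }

    // Runs the three phases in sequence and returns word -> count.
    static Map<String, Long> run(String content) {
        List<Map.Entry<String, Long>> mapped = new ArrayList<>();
        readLines(content).forEach((offset, line) -> mapped.addAll(map(offset, line)));
        Map<String, Long> result = new TreeMap<>();
        shuffle(mapped).forEach((word, ones) -> result.put(word, reduce(word, ones)));
        return result;
    }

    public static void main(String[] args) {
        String content = "hadoop,hive,hbase\nsqoop,hello,world\nsqoop,hello,world\n";
        System.out.println(readLines(content).keySet()); // [0, 18, 36]
        System.out.println(run(content));
    }
}
```

Each of the three sample lines is 17 bytes plus a newline, which is why the keys passed to map are 0, 18 and 36. The real job never looks at those keys; only the line text matters for counting.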

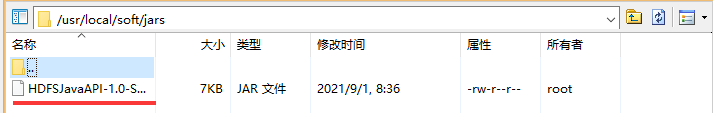

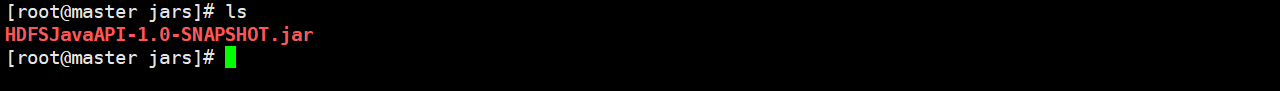

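3. Package the project and upload it to master

Build the .jar package

Upload it to master with Xftp

4. Run the MapReduce program (the output directory /output2 must not exist yet, otherwise the job fails)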

hadoop jar HDFSJavaAPI-1.0-SNAPSHOT.jar MapReduce.Demo01WordCount /input1/words.txt /output2

5. View the results

hdfs dfs -ls /output2
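
Because the job calls setNumReduceTasks(2), the listing should contain one part-r-NNNNN file per reduce task (part-r-00000 and part-r-00001) next to a _SUCCESS marker. The listing only shows file names; hdfs dfs -cat /output2/part-r-00000 prints the contents of one file. The sketch below estimates which "word#count" lines to expect for the sample words.txt and how they might be spread over the two files. It is plain Java with hypothetical names (ExpectedOutputSketch and its methods). The partition formula is the one used by Hadoop's default HashPartitioner; the byte-wise hash is an assumption about how Text keys are hashed, so treat the file assignment as indicative only.

```java
import java.nio.charset.StandardCharsets;
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

// Plain-Java illustration only; class and method names are hypothetical.
class ExpectedOutputSketch {

    // The sample words.txt from step 1.
    static String sampleWords() {
        StringBuilder sb = new StringBuilder("hadoop,hive,hbase\nspark,flink,kafka\npython,java,scala\n");
        for (int i = 0; i < 5; i++) {
            sb.append("sqoop,hello,world\n");
        }
        return sb.toString();
    }

    // Assumption: mimics the byte-wise hash Hadoop applies to Text keys.
    static int textLikeHash(String word) {
        int hash = 1;
        for (byte b : word.getBytes(StandardCharsets.UTF_8)) {
            hash = 31 * hash + b;
        }
        return hash;
    }

    // Same formula as the default HashPartitioner: non-negative hash modulo the reducer count.
    static int partitionFor(String word, int numReduceTasks) {
        return (textLikeHash(word) & Integer.MAX_VALUE) % numReduceTasks;
    }

    // Counts the words, then places one "word<separator>count" line in the list of its reducer.
    static List<List<String>> expectedParts(String content, int numReduceTasks, String separator) {
        Map<String, Long> counts = new TreeMap<>();
        for (String line : content.split("\n")) {
            for (String word : line.split(",")) {
                counts.merge(word, 1L, Long::sum);
            }
        }
        List<List<String>> parts = new ArrayList<>();
        for (int i = 0; i < numReduceTasks; i++) {
            parts.add(new ArrayList<>());
        }
        counts.forEach((word, count) ->
                parts.get(partitionFor(word, numReduceTasks)).add(word + separator + count));
        return parts;
    }

    public static void main(String[] args) {
        List<List<String>> parts = expectedParts(sampleWords(), 2, "#");
        for (int i = 0; i < parts.size(); i++) {
            System.out.println("reducer " + i + ": " + parts.get(i));
        }
    }
}
```

Whatever the split turns out to be on a real cluster, the two files together should hold twelve lines: sqoop, hello and world with a count of 5, and the other nine words with a count of 1.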
