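1. Software Environment

I used the following software versions:

- IntelliJ IDEA 2017.1

- Maven 3.3.9

- macOS with a local single-node Hadoop setup (a Docker-based distributed Hadoop environment also works; see the installation tutorial for that setup)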

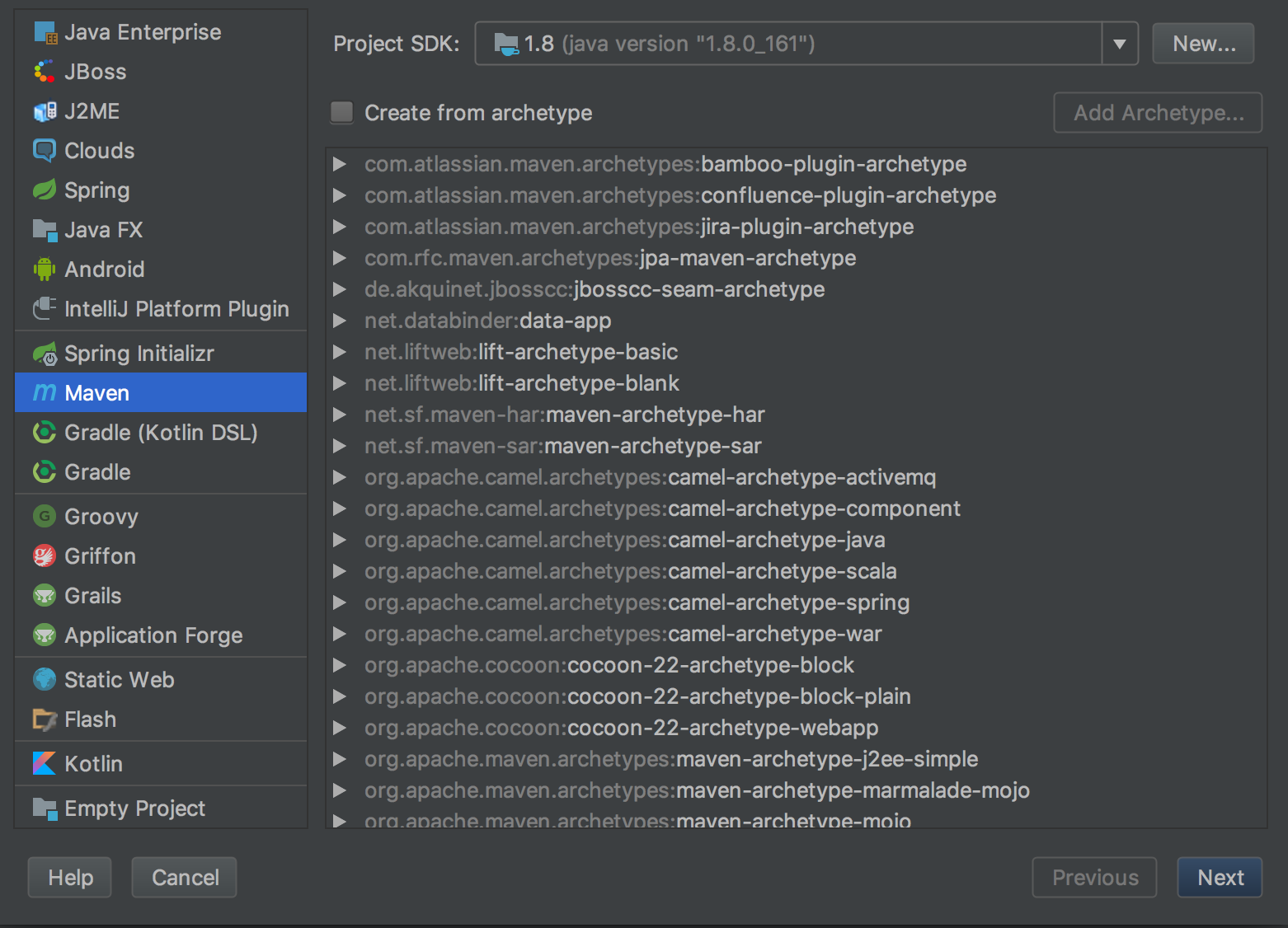

二、创建maven工程

打开Idea,file->new->Project,左侧面板选择maven工程。(如果只跑MapReduce创建java工程即可,不用勾选Creat from archetype,如果想创建web工程或者使用骨架可以勾选)

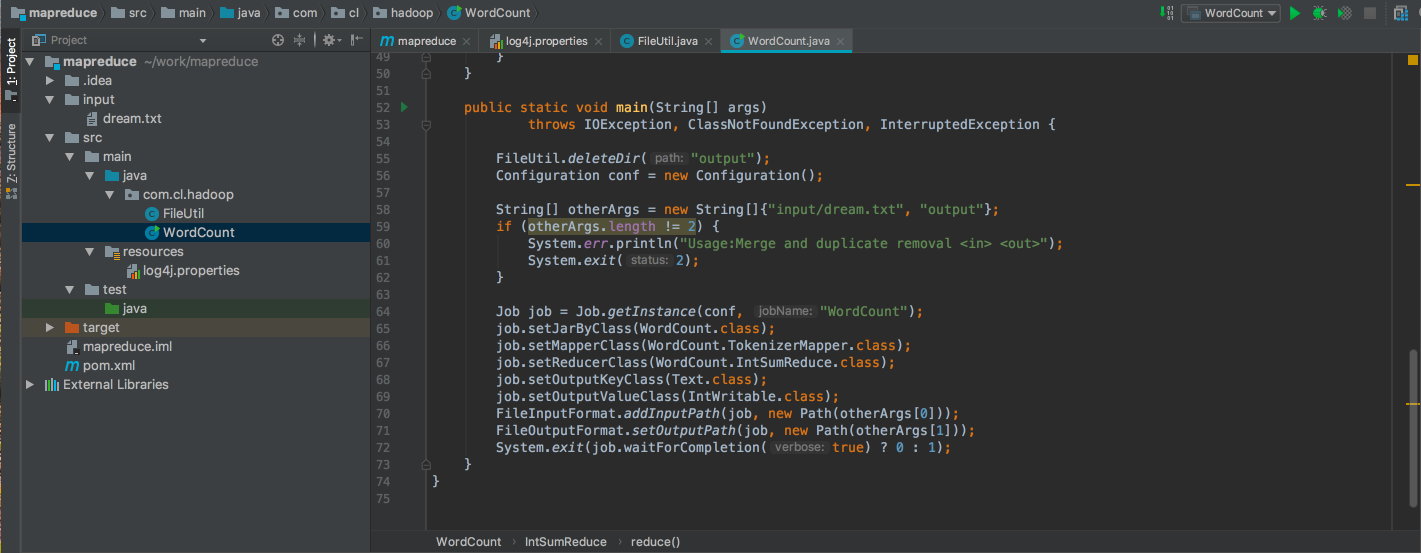

完整的工程路径如下图所示:

3. Adding Maven Dependencies

Add the dependencies to pom.xml. For Hadoop 2.8.3, the following jars are needed:

- hadoop-common

- hadoop-hdfs

- hadoop-mapreduce-client-core

- hadoop-mapreduce-client-jobclient

- log4j (for logging)

The dependencies in pom.xml are as follows:

```xml
<dependencies>
    <dependency>
        <groupId>junit</groupId>
        <artifactId>junit</artifactId>
        <version>4.12</version>
    </dependency>
    <!-- Hadoop distributed file system library -->
    <dependency>
        <groupId>org.apache.hadoop</groupId>
        <artifactId>hadoop-hdfs</artifactId>
        <version>2.8.3</version>
    </dependency>
    <!-- Hadoop common library -->
    <dependency>
        <groupId>org.apache.hadoop</groupId>
        <artifactId>hadoop-common</artifactId>
        <version>2.8.3</version>
    </dependency>
    <dependency>
        <groupId>org.apache.hadoop</groupId>
        <artifactId>hadoop-mapreduce-client-core</artifactId>
        <version>2.8.3</version>
    </dependency>
    <dependency>
        <groupId>org.apache.hadoop</groupId>
        <artifactId>hadoop-mapreduce-client-jobclient</artifactId>
        <version>2.8.3</version>
    </dependency>
    <dependency>
        <groupId>log4j</groupId>
        <artifactId>log4j</artifactId>
        <version>1.2.17</version>
    </dependency>
</dependencies>
```

4. Configuring log4j

In the src/main/resources directory, add a log4j configuration file named log4j.properties with the following content:

```properties
log4j.rootLogger = debug,stdout

### Print log output to the console ###
log4j.appender.stdout = org.apache.log4j.ConsoleAppender
log4j.appender.stdout.Target = System.out
log4j.appender.stdout.layout = org.apache.log4j.PatternLayout
log4j.appender.stdout.layout.ConversionPattern = [%-5p] %d{yyyy-MM-dd HH:mm:ss,SSS} method:%l%n%m%n
```

5. Starting Hadoop

```shell
cd /Users/cl/service/hadoop-2.8.3/sbin
./start-all.sh
```

Visit http://localhost:50070/ to check that Hadoop started correctly.

6. Running WordCount (Reading a Local File)

In the project root, create an input folder, add a file named dream.txt inside it, and write a few words into it:

```text
hello java
hello hadoop
```

Under src/main/java, create a package and add FileUtil.java with a function that deletes the output folder, so it no longer has to be deleted by hand before each run (Hadoop refuses to run a job whose output directory already exists). Its content is as follows:

```java
package com.cl.hadoop;

import java.io.File;

public class FileUtil {

    /**
     * Recursively deletes the file or directory at the given path.
     * Returns false if the path does not exist.
     */
    public static boolean deleteDir(String path) {
        File dir = new File(path);
        if (!dir.exists()) {
            System.out.println("File or directory does not exist!");
            return false;
        }
        File[] children = dir.listFiles(); // null when path is a regular file
        if (children != null) {
            for (File f : children) {
                if (f.isDirectory()) {
                    // Use the full path; f.getName() alone would be resolved
                    // against the working directory and miss nested folders.
                    deleteDir(f.getPath());
                } else {
                    f.delete();
                }
            }
        }
        dir.delete();
        return true;
    }
}
```

Write the WordCount MapReduce program in WordCount.java, with the following content:

```java
package com.cl.hadoop;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.IntWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Job;
import org.apache.hadoop.mapreduce.Mapper;
import org.apache.hadoop.mapreduce.Reducer;
import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

import java.io.IOException;
import java.util.StringTokenizer;

/**
 * Word count
 */
public class WordCount {

    // A custom Mapper must extend Mapper and override map() with its own logic.
    // The type parameters are: input key type, input value type,
    // output key type, output value type. The Reducer below is analogous.
    public static class TokenizerMapper extends Mapper<Object, Text, Text, IntWritable> {
        @Override
        public void map(Object key, Text value, Context context) throws IOException, InterruptedException {
            // Split the line into tokens, e.g. "hello java" yields hello and java
            StringTokenizer itr = new StringTokenizer(value.toString());
            // Emit each token as the key with value 1, meaning it occurred once
            while (itr.hasMoreTokens()) {
                // context.write outputs the key/value pair produced by map
                context.write(new Text(itr.nextToken()), new IntWritable(1));
            }
        }
    }

    public static class IntSumReduce extends Reducer<Text, IntWritable, Text, IntWritable> {
        @Override
        public void reduce(Text key, Iterable<IntWritable> values, Context context) throws IOException, InterruptedException {
            // key is a single word, e.g. hello, world, you, me
            // values holds that word's counts, e.g. {1,1,1} for three occurrences
            int sum = 0;
            // Add up the values to get the total count
            for (IntWritable val : values) {
                sum += val.get();
            }
            // context.write outputs the new key/value pair (the result)
            context.write(key, new IntWritable(sum));
        }
    }

    public static void main(String[] args) throws IOException, ClassNotFoundException, InterruptedException {
        FileUtil.deleteDir("output");
        Configuration conf = new Configuration();
        String[] otherArgs = new String[]{"input/dream.txt", "output"};
        if (otherArgs.length != 2) {
            System.err.println("Usage: WordCount <in> <out>");
            System.exit(2);
        }
        Job job = Job.getInstance(conf, "WordCount");
        job.setJarByClass(WordCount.class);
        job.setMapperClass(WordCount.TokenizerMapper.class);
        job.setReducerClass(WordCount.IntSumReduce.class);
        job.setOutputKeyClass(Text.class);
        job.setOutputValueClass(IntWritable.class);
        FileInputFormat.addInputPath(job, new Path(otherArgs[0]));
        FileOutputFormat.setOutputPath(job, new Path(otherArgs[1]));
        System.exit(job.waitForCompletion(true) ? 0 : 1);
    }
}
```

When the run finishes, an output folder appears in the project root. Open output/part-r-00000; its content is as follows:

```text
hadoop 1
hello 2
java 1
```

Run process
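To make the run process more concrete, the three phases the job goes through (map, shuffle, reduce) can be imitated in plain Java without Hadoop. This is only an illustrative sketch: the class WordCountSketch and its methods are hypothetical names, not part of Hadoop or of the project above, and the real framework distributes and sorts data very differently under the hood.

```java
import java.util.AbstractMap;
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.StringTokenizer;
import java.util.TreeMap;

// Hypothetical, Hadoop-free imitation of the WordCount data flow.
public class WordCountSketch {

    // "Map" phase: emit one (word, 1) pair per token, like TokenizerMapper.
    static List<Map.Entry<String, Integer>> map(String line) {
        List<Map.Entry<String, Integer>> pairs = new ArrayList<>();
        StringTokenizer itr = new StringTokenizer(line);
        while (itr.hasMoreTokens()) {
            pairs.add(new AbstractMap.SimpleEntry<>(itr.nextToken(), 1));
        }
        return pairs;
    }

    // "Shuffle" phase: group the emitted values by key; TreeMap keeps keys sorted.
    static Map<String, List<Integer>> shuffle(List<Map.Entry<String, Integer>> pairs) {
        Map<String, List<Integer>> grouped = new TreeMap<>();
        for (Map.Entry<String, Integer> p : pairs) {
            grouped.computeIfAbsent(p.getKey(), k -> new ArrayList<>()).add(p.getValue());
        }
        return grouped;
    }

    // "Reduce" phase: sum the values collected for one key, like IntSumReduce.
    static int reduce(List<Integer> values) {
        int sum = 0;
        for (int v : values) {
            sum += v;
        }
        return sum;
    }

    // Chains the three phases over all input lines.
    static Map<String, Integer> wordCount(List<String> lines) {
        List<Map.Entry<String, Integer>> pairs = new ArrayList<>();
        for (String line : lines) {
            pairs.addAll(map(line));
        }
        Map<String, Integer> result = new TreeMap<>();
        for (Map.Entry<String, List<Integer>> e : shuffle(pairs).entrySet()) {
            result.put(e.getKey(), reduce(e.getValue()));
        }
        return result;
    }

    public static void main(String[] args) {
        // Same two lines as dream.txt
        wordCount(Arrays.asList("hello java", "hello hadoop"))
                .forEach((word, count) -> System.out.println(word + " " + count));
    }
}
```

Run on the two lines of dream.txt, this sketch prints the same three word/count pairs as part-r-00000, in the same key order.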

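The main function here defines its own String array for the paths. If you would rather receive them through main's args array and specify the input and output paths at run time, that works too: before running WordCount, edit the Run/Debug Configuration in IDEA and fill in Program arguments.

7. Running WordCount (Reading from HDFS)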

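Create a new folder on HDFS:

```shell
hadoop fs -mkdir /worddir
```

If the folder cannot be created because the NameNode is in safe mode, you will see this message:

```text
mkdir: Cannot create directory /worddir. Name node is in safe mode.
```

Run the following command to leave safe mode (on Hadoop 2.x the non-deprecated form is hdfs dfsadmin -safemode leave):

```shell
hadoop dfsadmin -safemode leave
```

Upload the local file:

```shell
hadoop fs -put dream.txt /worddir
```

Change the otherArgs array so that the input points to the file's path on HDFS:

```java
String[] otherArgs = new String[]{"hdfs://localhost:9000/worddir/dream.txt","output"};
```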
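Instead of editing the hardcoded array every time you switch between the local file and HDFS, the paths could be taken from the program arguments, falling back to the local defaults when none are given. The following is a minimal sketch under that assumption; JobPaths, resolve and isHdfs are hypothetical helper names introduced for illustration, not Hadoop APIs, and the defaults simply mirror the values used earlier in this article.

```java
// Hypothetical helper: picks input/output paths from args or falls back to defaults.
public class JobPaths {

    // Defaults mirror the hardcoded local paths used in the article.
    static final String DEFAULT_INPUT = "input/dream.txt";
    static final String DEFAULT_OUTPUT = "output";

    // Returns {input, output}: the defaults for no args, the args themselves for two args.
    static String[] resolve(String[] args) {
        if (args.length == 0) {
            return new String[]{DEFAULT_INPUT, DEFAULT_OUTPUT};
        }
        if (args.length == 2) {
            return new String[]{args[0], args[1]};
        }
        throw new IllegalArgumentException("Usage: WordCount <in> <out>");
    }

    // Simple prefix check; enough to tell an hdfs:// URI from a local relative path.
    static boolean isHdfs(String path) {
        return path.startsWith("hdfs://");
    }

    public static void main(String[] args) {
        String[] paths = resolve(args);
        System.out.println("input:  " + paths[0] + (isHdfs(paths[0]) ? " (HDFS)" : " (local)"));
        System.out.println("output: " + paths[1] + (isHdfs(paths[1]) ? " (HDFS)" : " (local)"));
    }
}
```

With a helper like this, WordCount.main could use String[] otherArgs = JobPaths.resolve(args); and the HDFS run would only require setting Program arguments in the IDEA run configuration to hdfs://localhost:9000/worddir/dream.txt output.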
