The following analysis covers the map phase, specifically `org.apache.hadoop.mapred.MapTask$MapOutputBuffer`:

```java
public static class MapOutputBuffer<K extends Object, V extends Object>
    implements MapOutputCollector<K, V>, IndexedSortable {

  // ... (omitted)

  public void init(MapOutputCollector.Context context
                  ) throws IOException, ClassNotFoundException {
    job = context.getJobConf();
    reporter = context.getReporter();
    // the map task
    mapTask = context.getMapTask();
    mapOutputFile = mapTask.getMapOutputFile();
    sortPhase = mapTask.getSortPhase();
    spilledRecordsCounter = reporter.getCounter(TaskCounter.SPILLED_RECORDS);
    // number of partitions = number of reduce tasks (default 1)
    partitions = job.getNumReduceTasks();
    rfs = ((LocalFileSystem)FileSystem.getLocal(job)).getRaw();

    // sanity checks
    final float spillper =
      job.getFloat(JobContext.MAP_SORT_SPILL_PERCENT, (float)0.8);
    final int sortmb = job.getInt(JobContext.IO_SORT_MB, 100);
    indexCacheMemoryLimit = job.getInt(JobContext.INDEX_CACHE_MEMORY_LIMIT,
                                       INDEX_CACHE_MEMORY_LIMIT_DEFAULT);
    if (spillper > (float)1.0 || spillper <= (float)0.0) {
      throw new IOException("Invalid \"" + JobContext.MAP_SORT_SPILL_PERCENT +
          "\": " + spillper);
    }
    // sortmb must fit in 11 bits (0-2047) so that sortmb << 20 below cannot overflow an int
    if ((sortmb & 0x7FF) != sortmb) {
      throw new IOException(
          "Invalid \"" + JobContext.IO_SORT_MB + "\": " + sortmb);
    }
    // Records are sorted each time the map phase spills to disk. The default
    // sorter is QuickSort; a custom IndexedSorter can be set via map.sort.class
    sorter = ReflectionUtils.newInstance(job.getClass("map.sort.class",
          QuickSort.class, IndexedSorter.class), job);

    // buffers and accounting
    int maxMemUsage = sortmb << 20;
    maxMemUsage -= maxMemUsage % METASIZE;
    // the circular (ring) buffer
    kvbuffer = new byte[maxMemUsage];
    bufvoid = kvbuffer.length;
    // kv metadata: an int view over the same byte array; each record's entry
    // stores the key and value start offsets, the partition, and the value length
    kvmeta = ByteBuffer.wrap(kvbuffer)
       .order(ByteOrder.nativeOrder())
       .asIntBuffer();
    setEquator(0);
    bufstart = bufend = bufindex = equator;
    kvstart = kvend = kvindex;

    maxRec = kvmeta.capacity() / NMETA;
    softLimit = (int)(kvbuffer.length * spillper);
    bufferRemaining = softLimit;
```
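The following standalone sketch repeats the sizing arithmetic from `init()` for the defaults read above (`sortmb` = 100, `spillper` = 0.8), which makes the numbers concrete. The class name `SortBufferSizing` is hypothetical. The values `NMETA` = 4 and `METASIZE` = 16 are assumed to match the constants in the Hadoop source. The sketch also shows why `(sortmb & 0x7FF) != sortmb` rejects anything above 2047: `sortmb << 20` must still fit in a Java `int`.

```java
// Hypothetical standalone re-computation of MapOutputBuffer.init() sizing.
final class SortBufferSizing {
    static final int NMETA = 4;            // ints per metadata entry (assumed to match Hadoop)
    static final int METASIZE = NMETA * 4; // 16 bytes per metadata entry

    /** Returns {kvbuffer length in bytes, maxRec, softLimit in bytes}. */
    static int[] size(int sortmb, float spillper) {
        if (spillper > 1.0f || spillper <= 0.0f) {
            throw new IllegalArgumentException("invalid spill percent: " + spillper);
        }
        if ((sortmb & 0x7FF) != sortmb) { // only 0..2047 pass
            throw new IllegalArgumentException("invalid sort mb: " + sortmb);
        }
        int maxMemUsage = sortmb << 20;                 // MB -> bytes
        maxMemUsage -= maxMemUsage % METASIZE;          // round down to whole metadata entries
        int maxRec = (maxMemUsage / 4) / NMETA;         // same as kvmeta.capacity() / NMETA
        int softLimit = (int) (maxMemUsage * spillper); // spill threshold in bytes
        return new int[] {maxMemUsage, maxRec, softLimit};
    }

    public static void main(String[] args) {
        int[] r = size(100, 0.8f);
        System.out.println("kvbuffer bytes = " + r[0]); // 104857600
        System.out.println("maxRec         = " + r[1]); // 6553600
        System.out.println("softLimit      = " + r[2]); // 83886080
        System.out.println("2047 << 20     = " + (2047 << 20));
        System.out.println("2048 << 20     = " + (2048 << 20)); // overflows to a negative int
    }
}
```

With the defaults, the buffer is exactly 100 MB and the spill threshold is 80 MB of it. The 16-byte metadata entries give an upper bound of 6,553,600 records per buffer, a bound reached only if keys and values took no space at all.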

```java
    // ... init() continued
    // k/v serialization
    // Sort comparator for map output keys. A custom key ordering can be set
    // with mapreduce.job.output.key.comparator.class, or with
    // job.setSortComparatorClass(ComboKeyComparator.class), where
    // ComboKeyComparator extends WritableComparator and overrides compare()
    comparator = job.getOutputKeyComparator();
    // map output key class (mapreduce.map.output.key.class)
    keyClass = (Class<K>)job.getMapOutputKeyClass();
    // map output value class (mapreduce.map.output.value.class)
    valClass = (Class<V>)job.getMapOutputValueClass();
    serializationFactory = new SerializationFactory(job);
    keySerializer = serializationFactory.getSerializer(keyClass);
    keySerializer.open(bb);
    valSerializer = serializationFactory.getSerializer(valClass);
    valSerializer.open(bb);

    // output counters
    mapOutputByteCounter = reporter.getCounter(TaskCounter.MAP_OUTPUT_BYTES);
    mapOutputRecordCounter =
      reporter.getCounter(TaskCounter.MAP_OUTPUT_RECORDS);
    fileOutputByteCounter = reporter
        .getCounter(TaskCounter.MAP_OUTPUT_MATERIALIZED_BYTES);

    // compression
    // Map output compression (disabled by default) is applied while
    // sortAndSpill() writes the spill: the IFile.Writer constructor wraps
    // each partition's output stream with the codec's compression stream.
    // mapreduce.map.output.compress turns it on;
    // mapreduce.map.output.compress.codec picks the codec
    // (default org.apache.hadoop.io.compress.DefaultCodec)
    if (job.getCompressMapOutput()) {
      Class<? extends CompressionCodec> codecClass =
        job.getMapOutputCompressorClass(DefaultCodec.class);
      codec = ReflectionUtils.newInstance(codecClass, job);
    } else {
      codec = null;
    }

    // combiner
    final Counters.Counter combineInputCounter =
      reporter.getCounter(TaskCounter.COMBINE_INPUT_RECORDS);
    combinerRunner = CombinerRunner.create(job, getTaskID(),
                                           combineInputCounter,
                                           reporter, null);
    // Map-side combiner, configured via mapred.combiner.class (old API) or
    // mapreduce.job.combine.class (new API); it runs inside sortAndSpill()
    // while each spill is written
    if (combinerRunner != null) {
      final Counters.Counter combineOutputCounter =
        reporter.getCounter(TaskCounter.COMBINE_OUTPUT_RECORDS);
      combineCollector= new CombineOutputCollector<K,V>(combineOutputCounter, reporter, job);
    } else {
      combineCollector = null;
    }
    spillInProgress = false;
    minSpillsForCombine = job.getInt(JobContext.MAP_COMBINE_MIN_SPILLS, 3);
    spillThread.setDaemon(true);
    spillThread.setName("SpillThread");
    spillLock.lock();
    try {
      // Start the spill thread. Once buffer usage reaches the threshold, it
      // sorts the buffered records and writes them out as a spill file.
      // Buffer size:      mapreduce.task.io.sort.mb
      // Spill threshold:  mapreduce.map.sort.spill.percent
      spillThread.start();
      while (!spillThreadRunning) {
        spillDone.await();
      }
    } catch (InterruptedException e) {
      throw new IOException("Spill thread failed to initialize", e);
    } finally {
      spillLock.unlock();
    }
    if (sortSpillException != null) {
      throw new IOException("Spill thread failed to initialize",
          sortSpillException);
    }
  }

}
```

Next, the `sortAndSpill()` method of `MapTask$MapOutputBuffer`. It is called from the spill thread and also from the final `flush()`:

```java
private void sortAndSpill() throws IOException, ClassNotFoundException,
                                   InterruptedException {
  //approximate the length of the output file to be the length of the
  //buffer + header lengths for the partitions
  final long size = distanceTo(bufstart, bufend, bufvoid) +
              partitions * APPROX_HEADER_LENGTH;
  FSDataOutputStream out = null;
  try {
    // create spill file
    final SpillRecord spillRec = new SpillRecord(partitions);
    // spill file name
    final Path filename =
        mapOutputFile.getSpillFileForWrite(numSpills, size);
    out = rfs.create(filename);

    final int mstart = kvend / NMETA;
    final int mend = 1 + // kvend is a valid record
      (kvstart >= kvend
      ? kvstart
      : kvmeta.capacity() + kvstart) / NMETA;
    // Sort (QuickSort by default). MapOutputBuffer.compare() orders records
    // by partition first, then by key using the configured comparator; for a
    // WritableComparable key with no registered raw comparator, this ends up
    // calling the key's compareTo()
    sorter.sort(MapOutputBuffer.this, mstart, mend, reporter);
```
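The size estimate and the `[mstart, mend)` range above are ring-buffer arithmetic. Metadata entries are written downward from the equator and can wrap past index 0, which is why the write loop below reads slot `spindex % maxRec`. The sketch below models only that index math on a toy 40-int `kvmeta` (10 entries). It assumes, as the comment "kvend is a valid record" suggests, that `kvstart` is the int index of the first record's entry and `kvend` that of the last one. The class name `SpillRange` and the toy sizes are hypothetical. `distanceTo` restates the helper of the same name in `MapOutputBuffer`.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical model of the index arithmetic in sortAndSpill().
final class SpillRange {
    static final int NMETA = 4; // ints per metadata entry

    // Forward distance from i to j on a ring of size mod.
    static int distanceTo(int i, int j, int mod) {
        return i <= j ? j - i : mod - i + j;
    }

    // Metadata slots covered by one spill, in the order the write loop visits them.
    static List<Integer> slots(int kvstart, int kvend, int capacity) {
        int maxRec = capacity / NMETA;
        int mstart = kvend / NMETA;
        int mend = 1 + (kvstart >= kvend ? kvstart : capacity + kvstart) / NMETA;
        List<Integer> result = new ArrayList<>();
        for (int spindex = mstart; spindex < mend; spindex++) {
            result.add(spindex % maxRec); // "% maxRec" folds the wrapped part back to 0, 1, ...
        }
        return result;
    }

    public static void main(String[] args) {
        System.out.println(distanceTo(10, 50, 100)); // 40: no wrap
        System.out.println(distanceTo(90, 20, 100)); // 30: wraps past the end
        System.out.println(slots(36, 8, 40));        // [2, 3, 4, 5, 6, 7, 8, 9]
        System.out.println(slots(4, 32, 40));        // [8, 9, 0, 1]
    }
}
```

In the second case the records occupy the top two and bottom two slots of the toy buffer. `mend` therefore runs past `maxRec`, and the modulo brings it back to slots 0 and 1.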

```java
    // ... sortAndSpill() continued: write the sorted records one partition at a time
    int spindex = mstart;
    final IndexRecord rec = new IndexRecord();
    final InMemValBytes value = new InMemValBytes();
    for (int i = 0; i < partitions; ++i) {
      IFile.Writer<K, V> writer = null;
      try {
        long segmentStart = out.getPos();
        FSDataOutputStream partitionOut = CryptoUtils.wrapIfNecessary(job, out);
        // Create the IFile writer; if map output compression is enabled,
        // the constructor sets up the compression stream
        writer = new Writer<K, V>(job, partitionOut, keyClass, valClass, codec,
                                  spilledRecordsCounter);
        // no combiner configured
        if (combinerRunner == null) {
          // spill directly
          DataInputBuffer key = new DataInputBuffer();
          while (spindex < mend &&
              kvmeta.get(offsetFor(spindex % maxRec) + PARTITION) == i) {
            final int kvoff = offsetFor(spindex % maxRec);
            int keystart = kvmeta.get(kvoff + KEYSTART);
            int valstart = kvmeta.get(kvoff + VALSTART);
            key.reset(kvbuffer, keystart, valstart - keystart);
            getVBytesForOffset(kvoff, value);
            writer.append(key, value);
            ++spindex;
          }
        } else {
          // combiner configured: collect this partition's records and pass
          // them through the user-supplied combiner class
          int spstart = spindex;
          while (spindex < mend &&
              kvmeta.get(offsetFor(spindex % maxRec)
                        + PARTITION) == i) {
            ++spindex;
          }
          // Note: we would like to avoid the combiner if we've fewer
          // than some threshold of records for a partition
          if (spstart != spindex) {
            combineCollector.setWriter(writer);
            RawKeyValueIterator kvIter =
              new MRResultIterator(spstart, spindex);
            combinerRunner.combine(kvIter, combineCollector);
          }
        }

        // close the writer
        writer.close();

        // record offsets
        rec.startOffset = segmentStart;
        rec.rawLength = writer.getRawLength() + CryptoUtils.cryptoPadding(job);
        rec.partLength = writer.getCompressedLength() + CryptoUtils.cryptoPadding(job);
        spillRec.putIndex(rec, i);

        writer = null;
      } finally {
        if (null != writer) writer.close();
      }
    }
```
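The loop above depends on the sort order. Records are sorted by partition first, so each partition's records form one contiguous run. Each run is written as one segment, and its start offset and lengths go into `spillRec`. The minimal sketch below shows that idea with simplifying assumptions: the `Rec` and `Segment` types are hypothetical, plain tab-separated text stands in for the IFile format, and there is no compression or combiner.

```java
import java.io.ByteArrayOutputStream;
import java.nio.charset.StandardCharsets;
import java.util.ArrayList;
import java.util.Comparator;
import java.util.List;

// Hypothetical, simplified model of one spill: sort, then one segment per partition.
final class SpillSketch {
    record Rec(int partition, String key, int value) {}     // stand-in for a kvmeta entry + its bytes
    record Segment(long startOffset, long length) {}         // stand-in for an index entry

    static List<Segment> spill(List<Rec> recs, int partitions, ByteArrayOutputStream out) {
        List<Rec> sorted = new ArrayList<>(recs);
        // Partition first, then key: the same ordering the sort above produces.
        sorted.sort(Comparator.comparingInt(Rec::partition).thenComparing(Rec::key));
        List<Segment> index = new ArrayList<>();
        int next = 0;
        for (int p = 0; p < partitions; p++) {
            long start = out.size();
            // Consume the contiguous run of records that belong to partition p.
            while (next < sorted.size() && sorted.get(next).partition() == p) {
                Rec r = sorted.get(next++);
                byte[] line = (r.key() + "\t" + r.value() + "\n").getBytes(StandardCharsets.UTF_8);
                out.write(line, 0, line.length);
            }
            index.add(new Segment(start, out.size() - start)); // every partition gets an entry
        }
        return index;
    }

    public static void main(String[] args) {
        List<Rec> recs = List.of(
            new Rec(1, "world", 1), new Rec(0, "hello", 1),
            new Rec(1, "hadoop", 1), new Rec(0, "hello", 1));
        ByteArrayOutputStream out = new ByteArrayOutputStream();
        List<Segment> index = spill(recs, 2, out);
        System.out.print(out.toString(StandardCharsets.UTF_8));
        System.out.println(index);
    }
}
```

For the four sample records, partition 0 gets the index entry (0, 16) and partition 1 gets (16, 17). A reader that only wants partition 1 can therefore seek straight to offset 16 and read 17 bytes.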

```java
    // ... sortAndSpill() continued
    // Spill index entries are cached in memory until indexCacheMemoryLimit is
    // reached; after that, each spill's index is written to its own index file
    if (totalIndexCacheMemory >= indexCacheMemoryLimit) {
      // create spill index file
      Path indexFilename =
          mapOutputFile.getSpillIndexFileForWrite(numSpills, partitions
              * MAP_OUTPUT_INDEX_RECORD_LENGTH);
      spillRec.writeToFile(indexFilename, job);
    } else {
      indexCacheList.add(spillRec);
      totalIndexCacheMemory +=
        spillRec.size() * MAP_OUTPUT_INDEX_RECORD_LENGTH;
    }
    LOG.info("Finished spill " + numSpills);
    ++numSpills;
  } finally {
    if (out != null) out.close();
  }
}
```

Taking WordCount as an example:

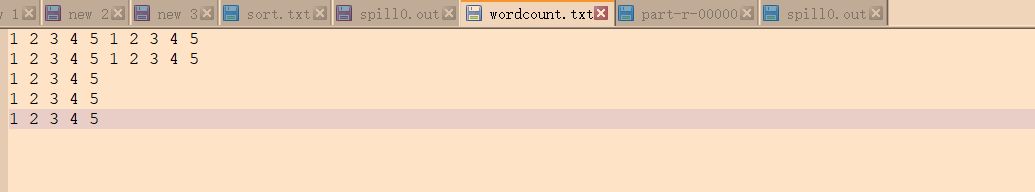

The input data file:

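The spill file (partitioned and sorted by key) is shown below. It looks like this because the job has a single partition and no map-side combiner.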

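With the number of partitions set to 2 and still no combiner, the result is shown below. The records are appended to the file one partition after another, and each partition is marked with its own partition identifier.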
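Which partition a word lands in is decided by the partitioner. Assuming the job uses Hadoop's default `HashPartitioner`, the rule is `(key.hashCode() & Integer.MAX_VALUE) % numReduceTasks`. The sketch below applies that formula to plain Java strings. The class name is hypothetical, and `String.hashCode()` is only a stand-in for `Text.hashCode()`, which hashes the UTF-8 bytes differently. A real job may therefore assign the same words to different partitions than this sketch does.

```java
// Hypothetical illustration of the default hash-partitioning formula.
final class HashPartitionSketch {
    // String.hashCode() stands in for Text.hashCode() here.
    static int getPartition(String key, int numReduceTasks) {
        // Masking the sign bit keeps the result non-negative even for negative hash codes.
        return (key.hashCode() & Integer.MAX_VALUE) % numReduceTasks;
    }

    public static void main(String[] args) {
        for (String word : new String[] {"hello", "world", "hadoop", "a", "b"}) {
            System.out.println(word + " -> partition " + getPartition(word, 2));
        }
    }
}
```

Because the partition depends only on the key, every occurrence of a word goes to the same partition in every spill of every map task. That is what lets a single reducer see all counts for that word.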

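With a combiner class configured, the spill file looks like the following. The counts are already summed on the map side, which greatly reduces the amount of data transferred during the shuffle: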
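Hadoop's standard WordCount example reuses its summing reducer as the combiner. What that combiner does to one sorted partition run can be sketched as a merge of adjacent equal keys. This is a minimal sketch: the class name is hypothetical, and it models only the summing. In Hadoop the records reach the combiner through `combinerRunner.combine()` as shown in `sortAndSpill()`.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;

// Hypothetical model of a summing combiner applied to one sorted partition run.
final class WordCountCombineSketch {
    // The run is already sorted by key, so equal keys are adjacent and can be summed in one pass.
    static List<Map.Entry<String, Integer>> combine(List<Map.Entry<String, Integer>> sorted) {
        List<Map.Entry<String, Integer>> out = new ArrayList<>();
        int i = 0;
        while (i < sorted.size()) {
            String key = sorted.get(i).getKey();
            int sum = 0;
            while (i < sorted.size() && sorted.get(i).getKey().equals(key)) {
                sum += sorted.get(i).getValue();
                i++;
            }
            out.add(Map.entry(key, sum));
        }
        return out;
    }

    public static void main(String[] args) {
        List<Map.Entry<String, Integer>> spilled = List.of(
            Map.entry("hadoop", 1), Map.entry("hello", 1),
            Map.entry("hello", 1), Map.entry("world", 1));
        System.out.println(combine(spilled)); // [hadoop=1, hello=2, world=1]
    }
}
```

Four records shrink to three here. With real text, where common words repeat thousands of times per spill, the reduction is much larger.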
