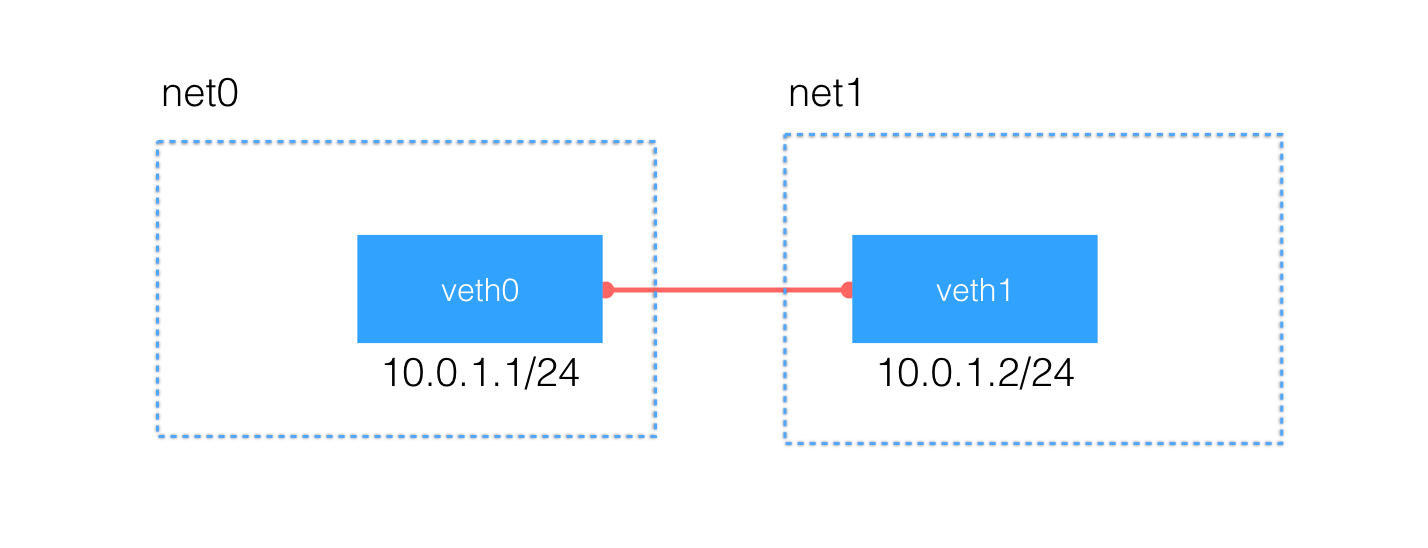

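The idea behind a veth pair matters. Creating network namespaces is the easy part: `ip netns add net0` and `ip netns add net1`. Attach each docker container to its own netns and you have network isolation.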
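A quick way to see that isolation is to list the interfaces inside each new namespace. The sketch below is only an illustration: `run`, `DRY_RUN`, and `create_namespaces` are hypothetical helpers introduced here, not part of iproute2. By default it just prints the commands; with `DRY_RUN=0` and root privileges it would execute them.

```shell
#!/bin/sh
# Sketch: create namespaces and show what each one can see.
# DRY_RUN, run, and create_namespaces are illustrative names.

DRY_RUN="${DRY_RUN:-1}"

# Print the command in dry-run mode, execute it otherwise (needs root).
run() {
    if [ "$DRY_RUN" = "1" ]; then
        echo "$*"
    else
        "$@"
    fi
}

# create_namespaces NAME...
create_namespaces() {
    for ns in "$@"; do
        run ip netns add "$ns"
        # A fresh namespace typically holds only its own loopback device.
        run ip netns exec "$ns" ip link show
    done
}

create_namespaces net0 net1
```

None of the host's interfaces should show up in the per-namespace listing, which is the isolation the text describes.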

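But docker containers on the same physical host have different IP addresses and live in different netns, so how do they talk to each other? That is the job of veth. The command `ip link add type veth` creates a veth pair: always two interfaces, one at each end.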

[root@localhost ~]# ip link add type veth

[root@localhost ~]# ip link

4: veth0: <BROADCAST,MULTICAST> mtu 1500 qdisc noop state DOWN mode DEFAULT qlen 1000

link/ether 36:88:73:83:c9:64 brd ff:ff:ff:ff:ff:ff

5: veth1: <BROADCAST,MULTICAST> mtu 1500 qdisc noop state DOWN mode DEFAULT qlen 1000

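link/ether fe:7e:75:4d:79:2e brd ff:ff:ff:ff:ff:ff

With the names spelled out, the same command is `ip link add veth0 type veth peer name veth1`. Once it completes there are 2 virtual network interfaces. They are still DOWN, so `ifconfig -a` lists them (plain `ifconfig` shows only interfaces that are up). Next, move the 2 interfaces into net0 and net1, one each, so the two namespaces can reach each other.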

[root@localhost ~]# ip link set veth0 netns net0

[root@localhost ~]# ip link set veth1 netns net1

[root@localhost ~]# ip netns exec net0 ip addr

1: lo: <LOOPBACK> mtu 65536 qdisc noop state DOWN

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

4: veth0: <BROADCAST,MULTICAST> mtu 1500 qdisc noop state DOWN qlen 1000

link/ether 36:88:73:83:c9:64 brd ff:ff:ff:ff:ff:ff

[root@localhost ~]# ip netns exec net1 ip addr

1: lo: <LOOPBACK> mtu 65536 qdisc noop state DOWN

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

5: veth1: <BROADCAST,MULTICAST> mtu 1500 qdisc noop state DOWN qlen 1000

link/ether fe:7e:75:4d:79:2e brd ff:ff:ff:ff:ff:ff

Give each end of the veth pair an IP address in the same private subnet, 10.0.1.0/24.

[root@localhost ~]# ip netns exec net0 ip link set veth0 up

[root@localhost ~]# ip netns exec net0 ip addr add 10.0.1.1/24 dev veth0

[root@localhost ~]# ip netns exec net0 ip route

10.0.1.0/24 dev veth0 proto kernel scope link src 10.0.1.1

[root@localhost ~]# ip netns exec net1 ip link set veth1 up

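[root@localhost ~]# ip netns exec net1 ip addr add 10.0.1.2/24 dev veth1

With the 2 IP addresses configured, the matching route entries were generated automatically: packets for network 10.0.1.0/24 all travel over the veth pair. Let's see whether ping works.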

[root@localhost ~]# ip netns exec net0 ping -c 3 10.0.1.2

PING 10.0.1.2 (10.0.1.2) 56(84) bytes of data.

64 bytes from 10.0.1.2: icmp_seq=1 ttl=64 time=0.039 ms

64 bytes from 10.0.1.2: icmp_seq=2 ttl=64 time=0.039 ms

64 bytes from 10.0.1.2: icmp_seq=3 ttl=64 time=0.139 ms

--- 10.0.1.2 ping statistics ---

3 packets transmitted, 3 received, 0% packet loss, time 2004ms

rtt min/avg/max/mdev = 0.039/0.072/0.139/0.047 ms
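The steps above can be collected into one script. This is a minimal sketch, not the author's script: `run`, `DRY_RUN`, and `setup_veth_pair` are hypothetical names introduced here, while the `ip` commands themselves are the ones from the transcript. By default it only prints what it would do; set `DRY_RUN=0` and run it as root to apply the changes.

```shell
#!/bin/sh
# Sketch: connect two network namespaces with one veth pair.
# DRY_RUN, run, and setup_veth_pair are illustrative names, not part of iproute2.

DRY_RUN="${DRY_RUN:-1}"

# Print the command in dry-run mode, execute it otherwise (needs root).
run() {
    if [ "$DRY_RUN" = "1" ]; then
        echo "$*"
    else
        "$@"
    fi
}

# setup_veth_pair NS_A NS_B ADDR_A ADDR_B
setup_veth_pair() {
    ns_a=$1; ns_b=$2; addr_a=$3; addr_b=$4

    run ip netns add "$ns_a"
    run ip netns add "$ns_b"

    # Create both ends at once, with explicit names.
    run ip link add veth0 type veth peer name veth1

    # Move one end into each namespace.
    run ip link set veth0 netns "$ns_a"
    run ip link set veth1 netns "$ns_b"

    # Bring each end up and give it an address in the shared subnet.
    run ip netns exec "$ns_a" ip link set veth0 up
    run ip netns exec "$ns_a" ip addr add "$addr_a" dev veth0
    run ip netns exec "$ns_b" ip link set veth1 up
    run ip netns exec "$ns_b" ip addr add "$addr_b" dev veth1
}

setup_veth_pair net0 net1 10.0.1.1/24 10.0.1.2/24
```

After a real run, `ip netns exec net0 ping -c 3 10.0.1.2` is the same check as in the transcript.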

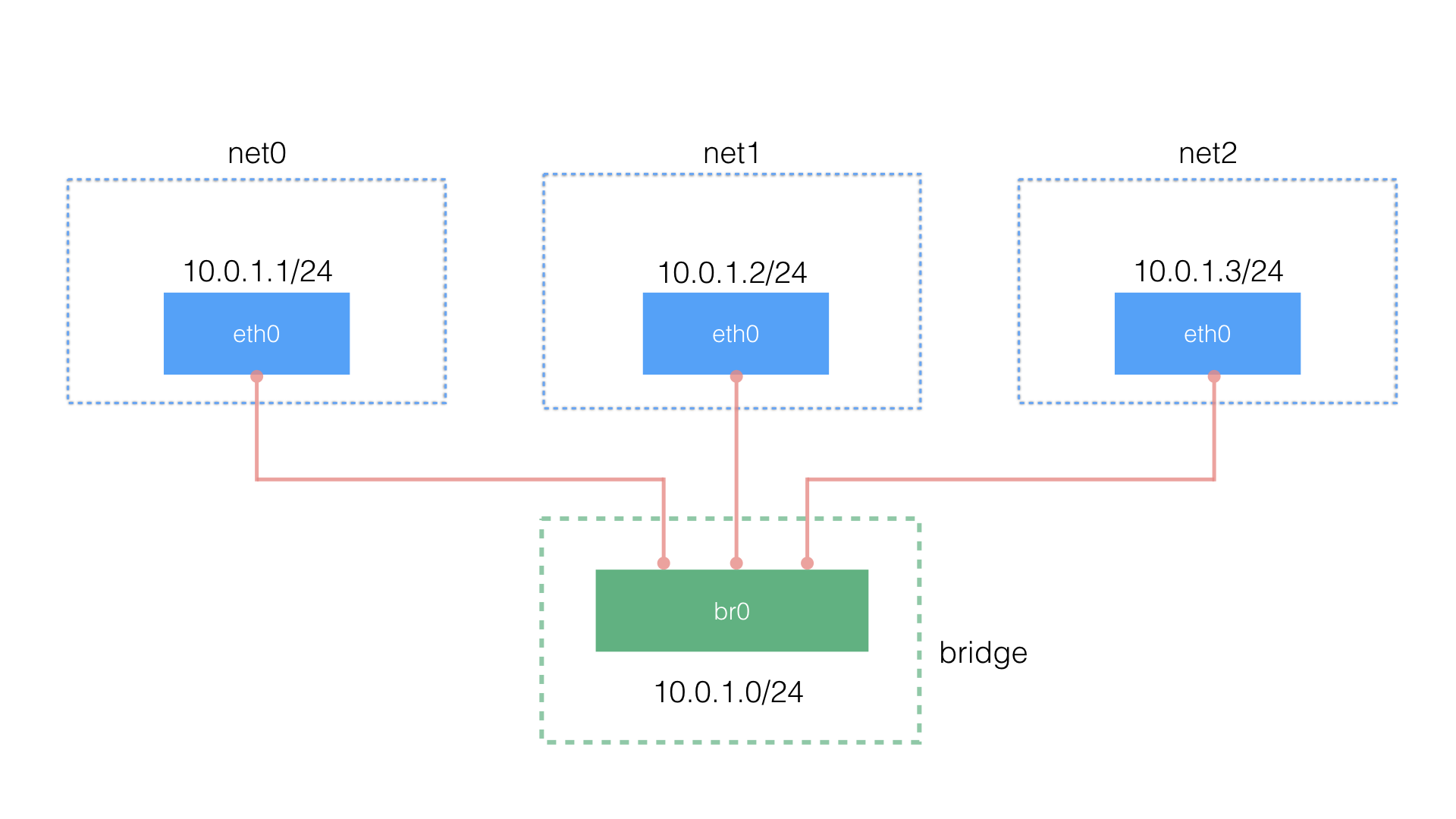

Communication between namespaces through a bridge instead of a point-to-point veth pair

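A veth pair lets two network namespaces communicate, but it stops being practical once many namespaces need to talk: a full mesh of 5 namespaces would take 10 separate pairs. Say one machine runs 5 containers in net0 through net4; how do they all reach each other? Multi-device communication calls for a switch, and Linux can create a virtual one with the ip command. Each namespace still gets a veth pair, with one end inside the namespace and the other end plugged into the bridge.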

[root@localhost ~]# ip link add br0 type bridge

[root@localhost ~]# ip link set dev br0 up

[root@localhost ~]# ip link set dev veth1 netns net0

[root@localhost ~]# ip netns exec net0 ip link set dev veth1 name eth0

[root@localhost ~]# ip netns exec net0 ip addr add 10.0.1.1/24 dev eth0

[root@localhost ~]# ip netns exec net0 ip link set dev eth0 up

[root@localhost ~]# ip link set dev veth0 master br0

[root@localhost ~]# ip link set dev veth0 up

[root@localhost ~]# bridge link

17: veth0 state UP : <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 master br0 state forwarding priority 32 cost 2

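The ping below presumes that a second namespace holding 10.0.1.3 has been attached to br0 in the same way; those commands are not shown here.

[root@localhost ~]# ip netns exec net0 ping -c 3 10.0.1.3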

PING 10.0.1.3 (10.0.1.3) 56(84) bytes of data.

64 bytes from 10.0.1.3: icmp_seq=1 ttl=64 time=0.251 ms

64 bytes from 10.0.1.3: icmp_seq=2 ttl=64 time=0.047 ms

64 bytes from 10.0.1.3: icmp_seq=3 ttl=64 time=0.046 ms

--- 10.0.1.3 ping statistics ---

3 packets transmitted, 3 received, 0% packet loss, time 2008ms
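The transcript above attaches a single namespace to br0. Extending it to the 5-namespace case could look like the following sketch. Several things here are assumptions rather than anything from the original: the helper names `run` and `attach_to_bridge`, the `DRY_RUN` switch, the interface names `veth-<ns>` and `peer-<ns>`, and the address plan that gives net0 through net4 the addresses 10.0.1.1 through 10.0.1.5. By default the script only prints the commands.

```shell
#!/bin/sh
# Sketch: attach several namespaces to one Linux bridge.
# Helper names, interface names, and the address plan are illustrative.

DRY_RUN="${DRY_RUN:-1}"

# Print the command in dry-run mode, execute it otherwise (needs root).
run() {
    if [ "$DRY_RUN" = "1" ]; then
        echo "$*"
    else
        "$@"
    fi
}

# attach_to_bridge BRIDGE NS ADDR
attach_to_bridge() {
    bridge=$1; ns=$2; addr=$3
    host_if="veth-$ns"   # stays on the host, plugged into the bridge
    peer_if="peer-$ns"   # moves into the namespace and becomes eth0

    run ip link add "$host_if" type veth peer name "$peer_if"
    run ip link set dev "$peer_if" netns "$ns"
    run ip netns exec "$ns" ip link set dev "$peer_if" name eth0
    run ip netns exec "$ns" ip addr add "$addr" dev eth0
    run ip netns exec "$ns" ip link set dev eth0 up
    run ip link set dev "$host_if" master "$bridge"
    run ip link set dev "$host_if" up
}

run ip link add br0 type bridge
run ip link set dev br0 up

# net0..net4 get 10.0.1.1 .. 10.0.1.5 (assumed address plan).
i=0
while [ "$i" -lt 5 ]; do
    run ip netns add "net$i"
    attach_to_bridge br0 "net$i" "10.0.1.$((i + 1))/24"
    i=$((i + 1))
done
```

The bridge needs one veth pair per namespace, so 5 pairs here instead of the 10 a full mesh would require. Under this address plan, 10.0.1.3 belongs to net2.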

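These are foundational technologies for cloud computing. If you are building a public cloud, this networking knowledge is essential; without it you cannot implement tenant network isolation or SDN.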
