1. Check whether the graphics driver on the machine is the latest version and whether it needs to be updated.
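One way to do this check from a script is to ask `nvidia-smi` for the driver version. The following is only a rough sketch: it assumes `nvidia-smi` is on the PATH, and the helper names and the 410 threshold constant are my own (the threshold comes from the CUDA 10.0 note in the table in step 2).

```python
import shutil
import subprocess


def parse_driver_version(text):
    """Turn nvidia-smi output such as '430.86' into a tuple of ints."""
    for line in text.splitlines():
        line = line.strip()
        if line:
            return tuple(int(part) for part in line.split("."))
    return None


def installed_driver_version():
    """Return the driver version, or None if nvidia-smi is missing or fails."""
    if shutil.which("nvidia-smi") is None:
        return None
    result = subprocess.run(
        ["nvidia-smi", "--query-gpu=driver_version", "--format=csv,noheader"],
        stdout=subprocess.PIPE,
        stderr=subprocess.PIPE,
        universal_newlines=True,
    )
    if result.returncode != 0:
        return None
    return parse_driver_version(result.stdout)


# Assumption: 410.x is the minimum driver listed for CUDA 10.0 in step 2.
MIN_DRIVER_FOR_CUDA_10_0 = (410,)

version = installed_driver_version()
if version is None:
    print("nvidia-smi not found or failed; check the driver installation")
elif version < MIN_DRIVER_FOR_CUDA_10_0:
    print("driver", version, "is older than 410.x; update it")
else:
    print("driver", version, "is new enough for CUDA 10.0")
```

If the script reports an older driver, update it before installing CUDA.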

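2. Pick the CUDA and cuDNN versions that match the tensorflow_gpu version you are going to install. Note that the tensorflow 1.14 I installed first requires CUDA 10.0, but I had installed CUDA 10.1, which is why it reported an error.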

| Version | Python version | Compiler | Build tool | cuDNN | CUDA |
| --- | --- | --- | --- | --- | --- |
| tensorflow_gpu-2.0.0-alpha0 | 2.7, 3.3-3.6 | GCC 4.8 | Bazel 0.19.2 | 7.4.1 and higher | 10.0 (requires driver 410.x or higher) |
| tensorflow_gpu-1.13.0 | 2.7, 3.3-3.6 | GCC 4.8 | Bazel 0.19.2 | 7.4 | 10.0 |
| tensorflow_gpu-1.12.0 | 2.7, 3.3-3.6 | GCC 4.8 | Bazel 0.15.0 | 7 | 9 |
| tensorflow_gpu-1.11.0 | 2.7, 3.3-3.6 | GCC 4.8 | Bazel 0.15.0 | 7 | 9 |
| tensorflow_gpu-1.10.0 | 2.7, 3.3-3.6 | GCC 4.8 | Bazel 0.15.0 | 7 | 9 |
| tensorflow_gpu-1.9.0 | 2.7, 3.3-3.6 | GCC 4.8 | Bazel 0.11.0 | 7 | 9 |
| tensorflow_gpu-1.8.0 | 2.7, 3.3-3.6 | GCC 4.8 | Bazel 0.10.0 | 7 | 9 |
| tensorflow_gpu-1.7.0 | 2.7, 3.3-3.6 | GCC 4.8 | Bazel 0.9.0 | 7 | 9 |
| tensorflow_gpu-1.6.0 | 2.7, 3.3-3.6 | GCC 4.8 | Bazel 0.9.0 | 7 | 9 |
| tensorflow_gpu-1.5.0 | 2.7, 3.3-3.6 | GCC 4.8 | Bazel 0.8.0 | 7 | 9 |
| tensorflow_gpu-1.4.0 | 2.7, 3.3-3.6 | GCC 4.8 | Bazel 0.5.4 | 6 | 8 |
| tensorflow_gpu-1.3.0 | 2.7, 3.3-3.6 | GCC 4.8 | Bazel 0.4.5 | 6 | 8 |
| tensorflow_gpu-1.2.0 | 2.7, 3.3-3.6 | GCC 4.8 | Bazel 0.4.5 | 5.1 | 8 |
| tensorflow_gpu-1.1.0 | 2.7, 3.3-3.6 | GCC 4.8 | Bazel 0.4.2 | 5.1 | 8 |
| tensorflow_gpu-1.0.0 | 2.7, 3.3-3.6 | GCC 4.8 | Bazel 0.4.2 | 5.1 | 8 |

NVIDIA's CUDA release is now at version 10.1, and the latest cuDNN that supports CUDA 10.1 is 7.5.0.
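The mismatch described in this step (CUDA 10.1 installed where 10.0 was required) can be caught up front with a small lookup. This is just a sketch: the dictionary copies only a few rows from the table above, and the names `REQUIREMENTS` and `cuda_matches` are made up for illustration.

```python
# CUDA / cuDNN requirements copied from a few rows of the table above.
REQUIREMENTS = {
    "1.13.0": {"cuda": "10.0", "cudnn": "7.4"},
    "1.12.0": {"cuda": "9", "cudnn": "7"},
    "1.4.0": {"cuda": "8", "cudnn": "6"},
    "1.2.0": {"cuda": "8", "cudnn": "5.1"},
}


def cuda_matches(tf_version, installed_cuda):
    """Check the installed CUDA version against the table entry.

    Only as many version components as the table specifies are compared,
    so a requirement of "9" accepts "9.0", while "10.0" rejects "10.1".
    """
    required = REQUIREMENTS[tf_version]["cuda"].split(".")
    installed = installed_cuda.split(".")
    return installed[:len(required)] == required


print(cuda_matches("1.13.0", "10.1"))  # the kind of mismatch described above
print(cuda_matches("1.13.0", "10.0"))
```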

3. Download the cuDNN package that matches your CUDA version and copy its contents into the CUDA installation folder.

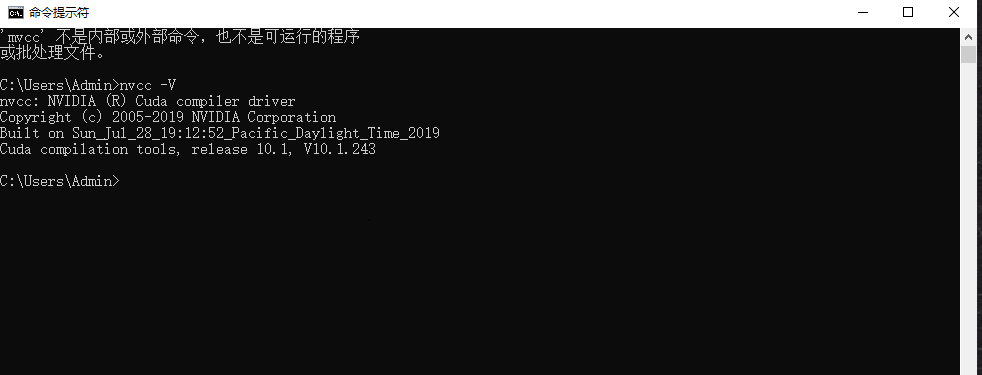
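The copy can also be scripted. The sketch below assumes the extracted cuDNN package contains `bin`, `include` and `lib` subfolders that mirror the CUDA installation layout; the function name `copy_cudnn` and any paths you pass to it are illustrative and need adjusting to your machine.

```python
import os
import shutil


def copy_cudnn(cudnn_dir, cuda_dir):
    """Copy bin/, include/ and lib/ from the extracted cuDNN package
    into the matching folders of the CUDA installation directory."""
    copied = []
    for sub in ("bin", "include", "lib"):
        src_root = os.path.join(cudnn_dir, sub)
        if not os.path.isdir(src_root):
            continue  # this package does not ship the subfolder
        for root, _dirs, files in os.walk(src_root):
            rel = os.path.relpath(root, cudnn_dir)
            dst_root = os.path.join(cuda_dir, rel)
            os.makedirs(dst_root, exist_ok=True)
            for name in files:
                shutil.copy2(os.path.join(root, name),
                             os.path.join(dst_root, name))
                copied.append(os.path.join(rel, name))
    return sorted(copied)


# Example call with hypothetical paths (run with administrator rights
# if the CUDA folder is under Program Files):
# copy_cudnn(r"D:\Downloads\cudnn\cuda", r"C:\path\to\CUDA\v10.0")
```

The function returns the list of relative paths it copied, which makes it easy to confirm that the cuDNN DLL and header actually landed in the CUDA folder.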

4. Run `nvcc -V` in cmd to check that the expected CUDA version is installed correctly.
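If you would rather check this from Python, something like the following should work. It is a sketch that assumes `nvcc` is on the PATH and that its output contains a `release X.Y` fragment; the helper names are my own.

```python
import re
import shutil
import subprocess


def parse_nvcc_release(text):
    """Extract the 'release X.Y' number from nvcc -V output."""
    match = re.search(r"release (\d+\.\d+)", text)
    return match.group(1) if match else None


def installed_cuda_release():
    """Return the CUDA release reported by nvcc, or None if nvcc is missing."""
    if shutil.which("nvcc") is None:
        return None
    result = subprocess.run(
        ["nvcc", "-V"],
        stdout=subprocess.PIPE,
        stderr=subprocess.STDOUT,
        universal_newlines=True,
    )
    return parse_nvcc_release(result.stdout)


print("CUDA release:", installed_cuda_release())
```

A result of `None` usually means the CUDA `bin` folder is not on the PATH.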

5. Change the pip download source and install tensorflow from the Tsinghua mirror.

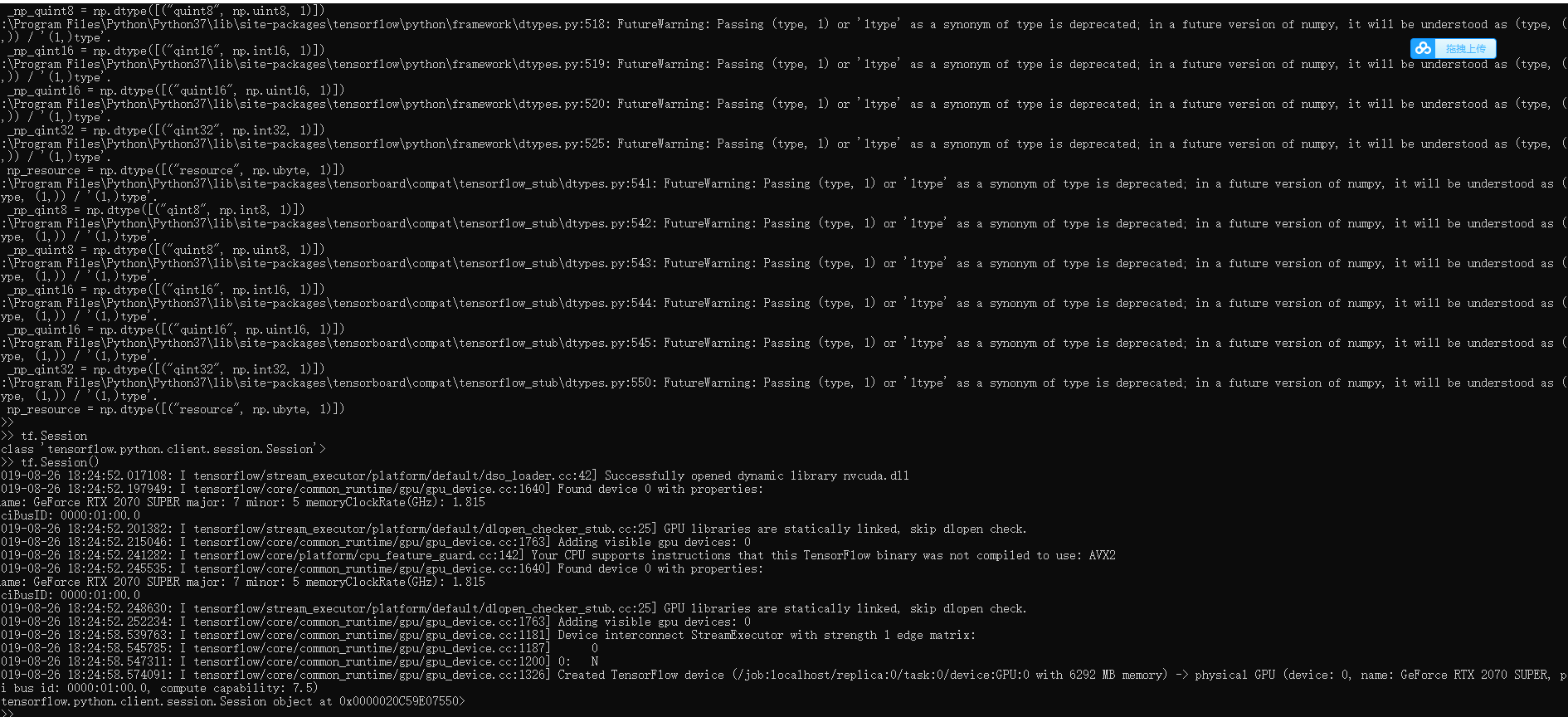
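For a one-off install you can also pass the mirror on the command line instead of changing the pip configuration. Here is a small sketch that builds that command; the helper name is made up, and the version `1.14.0` is assumed from the tensorflow 1.14 mentioned in step 2.

```python
import sys

# Tsinghua PyPI mirror index
TSINGHUA_INDEX = "https://pypi.tuna.tsinghua.edu.cn/simple"


def pip_install_command(package, version=None, index_url=TSINGHUA_INDEX):
    """Build a 'python -m pip install' command that uses the given index."""
    requirement = package if version is None else "{}=={}".format(package, version)
    return [sys.executable, "-m", "pip", "install", requirement, "-i", index_url]


command = pip_install_command("tensorflow-gpu", "1.14.0")
print(" ".join(command))

# To actually run the install:
# import subprocess
# subprocess.check_call(command)
```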

6. Test whether the tensorflow-gpu environment is configured correctly.

```python
#!D:/Code/python
# -*- coding: utf-8 -*-
# @Time    : 2019/8/26 18:28
# @Author  : Johnye
# @Site    :
# @File    : test_tensorflow_gpu.py
# @Software: PyCharm
import tensorflow as tf

# Build a constant op and evaluate it in a session (TensorFlow 1.x API).
hello = tf.constant("hello111")
sess = tf.Session()
print(sess.run(hello))
```
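The hello test only shows that tensorflow runs at all, not that it is using the GPU. As an extra check, here is a sketch that uses `tf.test.is_gpu_available()`, which is the TensorFlow 1.x way to ask this (later releases deprecate it); the wrapper function `gpu_status` is my own.

```python
def gpu_status():
    """Report whether tensorflow is importable and can see a GPU."""
    try:
        import tensorflow as tf
    except ImportError:
        return "tensorflow is not installed"
    # TF 1.x check; deprecated in later releases.
    if tf.test.is_gpu_available():
        return "tensorflow can use a GPU"
    return "tensorflow is installed but sees no GPU"


print(gpu_status())
```

If it reports no GPU, go back over the driver, CUDA and cuDNN versions from the earlier steps.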

7. Downgrade numpy to 1.16; doing so resolves the warnings.
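To see whether the downgrade is needed at all, you can compare the installed numpy version with 1.16. A minimal sketch follows; the helper name `needs_downgrade` is made up, and the threshold is simply the 1.16 mentioned above.

```python
def needs_downgrade(numpy_version, max_version=(1, 16)):
    """Return True if the major.minor version is newer than 1.16."""
    major, minor = (int(part) for part in numpy_version.split(".")[:2])
    return (major, minor) > max_version


try:
    import numpy
    current = numpy.__version__
except ImportError:
    current = None

if current is None:
    print("numpy is not installed")
elif needs_downgrade(current):
    print('numpy', current, 'is newer than 1.16; try: pip install "numpy==1.16.*"')
else:
    print("numpy", current, "is fine")
```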
