1. Start the ZooKeeper cluster

# Run on h0 and on h1

zkServer.sh start
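Instead of logging in to each host, the same command can be run from one machine. This is a minimal sketch that assumes passwordless SSH to h0 and h1 and that zkServer.sh is on the PATH of a non-interactive remote shell. `zk_all` is a hypothetical helper name, not part of ZooKeeper:

```shell
#!/usr/bin/env bash
# Hypothetical helper: run "zkServer.sh <action>" on every listed host via SSH.
# Assumes passwordless SSH and zkServer.sh on the remote non-interactive PATH.
zk_all() {
  local action="$1"; shift
  local host rc=0
  for host in "$@"; do
    echo "== $host: zkServer.sh $action"
    # -n keeps ssh from consuming this script's stdin
    ssh -n "$host" "zkServer.sh $action" || rc=1
  done
  # Non-zero if the command failed on any host
  return "$rc"
}

# Usage (not executed here):
#   zk_all start h0 h1
#   zk_all status h0 h1
```

The loop continues past a failing host so that every host is reported, and the return code still signals the failure.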

# Check the ZooKeeper status

zkServer.sh status

2. Start Storm

# Run on h0

nohup storm nimbus &

nohup storm ui &
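When standard output is a terminal, `nohup cmd &` appends the output to nohup.out in the current directory, so daemons started from the same directory end up sharing one file. The following sketch gives each daemon its own log file and PID file. `start_daemon`, `stop_daemon` and the default `LOG_DIR` are hypothetical names, not Storm commands:

```shell
#!/usr/bin/env bash
# Hypothetical helpers: run a command in the background with nohup, write its
# output to $LOG_DIR/<name>.log and record its PID in $LOG_DIR/<name>.pid.
LOG_DIR="${LOG_DIR:-$HOME/storm-logs}"   # assumed location, adjust as needed

start_daemon() {
  local name="$1"; shift
  mkdir -p "$LOG_DIR" || return 1
  nohup "$@" > "$LOG_DIR/$name.log" 2>&1 &
  echo "$!" > "$LOG_DIR/$name.pid"
  echo "started $name (pid $!)"
}

stop_daemon() {
  local pidfile="$LOG_DIR/$1.pid"
  [ -f "$pidfile" ] || { echo "no pid file for $1" >&2; return 1; }
  kill "$(cat "$pidfile")" && rm -f "$pidfile"
}

# Usage (not executed here):
#   on h0:       start_daemon nimbus storm nimbus; start_daemon ui storm ui
#   on h1, h2:   start_daemon supervisor storm supervisor
```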

# Run on h1 and h2

nohup storm supervisor &

3. Start HDFS

# Run on h0

start-dfs.sh

4. Start Kafka

# On h0, start the Kafka service as two brokers

nohup kafka-server-start.sh $KAFKA_HOME/config/server1.properties &

nohup kafka-server-start.sh $KAFKA_HOME/config/server2.properties &
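Two brokers on one host cannot share a single config file, because each one needs its own `broker.id`, listening port and `log.dirs`. This sketch derives a second file from the first. It assumes Kafka 0.9 or later, which uses the `listeners` setting. The id 2, port 9093 and log directory in the usage line are example values, not taken from this setup. If your file also sets `advertised.listeners`, that line needs the same treatment. `derive_broker_conf` is a hypothetical helper:

```shell
#!/usr/bin/env bash
# Hypothetical helper: copy a broker config and override broker.id, the
# listener port and log.dirs so a second broker can run on the same host.
# Assumes Kafka 0.9+ (listeners=PLAINTEXT://...).
derive_broker_conf() {
  local src="$1" dst="$2" id="$3" port="$4" logdir="$5"
  [ -f "$src" ] || { echo "missing $src" >&2; return 1; }
  # Copy everything except the (uncommented) settings we are about to replace...
  grep -Ev '^[[:space:]]*(broker\.id|port|listeners|log\.dirs)[[:space:]]*=' "$src" > "$dst"
  # ...then append the per-broker values.
  {
    echo "broker.id=$id"
    echo "listeners=PLAINTEXT://:$port"
    echo "log.dirs=$logdir"
  } >> "$dst"
}

# Usage (not executed here):
#   derive_broker_conf "$KAFKA_HOME/config/server1.properties" \
#     "$KAFKA_HOME/config/server2.properties" 2 9093 /tmp/kafka-logs-2
```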

# List all topics

kafka-topics.sh --list --zookeeper h0:2181

# Create a topic

kafka-topics.sh --create --zookeeper h0:2181 --replication-factor 1 --partitions 1 --topic test
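`--create` fails when the topic already exists, so a script that may be re-run can check the topic list first. This is a sketch. `ensure_topic` is a hypothetical helper, and `KAFKA_TOPICS` and `ZK` are overridable variables that default to the command and ZooKeeper address used above:

```shell
#!/usr/bin/env bash
# Hypothetical helper: create a topic only if it is not already listed.
ZK="${ZK:-h0:2181}"
KAFKA_TOPICS="${KAFKA_TOPICS:-kafka-topics.sh}"

ensure_topic() {
  local topic="$1" partitions="${2:-1}" replicas="${3:-1}"
  # -x: whole-line match, -F: topic name taken literally
  if "$KAFKA_TOPICS" --list --zookeeper "$ZK" | grep -qxF "$topic"; then
    echo "topic $topic already exists"
  else
    "$KAFKA_TOPICS" --create --zookeeper "$ZK" \
      --replication-factor "$replicas" --partitions "$partitions" --topic "$topic"
  fi
}

# Usage (not executed here):
#   ensure_topic test          # 1 partition, replication factor 1
```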

# Start a console producer

kafka-console-producer.sh --broker-list h0:9092 --topic test
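The console producer reads messages from standard input, one per line, and exits at end of input, so scripted tests can feed it from a pipe. This is a sketch. `send_messages`, `BROKERS` and `KAFKA_PRODUCER` are hypothetical names whose defaults are the values above:

```shell
#!/usr/bin/env bash
# Hypothetical helper: send each argument as one message to a topic by
# piping into the console producer instead of typing interactively.
BROKERS="${BROKERS:-h0:9092}"
KAFKA_PRODUCER="${KAFKA_PRODUCER:-kafka-console-producer.sh}"

send_messages() {
  local topic="$1"; shift
  printf '%s\n' "$@" | "$KAFKA_PRODUCER" --broker-list "$BROKERS" --topic "$topic"
}

# Usage (not executed here):
#   send_messages test "hello" "world"
```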

#启动消息消费者

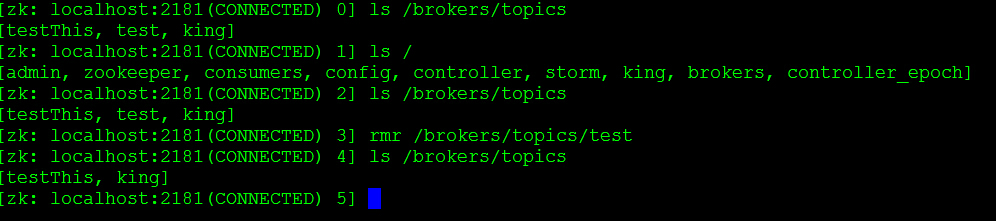

kafka-console-consumer.sh --zookeeper h0:2181 --topic test --from-beginning删除kafka中的topic,这里介绍一下在zookeeper中如何删除。执行

zkCli.sh,命令就看图吧:
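The screenshot's commands are not reproduced in text. The following sketch is based on assumptions and may not match the screenshot. In ZooKeeper-based Kafka versions, a topic's metadata lives under `/brokers/topics/<topic>` and `/config/topics/<topic>`, and pending deletions are queued under `/admin/delete_topics/<topic>`. ZooKeeper 3.4's recursive delete command is `rmr`; 3.5+ uses `deleteall`. Stop the brokers first. This only removes ZooKeeper metadata: the topic's data files in `log.dirs` stay on disk. The supported route is `kafka-topics.sh --delete --zookeeper h0:2181 --topic test`, which requires `delete.topic.enable=true` on the brokers. `zk_delete_topic` and its variables are hypothetical:

```shell
#!/usr/bin/env bash
# Hypothetical helper: remove a topic's metadata from ZooKeeper via zkCli.sh.
# Assumptions: brokers stopped; ZooKeeper 3.4 ("rmr"; set ZK_RMR=deleteall
# for 3.5+); these znode paths match your Kafka version.
ZK="${ZK:-h0:2181}"
ZKCLI="${ZKCLI:-zkCli.sh}"
ZK_RMR="${ZK_RMR:-rmr}"

zk_delete_topic() {
  local topic="$1" path
  [ -n "$topic" ] || { echo "usage: zk_delete_topic <topic>" >&2; return 1; }
  for path in "/brokers/topics/$topic" "/config/topics/$topic" "/admin/delete_topics/$topic"; do
    # Given a command after -server, zkCli.sh runs it and exits
    "$ZKCLI" -server "$ZK" "$ZK_RMR" "$path"
  done
}

# Usage (not executed here):
#   zk_delete_topic test
```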

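5. Start Flume

1. Start command

flume-ng agent -c $FLUME_CONF_DIR -f $FLUME_CONF_DIR/hdfs.conf --name a1 -Dflume.root.logger=INFO,console

2. A simple Flume configuration (see the Flume User Guide for other settings). This is the netcat-to-logger example; put it in the file passed to -f, and keep the agent name a1 consistent with --name.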

# example.conf: A single-node Flume configuration

# Name the components on this agent

a1.sources = r1

a1.sinks = k1

a1.channels = c1

# Describe/configure the source

a1.sources.r1.type = netcat

a1.sources.r1.bind = localhost

a1.sources.r1.port = 44444

# Describe the sink

a1.sinks.k1.type = logger

# Use a channel which buffers events in memory

a1.channels.c1.type = memory

a1.channels.c1.capacity = 1000

a1.channels.c1.transactionCapacity = 100

# Bind the source and sink to the channel

a1.sources.r1.channels = c1

a1.sinks.k1.channel = c1
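With this config, the agent listens on localhost:44444. Because the agent is started with `-Dflume.root.logger=INFO,console`, the logger sink prints each event to the console. The Flume User Guide tests this with `telnet localhost 44444`. The following non-interactive sketch uses `nc` instead. It assumes a netcat that supports `-w` (an idle timeout, so the command exits after the replies arrive). By default the netcat source answers `OK` for each line it receives. `flume_send` and its variables are hypothetical:

```shell
#!/usr/bin/env bash
# Hypothetical helper: send lines to the Flume netcat source configured above
# and print the source's replies.
FLUME_HOST="${FLUME_HOST:-localhost}"
FLUME_PORT="${FLUME_PORT:-44444}"
NC="${NC:-nc}"

flume_send() {
  # Each newline-terminated line becomes one Flume event.
  # -w 3: give up after 3 s of inactivity (assumed supported by your nc).
  printf '%s\n' "$@" | "$NC" -w 3 "$FLUME_HOST" "$FLUME_PORT"
}

# Usage (not executed here):
#   flume_send "hello flume"     # the agent console should log the event
```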
