Summation

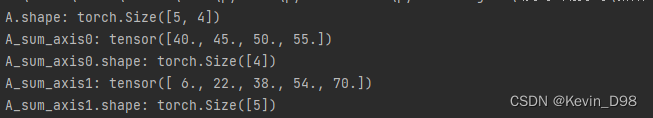

Summing a 2-D tensor:

import torch

A=torch.arange(20,dtype=torch.float32).reshape(5,4)

print(f'A.shape: {A.shape}')

A_sum_axis0=A.sum(axis=0)

print(f'A_sum_axis0: {A_sum_axis0}')

print(f'A_sum_axis0.shape: {A_sum_axis0.shape}')

A_sum_axis1=A.sum(axis=1)

print(f'A_sum_axis1: {A_sum_axis1}')

print(f'A_sum_axis1.shape: {A_sum_axis1.shape}')

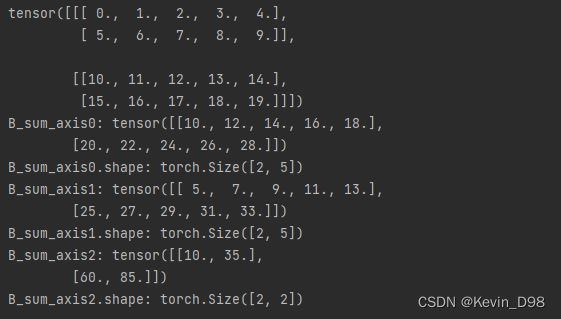
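To make the `axis` argument concrete, here is a minimal plain-Python sketch of what the two reductions above compute. It uses nested lists instead of tensors, and `sum_axis0` and `sum_axis1` are hypothetical helper names introduced only for illustration.

```python
# Same values as torch.arange(20).reshape(5, 4), built as a list of rows.
A = [[float(4 * i + j) for j in range(4)] for i in range(5)]

def sum_axis0(m):
    # Collapse the row axis: add up each column, leaving one value per column.
    return [sum(col) for col in zip(*m)]

def sum_axis1(m):
    # Collapse the column axis: add up each row, leaving one value per row.
    return [sum(row) for row in m]

print(sum_axis0(A))  # 4 values, corresponding to shape (4,)
print(sum_axis1(A))  # 5 values, corresponding to shape (5,)
```

The axis you name is the one that disappears: summing a (5, 4) input over axis 0 leaves 4 values, and summing over axis 1 leaves 5.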

Summing a 3-D tensor:

B=torch.arange(20,dtype=torch.float32).reshape(2,2,5)

print(B)

B_sum_axis0=B.sum(axis=0)

print(f'B_sum_axis0: {B_sum_axis0}')

print(f'B_sum_axis0.shape: {B_sum_axis0.shape}')

B_sum_axis1=B.sum(axis=1)

print(f'B_sum_axis1: {B_sum_axis1}')

print(f'B_sum_axis1.shape: {B_sum_axis1.shape}')

B_sum_axis2=B.sum(axis=2)

print(f'B_sum_axis2: {B_sum_axis2}')

print(f'B_sum_axis2.shape: {B_sum_axis2.shape}')

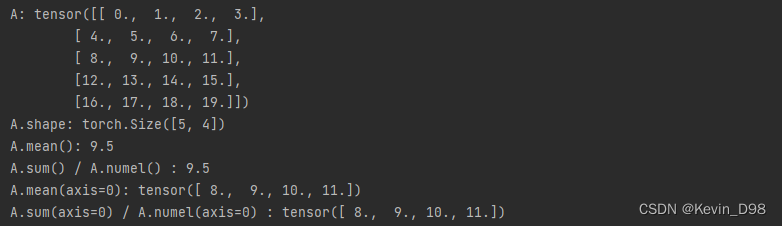
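The same rule carries over to three dimensions: the summed axis is dropped from the shape. The sketch below is plain Python with nested lists, and `reduced_shape` is a hypothetical helper, not a PyTorch function.

```python
# Same values as torch.arange(20).reshape(2, 2, 5), built as nested lists.
B = [[[float(10 * i + 5 * j + k) for k in range(5)] for j in range(2)]
     for i in range(2)]

def reduced_shape(shape, axis):
    # Hypothetical helper: the shape left after summing over `axis`.
    return tuple(n for i, n in enumerate(shape) if i != axis)

# Summing over axis 0 adds the two (2, 5) blocks elementwise.
sum_axis0 = [[B[0][j][k] + B[1][j][k] for k in range(5)] for j in range(2)]

for axis in range(3):
    print(axis, reduced_shape((2, 2, 5), axis))
print(sum_axis0)
```

For a (2, 2, 5) input, axes 0 and 1 both leave shape (2, 5), while axis 2 leaves (2, 2).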

Mean

print(f'A.mean(): {A.mean()}')

print(f'A.sum() / A.numel() : {A.sum() / A.numel()}')

print(f'A.mean(axis=0): {A.mean(axis=0)}')

print(f'A.sum(axis=0) / A.shape[0] : {A.sum(axis=0) / A.shape[0]}')

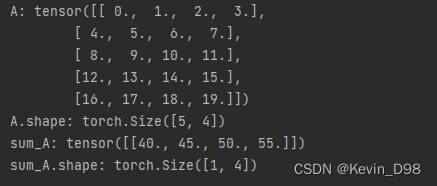
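The two pairs of prints above agree because a mean is a sum divided by the number of elements that were summed. A small plain-Python sketch of that identity, with nested lists standing in for the tensor:

```python
# Same values as torch.arange(20).reshape(5, 4).
A = [[float(4 * i + j) for j in range(4)] for i in range(5)]

total = sum(sum(row) for row in A)
count = len(A) * len(A[0])          # plays the role of A.numel()
overall_mean = total / count

# Along axis 0 each column sums over len(A) rows, so that is the divisor
# (the role played by A.shape[0] in the snippet above).
col_means = [sum(col) / len(A) for col in zip(*A)]

print(overall_mean)
print(col_means)
```

The divisor is always the size of the axis being reduced, which is why `A.shape[0]` (not `A.shape[1]`) pairs with `axis=0`.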

Sum without reducing dimensions

sum_A=A.sum(axis=0,keepdims=True)

print(f'sum_A: {sum_A}')

print(f'sum_A.shape: {sum_A.shape}')

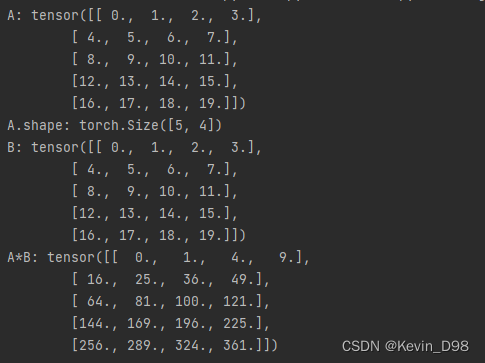
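Keeping the summed axis as a dimension of size 1 means the result still has the same number of axes as the input. As a rough plain-Python sketch (nested lists in place of tensors), the kept result is a single row, and one possible use is dividing every row of `A` by the column totals:

```python
# Same values as torch.arange(20).reshape(5, 4).
A = [[float(4 * i + j) for j in range(4)] for i in range(5)]

col_sums = [sum(col) for col in zip(*A)]   # like A.sum(axis=0): shape (4,)
col_sums_kept = [col_sums]                 # like keepdims=True: shape (1, 4)

# Divide each entry by the total of its column; every column then sums to 1.
normalized = [[a / s for a, s in zip(row, col_sums_kept[0])] for row in A]

print(col_sums_kept)
print([sum(col) for col in zip(*normalized)])
```

The values are the same with or without the kept axis; only the shape differs, (4,) versus (1, 4).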

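Hadamard product

The elementwise product of two tensors of the same shape is called the Hadamard product:

B=A.clone()  # assumption: B is redefined with A's shape (5, 4); the 3-D B above cannot be multiplied elementwise with A

print(f'B: {B}')

print(f'A*B: {A*B}')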

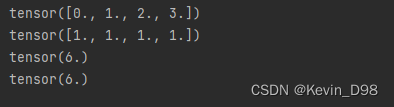

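Dot product

The sum of the elementwise products at matching positions (equivalent to multiplying elementwise, then summing the result):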

x=torch.arange(4,dtype=torch.float32)

y=torch.ones(4,dtype=torch.float32)

print(x)

print(y)

print(torch.dot(x,y))

print(torch.sum(x*y))

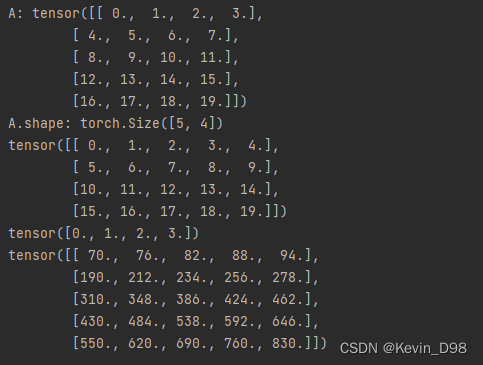

Matrix-vector product

print(torch.mv(A,x))
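A matrix-vector product can be read as one dot product per row of the matrix. The plain-Python sketch below illustrates that reading; `dot` and `mat_vec` are hypothetical helper names, and the lists mirror the `A` and `x` used above.

```python
A = [[float(4 * i + j) for j in range(4)] for i in range(5)]  # shape (5, 4)
x = [0.0, 1.0, 2.0, 3.0]                                      # shape (4,)

def dot(u, v):
    # Sum of elementwise products; both vectors must have the same length.
    if len(u) != len(v):
        raise ValueError("vectors must have the same length")
    return sum(a * b for a, b in zip(u, v))

def mat_vec(m, v):
    # One dot product per row, so the result has one entry per row of m.
    return [dot(row, v) for row in m]

print(mat_vec(A, x))
```

The number of columns of the matrix must equal the length of the vector, and the result has as many entries as the matrix has rows (5 here).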

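Matrix-matrix multiplication

B=torch.ones(4,3,dtype=torch.float32)  # assumption: an illustrative (4, 3) matrix; its row count must equal A's column count

print(torch.mm(A,B))  # equivalent to torch.matmul(A,B) for 2-D tensors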
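Matrix-matrix multiplication requires the inner dimensions to agree: an (n, k) matrix times a (k, m) matrix gives an (n, m) result. The following plain-Python sketch shows the rule; `matmul` is a hypothetical helper written here for illustration, and the (4, 3) matrix of ones is an assumed example operand.

```python
A = [[float(4 * i + j) for j in range(4)] for i in range(5)]  # shape (5, 4)
ones = [[1.0] * 3 for _ in range(4)]                          # shape (4, 3), assumed example

def matmul(a, b):
    # Entry (i, j) is the dot product of row i of a with column j of b.
    if len(a[0]) != len(b):
        raise ValueError("inner dimensions must match")
    cols = list(zip(*b))
    return [[sum(p * q for p, q in zip(row, col)) for col in cols] for row in a]

C = matmul(A, ones)
print(len(C), len(C[0]))  # rows and columns of the result
print(C)
```

Because every column of the right-hand matrix is all ones, each row of the result repeats that row's sum from `A`. Multiplying `A` by another (5, 4) matrix would raise the error, since 4 does not equal 5.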
