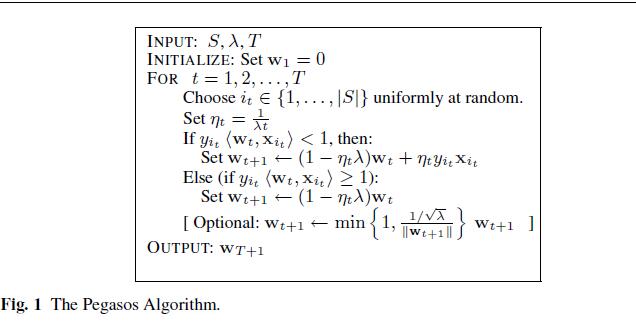

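    import numpy as np

    def predict(w, x):
        # Decision value <w, x>. This helper is not shown in the original
        # listing; a plain dot product is assumed here.
        return float(np.dot(np.asarray(w).ravel(), np.asarray(x).ravel()))

    def seqPegasos(dataSet, labels, lam, T):
        dataSet = np.asarray(dataSet, dtype=float)  # accept arrays or matrices
        m, n = np.shape(dataSet)
        w = np.zeros(n)
        for t in range(1, T + 1):
            i = np.random.randint(m)        # pick one training sample at random
            eta = 1.0 / (lam * t)           # step size decays as 1 / (lam * t)
            p = predict(w, dataSet[i, :])
            if labels[i] * p < 1:           # margin violated: shrink w, step toward y_i * x_i
                w = (1 - eta * lam) * w + eta * labels[i] * dataSet[i, :]
            else:                           # margin satisfied: shrink w only
                w = (1 - eta * lam) * w
        return w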
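
One way to sanity-check the weights returned by `seqPegasos` is to evaluate the primal SVM objective that Pegasos minimizes: (lam / 2) * ||w||^2 plus the average hinge loss. The sketch below is only an illustration and is not part of the original listing. `svm_objective` and the toy data are hypothetical names introduced here, and labels are assumed to be +1 or -1.

```python
import numpy as np

def svm_objective(w, X, y, lam):
    """Primal SVM objective: (lam / 2) * ||w||^2 + mean hinge loss.

    Hypothetical helper for illustration; assumes labels in y are +1 or -1.
    """
    X = np.asarray(X, dtype=float)
    y = np.asarray(y, dtype=float).ravel()
    w = np.asarray(w, dtype=float).ravel()
    margins = y * X.dot(w)                   # y_i * <w, x_i> for every sample
    hinge = np.maximum(0.0, 1.0 - margins)   # per-sample hinge loss
    return 0.5 * lam * w.dot(w) + hinge.mean()

# Toy data (made up for this example): one point on each side of the origin.
X_toy = np.array([[2.0, 0.0], [-2.0, 0.0]])
y_toy = np.array([1.0, -1.0])

# With w = 0 every sample has hinge loss 1; with w = [0.5, 0] both margins equal 1.
obj_zero = svm_objective(np.zeros(2), X_toy, y_toy, lam=0.1)
obj_good = svm_objective(np.array([0.5, 0.0]), X_toy, y_toy, lam=0.1)
```

Calling `svm_objective` on the returned `w` every few hundred iterations should show the value trending down, though individual stochastic steps can raise it.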

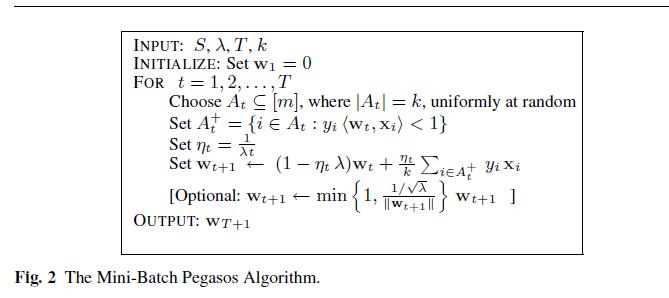

    import numpy as np

    def batchPegasos(dataSet, labels, lam, T, k):
        # predict(w, x) is assumed to return the decision value <w, x>.
        dataSet = np.asarray(dataSet, dtype=float)
        m, n = np.shape(dataSet)
        w = np.zeros(n)
        dataIndex = np.arange(m)
        for t in range(1, T + 1):
            wDelta = np.zeros(n)                   # reset wDelta
            eta = 1.0 / (lam * t)
            np.random.shuffle(dataIndex)
            for j in range(k):                     # go over a mini-batch of k samples (k <= m)
                i = dataIndex[j]
                p = predict(w, dataSet[i, :])      # mapper code
                if labels[i] * p < 1:              # mapper code
                    wDelta += labels[i] * dataSet[i, :]   # accumulate changes
            w = (1.0 - 1.0 / t) * w + (eta / k) * wDelta  # apply changes at each t
        return w
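
The inner loop over the mini-batch can also be written without an explicit Python loop. The following is a minimal sketch of a single update step, assuming the batch is passed in as a `(k, n)` array with +1/-1 labels. `minibatch_step` is a hypothetical name introduced here, not a function from the original post.

```python
import numpy as np

def minibatch_step(w, X_batch, y_batch, lam, t):
    """One mini-batch Pegasos update on k samples (hypothetical helper).

    Assumes X_batch has shape (k, n), y_batch holds +1/-1 labels, w has shape (n,).
    """
    X_batch = np.asarray(X_batch, dtype=float)
    y_batch = np.asarray(y_batch, dtype=float).ravel()
    k = len(y_batch)
    eta = 1.0 / (lam * t)
    violators = y_batch * X_batch.dot(w) < 1      # samples whose margin is below 1
    # Sum of y_i * x_i over the violating samples only.
    w_delta = (y_batch[violators][:, None] * X_batch[violators]).sum(axis=0)
    return (1.0 - 1.0 / t) * w + (eta / k) * w_delta

# Toy batch (made up for this example).
X_b = np.array([[1.0, 2.0], [-1.0, 0.5]])
y_b = np.array([1.0, -1.0])
w1 = minibatch_step(np.zeros(2), X_b, y_b, lam=0.5, t=1)  # both samples violate the margin
w2 = minibatch_step(w1, X_b, y_b, lam=0.5, t=2)           # no violators: w is only shrunk
```

Because each sample's contribution depends only on the current `w`, the margin test and the summation are the parts that could be distributed across workers, which matches the "mapper code" comments in the listing.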

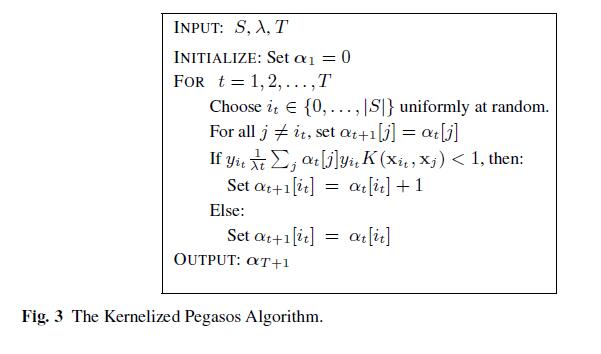

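    import numpy as np

    def KernelPegasos(dataSet, labels, lam, T):
        dataSet = np.asarray(dataSet, dtype=float)
        y = np.asarray(labels, dtype=float).reshape(-1, 1)  # labels as a column
        m, n = np.shape(dataSet)
        alpha = np.zeros((m, 1))
        for t in range(1, T + 1):
            i = np.random.randint(m)
            # y_i / (lam * t) * sum_j alpha_j * y_j * K(x_j, x_i)
            margin = y[i, 0] / (lam * t) * np.sum(alpha * y * kernel(dataSet, dataSet[i]))
            if margin < 1:
                alpha[i] = alpha[i] + 1
        return alpha

    def kernel(X, A):  # calc the kernel or transform data to a higher dimensional space
        k = 0.1        # RBF width
        X = np.asarray(X, dtype=float)
        A = np.asarray(A, dtype=float).ravel()
        m, n = np.shape(X)
        K = np.zeros((m, 1))
        for j in range(m):
            deltaRow = X[j, :] - A
            K[j] = np.dot(deltaRow, deltaRow)  # squared distance ||x_j - A||^2
        K = np.exp(K / (-1 * k ** 2))  # divide in NumPy is element-wise not matrix like Matlab
        return K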
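
`KernelPegasos` returns only the counts `alpha`, so classifying a new point requires the training data as well. Below is a minimal sketch of how such a prediction could look, assuming the decision value is (1 / (lam * T)) * sum_j alpha_j * y_j * K(x_j, x) with the same RBF form as `kernel`. `rbf_kernel_column` and `kernel_predict` are hypothetical names introduced for this example.

```python
import numpy as np

def rbf_kernel_column(X, a, k=0.1):
    """exp(-||x_j - a||^2 / k^2) for every row x_j of X (hypothetical helper)."""
    X = np.asarray(X, dtype=float)
    a = np.asarray(a, dtype=float).ravel()
    sq_dist = ((X - a) ** 2).sum(axis=1)   # squared distance from each row to a
    return np.exp(-sq_dist / k ** 2)

def kernel_predict(alpha, X_train, y_train, x_new, lam, T, k=0.1):
    """Sign of the kernelized decision value for one new point.

    Assumes alpha came from T iterations of kernel Pegasos and labels are +1/-1.
    """
    alpha = np.asarray(alpha, dtype=float).ravel()
    y = np.asarray(y_train, dtype=float).ravel()
    K = rbf_kernel_column(X_train, x_new, k)
    return np.sign(np.sum(alpha * y * K) / (lam * T))

# Toy values (made up for this example): one support vector per class.
X_tr = np.array([[0.0, 0.0], [1.0, 1.0]])
y_tr = np.array([1.0, -1.0])
alpha_tr = np.array([1.0, 1.0])
near_pos = kernel_predict(alpha_tr, X_tr, y_tr, [0.05, 0.0], lam=0.1, T=10)
near_neg = kernel_predict(alpha_tr, X_tr, y_tr, [1.0, 0.95], lam=0.1, T=10)
```

The factor 1 / (lam * T) is positive, so it does not change the predicted sign; it matters only if the raw decision value is needed.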

References:

- S. Shalev-Shwartz, Y. Singer, and N. Srebro. Pegasos: Primal estimated sub-gradient solver for SVM. In Proceedings of the 24th International Conference on Machine Learning, pages 807–814, Helsinki, Finland, 2007.
