Kafka preparation:

Start the ZooKeeper service and the Kafka service:

zkServer.sh start

kafka-server-start.sh /opt/soft/kafka211/config/server.properties

Create a topic named mydemo1 with a replication factor of 1 and 4 partitions:

kafka-topics.sh --zookeeper 192.168.181.132:2181 --create --topic mydemo1 --replication-factor 1 --partitions 4

Writing data from Java with multiple threads:

Interface:

package org.example.commons;

import java.util.Map;

public interface Dbinfo {

public String getIp();

public int getPort();

public String getDbName();

public Map<String,String> getOther();

}

Kafka configuration class:

package org.example.commons;

import java.util.Map;

public class KafkaConfiguration implements Dbinfo

{

private String ip;

private int port;

private String Dbname;

public void setIp(String ip) {

this.ip = ip;

}

public void setPort(int port) {

this.port = port;

}

public String getDbname() {

return Dbname;

}

public void setDbname(String dbname) {

this.Dbname = dbname;

}

@Override

public String getIp() {

return this.ip;

}

@Override

public int getPort() {

return this.port;

}

@Override

public String getDbName() {

return this.Dbname;

}

@Override

public Map<String, String> getOther() {

return null;

}

}

KafkaConnector class:

package org.example.commons;

import org.apache.kafka.clients.admin.AdminClient;

import org.apache.kafka.clients.admin.DescribeTopicsResult;

import org.apache.kafka.clients.admin.KafkaAdminClient;

import org.apache.kafka.clients.admin.TopicDescription;

import org.apache.kafka.clients.producer.ProducerConfig;

import org.apache.kafka.common.KafkaFuture;

import org.apache.kafka.common.serialization.StringSerializer;

import java.io.FileNotFoundException;

import java.io.FileReader;

import java.io.IOException;

import java.io.LineNumberReader;

import java.util.*;

import java.util.concurrent.ExecutionException;

public class KafkaConnector {

private Dbinfo info;

private int totalRow;

private List<Long> rowSize = new ArrayList<>();

Properties prop = new Properties();

public KafkaConnector(Dbinfo info) {

this.info = info;

prop.put(ProducerConfig.BOOTSTRAP_SERVERS_CONFIG,info.getIp()+":"+info.getPort());

prop.put(ProducerConfig.ACKS_CONFIG,"all");

prop.put(ProducerConfig.RETRIES_CONFIG,"0");

prop.put(ProducerConfig.KEY_SERIALIZER_CLASS_CONFIG, StringSerializer.class.getTypeName());

prop.put(ProducerConfig.VALUE_SERIALIZER_CLASS_CONFIG,StringSerializer.class.getTypeName());

}

public void sendMessage(String path) throws FileNotFoundException {

getFileinfo(path);

int psize = getTopicPartitionNumber();

Map<Long, Integer> threadParams = calcPosAndRow(psize);

int thn = 0;

for (Long key : threadParams.keySet()) {

new CustomerKafkaProducer(prop,path,key,threadParams.get(key),info.getDbName(),thn+"").start();

thn++;

}

}

private Map<Long,Integer> calcPosAndRow(int partitionnum){

Map<Long,Integer> result = new HashMap<>();

int row = totalRow/partitionnum;

for (int i = 0; i < partitionnum; i++) {

if (i==(partitionnum-1)) {

result.put(getPos(row*i+1),row+totalRow%partitionnum);

}else{

result.put(getPos(row*i+1),row);

}

}

return result;

}

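// Returns the byte offset at which the given 1-based row starts.

// rowSize counts one line-ending byte per row; adding (row-1) accounts for a second one,

// so this assumes the file uses Windows-style \r\n line endings.

private Long getPos(int row){

return rowSize.get(row-1)+(row-1);

}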

private int getTopicPartitionNumber(){

int num = 0;

// try-with-resources closes the admin client once the partition count has been read

try (AdminClient client = KafkaAdminClient.create(prop)) {

DescribeTopicsResult result = client.describeTopics(Arrays.asList(info.getDbName()));

KafkaFuture<Map<String, TopicDescription>> kf = result.all();

num=kf.get().get(info.getDbName()).partitions().size();

} catch (InterruptedException e) {

// restore the interrupt flag so callers can still observe the interruption

Thread.currentThread().interrupt();

e.printStackTrace();

} catch (ExecutionException e) {

e.printStackTrace();

}

return num;

}

private void getFileinfo(String path) throws FileNotFoundException {

LineNumberReader reader = new LineNumberReader(new FileReader(path));

try {

String str = null;

int total = 0;

while((str=reader.readLine())!=null){

total+=str.getBytes().length+1;

rowSize.add((long)total);

}

totalRow= reader.getLineNumber();

rowSize.add(0,0L);

} catch (IOException e) {

e.printStackTrace();

}finally {

try {

reader.close();

} catch (IOException e) {

e.printStackTrace();

}

}

}

}
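The chunking done by getFileinfo and calcPosAndRow can be tried without a broker. The following is a minimal sketch, not the code above: the class ChunkPlanner and its two methods are hypothetical names, and it assumes UTF-8 content and one uniform line ending for the whole file.

```java
import java.nio.charset.StandardCharsets;
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

// Hypothetical helper, for illustration only.
public class ChunkPlanner {

    // Byte offset at which each line starts, assuming every line ends with lineEnding.
    static List<Long> lineStartOffsets(List<String> lines, String lineEnding) {
        List<Long> offsets = new ArrayList<>();
        int eolBytes = lineEnding.getBytes(StandardCharsets.UTF_8).length;
        long pos = 0;
        for (String line : lines) {
            offsets.add(pos);
            pos += line.getBytes(StandardCharsets.UTF_8).length + eolBytes;
        }
        return offsets;
    }

    // Splits totalRows into `parts` chunks of {startRow, rowCount} (0-based rows).
    // As in calcPosAndRow, the last chunk absorbs the remainder.
    static int[][] splitRows(int totalRows, int parts) {
        if (parts <= 0) {
            throw new IllegalArgumentException("parts must be positive");
        }
        int base = totalRows / parts;
        int[][] chunks = new int[parts][2];
        for (int i = 0; i < parts; i++) {
            chunks[i][0] = base * i;
            chunks[i][1] = (i == parts - 1) ? base + totalRows % parts : base;
        }
        return chunks;
    }

    public static void main(String[] args) {
        List<String> lines = Arrays.asList("aa", "bbb", "c", "dddd", "ee");
        List<Long> offsets = lineStartOffsets(lines, "\r\n");
        for (int[] chunk : splitRows(lines.size(), 2)) {
            System.out.println("seek to byte " + offsets.get(chunk[0])
                    + ", read " + chunk[1] + " lines");
        }
    }
}
```

Each {startRow, rowCount} pair corresponds to one producer thread: the thread seeks to the start offset of its first row and reads rowCount lines from there.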

SimplePartitioner class:

package org.example.commons;

import org.apache.kafka.clients.producer.Partitioner;

import org.apache.kafka.common.Cluster;

import java.util.Map;

public class SimplePartitioner implements Partitioner

{

@Override

public int partition(String s, Object o, byte[] bytes, Object o1, byte[] bytes1, Cluster cluster) {

return Integer.parseInt(o.toString());

}

@Override

public void close() {

}

@Override

public void configure(Map<String, ?> map) {

}

}
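SimplePartitioner trusts the record key to be a valid partition number. A minimal sketch of a stricter mapping follows; PartitionKeys and partitionFor are hypothetical names, and in a real Partitioner the partition count would have to come from the Cluster argument (for example cluster.partitionsForTopic(topic).size()).

```java
// Hypothetical helper, for illustration only.
public class PartitionKeys {

    // Maps a numeric key to a partition index and rejects anything out of range.
    static int partitionFor(String key, int numPartitions) {
        int partition;
        try {
            partition = Integer.parseInt(key.trim());
        } catch (NumberFormatException e) {
            throw new IllegalArgumentException("key is not a partition number: " + key, e);
        }
        if (partition < 0 || partition >= numPartitions) {
            throw new IllegalArgumentException(
                    "partition " + partition + " is outside 0.." + (numPartitions - 1));
        }
        return partition;
    }

    public static void main(String[] args) {
        // Thread names "0".."3" are used as keys in the tutorial code.
        for (String key : new String[] {"0", "1", "2", "3"}) {
            System.out.println("key " + key + " -> partition " + partitionFor(key, 4));
        }
    }
}
```

Failing fast on a bad key makes a mismatch between the number of threads and the number of partitions visible immediately.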

CustomerKafkaProducer class:

package org.example.commons;

import org.apache.kafka.clients.producer.KafkaProducer;

import org.apache.kafka.clients.producer.ProducerRecord;

import java.io.File;

import java.io.IOException;

import java.io.RandomAccessFile;

import java.util.Properties;

public class CustomerKafkaProducer extends Thread {

private Properties prop;

private String path;

private long pos;

private int rows;

private String topics;

public CustomerKafkaProducer(Properties prop, String path, long pos, int rows, String topics,String threadName) {

this.prop = prop;

this.path = path;

this.pos = pos;

this.rows = rows;

this.topics = topics;

this.setName(threadName);

}

@Override

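public void run() {

// Route each record to the partition named by its key (the thread name)

prop.put("partitioner.class",SimplePartitioner.class.getTypeName());

// try-with-resources closes the producer and the file even if reading fails

try (KafkaProducer<String, String> producer = new KafkaProducer<>(prop);

RandomAccessFile raf = new RandomAccessFile(new File(path), "r")) {

raf.seek(pos);

for (int i = 0; i < rows; i++) {

String raw = raf.readLine();

// stop if the end of the file is reached earlier than expected

if (raw == null) { break; }

// readLine() maps each byte to one char; recover the bytes and decode them as UTF-8

String ln = new String(raw.getBytes("iso-8859-1"),"UTF-8");

producer.send(new ProducerRecord<>(topics, Thread.currentThread().getName(), ln));

}

} catch (IOException e) {

// report the failure instead of silently swallowing it

e.printStackTrace();

}

}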

}
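The seek-and-read step in run() can be exercised on its own, without a producer. This is a minimal sketch under the assumption that the file is UTF-8 encoded; OffsetLineReader and readLines are hypothetical names.

```java
import java.io.IOException;
import java.io.RandomAccessFile;
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.Path;
import java.util.ArrayList;
import java.util.List;

// Hypothetical helper, for illustration only.
public class OffsetLineReader {

    // Reads up to `rows` lines starting at byte offset `pos`.
    static List<String> readLines(String path, long pos, int rows) throws IOException {
        List<String> out = new ArrayList<>();
        try (RandomAccessFile raf = new RandomAccessFile(path, "r")) {
            raf.seek(pos);
            for (int i = 0; i < rows; i++) {
                String raw = raf.readLine();
                if (raw == null) {
                    break; // end of file
                }
                // readLine() turns each byte into one char, so round-trip through
                // ISO-8859-1 to get the original bytes back, then decode as UTF-8.
                out.add(new String(raw.getBytes(StandardCharsets.ISO_8859_1),
                        StandardCharsets.UTF_8));
            }
        }
        return out;
    }

    public static void main(String[] args) throws IOException {
        Path tmp = Files.createTempFile("demo", ".log");
        Files.write(tmp, "first\r\ncaf\u00e9\r\nthird\r\n".getBytes(StandardCharsets.UTF_8));
        // "first\r\n" occupies 7 bytes, so offset 7 is the start of the second line.
        System.out.println(readLines(tmp.toString(), 7, 2));
        Files.delete(tmp);
    }
}
```

Seeking to an offset that is not the first byte of a line would return a partial line, which is why the offsets have to be computed from the real byte lengths and line endings of the file.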

App entry-point class:

package org.example.commons;

import java.io.FileNotFoundException;

public class App {

public static void main(String[] args) throws FileNotFoundException {

KafkaConfiguration kc = new KafkaConfiguration();

kc.setIp("192.168.181.132");

kc.setPort(9092);

kc.setDbname("mydemo1");

new KafkaConnector(kc).sendMessage("D:\\log_2020-01-01.log");

}

}

Run the App class, then check in Kafka whether the data was written to the topic:

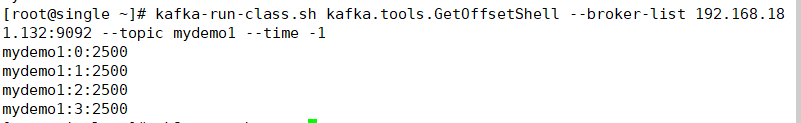

kafka-run-class.sh kafka.tools.GetOffsetShell --broker-list 192.168.181.132:9092 --topic mydemo1 --time -1

The log file on the local D: drive contains 10,000 records in total, and the four threads wrote them into the four partitions.
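GetOffsetShell prints one topic:partition:offset line per partition, so the check can be automated by summing the offsets. A minimal sketch follows; OffsetSummary is a hypothetical name, and the sample lines in main are illustrative values for an even 10,000-record split, not captured output.

```java
import java.util.Map;
import java.util.TreeMap;

// Hypothetical helper, for illustration only.
public class OffsetSummary {

    // Parses lines of the form topic:partition:offset into partition -> offset.
    static Map<Integer, Long> parse(String output) {
        Map<Integer, Long> result = new TreeMap<>();
        for (String line : output.split("\\R")) {
            line = line.trim();
            if (line.isEmpty()) {
                continue;
            }
            String[] fields = line.split(":");
            // The last two fields are the partition and its latest offset.
            int partition = Integer.parseInt(fields[fields.length - 2]);
            long offset = Long.parseLong(fields[fields.length - 1]);
            result.put(partition, offset);
        }
        return result;
    }

    // Sums the offsets of all partitions.
    static long total(Map<Integer, Long> offsets) {
        long sum = 0;
        for (long value : offsets.values()) {
            sum += value;
        }
        return sum;
    }

    public static void main(String[] args) {
        // Illustrative input only.
        String sample = "mydemo1:0:2500\nmydemo1:1:2500\nmydemo1:2:2500\nmydemo1:3:2500\n";
        Map<Integer, Long> offsets = parse(sample);
        System.out.println(offsets + " total=" + total(offsets));
    }
}
```

On a freshly created topic the sum of the latest offsets equals the number of records written, so it should match the line count of the log file.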

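To package the code above as a jar and run it from the command line, write a Myexecute class that takes the connection settings as arguments: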

package org.example.commons;

public class Myexecute {

public static void main(String[] args) throws Exception {

// expects exactly four arguments: ip, port, topic, file path

if (args.length == 4) {

KafkaConfiguration kc = new KafkaConfiguration();

kc.setIp(args[0]);

kc.setPort(Integer.parseInt(args[1]));

kc.setDbname(args[2]);

new KafkaConnector(kc).sendMessage(args[3]);

}else{

throw new Exception("Expected 4 arguments (ip port topic file) but got " + args.length);

}

}

}
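The argument handling can also be separated from the Kafka calls so that bad input is rejected before any connection is attempted. This is a minimal sketch; CliArgs and its fields are hypothetical names that are not part of the tutorial code.

```java
// Hypothetical helper, for illustration only.
public class CliArgs {
    final String ip;
    final int port;
    final String topic;
    final String path;

    private CliArgs(String ip, int port, String topic, String path) {
        this.ip = ip;
        this.port = port;
        this.topic = topic;
        this.path = path;
    }

    // Validates "ip port topic file" and returns the parsed values.
    static CliArgs parse(String[] args) {
        if (args.length != 4) {
            throw new IllegalArgumentException(
                    "Expected 4 arguments (ip port topic file) but got " + args.length);
        }
        int port;
        try {
            port = Integer.parseInt(args[1]);
        } catch (NumberFormatException e) {
            throw new IllegalArgumentException("Port is not a number: " + args[1], e);
        }
        if (port < 1 || port > 65535) {
            throw new IllegalArgumentException("Port out of range: " + port);
        }
        return new CliArgs(args[0], port, args[2], args[3]);
    }

    public static void main(String[] args) {
        CliArgs parsed = parse(new String[] {
                "192.168.181.132", "9092", "mydemo1", "D:\\log_2020-01-01.log"});
        System.out.println(parsed.ip + ":" + parsed.port + " topic=" + parsed.topic
                + " file=" + parsed.path);
    }
}
```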

Modify the build section of the pom file so that both a fat jar (with dependencies) and a thin jar are produced:

<build>

<finalName>mykafka</finalName>

<plugins>

<plugin>

<artifactId>maven-compiler-plugin</artifactId>

<version>2.3.2</version>

<configuration>

<source>1.8</source>

<target>1.8</target>

</configuration>

</plugin>

<plugin>

<artifactId>maven-assembly-plugin</artifactId>

<configuration>

<descriptorRefs>

<descriptorRef>jar-with-dependencies</descriptorRef>

</descriptorRefs>

<archive>

<manifest>

<!-- replace with the fully qualified name of your own main class -->

<mainClass>org.example.commons.Myexecute</mainClass>

</manifest>

</archive>

</configuration>

<executions>

<execution>

<id>make-assembly</id>

<phase>package</phase>

<goals>

<goal>single</goal>

</goals>

</execution>

</executions>

</plugin>

</plugins>

</build>

Maven -> Lifecycle -> clean -> package

Copy the generated jar to the D: drive and run it from cmd:

java -jar mykafka-jar-with-dependencies.jar 192.168.181.132 9092 mydemo1 D:\log_2020-01-01.log

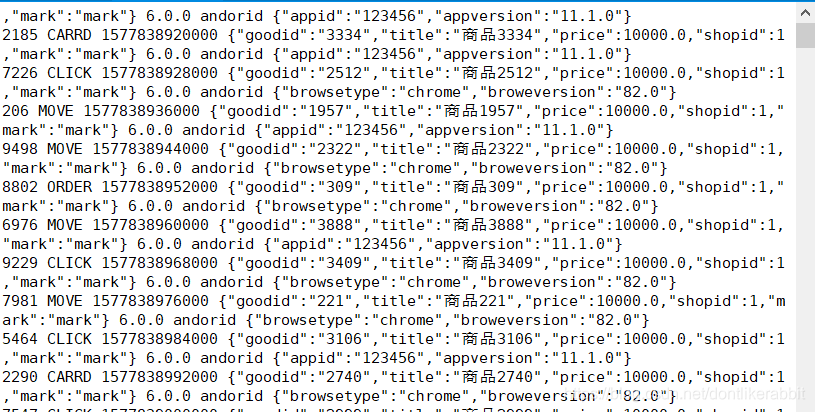

After it runs, you can watch the written messages in real time with the Kafka console consumer:

kafka-console-consumer.sh --bootstrap-server 192.168.181.132:9092 --topic mydemo1 --from-beginning
