1. 同步数据到Elastic几种方式

目前要把kafka中的数据传输到elasticsearch大概有以下几种方法:

1) logstash

2) flume

3) spark streaming

4) kafka connect

5) 开发程序消费kafka写入elasticsearch

本文介绍如何使用Logstash将Kafka中的数据写入到ElasticSearch,这里Kafka、logstash、elasticsearch安装就详述了。

Logstash工作的流程由三部分组成:

input:输入(即source),表示从那里采集数据

filter:过滤,logstash对数据的ETL就是在这个里面进行。

output:输出(即sink),表示数据输出地方。

注意:input需要logstash-input-kafka插件,该插件logstash默认自带。

2. logstash配置

1) input输入

input {

kafka{

bootstrap_servers => ["172.20.34.22:9092"] #broker

client_id => "test" #客户端id

group_id => "logstash-es" #消费组ID

auto_offset_reset => "latest" #偏移量

consumer_threads => 1 #消费线程数,不大于分区个数

decorate_events => "true" #如果只用了单个logstash,希望订阅多个主题在es中为不同主题创建不同的索引,此属性会将当前topic、offset、group、partition等信息也带到message中

topics => ["test01","test02"] #消费主题

type => "kafka-to-elas" #类型,区分输出不同索引

codec => "json" #ES格式为json,如果不加,整条数据变成一个字符串存储到message字段里面

}

}说明:

decorate_events:此属性会将当前topic、offset、group、partition等信息也带到message中,可以达到订阅多个主题在es中为不同主题创建不同的索引。

codec => "json":表示会将消息格式为json,如果不加这个参数,整条数据变成一个字符串存储到message字段里面。

2) filter过滤

filter{

#为每个主题构建对应的[@metadata][index]

if [@metadata][kafka][topic] == "test01" {

mutate {

add_field => {"[@metadata][index]" => "kafka-test01-%{+YYYY.MM.dd}"}

}

}

if [@metadata][kafka][topic] == "test02" {

mutate {

add_field => {"[@metadata][index]" => "kafka-test02-%{+YYYY.MM.dd}"}

}

}

#移除多余的字段

mutate {

remove_field => ["kafka"]

}

}说明:根据业务需求进行ETL数据处理。

这里,我为每个主题构建对应的[@metadata][index],并在接下来output中引用。

3) output输出

output {

#stdout {

# codec => rubydebug

#}

if [type] == "kafka-to-elastic" {

elasticsearch {

hosts => ["172.20.32.241:9200"]

index => "%{[@metadata][index]}"

timeout => 300

}

}

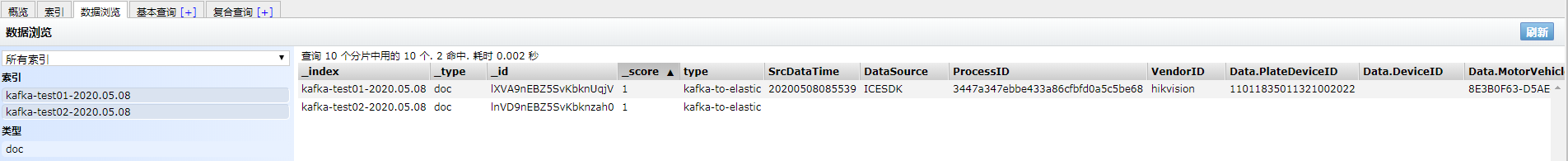

}3. 实例

场景:

消费kafka中多个主题数据(json格式),通过logstash采集并根据不同主题输出到Elasticsearch中不同的索引中。

运行测试(这里,我为了方便测试打开了stdout输出到屏幕):

主题test01对应的索引为 kafka-test01-2020.05.08

数据格式:

{

"_index": "kafka-data-2020.05.08",

"_type": "doc",

"_id": "knUr9nEBZ5SvKbknPKgD",

"_version": 1,

"_score": 1,

"_source": {

"SrcDataId": "8E3B0F63-D5AE-4C2C-AA50-DDF8F39951BE",

"@timestamp": "2020-05-08T21:22:39.903Z",

"SrcDataTime": "20200508085539",

"VendorID": "hikvision",

"type": "kafka-to-elas",

"DeviceModelID": "d1eddcbb86d84164b28f244efe155751",

"DataSource": "ICESDK",

"Data": {

"PassTime": "20200508085539",

"Direction": "0",

"MotorVehicleID": "8E3B0F63-D5AE-4C2C-AA50-DDF8F39951BE",

"PlateFileFormat": "jpeg",

"VehicleColor": "-1",

"VehicleClass": "1",

"MarkTime": "20200508085539",

"AppearTime": "20200508085539",

"PlateDeviceID": "11011835011321002022",

"MotorVehicleStoragePath": "http://192.168.1.171:17999/ICESDKPic/20200508/8/Plate_1588899348077133.jpeg",

"PlateEventSort": "16",

"DeviceID": "",

"TollgateID": "",

"PlateStoragePath": "http://192.168.1.171:17999/ICESDKPic/20200508/8/Plate_1588899348077133.jpeg",

"SourceID": "12",

"PlateNo": "辽LJY888",

"PlateShotTime": "20200508085539"

},

"DeviceId": "",

"ServerID": "0C68F9AA79A14D95A644598D1D7D1623",

"ProcessID": "3447a347ebbe433a86cfbfd0a5c5be68",

"DataType": "MotorVehicle",

"@version": "1"

}

}主题test02对应的索引为 kafka-test02-2020.05.08

数据格式:

{

"_index": "kafka-test02-2020.05.08",

"_type": "doc",

"_id": "lnVD9nEBZ5SvKbknzah0",

"_version": 1,

"_score": 1,

"_source": {

"PlateDeviceID": "11011835011321002022",

"DeviceID": "",

"MotorVehicleID": "8E3B0F63-D5AE-4C2C-AA50-DDF8F39951BE",

"VehicleClass": "1",

"type": "kafka-to-elastic",

"PlateNo": "辽LJY888",

"PassTime": "20200508085539",

"AppearTime": "20200508085539",

"PlateEventSort": "16",

"PlateShotTime": "20200508085539",

"Direction": "0",

"MotorVehicleStoragePath": "http:\192.168.1.171:17999\ICESDKPic\20200508//8/Plate_1588899348077133.jpeg",

"PlateStoragePath": "http:\192.168.1.171:17999\ICESDKPic\20200508//8/Plate_1588899348077133.jpeg",

"@timestamp": "2020-05-08T21:49:30.588Z",

"PlateFileFormat": "jpeg",

"TollgateID": "",

"VehicleColor": "-1",

"SourceID": "12",

"MarkTime": "20200508085539",

"@version": "1"

}

}最后,我们可以在head插件中查看输出的数据。

1503

1503

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?