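Introduction:

1. First, a verbal description of PSO

The core idea of Particle Swarm Optimization (PSO) in one sentence:

Top performers tend to come in pairs.

So if you want to get better yourself, move closer to the top performers and don't hesitate to hitch your wagon to them. PSO was originally inspired by the foraging behavior of birds and fish, and it rests on the following assumption: where a bird is foraging, there must be food nearby, so the best place to search is around that bird. For example, if a flock of birds is perched on a utility pole and one of them flies down to eat rice grains on the road, the others see it and fly over to forage around it. It's the same idea.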

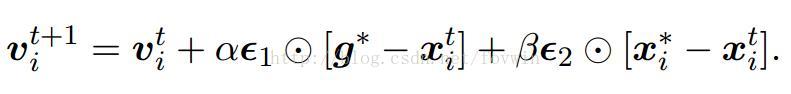

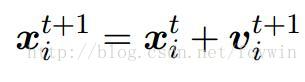

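PSO is arguably the simplest heuristic optimization algorithm: understanding it takes nothing more than vector addition, subtraction and scalar multiplication. Its core is just two formulas, the velocity and position updates of particle $i$ at iteration $t$:

$$v_i^{t+1} = v_i^t + \alpha\,\epsilon_1 \odot (g^* - x_i^t) + \beta\,\epsilon_2 \odot (x_i^* - x_i^t)$$

$$x_i^{t+1} = x_i^t + v_i^{t+1}$$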

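There do seem to be quite a few parameters, though. $\alpha$ and $\beta$ are constants; $\epsilon_1$ and $\epsilon_2$ are random vectors whose entries lie between 0 and 1; $\odot$ is the entrywise (Hadamard) product, i.e. $[u \odot w]_k = u_k w_k$: corresponding components are multiplied and the results are not summed, so it is not a dot product. $g^*$ is the global "best" solution and $x_i^*$ is particle $i$'s own historical "best" solution. Subtracting $x$ from each of these two "best" solutions is "learning from the top performers": mathematically, the particle adds to itself a vector pointing toward the best solution. Isn't that remarkably similar to absorbing what you learn from an expert into your own body of knowledge? As for $v$, it is only an intermediate variable and can be eliminated mathematically.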
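Spelled out, the elimination works as follows. The position update gives $v_i^{t+1} = x_i^{t+1} - x_i^t$, so $v_i^t = x_i^t - x_i^{t-1}$ for $t \ge 1$; substituting this into the velocity update leaves a recurrence in $x$ alone:

$$
\begin{aligned}
x_i^{t+1} &= x_i^t + v_i^{t+1} \\
          &= x_i^t + v_i^t + \alpha\,\epsilon_1 \odot (g^* - x_i^t) + \beta\,\epsilon_2 \odot (x_i^* - x_i^t) \\
          &= 2x_i^t - x_i^{t-1} + \alpha\,\epsilon_1 \odot (g^* - x_i^t) + \beta\,\epsilon_2 \odot (x_i^* - x_i^t)
\end{aligned}
$$

The price of dropping $v$ is that each particle has to remember its previous position $x_i^{t-1}$ instead of its velocity.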

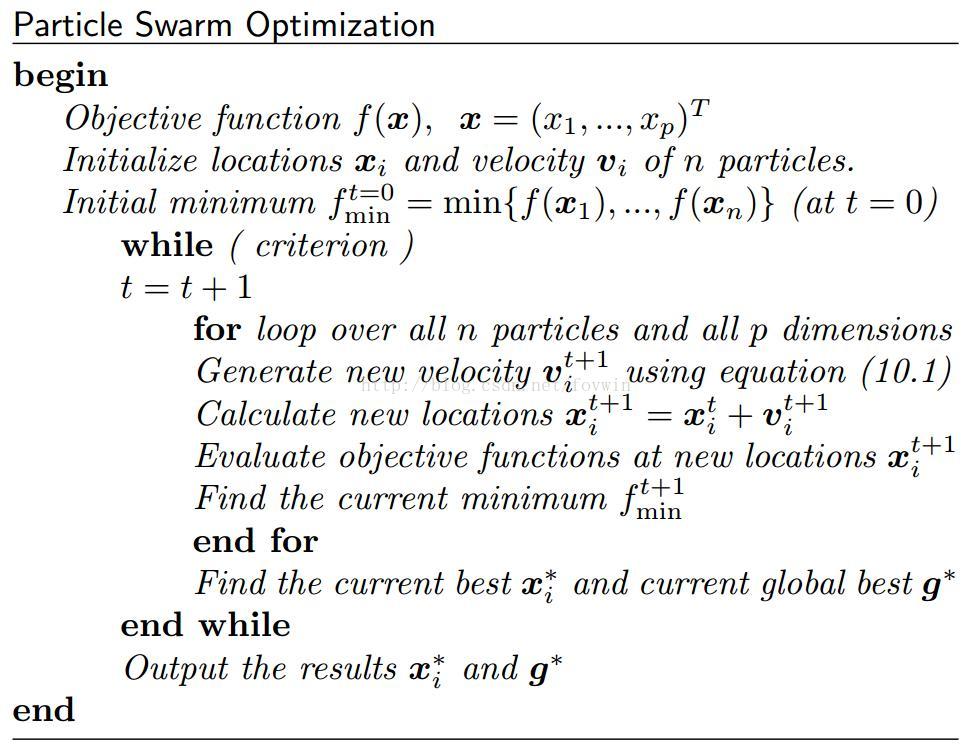
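A minimal NumPy sketch of the two update formulas, applied to a whole swarm at once and assuming one particle per row. The function name `pso_step` and the default α = β = 2 are illustrative assumptions, not taken from the source. Each particle draws its own ε₁ and ε₂ per component, and NumPy's `*` is the entrywise product ⊙.

```python
import numpy as np


def pso_step(x, v, pbest, gbest, alpha=2.0, beta=2.0, rng=None):
    """Apply the velocity and position updates once to every particle.

    x, v, pbest: arrays of shape (n_particles, dim), one particle per row
    gbest:       array of shape (dim,), the global best g*
    alpha, beta: constants of the update rule (2.0 is an assumed default)
    """
    rng = np.random.default_rng() if rng is None else rng
    # epsilon_1, epsilon_2: fresh random entries in [0, 1) for every component
    eps1 = rng.random(x.shape)
    eps2 = rng.random(x.shape)
    # NumPy's "*" multiplies entrywise, i.e. it is the Hadamard product
    v_new = v + alpha * eps1 * (gbest - x) + beta * eps2 * (pbest - x)
    x_new = x + v_new
    return x_new, v_new


# Usage: particles sitting on both bests just keep their current velocity
x = np.tile([1.0, 2.0], (3, 1))
v = np.full((3, 2), 0.5)
x_next, v_next = pso_step(x, v, pbest=x.copy(), gbest=np.array([1.0, 2.0]),
                          rng=np.random.default_rng(0))
print(x_next)  # every row is [1.5, 2.5]
```

When a particle already coincides with both $g^*$ and its own $x_i^*$, both attraction terms vanish and only the $v$ term keeps it moving.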

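2. Having seen the description, here is a description closer to code:

If you want to improve PSO, the most obvious target is this update formula, though there are many other avenues as well: a Google Scholar search shows that the paper that started PSO has been cited more than 30,000 times.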
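One well-known change to the update formula is the inertia weight (Shi and Eberhart), which multiplies the previous velocity by a factor θ < 1 so that the swarm gradually slows down. The NumPy sketch below runs a complete swarm (initialize, evaluate, update personal and global bests, move) with that single change. θ = 0.7, α = β = 1.5, the sphere test function, the clipping to the search box and all function names are assumptions for illustration, not taken from the source.

```python
import numpy as np


def pso_minimize(f, lower, upper, n_particles=20, n_iter=100,
                 theta=0.7, alpha=1.5, beta=1.5, seed=0):
    """Inertia-weight PSO sketch; f maps an (n, dim) array to n values."""
    rng = np.random.default_rng(seed)
    lower = np.asarray(lower, dtype=float)
    upper = np.asarray(upper, dtype=float)
    # Random initial positions inside the box, zero initial velocities
    x = lower + rng.random((n_particles, lower.size)) * (upper - lower)
    v = np.zeros_like(x)
    # Personal bests x_i^* and their objective values
    pbest = x.copy()
    pbest_val = f(x)
    g = pbest[np.argmin(pbest_val)].copy()  # global best g*
    for _ in range(n_iter):
        eps1 = rng.random(x.shape)
        eps2 = rng.random(x.shape)
        # Only change to the basic rule: the old velocity is scaled by theta
        v = theta * v + alpha * eps1 * (g - x) + beta * eps2 * (pbest - x)
        x = np.clip(x + v, lower, upper)  # keep particles inside the box
        val = f(x)
        improved = val < pbest_val
        pbest[improved] = x[improved]
        pbest_val[improved] = val[improved]
        g = pbest[np.argmin(pbest_val)].copy()
    return g, pbest_val.min()


def sphere(z):
    """Simple test function with minimum 0 at the origin."""
    return np.sum(z ** 2, axis=1)


best_x, best_f = pso_minimize(sphere, [-5, -5], [5, 5], n_iter=200)
print(best_x, best_f)
```

Setting `theta=1.0` recovers the plain two-formula update, which without any velocity limit tends to let particles overshoot and bounce off the box walls.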

3. After the code-like description, here is the actual implementation:

pso_simpledemo.m

|

1

2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 49 50 51 52 53 54 55 56 57 58 59 60 61 62 63 64 65 66 67 68 69 70 71 72 73 74 75 76 77 78 79 80 81 82 83 84 85 86 87 88 89 90 91 92 93 94 |

|

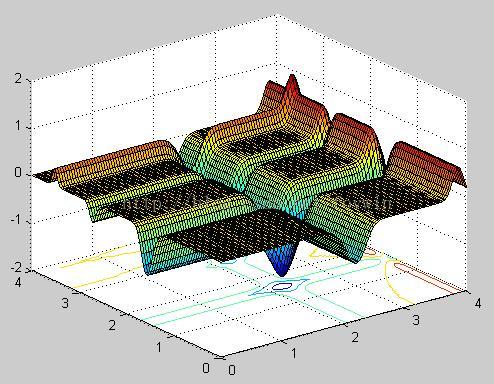

% The Particle Swarm Optimization

% (written by X S Yang, Cambridge University) % Usage: pso(number_of_particles,Num_iterations) % eg: best=pso_demo(20,10); % where best=[xbest ybest zbest] %an n by 3 matrix % xbest(i)/ybest(i) are the best at ith iteration function [best]=pso_simpledemo(n,Num_iterations) % n=number of particles % Num_iterations=total number of iterations if nargin<2, Num_iterations=10; end if nargin<1, n=20; end % Michaelewicz Function f*=-1.801 at [2.20319, 1.57049] % Splitting two parts to avoid a long line for printing str1='-sin(x)*(sin(x^2/3.14159))^20'; str2='-sin(y)*(sin(2*y^2/3.14159))^20'; funstr=strcat(str1,str2); % Converting to an inline function and vectorization f=vectorize(inline(funstr)); % range=[xmin xmax ymin ymax]; range=[0 4 0 4]; % ---------------------------------------------------- % Setting the parameters: alpha, beta % Random amplitude of roaming particles alpha=[0,1] % alpha=gamma^t=0.7^t; % Speed of convergence (0->1)=(slow->fast) beta=0.5; % ---------------------------------------------------- % Grid values of the objective function % These values are used for visualization only Ngrid=100; dx=(range(2)-range(1))/Ngrid; dy=(range(4)-range(3))/Ngrid; xgrid=range(1):dx:range(2); ygrid=range(3):dy:range(4); [x,y]=meshgrid(xgrid,ygrid); z=f(x,y); % Display the shape of the function to be optimized figure(1); surfc(x,y,z); % --------------------------------------------------- %best=zeros(Num_iterations,3); % initialize history % ----- Start Particle Swarm Optimization ----------- % generating the initial locations of n particles [xn,yn]=init_pso(n,range); % Display the paths of particles in a figure % with a contour of the objective function figure(2); % Start iterations for i=1:Num_iterations, % Show the contour of the function contour(x,y,z,15); hold on; % Find the current best location (xo,yo) zn=f(xn,yn); zn_min=min(zn); xo=min(xn(zn==zn_min)); yo=min(yn(zn==zn_min)); zo=min(zn(zn==zn_min)); % Trace the paths of all roaming particles % Display these 
roaming particles plot(xn,yn,'.',xo,yo,'*'); axis(range); % The accelerated PSO with alpha=gamma^t gamma=0.7; alpha=gamma.^i; % Move all the particles to new locations [xn,yn]=pso_move(xn,yn,xo,yo,alpha,beta,range); drawnow; % Use "hold on" to display paths of particles hold off; % History best(i,1)=xo; best(i,2)=yo; best(i,3)=zo; end %%%%% end of iterations % ----- All subfunctions are listed here ----- % Intial locations of n particles function [xn,yn]=init_pso(n,range) xrange=range(2)-range(1); yrange=range(4)-range(3); xn=rand(1,n)*xrange+range(1); yn=rand(1,n)*yrange+range(3); % Move all the particles toward (xo,yo) function [xn,yn]=pso_move(xn,yn,xo,yo,a,b,range) nn=size(yn,2); %a=alpha, b=beta xn=xn.*(1-b)+xo.*b+a.*(rand(1,nn)-0.5); yn=yn.*(1-b)+yo.*b+a.*(rand(1,nn)-0.5); [xn,yn]=findrange(xn,yn,range); % Make sure the particles are within the range function [xn,yn]=findrange(xn,yn,range) nn=length(yn); for i=1:nn, if xn(i)<=range(1), xn(i)=range(1); end if xn(i)>=range(2), xn(i)=range(2); end if yn(i)<=range(3), yn(i)=range(3); end if yn(i)>=range(4), yn(i)=range(4); end end |
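For readers without MATLAB, the following NumPy sketch ports the numerical core of the demo, leaving out the plotting. Note that `pso_move` is not the two-formula update from section 1: it is the accelerated variant in which each particle jumps toward the best particle of the current iteration, x ← (1 − β)x + β·x_o + α(rand − 0.5), with α = 0.7^t shrinking the random step. The Python names and the `seed` parameter are our own assumptions; ties for the current best are broken by index here, whereas the MATLAB code takes `min` over the tied coordinates.

```python
import numpy as np


def michalewicz2(x, y):
    """2-D Michalewicz function from the demo; accepts scalars or arrays."""
    return (-np.sin(x) * np.sin(x ** 2 / np.pi) ** 20
            - np.sin(y) * np.sin(2 * y ** 2 / np.pi) ** 20)


def apso_move(xn, yn, xo, yo, a, b, bounds, rng):
    """Port of pso_move + findrange: move toward (xo, yo), then clamp."""
    n = yn.size
    xn = xn * (1 - b) + xo * b + a * (rng.random(n) - 0.5)
    yn = yn * (1 - b) + yo * b + a * (rng.random(n) - 0.5)
    xmin, xmax, ymin, ymax = bounds
    return np.clip(xn, xmin, xmax), np.clip(yn, ymin, ymax)


def apso_demo(n=20, num_iterations=10, bounds=(0, 4, 0, 4),
              beta=0.5, gamma=0.7, seed=None):
    """Same loop as pso_simpledemo.m without plotting; returns best history."""
    rng = np.random.default_rng(seed)
    xmin, xmax, ymin, ymax = bounds
    # init_pso: uniform random starting positions inside the box
    xn = xmin + rng.random(n) * (xmax - xmin)
    yn = ymin + rng.random(n) * (ymax - ymin)
    best = np.zeros((num_iterations, 3))
    for i in range(1, num_iterations + 1):
        zn = michalewicz2(xn, yn)
        k = np.argmin(zn)  # best particle of the current iteration
        xo, yo, zo = xn[k], yn[k], zn[k]
        alpha = gamma ** i  # random step shrinks as 0.7^t
        xn, yn = apso_move(xn, yn, xo, yo, alpha, beta, bounds, rng)
        best[i - 1] = (xo, yo, zo)
    return best


print(michalewicz2(2.20319, 1.57049))  # about -1.8013
print(apso_demo(seed=1)[-1])
```

Because `best` records the best particle of each iteration rather than the best seen so far, its last row is not guaranteed to be the overall minimum.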

Experimental results:

References:

Introduction to Mathematical Optimization (Xin-She Yang)
