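Preface

Hardware requirements

Every machine needs at least 2 CPU cores; otherwise the installation cannot succeed.

This guide uses 3 servers: one master and two worker nodes.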
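The CPU requirement can be checked before anything is installed. The following is a minimal sketch, assuming a Linux host where `nproc` is available; the function name `check_min_cpus` is hypothetical and not part of any Kubernetes tool.

```shell
#!/usr/bin/env bash
# check_min_cpus COUNT [MINIMUM]
# Succeeds when COUNT is at least MINIMUM (default 2, the requirement stated above).
check_min_cpus() {
  local count="$1"
  local minimum="${2:-2}"
  if [ "$count" -ge "$minimum" ]; then
    echo "OK: $count CPU core(s)"
  else
    echo "FAIL: $count CPU core(s), need at least $minimum"
    return 1
  fi
}
```

On each of the three machines it could be called as `check_min_cpus "$(nproc)"`.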

I. Installing Docker

Download the following 4 packages:

containerd.io-1.2.6-3.3.el7.x86_64.rpm

container-selinux-2.119.2-1.911c772.el7_8.noarch.rpm

docker-ce-19.03.9-3.el7.x86_64.rpm

docker-ce-cli-19.03.9-3.el7.x86_64.rpm

Install them:

rpm -ivh --nodeps --force containerd.io-1.2.6-3.3.el7.x86_64.rpm container-selinux-2.119.2-1.911c772.el7_8.noarch.rpm docker-ce-19.03.9-3.el7.x86_64.rpm docker-ce-cli-19.03.9-3.el7.x86_64.rpm

systemctl daemon-reload

systemctl enable docker

systemctl restart docker

docker --version

II. Environment configuration

1. Configure /etc/hosts

cat >> /etc/hosts << EOF

192.168.43.86 k8s-master

192.168.43.87 k8s-node1

192.168.43.88 k8s-node2

EOF
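Because `cat >>` appends, running the step above twice leaves duplicate entries in /etc/hosts. The sketch below shows one way to make the step repeatable; `add_host_entry` is a hypothetical helper name, and the file path is passed in so it can be tried on a scratch file first.

```shell
#!/usr/bin/env bash
# add_host_entry FILE IP NAME
# Appends "IP NAME" to FILE unless FILE already maps IP to NAME.
add_host_entry() {
  local file="$1" ip="$2" name="$3"
  # awk exits 0 only if a line starts with the IP and lists the name after it
  if awk -v ip="$ip" -v name="$name" \
      '$1 == ip { for (i = 2; i <= NF; i++) if ($i == name) found = 1 } END { exit !found }' \
      "$file" 2>/dev/null; then
    return 0
  fi
  printf '%s %s\n' "$ip" "$name" >> "$file"
}

# Example (as root), using the addresses from this guide:
#   add_host_entry /etc/hosts 192.168.43.86 k8s-master
#   add_host_entry /etc/hosts 192.168.43.87 k8s-node1
#   add_host_entry /etc/hosts 192.168.43.88 k8s-node2
```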

2. Verify that the product UUID is unique on every machine

cat /sys/class/dmi/id/product_uuid
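Comparing three UUIDs by eye is error-prone, especially on cloned virtual machines. As a sketch, the values can be collected as "hostname uuid" pairs and checked for repeats; `find_duplicate_uuids` is a hypothetical name, and the SSH loop in the comment assumes key-based root access between the hosts, which this guide does not set up.

```shell
#!/usr/bin/env bash
# find_duplicate_uuids
# Reads "hostname uuid" pairs on stdin and prints every UUID that occurs more than once.
# UUIDs are upper-cased first so that case differences do not hide a duplicate.
find_duplicate_uuids() {
  awk '{ print toupper($2) }' | sort | uniq -d
}

# Example (assumes SSH access to each host):
#   for h in k8s-master k8s-node1 k8s-node2; do
#     echo "$h $(ssh "$h" cat /sys/class/dmi/id/product_uuid)"
#   done | find_duplicate_uuids
```

Empty output means all UUIDs are distinct.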

3. Disable swap

swapoff -a

sed -i.bak '/swap/s/^/#/' /etc/fstab
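The sed expression above prefixes every line containing "swap" with `#`, so running it a second time adds a second `#`. The sketch below is a variant that leaves already-commented lines alone; it works on standard input so the effect can be previewed before touching /etc/fstab. The function name `comment_swap_lines` is hypothetical.

```shell
#!/usr/bin/env bash
# comment_swap_lines
# Reads fstab content on stdin and comments out lines that mention "swap",
# skipping lines that are already comments.
comment_swap_lines() {
  sed '/^[[:space:]]*#/!{/swap/s/^/#/;}'
}

# Preview the result without modifying the file:
#   comment_swap_lines < /etc/fstab
# Confirm that no swap is active after swapoff -a (the Swap line should show 0):
#   free -m
```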

4. Set the kernel parameters

sysctl net.bridge.bridge-nf-call-iptables=1

sysctl net.bridge.bridge-nf-call-ip6tables=1

cat > /etc/sysctl.d/k8s.conf << EOF

net.bridge.bridge-nf-call-ip6tables = 1

net.bridge.bridge-nf-call-iptables = 1

EOF

sysctl -p /etc/sysctl.d/k8s.conf
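If sysctl reports that the net.bridge keys do not exist, the likely cause is that the br_netfilter kernel module is not loaded, since those keys only appear once it is. The sketch below wraps the file creation in a function so it can be tried on a scratch path; `write_k8s_sysctl` is a hypothetical name, and the commented sequence is an assumption about how the step would be run as root.

```shell
#!/usr/bin/env bash
# write_k8s_sysctl FILE
# Writes the two bridge settings from this section to FILE, replacing its content.
write_k8s_sysctl() {
  cat > "$1" << 'EOF'
net.bridge.bridge-nf-call-ip6tables = 1
net.bridge.bridge-nf-call-iptables = 1
EOF
}

# On a real host (as root) the sequence would be:
#   modprobe br_netfilter                      # makes the net.bridge.* keys available
#   write_k8s_sysctl /etc/sysctl.d/k8s.conf
#   sysctl -p /etc/sysctl.d/k8s.conf
```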

5. Change the cgroup driver

Write daemon.json:

cat > /etc/docker/daemon.json << EOF

{ "registry-mirrors": ["https://v16stybc.mirror.aliyuncs.com"],

"exec-opts": ["native.cgroupdriver=systemd"]

}

EOF

6. Reload Docker

systemctl daemon-reload

systemctl restart docker

If the restart fails with an error like this:

systemctl restart docker

Job for docker.service failed because the control process exited with error code. See "systemctl status docker.service" and "journalctl -xe" for details.

the cause is a misconfigured /etc/docker/daemon.json.
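A quick way to rule out a syntax error is to parse the file before restarting Docker. The sketch below uses Python's standard `json.tool` module; `validate_json` is a hypothetical helper name, and the assumption is that either `python3` or `python` is installed (CentOS 7 provides Python 2 as `python`).

```shell
#!/usr/bin/env bash
# validate_json FILE
# Succeeds when FILE parses as JSON; returns 2 if no Python interpreter is found.
validate_json() {
  local file="$1" py
  for py in python3 python; do
    if command -v "$py" >/dev/null 2>&1; then
      "$py" -m json.tool "$file" >/dev/null 2>&1
      return $?
    fi
  done
  echo "no python interpreter found" >&2
  return 2
}

# Example:
#   validate_json /etc/docker/daemon.json && systemctl restart docker
```

This only catches malformed JSON; an option that Docker does not recognize still has to be found through `journalctl -u docker`.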

7. Configure DNS

Append the name servers to /etc/resolv.conf:

cat >> /etc/resolv.conf << EOF

nameserver 8.8.8.8

nameserver 114.114.114.114

EOF

III. Installing the Kubernetes packages

1. Configure the yum repositories

Create /etc/yum.repos.d/kubernetes.repo:

cat > /etc/yum.repos.d/kubernetes.repo << EOF

[kubernetes]

name=Kubernetes

baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64/

enabled=1

gpgcheck=1

repo_gpgcheck=1

gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg

EOF

yum clean all

yum -y makecache

cd /etc/yum.repos.d/

wget -O /etc/yum.repos.d/CentOS-Base.repo http://mirrors.aliyun.com/repo/Centos-7.repo

sed -i 's/$releasever/7/g' /etc/yum.repos.d/CentOS-Base.repo

yum repolist

2. Install kubelet, kubeadm and kubectl

yum install -y kubelet-1.21.1-0.x86_64 kubeadm-1.21.1-0.x86_64 kubectl-1.21.1-0.x86_64

3. Start kubelet and enable kubectl completion

systemctl enable kubelet && systemctl start kubelet

echo "source <(kubectl completion bash)" >> ~/.bash_profile

source ~/.bash_profile

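IV. Pulling the Kubernetes images

1. Configure public DNS; the servers must be able to reach the domestic (mainland China) network

Append the name servers to /etc/resolv.conf (skip this if it was already done in section II):

cat >> /etc/resolv.conf << EOF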

nameserver 8.8.8.8

nameserver 114.114.114.114

EOF

2. Pull the images manually from the Aliyun mirror, then retag them with the k8s.gcr.io names

iptables -F

setenforce 0

docker pull registry.aliyuncs.com/google_containers/etcd:3.4.13-0

docker tag registry.aliyuncs.com/google_containers/etcd:3.4.13-0 k8s.gcr.io/etcd:3.4.13-0

docker pull registry.aliyuncs.com/google_containers/kube-apiserver:v1.21.1

docker tag registry.aliyuncs.com/google_containers/kube-apiserver:v1.21.1 k8s.gcr.io/kube-apiserver:v1.21.1

docker pull registry.aliyuncs.com/google_containers/kube-proxy:v1.21.1

docker tag registry.aliyuncs.com/google_containers/kube-proxy:v1.21.1 k8s.gcr.io/kube-proxy:v1.21.1

docker pull registry.aliyuncs.com/google_containers/kube-controller-manager:v1.21.1

docker tag registry.aliyuncs.com/google_containers/kube-controller-manager:v1.21.1 k8s.gcr.io/kube-controller-manager:v1.21.1

docker pull registry.aliyuncs.com/google_containers/kube-scheduler:v1.21.1

docker tag registry.aliyuncs.com/google_containers/kube-scheduler:v1.21.1 k8s.gcr.io/kube-scheduler:v1.21.1

docker pull registry.aliyuncs.com/google_containers/pause:3.4.1

docker tag registry.aliyuncs.com/google_containers/pause:3.4.1 k8s.gcr.io/pause:3.4.1

docker pull registry.aliyuncs.com/google_containers/coredns:1.8.0

docker tag registry.aliyuncs.com/google_containers/coredns:1.8.0 k8s.gcr.io/coredns/coredns:1.8.0
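The pull and tag pairs above follow one pattern, with coredns as the only exception (its target name has an extra path level). The sketch below generates the commands from a list instead of typing each pair; `mirror_commands` is a hypothetical helper that only prints commands and executes nothing. The names and tags kubeadm expects can be listed with `kubeadm config images list --kubernetes-version=v1.21.1`, which is worth comparing against the tags used here before running `kubeadm init`.

```shell
#!/usr/bin/env bash
# mirror_commands MIRROR TARGET IMAGE...
# Prints a "docker pull" and a "docker tag" command for each IMAGE (name:tag).
mirror_commands() {
  local mirror="$1" target="$2" image
  shift 2
  for image in "$@"; do
    echo "docker pull $mirror/$image"
    # coredns sits one level deeper in the target registry, as in the tag command above
    case "$image" in
      coredns:*) echo "docker tag $mirror/$image $target/coredns/$image" ;;
      *)         echo "docker tag $mirror/$image $target/$image" ;;
    esac
  done
}

# Example: print the commands for review, then pipe to sh to run them.
#   mirror_commands registry.aliyuncs.com/google_containers k8s.gcr.io \
#     etcd:3.4.13-0 kube-apiserver:v1.21.1 kube-proxy:v1.21.1 \
#     kube-controller-manager:v1.21.1 kube-scheduler:v1.21.1 \
#     pause:3.4.1 coredns:1.8.0 | sh
```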

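(The 2 worker nodes only need to follow the steps up to this point; the remaining steps in this section run on the master, and the nodes join the cluster later.)

3. Initialize Kubernetes

kubeadm init --apiserver-advertise-address 192.168.43.86 --ignore-preflight-errors=Swap --pod-network-cidr=10.244.0.0/16 --kubernetes-version=v1.21.1

4. Record the information printed after initialization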

kubeadm join 192.168.43.86:6443 --token 29iwyn.9c150hb8kifexisc \

--discovery-token-ca-cert-hash sha256:eb3ee0d3206dae7e7d435d414c98a034ec39ca4a2e458394ec92cdc835832b10

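Save the kubeadm join command from the output; it is needed later to add each node to the cluster.

5. Useful commands

docker images: list images

docker rmi <image name>: remove an image

Start kubelet:

systemctl enable kubelet && systemctl start kubelet

6. Check the cluster

kubectl needs a kubeconfig first: as the kubeadm init output instructs, copy /etc/kubernetes/admin.conf to $HOME/.kube/config (or, as root, export KUBECONFIG=/etc/kubernetes/admin.conf).

kubectl get pods --all-namespaces -o wide

The output shows 2 pods in Pending state instead of Running: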

NAMESPACE NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

kube-system coredns-558bd4d5db-7jgbp 1/1 pending 0 33m 10.244.0.3 k8s-master

kube-system coredns-558bd4d5db-shx9b 1/1 pending 0 33m 10.244.0.2 k8s-master

kube-system etcd-k8s-master 1/1 Running 0 33m 192.168.43.86 k8s-master

kube-system kube-apiserver-k8s-master 1/1 Running 0 33m 192.168.43.86 k8s-master

kube-system kube-controller-manager-k8s-master 1/1 Running 0 33m 192.168.43.86 k8s-master

kube-system kube-proxy-6ndvm 1/1 Running 0 33m 192.168.43.86 k8s-master

kube-system kube-scheduler-k8s-master 1/1 Running 0 33m 192.168.43.86 k8s-master

7. Install the flannel plugin (kube-flannel.yml)

At this point the network plugin has to be installed. Create the manifest:

vi kube-flannel.yml

apiVersion: policy/v1beta1
kind: PodSecurityPolicy
metadata:
  name: psp.flannel.unprivileged
  annotations:
    seccomp.security.alpha.kubernetes.io/allowedProfileNames: docker/default
    seccomp.security.alpha.kubernetes.io/defaultProfileName: docker/default
    apparmor.security.beta.kubernetes.io/allowedProfileNames: runtime/default
    apparmor.security.beta.kubernetes.io/defaultProfileName: runtime/default
spec:
  privileged: false
  volumes:
    - configMap
    - secret
    - emptyDir
    - hostPath
  allowedHostPaths:
    - pathPrefix: "/etc/cni/net.d"
    - pathPrefix: "/etc/kube-flannel"
    - pathPrefix: "/run/flannel"
  readOnlyRootFilesystem: false
  # Users and groups
  runAsUser:
    rule: RunAsAny
  supplementalGroups:
    rule: RunAsAny
  fsGroup:
    rule: RunAsAny
  # Privilege Escalation
  allowPrivilegeEscalation: false
  defaultAllowPrivilegeEscalation: false
  # Capabilities
  allowedCapabilities: ['NET_ADMIN']
  defaultAddCapabilities: []
  requiredDropCapabilities: []
  # Host namespaces
  hostPID: false
  hostIPC: false
  hostNetwork: true
  hostPorts:
  - min: 0
    max: 65535
  # SELinux
  seLinux:
    # SELinux is unused in CaaSP
    rule: 'RunAsAny'
---
kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1beta1
metadata:
  name: flannel
rules:
  - apiGroups: ['extensions']
    resources: ['podsecuritypolicies']
    verbs: ['use']
    resourceNames: ['psp.flannel.unprivileged']
  - apiGroups:
      - ""
    resources:
      - pods
    verbs:
      - get
  - apiGroups:
      - ""
    resources:
      - nodes
    verbs:
      - list
      - watch
  - apiGroups:
      - ""
    resources:
      - nodes/status
    verbs:
      - patch
---
kind: ClusterRoleBinding
apiVersion: rbac.authorization.k8s.io/v1beta1
metadata:
  name: flannel
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: flannel
subjects:
- kind: ServiceAccount
  name: flannel
  namespace: kube-system
---
apiVersion: v1
kind: ServiceAccount
metadata:
  name: flannel
  namespace: kube-system
---
kind: ConfigMap
apiVersion: v1
metadata:
  name: kube-flannel-cfg
  namespace: kube-system
  labels:
    tier: node
    app: flannel
data:
  cni-conf.json: |
    {
      "name": "cbr0",
      "cniVersion": "0.3.1",
      "plugins": [
        {
          "type": "flannel",
          "delegate": {
            "hairpinMode": true,
            "isDefaultGateway": true
          }
        },
        {
          "type": "portmap",
          "capabilities": {
            "portMappings": true
          }
        }
      ]
    }
  net-conf.json: |
    {
      "Network": "10.244.0.0/16",
      "Backend": {
        "Type": "vxlan"
      }
    }

---

apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: kube-flannel-ds-amd64
  namespace: kube-system
  labels:
    tier: node
    app: flannel
spec:
  selector:
    matchLabels:
      app: flannel
  template:
    metadata:
      labels:
        tier: node
        app: flannel
    spec:
      affinity:
        nodeAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            nodeSelectorTerms:
              - matchExpressions:
                  - key: beta.kubernetes.io/os
                    operator: In
                    values:
                      - linux
                  - key: beta.kubernetes.io/arch
                    operator: In
                    values:
                      - amd64
      hostNetwork: true
      tolerations:
      - operator: Exists
        effect: NoSchedule
      serviceAccountName: flannel
      initContainers:
      - name: install-cni
        image: quay.io/coreos/flannel:v0.11.0-amd64
        command:
        - cp
        args:
        - -f
        - /etc/kube-flannel/cni-conf.json
        - /etc/cni/net.d/10-flannel.conflist
        volumeMounts:
        - name: cni
          mountPath: /etc/cni/net.d
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      containers:
      - name: kube-flannel
        image: quay.io/coreos/flannel:v0.11.0-amd64
        command:
        - /opt/bin/flanneld
        args:
        - --ip-masq
        - --kube-subnet-mgr
        resources:
          requests:
            cpu: "100m"
            memory: "50Mi"
          limits:
            cpu: "100m"
            memory: "50Mi"
        securityContext:
          privileged: false
          capabilities:
            add: ["NET_ADMIN"]
        env:
        - name: POD_NAME
          valueFrom:
            fieldRef:
              fieldPath: metadata.name
        - name: POD_NAMESPACE
          valueFrom:
            fieldRef:
              fieldPath: metadata.namespace
        volumeMounts:
        - name: run
          mountPath: /run/flannel
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      volumes:
        - name: run
          hostPath:
            path: /run/flannel
        - name: cni
          hostPath:
            path: /etc/cni/net.d
        - name: flannel-cfg
          configMap:
            name: kube-flannel-cfg

---

apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: kube-flannel-ds-arm64
  namespace: kube-system
  labels:
    tier: node
    app: flannel
spec:
  selector:
    matchLabels:
      app: flannel
  template:
    metadata:
      labels:
        tier: node
        app: flannel
    spec:
      affinity:
        nodeAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            nodeSelectorTerms:
              - matchExpressions:
                  - key: beta.kubernetes.io/os
                    operator: In
                    values:
                      - linux
                  - key: beta.kubernetes.io/arch
                    operator: In
                    values:
                      - arm64
      hostNetwork: true
      tolerations:
      - operator: Exists
        effect: NoSchedule
      serviceAccountName: flannel
      initContainers:
      - name: install-cni
        image: quay.io/coreos/flannel:v0.11.0-arm64
        command:
        - cp
        args:
        - -f
        - /etc/kube-flannel/cni-conf.json
        - /etc/cni/net.d/10-flannel.conflist
        volumeMounts:
        - name: cni
          mountPath: /etc/cni/net.d
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      containers:
      - name: kube-flannel
        image: quay.io/coreos/flannel:v0.11.0-arm64
        command:
        - /opt/bin/flanneld
        args:
        - --ip-masq
        - --kube-subnet-mgr
        resources:
          requests:
            cpu: "100m"
            memory: "50Mi"
          limits:
            cpu: "100m"
            memory: "50Mi"
        securityContext:
          privileged: false
          capabilities:
            add: ["NET_ADMIN"]
        env:
        - name: POD_NAME
          valueFrom:
            fieldRef:
              fieldPath: metadata.name
        - name: POD_NAMESPACE
          valueFrom:
            fieldRef:
              fieldPath: metadata.namespace
        volumeMounts:
        - name: run
          mountPath: /run/flannel
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      volumes:
        - name: run
          hostPath:
            path: /run/flannel
        - name: cni
          hostPath:
            path: /etc/cni/net.d
        - name: flannel-cfg
          configMap:
            name: kube-flannel-cfg

---

apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: kube-flannel-ds-arm
  namespace: kube-system
  labels:
    tier: node
    app: flannel
spec:
  selector:
    matchLabels:
      app: flannel
  template:
    metadata:
      labels:
        tier: node
        app: flannel
    spec:
      affinity:
        nodeAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            nodeSelectorTerms:
              - matchExpressions:
                  - key: beta.kubernetes.io/os
                    operator: In
                    values:
                      - linux
                  - key: beta.kubernetes.io/arch
                    operator: In
                    values:
                      - arm
      hostNetwork: true
      tolerations:
      - operator: Exists
        effect: NoSchedule
      serviceAccountName: flannel
      initContainers:
      - name: install-cni
        image: quay.io/coreos/flannel:v0.11.0-arm
        command:
        - cp
        args:
        - -f
        - /etc/kube-flannel/cni-conf.json
        - /etc/cni/net.d/10-flannel.conflist
        volumeMounts:
        - name: cni
          mountPath: /etc/cni/net.d
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      containers:
      - name: kube-flannel
        image: quay.io/coreos/flannel:v0.11.0-arm
        command:
        - /opt/bin/flanneld
        args:
        - --ip-masq
        - --kube-subnet-mgr
        resources:
          requests:
            cpu: "100m"
            memory: "50Mi"
          limits:
            cpu: "100m"
            memory: "50Mi"
        securityContext:
          privileged: false
          capabilities:
            add: ["NET_ADMIN"]
        env:
        - name: POD_NAME
          valueFrom:
            fieldRef:
              fieldPath: metadata.name
        - name: POD_NAMESPACE
          valueFrom:
            fieldRef:
              fieldPath: metadata.namespace
        volumeMounts:
        - name: run
          mountPath: /run/flannel
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      volumes:
        - name: run
          hostPath:
            path: /run/flannel
        - name: cni
          hostPath:
            path: /etc/cni/net.d
        - name: flannel-cfg
          configMap:
            name: kube-flannel-cfg

---

apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: kube-flannel-ds-ppc64le
  namespace: kube-system
  labels:
    tier: node
    app: flannel
spec:
  selector:
    matchLabels:
      app: flannel
  template:
    metadata:
      labels:
        tier: node
        app: flannel
    spec:
      affinity:
        nodeAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            nodeSelectorTerms:
              - matchExpressions:
                  - key: beta.kubernetes.io/os
                    operator: In
                    values:
                      - linux
                  - key: beta.kubernetes.io/arch
                    operator: In
                    values:
                      - ppc64le
      hostNetwork: true
      tolerations:
      - operator: Exists
        effect: NoSchedule
      serviceAccountName: flannel
      initContainers:
      - name: install-cni
        image: quay.io/coreos/flannel:v0.11.0-ppc64le
        command:
        - cp
        args:
        - -f
        - /etc/kube-flannel/cni-conf.json
        - /etc/cni/net.d/10-flannel.conflist
        volumeMounts:
        - name: cni
          mountPath: /etc/cni/net.d
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      containers:
      - name: kube-flannel
        image: quay.io/coreos/flannel:v0.11.0-ppc64le
        command:
        - /opt/bin/flanneld
        args:
        - --ip-masq
        - --kube-subnet-mgr
        resources:
          requests:
            cpu: "100m"
            memory: "50Mi"
          limits:
            cpu: "100m"
            memory: "50Mi"
        securityContext:
          privileged: false
          capabilities:
            add: ["NET_ADMIN"]
        env:
        - name: POD_NAME
          valueFrom:
            fieldRef:
              fieldPath: metadata.name
        - name: POD_NAMESPACE
          valueFrom:
            fieldRef:
              fieldPath: metadata.namespace
        volumeMounts:
        - name: run
          mountPath: /run/flannel
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      volumes:
        - name: run
          hostPath:
            path: /run/flannel
        - name: cni
          hostPath:
            path: /etc/cni/net.d
        - name: flannel-cfg
          configMap:
            name: kube-flannel-cfg

---

apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: kube-flannel-ds-s390x
  namespace: kube-system
  labels:
    tier: node
    app: flannel
spec:
  selector:
    matchLabels:
      app: flannel
  template:
    metadata:
      labels:
        tier: node
        app: flannel
    spec:
      affinity:
        nodeAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            nodeSelectorTerms:
              - matchExpressions:
                  - key: beta.kubernetes.io/os
                    operator: In
                    values:
                      - linux
                  - key: beta.kubernetes.io/arch
                    operator: In
                    values:
                      - s390x
      hostNetwork: true
      tolerations:
      - operator: Exists
        effect: NoSchedule
      serviceAccountName: flannel
      initContainers:
      - name: install-cni
        image: quay.io/coreos/flannel:v0.11.0-s390x
        command:
        - cp
        args:
        - -f
        - /etc/kube-flannel/cni-conf.json
        - /etc/cni/net.d/10-flannel.conflist
        volumeMounts:
        - name: cni
          mountPath: /etc/cni/net.d
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      containers:
      - name: kube-flannel
        image: quay.io/coreos/flannel:v0.11.0-s390x
        command:
        - /opt/bin/flanneld
        args:
        - --ip-masq
        - --kube-subnet-mgr
        resources:
          requests:
            cpu: "100m"
            memory: "50Mi"
          limits:
            cpu: "100m"
            memory: "50Mi"
        securityContext:
          privileged: false
          capabilities:
            add: ["NET_ADMIN"]
        env:
        - name: POD_NAME
          valueFrom:
            fieldRef:
              fieldPath: metadata.name
        - name: POD_NAMESPACE
          valueFrom:
            fieldRef:
              fieldPath: metadata.namespace
        volumeMounts:
        - name: run
          mountPath: /run/flannel
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      volumes:
        - name: run
          hostPath:
            path: /run/flannel
        - name: cni
          hostPath:
            path: /etc/cni/net.d
        - name: flannel-cfg
          configMap:
            name: kube-flannel-cfg

8. Apply the plugin

kubectl apply -f kube-flannel.yml

9. Verify

The installation succeeded if every pod is Running:

kubectl get pods --all-namespaces -o wide

NAMESPACE NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

kube-system coredns-558bd4d5db-7jgbp 1/1 Running 0 33m 10.244.0.3 k8s-master

kube-system coredns-558bd4d5db-shx9b 1/1 Running 0 33m 10.244.0.2 k8s-master

kube-system etcd-k8s-master 1/1 Running 0 33m 192.168.43.86 k8s-master

kube-system kube-apiserver-k8s-master 1/1 Running 0 33m 192.168.43.86 k8s-master

kube-system kube-controller-manager-k8s-master 1/1 Running 0 33m 192.168.43.86 k8s-master

kube-system kube-flannel-ds-amd64-tnxmc 1/1 Running 0 8m 192.168.43.86 k8s-master

kube-system kube-proxy-6ndvm 1/1 Running 0 33m 192.168.43.86 k8s-master

kube-system kube-scheduler-k8s-master 1/1 Running 0 33m 192.168.43.86 k8s-master
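Scanning the STATUS column by eye gets harder as the pod list grows. As a sketch, the listing can be filtered down to the pods that are not yet Running; `not_running_pods` is a hypothetical helper, and it assumes the column layout shown above (NAMESPACE, NAME, READY, STATUS, ...), which is what `--all-namespaces` produces.

```shell
#!/usr/bin/env bash
# not_running_pods
# Reads "kubectl get pods --all-namespaces" output on stdin and prints
# "namespace/name status" for every pod whose STATUS is not Running.
not_running_pods() {
  awk 'NR > 1 && NF >= 4 && $4 != "Running" { print $1 "/" $2, $4 }'
}

# Example:
#   kubectl get pods --all-namespaces | not_running_pods
```

Empty output means every listed pod is Running. Pods of finished jobs (status Completed) would also be printed, which is expected.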

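10. Remove the default taint from the master node

A taint marks a node so that pods which do not tolerate the taint are not allowed to run on it. kubeadm taints the master, so by default the cluster does not schedule pods there. To allow pods on the master, remove the taint:

kubectl taint nodes --all node-role.kubernetes.io/master-

Check the taints on a node:

kubectl describe node k8s-master | grep -i taints

The taint mechanism

Syntax:

kubectl taint node [node] key=value:[effect]

where [effect] is one of: [ NoSchedule | PreferNoSchedule | NoExecute ]

NoSchedule: pods must not be scheduled onto the node

PreferNoSchedule: the scheduler tries to avoid the node

NoExecute: pods are not scheduled onto the node, and pods already running on it are evicted

Add a taint (the examples below use a node named master):

[root@master ~]# kubectl taint node master key1=value1:NoSchedule

node/master tainted

[root@master ~]# kubectl describe node master|grep -i taints

Taints: key1=value1:NoSchedule

The key is key1, the value is value1 (the value may be empty), and the effect NoSchedule means pods must not be scheduled onto the node.

Remove a taint:

[root@master ~]# kubectl taint nodes master key1-

node/master untainted

[root@master ~]# kubectl describe node master|grep -i taints

Taints: <none>

The trailing '-' removes every taint whose key is key1, regardless of effect.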
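To make the key=value:effect format concrete, the sketch below splits a taint string into its three parts and rejects effects other than the three listed above. `parse_taint` is a hypothetical helper written for illustration only; kubectl performs its own validation.

```shell
#!/usr/bin/env bash
# parse_taint SPEC
# Splits "key=value:effect" (the "=value" part is optional) and prints the parts.
# Fails when ":effect" is missing or the effect is not a known one.
parse_taint() {
  local spec="$1" effect kv key value
  case "$spec" in
    *:*) ;;
    *) echo "missing ':effect' in '$spec'" >&2; return 1 ;;
  esac
  effect="${spec##*:}"   # text after the last colon
  kv="${spec%:*}"        # text before the last colon
  key="${kv%%=*}"        # text before the first '='
  case "$kv" in
    *=*) value="${kv#*=}" ;;
    *)   value="" ;;
  esac
  case "$effect" in
    NoSchedule|PreferNoSchedule|NoExecute) ;;
    *) echo "unknown effect '$effect'" >&2; return 1 ;;
  esac
  printf 'key=%s value=%s effect=%s\n' "$key" "$value" "$effect"
}
```

For example, `parse_taint key1=value1:NoSchedule` prints `key=key1 value=value1 effect=NoSchedule`.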

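V. Installing the worker nodes (2 nodes)

1. Repeat sections I to III in full, plus steps 1 and 2 of section IV (pulling the images)

2. Join the cluster

Run the following on the master:

(1) List the tokens

kubeadm token list

If the token created during initialization has expired (tokens are valid for 24 hours by default):

(2) Create a new token

kubeadm token create

(3) Compute the CA certificate hash

openssl x509 -pubkey -in /etc/kubernetes/pki/ca.crt | openssl rsa -pubin -outform der 2>/dev/null | openssl dgst -sha256 -hex | sed 's/^.* //'

(4) Join the nodes to the cluster

Run the following on each node, substituting your own token and hash: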

kubeadm join 192.168.43.86:6443 --token whkktm.8f5gtby7ftk80557 --discovery-token-ca-cert-hash sha256:b83659d072332b493f712d962753be2146f34dc577495938533a3de7f3fdfd3c
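The join command is just the API server endpoint combined with the token from step (2) and the hash from step (3). The sketch below assembles it from those three values; `join_command` is a hypothetical helper that only prints the command. As an alternative, `kubeadm token create --print-join-command` on the master creates a token and prints a complete join command in one step.

```shell
#!/usr/bin/env bash
# join_command ENDPOINT TOKEN CA_HASH
# Prints the kubeadm join command. CA_HASH may be given with or without "sha256:".
join_command() {
  local endpoint="$1" token="$2" hash="$3"
  hash="${hash#sha256:}"   # strip the prefix if present so it is not doubled
  echo "kubeadm join $endpoint --token $token --discovery-token-ca-cert-hash sha256:$hash"
}

# Example on the master (assumes steps (2) and (3) above):
#   token="$(kubeadm token create)"
#   hash="$(openssl x509 -pubkey -in /etc/kubernetes/pki/ca.crt | openssl rsa -pubin -outform der 2>/dev/null | openssl dgst -sha256 -hex | sed 's/^.* //')"
#   join_command 192.168.43.86:6443 "$token" "$hash"
```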

VI. Installing the Dashboard (on the master node)

(1) Create the manifest kubernetes-dashboard.yaml

vi kubernetes-dashboard.yaml

# Copyright 2017 The Kubernetes Authors.
#
# Licensed under the Apache License, Version 2.0 (the "License");
# you may not use this file except in compliance with the License.
# You may obtain a copy of the License at
#
# http://www.apache.org/licenses/LICENSE-2.0
#
# Unless required by applicable law or agreed to in writing, software
# distributed under the License is distributed on an "AS IS" BASIS,
# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
# See the License for the specific language governing permissions and
# limitations under the License.

# Configuration to deploy release version of the Dashboard UI compatible with
# Kubernetes 1.8.
#
# Example usage: kubectl create -f

# ------------------- Dashboard Secret ------------------- #

apiVersion: v1
kind: Secret
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard-certs
  namespace: kube-system
type: Opaque

---
# ------------------- Dashboard Service Account ------------------- #

apiVersion: v1
kind: ServiceAccount
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
  namespace: kube-system

---
# ------------------- Dashboard Role & Role Binding ------------------- #

kind: Role
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: kubernetes-dashboard-minimal
  namespace: kube-system
rules:
  # Allow Dashboard to create 'kubernetes-dashboard2-key-holder' secret.
- apiGroups: [""]
  resources: ["secrets"]
  verbs: ["create"]
  # Allow Dashboard to create 'kubernetes-dashboard2-settings' config map.
- apiGroups: [""]
  resources: ["configmaps"]
  verbs: ["create"]
  # Allow Dashboard to get, update and delete Dashboard exclusive secrets.
- apiGroups: [""]
  resources: ["secrets"]
  resourceNames: ["kubernetes-dashboard-key-holder", "kubernetes-dashboard-certs"]
  verbs: ["get", "update", "delete"]
  # Allow Dashboard to get and update 'kubernetes-dashboard2-settings' config map.
- apiGroups: [""]
  resources: ["configmaps"]
  resourceNames: ["kubernetes-dashboard-settings"]
  verbs: ["get", "update"]
  # Allow Dashboard to get metrics from heapster.
- apiGroups: [""]
  resources: ["services"]
  resourceNames: ["heapster"]
  verbs: ["proxy"]
- apiGroups: [""]
  resources: ["services/proxy"]
  resourceNames: ["heapster", "http:heapster:", "https:heapster:"]
  verbs: ["get"]

---
apiVersion: rbac.authorization.k8s.io/v1
kind: RoleBinding
metadata:
  name: kubernetes-dashboard-minimal
  namespace: kube-system
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: Role
  name: kubernetes-dashboard-minimal
subjects:
- kind: ServiceAccount
  name: kubernetes-dashboard
  namespace: kube-system

---
# ------------------- Dashboard Deployment ------------------- #

kind: Deployment
apiVersion: apps/v1
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
  namespace: kube-system
spec:
  replicas: 1
  revisionHistoryLimit: 10
  selector:
    matchLabels:
      k8s-app: kubernetes-dashboard
  template:
    metadata:
      labels:
        k8s-app: kubernetes-dashboard
    spec:
      containers:
      - name: kubernetes-dashboard
        image: mirrorgooglecontainers/kubernetes-dashboard-amd64:v1.10.1
        ports:
        - containerPort: 8443
          protocol: TCP
        args:
          - --auto-generate-certificates
          # Uncomment the following line to manually specify Kubernetes API server Host
          # If not specified, Dashboard will attempt to auto discover the API server and connect
          # to it. Uncomment only if the default does not work.
          # - --apiserver-host=http://my-address:port
        volumeMounts:
        - name: kubernetes-dashboard-certs
          mountPath: /certs
          # Create on-disk volume to store exec logs
        - mountPath: /tmp
          name: tmp-volume
        livenessProbe:
          httpGet:
            scheme: HTTPS
            path: /
            port: 8443
          initialDelaySeconds: 30
          timeoutSeconds: 30
      volumes:
      - name: kubernetes-dashboard-certs
        secret:
          secretName: kubernetes-dashboard-certs
      - name: tmp-volume
        emptyDir: {}
      serviceAccountName: kubernetes-dashboard
      # Comment the following tolerations if Dashboard must not be deployed on master
      tolerations:
      - key: node-role.kubernetes.io/master
        effect: NoSchedule

---
# ------------------- Dashboard Service ------------------- #

kind: Service
apiVersion: v1
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
  namespace: kube-system
spec:
  type: NodePort
  ports:
    - port: 80
      targetPort: 8443
      nodePort: 30000
  selector:
    k8s-app: kubernetes-dashboard

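(2) Adjust the image and the NodePort

The manifest above already references the mirrorgooglecontainers image instead of k8s.gcr.io, and its Service already sets type: NodePort with nodePort: 30000. The two commands below are therefore only needed when starting from the upstream manifest; do not run the second one against the manifest above, because it would add duplicate nodePort and type keys.

The default image registry is not reachable, so switch to the Aliyun mirror:

sed -i 's/k8s.gcr.io/registry.cn-hangzhou.aliyuncs.com\/kuberneters/g' kubernetes-dashboard.yaml

Configure a NodePort so that the Dashboard can be reached from outside the cluster; with this command the port is 30001:

sed -i '/targetPort:/a\ \ \ \ \ \ nodePort: 30001\n\ \ type: NodePort' kubernetes-dashboard.yaml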

(3) Add an administrator account

cat >> kubernetes-dashboard.yaml << EOF
---
# ------------------- dashboard-admin ------------------- #
apiVersion: v1
kind: ServiceAccount
metadata:
  name: dashboard-admin
  namespace: kube-system
---
apiVersion: rbac.authorization.k8s.io/v1beta1
kind: ClusterRoleBinding
metadata:
  name: dashboard-admin
subjects:
- kind: ServiceAccount
  name: dashboard-admin
  namespace: kube-system
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: cluster-admin
EOF

This creates a super-administrator account (bound to cluster-admin) for logging in to the Dashboard.

(4) Deploy the Dashboard

kubectl apply -f kubernetes-dashboard.yaml

(5) Check the status

kubectl get deployment kubernetes-dashboard -n kube-system

kubectl get pods -n kube-system -o wide

kubectl get services -n kube-system

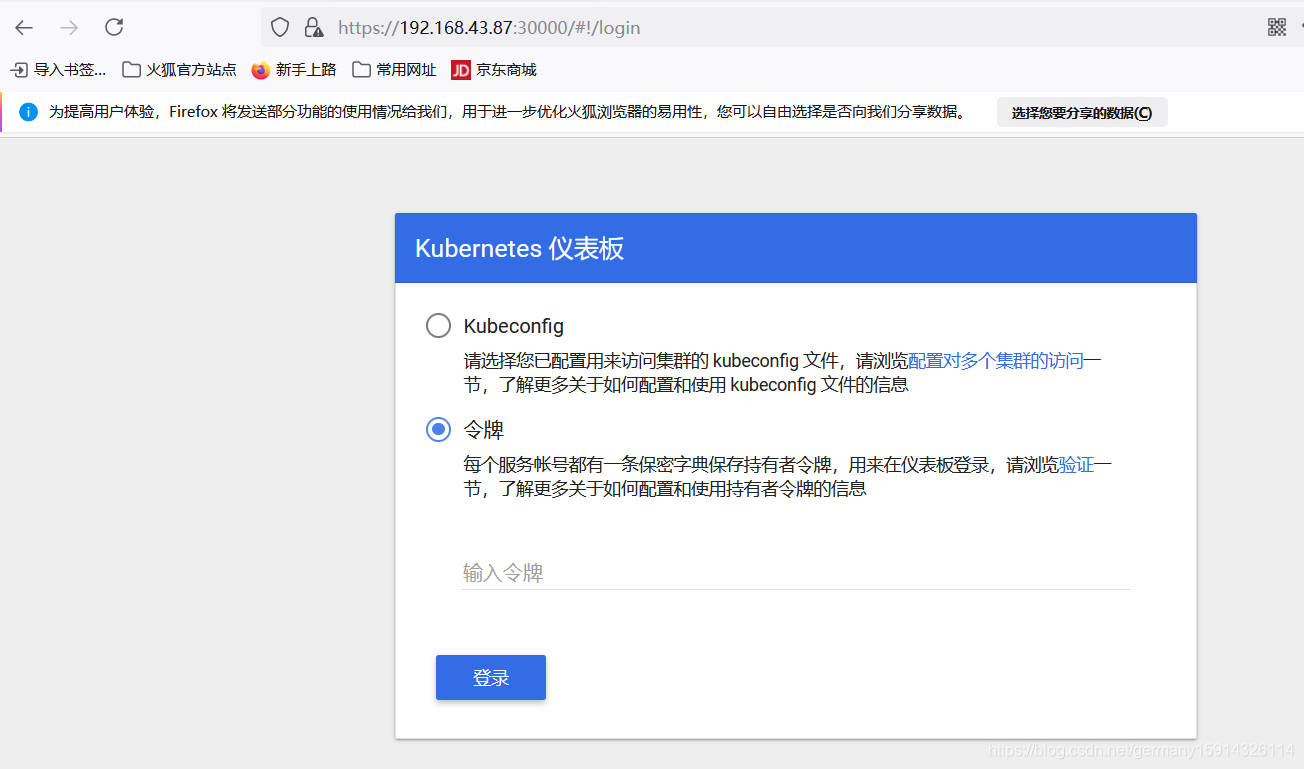

(6) Retrieve the token

kubectl describe secrets -n kube-system kubernetes-dashboard

Record the token from the output.

Note: the namespace kube-system used here can be confirmed with kubectl get deployment kubernetes-dashboard -n kube-system:

[root@k8s-master opt]# kubectl get deployment kubernetes-dashboard -n kube-system

NAME READY UP-TO-DATE AVAILABLE AGE

kubernetes-dashboard 1/1 1 1 2d20h

Then run kubectl describe secrets -n kube-system kubernetes-dashboard to view the token:

[root@k8s-master opt]# kubectl describe secrets -n kube-system kubernetes-dashboard

Name: kubernetes-dashboard-certs

Namespace: kube-system

Labels: k8s-app=kubernetes-dashboard

Annotations:

Type: Opaque

Data

====

Name: kubernetes-dashboard-key-holder

Namespace: kube-system

Labels:

Annotations:

Type: Opaque

Data

====

priv: 1675 bytes

pub: 459 bytes

Name: kubernetes-dashboard-token-jk7m9

Namespace: kube-system

Labels:

Annotations: kubernetes.io/service-account.name: kubernetes-dashboard

kubernetes.io/service-account.uid: 41202a0a-d503-46db-a40f-163a7445251c

Type: kubernetes.io/service-account-token

Data

====

token: eyJhbGciOiJSUzI1NiIsImtpZCI6Ilp1aldHREJUR01YU3dLSTBYQkZWYWRjZ0JsUkx5bGs2dG42bUZBQkhYUk0ifQ.eyJpc3MiOiJrdWJlcm5ldGVzL3NlcnZpY2VhY2NvdW50Iiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9uYW1lc3BhY2UiOiJrdWJlLXN5c3RlbSIsImt1YmVybmV0ZXMuaW8vc2VydmljZWFjY291bnQvc2VjcmV0Lm5hbWUiOiJrdWJlcm5ldGVzLWRhc2hib2FyZC10b2tlbi1qazdtOSIsImt1YmVybmV0ZXMuaW8vc2VydmljZWFjY291bnQvc2VydmljZS1hY2NvdW50Lm5hbWUiOiJrdWJlcm5ldGVzLWRhc2hib2FyZCIsImt1YmVybmV0ZXMuaW8vc2VydmljZWFjY291bnQvc2VydmljZS1hY2NvdW50LnVpZCI6IjQxMjAyYTBhLWQ1MDMtNDZkYi1hNDBmLTE2M2E3NDQ1MjUxYyIsInN1YiI6InN5c3RlbTpzZXJ2aWNlYWNjb3VudDprdWJlLXN5c3RlbTprdWJlcm5ldGVzLWRhc2hib2FyZCJ9.lRd8K6eSIIT15mPgn8H90gtXn7Zx3edFjiWxd04PJVrtUXJwmBFpbamvZY-fAn9t9QGgZXGv_Bx1BYEZ9O5Ee0NjGhR-ecv11j74pGy8auUVy6k01_Nauj2k9H1nxJTU5Ep1D3QMJnwD6gehbOSxH8UyyFu4SAcmpf_EofZro1_grEJSUOchD2YFm2jehx0S9NjgyeWZPtQC7KwgmYIJxm_t8KQ96TXRZJkRzjUCkzogRWIsRyS0FoGQt5jrK3FOqa67sUWSrV4bMbiO7OYcvpSd6VH49msiivG7uQSA11UwaXDH98GsTxKNbngqFwUcnOQWq5QgvAsl9XVxmhxSug

ca.crt: 1066 bytes

namespace: 11 bytes

The token field is the login token.

Running kubectl get services -n kube-system shows that the NodePort is 30000:

[root@k8s-master opt]# kubectl get services -n kube-system

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kube-dns ClusterIP 10.96.0.10 53/UDP,53/TCP,9153/TCP 3d22h

kubernetes-dashboard NodePort 10.99.104.138 80:30000/TCP 2d19h

Open the Dashboard through a node address:

https://192.168.43.87:30000
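In the PORT(S) column a value such as 80:30000/TCP reads as service port, then NodePort, then protocol. The sketch below extracts the NodePort from such a value; `node_port` is a hypothetical helper. The same number can also be read directly with `kubectl get service kubernetes-dashboard -n kube-system -o jsonpath='{.spec.ports[0].nodePort}'`.

```shell
#!/usr/bin/env bash
# node_port PORTS
# Prints the NodePort from a PORT(S) value such as "80:30000/TCP".
# Prints nothing when the value has no NodePort part (for example "53/UDP").
node_port() {
  echo "$1" | sed -n 's/^[0-9]*:\([0-9]*\)\/.*$/\1/p'
}

# Example: build the Dashboard URL for a node address from this guide.
#   echo "https://192.168.43.87:$(node_port 80:30000/TCP)"
```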

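Use Firefox to open the page.

Log in with the token.

The Dashboard provides cluster management, workloads, service discovery and load balancing, storage, configuration, log views and more.

VII. Testing the cluster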

1. Deploy applications

1.1 From the command line

kubectl create deployment httpd-app --image=httpd --replicas=3

This deploys the Apache httpd service from the command line.

1.2 From a manifest file

cat > nginx.yml << EOF
apiVersion: apps/v1
kind: Deployment
metadata:
  name: nginx
spec:
  replicas: 3
  selector:
    matchLabels:
      app: nginx
  template:
    metadata:
      labels:
        app: nginx
    spec:
      restartPolicy: Always
      containers:
      - name: nginx
        image: nginx:latest
EOF

kubectl apply -f nginx.yml

2. Check the status

2.1 Node status

kubectl get nodes

2.2 Pod status

kubectl get pod --all-namespaces

2.3 Replica counts

kubectl get deployments

kubectl get pod -o wide

The 3 replica pods of nginx and of httpd are spread evenly across the 3 nodes.
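The distribution can be confirmed by counting pods per node instead of reading the table. The sketch below assumes the column layout that `kubectl get pod -o wide` prints for a single namespace with this kubectl version, where NODE is the seventh column; `pods_per_node` is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# pods_per_node
# Reads "kubectl get pod -o wide" output on stdin and prints "node count" lines.
# Assumes the columns NAME READY STATUS RESTARTS AGE IP NODE ...
pods_per_node() {
  awk 'NR > 1 && NF >= 7 { count[$7]++ } END { for (n in count) print n, count[n] }' | sort
}

# Example:
#   kubectl get pod -o wide | pods_per_node
```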

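2.4 Deployment details

kubectl describe deployments

2.5 Status of the core cluster components

kubectl get cs

This completes the deployment of a k8s (v1.21.1) cluster on CentOS 7.6.

This article walks through deploying a K8s cluster on CentOS 7.6, covering hardware requirements, environment configuration and package installation, shows how installing a network plugin resolves the pod networking problem, and ends with a cluster test.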
