
Step 1: Add the Windows dependency folder by copying hadoop-3.1.0 to a path that contains no Chinese characters

You can get the hadoop-3.1.0 Windows folder from me by leaving a comment or sending a private message :)

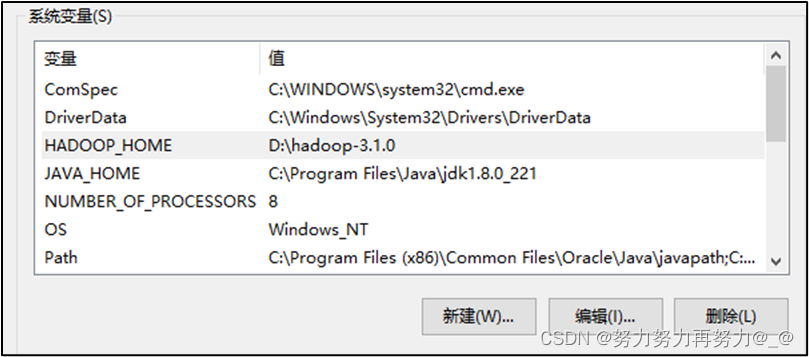

Step 2: Configure the HADOOP_HOME environment variable

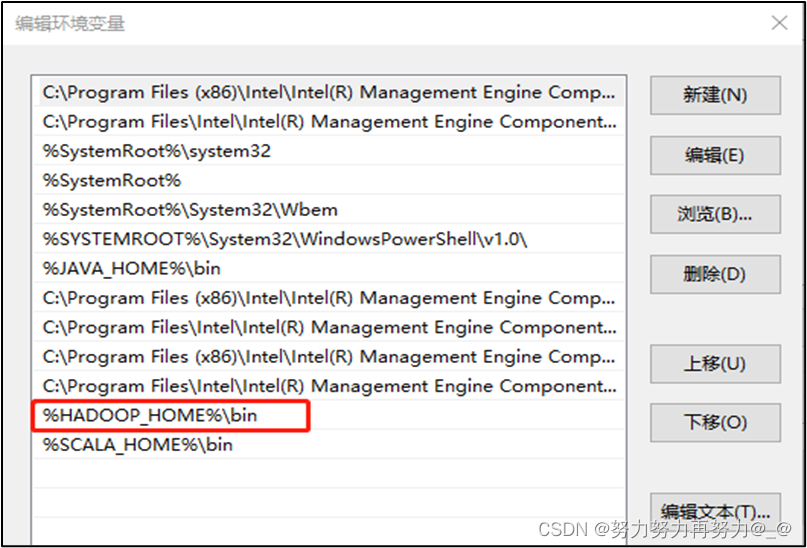
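A quick way to confirm that HADOOP_HOME points at the right folder is to check, from Java, that `bin\winutils.exe` exists under it. The sketch below uses only the JDK and assumes the folder from step 1 keeps the `bin\winutils.exe` layout; the class and method names are hypothetical.

```java
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;

// Hypothetical helper: verifies that a Hadoop home directory contains bin/winutils.exe.
final class HadoopHomeCheck {

    // Builds <home>/bin/winutils.exe using the platform's path rules.
    static Path winutilsPath(String hadoopHome) {
        return Paths.get(hadoopHome, "bin", "winutils.exe");
    }

    // True only if hadoopHome is non-empty and bin/winutils.exe is a regular file under it.
    static boolean hasWinutils(String hadoopHome) {
        if (hadoopHome == null || hadoopHome.isEmpty()) {
            return false;
        }
        return Files.isRegularFile(winutilsPath(hadoopHome));
    }

    public static void main(String[] args) {
        // Reads HADOOP_HOME as seen by this JVM process (null if not visible).
        String home = System.getenv("HADOOP_HOME");
        System.out.println("HADOOP_HOME = " + home);
        System.out.println("winutils.exe found: " + hasWinutils(home));
    }
}
```

If `main` prints `null` when run from the IDE, the IDE process was started before the variable existed.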

Step 3: Configure the Path environment variable (add %HADOOP_HOME%\bin to it)

To make sure the environment variables take effect, restarting the computer is recommended.
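Step 3 usually means appending `%HADOOP_HOME%\bin` to Path. The sketch below checks whether a Path value already contains that folder; it assumes Windows conventions (`;` as the separator, case-insensitive paths, already-expanded entries), and the class name and the sample path `D:\hadoop-3.1.0` are hypothetical. Because a running program only sees the environment it was started with, this also shows whether the restart recommended above is still needed.

```java
import java.util.Locale;

// Hypothetical helper: checks whether a Windows-style Path value contains <hadoopHome>\bin.
final class PathCheck {

    // Trims whitespace and surrounding quotes, drops trailing backslashes, lower-cases the entry.
    static String normalize(String entry) {
        String s = entry.trim();
        if (s.length() >= 2 && s.startsWith("\"") && s.endsWith("\"")) {
            s = s.substring(1, s.length() - 1);
        }
        while (s.endsWith("\\")) {
            s = s.substring(0, s.length() - 1);
        }
        return s.toLowerCase(Locale.ROOT);
    }

    // Splits the Path value on ';' (the Windows separator) and looks for <hadoopHome>\bin.
    static boolean containsHadoopBin(String pathValue, String hadoopHome) {
        if (pathValue == null || hadoopHome == null) {
            return false;
        }
        String target = normalize(hadoopHome) + "\\bin";
        for (String entry : pathValue.split(";")) {
            if (!entry.trim().isEmpty() && normalize(entry).equals(target)) {
                return true;
            }
        }
        return false;
    }

    public static void main(String[] args) {
        // Windows-oriented: on other systems PATH uses ':' and this will simply print false.
        String home = System.getenv("HADOOP_HOME");
        String path = System.getenv("PATH");
        System.out.println("Hadoop bin on Path: " + containsHadoopBin(path, home));
    }
}
```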

Step 4: Double-click winutils.exe

If double-clicking it produces an error, the Microsoft runtime library is missing.

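If you need the Microsoft runtime library, you can also get it from me by sending a private message or leaving a comment :)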

Step 5: Create a Maven project and write the code

pom.xml

```xml
<dependencies>
    <dependency>
        <groupId>org.apache.hadoop</groupId>
        <artifactId>hadoop-client</artifactId>
        <version>3.1.3</version>
    </dependency>
    <dependency>
        <groupId>junit</groupId>
        <artifactId>junit</artifactId>
        <version>4.12</version>
    </dependency>
    <dependency>
        <groupId>org.slf4j</groupId>
        <artifactId>slf4j-log4j12</artifactId>
        <version>1.7.30</version>
    </dependency>
</dependencies>
```

Step 6: Create log4j.properties in the src/main/resources directory

```properties
log4j.rootLogger=INFO, stdout
log4j.appender.stdout=org.apache.log4j.ConsoleAppender
log4j.appender.stdout.layout=org.apache.log4j.PatternLayout
log4j.appender.stdout.layout.ConversionPattern=%d %p [%c] - %m%n
log4j.appender.logfile=org.apache.log4j.FileAppender
log4j.appender.logfile.File=target/spring.log
log4j.appender.logfile.layout=org.apache.log4j.PatternLayout
log4j.appender.logfile.layout.ConversionPattern=%d %p [%c] - %m%n
```
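This file defines two appenders, `stdout` and `logfile`, but `log4j.rootLogger` only lists `stdout`, so nothing is written to `target/spring.log` unless `logfile` is added to that line (for example `log4j.rootLogger=INFO, stdout, logfile`). The JDK-only sketch below parses the same keys with `java.util.Properties` and reports which appenders are defined versus attached to the root logger; it is an illustration with a hypothetical class name, not how log4j itself reads its configuration.

```java
import java.io.IOException;
import java.io.StringReader;
import java.util.ArrayList;
import java.util.List;
import java.util.Properties;
import java.util.Set;
import java.util.TreeSet;

// Hypothetical helper: compares appenders defined in a log4j 1.x properties file
// with the ones listed on log4j.rootLogger.
final class Log4jAppenderCheck {

    // "INFO, stdout" -> [stdout]: the first item is the level, the rest are appender names.
    static List<String> rootAppenders(Properties props) {
        List<String> names = new ArrayList<>();
        String[] parts = props.getProperty("log4j.rootLogger", "").split(",");
        for (int i = 1; i < parts.length; i++) {
            String name = parts[i].trim();
            if (!name.isEmpty()) {
                names.add(name);
            }
        }
        return names;
    }

    // Collects <name> from keys of the exact form log4j.appender.<name> (no further dots).
    static Set<String> definedAppenders(Properties props) {
        Set<String> names = new TreeSet<>();
        String prefix = "log4j.appender.";
        for (String key : props.stringPropertyNames()) {
            if (key.startsWith(prefix)) {
                String rest = key.substring(prefix.length());
                if (!rest.contains(".")) {
                    names.add(rest);
                }
            }
        }
        return names;
    }

    public static void main(String[] args) throws IOException {
        String config = String.join("\n",
                "log4j.rootLogger=INFO, stdout",
                "log4j.appender.stdout=org.apache.log4j.ConsoleAppender",
                "log4j.appender.logfile=org.apache.log4j.FileAppender",
                "log4j.appender.logfile.File=target/spring.log");
        Properties props = new Properties();
        props.load(new StringReader(config));
        System.out.println("defined:  " + definedAppenders(props)); // [logfile, stdout]
        System.out.println("attached: " + rootAppenders(props));    // [stdout]
    }
}
```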

Step 7: Create a package (com.stu.hdfs here) and the HDFSClient class

```java
package com.stu.hdfs;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.FileSystem;
import org.apache.hadoop.fs.Path;
import org.junit.Test;

import java.io.IOException;
import java.net.URI;
import java.net.URISyntaxException;

public class HDFSClient {

    @Test
    public void testMkdir() throws URISyntaxException, IOException, InterruptedException {
        // NameNode address of the cluster
        URI uri = new URI("hdfs://hadoop102:8020");
        // Hadoop client configuration
        Configuration configuration = new Configuration();
        // User to act as on HDFS
        String user = "stu";

        // Get the file system client; try-with-resources closes it even if mkdirs fails
        try (FileSystem fs = FileSystem.get(uri, configuration, user)) {
            // Creates the directory, including missing parent directories
            fs.mkdirs(new Path("/xiyou/huaguoshan"));
        }
    }
}
```
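In `testMkdir`, the `URI` passed to `FileSystem.get` decides which file system the client talks to: the `hdfs` scheme selects HDFS, and the authority is the NameNode address of the author's cluster (host `hadoop102`, port `8020`). The JDK-only sketch below simply takes such a URI apart with `java.net.URI`, which can help when checking that the address typed into the code is what you expect; the class name is hypothetical.

```java
import java.net.URI;
import java.net.URISyntaxException;

// Hypothetical helper: splits an HDFS URI such as hdfs://hadoop102:8020 into its parts.
final class HdfsUriParts {

    // Returns "scheme host port"; rejects URIs that are not hdfs:// or lack a host or port.
    static String describe(String uriText) throws URISyntaxException {
        URI uri = new URI(uriText);
        if (!"hdfs".equals(uri.getScheme())) {
            throw new IllegalArgumentException("expected hdfs:// scheme: " + uriText);
        }
        if (uri.getHost() == null || uri.getPort() == -1) {
            throw new IllegalArgumentException("expected host and port: " + uriText);
        }
        return uri.getScheme() + " " + uri.getHost() + " " + uri.getPort();
    }

    public static void main(String[] args) throws URISyntaxException {
        System.out.println(describe("hdfs://hadoop102:8020")); // hdfs hadoop102 8020
    }
}
```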

Step 8: Check the result. The directory is created in HDFS as expected, but the following error is also printed:

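Restarting the computer fixes it. The error means the JVM running the test could not see HADOOP_HOME (or hadoop.home.dir), typically because the IDE was started before the environment variable was set.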

```
2022-08-18 20:11:27,842 WARN [org.apache.hadoop.util.Shell] - Did not find winutils.exe: {}
java.io.FileNotFoundException: java.io.FileNotFoundException: HADOOP_HOME and hadoop.home.dir are unset. -see https://wiki.apache.org/hadoop/WindowsProblems
    at org.apache.hadoop.util.Shell.fileNotFoundException(Shell.java:549)
    at org.apache.hadoop.util.Shell.getHadoopHomeDir(Shell.java:570)
    at org.apache.hadoop.util.Shell.getQualifiedBin(Shell.java:593)
    at org.apache.hadoop.util.Shell.<clinit>(Shell.java:690)
    at org.apache.hadoop.util.StringUtils.<clinit>(StringUtils.java:78)
    at org.apache.hadoop.fs.FileSystem$Cache$Key.<init>(FileSystem.java:3482)
    at org.apache.hadoop.fs.FileSystem$Cache$Key.<init>(FileSystem.java:3477)
    at org.apache.hadoop.fs.FileSystem$Cache.get(FileSystem.java:3319)
    at org.apache.hadoop.fs.FileSystem.get(FileSystem.java:479)
    at org.apache.hadoop.fs.FileSystem$1.run(FileSystem.java:217)
    at org.apache.hadoop.fs.FileSystem$1.run(FileSystem.java:214)
    at java.security.AccessController.doPrivileged(Native Method)
    at javax.security.auth.Subject.doAs(Subject.java:422)
    at org.apache.hadoop.security.UserGroupInformation.doAs(UserGroupInformation.java:1729)
    at org.apache.hadoop.fs.FileSystem.get(FileSystem.java:214)
    at com.stu.hdfs.HDFSClient.testMkdir(HDFSClient.java:19)
    at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)
    at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)
    at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)
    at java.lang.reflect.Method.invoke(Method.java:498)
    at org.junit.runners.model.FrameworkMethod$1.runReflectiveCall(FrameworkMethod.java:50)
    at org.junit.internal.runners.model.ReflectiveCallable.run(ReflectiveCallable.java:12)
    at org.junit.runners.model.FrameworkMethod.invokeExplosively(FrameworkMethod.java:47)
    at org.junit.internal.runners.statements.InvokeMethod.evaluate(InvokeMethod.java:17)
    at org.junit.runners.ParentRunner.runLeaf(ParentRunner.java:325)
    at org.junit.runners.BlockJUnit4ClassRunner.runChild(BlockJUnit4ClassRunner.java:78)
    at org.junit.runners.BlockJUnit4ClassRunner.runChild(BlockJUnit4ClassRunner.java:57)
    at org.junit.runners.ParentRunner$3.run(ParentRunner.java:290)
    at org.junit.runners.ParentRunner$1.schedule(ParentRunner.java:71)
    at org.junit.runners.ParentRunner.runChildren(ParentRunner.java:288)
    at org.junit.runners.ParentRunner.access$000(ParentRunner.java:58)
    at org.junit.runners.ParentRunner$2.evaluate(ParentRunner.java:268)
    at org.junit.runners.ParentRunner.run(ParentRunner.java:363)
    at org.junit.runner.JUnitCore.run(JUnitCore.java:137)
    at com.intellij.junit4.JUnit4IdeaTestRunner.startRunnerWithArgs(JUnit4IdeaTestRunner.java:69)
    at com.intellij.rt.junit.IdeaTestRunner$Repeater$1.execute(IdeaTestRunner.java:38)
    at com.intellij.rt.execution.junit.TestsRepeater.repeat(TestsRepeater.java:11)
    at com.intellij.rt.junit.IdeaTestRunner$Repeater.startRunnerWithArgs(IdeaTestRunner.java:35)
    at com.intellij.rt.junit.JUnitStarter.prepareStreamsAndStart(JUnitStarter.java:235)
    at com.intellij.rt.junit.JUnitStarter.main(JUnitStarter.java:54)
Caused by: java.io.FileNotFoundException: HADOOP_HOME and hadoop.home.dir are unset.
    at org.apache.hadoop.util.Shell.checkHadoopHomeInner(Shell.java:469)
    at org.apache.hadoop.util.Shell.checkHadoopHome(Shell.java:440)
    at org.apache.hadoop.util.Shell.<clinit>(Shell.java:517)
    ... 36 more
2022-08-18 20:11:28,269 WARN [org.apache.hadoop.util.NativeCodeLoader] - Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

Process finished with exit code 0
```
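The trace shows why a restart helps: the check runs in `Shell.<clinit>`, that is, once, when Hadoop's `Shell` class is first initialized (here triggered by `FileSystem.get`), and it looks for either the `HADOOP_HOME` environment variable or the `hadoop.home.dir` system property. A JVM started before HADOOP_HOME was set never sees the variable. An alternative that is sometimes used instead of restarting is to set `hadoop.home.dir` before the first `FileSystem.get` call. The sketch below shows only that ordering with plain JDK calls; the class name and the fallback path `D:\hadoop-3.1.0` are assumptions, and it does not load any Hadoop classes itself.

```java
// Hypothetical helper: makes sure hadoop.home.dir is set before any Hadoop class is initialized.
final class HadoopHomeFallback {

    static final String PROPERTY = "hadoop.home.dir";

    // Returns the Hadoop home this JVM will expose: the existing system property if set,
    // else HADOOP_HOME from the environment, else fallbackDir (stored into the property).
    static String ensureHadoopHomeDir(String fallbackDir) {
        String prop = System.getProperty(PROPERTY);
        if (prop != null && !prop.isEmpty()) {
            return prop;
        }
        String env = System.getenv("HADOOP_HOME");
        if (env != null && !env.isEmpty()) {
            return env;
        }
        System.setProperty(PROPERTY, fallbackDir);
        return fallbackDir;
    }

    public static void main(String[] args) {
        // Assumed install location from step 1; adjust to your own folder.
        String home = ensureHadoopHomeDir("D:\\hadoop-3.1.0");
        System.out.println("Hadoop home for this JVM: " + home);
    }
}
```

In the HDFSClient test, such a call would have to run before `FileSystem.get(...)`, for example in a JUnit `@BeforeClass` method, because `Shell` only performs the check once per JVM.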
