前言

别瞅了!认真看完你肯定行

一、打开动漫详细页面

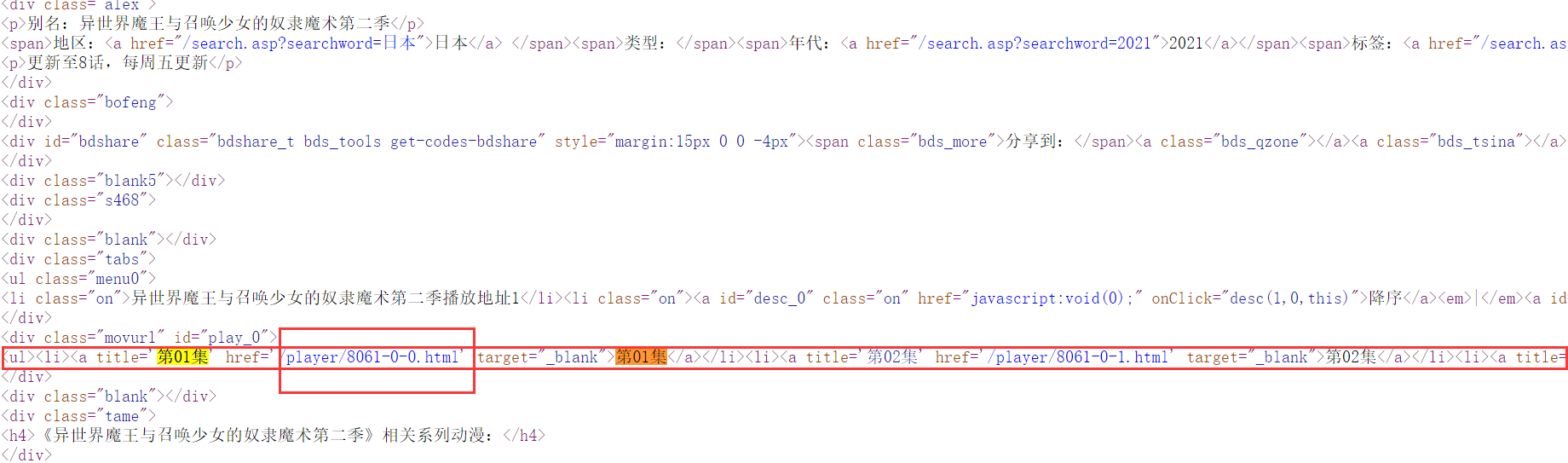

二、查看网页源码

查看网页源码搜索关词能够找到相关内容,我们可以看见详情页地址并不完整,所以我们需要出拼接出完整url

def url_parse():

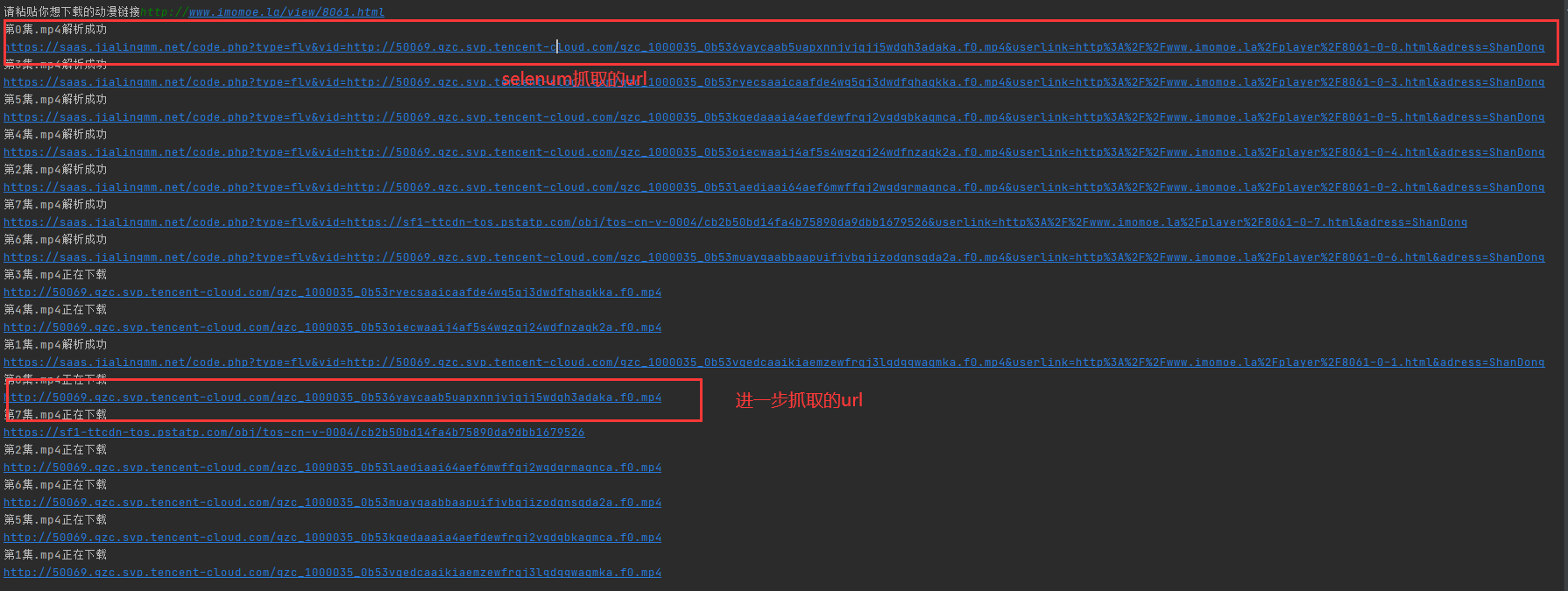

new_url = input("请粘贴你想下载的动漫链接")

headers = {'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/86.0.4240.75 Safari/537.36'}

response = requests.get(url=new_url, headers=headers).text

tree = etree.HTML(response)

li_list = tree.xpath("//div[@id='play_0']/ul/li")

dic ={}

for li in li_list:

url = "http://www.imomoe.ai" + li.xpath("a/@href")[0]

name = li.xpath("a/@title")[0]

dic[name]=url

return dic

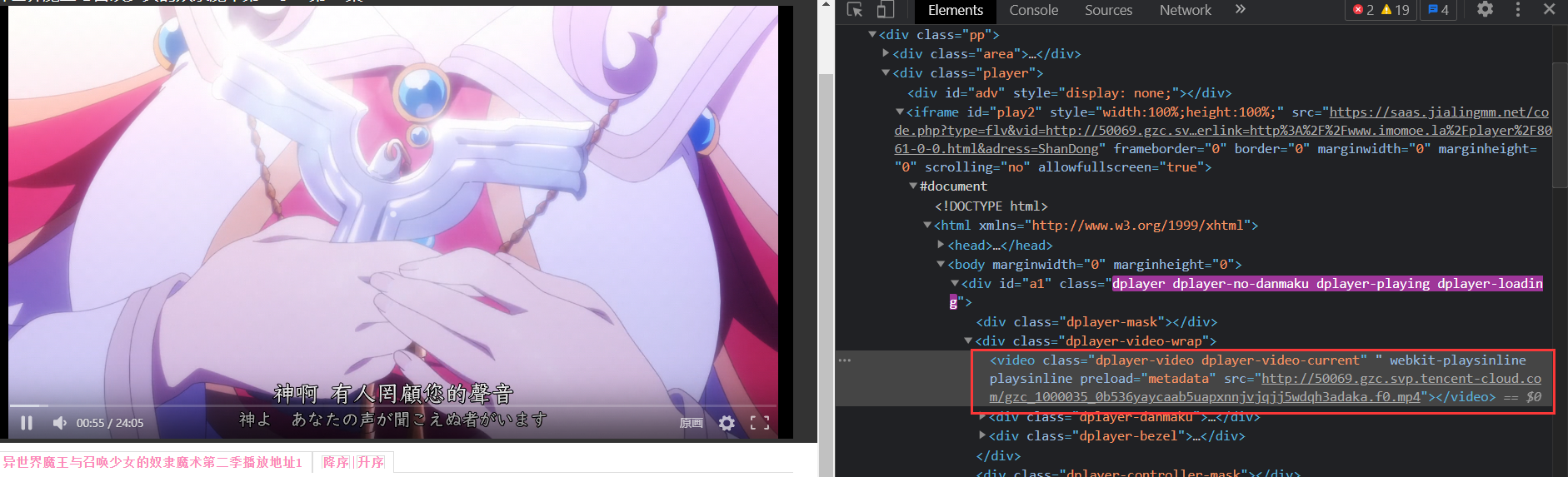

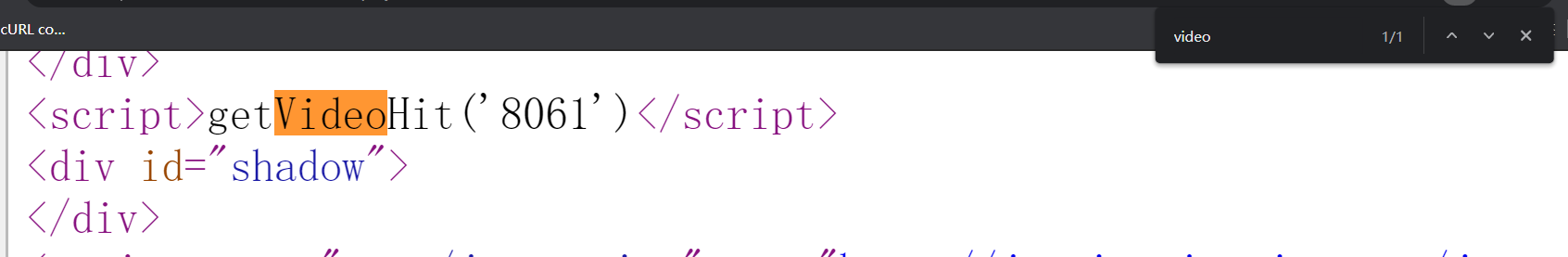

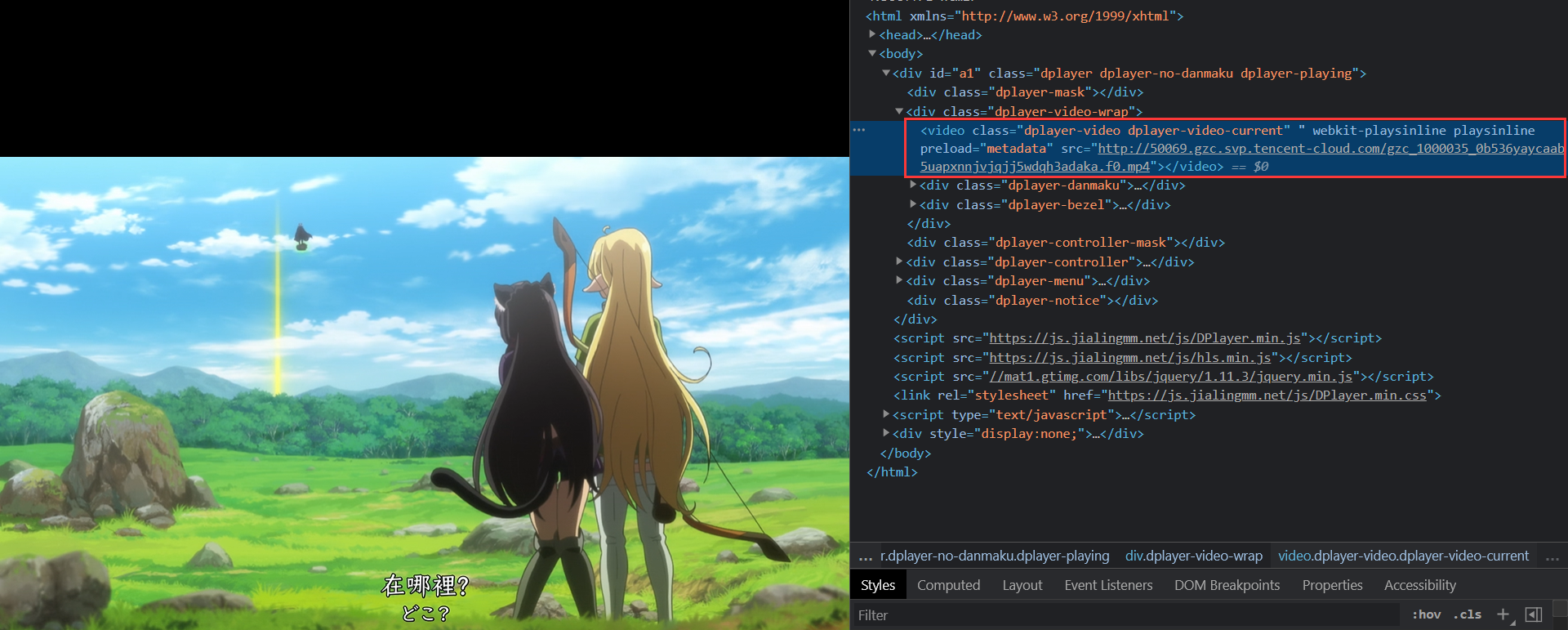

三、进入详情页查看

打开开发者工具(F12)点击视频我们可以看到video标签中src属性为视频地址

查看网页源码发现并没有找到我们想要video标签中的属性,我们用selenum获取网页源码

def data(url):

bro = webdriver.Chrome(executable_path="./chromedriver")

r = bro.get(url)

page_data = bro.page_source

bro.quit()

tree1 = etree.HTML(page_data)

video= tree1.xpath('//div[@class="player"]/iframe/@src')[0]

name = "第"+video.split(".")[-2].split("-")[-1]+"集.mp4"

x = threading.Thread(target=videos, args=(name, video,))

x.start()

print(name + "解析成功")

四、线程创建

通过selenum提取url需要依次提取,这简直太费时间了,所以我们创建线程进行同步提取来节省时间

def data_parse(dic):

tasks=[]

urls=list(dic.values())

for url in urls:

x=threading.Thread(target=data,args=(url,))

tasks.append(x)

for task in tasks:

task.start()

sleep(1)

通过selenum提取的url跟我们刚才所看到视频url并不相同,不过跳转到我们selenum抓取的url在重复抓取一下就能找到视频url

def videos(name,url):

bro= webdriver.Chrome(executable_path="./chromedriver.exe")

bro.get(url)

mp4_data = bro.page_source

bro.quit()

tree2 = etree.HTML(mp4_data)

video= tree2.xpath("//*[@id='a1']/div[2]/video/@src")[0]

x = threading.Thread(target=download, args=(name, video,))

x.start()

print(name+"正在下载")

# print(video)

五、视频下载

def download(name,url):

headers={"User-Agent":UserAgent().random}

response=requests.get(url=url,headers=headers).content

with open(name,"wb")as fp:

fp.write(response)

print(name+"下载完成")

代码

import requests

import threading

from fake_useragent import UserAgent

from selenium import webdriver

from lxml import etree

from time import sleep

# from selenium.webdriver.chrome.options import Options

# chrome_options = Options()

# chrome_options.add_argument('--headless')

# chrome_options.add_argument('--disable-gpu')

def url_parse():

new_url = input("请粘贴你想下载的动漫链接")

headers = {'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/86.0.4240.75 Safari/537.36'}

response = requests.get(url=new_url, headers=headers).text

tree = etree.HTML(response)

li_list = tree.xpath("//div[@id='play_0']/ul/li")

dic ={}

for li in li_list:

url = "http://www.imomoe.ai" + li.xpath("a/@href")[0]

name = li.xpath("a/@title")[0]

dic[name]=url

return dic

def data_parse(dic):

tasks=[]

urls=list(dic.values())

for url in urls:

x=threading.Thread(target=data,args=(url,))

tasks.append(x)

for task in tasks:

task.start()

sleep(1)

def data(url):

bro = webdriver.Chrome(executable_path="./chromedriver")

r = bro.get(url)

page_data = bro.page_source

bro.quit()

tree1 = etree.HTML(page_data)

video= tree1.xpath('//div[@class="player"]/iframe/@src')[0]

name = "第"+video.split(".")[-2].split("-")[-1]+"集.mp4"

x = threading.Thread(target=videos, args=(name, video,))

x.start()

print(name + "解析成功")

# print(video)

def videos(name,url):

bro= webdriver.Chrome(executable_path="./chromedriver.exe")

bro.get(url)

mp4_data = bro.page_source

bro.quit()

tree2 = etree.HTML(mp4_data)

video= tree2.xpath("//*[@id='a1']/div[2]/video/@src")[0]

x = threading.Thread(target=download, args=(name, video,))

x.start()

print(name+"正在下载")

# print(video)

def download(name,url):

headers={"User-Agent":UserAgent().random}

response=requests.get(url=url,headers=headers).content

with open(name,"wb")as fp:

fp.write(response)

print(name+"下载完成")

def main():

dic = url_parse()

data_parse(dic)

if __name__ == '__main__':

main()

1万+

1万+

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?