SURF C++代码详细阅读(一)

SURF算法概述

- SURF是尺度不变特征变换算法(SIFT)的加速版,比SIFT要快好几倍(≈4),SURF算法用来完成特征点检测并构造相应描述符,可以应用于物体识别、图像拼接、3D重构等领域,由于其速度快、鲁棒性高,尤其为实时应用(目标检测、目标跟踪、视频拼接、3D重建)提供了可能。

特征点和描述符检测效果如下:

可以看到检测到的特征点和相应描述符

蓝色代表亮背景的暗点,红色代表暗背景的亮点 ,线段方向为主方向

关于SURF的原理有很多,在此不作说明。

代码阅读

源码来自opensurf和surf图像拼接开源项目并稍作修改:

http://opensurf1.googlecode.com/files/OpenSURFcpp.zip

https://github.com/ziqiguo/CS205-ImageStitching/tree/master/surf_sequential

surfDetDes

1.实现main函数中的方法 需要调用即surflib 中的surfDetDes函数,surfDetDes函数为surfDet和surfDes函数结合,检测特征点并构造描述符。

//! Library function builds vector of described interest points

inline void surfDetDes(IplImage *img, /* image to find Ipoints in */

std::vector<Ipoint> &ipts, /* reference to vector of Ipoints */

int single_mem_cpy,

bool upright = false, /* run in rotation invariant mode? */

int octaves = OCTAVES, /* number of octaves to calculate */

int intervals = INTERVALS, /* number of intervals per octave */

int init_sample = INIT_SAMPLE, /* initial sampling step */

float thres = THRES /* blob response threshold */)

{

// Create integral-image representation of the image

IplImage *int_img = Integral(img);

// Create Fast Hessian Object

FastHessian fh(int_img, ipts, single_mem_cpy, octaves, intervals, init_sample, thres);

// Extract interest points and store in vector ipts

clock_t start = clock();

fh.getIpoints();

clock_t end = clock();

std::cout<< "Extract keypoints took: " << float(end - start) / CLOCKS_PER_SEC << " seconds" << std::endl;

// Create Surf Descriptor Object

Surf des(int_img, ipts);

// Extract the descriptors for the ipts

start = clock();

des.getDescriptors(upright);

end = clock();

std::cout<< "Extract descriptor took: " << float(end - start) / CLOCKS_PER_SEC << " seconds" << std::endl;

// Deallocate the integral image

cvReleaseImage(&int_img);

}

以 inline 修饰的函数叫做内联函数,编译时C++编译器会在调用内联函数的地方展开,没有函数压栈的开销,提升程序运行的效率。

在之后金字塔构造时也会用到内联函数

主要包括以下模块:

- 创建积分图像

IplImage *int_img = Integral(img); - 创建快速海森矩阵

FastHessian fh(int_img, ipts, single_mem_cpy, octaves, intervals, init_sample, thres); - 提取特征点

fh.getIpoints(); - 创建描述符对象并得到描述符

des.getDescriptors(upright);

并通过clock()时钟函数以 float(end - start) / CLOCKS_PER_SEC返回每一步用时。

1.创建积分图像

目的:加速运算。每个像素的灰度都是它与坐标原点(0,0)形成的对角线的矩形内的所有像素的灰度值之和。在计算某个矩阵框内的像素灰度值之和时,就可以很快得出结果。

//! Computes the integral image of image img. Assumes source image to be a

//! 32-bit floating point. Returns IplImage of 32-bit float form.

/* 计算图像的积分图 */

IplImage *Integral(IplImage *source)

{

// convert the image to single channel 32f

IplImage *img = getGray(source); //灰度图

IplImage *int_img = cvCreateImage(cvGetSize(img), IPL_DEPTH_32F, 1);// 建一个宽高与原图一致的单精度浮点数(32位)单通道图像

// set up variables for data access

int height = img->height;

int width = img->width;

int step = img->widthStep/sizeof(float); //存储一行像素需要的字节数(4的倍数)/每一个像素的字节数4 = 每一行的像素数

float *data = (float *) img->imageData; //图像像素的首指针

float *i_data = (float *) int_img->imageData;

// first row only

// 第一行的情况

float rs = 0.0f;

for(int j=0; j<width; j++)

{

rs += data[j];

i_data[j] = rs;

}

// remaining cells are sum above and to the left

//i++需要一个暂时变量,然后将i加1后,返回的是暂时变量。而++i就是自增后返回i。在空间损耗上,i++要略高于++i,因此,在不影响代码逻辑的前提下,要尽量使用++i。

//其他行

for(int i=1; i<height; ++i)

{

rs = 0.0f;

for(int j=0; j<width; ++j) //后续每个像素块把上方左方求和 上方和即积分图上一行像素值 左方和遍历一次

{

rs += data[i*step+j];

i_data[i*step+j] = rs + i_data[(i-1)*step+j];

}

}

// release the gray image

//cvReleaseImage后,会设置此时图片占用的内存数据为NULL,但是该内存地址不会被释放,当有新图片使用cvLoadImage读时,会放在这个被置位NULL的内存地址处

cvReleaseImage(&img);

// return the integral image

return int_img;

}

widthStep:表示存储一行像素需要的字节数。 在cv::Iplimage属性中,widthStep必须是4的倍数,实现字节对齐,有利于提高运算速度。

循环中的++i: i++需要一个暂时变量,然后将i加1后,返回的是暂时变量。而++i就是自增后返回i。在空间损耗上,i++要略高于++i,因此,在不影响代码逻辑的前提下,要尽量使用++i。

cvReleaseImage:设置此时图片占用的内存数据为NULL,但是该内存地址不会被释放,当有新图片使用cvLoadImage读时,会放在这个被置位NULL的内存地址处。

补 程序四模块:初始化、运算、控制、释放。

创建灰度图(单精度浮点数(32位)单通道),首先将第一行相加,后续每个像素块把上方左方求和 上方和即积分图上一行像素值 左方和遍历一次。

积分函数会在之后的海森矩阵、盒滤波器计算中用到。

2.创建快速海森矩阵

FastHessian类 主要包含:

- 构造函数

- 获取特征点 getIpoints() 是最主要的函数,其需要用到其他函数包括:

构建并计算海森矩阵行列式响应图(DoH) void buildResponseMap(); buildResponseLayer(ResponseLayer *r)

判断极值 isExtremum

插值函数(改编SIFT)interpolateExtremum

class FastHessian {

public:

//! Constructor without image

FastHessian(std::vector<Ipoint> &ipts,

const int single_mem_cpy,

const int octaves = OCTAVES,

const int intervals = INTERVALS,

const int init_sample = INIT_SAMPLE,

const float thres = THRES);

//! Constructor with image

FastHessian(IplImage *img,

std::vector<Ipoint> &ipts,

const int single_mem_cpy,

const int octaves = OCTAVES,

const int intervals = INTERVALS,

const int init_sample = INIT_SAMPLE,

const float thres = THRES);

//! Destructor

~FastHessian();

//! Save the parameters

void saveParameters(const int octaves,

const int intervals,

const int init_sample,

const float thres,

const int single_mem_cpy);

//! Set or re-set the integral image source

void setIntImage(IplImage *img);

//! Find the image features and write into vector of features

void getIpoints();

private:

//---------------- Private Functions -----------------//

//! Build map of DoH responses

void buildResponseMap();

//! Calculate DoH responses for supplied layer

void buildResponseLayer(ResponseLayer *r);

//! 3x3x3 Extrema test

int isExtremum(int r, int c, ResponseLayer *t, ResponseLayer *m, ResponseLayer *b);

//! Interpolation functions - adapted from Lowe's SIFT implementation

void interpolateExtremum(int r, int c, ResponseLayer *t, ResponseLayer *m, ResponseLayer *b);//, Ipoint* ipts_tmp, int* changed);

void interpolateStep(int r, int c, ResponseLayer *t, ResponseLayer *m, ResponseLayer *b,

double* xi, double* xr, double* xc );

double** deriv3D(int r, int c, ResponseLayer *t, ResponseLayer *m, ResponseLayer *b);

double** hessian3D(int r, int c, ResponseLayer *t, ResponseLayer *m, ResponseLayer *b);

//---------------- Private Variables -----------------//

//! Pointer to the integral Image, and its attributes

IplImage *img;

int i_width, i_height;

int single_mem_cpy;

//! Reference to vector of features passed from outside

std::vector<Ipoint> &ipts;

//! Response stack of determinant of hessian values

std::vector<ResponseLayer *> responseMap;

//! Number of Octaves

int octaves;

//! Number of Intervals per octave

int intervals;

//! Initial sampling step for Ipoint detection

int init_sample;

//! Threshold value for blob resonses

float thresh;

};

2.1构造函数

构造一个关于点向量或图像的FastHessian类 都要设置一次参数saveParameters

图像还要设置初始图像

注意img是积分图像 不然会影响后边计算DoH的逻辑理解

//! Constructor without image

FastHessian::FastHessian(std::vector<Ipoint> &ipts, const int single_mem_cpy,

const int octaves, const int intervals, const int init_sample,

const float thresh)

: ipts(ipts), i_width(0), i_height(0)

{

// Save parameter set

saveParameters(octaves, intervals, init_sample, thresh, single_mem_cpy);

}

//-------------------------------------------------------

//! Constructor with image 注意初始化图像是积分图像

FastHessian::FastHessian(IplImage *img, std::vector<Ipoint> &ipts, const int single_mem_cpy,

const int octaves, const int intervals, const int init_sample,

const float thresh)

: ipts(ipts), i_width(0), i_height(0)

{

// Save parameter set

saveParameters(octaves, intervals, init_sample, thresh, single_mem_cpy);

// Set the current image

setIntImage(img);

}

每次设置参数(小写) 超出范围则使用全局静态常量(大写)

默认构造金字塔 层数5 每一层间隔(DoH响应图)数4 采样因子2 阈值0.0004

//! Save the parameters

//! 每次设置参数 超出范围则使用全局静态常量

void FastHessian::saveParameters(const int octaves, const int intervals,

const int init_sample, const float thresh, const int single_mem_cpy)

{

// Initialise variables with bounds-checked values

this->octaves =

(octaves > 0 && octaves <= 4 ? octaves : OCTAVES);

this->intervals =

(intervals > 0 && intervals <= 4 ? intervals : INTERVALS);

this->init_sample =

(init_sample > 0 && init_sample <= 6 ? init_sample : INIT_SAMPLE);

this->thresh = (thresh >= 0 ? thresh : THRES);

this->single_mem_cpy = single_mem_cpy;

}

2.2 获取特征点

//! Find the image features and write into vector of features

void FastHessian::getIpoints()

{

// filter index map

static const int filter_map [OCTAVES][INTERVALS] = {{0,1,2,3}, {1,3,4,5}, {3,5,6,7}, {5,7,8,9}, {7,9,10,11}};

// Clear the vector of exisiting ipts

ipts.clear();

std::clock_t start;

start = std::clock();

// Build the response map

buildResponseMap();

std::cout << "buildResponseMap took: " << (std::clock() - start) / (double)(CLOCKS_PER_SEC / 1000) << " ms" << std::endl;

start = std::clock();

// Get the response layers 判断是否是极值点

ResponseLayer *b, *m, *t;

for (int o = 0; o < octaves; ++o)

{

for (int i = 0; i <= 1; ++i)

{

b = responseMap.at(filter_map[o][i]);

m = responseMap.at(filter_map[o][i+1]);

t = responseMap.at(filter_map[o][i+2]);

// Ipoint ipts_tmp[t->height][t->width];

// int changed[t->height][t->width];

// loop over middle response layer at density of the most

// sparse layer (always top), to find maxima across scale and space

for (int r = 0; r < t->height; ++r)

{

for (int c = 0; c < t->width; ++c)

{

if (isExtremum(r, c, t, m, b))

{

interpolateExtremum(r, c, t, m, b);//, &ipts_tmp[r][c], &changed[r][c]);

}

}

}

// for (int r = 0; r < t->height; ++r)

// {

// for (int c = 0; c < t->width; ++c)

// {

// if (changed[r][c] == 1)

// {

// ipts.push_back(ipts_tmp[r][c]);

// }

// }

// }

}

}

std::cout << "Ipoint vector took: " << (std::clock() - start) / (double)(CLOCKS_PER_SEC / 1000) << " ms" << std::endl;

}

其过程为:

初始化过滤后的图像(DoH)static const int filter_map [OCTAVES][INTERVALS]

创建并计算DoH响应图 buildResponseMap();

在临近的三幅DoH图(ResponseLayer *b, *m, *t)中寻找极值点并进行插值

内容多,分篇写。这一篇写到:计算海森矩阵行列式响应图(DoH)

2.2.1 创建DoH响应图 buildResponseMap()

//! Build map of DoH responses

void FastHessian::buildResponseMap()

{

// Calculate responses for the first 4 octaves:

// Oct1: 9, 15, 21, 27

// Oct2: 15, 27, 39, 51

// Oct3: 27, 51, 75, 99

// Oct4: 51, 99, 147,195

// Oct5: 99, 195,291,387

// Deallocate memory and clear any existing response layers

//删除分配内存和响应层

for(unsigned int i = 0; i < responseMap.size(); ++i)

delete responseMap[i];

responseMap.clear();

// Get image attributes

int w = (i_width / init_sample);

int h = (i_height / init_sample);

int s = (init_sample);//采样因子

int rsize;

// Calculate approximated determinant of hessian values

//计算hessian值的近似行列式

//参数为 宽、高、采样因子、盒滤波器大小

if (octaves >= 1)

{

responseMap.push_back(new ResponseLayer(w, h, s, 9));

responseMap.push_back(new ResponseLayer(w, h, s, 15));

responseMap.push_back(new ResponseLayer(w, h, s, 21));

responseMap.push_back(new ResponseLayer(w, h, s, 27));

rsize = 4;

}

if (octaves >= 2)

{

responseMap.push_back(new ResponseLayer(w/2, h/2, s*2, 39));

responseMap.push_back(new ResponseLayer(w/2, h/2, s*2, 51));

rsize = 6;

}

if (octaves >= 3)

{

responseMap.push_back(new ResponseLayer(w/4, h/4, s*4, 75));

responseMap.push_back(new ResponseLayer(w/4, h/4, s*4, 99));

rsize = 8;

}

if (octaves >= 4)

{

responseMap.push_back(new ResponseLayer(w/8, h/8, s*8, 147));

responseMap.push_back(new ResponseLayer(w/8, h/8, s*8, 195));

rsize = 10;

}

if (octaves >= 5)

{

responseMap.push_back(new ResponseLayer(w/16, h/16, s*16, 291));

responseMap.push_back(new ResponseLayer(w/16, h/16, s*16, 387));

rsize = 12;

}

for (unsigned int i = 0; i < responseMap.size(); ++i)

{

buildResponseLayer(responseMap[i]);

}

}

- 五层金字塔盒滤波器大小在注释中,用过ResponseLayer创建12个不同大小的盒滤波器,利用尺度(盒滤波器大小)的重复性,这12个盒滤波器就可以代表5层Octaves每层4个DoH共5*4=20个盒滤波器响应层。

接着每一个盒滤波器响应层 通过函数buildResponseLayer(responseMap[i]);得到 - 这里responseMap是一个响应层数组,每个元素为ResponseLayer

std::vector<ResponseLayer *> responseMap;

ResponseLayer结构

ResponseLayer(int width, int height, int step, int filter)

{

assert(width > 0 && height > 0);

this->width = width;

this->height = height;

this->step = step;//采样因子

this->filter = filter;//盒滤波的大小

responses = new float[width * height];//存放每个大小的filer下的图像像素点的海森矩阵的行列式值

//开辟一个存放float数的空间,返回一个指向该存储空间的地址

laplacian = new unsigned char[width * height];//存放laplacian标识符,判断是暗背景上的亮斑还是亮背景的暗斑

//开辟一个存放unsigned char的空间,返回一个指向该存储空间的地址

memset(responses,0,sizeof(float)*width*height);//申请的内存进行初始化

memset(laplacian,0,sizeof(unsigned char)*width*height);

}

2.2.2 计算DoH响应层

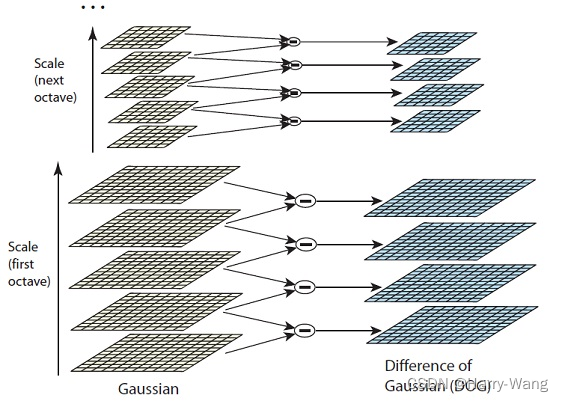

- SURF的金字塔和SIFT大致结构一样,主要区别在:且用盒滤波器直接近似了高斯滤波模糊和拉普拉斯二阶导求极值,所以盒滤波器过滤图,即Hessian矩阵行列式的图像(DoH),就相当于SIFT算法中的高斯差分图像(DoG),且一副原图生产一副DoH(用积分图计算),而SIFT金字塔通过不同尺度图像做差产生DoG。

附一张SIFT金字塔做对比

- DoH的计算过程

//! Calculate DoH responses for supplied layer

void FastHessian::buildResponseLayer(ResponseLayer *rl)

{

float *responses = rl->responses; // response storage

unsigned char *laplacian = rl->laplacian; // laplacian sign storage

int step = rl->step; // step size for this filter

int b = (rl->filter - 1) / 2 + 1; // border for this filter

int l = rl->filter / 3; // lobe for this filter (filter size / 3)

int w = rl->filter; // filter size

float inverse_area = 1.f/(w*w); // normalisation factor

float Dxx, Dyy, Dxy;

int height = rl->height;

int width = rl->width;

int img_step = img->widthStep/sizeof(float); //每一行的像素个数

int img_height = img->height;

int img_width = img->width;

float *img_data = (float *) img->imageData;

int r, c;

{

for(int ar = 0; ar < height; ++ar)

{

for(int ac = 0; ac < width; ++ac)

{

// get the image coordinates

r = ar * step;//这里的step为盒滤波器的采样因子(构造函数的第三参数),不是图像每一行的像素个数

c = ac * step;

// Compute response components

// 利用积分图计算海森矩阵的行列式近似值

//参数:图像首地址 r1 c1 r2与r1差 c2与c1差 每行像素个数 高 宽

Dxx = BoxIntegral_acc(img_data, r - l + 1, c - b, 2*l - 1, w, img_step, img_height, img_width)

- BoxIntegral_acc(img_data, r - l + 1, c - l / 2, 2*l - 1, l, img_step, img_height, img_width)*3;

Dyy = BoxIntegral_acc(img_data, r - b, c - l + 1, w, 2*l - 1, img_step, img_height, img_width)

- BoxIntegral_acc(img_data, r - l / 2, c - l + 1, l, 2*l - 1, img_step, img_height, img_width)*3;

Dxy = BoxIntegral_acc(img_data, r - l, c + 1, l, l, img_step, img_height, img_width)

+ BoxIntegral_acc(img_data, r + 1, c - l, l, l, img_step, img_height, img_width)

- BoxIntegral_acc(img_data, r - l, c - l, l, l, img_step, img_height, img_width)

- BoxIntegral_acc(img_data, r + 1, c + 1, l, l, img_step, img_height, img_width);

// Normalise the filter responses with respect to their size

Dxx *= inverse_area;

Dyy *= inverse_area;

Dxy *= inverse_area;

responses[ar*width + ac] = (Dxx * Dyy - 0.81 * Dxy * Dxy);//海森矩阵行列式 0.81为近似(积分图+盒滤波近似代替高斯平滑和二阶导)的修正

laplacian[ar*width + ac] = (Dxx + Dyy >= 0 ? 1 : 0);

//求得的 近似Hessian矩阵行列式的图像(DoH) 就相当于 SIFT算法中的高斯差分图像(DoG)

}

}

}

}

注意step与img_step的区别

r = ar * step; //这里的step为盒滤波器的采样因子(构造函数的第三参数),不是图像每一行的像素个数

- 参数含义 b:滤波器中心离边缘的距离 ;l:滤波器大小/3 ; w: 滤波器中心离边缘的距离

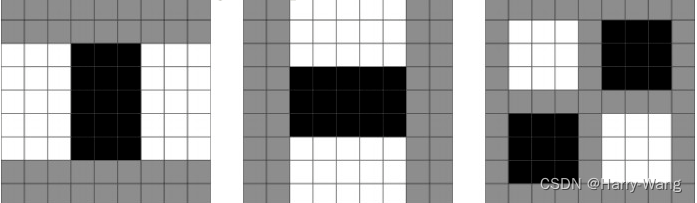

这里举例大小为9的盒滤波器,图像中每一点的Dxx,Dyy,Dxy(从左到后)分别由 以下滤波器形状与原图像素卷积后形成,滤波器中白色和黑色总和区域系数为+1,黑色区域为-3,灰色区域为0

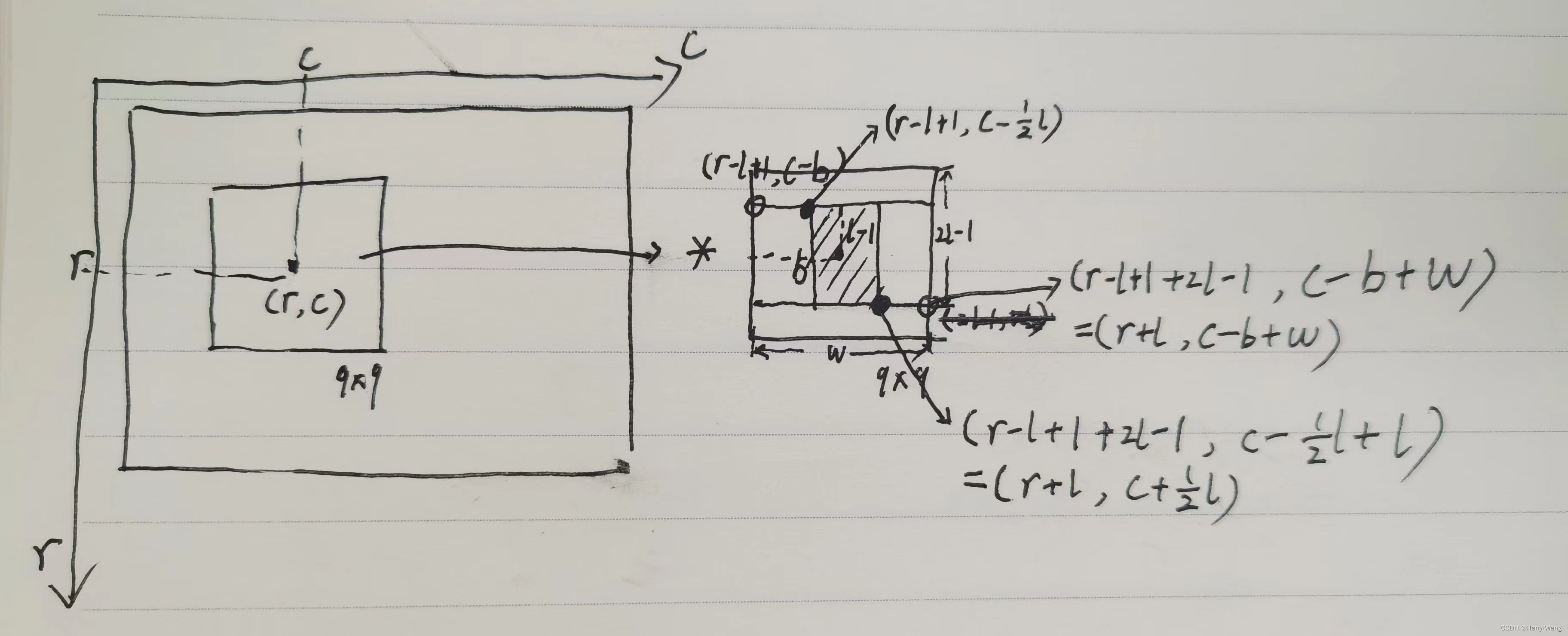

- 按其中Dxx计算举例如下:

Dxx = BoxIntegral_acc(img_data, r - l + 1, c - b, 2*l - 1, w, img_step, img_height, img_width)

- BoxIntegral_acc(img_data, r - l + 1, c - l / 2, 2*l - 1, l, img_step, img_height, img_width)*3;

- BoxIntegral_acc参数:图像首地址 r1 c1 r2与r1差 c2与c1差 每行像素个数 高 宽

- 将图像任意一点(r,c)的Dxx卷积计算过程如下,:

边缘时积分图像会取图像的边缘,见BoxIntegral_acc代码

边缘时积分图像会取图像的边缘,见BoxIntegral_acc代码

注意这里的img_data为积分图像的首地址,在构造FastHessian时的属性img即为积分图像。

inline float BoxIntegral_acc(float *img_data, int row, int col, int rows, int cols, int step, int height, int width)

{

// The subtraction by one for row/col is because row/col is inclusive.

int r1 = std::min(row, height) - 1;

int c1 = std::min(col, width) - 1;

int r2 = std::min(row + rows, height) - 1;

int c2 = std::min(col + cols, width) - 1;

float A(0.0f), B(0.0f), C(0.0f), D(0.0f);

if (r1 >= 0 && c1 >= 0) A = img_data[r1 * step + c1];

if (r1 >= 0 && c2 >= 0) B = img_data[r1 * step + c2];

if (r2 >= 0 && c1 >= 0) C = img_data[r2 * step + c1];

if (r2 >= 0 && c2 >= 0) D = img_data[r2 * step + c2];

return std::max(0.f, A - B - C + D);

}

接着通过公式 Dxx * Dyy - 0.81 * Dxy * Dxy将近似(积分图+盒滤波近似代替高斯平滑和拉普拉斯二阶导)作修正

得到每一像素点的海森矩阵行列式近似值,组成海森矩阵响应层DoH。

未完待续

2511

2511

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?