Outline

PyTorch

Training & Testing Neural Networks in PyTorch

$\text{Training} \rightleftharpoons \text{Validation} \longrightarrow \text{Testing}$

step 1: Dataset & DataLoader

Load the data.

- Dataset: stores data samples and their expected values

- DataLoader: groups data into batches and enables multiprocessing

dataset = MyDataset(file)

dataloader = DataLoader(dataset, batch_size, shuffle=True)

Set shuffle=True for training and shuffle=False for testing.
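A minimal sketch of how the two pieces fit together. The notes do not show how `MyDataset` is implemented, so the version here is hypothetical: it wraps in-memory tensors instead of reading a file, and the data itself is made up.

```python
import torch
from torch.utils.data import Dataset, DataLoader


class MyDataset(Dataset):
    """Hypothetical dataset: wraps in-memory tensors instead of reading a file."""

    def __init__(self, features, targets):
        self.features = features
        self.targets = targets

    def __getitem__(self, index):
        # Return one (sample, expected value) pair
        return self.features[index], self.targets[index]

    def __len__(self):
        # Number of samples in the dataset
        return len(self.features)


# Made-up data: 10 samples with 4 features each, one target per sample
dataset = MyDataset(torch.randn(10, 4), torch.randn(10))
train_loader = DataLoader(dataset, batch_size=5, shuffle=True)   # training
test_loader = DataLoader(dataset, batch_size=5, shuffle=False)   # testing

for batch_x, batch_y in train_loader:
    print(batch_x.shape, batch_y.shape)
```

Each iteration yields one batch, here 5 samples at a time. Shuffling only changes the order in which samples are drawn, not the batch shapes.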

Tensors: high-dimensional matrices (arrays). All data that is read in is represented as arrays.

2.1 Creating Tensors

- Directly from data (list or numpy.ndarray)

x = torch.tensor([[1, -1], [-1, 1]])

x = torch.from_numpy(np.array([[1, -1], [-1, 1]]))

tensor([[ 1, -1],
        [-1,  1]])

- Tensor of constant zeros & ones

x = torch.zeros([2,2]) # shape

x = torch.ones([1, 2, 5])

tensor([[0., 0.],
        [0., 0.]])

tensor([[[1., 1., 1., 1., 1.],
         [1., 1., 1., 1., 1.]]])

Common Operations

Common arithmetic functions are supported, such as:

- Addition: z = x + y

- Subtraction: z = x - y

- Power: y = x.pow(2)

- Summation: y = x.sum()

- Mean: y = x.mean()
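The operations listed above can be tried on a small tensor. This is only an illustrative sketch; the values of `x` and `y` are made up.

```python
import torch

x = torch.tensor([[1., 2.], [3., 4.]])
y = torch.ones([2, 2])

print(x + y)          # element-wise addition
print(x - y)          # element-wise subtraction
print(x.pow(2))       # element-wise square
print(x.sum())        # sum over all elements (a 0-dimensional tensor)
print(x.mean())       # mean over all elements
print(x.sum(dim=0))   # sum along dimension 0, one value per column
```

Note that `sum()` and `mean()` reduce over every element unless a `dim` argument is given.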

2.2 Transpose
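The notes give no example for this operation, so here is a minimal sketch using `Tensor.transpose`, which swaps two specified dimensions. The shape `[2, 3]` is an arbitrary choice.

```python
import torch

x = torch.zeros([2, 3])
print(x.shape)           # torch.Size([2, 3])

x = x.transpose(0, 1)    # swap dimension 0 and dimension 1
print(x.shape)           # torch.Size([3, 2])
```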

2.3 Squeeze

– remove the specified dimension if its length is 1
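A small sketch of the behaviour, with an arbitrary shape chosen for illustration. Squeezing a dimension whose length is not 1 leaves the tensor unchanged.

```python
import torch

x = torch.zeros([1, 2, 3])

y = x.squeeze(0)    # dimension 0 has length 1, so it is removed
print(y.shape)      # torch.Size([2, 3])

z = x.squeeze(1)    # dimension 1 has length 2, so nothing changes
print(z.shape)      # torch.Size([1, 2, 3])
```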

2.4 Unsqueeze

– insert a new dimension of length 1 at the specified position

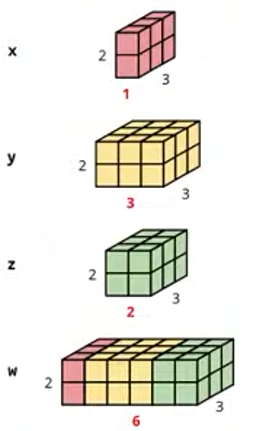
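In code, the same idea looks roughly like this. The shape `[2, 3]` is only an example.

```python
import torch

x = torch.zeros([2, 3])

y = x.unsqueeze(1)   # insert a new dimension at position 1
print(y.shape)       # torch.Size([2, 1, 3])

z = x.unsqueeze(0)   # insert a new dimension at the front
print(z.shape)       # torch.Size([1, 2, 3])
```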

2.4 Cat

– concatenate multiple tensors

x = torch.zeros([2, 1, 3])

y = torch.zeros([2, 3, 3])

z = torch.zeros([2, 2, 3])

w = torch.cat([x, y, z], dim=1)

w.shape

torch.Size([2, 6, 3])

2.5 Data Type

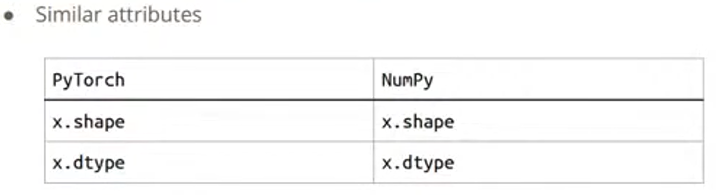
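A short sketch of how data types show up in practice, assuming PyTorch's default settings: Python floats become `torch.float32` and Python ints become `torch.int64`. The variable names are illustrative.

```python
import torch

a = torch.tensor([1.0, 2.0])   # Python floats -> torch.float32 by default
b = torch.tensor([1, 2])       # Python ints   -> torch.int64 by default
c = b.float()                  # convert to 32-bit floating point
d = a.long()                   # convert to 64-bit integer

print(a.dtype, b.dtype, c.dtype, d.dtype)
```

Mismatched data types are a common source of errors, for example when a loss function expects integer class labels but receives floats.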

2.6 PyTorch vs. NumPy
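The notes do not list the differences, so the sketch below only illustrates a few well-known correspondences: many attributes share a name, while some functions and keyword arguments differ (`dim` in PyTorch, `axis` in NumPy). The arrays are made up.

```python
import numpy as np
import torch

a = np.zeros((2, 1, 3))
t = torch.zeros([2, 1, 3])

# Same attribute names
print(a.shape, t.shape)
print(a.dtype, t.dtype)   # NumPy defaults to float64, PyTorch to float32

# Same idea, slightly different spelling
a_flat = a.reshape(2, 3)
t_flat = t.reshape(2, 3)              # t.view(2, 3) also works here

a_sq = np.squeeze(a, axis=1)
t_sq = t.squeeze(dim=1)

a_un = np.expand_dims(a_sq, axis=0)
t_un = t_sq.unsqueeze(0)

a_sum = a.sum(axis=0)
t_sum = t.sum(dim=0)
```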

2.7 Device

– Use .to() to move tensors to appropriate devices

--CPU

x = x.to('cpu')

--GPU

x = x.to('cuda')

2.7.1 Check whether your computer has an NVIDIA GPU

torch.cuda.is_available()

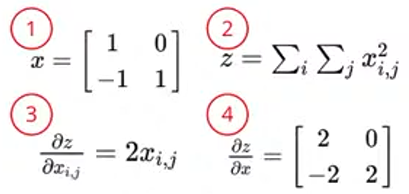
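A common way to combine the availability check with `.to()` is sketched below. The name `device` is just a convention, not something the notes prescribe.

```python
import torch

# Fall back to the CPU when no CUDA-capable GPU is available
device = 'cuda' if torch.cuda.is_available() else 'cpu'

x = torch.zeros([2, 2]).to(device)
print(x.device)
```

Writing the device choice once and reusing it keeps the same script runnable on machines with and without a GPU.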

2.8 Gradient Calculation

- x = torch.tensor([[1., 0.], [-1., 1.]], requires_grad=True)

- z = x.pow(2).sum()

- z.backward()

- x.grad

tensor([[ 2.,  0.],
        [-2.,  2.]])
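The result can be checked by hand: since z is the sum of all squared entries of x, the partial derivative of z with respect to each entry is twice that entry. A small sketch that confirms this numerically:

```python
import torch

x = torch.tensor([[1., 0.], [-1., 1.]], requires_grad=True)
z = x.pow(2).sum()   # z = sum of x_ij ** 2
z.backward()         # fills x.grad with dz/dx

# Analytically, dz/dx_ij = 2 * x_ij
expected = 2 * x.detach()
print(torch.equal(x.grad, expected))
```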

step 2: Define the neural network with torch

3.1 Network Layers – torch.nn

3.1.1 Linear layer (fully-connected layer)

>>> layer = torch.nn.Linear(32, 64)

>>> layer.weight.shape

torch.Size([64, 32])

>>> layer.bias.shape

torch.Size([64])
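The weight shape `[64, 32]` follows from how the layer computes its output: y = x Wᵀ + b. The sketch below applies the layer to a made-up batch of 8 inputs and compares the result with the manual computation.

```python
import torch

layer = torch.nn.Linear(32, 64)

x = torch.randn(8, 32)    # hypothetical batch: 8 samples, 32 features each
y = layer(x)
print(y.shape)            # torch.Size([8, 64])

# The same computation written out by hand
manual = x @ layer.weight.T + layer.bias
print(torch.allclose(y, manual, atol=1e-5))
```

Only the last dimension of the input has to match the layer's input size; leading dimensions such as the batch size pass through unchanged.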

3.1.2 Non-linear activation functions

- Sigmoid activation

nn.Sigmoid()

- ReLU activation

nn.ReLU()
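Both activations are applied element-wise. A minimal sketch on a made-up input:

```python
import torch
import torch.nn as nn

x = torch.tensor([-2., 0., 2.])

sigmoid_out = nn.Sigmoid()(x)   # squashes each value into (0, 1); sigmoid(0) = 0.5
relu_out = nn.ReLU()(x)         # replaces negative values with 0

print(sigmoid_out)
print(relu_out)                 # tensor([0., 0., 2.])
```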

3.2 Build your own neural network

import torch.nn as nn

class MyModel(nn.Module):
    # Initialize your model & define layers
    def __init__(self):
        super(MyModel, self).__init__()
        self.net = nn.Sequential(
            nn.Linear(10, 32),
            nn.Sigmoid(),
            nn.Linear(32, 1)
        )

    # Compute the output of your NN
    def forward(self, x):
        return self.net(x)

This is equivalent to:

import torch.nn as nn

class MyModel(nn.Module):
    def __init__(self):
        super(MyModel, self).__init__()
        self.layer1 = nn.Linear(10, 32)
        self.layer2 = nn.Sigmoid()
        self.layer3 = nn.Linear(32, 1)

    def forward(self, x):
        out = self.layer1(x)
        out = self.layer2(out)
        out = self.layer3(out)
        return out
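A short usage sketch for a model of this shape, assuming a made-up batch of 4 samples with 10 features each. Calling the model object runs `forward`.

```python
import torch
import torch.nn as nn


class MyModel(nn.Module):
    def __init__(self):
        super().__init__()
        self.net = nn.Sequential(
            nn.Linear(10, 32),
            nn.Sigmoid(),
            nn.Linear(32, 1),
        )

    def forward(self, x):
        return self.net(x)


model = MyModel()
x = torch.randn(4, 10)    # hypothetical batch of 4 samples
out = model(x)            # equivalent to model.forward(x), plus hooks
print(out.shape)          # torch.Size([4, 1])

# Trainable parameters: 10*32 + 32 + 32*1 + 1 = 385
n_params = sum(p.numel() for p in model.parameters())
print(n_params)
```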

step 3: Compute the loss function

Mean squared error (for regression tasks)

criterion = nn.MSELoss()

Cross Entropy (for classification tasks)

criterion = nn.CrossEntropyLoss()

loss = criterion(model_output, expected_value)
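The two criteria expect differently shaped inputs. The sketch below uses made-up predictions and targets: `nn.MSELoss` compares tensors of the same shape, while `nn.CrossEntropyLoss` takes raw scores of shape `[batch, classes]` and integer class indices.

```python
import torch
import torch.nn as nn

# Regression: prediction and target have the same shape
mse = nn.MSELoss()
pred = torch.tensor([2.0, 0.0])
target = torch.tensor([1.0, 2.0])
mse_loss = mse(pred, target)      # (1**2 + 2**2) / 2 = 2.5
print(mse_loss)

# Classification: raw scores (logits) and integer class labels
ce = nn.CrossEntropyLoss()
logits = torch.zeros(3, 4)        # 3 samples, 4 classes, all scores equal
labels = torch.tensor([0, 1, 3])  # dtype int64
ce_loss = ce(logits, labels)      # uniform prediction gives log(4)
print(ce_loss)
```

`nn.CrossEntropyLoss` applies the softmax internally, so the model should output raw scores rather than probabilities.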

step 4: Optimization Algorithm

– adjust the model parameters using gradients
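The notes do not name a specific optimizer, so the sketch below assumes plain stochastic gradient descent via `torch.optim.SGD`, with a made-up model, made-up data, and an arbitrary learning rate. One update consists of clearing old gradients, computing the loss, backpropagating, and stepping.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)

model = nn.Linear(10, 1)                  # stand-in model for illustration
criterion = nn.MSELoss()
optimizer = torch.optim.SGD(model.parameters(), lr=0.01)

x = torch.randn(16, 10)                   # made-up inputs
y = torch.randn(16, 1)                    # made-up targets

loss_before = criterion(model(x), y).item()

optimizer.zero_grad()                     # reset gradients from the previous step
loss = criterion(model(x), y)             # forward pass and loss
loss.backward()                           # compute gradients
optimizer.step()                          # update parameters using the gradients

loss_after = criterion(model(x), y).item()
print(loss_before, loss_after)
```

Forgetting `zero_grad()` is a frequent mistake, because PyTorch accumulates gradients across calls to `backward()`.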

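step 5: Putting it all together (Entire Procedure)

Define the model and move it to the device used for training (the CPU here), then define the loss function and the optimizer.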
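The whole procedure can be sketched as follows. Everything specific here is an assumption for illustration: the synthetic regression data, the use of `TensorDataset` in place of a custom dataset, the SGD optimizer, the learning rate, and the number of epochs.

```python
import torch
import torch.nn as nn
from torch.utils.data import DataLoader, TensorDataset

torch.manual_seed(0)
device = 'cuda' if torch.cuda.is_available() else 'cpu'

# Made-up regression task: the target is the sum of the 10 input features
features = torch.randn(200, 10)
targets = features.sum(dim=1, keepdim=True)
train_set = TensorDataset(features[:160], targets[:160])
valid_set = TensorDataset(features[160:], targets[160:])
train_loader = DataLoader(train_set, batch_size=16, shuffle=True)
valid_loader = DataLoader(valid_set, batch_size=16, shuffle=False)

# Model, loss function, optimizer
model = nn.Sequential(nn.Linear(10, 32), nn.Sigmoid(), nn.Linear(32, 1)).to(device)
criterion = nn.MSELoss()
optimizer = torch.optim.SGD(model.parameters(), lr=0.02)


def evaluate(loader):
    """Average loss over a loader, without computing gradients."""
    model.eval()                          # switch to evaluation mode
    total = 0.0
    with torch.no_grad():                 # no gradient tracking needed
        for x, y in loader:
            x, y = x.to(device), y.to(device)
            total += criterion(model(x), y).item() * len(x)
    return total / len(loader.dataset)


initial_valid_loss = evaluate(valid_loader)

for epoch in range(30):
    model.train()                         # switch to training mode
    for x, y in train_loader:
        x, y = x.to(device), y.to(device)
        optimizer.zero_grad()             # clear old gradients
        loss = criterion(model(x), y)     # forward pass and loss
        loss.backward()                   # backpropagation
        optimizer.step()                  # parameter update
    valid_loss = evaluate(valid_loader)   # validation after each epoch

print(initial_valid_loss, valid_loss)
```

Testing follows the same pattern as `evaluate`: put the model in evaluation mode, disable gradient tracking, and run the model over a loader created with `shuffle=False`.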
