Using an Appropriate Scale to Pick Hyperparameters

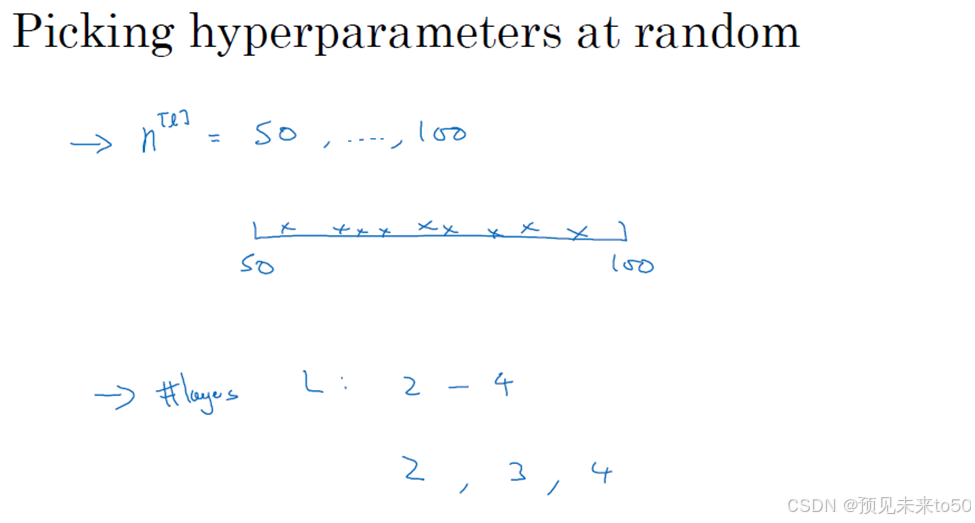

In the last video, you saw how sampling at random over the range of hyperparameters can allow you to search the space of hyperparameters more efficiently. But it turns out that sampling at random doesn't mean sampling uniformly at random over the range of valid values. Instead, it's important to pick the appropriate scale on which to explore the hyperparameters. In this video, I want to show you how to do that.

Let's say that you're trying to choose the number of hidden units, n[l], for a given layer l, and that you think a good range of values is somewhere from 50 to 100. In that case, if you look at the number line from 50 to 100, picking some values at random within this number line is a pretty sensible way to search for this particular hyperparameter. Or if you're trying to decide on the number of layers in your neural network, which we're calling capital L, maybe you think the total number of layers should be somewhere between 2 and 4. Then sampling uniformly at random among 2, 3 and 4 might be reasonable. Or even using a grid search, where you explicitly evaluate the values 2, 3 and 4, might be reasonable.

So these were a couple of examples where sampling uniformly at random over the range you're contemplating might be a reasonable thing to do. But this is not true for all hyperparameters.
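As a rough illustration of the two cases above, here is a minimal Python sketch of uniform sampling on a linear scale. The helper names `sample_hidden_units` and `sample_num_layers` are hypothetical and not from the lecture, and the ranges are simply the ones quoted in the text.

```python
import random

random.seed(0)  # fixed seed so the sketch is reproducible

# Hypothetical helpers; the ranges are the ones quoted in the text.
def sample_hidden_units(low=50, high=100):
    """Draw an integer number of hidden units uniformly from [low, high]."""
    return random.randint(low, high)  # randint includes both endpoints

def sample_num_layers(low=2, high=4):
    """Draw the number of layers uniformly from {low, ..., high}."""
    return random.randint(low, high)

# Five random trials, each a (hidden units, layers) pair.
trials = [(sample_hidden_units(), sample_num_layers()) for _ in range(5)]

# With only three candidate values, an explicit grid is just as practical.
layer_grid = list(range(2, 5))

print(trials)
print(layer_grid)
```

With so few candidate layer counts, the grid and the random draw cover the same values, which is why either approach might be reasonable here.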

Let's look at another example. Say you're searching for the hyperparameter alpha, the learning rate, and let's say that you suspect 0.0001 might be on the low end, or maybe it could be as high as 1. Now if you draw the number line from 0.0001 to 1 and sample values uniformly at random over this number line, about 90% of the values you sample would be between 0.1 and 1. So you're using 90% of the resources to search between 0.1 and 1, and only 10% of the resources to search between 0.0001 and 0.1.
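The roughly 90% figure can be checked numerically. The sketch below is only an illustration, assuming the range 0.0001 to 1 from the text and an arbitrary sample count; the variable names are made up for this example.

```python
import random

random.seed(0)  # fixed seed so the sketch is reproducible

low, high = 0.0001, 1.0   # learning-rate range quoted in the text
n = 100_000               # arbitrary number of samples for the check

# Sample alpha uniformly on a linear scale.
samples = [random.uniform(low, high) for _ in range(n)]

# Fraction of samples that land in the top decade, [0.1, 1].
frac_upper = sum(s >= 0.1 for s in samples) / n

# Exact fraction from interval lengths: (1 - 0.1) / (1 - 0.0001).
expected = (high - 0.1) / (high - low)

print(f"empirical fraction in [0.1, 1]: {frac_upper:.3f}")
print(f"exact fraction in [0.1, 1]:     {expected:.4f}")
```

The exact fraction is the ratio of the interval lengths, 0.9 / 0.9999, so the three decades below 0.1 together receive only about a tenth of the samples.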
