1. Runtime Platform

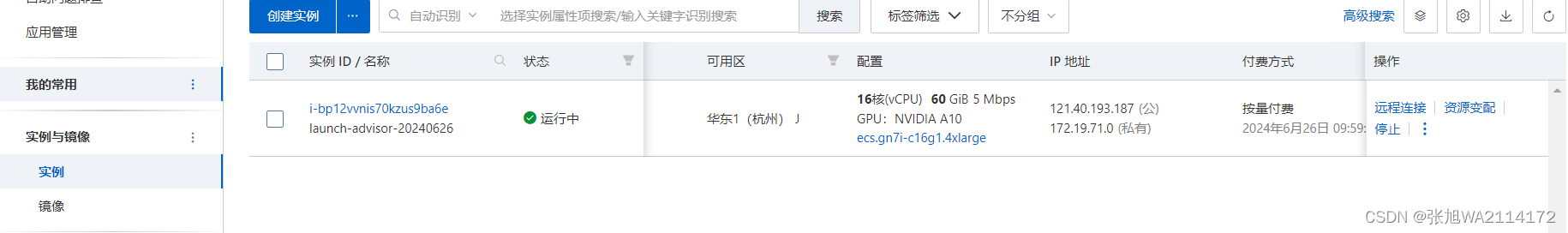

Because the Xiji (希冀) platform has limited computing power, we run the code on an Alibaba Cloud server.

1.1 Server Preparation

Create a GPU server on Alibaba Cloud.

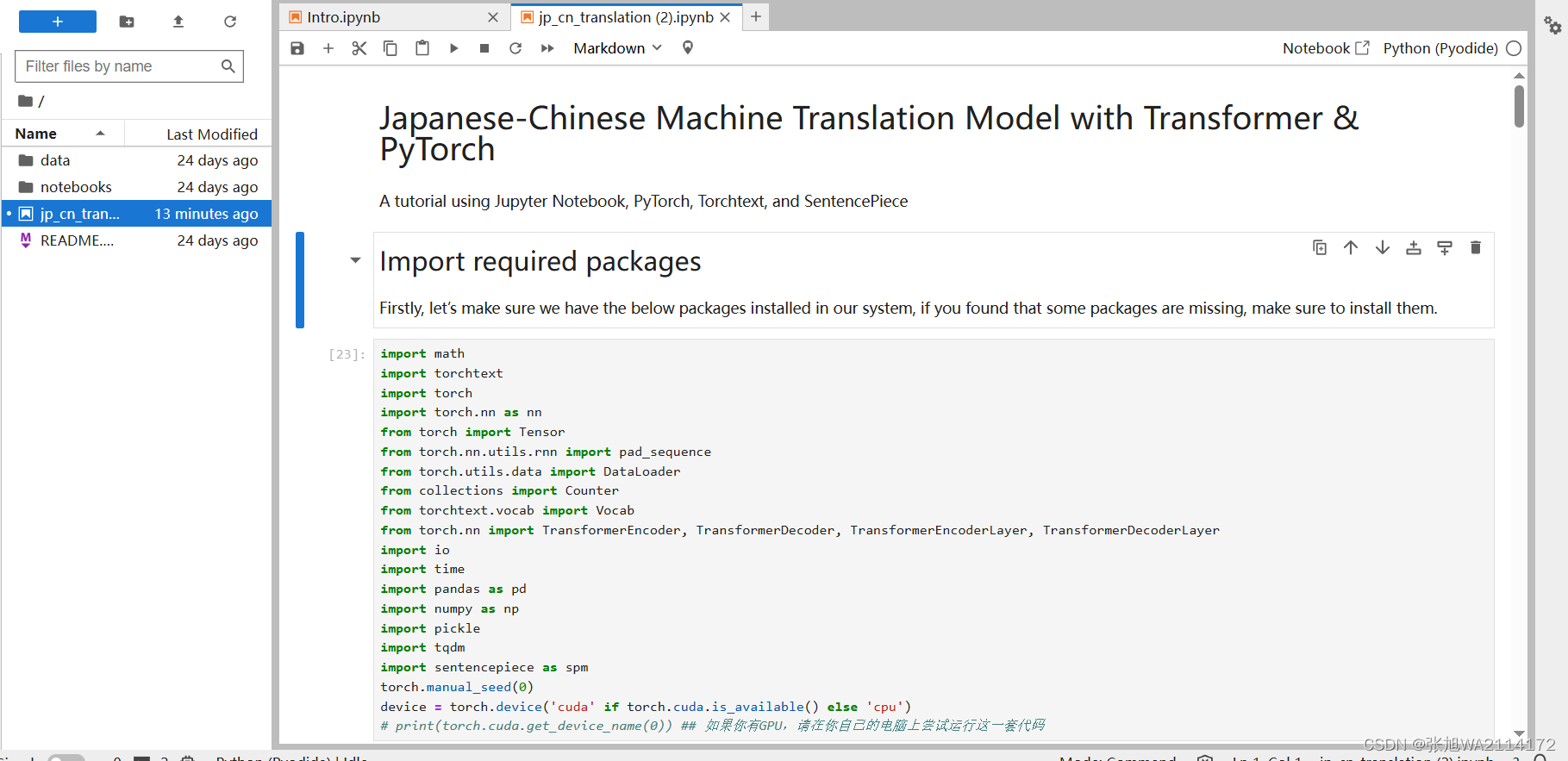

1.2 Environment

ipython 7.16.3

jupyter 1.0.0

sentencepiece 0.2.0

torchtext 0.6.0

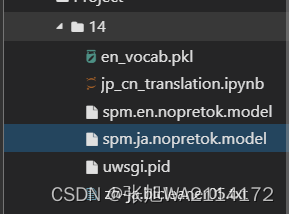

1.3 Upload the Files

1.4 Run the .ipynb File with Jupyter

2. Dataset Preparation

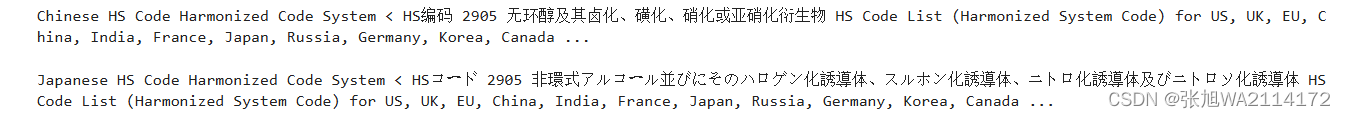

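We use a Japanese-English parallel corpus downloaded from JParaCrawl. JParaCrawl is described as "the largest publicly available English-Japanese parallel corpus created by NTT." It was built mainly by crawling the web and automatically aligning parallel sentences.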

First, we load the dataset with the following code:

```python
import pandas as pd

# Tab-separated text file without a header row
df = pd.read_csv('./zh-ja.bicleaner05.txt', sep='\\t', engine='python', header=None)

trainen = df[2].values.tolist()  # [:10000]
trainja = df[3].values.tolist()  # [:10000]
```

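This code uses pandas to read zh-ja.bicleaner05.txt, a tab-separated text file. It then stores column 2 (the English sentences) and column 3 (the Japanese sentences) as Python lists. pandas numbers columns from 0, so these are the third and fourth columns of the file. The last record in the dataset has a missing value, so we remove it from both lists with `.pop()`. This keeps the data complete and the two lists aligned.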
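Using `.pop()` assumes the missing value is always in the last row. The sketch below is a more defensive alternative. It assumes pandas delivers empty cells as `float('nan')` (or `None`) and drops every pair in which either side is missing, so the two lists stay aligned. The helper `drop_missing_pairs` is hypothetical and not part of the original code.

```python
import math
from typing import List, Tuple

def drop_missing_pairs(src: List, tgt: List) -> Tuple[List[str], List[str]]:
    """Keep only the sentence pairs in which both sides are present."""
    def is_missing(x) -> bool:
        # pandas represents an empty cell in an object column as NaN (a float)
        return x is None or (isinstance(x, float) and math.isnan(x))

    kept = [(s, t) for s, t in zip(src, tgt) if not (is_missing(s) or is_missing(t))]
    return [s for s, _ in kept], [t for _, t in kept]

# Toy example; with the real data: trainen, trainja = drop_missing_pairs(trainen, trainja)
en, ja = drop_missing_pairs(["a", "b", float("nan")], ["x", None, "z"])
print(en, ja)  # ['a'] ['x']
```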

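In total, the dataset contains about 5.97 million English-Japanese sentence pairs. For learning and testing, it is usually better to start with a sample of the data. A sample lets you check that the code runs as expected and saves processing time.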
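The commented-out `[:10000]` slices above take the first 10,000 pairs. Those pairs may all come from a few crawled sites. A random sample is an alternative; the sketch below assumes a fixed seed for reproducibility. It samples indices rather than sentences so that the English and Japanese lists stay aligned. `sample_parallel` is a hypothetical helper.

```python
import random
from typing import List, Tuple

def sample_parallel(src: List[str], tgt: List[str], n: int,
                    seed: int = 0) -> Tuple[List[str], List[str]]:
    """Draw n aligned sentence pairs at random, without replacement."""
    assert len(src) == len(tgt), "parallel lists must have the same length"
    rng = random.Random(seed)  # fixed seed -> the same sample on every run
    idx = sorted(rng.sample(range(len(src)), min(n, len(src))))
    return [src[i] for i in idx], [tgt[i] for i in idx]

# Hypothetical usage: trainen, trainja = sample_parallel(trainen, trainja, 10000)
```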

```python
# Inspect one aligned sentence pair
print(trainen[500])
print(trainja[500])
```

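3. Build TorchText Vocab Objects and Convert Sentences to Torch Tensors

```python
from collections import Counter
from torchtext.vocab import Vocab  # torchtext 0.6.0 API

def build_vocab(sentences, tokenizer):
    counter = Counter()
    for sentence in sentences:
        # Count the SentencePiece subword tokens of every sentence
        counter.update(tokenizer.encode(sentence, out_type=str))
    # Specials come first, so <unk>=0, <pad>=1, <bos>=2, <eos>=3
    return Vocab(counter, specials=['<unk>', '<pad>', '<bos>', '<eos>'])

# ja_tokenizer / en_tokenizer: SentencePiece tokenizers loaded earlier (not shown in this post)
ja_vocab = build_vocab(trainja, ja_tokenizer)
en_vocab = build_vocab(trainen, en_tokenizer)
```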
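The sketch below shows what `build_vocab` produces without needing torchtext. It counts tokens, places the special tokens first, orders the remaining tokens by frequency, and maps unseen tokens to `<unk>`. It imitates torchtext's `Vocab` but is not it: `TinyVocab` and `build_tiny_vocab` are hypothetical names, and whitespace splitting stands in for the SentencePiece tokenizer.

```python
from collections import Counter
from typing import Iterable, List

class TinyVocab:
    """Hypothetical minimal vocab: specials first, then tokens by descending frequency."""

    def __init__(self, counter: Counter, specials: List[str]):
        # Ties are broken alphabetically so the order is deterministic
        words = sorted((w for w in counter if w not in specials),
                       key=lambda w: (-counter[w], w))
        self.itos = list(specials) + words
        self.stoi = {tok: i for i, tok in enumerate(self.itos)}
        self.unk_index = self.stoi['<unk>']

    def __getitem__(self, token: str) -> int:
        # Tokens never seen during counting fall back to <unk>
        return self.stoi.get(token, self.unk_index)

    def __len__(self) -> int:
        return len(self.itos)

def build_tiny_vocab(sentences: Iterable[str]) -> TinyVocab:
    counter = Counter()
    for sentence in sentences:
        counter.update(sentence.split())  # whitespace split stands in for SentencePiece
    return TinyVocab(counter, specials=['<unk>', '<pad>', '<bos>', '<eos>'])

vocab = build_tiny_vocab(["the cat sat", "the dog sat"])
print([vocab[t] for t in "the bird sat".split()])  # [5, 0, 4]
```

"bird" was never counted, so it maps to index 0 (`<unk>`). This is the same fallback that `ja_vocab[token]` relies on in `data_process`.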

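Next, we define a `data_process` function that converts the raw Japanese and English sentences into Torch tensors. It uses the vocab and tokenizer objects built above to turn each sentence into a tensor of token indices.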

```python
import torch

def data_process(ja, en):
    data = []
    for (raw_ja, raw_en) in zip(ja, en):
        # Tokenize into subwords, then map each subword to its vocab index
        ja_tensor_ = torch.tensor([ja_vocab[token] for token in ja_tokenizer.encode(raw_ja.rstrip("\n"), out_type=str)],
                                  dtype=torch.long)
        en_tensor_ = torch.tensor([en_vocab[token] for token in en_tokenizer.encode(raw_en.rstrip("\n"), out_type=str)],
                                  dtype=torch.long)
        data.append((ja_tensor_, en_tensor_))
    return data

train_data = data_process(trainja, trainen)
```

4. Create the DataLoader to Iterate Over During Training

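Choose a suitable batch size (BATCH_SIZE) and apply the preprocessing a sequence-to-sequence model needs: adding start and end tokens and padding the sequences. With these in place, we can build a DataLoader for training.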
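The sketch below shows, without any dependencies, what the collate step does to one batch. It assumes the index values PAD=1, BOS=2, EOS=3, which follow from `specials=['<unk>', '<pad>', '<bos>', '<eos>']`. Each sequence is wrapped in BOS/EOS and padded to the longest sequence in the batch. The result is laid out time-major as `(seq_len, batch)`, which is `pad_sequence`'s default. `collate` is a hypothetical name.

```python
from typing import List, Sequence

# Assumed indices, matching specials=['<unk>', '<pad>', '<bos>', '<eos>']
PAD, BOS, EOS = 1, 2, 3

def collate(batch: Sequence[Sequence[int]]) -> List[List[int]]:
    """Wrap each sequence in BOS/EOS, pad to equal length, return (seq_len, batch)."""
    wrapped = [[BOS, *seq, EOS] for seq in batch]
    max_len = max(len(s) for s in wrapped)
    padded = [s + [PAD] * (max_len - len(s)) for s in wrapped]
    # Transpose so that row t holds the t-th token of every sequence
    return [list(step) for step in zip(*padded)]

print(collate([[10, 11, 12], [20]]))
# [[2, 2], [10, 20], [11, 3], [12, 1], [3, 1]]
```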

```python
from torch.nn.utils.rnn import pad_sequence
from torch.utils.data import DataLoader

# BATCH_SIZE = 8
BATCH_SIZE = 32
PAD_IDX = ja_vocab['<pad>']
BOS_IDX = ja_vocab['<bos>']
EOS_IDX = ja_vocab['<eos>']

def generate_batch(data_batch):
    ja_batch, en_batch = [], []
    for (ja_item, en_item) in data_batch:
        # Wrap every sentence as <bos> ... <eos>
        ja_batch.append(torch.cat([torch.tensor([BOS_IDX]), ja_item, torch.tensor([EOS_IDX])], dim=0))
        en_batch.append(torch.cat([torch.tensor([BOS_IDX]), en_item, torch.tensor([EOS_IDX])], dim=0))
    # Pad to the longest sentence in the batch; shape is (seq_len, batch_size)
    ja_batch = pad_sequence(ja_batch, padding_value=PAD_IDX)
    en_batch = pad_sequence(en_batch, padding_value=PAD_IDX)
    return ja_batch, en_batch

train_iter = DataLoader(train_data, batch_size=BATCH_SIZE,
                        shuffle=True, collate_fn=generate_batch)
```

5. Key Functions

Below are some of the key functions in this code.

```python
import torch.nn as nn
from torch import Tensor
from torch.nn import (TransformerEncoder, TransformerDecoder,
                      TransformerEncoderLayer, TransformerDecoderLayer)

class Seq2SeqTransformer(nn.Module):
    def __init__(self, num_encoder_layers: int, num_decoder_layers: int,
                 emb_size: int, src_vocab_size: int, tgt_vocab_size: int,
                 dim_feedforward: int = 512, dropout: float = 0.1):
        super(Seq2SeqTransformer, self).__init__()
        # NHEAD is a global hyperparameter that must be defined before instantiation
        encoder_layer = TransformerEncoderLayer(d_model=emb_size, nhead=NHEAD,
                                                dim_feedforward=dim_feedforward)
        self.transformer_encoder = TransformerEncoder(encoder_layer, num_layers=num_encoder_layers)
        decoder_layer = TransformerDecoderLayer(d_model=emb_size, nhead=NHEAD,
                                                dim_feedforward=dim_feedforward)
        self.transformer_decoder = TransformerDecoder(decoder_layer, num_layers=num_decoder_layers)
        # Projects decoder outputs onto the target vocabulary
        self.generator = nn.Linear(emb_size, tgt_vocab_size)
        self.src_tok_emb = TokenEmbedding(src_vocab_size, emb_size)
        self.tgt_tok_emb = TokenEmbedding(tgt_vocab_size, emb_size)
        self.positional_encoding = PositionalEncoding(emb_size, dropout=dropout)

    def forward(self, src: Tensor, trg: Tensor, src_mask: Tensor,
                tgt_mask: Tensor, src_padding_mask: Tensor,
                tgt_padding_mask: Tensor, memory_key_padding_mask: Tensor):
        src_emb = self.positional_encoding(self.src_tok_emb(src))
        tgt_emb = self.positional_encoding(self.tgt_tok_emb(trg))
        memory = self.transformer_encoder(src_emb, src_mask, src_padding_mask)
        outs = self.transformer_decoder(tgt_emb, memory, tgt_mask, None,
                                        tgt_padding_mask, memory_key_padding_mask)
        return self.generator(outs)

    def encode(self, src: Tensor, src_mask: Tensor):
        return self.transformer_encoder(self.positional_encoding(
            self.src_tok_emb(src)), src_mask)

    def decode(self, tgt: Tensor, memory: Tensor, tgt_mask: Tensor):
        return self.transformer_decoder(self.positional_encoding(
            self.tgt_tok_emb(tgt)), memory,
            tgt_mask)
```

Text tokens are represented by token embeddings. A positional encoding is added to the token embeddings to give the model a notion of word order.
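Written out, the `PositionalEncoding` below computes the sinusoidal encoding from the original Transformer paper. Here $pos$ is the token position, $i$ indexes pairs of embedding dimensions, $d$ is `emb_size`, and $E$ is the token embedding table. `TokenEmbedding` scales each embedding by $\sqrt{d}$ before the two are summed (dropout is then applied to the sum):

```latex
\begin{aligned}
\mathrm{PE}(pos, 2i)   &= \sin\!\left(\frac{pos}{10000^{2i/d}}\right) \\
\mathrm{PE}(pos, 2i+1) &= \cos\!\left(\frac{pos}{10000^{2i/d}}\right) \\
x_{pos} &= \sqrt{d}\, E[w_{pos}] + \mathrm{PE}(pos, \cdot)
\end{aligned}
```

In the code, `den = exp(-2i * ln(10000) / d)` equals $10000^{-2i/d}$, so `pos * den` is exactly the argument of the sine and cosine.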

```python
import math
import torch
import torch.nn as nn
from torch import Tensor

class PositionalEncoding(nn.Module):
    def __init__(self, emb_size: int, dropout: float, maxlen: int = 5000):
        super(PositionalEncoding, self).__init__()
        # 10000^(-2i / emb_size) for each even dimension index 2i
        den = torch.exp(- torch.arange(0, emb_size, 2) * math.log(10000) / emb_size)
        pos = torch.arange(0, maxlen).reshape(maxlen, 1)
        pos_embedding = torch.zeros((maxlen, emb_size))
        pos_embedding[:, 0::2] = torch.sin(pos * den)  # even dimensions
        pos_embedding[:, 1::2] = torch.cos(pos * den)  # odd dimensions
        pos_embedding = pos_embedding.unsqueeze(-2)    # (maxlen, 1, emb_size), broadcasts over the batch
        self.dropout = nn.Dropout(dropout)
        self.register_buffer('pos_embedding', pos_embedding)

    def forward(self, token_embedding: Tensor):
        return self.dropout(token_embedding +
                            self.pos_embedding[:token_embedding.size(0), :])

class TokenEmbedding(nn.Module):
    def __init__(self, vocab_size: int, emb_size):
        super(TokenEmbedding, self).__init__()
        self.embedding = nn.Embedding(vocab_size, emb_size)
        self.emb_size = emb_size

    def forward(self, tokens: Tensor):
        # Scale the embeddings by sqrt(emb_size)
        return self.embedding(tokens.long()) * math.sqrt(self.emb_size)

# Hyperparameters
SRC_VOCAB_SIZE = len(ja_vocab)
TGT_VOCAB_SIZE = len(en_vocab)
EMB_SIZE = 512
NHEAD = 8
FFN_HID_DIM = 512
# BATCH_SIZE = 16
BATCH_SIZE = 32
NUM_ENCODER_LAYERS = 3
NUM_DECODER_LAYERS = 3
NUM_EPOCHS = 16

device = torch.device('cuda' if torch.cuda.is_available() else 'cpu')

transformer = Seq2SeqTransformer(NUM_ENCODER_LAYERS, NUM_DECODER_LAYERS,
                                 EMB_SIZE, SRC_VOCAB_SIZE, TGT_VOCAB_SIZE,
                                 FFN_HID_DIM)

# Xavier initialization for every weight matrix
for p in transformer.parameters():
    if p.dim() > 1:
        nn.init.xavier_uniform_(p)

transformer = transformer.to(device)

# Padding positions do not contribute to the loss
loss_fn = torch.nn.CrossEntropyLoss(ignore_index=PAD_IDX)

optimizer = torch.optim.Adam(
    transformer.parameters(), lr=0.0001, betas=(0.9, 0.98), eps=1e-9
)
```
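`train_epoch` and `greedy_decode` call `create_mask` and `generate_square_subsequent_mask`, but this post does not define either function. The sketch below is a plausible version. It assumes the `(seq_len, batch)` tensor layout used throughout and a PAD index of 1 (from the specials order). The default arguments are assumptions chosen so that the existing calls, which pass fewer arguments, keep working.

```python
import torch

def generate_square_subsequent_mask(sz: int, device=None) -> torch.Tensor:
    """Float causal mask: 0.0 where attention is allowed, -inf above the diagonal."""
    allowed = torch.tril(torch.ones(sz, sz, device=device)).bool()
    return torch.zeros(sz, sz, device=device).masked_fill(~allowed, float('-inf'))

def create_mask(src: torch.Tensor, tgt: torch.Tensor, pad_idx: int = 1):
    """Masks for (seq_len, batch) tensors, in the order train_epoch unpacks them."""
    src_seq_len, tgt_seq_len = src.shape[0], tgt.shape[0]
    tgt_mask = generate_square_subsequent_mask(tgt_seq_len, device=tgt.device)
    # The encoder may attend everywhere: an all-False boolean mask blocks nothing
    src_mask = torch.zeros((src_seq_len, src_seq_len), device=src.device, dtype=torch.bool)
    # Padding masks are (batch, seq_len), with True marking <pad> positions
    src_padding_mask = (src == pad_idx).transpose(0, 1)
    tgt_padding_mask = (tgt == pad_idx).transpose(0, 1)
    return src_mask, tgt_mask, src_padding_mask, tgt_padding_mask
```

The causal mask is a float mask, while the padding masks are boolean. Older PyTorch versions accept this mix silently. Recent versions accept it but may emit a deprecation warning.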

def train_epoch(model, train_iter, optimizer):

model.train()

losses = 0

for idx, (src, tgt) in enumerate(train_iter):

src = src.to(device)

tgt = tgt.to(device)

tgt_input = tgt[:-1, :]

src_mask, tgt_mask, src_padding_mask, tgt_padding_mask = create_mask(src, tgt_input)

logits = model(src, tgt_input, src_mask, tgt_mask,

src_padding_mask, tgt_padding_mask, src_padding_mask)

optimizer.zero_grad()

tgt_out = tgt[1:,:]

loss = loss_fn(logits.reshape(-1, logits.shape[-1]), tgt_out.reshape(-1))

loss.backward()

optimizer.step()

losses += loss.item()

return losses / len(train_iter)

def evaluate(model, val_iter):

model.eval()

losses = 0

for idx, (src, tgt) in (enumerate(valid_iter)):

src = src.to(device)

tgt = tgt.to(device)

tgt_input = tgt[:-1, :]

src_mask, tgt_mask, src_padding_mask, tgt_padding_mask = create_mask(src, tgt_input)

logits = model(src, tgt_input, src_mask, tgt_mask,

src_padding_mask, tgt_padding_mask, src_padding_mask)

tgt_out = tgt[1:,:]

loss = loss_fn(logits.reshape(-1, logits.shape[-1]), tgt_out.reshape(-1))

losses += loss.item()

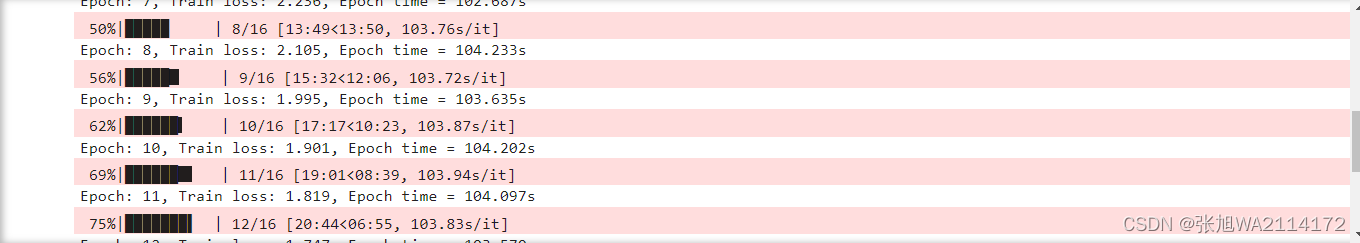

return losses / len(val_iter)6、开始训练

```python
import time
import tqdm

for epoch in tqdm.tqdm(range(1, NUM_EPOCHS + 1)):
    start_time = time.time()
    train_loss = train_epoch(transformer, train_iter, optimizer)
    end_time = time.time()
    print((f"Epoch: {epoch}, Train loss: {train_loss:.3f}, "
           f"Epoch time = {(end_time - start_time):.3f}s"))
```
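Two details of `train_epoch` are easy to miss. First, the decoder input is `tgt[:-1]` while the labels are `tgt[1:]`: this is teacher forcing with a one-token shift. Second, `CrossEntropyLoss(ignore_index=PAD_IDX)` averages only over non-padding labels. The plain-Python sketch below shows both for a single sequence, assuming PAD=1 as above. The function names are hypothetical.

```python
import math
from typing import List, Sequence, Tuple

def shift_for_teacher_forcing(tgt: Sequence[int]) -> Tuple[List[int], List[int]]:
    """Decoder input drops the last token; labels drop the first (<bos>)."""
    return list(tgt[:-1]), list(tgt[1:])

def cross_entropy_ignore_index(logits: Sequence[Sequence[float]],
                               targets: Sequence[int], ignore_index: int) -> float:
    """Mean negative log-likelihood over positions whose target != ignore_index."""
    total, count = 0.0, 0
    for row, t in zip(logits, targets):
        if t == ignore_index:
            continue  # padding labels contribute nothing
        m = max(row)  # subtract the max for a numerically stable log-sum-exp
        log_z = m + math.log(sum(math.exp(x - m) for x in row))
        total += log_z - row[t]
        count += 1
    return total / count if count else float('nan')

dec_in, labels = shift_for_teacher_forcing([2, 7, 8, 3, 1])
print(dec_in, labels)  # [2, 7, 8, 3] [7, 8, 3, 1]
```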

7. Translating Japanese Sentences with the Model

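To translate a Japanese sentence into English with the trained model, we first write a function that performs these steps: take a Japanese sentence, tokenize it, convert it to a tensor, run inference, and decode the result back into an English sentence.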
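The `translate` function itself does not appear in this post. The sketch below shows the same pipeline without any framework, under stated assumptions. It tokenizes the sentence, maps tokens to indices, wraps them in `<bos>`/`<eos>`, runs a decoder, and maps the indices back to text. `translate_sketch` and `decode_fn` are hypothetical names. In the real code, `decode_fn` would wrap `greedy_decode` and the trained model. Joining subword pieces with spaces is a simplification of proper SentencePiece detokenization.

```python
from typing import Callable, Dict, List

def translate_sketch(sentence: str,
                     tokenize: Callable[[str], List[str]],
                     src_stoi: Dict[str, int],
                     tgt_itos: List[str],
                     decode_fn: Callable[[List[int]], List[int]],
                     bos: int = 2, eos: int = 3, unk: int = 0) -> str:
    # 1. Tokenize and map tokens to indices (unknown tokens -> <unk>)
    src_ids = [bos] + [src_stoi.get(tok, unk) for tok in tokenize(sentence)] + [eos]
    # 2. Run the model (here: any function from source ids to target ids)
    tgt_ids = decode_fn(src_ids)
    # 3. Map indices back to tokens and drop the special markers
    return " ".join(tgt_itos[i] for i in tgt_ids if i not in (bos, eos))

# Toy usage with a fake decoder that always returns <bos> cat sits <eos>
itos = ["<unk>", "<pad>", "<bos>", "<eos>", "cat", "sits"]
print(translate_sketch("猫 が", str.split, {"猫": 4, "が": 5}, itos,
                       lambda ids: [2, 4, 5, 3]))  # cat sits
```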

```python
def greedy_decode(model, src, src_mask, max_len, start_symbol):
    src = src.to(device)
    src_mask = src_mask.to(device)
    # Encode the source sentence once
    memory = model.encode(src, src_mask)
    # Output starts with the start symbol; shape (1, 1) = (seq_len, batch)
    ys = torch.ones(1, 1).fill_(start_symbol).type(torch.long).to(device)
    for i in range(max_len - 1):
        memory = memory.to(device)
        memory_mask = torch.zeros(ys.shape[0], memory.shape[0]).to(device).type(torch.bool)  # not passed to decode
        # Causal mask: each position may attend only to itself and earlier positions
        tgt_mask = (generate_square_subsequent_mask(ys.size(0))
                    .type(torch.bool)).to(device)
        out = model.decode(ys, memory, tgt_mask)
        out = out.transpose(0, 1)
        # Score the last position against the vocabulary and take the best token
        prob = model.generator(out[:, -1])
        _, next_word = torch.max(prob, dim=1)
        next_word = next_word.item()
        ys = torch.cat([ys,
                        torch.ones(1, 1).type_as(src.data).fill_(next_word)], dim=0)
        if next_word == EOS_IDX:
            break
    return ys
```

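Next, we can call the `translate` function with the required arguments to translate a Japanese sentence into English.

During translation, the model encodes the input Japanese sentence into an internal representation and then decodes it into the corresponding English sentence. This uses the Transformer's sequence-to-sequence architecture and generates the output sequence with a greedy decoding strategy.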
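Greedy decoding picks the single highest-scoring token at every step. It stops when it emits `<eos>` or reaches `max_len` tokens. Without tensors, the loop in `greedy_decode` reduces to the sketch below. `step` is a hypothetical stand-in for `model.decode` followed by `model.generator`; it returns one score per vocabulary entry given the tokens generated so far.

```python
from typing import Callable, List, Sequence

def greedy_decode_sketch(step: Callable[[List[int]], Sequence[float]],
                         bos: int, eos: int, max_len: int) -> List[int]:
    ys = [bos]
    for _ in range(max_len - 1):
        scores = step(ys)  # scores for the next token given the prefix
        next_word = max(range(len(scores)), key=scores.__getitem__)  # argmax
        ys.append(next_word)
        if next_word == eos:  # stop once <eos> is produced
            break
    return ys

# Toy "model" that wants to emit 4, 0, 3 in that order
plan = [4, 0, 3]
def toy_step(prefix: List[int]) -> List[float]:
    scores = [0.0] * 5
    scores[plan[len(prefix) - 1]] = 1.0
    return scores

print(greedy_decode_sketch(toy_step, bos=2, eos=3, max_len=10))  # [2, 4, 0, 3]
print(greedy_decode_sketch(toy_step, bos=2, eos=3, max_len=3))   # [2, 4, 0]
```

Greedy decoding is cheap but commits to one token at a time. Beam search, which keeps several candidate prefixes, is the usual alternative when quality matters more than speed.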
