1. Overview

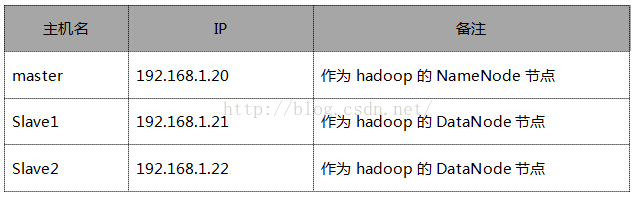

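2. Building a Hadoop Distributed Cluster

Hadoop can be installed in three modes: standalone, pseudo-distributed, and fully distributed. The installation is not difficult. This section describes the fully distributed setup in detail, since production environments use that mode, while the first two are mainly for learning and experimentation. Following the steps below one at a time should give you a working distributed Hadoop cluster.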

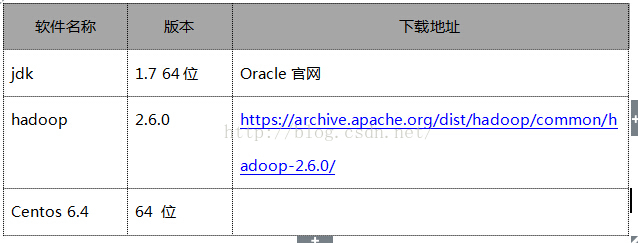

2.1 Software preparation

2.2 Environment preparation

2.3 Procedure

- Configure hosts

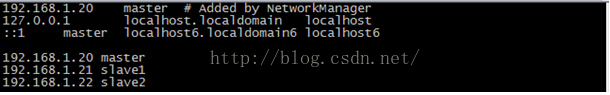

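Note: the hosts file maps each node's hostname to its IP address, so that the master node can later look up and reach every node quickly. The file is /etc/hosts. Configure it on the three servers as follows:

Perform the following on all three servers.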

[root@master ~]# vi /etc/hosts

After configuring, use the hostnames on each server to check that the nodes can ping one another.
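The hosts entries themselves appear only in a screenshot, but the addresses used later in this guide (192.168.1.20 for master, 192.168.1.21 for slave1, 192.168.1.22 for slave2) suggest entries like the ones below. This is a minimal sketch under that assumption. The helper `add_host_entry` and the `HOSTS_FILE` variable are hypothetical names, and by default the script writes to a scratch file rather than /etc/hosts.

```shell
#!/usr/bin/env bash
# Sketch: add hostname mappings to a hosts-style file without creating duplicates.
# HOSTS_FILE defaults to a scratch file; set it to /etc/hosts (as root) to apply for real.
HOSTS_FILE="${HOSTS_FILE:-$(mktemp)}"

# add_host_entry IP NAME: append "IP NAME" unless NAME is already mapped.
add_host_entry() {
    local ip="$1" name="$2"
    if ! grep -qE "[[:space:]]${name}([[:space:]]|\$)" "$HOSTS_FILE"; then
        printf '%s %s\n' "$ip" "$name" >> "$HOSTS_FILE"
    fi
}

# Addresses assumed from the hosts entries listed near the end of this guide.
add_host_entry 192.168.1.20 master
add_host_entry 192.168.1.21 slave1
add_host_entry 192.168.1.22 slave2
add_host_entry 192.168.1.20 master   # repeated call changes nothing

cat "$HOSTS_FILE"
```

Once the real /etc/hosts is in place, a loop such as `for h in master slave1 slave2; do ping -c 3 "$h"; done` on each server covers the ping checks that follow.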

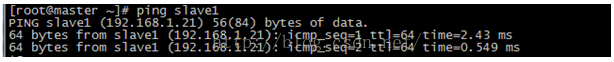

Ping slave1 from master

Ping slave2 from master

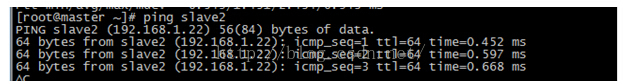

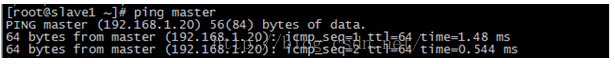

Ping master from slave1

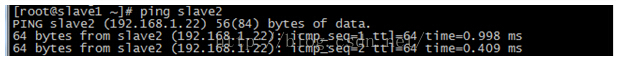

Ping slave2 from slave1

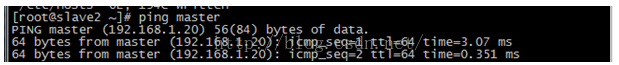

Ping master from slave2

Ping slave1 from slave2

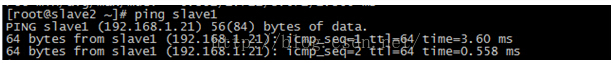

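If everything above matches the screenshots, the hosts file is configured correctly. Next, turn off the firewall on all three servers so that it does not cause unnecessary errors in later steps.

- Create the Hadoop account

Note: create a dedicated group and user for the Hadoop cluster.

Perform the following on all three servers.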

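# Create a group named hadoop

[root@master ~]# groupadd hadoop

# Add a hadoop user that belongs to the hadoop group and, through -G root, to the root group as well (group membership, not full root privileges). The home directory is spelled /home/hodoop here, which is why a later prompt shows hodoop; /home/hadoop is the conventional spelling.

[root@master ~]# useradd -s /bin/bash -d /home/hodoop -m hadoop -g hadoop -G root

# Set the hadoop user's password to hadoop (any password of your choice works)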

[root@master ~]# passwd hadoop

更改用户 hadoop 的密码 。

新的 密码:

无效的密码: 它基于字典单词

无效的密码: 过于简单

重新输入新的 密码:

passwd: 所有的身份验证令牌已经成功更新。

# After creating the account, it is a good idea to run su hadoop and confirm that you can switch to it

[root@slave1 ~]# su hadoop

[hadoop@slave1 root]$

That completes the hadoop account setup. Simple enough. Use the same hadoop password on all three servers to avoid unnecessary trouble and mistakes later, because many later steps prompt for it.

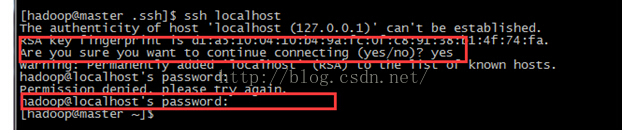

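- Passwordless SSH access between hosts

Note: this part of the cluster setup is very important and has many small details to get right, so follow the steps carefully one at a time. A brief introduction to SSH first. SSH uses an asymmetric algorithm such as RSA to generate a public/private key pair, and it encrypts data in transit to keep it secure. The public key is the shareable half and can be handed to any node on the network; the private key stays with its owner and is used to prove the owner's identity. Breaking such a scheme is very hard in practice.

The nodes of a Hadoop cluster need to access one another, and the node being accessed must verify the identity of the connecting user. Hadoop uses ssh with key-based authentication and encrypted transport for these remote logins. If every access to a node required an interactive password check, efficiency would drop sharply, which is why passwordless SSH login is configured: the connecting node can then log in to the target node directly.

Perform the following on all three servers.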

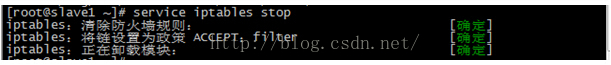

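# Check whether the ssh service is installed on each server. Output like the following means it is installed and working; the commands below log in to the local machine over ssh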

[root@master ~]# ssh localhost

root@localhost's password:

Last login: Fri Jun 26 16:48:43 2015 from 192.168.1.102

[root@master ~]#

# Log out of the current session

[root@master ~]# exit

logout

Connection to localhost closed.

[root@master ~]#

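# You can also verify the ssh installation as follows (optional)

# If the output shown above does not appear, install ssh with yum or rpm (optional). On CentOS the packages are named openssh-server and openssh-clients

[root@master ~]# yum install openssh-server openssh-clients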

# After installing, start the ssh service, which is usually called sshd (optional; only needed if ssh was not already installed)

[root@master ~]# service sshd start

# Or use /etc/init.d/sshd start (optional)

[root@master ~]# /etc/init.d/sshd start

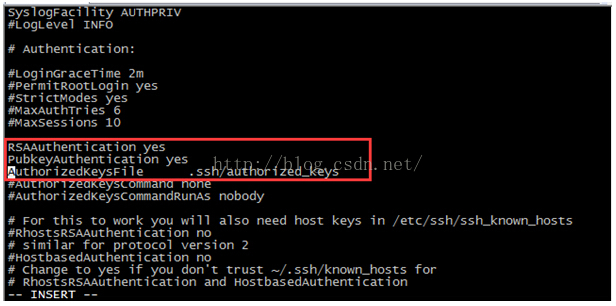

# View or edit the ssh service configuration file (required, on all three servers)

[root@master ~]# vi /etc/ssh/sshd_config

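# Find the following three lines in sshd_config and remove the leading #

Note: you can of course change other ssh settings in this file, such as the port. Here we change only the three lines shown in the screenshot and leave everything else at its default.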
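The three lines are visible only in the screenshot. In guides of this kind for the OpenSSH version shipped with CentOS 6 they are commonly `RSAAuthentication`, `PubkeyAuthentication`, and `AuthorizedKeysFile`, so the sketch below assumes those three; treat that as a guess rather than a transcription. It works on a scratch copy with sample content, not on the real /etc/ssh/sshd_config.

```shell
#!/usr/bin/env bash
# Sketch: uncomment three sshd_config directives in a scratch copy.
# ASSUMPTION: the directive names below are inferred; the original screenshot is not available.
CONF="$(mktemp)"

# Sample input standing in for /etc/ssh/sshd_config.
cat > "$CONF" <<'EOF'
#Port 22
#RSAAuthentication yes
#PubkeyAuthentication yes
#AuthorizedKeysFile .ssh/authorized_keys
EOF

# Strip the leading '#' from exactly these three directives and leave other lines alone.
sed -E 's/^#[[:space:]]*(RSAAuthentication|PubkeyAuthentication|AuthorizedKeysFile)([[:space:]])/\1\2/' \
    "$CONF" > "$CONF.new" && mv "$CONF.new" "$CONF"

cat "$CONF"
```

`RSAAuthentication` belongs to the legacy SSH protocol 1 and is absent from recent OpenSSH releases, so on a newer system only the other two directives would be relevant.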

# Restart the ssh service (required)

[root@master ~]# service sshd restart

停止 sshd: [确定]

正在启动 sshd: [确定]

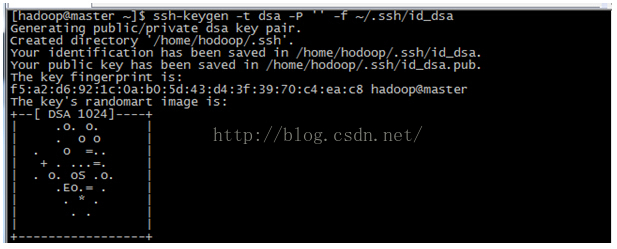

Note: the next steps are critical. Follow the user accounts and paths exactly.

# Generate a public/private key pair on each node.

[hadoop@master ~]$ ssh-keygen -t dsa -P '' -f ~/.ssh/id_dsa

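The command above generates the key pair in the .ssh directory under the user's home directory.

Note: id_dsa.pub is the public key and id_dsa is the private key.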

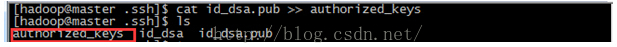

# Append the public key to the authorized_keys file. This step is critical. Double-check the file name authorized_keys: it must match the file name in the sshd_config line that was uncommented earlier.

[hadoop@master .ssh]$ cat id_dsa.pub >> authorized_keys

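Notice the part marked with the red box: why is there still a prompt for confirmation and a password if this is supposed to be passwordless login? Keep that question in mind; it is resolved in the steps that follow.

# Set the permissions of authorized_keys to 600

[hadoop@master .ssh]$ chmod 600 authorized_keys

Once more: perform the steps above on all three servers.

# Allow the master node to log in to the two slave nodes over SSH without a password

Note: for this to work, the authorized_keys file on each slave must contain the master's public key, so that master can access both slaves securely.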

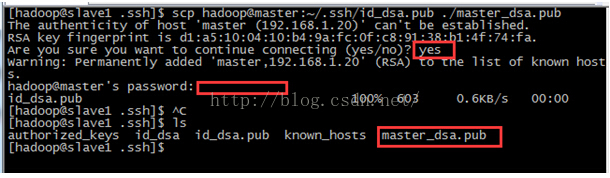

Note: perform the following only on slave1 and slave2.

[hadoop@slave1 .ssh]$ scp hadoop@master:~/.ssh/id_dsa.pub ./master_dsa.pub

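The password at the red box in the screenshot is important: it is the password of the hadoop account on master. If the hadoop passwords differ across the three servers, it is easy to forget or mistype it. After the copy, the .ssh directory contains a new file, master_dsa.pub (the master's public key).

# Append the public key in master_dsa.pub to slave1's authorized_keys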

[hadoop@slave1 .ssh]$ cat master_dsa.pub >> authorized_keys

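Note: at this point master still cannot log in to slave1 without a password. Why? Because sshd checks the permissions on the remote account's key files and ignores an authorized_keys file whose permissions are too open.

# Set the permissions of authorized_keys to 600

[hadoop@slave1 .ssh]$ chmod 600 authorized_keys

# Restart the sshd service; switch to the root account for this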

[root@slave1 hodoop]# service sshd restart

停止 sshd: [确定]

正在启动 sshd: [确定]

# From the master node, run ssh slave1 to log in to slave1 without a password

[hadoop@slave1 ~]$ ssh slave1

The authenticity of host 'slave1 (::1)' can't be established.

RSA key fingerprint is c8:0e:ff:b2:91:d7:3a:bb:63:43:c8:0a:3e:2a:94:04.

Are you sure you want to continue connecting (yes/no)? yes

Warning: Permanently added 'slave1' (RSA) to the list of known hosts.

Last login: Sun Jun 28 02:20:38 2015 from master

[hadoop@slave1 ~]$ exit

logout

Connection to slave1 closed.

[hadoop@slave1 ~]$ ssh slave1

Last login: Sun Jun 28 02:24:05 2015 from slave1

[hadoop@slave1 ~]$

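Note: the first time master connects to slave1, ssh asks you to confirm the connection and you must answer yes. Once the connection succeeds, exit and run ssh slave1 again. This time no confirmation is requested, and you are logged in to slave1 as the hadoop user, as shown above.

Repeat the steps above for slave2. Output like the following shows that master can log in to slave2 without a password.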

[hadoop@master ~]$ ssh slave2

Last login: Sat Jun 27 02:01:15 2015 from localhost.localdomain

[hadoop@slave2 ~]$

Passwordless SSH login to every node is now configured.
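The slave-side steps above (append the master's public key, then tighten permissions) can be condensed into one helper. This is a minimal sketch, not the author's script: `authorize_key` is a hypothetical function name, the demo runs in a scratch directory, and the key string is a placeholder rather than a real key.

```shell
#!/usr/bin/env bash
# Sketch: append a public key to authorized_keys only once, with the permissions sshd expects.
# authorize_key is a hypothetical helper; the second argument stands in for ~/.ssh.

# authorize_key PUBKEY_FILE SSH_DIR
authorize_key() {
    local pubkey_file="$1" ssh_dir="$2"
    mkdir -p "$ssh_dir"
    chmod 700 "$ssh_dir"
    touch "$ssh_dir/authorized_keys"
    # -x matches whole lines, -F treats the key as a literal string, -f reads it from the file.
    if ! grep -qxF -f "$pubkey_file" "$ssh_dir/authorized_keys"; then
        cat "$pubkey_file" >> "$ssh_dir/authorized_keys"
    fi
    chmod 600 "$ssh_dir/authorized_keys"
}

# Demo in a scratch directory with a placeholder key (not a real key).
DEMO_DIR="$(mktemp -d)"
echo "ssh-dss AAAAB3PLACEHOLDER hadoop@master" > "$DEMO_DIR/master_dsa.pub"
authorize_key "$DEMO_DIR/master_dsa.pub" "$DEMO_DIR/.ssh"
authorize_key "$DEMO_DIR/master_dsa.pub" "$DEMO_DIR/.ssh"   # repeated call adds nothing
ls -l "$DEMO_DIR/.ssh/authorized_keys"
```

On systems that ship it, running `ssh-copy-id hadoop@slave1` from master performs the equivalent copy and append in one step.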

- Install the JDK

Follow the guide below. The goal is simply that the JDK runs correctly on all three servers.

http://www.cnblogs.com/zhoulf/archive/2013/02/04/2891608.html

Be sure to choose the version that matches your server

jdk1.7.0_51

- Install Hadoop

Perform all of the following on master, and pay attention to which account is used.

# Extract hadoop-2.6.0.tar.gz

[root@master src]# tar -zxvf hadoop-2.6.0.tar.gz

# Rename the extracted directory to hadoop

[root@master src]# mv hadoop-2.6.0 hadoop

[root@master src]# ll

总用量 310468

drwxr-xr-x. 2 root root 4096 11月 11 2010 debug

drwxr-xr-x. 9 20000 20000 4096 11月 14 2014 hadoop

-rw-r--r--. 1 root root 195257604 6月 26 2015 hadoop-2.6.0.tar.gz

-rw-r--r--. 1 root root 122639592 9月 26 2014 jdk-7u51-linux-x64.rpm

drwxr-xr-x. 2 root root 4096 11月 11 2010 kernels

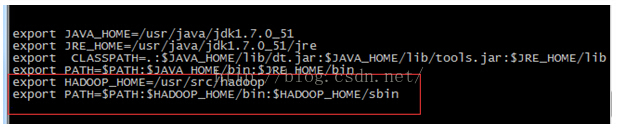

# Configure the Hadoop environment variables (in /etc/profile, which is sourced in a later step)

export JAVA_HOME=/usr/java/jdk1.7.0_51

export JRE_HOME=/usr/java/jdk1.7.0_51/jre

export CLASSPATH=.:$JAVA_HOME/lib/dt.jar:$JAVA_HOME/lib/tools.jar:$JRE_HOME/lib

export PATH=$PATH:$JAVA_HOME/bin:$JRE_HOME/bin

export HADOOP_HOME=/usr/src/hadoop

export PATH=$PATH:$HADOOP_HOME/bin:$HADOOP_HOME/sbin

# Edit hadoop-env.sh

Note: this file is located at <HADOOP_HOME>/etc/hadoop/hadoop-env.sh

# Configure slaves

Note: this file is located at <HADOOP_HOME>/etc/hadoop/slaves
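The contents of these two files are not shown in text. Judging from the start-up output later in this guide (datanodes start on slave1 and slave2) and from the JAVA_HOME value set in yarn-env.sh, they plausibly look like the sketch below. The contents are inferred rather than copied from the original, and `CONF_DIR` is a scratch directory standing in for <HADOOP_HOME>/etc/hadoop.

```shell
#!/usr/bin/env bash
# Sketch: write the two small config edits into a scratch directory.
# ASSUMPTION: contents are inferred from later output, not taken from the original files.
CONF_DIR="$(mktemp -d)"

# slaves: one worker hostname per line.
printf '%s\n' slave1 slave2 > "$CONF_DIR/slaves"

# hadoop-env.sh: point Hadoop at the JDK explicitly.
echo 'export JAVA_HOME=/usr/java/jdk1.7.0_51' >> "$CONF_DIR/hadoop-env.sh"

cat "$CONF_DIR/slaves"
grep JAVA_HOME "$CONF_DIR/hadoop-env.sh"
```

Setting JAVA_HOME explicitly in hadoop-env.sh matters because daemons started over ssh do not necessarily inherit the login shell's environment.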

# Configure core-site.xml

Note: this file is located at <HADOOP_HOME>/etc/hadoop/core-site.xml

[root@master hadoop]# vi core-site.xml

<property>

<name>fs.defaultFS</name>

<value>hdfs://master:9000</value>

</property>

<property>

<name>io.file.buffer.size</name>

<value>131072</value>

</property>

<property>

<name>hadoop.tmp.dir</name>

<value>file:/usr/src/hadoop/tmp</value>

<description>A base for other temporary directories.</description>

</property>

<property>

<name>hadoop.proxyuser.spark.hosts</name>

<value>*</value>

</property>

<property>

<name>hadoop.proxyuser.spark.groups</name>

<value>*</value>

</property>
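The property blocks above are listed without their enclosing element. In a Hadoop site file they sit inside a single `<configuration>` root. The sketch below writes a trimmed core-site.xml (two of the properties above) into a scratch directory to show the overall shape; `CONF_DIR` is a stand-in for <HADOOP_HOME>/etc/hadoop.

```shell
#!/usr/bin/env bash
# Sketch: the overall shape of a site file, written to a scratch directory.
CONF_DIR="$(mktemp -d)"

cat > "$CONF_DIR/core-site.xml" <<'EOF'
<?xml version="1.0" encoding="UTF-8"?>
<configuration>
  <property>
    <name>fs.defaultFS</name>
    <value>hdfs://master:9000</value>
  </property>
  <property>
    <name>hadoop.tmp.dir</name>
    <value>file:/usr/src/hadoop/tmp</value>
  </property>
</configuration>
EOF

# Count the property blocks as a quick sanity check.
grep -c '<property>' "$CONF_DIR/core-site.xml"
```

On a node where Hadoop is already on the PATH, `hdfs getconf -confKey fs.defaultFS` prints the value Hadoop actually picked up, which is a quick way to confirm the file is being read.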

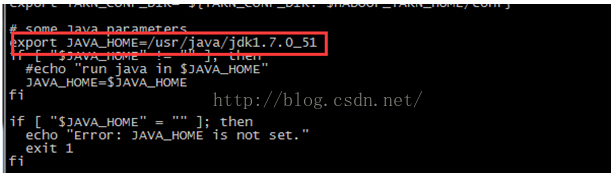

# Configure yarn-env.sh: add the following environment variable near the top

Note: this file is located at <HADOOP_HOME>/etc/hadoop/yarn-env.sh

# some Java parameters

export JAVA_HOME=/usr/java/jdk1.7.0_51

# Configure hdfs-site.xml

Note: this file is located at <HADOOP_HOME>/etc/hadoop/hdfs-site.xml

<property>

<name>dfs.namenode.secondary.http-address</name>

<value>master:9001</value>

</property>

<property>

<name>dfs.namenode.name.dir</name>

<value>file:/usr/src/hadoop/dfs/name</value>

</property>

<property>

<name>dfs.datanode.data.dir</name>

<value>file:/usr/src/hadoop/dfs/data</value>

</property>

<property>

<name>dfs.replication</name>

<value>2</value>

</property>

<property>

<name>dfs.webhdfs.enabled</name>

<value>true</value>

</property>

# Configure mapred-site.xml

Note: this file is located at <HADOOP_HOME>/etc/hadoop/mapred-site.xml

[root@master hadoop]# cp mapred-site.xml.template mapred-site.xml

<property>

<name>mapreduce.framework.name</name>

<value>yarn</value>

</property>

<property>

<name>mapreduce.jobhistory.address</name>

<value>master:10020</value>

</property>

<property>

<name>mapreduce.jobhistory.webapp.address</name>

<value>master:19888</value>

</property>

# Configure yarn-site.xml

Note: this file is located at <HADOOP_HOME>/etc/hadoop/yarn-site.xml

<property>

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle</value>

</property>

<property>

<name>yarn.nodemanager.aux-services.mapreduce_shuffle.class</name>

<value>org.apache.hadoop.mapred.ShuffleHandler</value>

</property>

<property>

<name>yarn.resourcemanager.address</name>

<value>master:8032</value>

</property>

<property>

<name>yarn.resourcemanager.scheduler.address</name>

<value>master:8030</value>

</property>

<property>

<name>yarn.resourcemanager.resource-tracker.address</name>

<value>master:8035</value>

</property>

<property>

<name>yarn.resourcemanager.admin.address</name>

<value>master:8033</value>

</property>

<property>

<name>yarn.resourcemanager.webapp.address</name>

<value>master:8088</value>

</property>

# Give the hadoop user ownership of the hadoop directory

[root@master src]# chown -R hadoop:hadoop hadoop

[root@master src]# ll

总用量 310468

drwxr-xr-x. 2 root root 4096 11月 11 2010 debug

drwxr-xr-x. 9 hadoop hadoop 4096 11月 14 2014 hadoop

-rw-r--r--. 1 root root 195257604 6月 26 2015 hadoop-2.6.0.tar.gz

-rw-r--r--. 1 root root 122639592 9月 26 2014 jdk-7u51-linux-x64.rpm

drwxr-xr-x. 2 root root 4096 11月 11 2010 kernels

[root@master src]#

# Create the tmp directory and give the hadoop user ownership of it

[root@master src]# cd hadoop

[root@master hadoop]# ll

总用量 52

drwxr-xr-x. 2 hadoop hadoop 4096 11月 14 2014 bin

drwxr-xr-x. 3 hadoop hadoop 4096 11月 14 2014 etc

drwxr-xr-x. 2 hadoop hadoop 4096 11月 14 2014 include

drwxr-xr-x. 3 hadoop hadoop 4096 11月 14 2014 lib

drwxr-xr-x. 2 hadoop hadoop 4096 11月 14 2014 libexec

-rw-r--r--. 1 hadoop hadoop 15429 11月 14 2014 LICENSE.txt

-rw-r--r--. 1 hadoop hadoop 101 11月 14 2014 NOTICE.txt

-rw-r--r--. 1 hadoop hadoop 1366 11月 14 2014 README.txt

drwxr-xr-x. 2 hadoop hadoop 4096 11月 14 2014 sbin

drwxr-xr-x. 4 hadoop hadoop 4096 11月 14 2014 share

[root@master hadoop]# mkdir tmp

[root@master hadoop]# ll

总用量 56

drwxr-xr-x. 2 hadoop hadoop 4096 11月 14 2014 bin

drwxr-xr-x. 3 hadoop hadoop 4096 11月 14 2014 etc

drwxr-xr-x. 2 hadoop hadoop 4096 11月 14 2014 include

drwxr-xr-x. 3 hadoop hadoop 4096 11月 14 2014 lib

drwxr-xr-x. 2 hadoop hadoop 4096 11月 14 2014 libexec

-rw-r--r--. 1 hadoop hadoop 15429 11月 14 2014 LICENSE.txt

-rw-r--r--. 1 hadoop hadoop 101 11月 14 2014 NOTICE.txt

-rw-r--r--. 1 hadoop hadoop 1366 11月 14 2014 README.txt

drwxr-xr-x. 2 hadoop hadoop 4096 11月 14 2014 sbin

drwxr-xr-x. 4 hadoop hadoop 4096 11月 14 2014 share

drwxr-xr-x. 2 root root 4096 6月 26 19:17 tmp

[root@master hadoop]# chown -R hadoop:hadoop tmp

[root@master hadoop]# ll

总用量 56

drwxr-xr-x. 2 hadoop hadoop 4096 11月 14 2014 bin

drwxr-xr-x. 3 hadoop hadoop 4096 11月 14 2014 etc

drwxr-xr-x. 2 hadoop hadoop 4096 11月 14 2014 include

drwxr-xr-x. 3 hadoop hadoop 4096 11月 14 2014 lib

drwxr-xr-x. 2 hadoop hadoop 4096 11月 14 2014 libexec

-rw-r--r--. 1 hadoop hadoop 15429 11月 14 2014 LICENSE.txt

-rw-r--r--. 1 hadoop hadoop 101 11月 14 2014 NOTICE.txt

-rw-r--r--. 1 hadoop hadoop 1366 11月 14 2014 README.txt

drwxr-xr-x. 2 hadoop hadoop 4096 11月 14 2014 sbin

drwxr-xr-x. 4 hadoop hadoop 4096 11月 14 2014 share

drwxr-xr-x. 2 hadoop hadoop 4096 6月 26 19:17 tmp

[root@master hadoop]#

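Hadoop is now configured on master. All of the steps above were performed only on master.

Slave1 configuration:

# Copy hadoop from master to /usr/src on slave1. The path matters: core-site.xml references directories under it, so it must be the same on every node.

General form: scp -r /usr/hadoop root@<server IP>:/usr/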

[root@master src]# scp -r hadoop root@slave1:/usr/src

# Copy it to slave2 as well

[root@master src]# scp -r hadoop root@slave2:/usr/src

Both of the steps above are run on master.

# Confirm that hadoop was copied to slave1

[hadoop@slave1 src]$ ll

总用量 119780

drwxr-xr-x. 2 root root 4096 11月 11 2010 debug

drwxr-xr-x. 10 root root 4096 6月 28 03:40 hadoop

-rw-r--r--. 1 root root 122639592 9月 26 2014 jdk-7u51-linux-x64.rpm

drwxr-xr-x. 2 root root 4096 11月 11 2010 kernels

[hadoop@slave1 src]$

# Switch to root and change the owner of the hadoop directory to the hadoop user

[root@slave1 src]# chown -R hadoop:hadoop hadoop

[root@slave1 src]# ll

总用量 119780

drwxr-xr-x. 2 root root 4096 11月 11 2010 debug

drwxr-xr-x. 10 hadoop hadoop 4096 6月 28 03:40 hadoop

-rw-r--r--. 1 root root 122639592 9月 26 2014 jdk-7u51-linux-x64.rpm

drwxr-xr-x. 2 root root 4096 11月 11 2010 kernels

[root@slave1 src]#

# Configure the Hadoop environment variables on slave1

# Apply the environment variables

[root@slave1 src]# source /etc/profile

[root@slave1 src]# echo $HADOOP_HOME

/usr/src/hadoop

[root@slave1 src]#

# Confirm that hadoop was copied to slave2

[hadoop@slave2 src]$ ll

总用量 119780

drwxr-xr-x. 2 root root 4096 11月 11 2010 debug

drwxr-xr-x. 10 root root 4096 6月 27 03:32 hadoop

-rw-r--r--. 1 root root 122639592 9月 26 2014 jdk-7u51-linux-x64.rpm

drwxr-xr-x. 2 root root 4096 11月 11 2010 kernels

[hadoop@slave2 src]$

# Change the owner of the hadoop directory to the hadoop user

[root@slave2 src]# chown -R hadoop:hadoop hadoop

[root@slave2 src]# ll

总用量 119780

drwxr-xr-x. 2 root root 4096 11月 11 2010 debug

drwxr-xr-x. 10 hadoop hadoop 4096 6月 27 03:32 hadoop

-rw-r--r--. 1 root root 122639592 9月 26 2014 jdk-7u51-linux-x64.rpm

drwxr-xr-x. 2 root root 4096 11月 11 2010 kernels

[root@slave2 src]#

# Configure the Hadoop environment variables on slave2

# Apply them and verify

[root@slave2 src]# source /etc/profile

[root@slave2 src]# echo $HADOOP_HOME

/usr/src/hadoop

[root@slave2 src]#

Hadoop is now installed and configured on master, slave1, and slave2.

Finally, make sure the firewall is off on all three servers.

[root@slave2 .ssh]# service iptables stop

Run on master

# Format the namenode

[hadoop@master hadoop]$ hdfs namenode -format

15/06/26 19:38:57 INFO namenode.NameNode: STARTUP_MSG:

/************************************************************

STARTUP_MSG: Starting NameNode

STARTUP_MSG: host = master/192.168.1.20

STARTUP_MSG: args = [-format]

STARTUP_MSG: version = 2.6.0

STARTUP_MSG: classpath = /usr/src/hadoop/etc/hadoop:/usr/src/hadoop/share/hadoop/common/lib/zookeeper-3.4.6.jar:/usr/src/hadoop/share/hadoop/common/lib/jettison-1.1.jar:/usr/src/hadoop/share/hadoop/common/lib/gson-2.2.4.jar:/usr/src/hadoop/share/hadoop/common/lib/api-asn1-api-1.0.0-M20.jar:/usr/src/hadoop/share/hadoop/common/lib/commons-el-1.0.jar:/usr/src/hadoop/share/hadoop/common/lib/activation-1.1.jar:/usr/src/hadoop/share/hadoop/common/lib/stax-api-1.0-2.jar:/usr/src/hadoop/share/hadoop/common/lib/apacheds-kerberos-codec-2.0.0-M15.jar:/usr/src/hadoop/share/hadoop/common/lib/protobuf-java-2.5.0.jar:/usr/src/hadoop/share/hadoop/common/lib/jsr305-1.3.9.jar:/usr/src/hadoop/share/hadoop/common/lib/htrace-core-3.0.4.jar:/usr/src/hadoop/share/hadoop/common/lib/commons-math3-3.1.1.jar:/usr/src/hadoop/share/hadoop/common/lib/jersey-core-1.9.jar:/usr/src/hadoop/share/hadoop/common/lib/commons-configuration-1.6.jar:/usr/src/hadoop/share/hadoop/common/lib/commons-collections-3.2.1.jar:/usr/src/hadoop/share/hadoop/common/lib/hamcrest-core-1.3.jar:/usr/src/hadoop/share/hadoop/common/lib/commons-lang-2.6.jar:/usr/src/hadoop/share/hadoop/common/lib/commons-beanutils-core-1.8.0.jar:/usr/src/hadoop/share/hadoop/common/lib/jetty-util-6.1.26.jar:/usr/src/hadoop/share/hadoop/common/lib/commons-codec-1.4.jar:/usr/src/hadoop/share/hadoop/common/lib/jackson-core-asl-1.9.13.jar:/usr/src/hadoop/share/hadoop/common/lib/commons-net-3.1.jar:/usr/src/hadoop/share/hadoop/common/lib/jsch-0.1.42.jar:/usr/src/hadoop/share/hadoop/common/lib/hadoop-auth-2.6.0.jar:/usr/src/hadoop/share/hadoop/common/lib/commons-digester-1.8.jar:/usr/src/hadoop/share/hadoop/common/lib/apacheds-i18n-2.0.0-M15.jar:/usr/src/hadoop/share/hadoop/common/lib/commons-logging-1.1.3.jar:/usr/src/hadoop/share/hadoop/common/lib/jersey-json-1.9.jar:/usr/src/hadoop/share/hadoop/common/lib/mockito-all-1.8.5.jar:/usr/src/hadoop/share/hadoop/common/lib/servlet-api-2.5.jar:/usr/src/hadoop/share/hadoop/common/lib/curator-client-2.6.0
.jar:/usr/src/hadoop/share/hadoop/common/lib/commons-cli-1.2.jar:/usr/src/hadoop/share/hadoop/common/lib/jaxb-impl-2.2.3-1.jar:/usr/src/hadoop/share/hadoop/common/lib/jetty-6.1.26.jar:/usr/src/hadoop/share/hadoop/common/lib/commons-compress-1.4.1.jar:/usr/src/hadoop/share/hadoop/common/lib/httpclient-4.2.5.jar:/usr/src/hadoop/share/hadoop/common/lib/jackson-jaxrs-1.9.13.jar:/usr/src/hadoop/share/hadoop/common/lib/curator-recipes-2.6.0.jar:/usr/src/hadoop/share/hadoop/common/lib/commons-httpclient-3.1.jar:/usr/src/hadoop/share/hadoop/common/lib/jaxb-api-2.2.2.jar:/usr/src/hadoop/share/hadoop/common/lib/paranamer-2.3.jar:/usr/src/hadoop/share/hadoop/common/lib/jsp-api-2.1.jar:/usr/src/hadoop/share/hadoop/common/lib/netty-3.6.2.Final.jar:/usr/src/hadoop/share/hadoop/common/lib/commons-beanutils-1.7.0.jar:/usr/src/hadoop/share/hadoop/common/lib/xz-1.0.jar:/usr/src/hadoop/share/hadoop/common/lib/hadoop-annotations-2.6.0.jar:/usr/src/hadoop/share/hadoop/common/lib/asm-3.2.jar:/usr/src/hadoop/share/hadoop/common/lib/curator-framework-2.6.0.jar:/usr/src/hadoop/share/hadoop/common/lib/httpcore-4.2.5.jar:/usr/src/hadoop/share/hadoop/common/lib/java-xmlbuilder-0.4.jar:/usr/src/hadoop/share/hadoop/common/lib/avro-1.7.4.jar:/usr/src/hadoop/share/hadoop/common/lib/junit-4.11.jar:/usr/src/hadoop/share/hadoop/common/lib/jackson-mapper-asl-1.9.13.jar:/usr/src/hadoop/share/hadoop/common/lib/commons-io-2.4.jar:/usr/src/hadoop/share/hadoop/common/lib/guava-11.0.2.jar:/usr/src/hadoop/share/hadoop/common/lib/slf4j-api-1.7.5.jar:/usr/src/hadoop/share/hadoop/common/lib/jackson-xc-1.9.13.jar:/usr/src/hadoop/share/hadoop/common/lib/jersey-server-1.9.jar:/usr/src/hadoop/share/hadoop/common/lib/jets3t-0.9.0.jar:/usr/src/hadoop/share/hadoop/common/lib/slf4j-log4j12-1.7.5.jar:/usr/src/hadoop/share/hadoop/common/lib/log4j-1.2.17.jar:/usr/src/hadoop/share/hadoop/common/lib/jasper-runtime-5.5.23.jar:/usr/src/hadoop/share/hadoop/common/lib/api-util-1.0.0-M20.jar:/usr/src/hadoop/share/hadoop/common/l
ib/snappy-java-1.0.4.1.jar:/usr/src/hadoop/share/hadoop/common/lib/xmlenc-0.52.jar:/usr/src/hadoop/share/hadoop/common/lib/jasper-compiler-5.5.23.jar:/usr/src/hadoop/share/hadoop/common/hadoop-common-2.6.0-tests.jar:/usr/src/hadoop/share/hadoop/common/hadoop-nfs-2.6.0.jar:/usr/src/hadoop/share/hadoop/common/hadoop-common-2.6.0.jar:/usr/src/hadoop/share/hadoop/hdfs:/usr/src/hadoop/share/hadoop/hdfs/lib/commons-el-1.0.jar:/usr/src/hadoop/share/hadoop/hdfs/lib/protobuf-java-2.5.0.jar:/usr/src/hadoop/share/hadoop/hdfs/lib/jsr305-1.3.9.jar:/usr/src/hadoop/share/hadoop/hdfs/lib/xml-apis-1.3.04.jar:/usr/src/hadoop/share/hadoop/hdfs/lib/htrace-core-3.0.4.jar:/usr/src/hadoop/share/hadoop/hdfs/lib/jersey-core-1.9.jar:/usr/src/hadoop/share/hadoop/hdfs/lib/commons-lang-2.6.jar:/usr/src/hadoop/share/hadoop/hdfs/lib/jetty-util-6.1.26.jar:/usr/src/hadoop/share/hadoop/hdfs/lib/commons-codec-1.4.jar:/usr/src/hadoop/share/hadoop/hdfs/lib/jackson-core-asl-1.9.13.jar:/usr/src/hadoop/share/hadoop/hdfs/lib/commons-daemon-1.0.13.jar:/usr/src/hadoop/share/hadoop/hdfs/lib/commons-logging-1.1.3.jar:/usr/src/hadoop/share/hadoop/hdfs/lib/servlet-api-2.5.jar:/usr/src/hadoop/share/hadoop/hdfs/lib/xercesImpl-2.9.1.jar:/usr/src/hadoop/share/hadoop/hdfs/lib/commons-cli-1.2.jar:/usr/src/hadoop/share/hadoop/hdfs/lib/jetty-6.1.26.jar:/usr/src/hadoop/share/hadoop/hdfs/lib/jsp-api-2.1.jar:/usr/src/hadoop/share/hadoop/hdfs/lib/netty-3.6.2.Final.jar:/usr/src/hadoop/share/hadoop/hdfs/lib/asm-3.2.jar:/usr/src/hadoop/share/hadoop/hdfs/lib/jackson-mapper-asl-1.9.13.jar:/usr/src/hadoop/share/hadoop/hdfs/lib/commons-io-2.4.jar:/usr/src/hadoop/share/hadoop/hdfs/lib/guava-11.0.2.jar:/usr/src/hadoop/share/hadoop/hdfs/lib/jersey-server-1.9.jar:/usr/src/hadoop/share/hadoop/hdfs/lib/log4j-1.2.17.jar:/usr/src/hadoop/share/hadoop/hdfs/lib/jasper-runtime-5.5.23.jar:/usr/src/hadoop/share/hadoop/hdfs/lib/xmlenc-0.52.jar:/usr/src/hadoop/share/hadoop/hdfs/hadoop-hdfs-2.6.0.jar:/usr/src/hadoop/share/hadoop/hdfs/hadoop-hdfs-2
.6.0-tests.jar:/usr/src/hadoop/share/hadoop/hdfs/hadoop-hdfs-nfs-2.6.0.jar:/usr/src/hadoop/share/hadoop/yarn/lib/jersey-guice-1.9.jar:/usr/src/hadoop/share/hadoop/yarn/lib/zookeeper-3.4.6.jar:/usr/src/hadoop/share/hadoop/yarn/lib/jettison-1.1.jar:/usr/src/hadoop/share/hadoop/yarn/lib/activation-1.1.jar:/usr/src/hadoop/share/hadoop/yarn/lib/stax-api-1.0-2.jar:/usr/src/hadoop/share/hadoop/yarn/lib/protobuf-java-2.5.0.jar:/usr/src/hadoop/share/hadoop/yarn/lib/jsr305-1.3.9.jar:/usr/src/hadoop/share/hadoop/yarn/lib/guice-servlet-3.0.jar:/usr/src/hadoop/share/hadoop/yarn/lib/jersey-core-1.9.jar:/usr/src/hadoop/share/hadoop/yarn/lib/commons-collections-3.2.1.jar:/usr/src/hadoop/share/hadoop/yarn/lib/commons-lang-2.6.jar:/usr/src/hadoop/share/hadoop/yarn/lib/jetty-util-6.1.26.jar:/usr/src/hadoop/share/hadoop/yarn/lib/commons-codec-1.4.jar:/usr/src/hadoop/share/hadoop/yarn/lib/jackson-core-asl-1.9.13.jar:/usr/src/hadoop/share/hadoop/yarn/lib/aopalliance-1.0.jar:/usr/src/hadoop/share/hadoop/yarn/lib/commons-logging-1.1.3.jar:/usr/src/hadoop/share/hadoop/yarn/lib/jersey-json-1.9.jar:/usr/src/hadoop/share/hadoop/yarn/lib/servlet-api-2.5.jar:/usr/src/hadoop/share/hadoop/yarn/lib/commons-cli-1.2.jar:/usr/src/hadoop/share/hadoop/yarn/lib/jaxb-impl-2.2.3-1.jar:/usr/src/hadoop/share/hadoop/yarn/lib/jetty-6.1.26.jar:/usr/src/hadoop/share/hadoop/yarn/lib/commons-compress-1.4.1.jar:/usr/src/hadoop/share/hadoop/yarn/lib/jackson-jaxrs-1.9.13.jar:/usr/src/hadoop/share/hadoop/yarn/lib/guice-3.0.jar:/usr/src/hadoop/share/hadoop/yarn/lib/commons-httpclient-3.1.jar:/usr/src/hadoop/share/hadoop/yarn/lib/jaxb-api-2.2.2.jar:/usr/src/hadoop/share/hadoop/yarn/lib/javax.inject-1.jar:/usr/src/hadoop/share/hadoop/yarn/lib/leveldbjni-all-1.8.jar:/usr/src/hadoop/share/hadoop/yarn/lib/netty-3.6.2.Final.jar:/usr/src/hadoop/share/hadoop/yarn/lib/xz-1.0.jar:/usr/src/hadoop/share/hadoop/yarn/lib/asm-3.2.jar:/usr/src/hadoop/share/hadoop/yarn/lib/jackson-mapper-asl-1.9.13.jar:/usr/src/hadoop/share/hadoop/yarn
/lib/commons-io-2.4.jar:/usr/src/hadoop/share/hadoop/yarn/lib/guava-11.0.2.jar:/usr/src/hadoop/share/hadoop/yarn/lib/jackson-xc-1.9.13.jar:/usr/src/hadoop/share/hadoop/yarn/lib/jersey-server-1.9.jar:/usr/src/hadoop/share/hadoop/yarn/lib/log4j-1.2.17.jar:/usr/src/hadoop/share/hadoop/yarn/lib/jline-0.9.94.jar:/usr/src/hadoop/share/hadoop/yarn/lib/jersey-client-1.9.jar:/usr/src/hadoop/share/hadoop/yarn/hadoop-yarn-applications-unmanaged-am-launcher-2.6.0.jar:/usr/src/hadoop/share/hadoop/yarn/hadoop-yarn-registry-2.6.0.jar:/usr/src/hadoop/share/hadoop/yarn/hadoop-yarn-server-common-2.6.0.jar:/usr/src/hadoop/share/hadoop/yarn/hadoop-yarn-api-2.6.0.jar:/usr/src/hadoop/share/hadoop/yarn/hadoop-yarn-server-web-proxy-2.6.0.jar:/usr/src/hadoop/share/hadoop/yarn/hadoop-yarn-client-2.6.0.jar:/usr/src/hadoop/share/hadoop/yarn/hadoop-yarn-applications-distributedshell-2.6.0.jar:/usr/src/hadoop/share/hadoop/yarn/hadoop-yarn-common-2.6.0.jar:/usr/src/hadoop/share/hadoop/yarn/hadoop-yarn-server-tests-2.6.0.jar:/usr/src/hadoop/share/hadoop/yarn/hadoop-yarn-server-applicationhistoryservice-2.6.0.jar:/usr/src/hadoop/share/hadoop/yarn/hadoop-yarn-server-resourcemanager-2.6.0.jar:/usr/src/hadoop/share/hadoop/yarn/hadoop-yarn-server-nodemanager-2.6.0.jar:/usr/src/hadoop/share/hadoop/mapreduce/lib/jersey-guice-1.9.jar:/usr/src/hadoop/share/hadoop/mapreduce/lib/protobuf-java-2.5.0.jar:/usr/src/hadoop/share/hadoop/mapreduce/lib/guice-servlet-3.0.jar:/usr/src/hadoop/share/hadoop/mapreduce/lib/jersey-core-1.9.jar:/usr/src/hadoop/share/hadoop/mapreduce/lib/hamcrest-core-1.3.jar:/usr/src/hadoop/share/hadoop/mapreduce/lib/jackson-core-asl-1.9.13.jar:/usr/src/hadoop/share/hadoop/mapreduce/lib/aopalliance-1.0.jar:/usr/src/hadoop/share/hadoop/mapreduce/lib/commons-compress-1.4.1.jar:/usr/src/hadoop/share/hadoop/mapreduce/lib/guice-3.0.jar:/usr/src/hadoop/share/hadoop/mapreduce/lib/javax.inject-1.jar:/usr/src/hadoop/share/hadoop/mapreduce/lib/paranamer-2.3.jar:/usr/src/hadoop/share/hadoop/mapreduce/l
ib/leveldbjni-all-1.8.jar:/usr/src/hadoop/share/hadoop/mapreduce/lib/netty-3.6.2.Final.jar:/usr/src/hadoop/share/hadoop/mapreduce/lib/xz-1.0.jar:/usr/src/hadoop/share/hadoop/mapreduce/lib/hadoop-annotations-2.6.0.jar:/usr/src/hadoop/share/hadoop/mapreduce/lib/asm-3.2.jar:/usr/src/hadoop/share/hadoop/mapreduce/lib/avro-1.7.4.jar:/usr/src/hadoop/share/hadoop/mapreduce/lib/junit-4.11.jar:/usr/src/hadoop/share/hadoop/mapreduce/lib/jackson-mapper-asl-1.9.13.jar:/usr/src/hadoop/share/hadoop/mapreduce/lib/commons-io-2.4.jar:/usr/src/hadoop/share/hadoop/mapreduce/lib/jersey-server-1.9.jar:/usr/src/hadoop/share/hadoop/mapreduce/lib/log4j-1.2.17.jar:/usr/src/hadoop/share/hadoop/mapreduce/lib/snappy-java-1.0.4.1.jar:/usr/src/hadoop/share/hadoop/mapreduce/hadoop-mapreduce-client-jobclient-2.6.0.jar:/usr/src/hadoop/share/hadoop/mapreduce/hadoop-mapreduce-client-shuffle-2.6.0.jar:/usr/src/hadoop/share/hadoop/mapreduce/hadoop-mapreduce-client-hs-plugins-2.6.0.jar:/usr/src/hadoop/share/hadoop/mapreduce/hadoop-mapreduce-client-jobclient-2.6.0-tests.jar:/usr/src/hadoop/share/hadoop/mapreduce/hadoop-mapreduce-client-common-2.6.0.jar:/usr/src/hadoop/share/hadoop/mapreduce/hadoop-mapreduce-client-app-2.6.0.jar:/usr/src/hadoop/share/hadoop/mapreduce/hadoop-mapreduce-client-core-2.6.0.jar:/usr/src/hadoop/share/hadoop/mapreduce/hadoop-mapreduce-client-hs-2.6.0.jar:/usr/src/hadoop/share/hadoop/mapreduce/hadoop-mapreduce-examples-2.6.0.jar:/usr/src/hadoop/contrib/capacity-scheduler/*.jar

STARTUP_MSG: build = https://git-wip-us.apache.org/repos/asf/hadoop.git -r e3496499ecb8d220fba99dc5ed4c99c8f9e33bb1; compiled by 'jenkins' on 2014-11-13T21:10Z

STARTUP_MSG: java = 1.7.0_51

************************************************************/

15/06/26 19:38:57 INFO namenode.NameNode: registered UNIX signal handlers for [TERM, HUP, INT]

15/06/26 19:38:57 INFO namenode.NameNode: createNameNode [-format]

15/06/26 19:38:59 WARN util.NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

Formatting using clusterid: CID-fcc57b68-258e-4678-a20d-0798a1583185

15/06/26 19:39:01 INFO namenode.FSNamesystem: No KeyProvider found.

15/06/26 19:39:01 INFO namenode.FSNamesystem: fsLock is fair:true

15/06/26 19:39:01 INFO blockmanagement.DatanodeManager: dfs.block.invalidate.limit=1000

15/06/26 19:39:01 INFO blockmanagement.DatanodeManager: dfs.namenode.datanode.registration.ip-hostname-check=true

15/06/26 19:39:01 INFO blockmanagement.BlockManager: dfs.namenode.startup.delay.block.deletion.sec is set to 000:00:00:00.000

15/06/26 19:39:01 INFO blockmanagement.BlockManager: The block deletion will start around 2015 六月 26 19:39:01

15/06/26 19:39:01 INFO util.GSet: Computing capacity for map BlocksMap

15/06/26 19:39:01 INFO util.GSet: VM type = 64-bit

15/06/26 19:39:01 INFO util.GSet: 2.0% max memory 966.7 MB = 19.3 MB

15/06/26 19:39:01 INFO util.GSet: capacity = 2^21 = 2097152 entries

15/06/26 19:39:01 INFO blockmanagement.BlockManager: dfs.block.access.token.enable=false

15/06/26 19:39:01 INFO blockmanagement.BlockManager: defaultReplication = 2

15/06/26 19:39:01 INFO blockmanagement.BlockManager: maxReplication = 512

15/06/26 19:39:01 INFO blockmanagement.BlockManager: minReplication = 1

15/06/26 19:39:01 INFO blockmanagement.BlockManager: maxReplicationStreams = 2

15/06/26 19:39:01 INFO blockmanagement.BlockManager: shouldCheckForEnoughRacks = false

15/06/26 19:39:01 INFO blockmanagement.BlockManager: replicationRecheckInterval = 3000

15/06/26 19:39:01 INFO blockmanagement.BlockManager: encryptDataTransfer = false

15/06/26 19:39:01 INFO blockmanagement.BlockManager: maxNumBlocksToLog = 1000

15/06/26 19:39:01 INFO namenode.FSNamesystem: fsOwner = hadoop (auth:SIMPLE)

15/06/26 19:39:01 INFO namenode.FSNamesystem: supergroup = supergroup

15/06/26 19:39:01 INFO namenode.FSNamesystem: isPermissionEnabled = true

15/06/26 19:39:01 INFO namenode.FSNamesystem: Determined nameservice ID: hadoop-cluster1

15/06/26 19:39:01 INFO namenode.FSNamesystem: HA Enabled: false

15/06/26 19:39:01 INFO namenode.FSNamesystem: Append Enabled: true

15/06/26 19:39:02 INFO util.GSet: Computing capacity for map INodeMap

15/06/26 19:39:02 INFO util.GSet: VM type = 64-bit

15/06/26 19:39:02 INFO util.GSet: 1.0% max memory 966.7 MB = 9.7 MB

15/06/26 19:39:02 INFO util.GSet: capacity = 2^20 = 1048576 entries

15/06/26 19:39:02 INFO namenode.NameNode: Caching file names occuring more than 10 times

15/06/26 19:39:02 INFO util.GSet: Computing capacity for map cachedBlocks

15/06/26 19:39:02 INFO util.GSet: VM type = 64-bit

15/06/26 19:39:02 INFO util.GSet: 0.25% max memory 966.7 MB = 2.4 MB

15/06/26 19:39:02 INFO util.GSet: capacity = 2^18 = 262144 entries

15/06/26 19:39:02 INFO namenode.FSNamesystem: dfs.namenode.safemode.threshold-pct = 0.9990000128746033

15/06/26 19:39:02 INFO namenode.FSNamesystem: dfs.namenode.safemode.min.datanodes = 0

15/06/26 19:39:02 INFO namenode.FSNamesystem: dfs.namenode.safemode.extension = 30000

15/06/26 19:39:02 INFO namenode.FSNamesystem: Retry cache on namenode is enabled

15/06/26 19:39:02 INFO namenode.FSNamesystem: Retry cache will use 0.03 of total heap and retry cache entry expiry time is 600000 millis

15/06/26 19:39:02 INFO util.GSet: Computing capacity for map NameNodeRetryCache

15/06/26 19:39:02 INFO util.GSet: VM type = 64-bit

15/06/26 19:39:02 INFO util.GSet: 0.029999999329447746% max memory 966.7 MB = 297.0 KB

15/06/26 19:39:02 INFO util.GSet: capacity = 2^15 = 32768 entries

15/06/26 19:39:02 INFO namenode.NNConf: ACLs enabled? false

15/06/26 19:39:02 INFO namenode.NNConf: XAttrs enabled? true

15/06/26 19:39:02 INFO namenode.NNConf: Maximum size of an xattr: 16384

15/06/26 19:39:02 INFO namenode.FSImage: Allocated new BlockPoolId: BP-1680202825-192.168.1.20-1435318742720

15/06/26 19:39:02 INFO common.Storage: Storage directory /usr/src/hadoop/dfs/name has been successfully formatted.

15/06/26 19:39:03 INFO namenode.NNStorageRetentionManager: Going to retain 1 images with txid >= 0

15/06/26 19:39:03 INFO util.ExitUtil: Exiting with status 0

15/06/26 19:39:03 INFO namenode.NameNode: SHUTDOWN_MSG:

/************************************************************

SHUTDOWN_MSG: Shutting down NameNode at master/192.168.1.20

************************************************************/

[hadoop@master hadoop]$

# Start all services


[hadoop@master hadoop]$ start-all.sh

This script is Deprecated. Instead use start-dfs.sh and start-yarn.sh

15/06/26 20:19:18 WARN util.NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

Starting namenodes on [master]

master: starting namenode, logging to /usr/src/hadoop/logs/hadoop-hadoop-namenode-master.out

slave2: starting datanode, logging to /usr/src/hadoop/logs/hadoop-hadoop-datanode-slave2.out

slave1: starting datanode, logging to /usr/src/hadoop/logs/hadoop-hadoop-datanode-slave1.out

Starting secondary namenodes [master]

master: starting secondarynamenode, logging to /usr/src/hadoop/logs/hadoop-hadoop-secondarynamenode-master.out

15/06/26 20:19:47 WARN util.NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

starting yarn daemons

starting resourcemanager, logging to /usr/src/hadoop/logs/yarn-hadoop-resourcemanager-master.out

slave1: starting nodemanager, logging to /usr/src/hadoop/logs/yarn-hadoop-nodemanager-slave1.out

slave2: starting nodemanager, logging to /usr/src/hadoop/logs/yarn-hadoop-nodemanager-slave2.out

[hadoop@master hadoop]$ jps

30406 SecondaryNameNode

30803 Jps

30224 NameNode

30546 ResourceManager

Verify on slave1

[hadoop@master hadoop]$ ssh slave1

Last login: Sun Jun 28 04:36:22 2015 from master

[hadoop@slave1 ~]$ jps

27615 DataNode

27849 Jps

27716 NodeManager

[hadoop@slave1 ~]$

Verify on slave2

[hadoop@master hadoop]$ ssh slave2

Last login: Sat Jun 27 04:26:30 2015 from master

[hadoop@slave2 ~]$ jps

4605 DataNode

4704 NodeManager

4837 Jps

[hadoop@slave2 ~]$
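The jps checks above can be automated with a small filter. This is a sketch under stated assumptions: `missing_daemons` is a hypothetical helper that reads jps-style "PID Name" lines on standard input, and the sample is the master output recorded above.

```shell
#!/usr/bin/env bash
# Sketch: report which expected daemon names are absent from jps-style output.
# missing_daemons is a hypothetical helper; it reads "PID Name" lines on stdin.

# missing_daemons NAME...: print each NAME not found in the input, one per line.
missing_daemons() {
    local listing name
    listing="$(cat)"
    for name in "$@"; do
        # Compare against the second column exactly, so NameNode does not match SecondaryNameNode.
        if ! printf '%s\n' "$listing" | awk -v n="$name" '$2 == n { found = 1 } END { exit !found }'; then
            printf '%s\n' "$name"
        fi
    done
}

# Sample taken from the master output shown above.
MASTER_JPS='30406 SecondaryNameNode
30803 Jps
30224 NameNode
30546 ResourceManager'

MISSING="$(printf '%s\n' "$MASTER_JPS" | missing_daemons NameNode SecondaryNameNode ResourceManager)"
if [ -z "$MISSING" ]; then
    echo "all expected daemons are running"
else
    echo "missing: $MISSING"
fi
```

On a live node the input would come from jps itself, for example `jps | missing_daemons DataNode NodeManager` on a slave.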

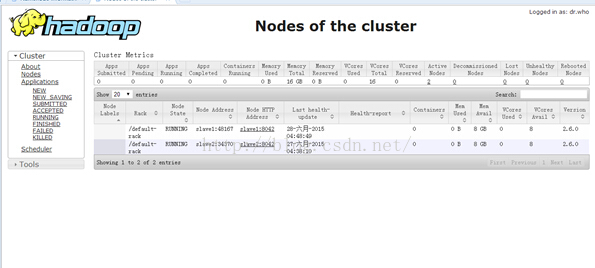

Check the cluster status

Connection to slave2 closed.

[hadoop@master hadoop]$ hdfs dfsadmin -report

15/06/26 20:21:12 WARN util.NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

Configured Capacity: 37073182720 (34.53 GB)

Present Capacity: 27540463616 (25.65 GB)

DFS Remaining: 27540414464 (25.65 GB)

DFS Used: 49152 (48 KB)

DFS Used%: 0.00%

Under replicated blocks: 0

Blocks with corrupt replicas: 0

Missing blocks: 0

-------------------------------------------------

Live datanodes (2):

Name: 192.168.1.22:50010 (slave2)

Hostname: slave2

Decommission Status : Normal

Configured Capacity: 18536591360 (17.26 GB)

DFS Used: 24576 (24 KB)

Non DFS Used: 4712751104 (4.39 GB)

DFS Remaining: 13823815680 (12.87 GB)

DFS Used%: 0.00%

DFS Remaining%: 74.58%

Configured Cache Capacity: 0 (0 B)

Cache Used: 0 (0 B)

Cache Remaining: 0 (0 B)

Cache Used%: 100.00%

Cache Remaining%: 0.00%

Xceivers: 1

Last contact: Fri Jun 26 20:21:13 CST 2015

Name: 192.168.1.21:50010 (slave1)

Hostname: slave1

Decommission Status : Normal

Configured Capacity: 18536591360 (17.26 GB)

DFS Used: 24576 (24 KB)

Non DFS Used: 4819968000 (4.49 GB)

DFS Remaining: 13716598784 (12.77 GB)

DFS Used%: 0.00%

DFS Remaining%: 74.00%

Configured Cache Capacity: 0 (0 B)

Cache Used: 0 (0 B)

Cache Remaining: 0 (0 B)

Cache Used%: 100.00%

Cache Remaining%: 0.00%

Xceivers: 1

Last contact: Fri Jun 26 20:21:14 CST 2015

[hadoop@master hadoop]$
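For a quick health check it is often enough to read a single number from this report. The sketch below assumes the report keeps the `Live datanodes (N):` line format shown in the transcript above; `live_datanodes` is a hypothetical helper name, not a Hadoop command.

```shell
# Extract N from the "Live datanodes (N):" line of a dfsadmin report on stdin.
# live_datanodes is a hypothetical helper, not a Hadoop command.
live_datanodes() {
    sed -n 's/^Live datanodes (\([0-9][0-9]*\)):.*$/\1/p'
}

# Sample line copied from the report above
printf 'Live datanodes (2):\n' | live_datanodes   # prints 2
```

On the cluster it could be used as `hdfs dfsadmin -report 2>/dev/null | live_datanodes`; for this setup the expected value is 2, one per slave.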

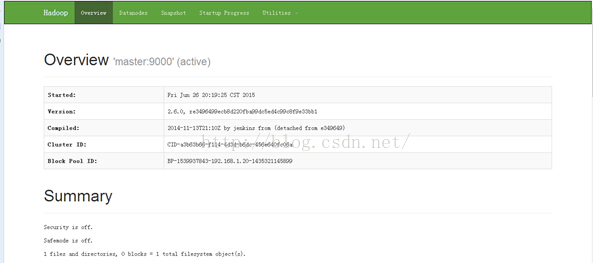

The Hadoop cluster is now set up.

Add the three servers to the hosts file on your local machine:

192.168.1.20 master

192.168.1.21 slave1

192.168.1.22 slave2
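To confirm that the three entries are in place, a small check such as the one below may help. It is only a sketch: `missing_hosts` is a hypothetical helper name, and the usage shown afterwards assumes a Linux or macOS workstation where the hosts file is `/etc/hosts`.

```shell
# Print each hostname that has no entry in the given hosts file.
# missing_hosts is a hypothetical helper written for this example.
missing_hosts() {
    hosts_file=$1
    shift
    for name in "$@"; do
        # Skip comment lines, then look for the name among the fields after the IP
        if ! awk -v n="$name" '
            /^[[:space:]]*#/ { next }
            { for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
            END { exit !found }' "$hosts_file"; then
            printf '%s\n' "$name"
        fi
    done
}
```

Running `missing_hosts /etc/hosts master slave1 slave2` prints nothing when all three names are configured.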

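The web management interface on master is now reachable from the local machine.

Open http://master:50070/ in a browser to view the status of the Hadoop cluster.

Run the wordcount example program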

[hadoop@master hadoop]$ hdfs dfs -ls /

15/06/26 20:37:49 WARN util.NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

[hadoop@master hadoop]$ hdfs dfs -mkdir /input

15/06/26 20:38:44 WARN util.NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

[hadoop@master hadoop]$ hdfs dfs -ls /

15/06/26 20:38:50 WARN util.NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

Found 1 items

drwxr-xr-x - hadoop supergroup 0 2015-06-26 20:38 /input

Create three text files on the local filesystem, 11.txt, 12.txt and 13.txt, with the following contents:

11.txt: hello world!

12.txt: hello hadoop

13.txt: hello JD.com
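One way to create these files from the shell is sketched below. `make_samples` is a hypothetical helper name; it writes the three files into the directory given as its argument, or into the current directory when no argument is given.

```shell
# Write the three sample files into the given directory (default: current directory).
# make_samples is a hypothetical helper written for this example.
make_samples() {
    dir=${1:-.}
    printf 'hello world!\n' > "$dir/11.txt"
    printf 'hello hadoop\n' > "$dir/12.txt"
    printf 'hello JD.com\n' > "$dir/13.txt"
}
```

Calling `make_samples /usr/src` as a user with write access to that directory would place the files where the listing below shows them.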

[root@master src]# su hadoop

[hadoop@master src]$ ll

总用量 310480

-rw-r--r--. 1 root root 13 6月 26 20:40 11.txt

-rw-r--r--. 1 root root 14 6月 26 20:41 12.txt

-rw-r--r--. 1 root root 13 6月 26 20:41 13.txt

drwxr-xr-x. 2 root root 4096 11月 11 2010 debug

drwxr-xr-x. 12 hadoop hadoop 4096 6月 26 20:15 hadoop

-rw-r--r--. 1 root root 195257604 6月 26 2015 hadoop-2.6.0.tar.gz

-rw-r--r--. 1 root root 122639592 9月 26 2014 jdk-7u51-linux-x64.rpm

drwxr-xr-x. 2 root root 4096 11月 11 2010 kernels

[hadoop@master src]$

Upload the local text files to the HDFS file system

[hadoop@master src]$ hdfs dfs -put 1*.txt /input

15/06/26 20:43:04 WARN util.NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

[hadoop@master src]$ hdfs dfs -ls /input

15/06/26 20:43:18 WARN util.NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

Found 3 items

-rw-r--r-- 2 hadoop supergroup 13 2015-06-26 20:43 /input/11.txt

-rw-r--r-- 2 hadoop supergroup 14 2015-06-26 20:43 /input/12.txt

-rw-r--r-- 2 hadoop supergroup 13 2015-06-26 20:43 /input/13.txt

[hadoop@master hadoop]$ hadoop jar share/hadoop/mapreduce/hadoop-mapreduce-examples-2.6.0.jar wordcount /input /out

View the word count results:

[hadoop@master hadoop]$ hdfs dfs -ls /out

15/06/27 18:18:20 WARN util.NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

Found 1 items

-rw-r--r-- 2 hadoop supergroup 35 2015-06-27 18:18 /out/part-r-00000

[hadoop@master hadoop]$ hdfs dfs -cat /out/part-r-00000

15/06/27 18:18:40 WARN util.NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

JD.com 1

hadoop 1

hello 3

world! 1

[hadoop@master hadoop]$
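The result can be cross-checked against the local copies of the input with standard Unix tools. The pipeline below is a sketch, not the MapReduce implementation: it assumes words are separated by whitespace only and sorts in byte order, which should match the ordering of the job output above. `local_wordcount` is a hypothetical helper name.

```shell
# Count whitespace-separated words from the given files (or stdin) and
# print "word<TAB>count" lines sorted in byte order.
# local_wordcount is a hypothetical helper written for this example.
local_wordcount() {
    cat "$@" |
        tr -s '[:space:]' '\n' |   # one word per line
        grep -v '^$' |             # drop empty lines
        LC_ALL=C sort |            # byte order: uppercase sorts before lowercase
        uniq -c |                  # "<count> <word>"
        awk '{ print $2 "\t" $1 }'
}

# The three sample lines from 11.txt, 12.txt and 13.txt;
# prints the same four lines as /out/part-r-00000 above
printf 'hello world!\nhello hadoop\nhello JD.com\n' | local_wordcount
```

With the files still on disk, `local_wordcount 11.txt 12.txt 13.txt` can be compared with the output of `hdfs dfs -cat /out/part-r-00000`.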

Stop the services

[hadoop@master hadoop]$ stop-all.sh

This script is Deprecated. Instead use stop-dfs.sh and stop-yarn.sh

15/06/27 18:19:37 WARN util.NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

Stopping namenodes on [master]

master: stopping namenode

slave2: stopping datanode

slave1: stopping datanode

Stopping secondary namenodes [master]

master: stopping secondarynamenode

15/06/27 18:20:08 WARN util.NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

stopping yarn daemons

stopping resourcemanager

slave2: stopping nodemanager

slave1: stopping nodemanager

slave2: nodemanager did not stop gracefully after 5 seconds: killing with kill -9

slave1: nodemanager did not stop gracefully after 5 seconds: killing with kill -9

no proxyserver to stop

[hadoop@master hadoop]$
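The deprecation notice printed by stop-all.sh names its replacements, stop-dfs.sh and stop-yarn.sh. A minimal wrapper that calls them in the same order as the transcript above (HDFS first, then YARN) could look like the sketch below. `stop_cluster` and the `RUNNER` variable are hypothetical names introduced here for illustration; setting `RUNNER=echo` prints the commands instead of running them.

```shell
# Stop HDFS, then YARN, using the two scripts named in the deprecation notice.
# RUNNER is a hypothetical dry-run hook: RUNNER=echo prints the commands only.
stop_cluster() {
    ${RUNNER:-} stop-dfs.sh &&
        ${RUNNER:-} stop-yarn.sh
}
```

A dry run with `RUNNER=echo stop_cluster` shows the two script names without touching the cluster; calling `stop_cluster` on master with the Hadoop sbin directory on the PATH would run them for real.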

The Hadoop cluster environment has now been tested.
