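User-based collaborative filtering is one of the oldest algorithms in recommender systems, and its idea is very direct: find users whose preferences resemble those of a given user, and recommend what those users like.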

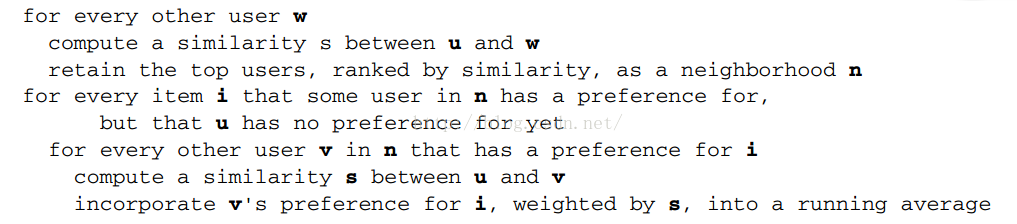

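The concrete flow is as follows:

Here u denotes a user. The flow above is the most naive form of user-based recommendation, but it is too inefficient in practice. The user-based flow actually used is:

The main difference is that the set of similar users is found first, and only the items associated with those similar users become the candidate set.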
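The neighborhood-first flow described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the ratings in `prefs` are made up, the helpers `pearson` and `recommend` are hypothetical names, and neighbors with an undefined or non-positive similarity are simply skipped, which is a simplification and not necessarily what Mahout does.

```python
from math import sqrt

# Made-up ratings for illustration only: user -> {item: preference}.
prefs = {
    'u1': {'A': 5.0, 'B': 3.0, 'C': 2.5},
    'u2': {'A': 2.0, 'B': 2.5, 'C': 5.0, 'D': 2.0},
    'u3': {'A': 2.5},
    'u4': {'A': 5.0, 'C': 3.0, 'D': 4.5, 'F': 4.0},
    'u5': {'A': 4.0, 'B': 3.0, 'C': 2.0, 'D': 4.0, 'E': 3.5},
}


def pearson(prefs, a, b):
    """Pearson correlation over co-rated items; None when it is undefined."""
    common = [i for i in prefs[a] if i in prefs[b]]
    n = len(common)
    if n == 0:
        return None
    mean_a = sum(prefs[a][i] for i in common) / n
    mean_b = sum(prefs[b][i] for i in common) / n
    num = sum((prefs[a][i] - mean_a) * (prefs[b][i] - mean_b) for i in common)
    den = sqrt(sum((prefs[a][i] - mean_a) ** 2 for i in common)
               * sum((prefs[b][i] - mean_b) ** 2 for i in common))
    return num / den if den else None


def recommend(prefs, u, n_neighbors=2, how_many=3):
    # Step 1: find the set of similar users (the neighborhood).
    sims = {}
    for v in prefs:
        if v != u:
            s = pearson(prefs, u, v)
            if s is not None and s > 0:  # simplification: drop unusable neighbors
                sims[v] = s
    neighbors = sorted(sims, key=sims.get, reverse=True)[:n_neighbors]

    # Step 2: candidate set = items the neighbors rated but u has not.
    candidates = {i for v in neighbors for i in prefs[v] if i not in prefs[u]}

    # Step 3: score each candidate by a similarity-weighted average.
    scores = {}
    for i in candidates:
        raters = [v for v in neighbors if i in prefs[v]]
        total = sum(sims[v] for v in raters)
        scores[i] = sum(sims[v] * prefs[v][i] for v in raters) / total
    return sorted(scores.items(), key=lambda kv: kv[1], reverse=True)[:how_many]


recs = recommend(prefs, 'u1')
print(recs)
```

Similarities are computed once per user and only the neighbors' items are scored, instead of scanning every user for every unrated item.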

A classic piece of calling code:

```java
package recommender;

import java.io.File;
import java.util.List;

import org.apache.mahout.cf.taste.impl.model.file.FileDataModel;
import org.apache.mahout.cf.taste.impl.neighborhood.NearestNUserNeighborhood;
import org.apache.mahout.cf.taste.impl.recommender.GenericUserBasedRecommender;
import org.apache.mahout.cf.taste.impl.similarity.PearsonCorrelationSimilarity;
import org.apache.mahout.cf.taste.model.DataModel;
import org.apache.mahout.cf.taste.neighborhood.UserNeighborhood;
import org.apache.mahout.cf.taste.recommender.RecommendedItem;
import org.apache.mahout.cf.taste.recommender.Recommender;
import org.apache.mahout.cf.taste.similarity.UserSimilarity;

class RecommenderIntro {

    private RecommenderIntro() {
    }

    public static void main(String[] args) throws Exception {
        // Use the file given on the command line, else fall back to a default path.
        File modelFile = null;
        if (args.length > 0) {
            modelFile = new File(args[0]);
        }
        if (modelFile == null || !modelFile.exists()) {
            modelFile = new File("E:\\hello.txt");
        }
        if (!modelFile.exists()) {
            System.err.println(
                "Please specify the name of the data file, or put it at E:\\hello.txt!");
            System.exit(1);
        }

        DataModel model = new FileDataModel(modelFile);
        UserSimilarity similarity = new PearsonCorrelationSimilarity(model);
        // Neighborhood: the 2 users most similar to the target user.
        UserNeighborhood neighborhood = new NearestNUserNeighborhood(2, similarity, model);
        Recommender recommender = new GenericUserBasedRecommender(model, neighborhood, similarity);
        recommender.refresh(null);

        // Ask for the top 3 recommendations for user 1.
        List<RecommendedItem> recommendations = recommender.recommend(1, 3);
        for (RecommendedItem recommendation : recommendations) {
            System.out.println(recommendation);
        }
    }
}
```

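DataModel was covered in detail in an earlier post and is not repeated here; this post focuses on the similarity measure.

The measure used here is PearsonCorrelationSimilarity, so the Pearson correlation coefficient is described in detail below.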

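Suppose there are two variables X and Y. The Pearson correlation coefficient between them can be computed with any of the following formulas.

Formula 1:

$$\rho_{X,Y} = \frac{\mathrm{cov}(X,Y)}{\sigma_X \sigma_Y} = \frac{E\big((X-\mu_X)(Y-\mu_Y)\big)}{\sigma_X \sigma_Y} = \frac{E(XY) - E(X)E(Y)}{\sqrt{E(X^2) - E^2(X)}\,\sqrt{E(Y^2) - E^2(Y)}}$$

Formula 2:

$$\rho_{X,Y} = \frac{N\sum XY - \sum X \sum Y}{\sqrt{N\sum X^2 - \left(\sum X\right)^2}\,\sqrt{N\sum Y^2 - \left(\sum Y\right)^2}}$$

Formula 3:

$$\rho_{X,Y} = \frac{\sum (X - \bar{X})(Y - \bar{Y})}{\sqrt{\sum (X - \bar{X})^2}\,\sqrt{\sum (Y - \bar{Y})^2}}$$

Formula 4:

$$\rho_{X,Y} = \frac{\sum XY - \dfrac{\sum X \sum Y}{N}}{\sqrt{\left(\sum X^2 - \dfrac{\left(\sum X\right)^2}{N}\right)\left(\sum Y^2 - \dfrac{\left(\sum Y\right)^2}{N}\right)}}$$

The four formulas above are equivalent. E denotes mathematical expectation, cov denotes covariance, and N is the number of values the variables take; $\mu$ and $\sigma$ are the mean and standard deviation, and $\bar{X}$, $\bar{Y}$ are the sample means.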
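As a quick numerical check of the claimed equivalence, the sketch below evaluates Formula 3 (centered sums) and Formula 4 (raw sums) on the same data. The rating vectors and the function names are made up for illustration.

```python
from math import sqrt


def pearson_formula_3(xs, ys):
    # Formula 3: sums of deviations from the means.
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    num = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    den = sqrt(sum((x - mx) ** 2 for x in xs)) * sqrt(sum((y - my) ** 2 for y in ys))
    return num / den


def pearson_formula_4(xs, ys):
    # Formula 4: raw sums, sums of squares and sum of products.
    n = len(xs)
    sx, sy = sum(xs), sum(ys)
    sxx = sum(x * x for x in xs)
    syy = sum(y * y for y in ys)
    sxy = sum(x * y for x, y in zip(xs, ys))
    num = sxy - sx * sy / n
    den = sqrt((sxx - sx * sx / n) * (syy - sy * sy / n))
    return num / den


xs = [5.0, 3.0, 2.5]  # made-up ratings of one user
ys = [4.0, 3.0, 2.0]  # made-up ratings of another user on the same items
r3 = pearson_formula_3(xs, ys)
r4 = pearson_formula_4(xs, ys)
print(r3, r4)  # both are about 0.945
```

Formula 4 needs only one pass over the data, which is one reason implementations often prefer it, although with floating-point numbers the two forms can differ in the last digits.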

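The Pearson scoring algorithm first finds the items that both critics have rated, then computes the sum and the sum of squares of each critic's ratings, together with the sum of the products of their ratings. Formula 4 above then yields the Pearson correlation coefficient.

Different tools choose different forms of the formula, but the principle is the same. Below is an implementation in Python.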

```python
from math import sqrt

critics = {
    'bob': {'A': 5.0, 'B': 3.0, 'C': 2.5},
    'alice': {'A': 5.0, 'C': 3.0},
}


def sim_pearson(prefs, p1, p2):
    # Get the list of mutually rated items
    si = {}
    for item in prefs[p1]:
        if item in prefs[p2]:
            si[item] = 1

    # If there are no ratings in common, return 0
    if len(si) == 0:
        return 0

    n = len(si)

    # Sums of all the preferences
    sum1 = sum(prefs[p1][it] for it in si)
    sum2 = sum(prefs[p2][it] for it in si)

    # Sums of the squares
    sum1Sq = sum(pow(prefs[p1][it], 2) for it in si)
    sum2Sq = sum(pow(prefs[p2][it], 2) for it in si)

    # Sum of the products
    pSum = sum(prefs[p1][it] * prefs[p2][it] for it in si)

    # Calculate r (Pearson score) with Formula 4
    num = pSum - (sum1 * sum2 / n)
    den = sqrt((sum1Sq - pow(sum1, 2) / n) * (sum2Sq - pow(sum2, 2) / n))
    if den == 0:
        return 0
    return num / den


if __name__ == "__main__":
    print(critics['bob'])
    print(sim_pearson(critics, 'bob', 'alice'))
```

For comparison, here is the source of Mahout's PearsonCorrelationSimilarity:

```java
package org.apache.mahout.cf.taste.impl.similarity;

import org.apache.mahout.cf.taste.common.TasteException;
import org.apache.mahout.cf.taste.common.Weighting;
import org.apache.mahout.cf.taste.model.DataModel;

import com.google.common.base.Preconditions;

/**
 * <p>
 * An implementation of the Pearson correlation. For users X and Y, the following values are calculated:
 * </p>
 *
 * <ul>
 * <li>sumX2: sum of the square of all X's preference values</li>
 * <li>sumY2: sum of the square of all Y's preference values</li>
 * <li>sumXY: sum of the product of X and Y's preference value for all items for which both X and Y express a
 * preference</li>
 * </ul>
 *
 * <p>
 * The correlation is then:
 *
 * <p>
 * {@code sumXY / sqrt(sumX2 * sumY2)}
 * </p>
 *
 * <p>
 * Note that this correlation "centers" its data, shifts the user's preference values so that each of their
 * means is 0. This is necessary to achieve expected behavior on all data sets.
 * </p>
 *
 * <p>
 * This correlation implementation is equivalent to the cosine similarity since the data it receives
 * is assumed to be centered -- mean is 0. The correlation may be interpreted as the cosine of the angle
 * between the two vectors defined by the users' preference values.
 * </p>
 *
 * <p>
 * For cosine similarity on uncentered data, see {@link UncenteredCosineSimilarity}.
 * </p>
 */
public final class PearsonCorrelationSimilarity extends AbstractSimilarity {

  /**
   * @throws IllegalArgumentException if {@link DataModel} does not have preference values
   */
  public PearsonCorrelationSimilarity(DataModel dataModel) throws TasteException {
    this(dataModel, Weighting.UNWEIGHTED);
  }

  /**
   * @throws IllegalArgumentException if {@link DataModel} does not have preference values
   */
  public PearsonCorrelationSimilarity(DataModel dataModel, Weighting weighting) throws TasteException {
    super(dataModel, weighting, true);
    Preconditions.checkArgument(dataModel.hasPreferenceValues(), "DataModel doesn't have preference values");
  }

  @Override
  double computeResult(int n, double sumXY, double sumX2, double sumY2, double sumXYdiff2) {
    if (n == 0) {
      return Double.NaN;
    }
    // Note that sum of X and sum of Y don't appear here since they are assumed to be 0;
    // the data is assumed to be centered.
    double denominator = Math.sqrt(sumX2) * Math.sqrt(sumY2);
    if (denominator == 0.0) {
      // One or both parties has -all- the same ratings;
      // can't really say much similarity under this measure
      return Double.NaN;
    }
    return sumXY / denominator;
  }

}
```
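The Javadoc above states that the data is centered before `computeResult` runs, which is why only sumXY, sumX2 and sumY2 appear in it. The following Python sketch mirrors that idea under one assumption: centering is done over the co-rated items only. The function name `centered_cosine` is hypothetical and is not part of Mahout.

```python
from math import sqrt, isnan


def centered_cosine(xs, ys):
    """xs, ys: two users' ratings on their co-rated items, in the same item order."""
    n = len(xs)
    if n == 0:
        return float("nan")
    # Center each user's ratings so that their mean is 0.
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cx = [x - mean_x for x in xs]
    cy = [y - mean_y for y in ys]
    # Same quantities as in computeResult, computed on the centered data.
    sum_xy = sum(a * b for a, b in zip(cx, cy))
    sum_x2 = sum(a * a for a in cx)
    sum_y2 = sum(b * b for b in cy)
    denominator = sqrt(sum_x2) * sqrt(sum_y2)
    if denominator == 0.0:
        # At least one user gave identical ratings to all co-rated items.
        return float("nan")
    return sum_xy / denominator


# bob and alice from the Python example, restricted to their common items A and C.
r_bob_alice = centered_cosine([5.0, 2.5], [5.0, 3.0])
print(r_bob_alice)                         # close to 1.0
print(isnan(centered_cosine([5.0], [2.5])))  # a single common item is undefined
```

On centered data the cosine of the angle between the two rating vectors and the Pearson correlation coincide, so this agrees with `sim_pearson` for bob and alice, except that undefined cases come back as NaN instead of 0.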

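Having looked at two implementations of the Pearson correlation coefficient, we now turn to the problems of using this similarity measure in a recommender system.

First, a concrete worked example:

At first glance the Pearson correlation coefficient looks like a good measure, but a closer analysis reveals several shortcomings.

First, the Pearson correlation does not take into account the number of items on which two users' preferences overlap, which can be a weakness in a recommender engine. In the example figure above, user1 and user5 expressed similar preferences for three items, yet user1 and user4 come out as more similar, which is counterintuitive.

Second, if two users have expressed a preference for only one item in common, the Pearson correlation between them cannot be computed, as with user1 and user3 in the figure above.

Finally, if user5 had given every item a preference of 3.0, the similarity would likewise be undefined (in Formula 4 the denominator becomes 0).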
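The three cases can be reproduced with a short sketch. The ratings below are illustrative assumptions and not necessarily the figure's data: user1 and user4 reuse bob's and alice's ratings from the Python example, while user3, user5 and `flat` are made up. The helper `pearson` is a hypothetical name, and it returns None where the correlation is undefined.

```python
from math import sqrt

# Illustrative ratings (assumed): user -> {item: preference}.
ratings = {
    'user1': {'A': 5.0, 'B': 3.0, 'C': 2.5},
    'user3': {'A': 2.5},
    'user4': {'A': 5.0, 'C': 3.0},
    'user5': {'A': 4.0, 'B': 3.0, 'C': 2.0},
    'flat':  {'A': 3.0, 'B': 3.0, 'C': 3.0},
}


def pearson(prefs, a, b):
    """Pearson correlation over co-rated items; None when it is undefined."""
    common = [i for i in prefs[a] if i in prefs[b]]
    n = len(common)
    if n == 0:
        return None
    mean_a = sum(prefs[a][i] for i in common) / n
    mean_b = sum(prefs[b][i] for i in common) / n
    num = sum((prefs[a][i] - mean_a) * (prefs[b][i] - mean_b) for i in common)
    den = sqrt(sum((prefs[a][i] - mean_a) ** 2 for i in common)
               * sum((prefs[b][i] - mean_b) ** 2 for i in common))
    return num / den if den else None


# 1. Overlap size is ignored: two common items beat three.
r14 = pearson(ratings, 'user1', 'user4')
r15 = pearson(ratings, 'user1', 'user5')
print(r14, r15, r14 > r15)

# 2. A single common item gives a zero denominator.
r13 = pearson(ratings, 'user1', 'user3')
print(r13)

# 3. A user with identical ratings everywhere also gives a zero denominator.
r1flat = pearson(ratings, 'user1', 'flat')
print(r1flat)
```

One common mitigation, not covered in this post, is to weight the correlation by the number of co-rated items; Mahout's two-argument constructor shown earlier accepts a `Weighting` value for this purpose.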

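So although many papers choose this similarity measure, in practice the measure should be chosen by weighing several factors against the business scenario.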
