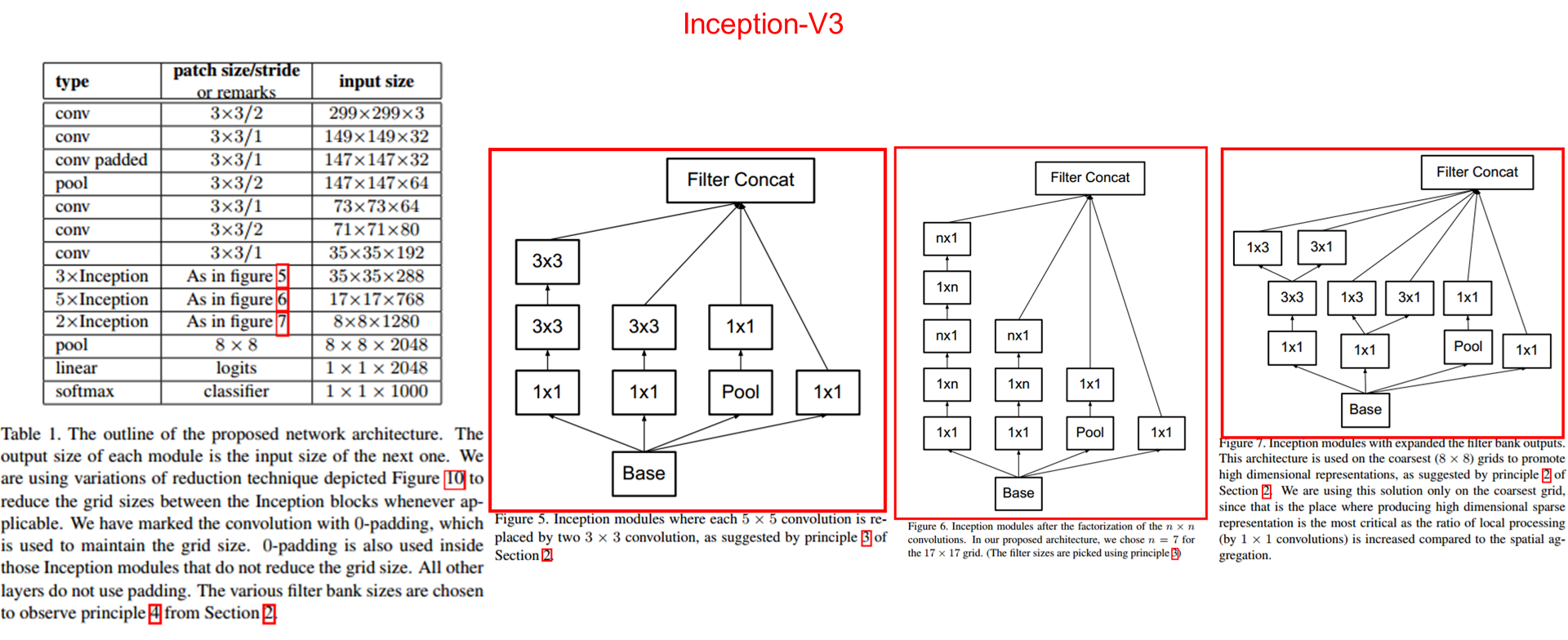

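The network model implemented in the code below is Inception_v3.

The figure shows the inception_v3 network structure. It differs from the one in the original paper in a few details, but the key Inception modules work on the same principle.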
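
To make the Inception principle concrete: each block runs several branches on the same input and concatenates their outputs along the channel axis, so the depth of the block's output is simply the sum of the branch depths. Below is a minimal sketch of that arithmetic, using the branch widths of the `Mixed_5b` block from the listing. The helper name `concat_channels` is made up for illustration and is not part of TensorFlow or slim.

```python
def concat_channels(branch_channels):
    """Depth of the tensor obtained by concatenating branches along the channel axis."""
    return sum(branch_channels)


# Output width of the last conv in each branch of Mixed_5b, as in the listing.
mixed_5b = {"Branch_0": 64, "Branch_1": 64, "Branch_2": 96, "Branch_3": 32}

mixed_5b_depth = concat_channels(mixed_5b.values())
print(mixed_5b_depth)  # 256
```

This matches the `# 256 = 64 + 64 + 96 + 32` comment in the code. Spatial size is unaffected, because every branch inside the Inception blocks uses `stride=1` with `padding='SAME'`.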

# -*- coding:utf-8 -*-
#
# inception_v3 net
# default_image_size = 299
# Requires TensorFlow 1.x: tf.contrib (and with it slim) was removed in TensorFlow 2.

import tensorflow as tf

slim = tf.contrib.slim


def inception_v3_base(inputs, num_classes, is_training=True, scope=None):
    # is_training switches batch_norm and dropout between training and inference behaviour.
    end_points = {}
    with tf.variable_scope(scope, 'inception_v3', [inputs]):
        # slim.conv2d takes no is_training argument, so the flag is scoped
        # to batch_norm and dropout only.
        with slim.arg_scope([slim.batch_norm, slim.dropout],
                            is_training=is_training):
            # First part: 5 conv layers (with 2 max-pool layers in between)
            with slim.arg_scope([slim.conv2d, slim.max_pool2d, slim.avg_pool2d],
                                stride=1, padding='VALID'):
                # 299 x 299 x 3
                end_points['Conv2d_1a'] = slim.conv2d(inputs, 32, [3, 3], stride=2, scope='Conv2d_1a')
                # 149 x 149 x 32
                end_points['Conv2d_2a'] = slim.conv2d(end_points['Conv2d_1a'], 32, [3, 3], scope='Conv2d_2a')
                # 147 x 147 x 32
                end_points['Conv2d_2b'] = slim.conv2d(end_points['Conv2d_2a'], 64, [3, 3], padding='SAME', scope='Conv2d_2b')
                # 147 x 147 x 64
                end_points['MaxPool_3a'] = slim.max_pool2d(end_points['Conv2d_2b'], [3, 3], stride=2, scope='MaxPool_3a')
                # 73 x 73 x 64
                end_points['Conv2d_3b'] = slim.conv2d(end_points['MaxPool_3a'], 80, [1, 1], scope='Conv2d_3b')
                # 73 x 73 x 80
                end_points['Conv2d_4a'] = slim.conv2d(end_points['Conv2d_3b'], 192, [3, 3], scope='Conv2d_4a')
                # 71 x 71 x 192
                end_points['MaxPool_5a'] = slim.max_pool2d(end_points['Conv2d_4a'], [3, 3], stride=2, scope='MaxPool_5a')
                # 35 x 35 x 192
            net = end_points['MaxPool_5a']

            # Inception blocks
            with slim.arg_scope([slim.conv2d, slim.max_pool2d, slim.avg_pool2d],
                                stride=1, padding='SAME'):
                # mixed_0: 35 x 35 x 256
                with tf.variable_scope('Mixed_5b'):
                    with tf.variable_scope('Branch_0'):
                        branch_0 = slim.conv2d(net, 64, [1, 1], scope='Conv_1x1')
                    with tf.variable_scope('Branch_1'):
                        branch_1 = slim.conv2d(net, 48, [1, 1], scope='Conv_1x1')
                        branch_1 = slim.conv2d(branch_1, 64, [5, 5], scope='Conv_5x5')
                    with tf.variable_scope('Branch_2'):
                        branch_2 = slim.conv2d(net, 64, [1, 1], scope='Conv_1x1')
                        branch_2 = slim.conv2d(branch_2, 96, [3, 3], scope='Conv_3x3_0')
                        branch_2 = slim.conv2d(branch_2, 96, [3, 3], scope='Conv_3x3_1')
                    with tf.variable_scope('Branch_3'):
                        branch_3 = slim.avg_pool2d(net, [3, 3], scope='AvgPool_3x3')
                        branch_3 = slim.conv2d(branch_3, 32, [1, 1], scope='Conv_1x1')
                    # 256 = 64 + 64 + 96 + 32
                    net = tf.concat(axis=3, values=[branch_0, branch_1, branch_2, branch_3])
                end_points['Mixed_5b'] = net

                # mixed_1: 35 x 35 x 288
                with tf.variable_scope('Mixed_5c'):
                    with tf.variable_scope('Branch_0'):
                        branch_0 = slim.conv2d(net, 64, [1, 1], scope='Conv_1x1')
                    with tf.variable_scope('Branch_1'):
                        branch_1 = slim.conv2d(net, 48, [1, 1], scope='Conv_1x1')
                        branch_1 = slim.conv2d(branch_1, 64, [5, 5], scope='Conv_5x5')
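
The spatial sizes noted in the comments of the first part (299, 149, 147, 73, 71, 35) follow from TensorFlow's padding rules: with `'VALID'` the output size is `floor((in - kernel) / stride) + 1`, and with `'SAME'` it is `ceil(in / stride)`. The following is a small sketch that replays those sizes in plain Python, without TensorFlow. The helper `out_size` and the `stem` table are illustrative names introduced here, and the kernel, stride, and padding values are copied from the listing.

```python
import math


def out_size(size, kernel, stride=1, padding="VALID"):
    """Output height/width of a conv or pooling layer under TensorFlow's padding rules."""
    if padding == "SAME":
        return math.ceil(size / stride)
    # VALID: no padding, the kernel must fit entirely inside the input
    return (size - kernel) // stride + 1


# (name, kernel, stride, padding) for the layers before the Inception blocks
stem = [
    ("Conv2d_1a", 3, 2, "VALID"),
    ("Conv2d_2a", 3, 1, "VALID"),
    ("Conv2d_2b", 3, 1, "SAME"),
    ("MaxPool_3a", 3, 2, "VALID"),
    ("Conv2d_3b", 1, 1, "VALID"),
    ("Conv2d_4a", 3, 1, "VALID"),
    ("MaxPool_5a", 3, 2, "VALID"),
]

size = 299  # default_image_size
sizes = {}
for name, kernel, stride, padding in stem:
    size = out_size(size, kernel, stride, padding)
    sizes[name] = size
    print(name, size)
```

The loop ends at 35, which is the 35 x 35 feature map that the Inception blocks receive. Inside those blocks everything uses `stride=1` with `'SAME'` padding, so the spatial size stays at 35 and only the channel count changes.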
