2012年AlexNet横空出世,正式将深度学习推向高潮。如今10年过去,整个深度学习生态已经发展到非常庞大。这里使用pytorch进行AlexNet网络实现及在图像分类任务上的训练过程。

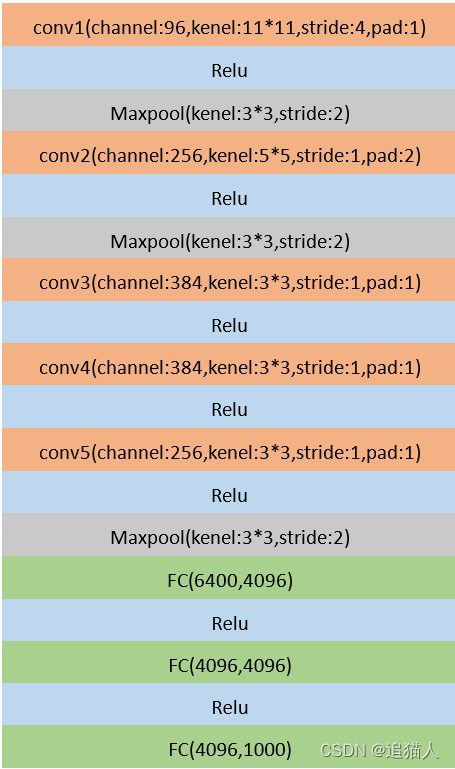

AlexNet是在LeNet基础上发展而来,模型结构更深。LeNet5由2层卷积层加3层全连接层构成,AlexNet则在此基础上将卷积层增加到5层,网络深度达到了8层,并且增加了Dropout,将池化层由平均池化换成最大池化,激活函数改为Relu,整体参数量达到千万级。

以图形分类任务开始讲解AlexNet构建,首先需要将一张输入图像转换为[3, 224, 224]的矩阵,一般的彩色图像为RGB三通道构成,因此模型的输入通道即为3。

第一个卷积层输入通道为3,输出通道96,卷积核大小为11,步长为4,填充为1,经过第一个卷积层后,特征图由[3, 224,224]变为[96,54,54]。卷积计算后进行Relu激活函数和最大池化处理,池化层卷积核大小为3,步长为2,特征图由[96,54,54]变为[96,26,26]。

第二个卷积层输入通道96,输出通道256,卷积核大小为5,填充为2,步长为1,经过第二个卷积层后特征图宽高不变,仅通道数改变。第二个卷积层后同样接一个Relu和池化层,经过池化层后特征图由[256,26,26]变为[256,12,12]。

第三个卷积层和第四个卷积层没有接池化层,卷积核大小为3,填充为1,因此经过第三四个卷积层后特征图尺寸不变,第三个卷积层输出通道为384,第四个卷积层输出通道不变。

第五个卷积层输入通道384,输出通道256,卷积核3,填充1,经过池化层后特征图由[256,12,12]变为[256,5,5]。

卷积之后通过三个全连接层将输出通道逐步降维到分类数量。在全连接之前需要将特征图打平,[256,5,5]打平以后变为6400。全连接层输入输出通道可根据任务分类数自行设计,如:[6400, 4096]-->[4096,4096]-->[4096,1000],最后的1000就是ImageNet数据集图像分类数量。

整体结构:

模型构建:

def AlexNet(input_channel, class_num):

model = nn.Sequential(

nn.Conv2d(input_channel, 96, kernel_size=11, stride=4, padding=1), # [3,224,224]-->[96,54,54]

nn.ReLU(),

nn.MaxPool2d(kernel_size=3, stride=2), # [96,54,54]-->[96,26,26]

nn.Conv2d(96, 256, kernel_size=5, padding=2), # [96,26,26]-->[256,26,26]

nn.ReLU(),

nn.MaxPool2d(kernel_size=3, stride=2), # [256,26,26]-->[256,12,12]

nn.ReLU(),

nn.Conv2d(256, 384, kernel_size=3, padding=1), # [256,12,12]-->[384,12,12]

nn.ReLU(),

nn.Conv2d(384, 384, kernel_size=3, padding=1), # [384,12,12]-->[384,12,12]

nn.ReLU(),

nn.Conv2d(384, 256, kernel_size=3, padding=1), # [384,12,12]-->[]256,12,12]

nn.ReLU(),

nn.MaxPool2d(kernel_size=3, stride=2), # [256,12,12]-->[256,5,5]

nn.Flatten(),

nn.Linear(6400, 4096),

nn.ReLU(),

nn.Dropout(),

nn.Linear(4096, 4096),

nn.ReLU(),

nn.Dropout(),

nn.Linear(4096, class_num)

)

return model

完成模型构建后,这里使用自定义数据集进行训练。

def train():

# 如有GPU,默认使用第一块GPU

device = torch.device('cuda:0' if torch.cuda.is_available() else 'cpu')

print('using device {}'.format(device))

#数据预处理

data_transform = {

"train": transforms.Compose([

transforms.RandomResizedCrop(224), #随机缩放裁剪

transforms.RandomHorizontalFlip(), #随机水平翻转

transforms.ToTensor(), #转换为Tensor

transforms.Normalize((0.5, 0.5, 0.5), (0.5, 0.5, 0.5)) #归一化

]),

"val": transforms.Compose([

transforms.RandomResizedCrop(224),

transforms.ToTensor(),

transforms.Normalize((0.5, 0.5, 0.5), (0.5, 0.5, 0.5))

])

}

#加载数据集

batch_size = 32

data_path = 'dataset/dogs'

assert os.path.exists(data_path), "{} does not exist".format(data_path)

train_dataset = datasets.ImageFolder(root=os.path.join(data_path, 'train'), transform=data_transform['train'])

val_dataset = datasets.ImageFolder(root=os.path.join(data_path, 'val'), transform=data_transform['val'])

train_loader = torch.utils.data.DataLoader(train_dataset, batch_size=batch_size, shuffle=True, num_workers=8)

val_loader = torch.utils.data.DataLoader(val_dataset, batch_size=5, shuffle=False, num_workers=8)

model = AlexNet(3, 2)

model.to(device)

loss_function = nn.CrossEntropyLoss()

optimizer = torch.optim.Adam(model.parameters(), lr=0.0001)

epochs = 10

save_path = 'alexnet.pt'

best_acc = 0.0

steps = len(train_loader)

for epoch in range(epochs):

model.train()

running_loss = 0.0

for step, data in enumerate(train_loader):

images, labels = data

optimizer.zero_grad()

outputs = model(images.to(device))

loss = loss_function(outputs, labels.to(device))

loss.backward()

optimizer.step()

running_loss += loss.item()

print('epoch:{},step:{}/{},loss:{}'.format(epoch + 1, step + 1, steps, loss))

model.eval()

acc = 0.0

with torch.no_grad():

for val_data in val_loader:

images, labels = val_data

outputs = model(images.to(device))

predict = torch.max(outputs, dim=1)[1]

acc += torch.eq(predict, labels.to(device)).sum().item()

val_acc = acc / len(val_dataset)

print('epoch:{}, acc:{}'.format(epoch + 1, val_acc))

if val_acc > best_acc:

best_acc = val_acc

torch.save(model.state_dict(), save_path)

print("finish train")

if __name__ == '__main__':

train()

本文详细介绍了使用PyTorch构建和训练AlexNet模型的过程,从LeNet发展说起,涉及模型结构升级、参数调整和全连接层设计,以及在图像分类任务中的应用。

本文详细介绍了使用PyTorch构建和训练AlexNet模型的过程,从LeNet发展说起,涉及模型结构升级、参数调整和全连接层设计,以及在图像分类任务中的应用。

1331

1331

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?