This post uses a custom 40-class classification dataset (its format is shown in the figure below).

1. Model

2. Dataset

3. Train

4. Problems encountered

1. Model

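The network follows the AlexNet architecture, with the last convolution reduced to 128 channels and a classifier head of 4096 → 2000 → 40 outputs.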
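The classifier's first Linear layer expects 128*6*6 inputs, so the backbone must end in a 6×6 feature map. The quick check below is a sketch with hypothetical helpers `conv_out` and `backbone_out`; it traces a square input through the same layer settings using the standard output-size formula floor((n + 2p - k) / s) + 1, assuming dilation 1 and PyTorch's default `ceil_mode=False`:

```python
def conv_out(size: int, kernel: int, stride: int = 1, padding: int = 0) -> int:
    """Side length after a Conv2d/MaxPool2d layer (dilation 1, floor rounding)."""
    return (size + 2 * padding - kernel) // stride + 1


def backbone_out(size: int) -> int:
    """Trace a square input through the backbone layers of the Alexnet class."""
    size = conv_out(size, 11, 4)      # Conv2d(3, 96, 11, 4)
    size = conv_out(size, 3, 2)       # MaxPool2d(3, 2)
    size = conv_out(size, 5, 1, 2)    # Conv2d(96, 256, 5, 1, 2)
    size = conv_out(size, 3, 2)       # MaxPool2d(3, 2)
    for _ in range(3):                # three Conv2d(..., 3, 1, 1) layers keep the size
        size = conv_out(size, 3, 1, 1)
    size = conv_out(size, 3, 2)       # final MaxPool2d(3, 2)
    return size


for side in (224, 256):
    s = backbone_out(side)
    print(side, "->", s, "x", s, "| flattened features:", 128 * s * s)
```

For 256×256 inputs (the size the dataset code resizes to) this gives a 6×6 map, i.e. 4608 features, matching `nn.Linear(128*6*6, 4096)`; a 224×224 input would give 5×5 and the shapes would not match.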

class Alexnet(nn.Module):

def __init__(self):

super(Alexnet,self).__init__()

self.backbone = nn.Sequential(

nn.Conv2d(3,96,11,4),

nn.ReLU(),

nn.MaxPool2d(3,2),

nn.Conv2d(96,256,5,1,2),

nn.MaxPool2d(3,2),

nn.ReLU(),

nn.Conv2d(256,384,3,1,1),

nn.ReLU(),

nn.Conv2d(384,384,3,1,1),

nn.ReLU(),

nn.Conv2d(384, 128, 3, 1, 1),

nn.ReLU(),

nn.MaxPool2d(3,2),

)

self.fc = nn.Sequential(

nn.Linear(128*6*6,4096),

nn.ReLU(),

nn.Dropout(0.5),

nn.Linear(4096,2000),

nn.ReLU(),

nn.Dropout(0.5),

nn.Linear(2000,40),

)

def forward(self,x):

f = self.backbone(x)

output = torch.flatten(f,1)

output = self.fc(output)

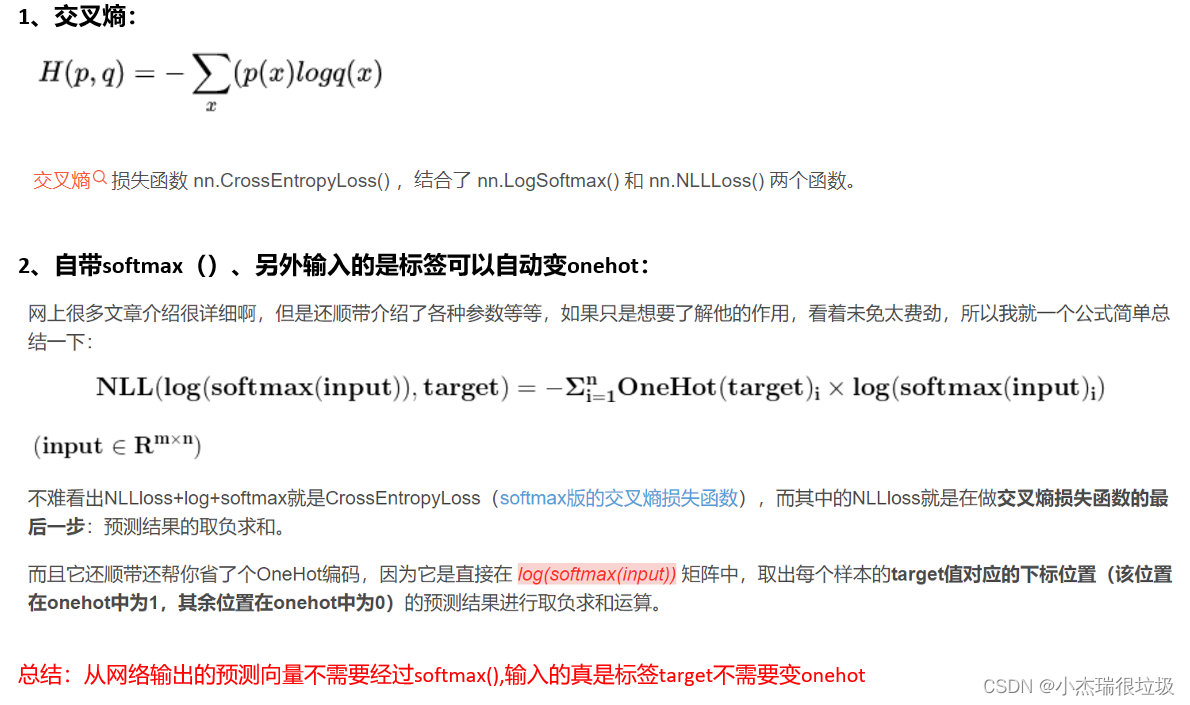

return output注意:最后fc层只是变成40,并没有加softmax,因为训练将使用crossentorpyloss里面自带softmax
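Softmax preserves the ordering of the scores, so leaving it out of the model does not change which class is predicted: `torch.argmax(output, dim=1)` on the raw 40-value output picks the same class, and `torch.softmax(output, dim=1)` can be applied at inference time if probabilities are actually needed. A plain-Python sketch with made-up scores; `softmax` and `argmax` here are hypothetical helpers, not PyTorch functions:

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of raw scores."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def argmax(values):
    """Index of the largest value."""
    return max(range(len(values)), key=lambda i: values[i])


logits = [1.2, -0.3, 3.4, 0.0]        # made-up scores for 4 classes
probs = softmax(logits)
print(argmax(logits), argmax(probs))  # 2 2
print(round(sum(probs), 6))           # 1.0
```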

2. Dataset

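```python
import os

import cv2
import numpy as np
from torch.utils.data import Dataset


class Train_Mydataset(Dataset):
    def __init__(self, root):
        self.dataset = []
        self.root = root
        label_list = os.listdir(root)  # sub-folder names "0", "1", ... are the class labels
        for img_list in label_list:
            new_img_list = os.path.join(root, img_list)  # folder path, e.g. './0', './1'
            new_img_list = new_img_list.replace("\\", "/")
            new_img_name = os.listdir(new_img_list)  # file name of every image in this class
            for name in new_img_name:
                img_dir = os.path.join(new_img_list, name)
                img_dir = img_dir.replace("\\", "/")
                self.dataset.append(img_dir)

    def __len__(self):
        return len(self.dataset)

    def __getitem__(self, index):
        data = self.dataset[index]
        img = cv2.imread(data)  # HWC, BGR, uint8; None if the file cannot be read
        if img is None:
            raise FileNotFoundError(f"cannot read image: {data}")
        img = img / 255
        img = cv2.resize(img, (256, 256))  # 256x256 yields the 6x6 map the fc layer expects
        img = np.transpose(img, (2, 0, 1))  # HWC -> CHW
        label = int(data.split("/")[-2])  # parent folder name is the class label
        return img.astype(np.float32), label
```

Note: a backslash `\` in a path can be interpreted as an escape character (for example `"\t"` is a tab), so it is best to use `/` instead.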
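A small sketch of the same label-from-folder idea that works no matter which separator the path uses. `label_from_path` is a hypothetical helper; it assumes, as `Train_Mydataset` does, that the parent folder name is the integer class id. `pathlib.PureWindowsPath` treats both `\` and `/` as separators:

```python
from pathlib import PureWindowsPath


def label_from_path(path: str) -> int:
    """Class label encoded as the parent folder name: <root>/<label>/<image>."""
    # PureWindowsPath accepts both "\\" and "/", so mixed separators are fine.
    return int(PureWindowsPath(path).parent.name)


print(label_from_path("./data/train/12/img_001.jpg"))   # 12
print(label_from_path(r"D:\data\train\7\img_002.jpg"))  # 7

# Why a bare backslash is risky: in a normal string literal "\t" is one TAB character.
print(len("a\tb"), len(r"a\tb"))                          # 3 4
```

The raw-string prefix `r"..."` is the other common way to keep backslashes literal.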

3. Train

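```python
import torch
import torch.nn as nn
from torch.optim import lr_scheduler
from torch.utils.data import DataLoader
from tqdm import tqdm

device = torch.device("cuda" if torch.cuda.is_available() else "cpu")


def train(net, train_root):
    epochs = 100
    batch_size = 8
    net = net.to(device)
    print("training on", device)

    # Optimizer
    optimizer = torch.optim.Adam(net.parameters(), lr=0.003)
    # Learning-rate schedule: halve the LR every 10 epochs
    scheduler = lr_scheduler.StepLR(optimizer, step_size=10, gamma=0.5)
    # Loss function (applies log-softmax internally, expects integer class labels)
    loss = nn.CrossEntropyLoss()

    # Build the dataset and loader once, not once per epoch
    dataset_train = Train_Mydataset(train_root)
    dataloader_train = DataLoader(dataset_train, batch_size=batch_size, shuffle=True)
    train_batches = len(dataloader_train)  # number of batches
    train_n = len(dataset_train)           # number of samples
    # dataset_val = Train_Mydataset(val_root)
    # dataloader_val = DataLoader(dataset_val, batch_size=batch_size, shuffle=True)
    # val_n = len(dataset_val)

    for epoch in range(epochs):
        net.train()
        loss_sum, acc_sum = 0.0, 0.0
        for img, label in tqdm(dataloader_train):
            img = img.to(device)
            label = label.long().to(device)  # class indices, no one-hot encoding
            p = net(img)
            loss_c = loss(p, label)
            # argmax on the raw logits gives the same class as argmax after softmax
            pred = torch.argmax(p, dim=1)

            # Backpropagation
            optimizer.zero_grad()
            loss_c.backward()
            optimizer.step()

            loss_sum += loss_c.item()
            acc_sum += (pred == label).sum().item()

        lr = scheduler.get_last_lr()[0]  # LR used during this epoch
        scheduler.step()  # step the scheduler after the epoch's optimizer.step() calls
        # Loss is averaged per batch, accuracy per sample
        print('train--epoch %d, lr %.8f, loss %.8f, acc %.3f'
              % (epoch, lr, loss_sum / train_batches, acc_sum / train_n))
```

Note: the predictions passed to `nn.CrossEntropyLoss` should be the raw logits, not softmax outputs, and the labels do not need to be converted to one-hot vectors; integer class indices are expected.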
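To illustrate the note above, here is a plain-Python sketch of mean cross-entropy computed from raw logits and integer labels, next to the same loss written with one-hot targets. Both functions are hypothetical helpers for illustration only; `nn.CrossEntropyLoss` with its default mean reduction computes this quantity on `(N, C)` logits and `(N,)` class indices:

```python
import math


def cross_entropy(logits_batch, labels):
    """Mean cross-entropy from raw logits and integer class indices (log-softmax + NLL)."""
    total = 0.0
    for logits, y in zip(logits_batch, labels):
        m = max(logits)
        log_sum_exp = m + math.log(sum(math.exp(x - m) for x in logits))
        total += log_sum_exp - logits[y]  # equals -log(softmax(logits)[y])
    return total / len(labels)


def cross_entropy_onehot(logits_batch, onehots):
    """The same loss written with one-hot targets, for comparison."""
    total = 0.0
    for logits, t in zip(logits_batch, onehots):
        m = max(logits)
        log_sum_exp = m + math.log(sum(math.exp(x - m) for x in logits))
        total += -sum(ti * (x - log_sum_exp) for ti, x in zip(t, logits))
    return total / len(onehots)


logits = [[2.0, 0.5, -1.0], [0.1, 0.2, 3.0]]  # made-up scores: 2 samples, 3 classes
labels = [0, 2]                               # integer labels, like label.long()
onehots = [[1, 0, 0], [0, 0, 1]]
print(math.isclose(cross_entropy(logits, labels), cross_entropy_onehot(logits, onehots)))  # True
```

The softmax is already inside the loss, so applying it to the model output beforehand would apply it twice and distort the gradients.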
