1. StreamingFileSink

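Flink has built-in methods for writing output to files, such as writeAsText() and writeAsCsv(), which save results directly to a text or CSV file. However, these methods cannot have several parallel instances write to the same file, so producing a single output file forces the sink parallelism down to 1. That slows the job down, and the methods cannot guarantee consistent state after failure recovery. They have been deprecated.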

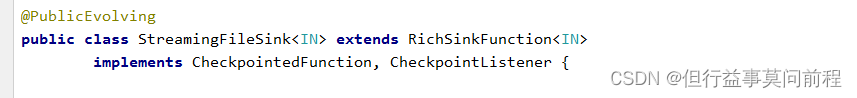

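To address this, Flink provides a dedicated streaming file system connector: StreamingFileSink. It extends the abstract class RichSinkFunction and integrates with Flink's checkpoint mechanism to guarantee exactly-once semantics.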
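To make the checkpoint connection concrete, here is a simplified, standalone Java model (no Flink dependency; `PartFileLifecycle` and its methods are hypothetical names) of the three states a part file goes through: in-progress while being written, pending after the rolling policy closes it, and finished only once a checkpoint that captured it has completed. It is a sketch of the idea, not Flink's implementation.

```java
import java.util.LinkedHashMap;
import java.util.Map;

// Simplified model of StreamingFileSink part-file states (no Flink dependency).
// Pending files are committed only when a checkpoint that covers them completes.
class PartFileLifecycle {
    enum State { IN_PROGRESS, PENDING, FINISHED }

    // File name -> current state, in creation order.
    final Map<String, State> states = new LinkedHashMap<>();
    // Pending file name -> id of the checkpoint that captured it.
    private final Map<String, Long> capturedBy = new LinkedHashMap<>();
    private String current;
    private int partCounter = 0;

    // Writing a record opens a new in-progress file if none is open.
    void write() {
        if (current == null) {
            current = "part-0-" + partCounter++;
            states.put(current, State.IN_PROGRESS);
        }
    }

    // The rolling policy closes the in-progress file: it becomes pending, not yet final.
    void roll() {
        if (current != null) {
            states.put(current, State.PENDING);
            current = null;
        }
    }

    // Taking checkpoint `id` captures every pending file not captured yet.
    void snapshot(long id) {
        for (Map.Entry<String, State> e : states.entrySet()) {
            if (e.getValue() == State.PENDING && !capturedBy.containsKey(e.getKey())) {
                capturedBy.put(e.getKey(), id);
            }
        }
    }

    // When checkpoint `id` completes, files captured by it (or an earlier one) are committed.
    void checkpointComplete(long id) {
        capturedBy.entrySet().removeIf(e -> {
            if (e.getValue() <= id) {
                states.put(e.getKey(), State.FINISHED);
                return true;
            }
            return false;
        });
    }
}
```

The model also shows why the Flink documentation requires checkpointing to be enabled for this sink: without completed checkpoints, pending files never become finished.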

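StreamingFileSink writes data into buckets. The data in each bucket is split into part files of bounded size, and each parallel sink instance writes its own part files, which gives genuinely distributed file storage.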
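A minimal sketch of where a part file ends up, assuming the default naming of Flink 1.10+ (`OutputFileConfig` with prefix `part` and an empty suffix): `<basePath>/<bucketId>/part-<subtaskIndex>-<partCounter>`. `PartFilePaths` and `partFilePath` are hypothetical helpers; the code only builds strings and does not touch Flink.

```java
// Simplified model of the part-file path layout:
// <basePath>/<bucketId>/<prefix>-<subtaskIndex>-<partCounter><suffix>
class PartFilePaths {
    // Assumed defaults: prefix "part", empty suffix.
    static String partFilePath(String basePath, String bucketId,
                               int subtaskIndex, long partCounter) {
        return partFilePath(basePath, bucketId, "part", subtaskIndex, partCounter, "");
    }

    static String partFilePath(String basePath, String bucketId, String prefix,
                               int subtaskIndex, long partCounter, String suffix) {
        String base = basePath.endsWith("/") ? basePath : basePath + "/";
        return base + bucketId + "/" + prefix + "-" + subtaskIndex + "-" + partCounter + suffix;
    }

    public static void main(String[] args) {
        // With sink parallelism 4, subtasks 0..3 write separate files into the same bucket.
        for (int subtask = 0; subtask < 4; subtask++) {
            System.out.println(partFilePath("./", "2022-01-01--10", subtask, 0));
        }
    }
}
```

Because the subtask index is part of the file name, parallel instances never compete for the same file, which is exactly what the old writeAsText()/writeAsCsv() approach could not offer.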

The Javadoc describes it as a "Sink that emits its input elements to files within buckets."

Bucketing can be controlled through various configuration options. By default, bucketing is time-based: a new bucket is started every hour.
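The default hourly bucket is, as far as Flink's `DateTimeBucketAssigner` goes, the record's processing time formatted with the pattern `yyyy-MM-dd--HH` (machine time zone by default). The standalone sketch below reproduces that formatting with `java.time` only; `HourlyBucketId` is a hypothetical name, and the zone is passed explicitly to keep the output deterministic.

```java
import java.time.Instant;
import java.time.ZoneId;
import java.time.format.DateTimeFormatter;

// Standalone sketch of the default hourly bucket id:
// processing time formatted as "yyyy-MM-dd--HH" in a given time zone.
class HourlyBucketId {
    static final String DEFAULT_FORMAT = "yyyy-MM-dd--HH";

    static String bucketId(long processingTimeMillis, ZoneId zone) {
        DateTimeFormatter fmt = DateTimeFormatter.ofPattern(DEFAULT_FORMAT).withZone(zone);
        return fmt.format(Instant.ofEpochMilli(processingTimeMillis));
    }

    public static void main(String[] args) {
        ZoneId utc = ZoneId.of("UTC");
        // Two records 30 minutes apart in the same hour share a bucket...
        System.out.println(bucketId(0L, utc));
        System.out.println(bucketId(30 * 60 * 1000L, utc));
        // ...while a record one hour later opens a new bucket.
        System.out.println(bucketId(60 * 60 * 1000L, utc));
    }
}
```

In a Flink job, the same behavior can be made explicit on the row-format builder with `.withBucketAssigner(new DateTimeBucketAssigner<>("yyyy-MM-dd--HH", ZoneId.of("Asia/Shanghai")))`, which pins the time zone instead of relying on the machine default.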

The Javadoc adds: in some scenarios, the open buckets are required to change based on time. In these cases, the user can specify a `bucketCheckInterval` (1 minute by default), and the sink will periodically check whether the specified rolling policy says the current part file should be rolled, and roll it if so.
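A sketch of the checks involved, modeled on how `DefaultRollingPolicy` is documented to behave: a size check on every record, plus time checks (maximum file age and inactivity) run by the timer every `bucketCheckInterval`. `RollingCheck` is a hypothetical standalone class, not Flink's API; the exact comparison operators are an assumption. The values in `main` match the rolling policy used in the code example in section 2.

```java
import java.util.concurrent.TimeUnit;

// Standalone model of size- and time-based rolling decisions.
class RollingCheck {
    final long maxPartSize;        // bytes
    final long rolloverInterval;   // max ms since the part file was created
    final long inactivityInterval; // max ms since the last write

    RollingCheck(long maxPartSize, long rolloverInterval, long inactivityInterval) {
        this.maxPartSize = maxPartSize;
        this.rolloverInterval = rolloverInterval;
        this.inactivityInterval = inactivityInterval;
    }

    // Checked on every incoming record.
    boolean shouldRollOnEvent(long partSize) {
        return partSize > maxPartSize;
    }

    // Checked by the periodic timer, once per bucketCheckInterval.
    boolean shouldRollOnProcessingTime(long now, long creationTime, long lastUpdateTime) {
        return now - creationTime >= rolloverInterval
                || now - lastUpdateTime >= inactivityInterval;
    }

    public static void main(String[] args) {
        RollingCheck p = new RollingCheck(128L * 1024 * 1024,
                TimeUnit.MINUTES.toMillis(15), TimeUnit.MINUTES.toMillis(5));
        long bucketCheckInterval = TimeUnit.MINUTES.toMillis(1); // default: 1 minute
        long created = 0L;
        long lastWrite = TimeUnit.MINUTES.toMillis(2); // last record at minute 2
        // Simulate the timer: the first firing 5+ minutes after the last write rolls the file.
        for (long now = bucketCheckInterval; now <= TimeUnit.MINUTES.toMillis(15); now += bucketCheckInterval) {
            if (p.shouldRollOnProcessingTime(now, created, lastWrite)) {
                System.out.println("roll at minute " + TimeUnit.MILLISECONDS.toMinutes(now));
                break;
            }
        }
    }
}
```

Since time-based checks only run when the timer fires, a file can stay open up to one `bucketCheckInterval` longer than the configured interval; in Flink the interval is set with `.withBucketCheckInterval(long)` on the builder.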

StreamingFileSink supports both row-encoded and bulk-encoded formats, each created through a static method of StreamingFileSink:

(1) Row-encoded: StreamingFileSink.forRowFormat(basePath, rowEncoder)

(2) Bulk-encoded: StreamingFileSink.forBulkFormat(basePath, bulkWriterFactory)
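The difference between the two formats can be sketched without Flink: a row encoder serializes each record on its own as soon as it arrives (Flink's `SimpleStringEncoder` writes `toString()` plus a newline), while a bulk writer accumulates records and encodes them together, as columnar formats such as Parquet need to. `FormatSketch` and `BulkSketch` are hypothetical; the `addElement`/`finish` names only mirror Flink's `BulkWriter`, and the "count=" block layout is invented for illustration.

```java
import java.io.IOException;
import java.io.OutputStream;
import java.io.UncheckedIOException;
import java.nio.charset.StandardCharsets;
import java.util.ArrayList;
import java.util.List;

class FormatSketch {
    // Row format: every record is written immediately and independently.
    static void encodeRow(Object element, OutputStream out) {
        try {
            out.write(element.toString().getBytes(StandardCharsets.UTF_8));
            out.write('\n');
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    // Bulk format: records are buffered and encoded together when the file is finished.
    static class BulkSketch {
        private final List<String> buffer = new ArrayList<>();
        private final OutputStream out;

        BulkSketch(OutputStream out) {
            this.out = out;
        }

        void addElement(Object element) {
            buffer.add(element.toString()); // nothing reaches the stream yet
        }

        void finish() {
            try {
                // Hypothetical block layout: a record-count header, then the records.
                out.write(("count=" + buffer.size() + "\n").getBytes(StandardCharsets.UTF_8));
                for (String s : buffer) {
                    out.write((s + "\n").getBytes(StandardCharsets.UTF_8));
                }
                out.flush();
                buffer.clear();
            } catch (IOException e) {
                throw new UncheckedIOException(e);
            }
        }
    }
}
```

Because a bulk file can only be written out as a whole, Flink restricts bulk formats to checkpoint-based rolling policies (such as `OnCheckpointRollingPolicy`), so they roll on every checkpoint, whereas row formats can use size- and time-based policies like `DefaultRollingPolicy`.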

2. Code Example

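The POJO class:

```java
import java.sql.Timestamp;
import java.util.Objects;

// A Flink POJO: public class, public no-argument constructor, public fields
public class Event {
    public String user;
    public String url;
    public long timestamp;

    public Event() {
    }

    public Event(String user, String url, long timestamp) {
        this.user = user;
        this.url = url;
        this.timestamp = timestamp;
    }

    public String getUser() {
        return user;
    }

    public void setUser(String user) {
        this.user = user;
    }

    public String getUrl() {
        return url;
    }

    public void setUrl(String url) {
        this.url = url;
    }

    public long getTimestamp() {
        return timestamp;
    }

    public void setTimestamp(long timestamp) {
        this.timestamp = timestamp;
    }

    // equals and hashCode are overridden together so equal events hash alike
    @Override
    public boolean equals(Object o) {
        if (this == o) return true;
        if (!(o instanceof Event)) return false;
        Event other = (Event) o;
        return timestamp == other.timestamp
                && Objects.equals(user, other.user)
                && Objects.equals(url, other.url);
    }

    @Override
    public int hashCode() {
        return Objects.hash(user, url, timestamp);
    }

    @Override
    public String toString() {
        return "Event{" +
                "user='" + user + '\'' +
                ", url='" + url + '\'' +
                ", timestamp=" + new Timestamp(timestamp) +
                '}';
    }
}
```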

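Read data from Kafka and write it to the local file system.

Steps:

(1) Consume messages from the Kafka cluster.

(2) Convert each message into a custom Event object with flatMap.

(3) Repartition the data with rescale, then write it to text files under a rolling policy.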
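Step (2) assumes every message has the form `user,url,timestamp`. A line with too few fields or a non-numeric timestamp cannot become an Event, and since a flatMap may emit zero elements, such lines can simply be skipped instead of failing the job. The standalone sketch below shows that parsing; `ClickParser` and `ParsedClick` are hypothetical stand-ins for the article's Event handling.

```java
import java.util.Optional;

// Parses "user,url,timestamp"; returns empty for malformed lines instead of throwing.
class ClickParser {
    static final class ParsedClick {
        final String user;
        final String url;
        final long timestamp;

        ParsedClick(String user, String url, long timestamp) {
            this.user = user;
            this.url = url;
            this.timestamp = timestamp;
        }
    }

    static Optional<ParsedClick> parse(String line) {
        if (line == null) {
            return Optional.empty();
        }
        String[] parts = line.split(",");
        if (parts.length != 3) {
            return Optional.empty(); // wrong number of fields
        }
        try {
            return Optional.of(new ParsedClick(
                    parts[0].trim(), parts[1].trim(), Long.parseLong(parts[2].trim())));
        } catch (NumberFormatException e) {
            return Optional.empty(); // timestamp is not a number
        }
    }
}
```

Inside a `FlatMapFunction`, the same idea becomes "emit one Event if the line parses, otherwise emit nothing", which is the main reason to prefer flatMap over map for this step.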

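```java
import org.apache.flink.api.common.functions.FlatMapFunction;
import org.apache.flink.api.common.serialization.SimpleStringEncoder;
import org.apache.flink.api.common.serialization.SimpleStringSchema;
import org.apache.flink.core.fs.Path;
import org.apache.flink.streaming.api.datastream.DataStreamSource;
import org.apache.flink.streaming.api.datastream.SingleOutputStreamOperator;
import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;
import org.apache.flink.streaming.api.functions.sink.filesystem.StreamingFileSink;
import org.apache.flink.streaming.api.functions.sink.filesystem.rollingpolicies.DefaultRollingPolicy;
import org.apache.flink.streaming.connectors.kafka.FlinkKafkaConsumer;
import org.apache.flink.util.Collector;

import java.util.Properties;
import java.util.concurrent.TimeUnit;

public class SinkToFileTest {
    public static void main(String[] args) throws Exception {
        StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();
        // Part files are only finalized when a checkpoint completes;
        // without checkpointing they stay in-progress/pending forever.
        env.enableCheckpointing(10_000L);

        Properties properties = new Properties();
        properties.setProperty("bootstrap.servers", "192.168.42.102:9092,192.168.42.103:9092,192.168.42.104:9092");
        properties.setProperty("group.id", "consumer-group");
        properties.setProperty("key.deserializer", "org.apache.kafka.common.serialization.StringDeserializer");
        properties.setProperty("value.deserializer", "org.apache.kafka.common.serialization.StringDeserializer");
        properties.setProperty("auto.offset.reset", "latest");

        // (1) Consume the "clicks" topic with 2 parallel source instances
        DataStreamSource<String> stream = env.addSource(
                new FlinkKafkaConsumer<String>("clicks", new SimpleStringSchema(), properties))
                .setParallelism(2);

        // (2) Parse "user,url,timestamp" lines into Event objects
        SingleOutputStreamOperator<Event> flatMap = stream.flatMap(new FlatMapFunction<String, Event>() {
            @Override
            public void flatMap(String s, Collector<Event> collector) throws Exception {
                String[] split = s.split(",");
                collector.collect(new Event(split[0], split[1], Long.parseLong(split[2])));
            }
        });

        // (3) Row-encoded sink under ./ : roll a part file after 15 minutes,
        // after 5 minutes without new records, or once it exceeds 128 MB
        StreamingFileSink<Event> fileSink = StreamingFileSink
                .forRowFormat(new Path("./"), new SimpleStringEncoder<Event>("UTF-8"))
                .withRollingPolicy(DefaultRollingPolicy.builder()
                        .withRolloverInterval(TimeUnit.MINUTES.toMillis(15))
                        .withInactivityInterval(TimeUnit.MINUTES.toMillis(5))
                        .withMaxPartSize(128L * 1024 * 1024)
                        .build())
                .build();

        // rescale: each upstream subtask sends round-robin to a local subset of the 4 sink subtasks
        flatMap.rescale().addSink(fileSink).setParallelism(4);

        env.execute();
    }
}
```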

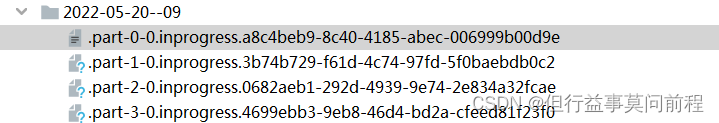

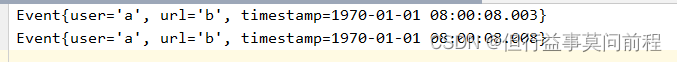

Result:

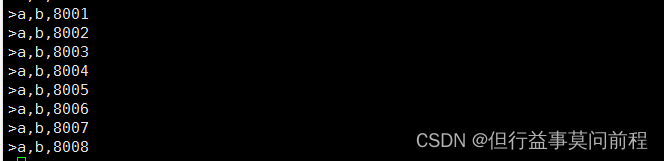

Test messages were sent to the `clicks` topic with the Kafka console producer:

```shell
./kafka-console-producer.sh --broker-list 192.168.42.102:9092,192.168.42.103:9092,192.168.42.104:9092 --topic clicks
```
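Each line typed into the console producer becomes one Kafka message, and the job's flatMap expects it as `user,url,timestamp`. A small sketch that prints such lines for pasting; `SampleClicks` is hypothetical, and the user names and URLs are made-up sample values.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Random;

// Generates sample "user,url,timestamp" lines (hypothetical sample data).
class SampleClicks {
    static final String[] USERS = {"Mary", "Bob", "Alice"};
    static final String[] URLS = {"./home", "./cart", "./prod?id=1"};

    static List<String> generate(int n, long startMillis, long seed) {
        Random random = new Random(seed); // fixed seed -> reproducible output
        List<String> lines = new ArrayList<>();
        for (int i = 0; i < n; i++) {
            String user = USERS[random.nextInt(USERS.length)];
            String url = URLS[random.nextInt(URLS.length)];
            long ts = startMillis + i * 1000L; // one click per second
            lines.add(user + "," + url + "," + ts);
        }
        return lines;
    }

    public static void main(String[] args) {
        generate(5, System.currentTimeMillis(), 42L).forEach(System.out::println);
    }
}
```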

Check the generated files:
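When inspecting the output directory, it helps to tell finished part files from ones still being written. The sketch below assumes the naming typically seen with Flink 1.x on a local file system: finished files look like `part-<subtask>-<counter>`, while in-progress files are hidden (leading `.`) and contain `.inprogress`. `OutputInspector` is a hypothetical helper using only `java.nio.file`; adjust the patterns if a custom `OutputFileConfig` prefix is configured.

```java
import java.io.IOException;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;
import java.util.List;
import java.util.stream.Collectors;
import java.util.stream.Stream;

// Lists part files under the sink's base path and labels them finished / in-progress.
class OutputInspector {
    static boolean isInProgress(String fileName) {
        return fileName.startsWith(".") && fileName.contains(".inprogress");
    }

    static boolean isFinished(String fileName) {
        return fileName.startsWith("part-");
    }

    public static void main(String[] args) throws IOException {
        Path base = Paths.get(args.length > 0 ? args[0] : ".");
        try (Stream<Path> files = Files.walk(base)) {
            List<Path> parts = files
                    .filter(Files::isRegularFile)
                    .filter(p -> {
                        String n = p.getFileName().toString();
                        return isInProgress(n) || isFinished(n);
                    })
                    .collect(Collectors.toList());
            for (Path p : parts) {
                String n = p.getFileName().toString();
                System.out.println((isFinished(n) ? "finished    " : "in-progress ") + base.relativize(p));
            }
        }
    }
}
```

If only in-progress files ever show up, the usual cause is that checkpointing is not enabled or no checkpoint has completed yet.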
