Background: on an Ubuntu 16.04 virtual machine, scrape the job postings listed at https://hr.tencent.com/.

Step 1: Create the project:

    scrapy startproject tencent

Step 2: Write the items file:

    # -*- coding: utf-8 -*-

    # Define here the models for your scraped items
    #
    # See documentation in:
    # https://doc.scrapy.org/en/latest/topics/items.html

    import scrapy


    class TencentItem(scrapy.Item):
        # Position title
        positionname = scrapy.Field()
        # Link to the detail page
        positionlink = scrapy.Field()
        # Position category
        positionType = scrapy.Field()
        # Number of openings
        peopleNum = scrapy.Field()
        # Work location
        workLocation = scrapy.Field()
        # Publication date
        publishTime = scrapy.Field()

Step 3: Go to the spiders directory and write the spider file:

    # -*- coding: utf-8 -*-
    import scrapy
    from tencent.items import TencentItem


    class TencentpositionSpider(scrapy.Spider):
        name = 'tencent'
        allowed_domains = ['tencent.com']
        # Listing pages are addressed by a start offset that grows by 10 per page
        url = "https://hr.tencent.com/position.php?&start="
        offset = 0
        start_urls = [url + str(offset)]

        def parse(self, response):
            for each in response.xpath("//tr[@class='even'] | //tr[@class='odd']"):
                # Create a fresh item for each table row
                item = TencentItem()

                # Position title
                item['positionname'] = each.xpath("./td[1]/a/text()").extract()[0]
                # Link to the detail page
                item['positionlink'] = each.xpath("./td[1]/a/@href").extract()[0]
                # Position category; some rows leave this cell empty,
                # so fall back to an empty string instead of skipping the field
                item['positionType'] = each.xpath("./td[2]/text()").extract_first(default="")
                # Number of openings
                item['peopleNum'] = each.xpath("./td[3]/text()").extract()[0]
                # Work location
                item['workLocation'] = each.xpath("./td[4]/text()").extract()[0]
                # Publication date
                item['publishTime'] = each.xpath("./td[5]/text()").extract()[0]
                # Hand the item to the pipeline
                yield item

            # After a page has been processed, advance the offset by 10, build
            # the next page's URL, and request it with self.parse as callback.
            # The request stays inside the if so the last page is not re-requested.
            if self.offset < 1650:
                self.offset += 10
                yield scrapy.Request(self.url + str(self.offset), callback=self.parse)
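
The paging rule in `parse` (start at offset 0, add 10 per page, stop once the offset reaches 1650) can also be checked on its own, without running the crawler. The following is only a minimal sketch of that arithmetic; the helper name `page_urls` and the constants `PAGE_SIZE` and `MAX_OFFSET` are introduced here for illustration and are not part of the project.

```python
# Sketch of the spider's paging arithmetic; names below are illustrative only.
BASE_URL = "https://hr.tencent.com/position.php?&start="
PAGE_SIZE = 10      # the spider increases the offset by 10 per page
MAX_OFFSET = 1650   # the spider stops requesting once the offset reaches 1650


def page_urls(base_url=BASE_URL, page_size=PAGE_SIZE, max_offset=MAX_OFFSET):
    """Yield each listing-page URL from offset 0 up to and including max_offset."""
    for offset in range(0, max_offset + page_size, page_size):
        yield base_url + str(offset)


urls = list(page_urls())
print(len(urls))   # number of listing pages the spider would visit
print(urls[0])     # first page
print(urls[-1])    # last page
```

If the page count is known up front like this, one possible alternative is to assign the whole list to `start_urls`, which lets Scrapy schedule the pages itself rather than chaining one request per response. Whether that suits the site is a judgment call; the chained version above is what this walkthrough uses.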

Step 4: Write the pipeline file:

    # -*- coding: utf-8 -*-

    # Define your item pipelines here
    #
    # Don't forget to add your pipeline to the ITEM_PIPELINES setting
    # See: https://doc.scrapy.org/en/latest/topics/item-pipeline.html
    import json


    class TencentPipeline(object):
        def __init__(self):
            # Open in binary mode so that writing UTF-8 encoded bytes
            # works on both Python 2 and Python 3
            self.filename = open("tencent.json", "wb")

        def process_item(self, item, spider):
            # One JSON object per line; ensure_ascii=False keeps Chinese text readable
            text = json.dumps(dict(item), ensure_ascii=False) + "\n"
            self.filename.write(text.encode("utf-8"))
            return item

        def close_spider(self, spider):
            # Called once when the spider finishes
            self.filename.close()

Step 5: Edit the settings file:

Set the request headers:

    # Override the default request headers:
    DEFAULT_REQUEST_HEADERS = {
        'User-Agent': 'Mozilla/5.0 (X11; Ubuntu; Linux x86_64; rv:45.0) Gecko/20100101 Firefox/45.0',
        'Accept': 'text/html,application/xhtml+xml,application/xml;q=0.9,*/*;q=0.8',
    }

Enable the item pipeline:

    # Configure item pipelines
    # See https://doc.scrapy.org/en/latest/topics/item-pipeline.html
    ITEM_PIPELINES = {
        'tencent.pipelines.TencentPipeline': 300,
    }

Set the download delay:

    # Wait 1 second between consecutive requests
    DOWNLOAD_DELAY = 1
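
The number 300 in `ITEM_PIPELINES` is an ordering value: when several pipelines are enabled, items pass through them from the lowest value to the highest, and values are customarily chosen in the 0 to 1000 range. The sketch below only illustrates that ordering with plain Python; `DedupPipeline` is a hypothetical second pipeline that does not exist in this project, and `pipeline_order` is a helper made up for the example, not a Scrapy function.

```python
# Illustration of how ITEM_PIPELINES values determine processing order.
ITEM_PIPELINES = {
    "tencent.pipelines.TencentPipeline": 300,
    "tencent.pipelines.DedupPipeline": 100,  # hypothetical, for illustration only
}


def pipeline_order(pipelines):
    """Return pipeline paths sorted by their ordering value, lowest first."""
    ranked = sorted(pipelines.items(), key=lambda pair: pair[1])
    return [path for path, _value in ranked]


for path in pipeline_order(ITEM_PIPELINES):
    print(path)
```

With a single pipeline, as in this project, the exact value does not matter; it only becomes relevant once a second pipeline is added.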

Step 6: Run:

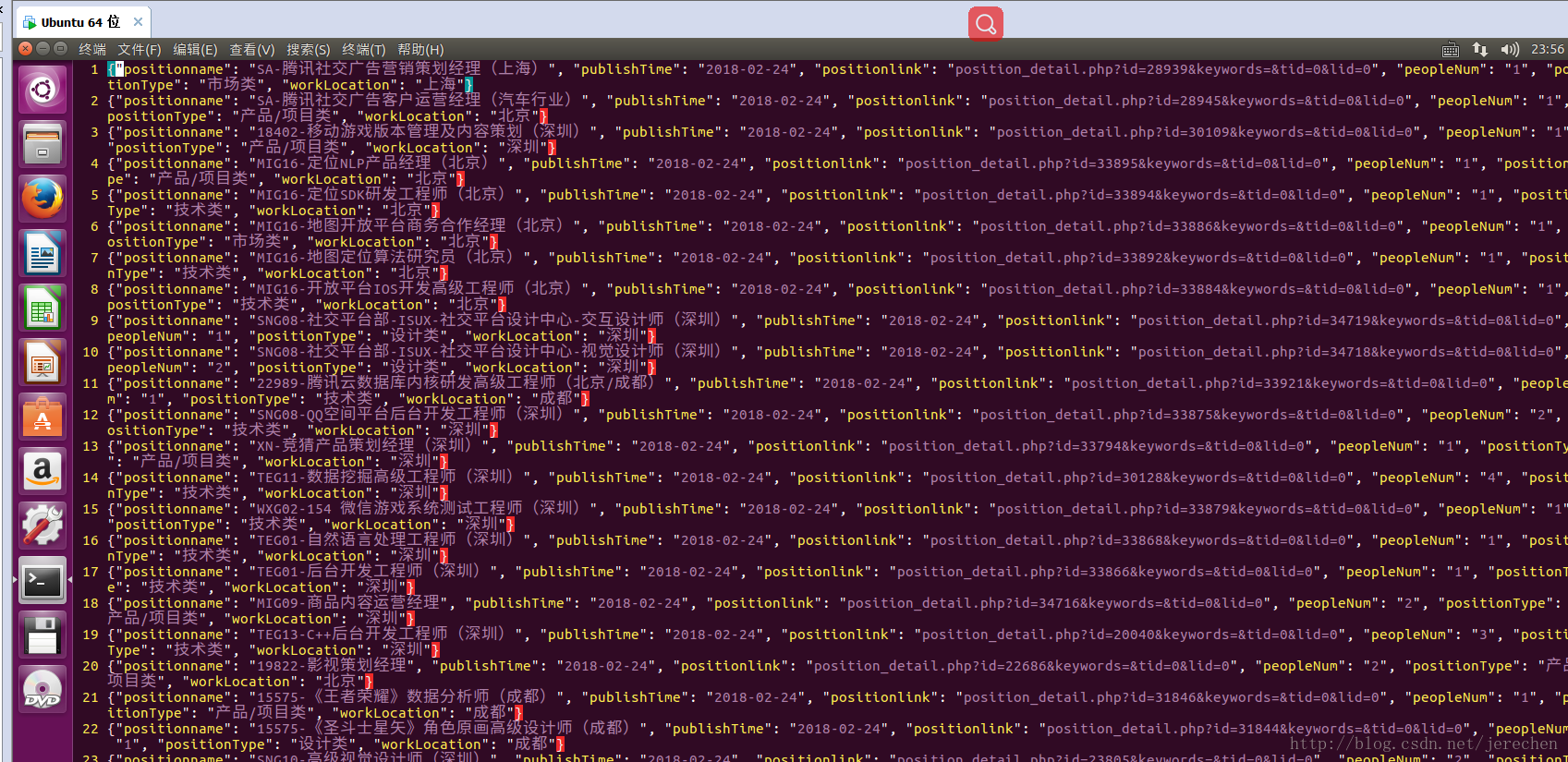

    scrapy crawl tencent

Result:
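
The crawl leaves its output in `tencent.json`, which the pipeline writes as one JSON object per line. The file as a whole is therefore not a single JSON document and has to be read line by line. Below is a minimal sketch of doing so, assuming Python 3; the helper `load_positions` and the two sample records are made up for the demonstration and are not real scraped data.

```python
import json
import os
import tempfile


def load_positions(path):
    """Read a file with one JSON object per line and return a list of dicts."""
    positions = []
    with open(path, "r", encoding="utf-8") as handle:
        for line in handle:
            line = line.strip()
            if line:  # skip blank lines
                positions.append(json.loads(line))
    return positions


# Demo input: made-up records written in the same line-per-item layout
# that the pipeline uses. Replace demo_path with "tencent.json" for real output.
sample_rows = [
    {"positionname": "Example Engineer", "workLocation": "深圳", "peopleNum": "2"},
    {"positionname": "Example Designer", "workLocation": "北京", "peopleNum": "1"},
]
demo_path = os.path.join(tempfile.mkdtemp(), "tencent.json")
with open(demo_path, "w", encoding="utf-8") as handle:
    for row in sample_rows:
        handle.write(json.dumps(row, ensure_ascii=False) + "\n")

positions = load_positions(demo_path)
print(len(positions))
print(positions[0]["positionname"])
```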
