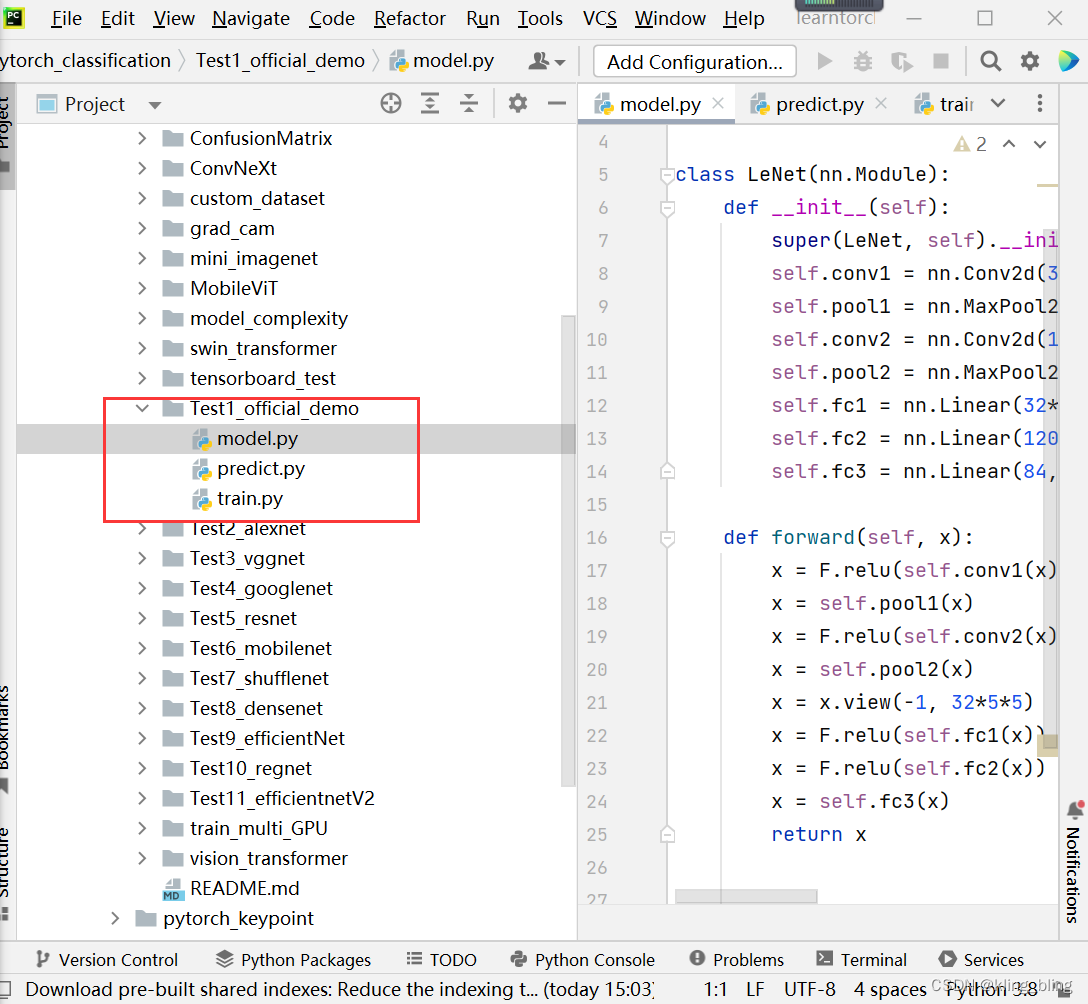

This small folder has three parts: model, predict, and train.

We start with train.

    import torch
    import torchvision
    import torch.nn as nn
    from model import LeNet
    import torch.optim as optim
    import torchvision.transforms as transforms


    def main():
        transform = transforms.Compose(
            [transforms.ToTensor(),
             transforms.Normalize((0.5, 0.5, 0.5), (0.5, 0.5, 0.5))])

        # 50,000 training images
        # Set download=True on first use so the dataset is downloaded automatically
        train_set = torchvision.datasets.CIFAR10(root='./data', train=True,
                                                 download=False, transform=transform)
        train_loader = torch.utils.data.DataLoader(train_set, batch_size=36,
                                                   shuffle=True, num_workers=0)

        # 10,000 validation images
        # Set download=True on first use so the dataset is downloaded automatically
        val_set = torchvision.datasets.CIFAR10(root='./data', train=False,
                                               download=False, transform=transform)
        val_loader = torch.utils.data.DataLoader(val_set, batch_size=5000,
                                                 shuffle=False, num_workers=0)
        val_data_iter = iter(val_loader)
        # Use the built-in next(); newer PyTorch releases no longer provide iterator.next()
        val_image, val_label = next(val_data_iter)

        # classes = ('plane', 'car', 'bird', 'cat',
        #            'deer', 'dog', 'frog', 'horse', 'ship', 'truck')

        net = LeNet()
        loss_function = nn.CrossEntropyLoss()
        optimizer = optim.Adam(net.parameters(), lr=0.001)

        for epoch in range(5):  # loop over the dataset multiple times

            running_loss = 0.0
            for step, data in enumerate(train_loader, start=0):
                # get the inputs; data is a list of [inputs, labels]
                inputs, labels = data

                # zero the parameter gradients
                optimizer.zero_grad()
                # forward + backward + optimize
                outputs = net(inputs)
                loss = loss_function(outputs, labels)
                loss.backward()
                optimizer.step()

                # print statistics
                running_loss += loss.item()
                if step % 500 == 499:  # print every 500 mini-batches
                    with torch.no_grad():
                        outputs = net(val_image)  # [batch, 10]
                        predict_y = torch.max(outputs, dim=1)[1]
                        accuracy = torch.eq(predict_y, val_label).sum().item() / val_label.size(0)

                        print('[%d, %5d] train_loss: %.3f  test_accuracy: %.3f' %
                              (epoch + 1, step + 1, running_loss / 500, accuracy))
                        running_loss = 0.0

        print('Finished Training')

        save_path = './Lenet.pth'
        torch.save(net.state_dict(), save_path)


    if __name__ == '__main__':
        main()

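In the main function, the first step is the transform:

    def main():
        transform = transforms.Compose([transforms.ToTensor(),
                                        transforms.Normalize((0.5, 0.5, 0.5), (0.5, 0.5, 0.5))])

You can add other operations here as you see fit, such as rotation or cropping.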
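To make the effect of `transforms.Normalize((0.5, 0.5, 0.5), (0.5, 0.5, 0.5))` concrete: `ToTensor` scales pixel values into [0, 1], and `Normalize` then applies `(x - mean) / std` to each channel, so the values end up in [-1, 1]. The following is a minimal plain-Python sketch of that arithmetic only; the helper name `normalize` is made up for illustration and is not a torchvision function.

```python
def normalize(value, mean=0.5, std=0.5):
    """Apply the per-channel formula used by Normalize: (x - mean) / std."""
    return (value - mean) / std


# ToTensor maps an 8-bit pixel p to p / 255, i.e. into the range [0, 1].
for pixel in (0, 128, 255):
    scaled = pixel / 255
    print(pixel, round(normalize(scaled), 3))
```

With mean 0.5 and std 0.5, the darkest pixel maps to -1 and the brightest to 1.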

Set up the training data, then wrap it in a loader that serves it in batches:

        train_set = torchvision.datasets.CIFAR10(root='./data', train=True, download=False, transform=transform)
        train_loader = torch.utils.data.DataLoader(train_set, batch_size=36, shuffle=True, num_workers=0)

The validation set can use a different transform from the training set:

        val_set = torchvision.datasets.CIFAR10(root='./data', train=False, download=False, transform=transform)
        val_loader = torch.utils.data.DataLoader(val_set, batch_size=5000, shuffle=False, num_workers=0)
        val_data_iter = iter(val_loader)
        # Each call returns one batch; that batch then serves as the validation data
        val_image, val_label = next(val_data_iter)

        # classes = ('plane', 'car', 'bird', 'cat',
        #            'deer', 'dog', 'frog', 'horse', 'ship', 'truck')

Define the network, the loss function, and the optimizer:

        net = LeNet()
        loss_function = nn.CrossEntropyLoss()  # loss function
        optimizer = optim.Adam(net.parameters(), lr=0.001)

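Start training:

        for epoch in range(5):
            running_loss = 0.0
            for step, data in enumerate(train_loader, start=0):
                inputs, labels = data  # data is a list of [inputs, labels]
                optimizer.zero_grad()
                outputs = net(inputs)
                loss = loss_function(outputs, labels)
                loss.backward()
                optimizer.step()
                running_loss += loss.item()

These are the standard steps of a PyTorch training loop (zero the gradients, forward pass, compute the loss, backward pass, optimizer step) and are worth memorizing.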
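For a sense of scale in this loop: with 50,000 training images and `batch_size=36`, one epoch runs `ceil(50000 / 36)` steps, and the `step % 500 == 499` check used for validation in this script fires only at a few of them. The sketch below works that out in plain Python; `logging_steps` is a hypothetical helper written for this illustration, and it assumes the loader keeps the final partial batch (the `DataLoader` default).

```python
import math


def logging_steps(num_samples, batch_size, every=500):
    """Return the steps per epoch and the 1-based steps where logging fires."""
    steps_per_epoch = math.ceil(num_samples / batch_size)
    fired = [step + 1 for step in range(steps_per_epoch)
             if step % every == every - 1]
    return steps_per_epoch, fired


steps, fired = logging_steps(50000, 36)
print(steps, fired)
```

Under these assumptions an epoch has 1389 steps and the check fires at steps 500 and 1000, so the loss accumulated over the remaining 389 steps is never reported before `running_loss` is reset at the start of the next epoch.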

Every 500 steps, run validation:

                if step % 500 == 499:
                    with torch.no_grad():
                        outputs = net(val_image)
                        predict_y = torch.max(outputs, dim=1)[1]
                        accuracy = torch.eq(predict_y, val_label).sum().item() / val_label.size(0)
                        running_loss = 0.0
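The accuracy line packs two ideas together: `torch.max(outputs, dim=1)[1]` picks, for each sample, the index of the highest score (the predicted class), and `torch.eq(...).sum()` counts how many predictions match the labels. A plain-Python sketch of the same computation follows; the scores and labels are made-up illustrative values, and `argmax_rows` and `accuracy` are hypothetical helper names, not PyTorch functions.

```python
def argmax_rows(scores):
    """Index of the largest value in each row, like torch.max(x, dim=1)[1]."""
    return [max(range(len(row)), key=row.__getitem__) for row in scores]


def accuracy(scores, labels):
    """Fraction of rows whose highest-scoring index equals the label."""
    predictions = argmax_rows(scores)
    correct = sum(int(p == y) for p, y in zip(predictions, labels))
    return correct / len(labels)


# Made-up scores for 4 samples and 3 classes (illustrative values only).
scores = [
    [2.0, 0.1, -1.0],
    [0.3, 0.2, 1.5],
    [-0.5, 0.9, 0.4],
    [1.1, 1.0, 0.2],
]
labels = [0, 2, 2, 0]
print(argmax_rows(scores), accuracy(scores, labels))
```

Here three of the four predictions match their labels, so the accuracy is 0.75.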

Save the model:

        save_path = './Lenet.pth'
        torch.save(net.state_dict(), save_path)

Next, look at predict.py.

    import torch
    import torchvision.transforms as transforms
    from PIL import Image

    from model import LeNet


    def main():
        transform = transforms.Compose(
            [transforms.Resize((32, 32)),
             transforms.ToTensor(),
             transforms.Normalize((0.5, 0.5, 0.5), (0.5, 0.5, 0.5))])

        classes = ('plane', 'car', 'bird', 'cat',
                   'deer', 'dog', 'frog', 'horse', 'ship', 'truck')

        net = LeNet()
        net.load_state_dict(torch.load('Lenet.pth'))

        im = Image.open('1.jpg')
        im = transform(im)  # [C, H, W]
        im = torch.unsqueeze(im, dim=0)  # [N, C, H, W]

        with torch.no_grad():
            outputs = net(im)
            predict = torch.max(outputs, dim=1)[1].numpy()
        # predict is a one-element array; index it before converting to int
        print(classes[int(predict[0])])


    if __name__ == '__main__':
        main()

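    def main():
        # Same preprocessing as training, plus a resize to the 32x32 input size
        transform = transforms.Compose([transforms.Resize((32, 32)), transforms.ToTensor(),
                                        transforms.Normalize((0.5, 0.5, 0.5), (0.5, 0.5, 0.5))])
        classes = ('plane', 'car', 'bird', 'cat', 'deer', 'dog', 'frog', 'horse', 'ship', 'truck')
        # Rebuild the network and load the weights saved by train.py
        net = LeNet()
        net.load_state_dict(torch.load('Lenet.pth'))
        im = Image.open('1.jpg')
        im = transform(im)               # [C, H, W]
        im = torch.unsqueeze(im, dim=0)  # add the batch dimension: [N, C, H, W]
        with torch.no_grad():
            outputs = net(im)
            predict = torch.max(outputs, dim=1)[1].numpy()  # index of the highest score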

Finally, the model.

The model in this article is a simple LeNet.

    import torch.nn as nn
    import torch.nn.functional as F


    class LeNet(nn.Module):
        def __init__(self):
            super(LeNet, self).__init__()
            self.conv1 = nn.Conv2d(3, 16, 5)
            self.pool1 = nn.MaxPool2d(2, 2)
            self.conv2 = nn.Conv2d(16, 32, 5)
            self.pool2 = nn.MaxPool2d(2, 2)
            self.fc1 = nn.Linear(32*5*5, 120)
            self.fc2 = nn.Linear(120, 84)
            self.fc3 = nn.Linear(84, 10)

        def forward(self, x):
            x = F.relu(self.conv1(x))    # input(3, 32, 32) output(16, 28, 28)
            x = self.pool1(x)            # output(16, 14, 14)
            x = F.relu(self.conv2(x))    # output(32, 10, 10)
            x = self.pool2(x)            # output(32, 5, 5)
            x = x.view(-1, 32*5*5)       # output(32*5*5)
            x = F.relu(self.fc1(x))      # output(120)
            x = F.relu(self.fc2(x))      # output(84)
            x = self.fc3(x)              # output(10)
            return x

This network is very simple: it is built from convolution, pooling, and fully connected layers.

    class LeNet(nn.Module):
        def __init__(self):
            super(LeNet, self).__init__()
            self.conv1 = nn.Conv2d(3, 16, 5)
            self.pool1 = nn.MaxPool2d(2, 2)
            self.conv2 = nn.Conv2d(16, 32, 5)
            self.pool2 = nn.MaxPool2d(2, 2)
            self.fc1 = nn.Linear(32*5*5, 120)
            self.fc2 = nn.Linear(120, 84)
            self.fc3 = nn.Linear(84, 10)

        def forward(self, x):
            x = F.relu(self.conv1(x))
            x = self.pool1(x)
            x = F.relu(self.conv2(x))
            x = self.pool2(x)
            x = x.view(-1, 32*5*5)  # reshape: flatten the feature maps for the fully connected layers
            x = F.relu(self.fc1(x))
            x = F.relu(self.fc2(x))
            x = self.fc3(x)
            return x
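The `32*5*5` passed to `fc1` and `view` is not arbitrary: it follows from how each layer changes the spatial size. For a convolution or pooling layer with input size W, kernel size F, padding P, and stride S, the output size is (W - F + 2P) / S + 1. A small plain-Python sketch traces a 32x32 CIFAR-10 image through the layers above; `out_size` is a helper written for this illustration, not a PyTorch function.

```python
def out_size(size, kernel, stride=1, padding=0):
    """Spatial output size of a convolution or pooling layer."""
    return (size + 2 * padding - kernel) // stride + 1


size = 32                                    # CIFAR-10 images are 32x32
size = out_size(size, kernel=5)              # conv1, 5x5 kernel: 28
size = out_size(size, kernel=2, stride=2)    # pool1, 2x2 with stride 2: 14
size = out_size(size, kernel=5)              # conv2, 5x5 kernel: 10
size = out_size(size, kernel=2, stride=2)    # pool2, 2x2 with stride 2: 5
flat_features = 32 * size * size             # conv2 outputs 32 channels
print(size, flat_features)
```

The final feature map is 5x5 with 32 channels, giving the 800 input features that `fc1` expects.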
