Logistic Regression

I. Logistic Regression (MATLAB implementation, basic version)

1. Mathematical background of logistic regression

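1.1 This example is a binary classification problem, so the final prediction is either 0 or 1. The linear combination θᵀx is passed through the sigmoid (logistic) function, which maps any real number into (0, 1). Its output h is read as the probability that y = 1, and thresholding h (typically at 0.5) gives the 0/1 prediction: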

$$
g(z) = \frac{1}{1 + e^{-z}}, \qquad h_\theta(x) = g(\theta^{T}x)
$$

The graph of the function:

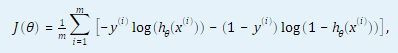

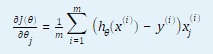
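`costFunction` in section 2.4 calls a `sigmoid` function that the tutorial does not list. Below is a minimal element-wise sketch, written as an anonymous function so it runs as a standalone MATLAB/Octave script. As a file, it would be `sigmoid.m` containing `g = 1 ./ (1 + exp(-z));`. The 0.5 threshold that turns h into a {0, 1} prediction is the usual convention and is an assumption here.

```matlab
% Element-wise sigmoid: ./ makes it work for scalars, vectors and matrices
sigmoid = @(z) 1 ./ (1 + exp(-z));

z = [-10, -1, 0, 1, 10];
h = sigmoid(z);          % every value lies strictly between 0 and 1; sigmoid(0) is exactly 0.5

% Convert probabilities into {0, 1} predictions with a 0.5 threshold (assumed convention)
pred = double(h >= 0.5); % gives [0 0 1 1 1]
disp(h);
disp(pred);
```

Because g is increasing and g(0) = 0.5, the rule h ≥ 0.5 is the same as θᵀx ≥ 0.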

1.2 Compute the cost function and its partial derivatives with respect to θ_j (j = 0, …, n, where n is the number of features).
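For reference, here are the standard cross-entropy cost and its partial derivatives. The code in 2.4 computes exactly these quantities in vectorized form. The notation assumes x_0^{(i)} = 1 for the intercept and h = g(Xθ). In MATLAB, `theta(1)` holds θ_0.

$$
J(\theta) = -\frac{1}{m}\sum_{i=1}^{m}\Big[y^{(i)}\log h_\theta\big(x^{(i)}\big) + \big(1 - y^{(i)}\big)\log\big(1 - h_\theta\big(x^{(i)}\big)\big)\Big]
$$

$$
\frac{\partial J(\theta)}{\partial \theta_j} = \frac{1}{m}\sum_{i=1}^{m}\big(h_\theta\big(x^{(i)}\big) - y^{(i)}\big)\,x_j^{(i)}, \qquad j = 0, \dots, n
$$

In matrix form, the gradient is $\nabla_\theta J = \frac{1}{m}X^{T}(h - y)$, which is the line `grad = (X' * err) / m`.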

2. Code walkthrough

2.1 Preprocess the data to obtain the feature matrix X and the label vector y:

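```matlab
data = load('ex2data1.txt');   % columns 1-2: features, column 3: 0/1 label
[m, n] = size(data);           % m = number of examples, n = number of columns in data

X = data(:, 1:2);              % feature matrix (m x 2)
X = [ones(m, 1), X];           % prepend a column of ones for the intercept term theta_0
y = data(:, 3);                % label vector (m x 1)
```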

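2.2 Split the examples into positive (y = 1) and negative (y = 0) sets and plot them with plotData(X, y), saved as plotData.m. The function expects only the two feature columns. Call it as plotData(X(:, 2:3), y), not with the intercept column included: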

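```matlab
function plotData(X, y)
% PLOTDATA Plot positive examples as '+' and negative examples as 'o'.
% X: m x 2 feature matrix (no intercept column); y: m x 1 vector of 0/1 labels.
figure; hold on;

pos = find(y == 1);   % indices of positive examples
neg = find(y == 0);   % indices of negative examples

plot(X(pos, 1), X(pos, 2), 'k+', 'LineWidth', 2, 'MarkerSize', 7);
plot(X(neg, 1), X(neg, 2), 'ko', 'MarkerFaceColor', 'y', 'MarkerSize', 7);

hold off;
end
```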

2.3 Initialize the parameters theta:

```matlab
initial_theta = zeros(size(X, 2), 1);   % one parameter per column of X, including the intercept
```

2.4 Compute the cost and gradient at the initial theta by calling costFunction(initial_theta, X, y), defined in costFunction.m:

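```matlab
function [J, grad] = costFunction(theta, X, y)
% COSTFUNCTION Cross-entropy cost and gradient for logistic regression.
% Requires sigmoid.m on the path: g = 1 ./ (1 + exp(-z)).
m = length(y);               % number of training examples

h = sigmoid(X * theta);      % m x 1 vector of predicted probabilities

J = -sum(y .* log(h) + (1 - y) .* log(1 - h)) / m;

err = h - y;                 % prediction error (named err to avoid shadowing MATLAB's error())
grad = (X' * err) / m;       % vectorized partial derivatives dJ/dtheta_j

end
```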
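A finite-difference check is a common way to confirm that the vectorized gradient agrees with the cost. The sketch below restates the two formulas from costFunction as anonymous functions so that it runs standalone. The 4-example data set, theta and epsilon are made-up illustration values and do not come from the tutorial.

```matlab
% Central-difference check of the logistic-regression gradient (toy data is hypothetical)
sigmoid = @(z) 1 ./ (1 + exp(-z));
X = [1, 0.5, 1.2; 1, -1.0, 0.3; 1, 2.0, -0.7; 1, 0.1, 0.1];   % first column = intercept
y = [1; 0; 1; 0];
m = length(y);

% Same cost and gradient formulas as costFunction
costJ = @(t) -sum(y .* log(sigmoid(X * t)) + (1 - y) .* log(1 - sigmoid(X * t))) / m;
gradJ = @(t) (X' * (sigmoid(X * t) - y)) / m;

theta = [0.1; -0.2; 0.3];
analytic = gradJ(theta);
numeric = zeros(size(theta));
epsilon = 1e-4;
for j = 1:numel(theta)
    e = zeros(size(theta));
    e(j) = epsilon;
    % (J(theta + e) - J(theta - e)) / (2*epsilon) approximates dJ/dtheta_j
    numeric(j) = (costJ(theta + e) - costJ(theta - e)) / (2 * epsilon);
end

relDiff = norm(numeric - analytic) / norm(numeric + analytic);
fprintf('relative difference: %g\n', relDiff);   % should be very small
```

At theta = 0, every h equals 0.5, so the cost is log 2 ≈ 0.693 for any data set. This makes it a quick sanity check for the initial cost computed in 2.4.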

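2.5 Use fminunc to obtain the optimal theta and its cost. You pass in an initial theta and a function handle (via @) that returns both J and its gradient. fminunc then searches for the theta that minimizes J and also returns the cost at that point. You therefore do not need to code the update theta = theta - alpha * grad yourself; you only write J and the gradient.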

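```matlab
% Set fminunc options: use the gradient returned by costFunction ('GradObj' on), at most 400 iterations.
% (optimoptions/fminunc need the Optimization Toolbox; in Octave, optimset('GradObj', 'on', 'MaxIter', 400) is the usual equivalent.)
options = optimoptions(@fminunc, 'Algorithm', 'quasi-newton', 'GradObj', 'on', 'MaxIter', 400);

% Run fminunc to find the optimal theta;
% it returns both theta and the corresponding cost
[theta, cost] = fminunc(@(t) costFunction(t, X, y), initial_theta, options);
```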
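For comparison, the manual loop that fminunc saves you from writing is plain batch gradient descent. This sketch uses a hypothetical learning rate alpha = 0.1 and toy data. Unlike fminunc, it needs alpha tuned by hand and usually needs more iterations on real data.

```matlab
% Batch gradient descent for logistic regression (toy data and alpha are hypothetical)
sigmoid = @(z) 1 ./ (1 + exp(-z));
X = [1, 0.5, 1.2; 1, -1.0, 0.3; 1, 2.0, -0.7; 1, 0.1, 0.1; 1, 1.5, 1.0; 1, -0.5, -1.0];
y = [1; 0; 1; 0; 1; 0];
m = length(y);

costJ = @(t) -sum(y .* log(sigmoid(X * t)) + (1 - y) .* log(1 - sigmoid(X * t))) / m;
gradJ = @(t) (X' * (sigmoid(X * t) - y)) / m;

theta = zeros(size(X, 2), 1);
alpha = 0.1;              % learning rate (hypothetical choice)
numIters = 400;           % same budget as the MaxIter option above
J_history = zeros(numIters, 1);
for k = 1:numIters
    theta = theta - alpha * gradJ(theta);   % updates all theta(j) simultaneously
    J_history(k) = costJ(theta);
end
fprintf('cost: %.4f -> %.4f\n', costJ(zeros(size(theta))), J_history(end));
```

Plotting J_history against the iteration number is a simple way to check alpha. If alpha is small enough, the cost decreases at every iteration.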

2.6 Plot the decision boundary by defining plotDecisionBoundary(theta, X, y) in plotDecisionBoundary.m:

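```matlab
function plotDecisionBoundary(theta, X, y)
% Plot the data (feature columns only, without the intercept) and the decision boundary
plotData(X(:, 2:3), y);
hold on

if size(X, 2) <= 3
    % Linear boundary: two points are enough to draw the straight line
    plot_x = [min(X(:, 2)) - 2, max(X(:, 2)) + 2];
    % x2 on the line theta(1) + theta(2)*x1 + theta(3)*x2 = 0
    plot_y = (-1 ./ theta(3)) .* (theta(2) .* plot_x + theta(1));
    plot(plot_x, plot_y)
    legend('Admitted', 'Not admitted', 'Decision Boundary')
    axis([30, 100, 30, 100])
else
    % Non-linear boundary (more features): evaluate on a grid
    u = linspace(-1, 1.5, 50);
    v = linspace(-1, 1.5, 50);
    z = zeros(length(u
```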
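The straight line drawn in the `if` branch follows from the 0.5 threshold. Since g is increasing and g(0) = 0.5, h_θ(x) = 0.5 exactly when θᵀx = 0. With two features and x_0 = 1, solving for x_2 gives the formula used for `plot_y`, where MATLAB's `theta(1)`, `theta(2)` and `theta(3)` are θ_0, θ_1 and θ_2:

$$
\theta_0 + \theta_1 x_1 + \theta_2 x_2 = 0 \quad\Longrightarrow\quad x_2 = -\frac{1}{\theta_2}\left(\theta_1 x_1 + \theta_0\right)
$$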
