My environment

- Language: Python 3.11

- TensorFlow version: 2.14.0

Code

from tensorflow import keras

from tensorflow.keras import layers, models

import os, PIL, pathlib

import matplotlib.pyplot as plt

import tensorflow as tf

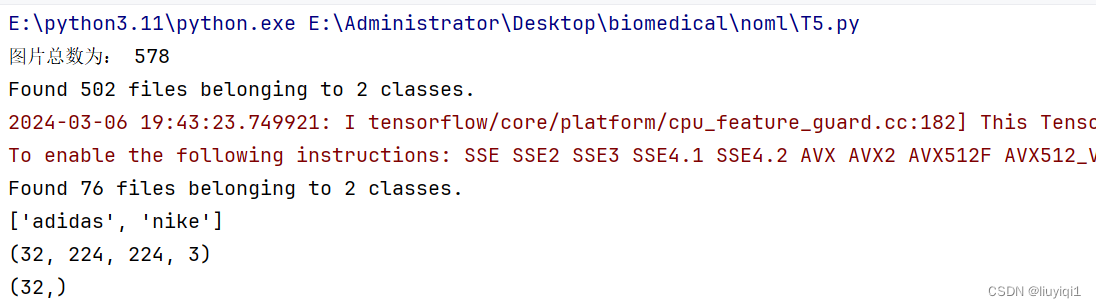

data_dir = "E:/Administrator/Desktop/biomedical/noml/deeplearning/data/46-data"

data_dir = pathlib.Path(data_dir)

image_count = len(list(data_dir.glob('*/*/*.jpg')))

print("Total number of images:", image_count)
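The glob pattern `'*/*/*.jpg'` used above only matches `.jpg` files that sit exactly two directory levels below `data_dir` (for example `train/nike/1.jpg`). The sketch below illustrates this on a throwaway directory tree; the file names and the `adidas` folder are hypothetical and are not taken from the real dataset.

```python
import pathlib
import tempfile

# Hypothetical miniature dataset tree, created in a temporary directory.
with tempfile.TemporaryDirectory() as tmp:
    root = pathlib.Path(tmp)
    for rel in ["train/nike/1.jpg", "train/adidas/2.jpg", "test/nike/3.jpg",
                "train/readme.txt", "stray.jpg"]:
        path = root / rel
        path.parent.mkdir(parents=True, exist_ok=True)
        path.touch()

    # Same pattern as in the post: <split>/<class>/<file>.jpg
    matched = sorted(p.relative_to(root).as_posix() for p in root.glob("*/*/*.jpg"))
    image_count = len(matched)

print(matched)
print(image_count)
```

Files at other depths (`stray.jpg`) or with other extensions (`readme.txt`) are not counted, so the total covers both the `train` and `test` folders but nothing else.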

roses = list(data_dir.glob('train/nike/*.jpg'))

PIL.Image.open(str(roses[0]))

batch_size = 32

img_height = 224

img_width = 224

train_ds = tf.keras.preprocessing.image_dataset_from_directory(
    "E:/Administrator/Desktop/biomedical/noml/deeplearning/data/46-data/train/",
    seed=123,
    image_size=(img_height, img_width),
    batch_size=batch_size)

val_ds = tf.keras.preprocessing.image_dataset_from_directory(
    "E:/Administrator/Desktop/biomedical/noml/deeplearning/data/46-data/test/",
    seed=123,
    image_size=(img_height, img_width),
    batch_size=batch_size)

class_names = train_ds.class_names

print(class_names)

plt.figure(figsize=(20, 10))

for images, labels in train_ds.take(1):
    for i in range(20):
        ax = plt.subplot(5, 10, i + 1)
        plt.imshow(images[i].numpy().astype("uint8"))
        plt.title(class_names[labels[i]])
        plt.axis("off")

for image_batch, labels_batch in train_ds:
    print(image_batch.shape)
    print(labels_batch.shape)
    break

AUTOTUNE = tf.data.AUTOTUNE

train_ds = train_ds.cache().shuffle(1000).prefetch(buffer_size=AUTOTUNE)

val_ds = val_ds.cache().prefetch(buffer_size=AUTOTUNE)

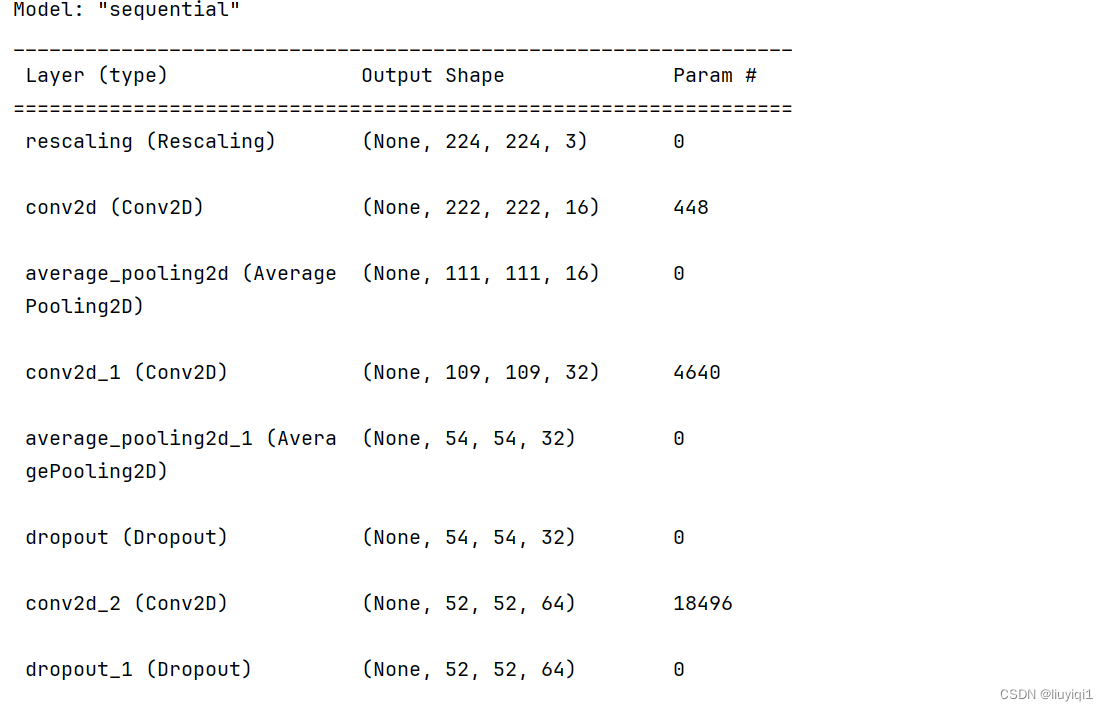

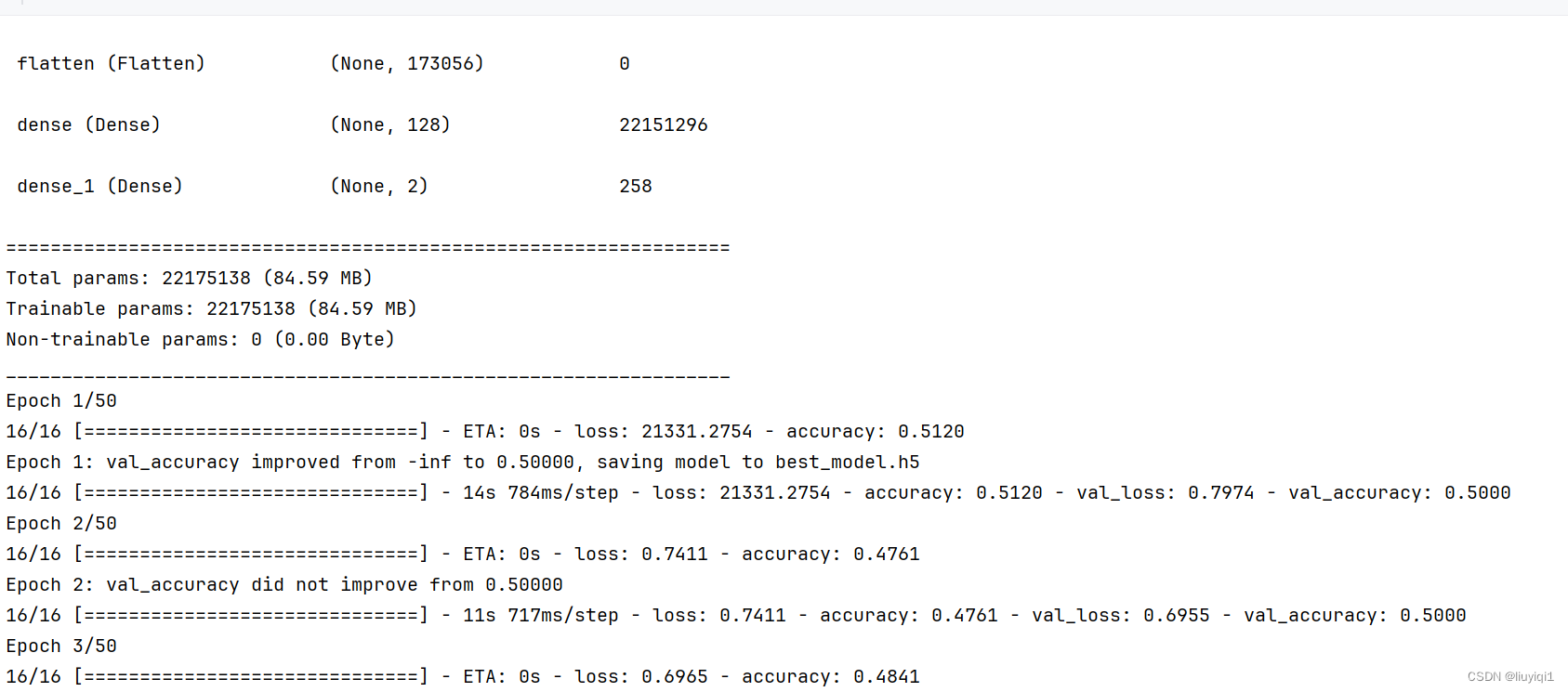

model = models.Sequential([
    # Scale pixel values from [0, 255] to [0, 1]
    layers.Rescaling(1. / 255, input_shape=(img_height, img_width, 3)),
    layers.Conv2D(16, (3, 3), activation='relu'),
    layers.AveragePooling2D((2, 2)),
    layers.Conv2D(32, (3, 3), activation='relu'),
    layers.AveragePooling2D((2, 2)),
    layers.Dropout(0.3),
    layers.Conv2D(64, (3, 3), activation='relu'),
    layers.Dropout(0.3),
    layers.Flatten(),
    layers.Dense(128, activation='relu'),
    # Output layer returns raw logits, one per class
    layers.Dense(len(class_names))
])

model.summary()

# Set the initial learning rate

initial_learning_rate = 0.1

lr_schedule = tf.keras.optimizers.schedules.ExponentialDecay(
    initial_learning_rate,
    decay_steps=10,
    decay_rate=0.92,
    staircase=True)

# Pass the exponentially decaying learning rate to the optimizer

optimizer = tf.keras.optimizers.Adam(learning_rate=lr_schedule)

model.compile(optimizer=optimizer,
              loss=tf.keras.losses.SparseCategoricalCrossentropy(from_logits=True),
              metrics=['accuracy'])
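To get a feel for what this schedule does, it can help to work out the numbers by hand. The following is a minimal sketch in plain Python, not TensorFlow's implementation; it assumes the documented staircase formula `lr = initial_lr * decay_rate ** floor(step / decay_steps)`, and the function name `staircase_exponential_decay` is made up for illustration. Note that `step` counts optimizer updates (batches), not epochs.

```python
def staircase_exponential_decay(step, initial_lr=0.1, decay_steps=10, decay_rate=0.92):
    """Learning rate after `step` optimizer updates (staircase variant).

    Assumed formula: initial_lr * decay_rate ** floor(step / decay_steps).
    """
    return initial_lr * decay_rate ** (step // decay_steps)


# Learning rate at a few step counts for the settings used in this post.
for step in (0, 9, 10, 100, 1000):
    print(step, staircase_exponential_decay(step))

# The same schedule with the smaller initial rates tried later in the post.
for initial_lr in (0.1, 0.001, 0.0001):
    print(initial_lr, staircase_exponential_decay(100, initial_lr=initial_lr))
```

With `decay_steps=10`, the rate stays constant for 10 batches and is then multiplied by 0.92, so it shrinks quickly when an epoch contains many batches. The initial value only scales the whole curve, which is why changing it from 0.1 to 0.001 or 0.0001 can change training behaviour so much.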

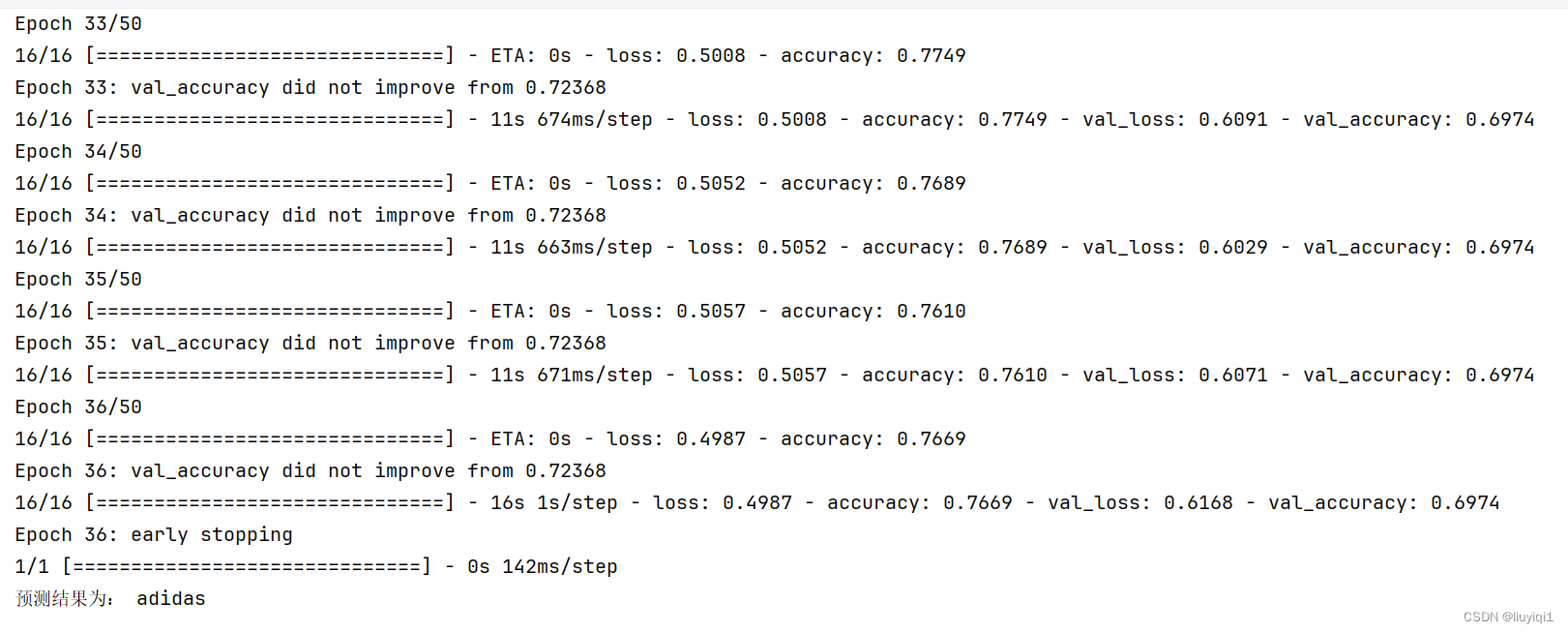

from tensorflow.keras.callbacks import ModelCheckpoint, EarlyStopping

epochs = 50

# Save the weights of the best model

checkpointer = ModelCheckpoint('best_model.h5',
                               monitor='val_accuracy',
                               verbose=1,
                               save_best_only=True,
                               save_weights_only=True)

# Set up early stopping

earlystopper = EarlyStopping(monitor='val_accuracy',
                             min_delta=0.001,
                             patience=20,
                             verbose=1)

history = model.fit(train_ds,
                    validation_data=val_ds,
                    epochs=epochs,
                    callbacks=[checkpointer, earlystopper])
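The early-stopping rule configured above can be approximated in a few lines of plain Python. This is a simplified sketch of the idea, not the Keras source: it assumes a metric where larger is better (such as `val_accuracy`), treats an epoch as an improvement only if it beats the best value so far by more than `min_delta`, and stops once `patience` consecutive epochs pass without improvement. The function name `early_stopping_epoch` and the sample accuracy values are hypothetical.

```python
def early_stopping_epoch(val_accuracies, min_delta=0.001, patience=20):
    """Return the 0-based epoch at which training would stop, or None.

    An epoch counts as an improvement only if it exceeds the best value
    seen so far by more than `min_delta`.
    """
    best = float("-inf")
    wait = 0  # consecutive epochs without improvement
    for epoch, current in enumerate(val_accuracies):
        if current - min_delta > best:
            best = current
            wait = 0
        else:
            wait += 1
            if wait >= patience:
                return epoch
    return None


# Hypothetical validation accuracies; a small patience keeps the example short.
history_values = [0.50, 0.60, 0.6005, 0.60, 0.59]
stop_epoch = early_stopping_epoch(history_values, min_delta=0.001, patience=3)
print(stop_epoch)
```

In this example the gain from 0.60 to 0.6005 is smaller than `min_delta`, so it does not reset the counter. With the post's settings (`patience=20`, `epochs=50`), training would end early only after 20 epochs in a row without a gain of more than 0.001 in validation accuracy.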

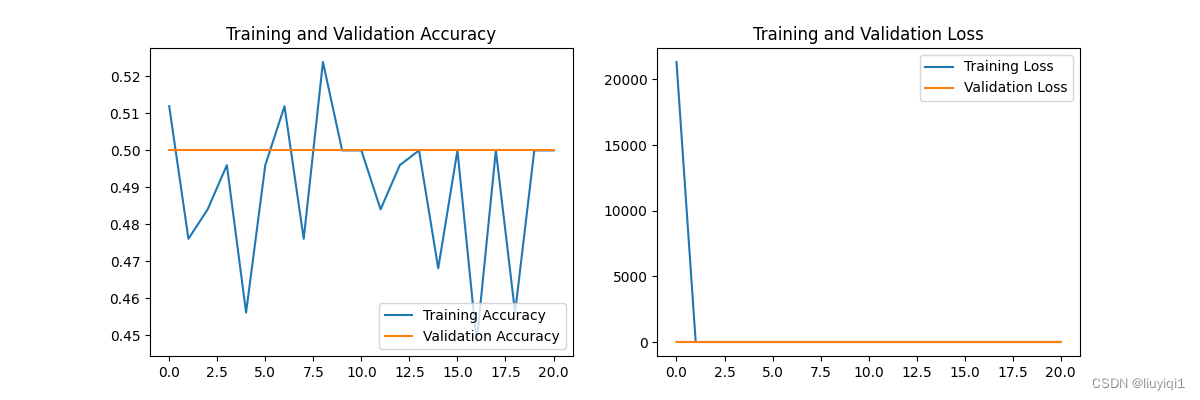

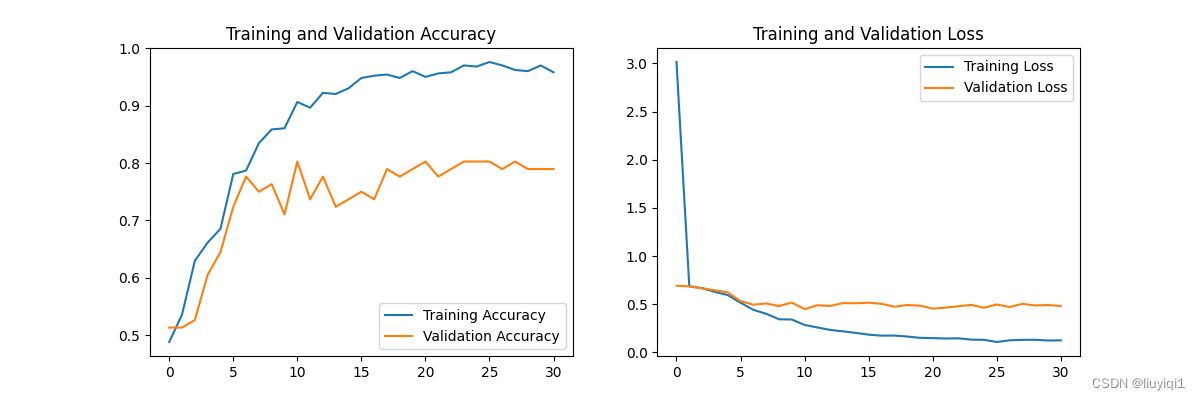

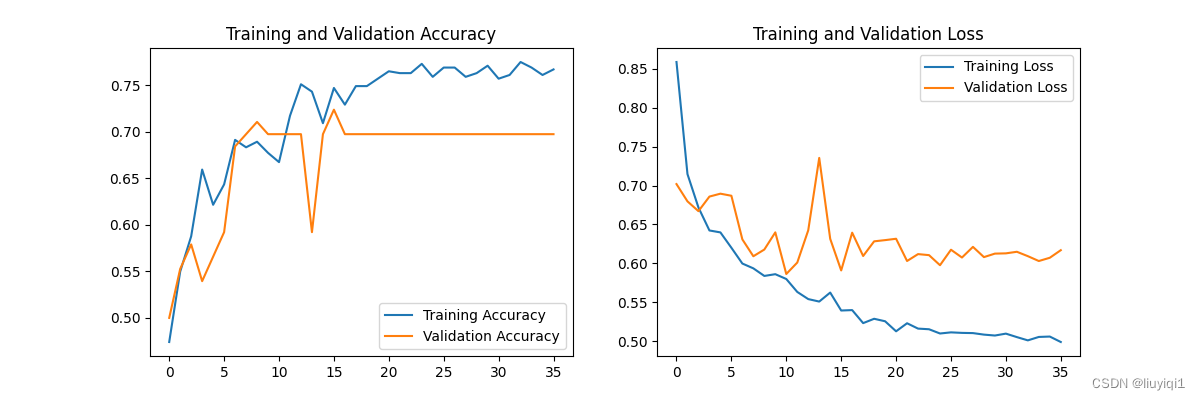

acc = history.history['accuracy']

val_acc = history.history['val_accuracy']

loss = history.history['loss']

val_loss = history.history['val_loss']

epochs_range = range(len(loss))

plt.figure(figsize=(12, 4))

plt.subplot(1, 2, 1)

plt.plot(epochs_range, acc, label='Training Accuracy')

plt.plot(epochs_range, val_acc, label='Validation Accuracy')

plt.legend(loc='lower right')

plt.title('Training and Validation Accuracy')

plt.subplot(1, 2, 2)

plt.plot(epochs_range, loss, label='Training Loss')

plt.plot(epochs_range, val_loss, label='Validation Loss')

plt.legend(loc='upper right')

plt.title('Training and Validation Loss')

plt.show()

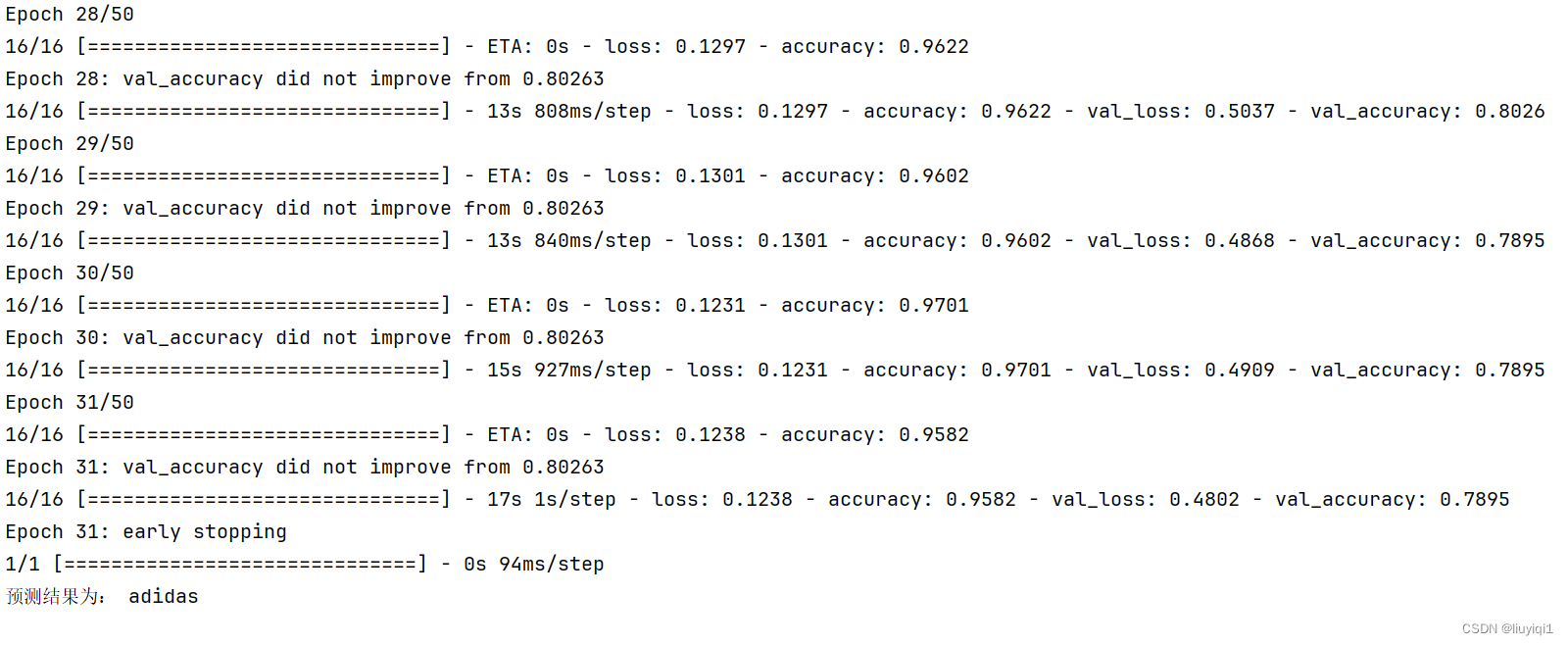

# Load the weights of the best-performing model

model.load_weights('best_model.h5')

from PIL import Image

import numpy as np

# img = Image.open("./45-data/Monkeypox/M06_01_04.jpg")

img = Image.open("E:/Administrator/Desktop/biomedical/noml/deeplearning/data/46-data/test/nike/1.jpg")  # choose the image you want to predict here

image = tf.image.resize(img, [img_height, img_width])

img_array = tf.expand_dims(image, 0) #/255.0

predictions = model.predict(img_array)

print("Predicted class:", class_names[np.argmax(predictions)])

Results

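After setting the learning rate to 0.001

After setting the learning rate to 0.0001

Personal summary: I learned how to set the learning rate dynamically and how to stop training early. Early stopping ends training once validation accuracy stops improving, which avoids wasting time on unnecessary epochs.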
