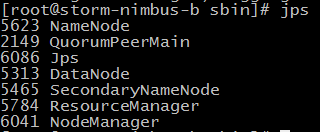

1. Start the Hadoop services first

./hadoop-daemon.sh start namenode

./hadoop-daemon.sh start datanode

./hadoop-daemon.sh start secondarynamenode

./yarn-daemon.sh start resourcemanager

./yarn-daemon.sh start nodemanager
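The five commands above can be wrapped in a small loop. The sketch below assumes the scripts live in the sbin directory of the Hadoop install (as they do in Hadoop 2.x); `HADOOP_SBIN`, `DRY_RUN` and `start_daemons` are names invented for this example. By default it only prints the commands it would run.

```shell
#!/bin/sh
# Sketch: start the five daemons from step 1 in the same order.
# Assumptions: HADOOP_SBIN is the directory holding hadoop-daemon.sh and
# yarn-daemon.sh; DRY_RUN is a hypothetical switch for this example.
HADOOP_SBIN="${HADOOP_SBIN:-/opt/hadoop-2.4.1/sbin}"
DRY_RUN="${DRY_RUN:-1}"   # 1 = only print the commands, 0 = actually run them

start_daemons() {
    # "script daemon" pairs, in the order used in the manual steps
    for pair in \
        "hadoop-daemon.sh namenode" \
        "hadoop-daemon.sh datanode" \
        "hadoop-daemon.sh secondarynamenode" \
        "yarn-daemon.sh resourcemanager" \
        "yarn-daemon.sh nodemanager"
    do
        script=${pair% *}    # text before the space
        daemon=${pair#* }    # text after the space
        if [ "$DRY_RUN" = "1" ]; then
            echo "$HADOOP_SBIN/$script start $daemon"
        else
            "$HADOOP_SBIN/$script" start "$daemon" || return 1
        fi
    done
}

start_daemons
```

After a real run (`DRY_RUN=0`), `jps` should list NameNode, DataNode, SecondaryNameNode, ResourceManager and NodeManager.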

2. Configure Hive

Configure the JDK and Hadoop environment variables

vi /etc/profile   # add the environment variables

export JAVA_HOME=/opt/jdk1.7.0_67

export HADOOP_HOME=/opt/hadoop-2.4.1

export PATH=$PATH:$JAVA_HOME/bin:$HADOOP_HOME/bin

source /etc/profile   # apply the environment variables

echo $JAVA_HOME   # check that the variable took effect
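Printing the variable only shows that it is set, not that it points at a real install. A slightly stricter check might look like the following sketch; `check_home` is a hypothetical helper, and the test for a `bin` subdirectory is an assumption about what a usable JDK or Hadoop directory contains.

```shell
#!/bin/sh
# Sketch: verify that a variable names a directory containing bin/.
# check_home is a hypothetical helper, not part of the JDK or Hadoop.
check_home() {
    name=$1
    eval "value=\${$name:-}"          # read the variable whose name is in $name
    if [ -z "$value" ]; then
        echo "$name is not set"
        return 1
    fi
    if [ ! -d "$value/bin" ]; then
        echo "$name=$value has no bin directory"
        return 1
    fi
    echo "$name=$value looks fine"
}

check_home JAVA_HOME   || echo "check /etc/profile, then run: source /etc/profile"
check_home HADOOP_HOME || echo "check /etc/profile, then run: source /etc/profile"
```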

Configure the MySQL metastore

vi hive-site.xml

<configuration>

<property>

<name>javax.jdo.option.ConnectionURL</name>

<value>jdbc:mysql://192.168.17.1:3306/hive?createDatabaseIfNotExist=true</value>

</property>

<property>

<name>javax.jdo.option.ConnectionDriverName</name>

<value>com.mysql.jdbc.Driver</value>

</property>

<property>

<name>javax.jdo.option.ConnectionUserName</name>

<value>root</value>

</property>

<property>

<name>javax.jdo.option.ConnectionPassword</name>

<value>123</value>

</property>

</configuration>
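Hive can only use this metastore if the MySQL host and port in the ConnectionURL are reachable from the Hive machine. As a rough aid, the sketch below extracts host and port from a `jdbc:mysql://` URL so they can be checked before Hive is started; `jdbc_host_port` is a hypothetical helper, and falling back to 3306 when no port is given is an assumption based on MySQL's default port.

```shell
#!/bin/sh
# Sketch: pull host and port out of a jdbc:mysql:// URL.
# jdbc_host_port is a hypothetical helper for this example.
jdbc_host_port() {
    rest=${1#jdbc:mysql://}     # drop the scheme prefix
    hostport=${rest%%/*}        # keep everything before the first "/"
    host=${hostport%%:*}        # text before the first ":"
    port=${hostport#*:}         # text after the first ":" (unchanged if none)
    if [ "$port" = "$hostport" ]; then
        port=3306               # no explicit port: assume the MySQL default
    fi
    echo "$host $port"
}

jdbc_host_port 'jdbc:mysql://192.168.17.1:3306/hive?createDatabaseIfNotExist=true'
```

With the values printed here, a manual login such as `mysql -h 192.168.17.1 -P 3306 -u root -p` from the Hive machine confirms that the account in hive-site.xml can actually connect.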

Place the MySQL JDBC driver jar in Hive's lib directory

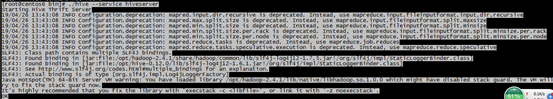
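A quick way to confirm the driver is in place is sketched below. It assumes the jar name starts with `mysql-connector` (the usual Connector/J naming) and that `HIVE_HOME` points at the Hive install; the `/opt/hive` fallback and the `has_mysql_driver` helper are made up for this example.

```shell
#!/bin/sh
# Sketch: report whether a MySQL connector jar is present in a lib directory.
# Assumption: the jar file name starts with "mysql-connector".
# has_mysql_driver is a hypothetical helper.
has_mysql_driver() {
    for jar in "$1"/mysql-connector*.jar; do
        # if the glob matched nothing, $jar is the literal pattern and -f fails
        [ -f "$jar" ] && return 0
    done
    return 1
}

HIVE_LIB="${HIVE_HOME:-/opt/hive}/lib"   # /opt/hive is an assumed location
if has_mysql_driver "$HIVE_LIB"; then
    echo "MySQL driver found in $HIVE_LIB"
else
    echo "no MySQL driver in $HIVE_LIB; copy the connector jar there"
fi
```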

3. Start the Hive server

./hive --service hiveserver

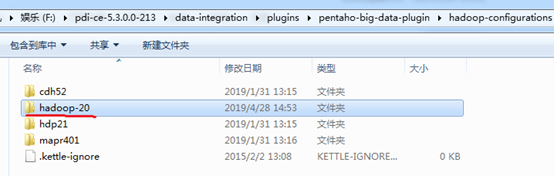

4. Configure Kettle

Edit the plugin configuration file (plugin.properties) in the following directory:

F:\pdi-ce-5.3.0.0-213\data-integration\plugins\pentaho-big-data-plugin

Set the active.hadoop.configuration property:

active.hadoop.configuration=hadoop-20

hadoop-20 is a folder under data-integration\plugins\pentaho-big-data-plugin\hadoop-configurations
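The property can be edited by hand in any text editor. For a scripted setup, the change might be sketched as below, assuming a Unix-like shell is available (on the Windows install used in this post, that would mean something like Git Bash or Cygwin). `set_active_config` is a hypothetical helper, not a Kettle tool.

```shell
#!/bin/sh
# Sketch: set active.hadoop.configuration in a properties file.
# set_active_config is a hypothetical helper; it rewrites the file via a temp copy.
set_active_config() {
    file=$1
    name=$2
    tmp="$file.tmp"
    if grep -q '^active\.hadoop\.configuration=' "$file"; then
        # replace the existing line, leave every other line untouched
        sed "s|^active\.hadoop\.configuration=.*|active.hadoop.configuration=$name|" "$file" > "$tmp"
    else
        # property missing: keep the file content and append the line
        { cat "$file"; echo "active.hadoop.configuration=$name"; } > "$tmp"
    fi
    mv "$tmp" "$file"
}

# Example call, run from the pentaho-big-data-plugin directory:
#   set_active_config plugin.properties hadoop-20
```

The value must match the name of a folder under hadoop-configurations, otherwise Kettle has no configuration to load.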

Copy the cluster configuration files into hadoop-20

These include hive-site (Hive's configuration file)

and yarn-site, core-site, and hdfs-site (Hadoop's configuration files)

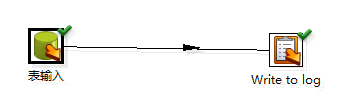
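To double-check that nothing was left out, a small script can list which of the four files are still missing from the target folder. This is only a sketch: `missing_site_files` is a hypothetical helper, the `.xml` file names are the standard Hadoop and Hive ones, and a Unix-like shell is assumed.

```shell
#!/bin/sh
# Sketch: print the site files that are not yet present in a folder.
# missing_site_files is a hypothetical helper for this example.
missing_site_files() {
    dir=$1
    for f in hive-site.xml core-site.xml hdfs-site.xml yarn-site.xml; do
        [ -f "$dir/$f" ] || echo "$f"
    done
}

# Pass the hadoop-20 folder as the first argument; defaults to the current directory.
missing_site_files "${1:-.}"
```

No output means all four files are in place.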

Create a connection in Kettle and test it

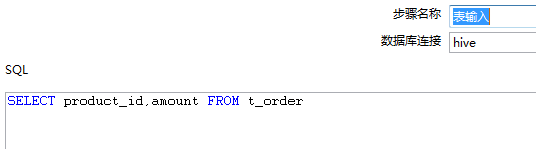

Run the relevant steps
