1. Download and extract HBase

tar -zxvf hbase-0.98.15-hadoop2-bin.tar.gz

2. Configure the environment variables
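The variables themselves are not listed here. A minimal sketch, assuming HBase was extracted to /usr/local/hbase-0.98.15-hadoop2 (an assumed path; set HBASE_HOME to your actual directory). To make the settings permanent, append these lines to /etc/profile or ~/.bashrc and `source` that file:

```shell
# Assumed install location; change it to wherever the tarball was extracted.
export HBASE_HOME=/usr/local/hbase-0.98.15-hadoop2
# Put the HBase scripts (start-hbase.sh, hbase, ...) on the PATH.
export PATH="$HBASE_HOME/bin:$PATH"
```

The later steps also use $HADOOP_HOME, which is assumed to be set already by the Hadoop installation.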

3. Modify hbase-env.sh

Edit conf/hbase-env.sh:

export JAVA_HOME=/usr/local/jdk1.7.0_80

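### HBASE_MANAGES_ZK: whether HBase manages its own ZooKeeper instance. false means HBase uses the separately started ZooKeeper ensemble instead of its bundled ZooKeeper.

export HBASE_MANAGES_ZK=false

### Put Hadoop's configuration directory on the HBase classpath so HBase picks up the HDFS client settings (e.g. the nameservice "cluster" used in hbase.rootdir)

export HBASE_CLASSPATH=/usr/local/hadoop-2.6.0/etc/hadoop

## The maximum amount of heap to use for the daemons, set to 4G here. Leave it unset if the machine does not have enough memory; the default is left to the JVM.

export HBASE_HEAPSIZE=4G

4. Modify hbase-site.xml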
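hbase.rootdir must use the same host and port (here the HDFS nameservice `cluster`) as the default filesystem in Hadoop's core-site.xml. On a node with Hadoop installed, `hdfs getconf -confKey fs.defaultFS` prints that value. As a dependency-free alternative, a minimal sketch with a hypothetical helper, `get_xml_property`, that does plain text matching rather than real XML parsing and assumes each `<value>` appears on the same line as its `<name>` or after it:

```shell
# get_xml_property FILE NAME
# Hypothetical helper: print the <value> that follows <name>NAME</name> in a
# Hadoop-style *-site.xml. Text matching only, not a real XML parser.
get_xml_property() {
  awk -v key="$2" '
    index($0, "<name>" key "</name>") { found = 1 }
    found && /<value>/ {
      sub(/.*<value>/, "")
      sub(/<\/value>.*/, "")
      print
      exit
    }
  ' "$1"
}

# Example (path assumed): the URI that hbase.rootdir has to start with.
# Hadoop 2 names the key fs.defaultFS; older configs use fs.default.name.
# get_xml_property "$HADOOP_HOME/etc/hadoop/core-site.xml" fs.defaultFS
```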

Edit conf/hbase-site.xml:

<configuration>

<!-- Must use the same host and port as fs.default.name (fs.defaultFS in Hadoop 2) in $HADOOP_HOME/etc/hadoop/core-site.xml -->

<property>

<name>hbase.rootdir</name>

<value>hdfs://cluster/hbase</value>

</property>

<!-- Fully distributed mode -->

<property>

<name>hbase.cluster.distributed</name>

<value>true</value>

</property>

<!-- ZooKeeper ensemble hosts -->

<property>

<name>hbase.zookeeper.quorum</name>

<value>node01,node02,node03</value>

</property>

<property>

<name>hbase.master.port</name>

<value>60000</value>

</property>

<property>

<name>hbase.zookeeper.property.clientPort</name>

<value>2181</value>

</property>

<property>

<name>zookeeper.session.timeout</name>

<value>180000</value>

</property>

<property>

<name>hbase.regionserver.restart.on.zk.expire</name>

<value>true</value>

</property>

<property>

<name>hbase.rpc.timeout</name>

<value>180000</value>

</property>

<property>

<name>hbase.client.scanner.timeout.period</name>

<value>180000</value>

<description>Replaces the deprecated hbase.regionserver.lease.period; timeout in ms for each client scan call</description>

</property>

</configuration>

5. Modify regionservers
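conf/regionservers lists one RegionServer hostname per line; start-hbase.sh connects to each listed host over SSH to start an HRegionServer there, so passwordless SSH from the master to these hosts is assumed. A minimal sketch for writing the file from the shell (`write_regionservers` is a hypothetical helper name):

```shell
# write_regionservers CONF_DIR HOST...
# Hypothetical helper: overwrite CONF_DIR/regionservers with one hostname per line.
write_regionservers() {
  conf_dir=$1
  shift
  printf '%s\n' "$@" > "$conf_dir/regionservers"
}

# Example for this cluster:
# write_regionservers "$HBASE_HOME/conf" node01 node02 node03
```

For this cluster the file contains: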

node01

node02

node03

6. Replace the mismatched jar files

## Copy htrace-core-3.0.4.jar from $HADOOP_HOME/share/hadoop/common/lib to $HBASE_HOME/lib.

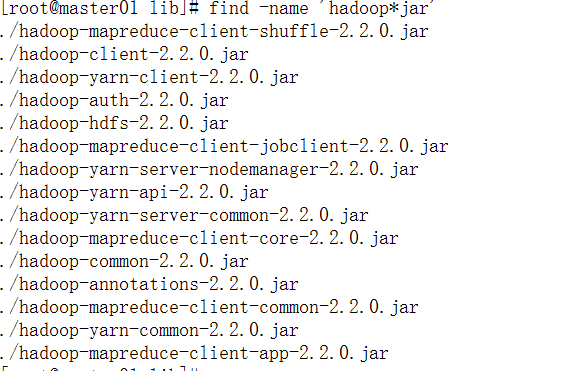

cp $HADOOP_HOME/share/hadoop/common/lib/htrace-core-3.0.4.jar $HBASE_HOME/lib

Go to HBase's lib directory and check the versions of the Hadoop jars:

cd $HBASE_HOME/lib

find -name 'hadoop*jar'

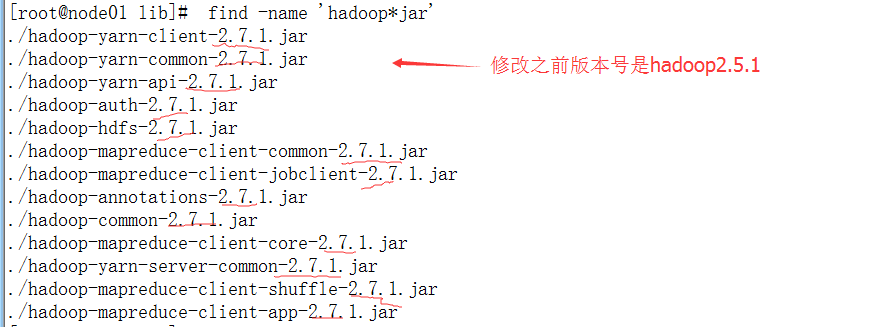

The versions do not match the Hadoop cluster's, so the jars in hbase/lib must be replaced with the ones from the Hadoop installation directory.

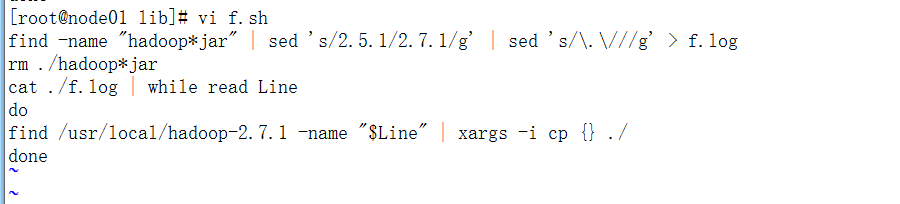
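To compare versions without reading the whole listing, the version suffixes can be pulled out of the file names. A minimal sketch, assuming jar names of the form hadoop-&lt;module&gt;-&lt;version&gt;.jar (jars with extra suffixes such as -tests are skipped); `bundled_hadoop_versions` is a hypothetical helper name:

```shell
# bundled_hadoop_versions [DIR]
# Print each distinct version found in hadoop-*.jar file names under DIR (default: .).
bundled_hadoop_versions() {
  find "${1:-.}" -name 'hadoop-*.jar' |
    sed -n -E 's/.*-([0-9]+(\.[0-9]+)+)\.jar$/\1/p' |
    sort -u
}

# Compare HBase's copies with the cluster's (paths assumed):
# bundled_hadoop_versions "$HBASE_HOME/lib"
# bundled_hadoop_versions "$HADOOP_HOME/share/hadoop"
```

If the two calls print different versions, the jars need replacing.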

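Write the replacement steps into a script, f.sh, in the hbase/lib directory. In this example the bundled jars are version 2.5.1 and the cluster runs Hadoop 2.7.1 under /usr/local/hadoop-2.7.1; substitute your own versions and path:

#!/bin/bash
# List the bundled Hadoop jars, rewrite their version to the cluster's, strip the leading "./"
find . -name "hadoop*jar" | sed 's/2\.5\.1/2.7.1/g' | sed 's/\.\///g' > f.log
# Remove the bundled Hadoop jars
rm ./hadoop*jar
# Copy each jar of the same name from the Hadoop installation into hbase/lib
while read -r Line
do
find /usr/local/hadoop-2.7.1 -name "$Line" | xargs -I {} cp {} ./
done < f.log

Make the script executable and run it:

chmod u+x f.sh

./f.sh

Check the versions again:

find . -name 'hadoop*jar'

The jars have been replaced. hbase/lib also contains slf4j-log4j12-XXX.jar; on a machine where Hadoop is installed, Hadoop's copy of this jar is also on the classpath, which causes a conflict, so simply delete it: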

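rm $(find . -name 'slf4j-log4j12-*jar')

Copy HBase to the other nodes

Copy the configured hbase-0.98.15 directory to node02 and node03 (the start commands below use hbase-0.98.15-hadoop2; use whichever name your HBase directory actually has):

scp -r /usr/local/hbase-0.98.15/ root@node02:/usr/local/

scp -r /usr/local/hbase-0.98.15/ root@node03:/usr/local/

7. Start the HBase cluster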

### Make sure the Hadoop cluster and ZooKeeper are already running
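One way to check both prerequisites from the master: for HDFS, `hdfs dfsadmin -report` should list the live DataNodes. For ZooKeeper, a running server answers the four-letter command `ruok` with `imok`; this is enabled by default in ZooKeeper 3.4.x, while newer releases only allow it when listed in `4lw.commands.whitelist`. A sketch assuming `nc` (netcat) is installed; the function names are hypothetical:

```shell
# zk_is_ok HOST [PORT]: succeed if the ZooKeeper server on HOST:PORT answers "imok".
zk_is_ok() {
  reply=$(echo ruok | nc "$1" "${2:-2181}")
  [ "$reply" = "imok" ]
}

# check_zk_quorum HOST...: report every host, fail if any of them does not answer.
check_zk_quorum() {
  status=0
  for host in "$@"; do
    if zk_is_ok "$host"; then
      echo "$host: imok"
    else
      echo "$host: no answer" >&2
      status=1
    fi
  done
  return "$status"
}

# Example, using the hosts from hbase.zookeeper.quorum:
# check_zk_quorum node01 node02 node03
```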

hbase-0.98.15-hadoop2/bin/start-hbase.sh

hbase-0.98.15-hadoop2/bin/hbase shell

Verify: run jps and check that HMaster and HRegionServer have started.

Check the logs in $HBASE_HOME/logs/ for errors.
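Both checks can be scripted. A minimal sketch with hypothetical helper names; it assumes `jps` from the JDK is on the PATH and that the HBase logs are *.log files in $HBASE_HOME/logs:

```shell
# check_hbase_daemons NAME...: succeed only if every named Java process appears in jps output.
check_hbase_daemons() {
  procs=$(jps)
  missing=0
  for name in "$@"; do
    if printf '%s\n' "$procs" | grep -qw "$name"; then
      echo "running: $name"
    else
      echo "NOT running: $name" >&2
      missing=1
    fi
  done
  return "$missing"
}

# show_log_errors [LOG_DIR]: print log lines that mention ERROR or an exception.
show_log_errors() {
  grep -n -E 'ERROR|Exception' "${1:-$HBASE_HOME/logs}"/*.log
}

# On node01 (master and RegionServer): check_hbase_daemons HMaster HRegionServer
# On node02 and node03:                 check_hbase_daemons HRegionServer
```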

// Create a table: table name, column family

hbase(main):002:0> create 'tab1','fam1'

// List all tables

hbase(main):003:0> list

// Insert a cell: table name, row key, column family:column, value

hbase(main):004:0> put 'tab1','row1','fam1:col1','val1'

// Scan the contents of the table

hbase(main):007:0> scan 'tab1'

// get fetches one row of the table

hbase(main):008:0> get 'tab1','row2'

// delete removes one cell (row key + column); use deleteall to remove a whole row

hbase(main):009:0> delete 'tab1','row2','fam1:col2'

// Drop the table (it must be disabled first)

hbase(main):011:0> disable 'tab1'

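hbase(main):012:0> drop 'tab1'

Note: when scanning from a client, set scanner caching (the number of rows fetched per RPC) to reduce network round trips: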

scan.setCaching(10000);

Web UI of the HMaster (node01):

http://node01:60010/

Web UI of a RegionServer (node01):

http://node01:60030/
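The shell session in step 7 can also be run non-interactively: `hbase shell` accepts a script file as its argument, and the script should end with `exit` so the shell returns. A sketch that writes such a smoke test; the helper name and the table name `smoke_tab` are hypothetical, and a separate table is used so tab1 is not touched:

```shell
# write_smoke_test FILE: write HBase shell commands that create, fill, read and drop a test table.
write_smoke_test() {
  cat > "$1" <<'EOF'
create 'smoke_tab','fam1'
put 'smoke_tab','row1','fam1:col1','val1'
get 'smoke_tab','row1'
scan 'smoke_tab'
disable 'smoke_tab'
drop 'smoke_tab'
exit
EOF
}

# Usage:
# write_smoke_test /tmp/smoke.hbase
# hbase-0.98.15-hadoop2/bin/hbase shell /tmp/smoke.hbase
```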

Error when starting HBase: java.lang.NoClassDefFoundError: org/htrace/Trace

With Hadoop 2.6 and hbase-0.98.15, starting HBase fails with the following error:

java.lang.NoClassDefFoundError: org/htrace/Trace

at org.apache.hadoop.ipc.ProtobufRpcEngine$Invoker.invoke(ProtobufRpcEngine.java:214)

at com.sun.proxy.$Proxy15.getFileInfo(Unknown Source)

at org.apache.hadoop.hdfs.protocolPB.ClientNamenodeProtocolTranslatorPB.getFileInfo(ClientNamenodeProtocolTranslatorPB.java:752)

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:57)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:606)

at org.apache.hadoop.io.retry.RetryInvocationHandler.invokeMethod(RetryInvocationHandler.java:187)

at org.apache.hadoop.io.retry.RetryInvocationHandler.invoke(RetryInvocationHandler.java:102)

at com.sun.proxy.$Proxy16.getFileInfo(Unknown Source)

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:57)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:606)

at org.apache.hadoop.hbase.fs.HFileSystem$1.invoke(HFileSystem.java:279)

at com.sun.proxy.$Proxy17.getFileInfo(Unknown Source)

at org.apache.hadoop.hdfs.DFSClient.getFileInfo(DFSClient.java:1988)

at org.apache.hadoop.hdfs.DistributedFileSystem$18.doCall(DistributedFileSystem.java:1118)

at org.apache.hadoop.hdfs.DistributedFileSystem$18.doCall(DistributedFileSystem.java:1114)

at org.apache.hadoop.fs.FileSystemLinkResolver.resolve(FileSystemLinkResolver.java:81)

at org.apache.hadoop.hdfs.DistributedFileSystem.getFileStatus(DistributedFileSystem.java:1114)

at org.apache.hadoop.fs.FilterFileSystem.getFileStatus(FilterFileSystem.java:409)

at org.apache.hadoop.fs.FileSystem.exists(FileSystem.java:1400)

at org.apache.hadoop.hbase.regionserver.HRegionServer.setupWALAndReplication(HRegionServer.java:1526)

at org.apache.hadoop.hbase.regionserver.HRegionServer.handleReportForDutyResponse(HRegionServer.java:1275)

at org.apache.hadoop.hbase.regionserver.HRegionServer.run(HRegionServer.java:831)

at java.lang.Thread.run(Thread.java:745)

Caused by: java.lang.ClassNotFoundException: org.htrace.Trace

at java.net.URLClassLoader$1.run(URLClassLoader.java:366)

at java.net.URLClassLoader$1.run(URLClassLoader.java:355)

at java.security.AccessController.doPrivileged(Native Method)

at java.net.URLClassLoader.findClass(URLClassLoader.java:354)

at java.lang.ClassLoader.loadClass(ClassLoader.java:425)

at sun.misc.Launcher$AppClassLoader.loadClass(Launcher.java:308)

at java.lang.ClassLoader.loadClass(ClassLoader.java:358)

... 27 more

Solution:

Copy htrace-core-3.0.4.jar from $HADOOP_HOME/share/hadoop/common/lib to $HBASE_HOME/lib:

cp $HADOOP_HOME/share/hadoop/common/lib/htrace-core-3.0.4.jar $HBASE_HOME/lib
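Because the error is raised by the RegionServer, every node that runs one needs the jar. A minimal sketch (hypothetical helper name) that makes the fix safe to rerun on each node by copying the jar only when it is missing; the two arguments default to $HADOOP_HOME and $HBASE_HOME:

```shell
# ensure_htrace_jar [HADOOP_HOME] [HBASE_HOME]
# Copy htrace-core-3.0.4.jar from Hadoop's common/lib into HBase's lib if it is not there yet.
ensure_htrace_jar() {
  hadoop_home=${1:-$HADOOP_HOME}
  hbase_home=${2:-$HBASE_HOME}
  jar=htrace-core-3.0.4.jar
  src="$hadoop_home/share/hadoop/common/lib/$jar"
  if [ -e "$hbase_home/lib/$jar" ]; then
    echo "already present: $hbase_home/lib/$jar"
  elif [ -f "$src" ]; then
    cp "$src" "$hbase_home/lib/" && echo "copied: $src"
  else
    echo "not found: $src" >&2
    return 1
  fi
}
```

Run it on node01, node02 and node03, then start HBase again.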
