
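1. Basic information and environment preparation

The following minimum requirements should support a proof-of-concept environment with core services and several CirrOS instances:

Controller node: 1 processor, 4 GB memory, and 5 GB storage

Compute node: 1 processor, 2 GB memory, and 10 GB storage

The hardware used here is the same for both nodes: 2 processors, 4 GB memory, and 100 GB storage. Adjust this to your own situation.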

1.1 System information

[root@controller ~]# cat /etc/redhat-release

CentOS Linux release 7.8.2003 (Core)

[root@controller ~]# uname -r

3.10.0-1127.el7.x86_64

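1.2 IP configuration

Two network interfaces are required (bridged and vm8)

Controller node IPs

ens33: IP: 172.31.100.22/16 (bridged), default configuration, has internet access

ens34: IP: 192.168.47.128/24 (vm8), default configuration, has internet access

Compute node IPs

ens33: IP: 172.31.100.23/16 (bridged), default configuration, has internet access

ens34: IP: 192.168.47.129/24 (vm8), default configuration, has internet access

1.3 Note: hardware virtualization must be enabled on the nodes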

2. Environment

2.1 Hostnames

Run on controller

[root@controller ~]# cat /etc/hosts

127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4

::1 localhost localhost.localdomain localhost6 localhost6.localdomain6

172.31.100.22 controller

172.31.100.23 compute1

[root@controller ~]# hostnamectl set-hostname controller

[root@controller ~]# bash

Run on compute1

[root@compute1 ~]# cat /etc/hosts

127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4

::1 localhost localhost.localdomain localhost6 localhost6.localdomain6

172.31.100.22 controller

172.31.100.23 compute1

[root@compute1 ~]# hostnamectl set-hostname compute1

[root@compute1 ~]# bash
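Before moving on, it can help to confirm that both name mappings are really present in /etc/hosts on each node. The following is a minimal sketch and not part of the original walkthrough; the function name check_hosts is hypothetical:

```shell
#!/usr/bin/env bash
# check_hosts FILE NAME IP
# Succeeds when FILE maps NAME to IP on a line that is not commented out.
check_hosts() {
    local file=$1 name=$2 ip=$3
    awk -v name="$name" -v ip="$ip" '
        /^[ \t]*#/ { next }    # skip commented-out lines
        $1 == ip {
            for (i = 2; i <= NF; i++) if ($i == name) found = 1
        }
        END { exit (found ? 0 : 1) }
    ' "$file"
}
```

For example, check_hosts /etc/hosts controller 172.31.100.22 && check_hosts /etc/hosts compute1 172.31.100.23 should succeed on both nodes.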

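2.2 Disable the firewall and SELinux

(Run on both nodes)

systemctl stop firewalld #stop the firewall

systemctl disable firewalld #keep the firewall from starting at boot

setenforce 0 #turn off SELinux enforcement until the next reboot

vim /etc/selinux/config #disable SELinux permanently

Set the following line in the file:

SELINUX=disabled

Note: rebooting both nodes after these changes is recommended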
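The manual edit of /etc/selinux/config can also be done non-interactively. This is a sketch assuming GNU sed, as shipped with CentOS 7; the function name set_selinux_disabled is hypothetical:

```shell
#!/usr/bin/env bash
# set_selinux_disabled [FILE]
# Rewrites the SELINUX= line in FILE (default /etc/selinux/config).
# The pattern is anchored on "SELINUX=", so SELINUXTYPE= is left untouched.
set_selinux_disabled() {
    local file=${1:-/etc/selinux/config}
    sed -i 's/^SELINUX=.*/SELINUX=disabled/' "$file"
}
```

Calling set_selinux_disabled with no argument on each node replaces the vim step; the change still only takes full effect after a reboot.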

2.3 Select the time zone with tzselect and synchronize time with NTP

Select the time zone: tzselect (run on both nodes)

(Run on both nodes)

yum install -y ntp

(Run on controller)

controller acts as the NTP server; edit the NTP configuration file.

[root@controller ~]# vim /etc/ntp.conf

server 127.127.1.0 # local clock

fudge 127.127.1.0 stratum 10 #other stratum values also work; the valid range is 0 to 15

Restart the NTP service and enable it at boot. (Run on controller)

[root@controller ~]# systemctl restart ntpd.service

[root@controller ~]# systemctl enable ntpd.service

Synchronize the time (run on compute1)

[root@compute1 ~]# ntpdate controller

7 May 02:45:34 ntpdate[12777]: adjust time server 172.31.100.22 offset -0.038221 sec

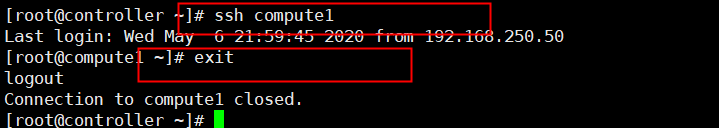

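2.4 Passwordless SSH login

[root@controller ~]# ssh-keygen -t dsa -P '' -f ~/.ssh/id_dsa

[root@controller ~]# ssh-copy-id -i /root/.ssh/id_dsa.pub compute1

Verify: logging in to compute1 should not prompt for a password

[root@controller ~]# ssh compute1

[root@compute1 ~]# exit #remember to log out again

2.5 Switch to the Aliyun mirror (both nodes)

Back up the existing repo file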

mv /etc/yum.repos.d/CentOS-Base.repo /etc/yum.repos.d/CentOS-Base.repo_bak

Fetch the Aliyun repo file

wget -O /etc/yum.repos.d/CentOS-Base.repo http://mirrors.aliyun.com/repo/Centos-7.repo

Rebuild the cache

yum makecache

Update the system

yum -y update

3. OpenStack packages

(Run on both nodes)

3.1 To install the Rocky release, run:

yum install centos-release-openstack-rocky -y

3.2 Upgrade the packages on all nodes:

yum upgrade -y

3.3 Install the OpenStack client:

yum install python-openstackclient -y

3.4 CentOS enables SELinux by default. Install the openstack-selinux package to automatically manage security policies for OpenStack services:

yum install openstack-selinux -y

4. SQL database

Run on controller

4.1 Install the packages:

[root@controller ~]# yum install mariadb mariadb-server python2-PyMySQL -y

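4.2 Create and edit a file under /etc/my.cnf.d/ (back up existing configuration files if needed) and complete the following actions:

Create a [mysqld] section and set the bind-address key to the management IP address of the controller node so that other nodes can reach it over the management network. Set the additional keys to enable useful options and the UTF-8 character set: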

[root@controller ~]# vim /etc/my.cnf.d/openstack.cnf

[mysqld]

bind-address = 172.31.100.22 #controller IP

default-storage-engine = innodb

innodb_file_per_table = on

max_connections = 4096

collation-server = utf8_general_ci

character-set-server = utf8

4.3 Start the database service and configure it to start at boot:

[root@controller ~]# systemctl enable mariadb.service

[root@controller ~]# systemctl start mariadb.service

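4.4 Secure the database service by running the mysql_secure_installation script. In particular, choose a suitable password for the database root account:

Set the password (run the hardening script)

Answer "Y" to every question; when asked for the new root password, this walkthrough uses 123456

[root@controller ~]# mysql_secure_installation

4.5 Test the MySQL login

[root@controller ~]# mysql -u root -p123456

Type exit to quit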

5. Message queue

Run on controller

5.1 Install the package:

[root@controller ~]# yum install rabbitmq-server -y

5.2 Start the message queue service and configure it to start at boot:

[root@controller ~]# systemctl enable rabbitmq-server.service

[root@controller ~]# systemctl start rabbitmq-server.service

5.3 Add the openstack user:

[root@controller ~]# rabbitmqctl add_user openstack RABBIT_PASS

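If the command fails, try the fixes below; if it succeeds, skip them:

1. Fix 1: https://blog.csdn.net/lxy___/article/details/105973301

2. Fix 2: if fix 1 does not help, comment out the hostname entries that were added to /etc/hosts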

[root@controller ~]# cat /etc/hosts

#172.31.100.22 controller

#172.31.100.23 compute1

5.4 Permit configuration, write, and read access for the openstack user:

[root@controller ~]# rabbitmqctl set_permissions openstack ".*" ".*" ".*"

6. Memcached

Run on controller

6.1 Install the packages:

[root@controller ~]# yum install memcached python-memcached -y

6.2 Edit the /etc/sysconfig/memcached file and complete the following actions:

[root@controller ~]# vim /etc/sysconfig/memcached

#Configure the service to use the management IP address of the controller node so that other nodes can reach it over the management network:

OPTIONS="-l 127.0.0.1,::1,controller"

6.3 Start the Memcached service and configure it to start at boot:

[root@controller ~]# systemctl enable memcached.service

[root@controller ~]# systemctl start memcached.service

7. Configure etcd

Run on controller

7.1 Install the package

[root@controller ~]# yum install etcd -y

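7.2 Edit the /etc/etcd/etcd.conf file and set ETCD_INITIAL_CLUSTER, ETCD_INITIAL_ADVERTISE_PEER_URLS, ETCD_ADVERTISE_CLIENT_URLS, and ETCD_LISTEN_CLIENT_URLS to the management IP address of the controller node so that other nodes can reach it over the management network: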

[root@controller ~]# vim /etc/etcd/etcd.conf

#[Member]

ETCD_DATA_DIR="/var/lib/etcd/default.etcd"

ETCD_LISTEN_PEER_URLS="http://172.31.100.22:2380"

ETCD_LISTEN_CLIENT_URLS="http://172.31.100.22:2379"

ETCD_NAME="controller"

#[Clustering]

ETCD_INITIAL_ADVERTISE_PEER_URLS="http://172.31.100.22:2380"

ETCD_ADVERTISE_CLIENT_URLS="http://172.31.100.22:2379"

ETCD_INITIAL_CLUSTER="controller=http://172.31.100.22:2380"

ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster-01"

ETCD_INITIAL_CLUSTER_STATE="new"

7.3 Enable and start the etcd service:

[root@controller ~]# systemctl enable etcd

[root@controller ~]# systemctl start etcd

Install the OpenStack services

8. Keystone identity service

Run on controller

8.1 Use the database access client to connect to the database server as the root user:

[root@controller ~]# mysql -u root -p123456

8.2 Create the keystone database:

MariaDB [(none)]> CREATE DATABASE keystone;

8.3 Grant proper access to the keystone database:

MariaDB [(none)]> GRANT ALL PRIVILEGES ON keystone.* TO 'keystone'@'localhost' \

IDENTIFIED BY 'KEYSTONE_DBPASS';

MariaDB [(none)]> GRANT ALL PRIVILEGES ON keystone.* TO 'keystone'@'%' \

IDENTIFIED BY 'KEYSTONE_DBPASS';

MariaDB [(none)]> exit

The exit command above leaves the database access client.

8.4 Install the packages:

[root@controller ~]# yum install openstack-keystone httpd mod_wsgi -y

8.5 Edit the keystone.conf file

[root@controller ~]# vim /etc/keystone/keystone.conf

#In the [database] section, configure database access:

[database]

connection = mysql+pymysql://keystone:KEYSTONE_DBPASS@controller/keystone

#In the [token] section, configure the Fernet token provider:

[token]

provider = fernet
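Every service in this walkthrough is configured by opening an INI file in vim and setting a few keys under a named section. The same edit can be scripted. The following is a rough sketch in plain bash and awk, not something the original uses; ini_set is a hypothetical helper name, and it assumes values contain no backslashes (awk -v would interpret them):

```shell
#!/usr/bin/env bash
# ini_set FILE SECTION KEY VALUE
# Sets KEY = VALUE inside [SECTION] of FILE. An existing uncommented KEY in
# that section is replaced; otherwise the key is appended to the section,
# and the section itself is appended when it does not exist yet.
ini_set() {
    local file=$1 section=$2 key=$3 value=$4 tmp
    tmp=$(mktemp) || return 1
    awk -v sec="[$section]" -v key="$key" -v val="$value" '
        function emit() { print key " = " val; done = 1 }
        /^\[.*\]/ {
            # Leaving the target section without having set the key: add it now.
            if (in_sec && !done) emit()
            in_sec = ($0 == sec)
            print
            next
        }
        in_sec && !done {
            split($0, parts, "=")
            k = parts[1]
            gsub(/^[ \t]+|[ \t]+$/, "", k)
            if (k == key) { emit(); next }
        }
        { print }
        END {
            if (in_sec && !done) emit()
            else if (!done) { print sec; emit() }
        }
    ' "$file" > "$tmp" && cat "$tmp" > "$file"
    local rc=$?
    rm -f "$tmp"
    return $rc
}
```

With this helper, step 8.5 becomes two calls: ini_set /etc/keystone/keystone.conf database connection mysql+pymysql://keystone:KEYSTONE_DBPASS@controller/keystone, followed by ini_set /etc/keystone/keystone.conf token provider fernet. Writing through cat keeps the ownership and permissions of the original file.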

8.6 Populate the identity service database:

[root@controller ~]# su -s /bin/sh -c "keystone-manage db_sync" keystone

8.7 Initialize the Fernet key repositories:

[root@controller ~]# keystone-manage fernet_setup --keystone-user keystone --keystone-group keystone

[root@controller ~]# keystone-manage credential_setup --keystone-user keystone --keystone-group keystone

8.8 Bootstrap the identity service:

[root@controller ~]# keystone-manage bootstrap --bootstrap-password ADMIN_PASS \

--bootstrap-admin-url http://controller:5000/v3/ \

--bootstrap-internal-url http://controller:5000/v3/ \

--bootstrap-public-url http://controller:5000/v3/ \

--bootstrap-region-id RegionOne

8.9 Configure the Apache HTTP server

[root@controller ~]# vim /etc/httpd/conf/httpd.conf

#Set the ServerName option to reference the controller node:

ServerName controller

8.10 Create a link to the /usr/share/keystone/wsgi-keystone.conf file:

[root@controller ~]# ln -s /usr/share/keystone/wsgi-keystone.conf /etc/httpd/conf.d/

8.11 Start the Apache HTTP service and configure it to start at boot:

[root@controller ~]# systemctl enable httpd.service

[root@controller ~]# systemctl start httpd.service

8.12 Configure the administrative account

[root@controller ~]# export OS_USERNAME=admin

[root@controller ~]# export OS_PASSWORD=ADMIN_PASS

[root@controller ~]# export OS_PROJECT_NAME=admin

[root@controller ~]# export OS_USER_DOMAIN_NAME=Default

[root@controller ~]# export OS_PROJECT_DOMAIN_NAME=Default

[root@controller ~]# export OS_AUTH_URL=http://controller:5000/v3

[root@controller ~]# export OS_IDENTITY_API_VERSION=3

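Create a domain, projects, users, and roles

8.12 Although the "default" domain already exists from the keystone-manage bootstrap step in this guide, the formal way to create a new domain would be:

[root@controller ~]# openstack domain create --description "An Example Domain" example

8.13 This guide uses a service project that contains a unique user for each service you add to your environment. Create the service project:

[root@controller ~]# openstack project create --domain default \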

--description "Service Project" service

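8.14 Regular (non-admin) tasks should use an unprivileged project and user. As an example, this guide creates the myproject project and the myuser user.

- 8.14.1 Create the myproject project: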

[root@controller ~]# openstack project create --domain default \

--description "Demo Project" myproject

- 8.14.2 Create the myuser user

[root@controller ~]# openstack user create --domain default \

--password-prompt myuser #at the prompt, this walkthrough sets the password to myuser

- 8.14.3 Create the myrole role:

[root@controller ~]# openstack role create myrole

- 8.14.4 Add the myrole role to the myproject project and the myuser user:

[root@controller ~]# openstack role add --project myproject --user myuser myrole

Verify operation

8.15 Unset the temporary OS_AUTH_URL and OS_PASSWORD environment variables:

[root@controller ~]# unset OS_AUTH_URL OS_PASSWORD

8.16 As the admin user, request an authentication token:

[root@controller ~]# openstack --os-auth-url http://controller:5000/v3 \

--os-project-domain-name Default --os-user-domain-name Default \

--os-project-name admin --os-username admin token issue #prompts for the password; enter ADMIN_PASS

8.17 As the myuser user created in the previous section, request an authentication token:

[root@controller ~]# openstack --os-auth-url http://controller:5000/v3 \

--os-project-domain-name Default --os-user-domain-name Default \

--os-project-name myproject --os-username myuser token issue #prompts for the password; enter myuser

Create the scripts

8.18 Create and edit the adminrc file and add the following content:

[root@controller ~]# cat adminrc

export OS_PROJECT_DOMAIN_NAME=Default

export OS_USER_DOMAIN_NAME=Default

export OS_PROJECT_NAME=admin

export OS_USERNAME=admin

export OS_PASSWORD=ADMIN_PASS

export OS_AUTH_URL=http://controller:5000/v3

export OS_IDENTITY_API_VERSION=3

export OS_IMAGE_API_VERSION=2

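8.19 Create and edit the demorc file and add the following content (replace MYUSER_PASS with the password chosen for myuser in step 8.14.2):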

[root@controller ~]# cat demorc

export OS_PROJECT_DOMAIN_NAME=Default

export OS_USER_DOMAIN_NAME=Default

export OS_PROJECT_NAME=myproject

export OS_USERNAME=myuser

export OS_PASSWORD=MYUSER_PASS

export OS_AUTH_URL=http://controller:5000/v3

export OS_IDENTITY_API_VERSION=3

export OS_IMAGE_API_VERSION=2
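Since adminrc and demorc differ only in project, user, and password, both can be generated from one template. This is a sketch under the assumption that passwords contain no spaces or shell metacharacters; write_rc is a hypothetical name:

```shell
#!/usr/bin/env bash
# write_rc FILE PROJECT USER PASSWORD
# Writes a client environment script like the ones shown above. The subshell
# with umask 077 makes a newly created file readable by its owner only.
write_rc() {
    local file=$1 project=$2 user=$3 pass=$4
    (
        umask 077
        cat > "$file" <<EOF
export OS_PROJECT_DOMAIN_NAME=Default
export OS_USER_DOMAIN_NAME=Default
export OS_PROJECT_NAME=$project
export OS_USERNAME=$user
export OS_PASSWORD=$pass
export OS_AUTH_URL=http://controller:5000/v3
export OS_IDENTITY_API_VERSION=3
export OS_IMAGE_API_VERSION=2
EOF
    )
}
```

For example, write_rc ~/adminrc admin admin ADMIN_PASS and write_rc ~/demorc myproject myuser MYUSER_PASS produce the two files, which are then loaded with ". adminrc" or ". demorc" as in the following steps.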

8.20 Load the adminrc file to populate the environment variables with the location of the identity service and the admin project and user credentials:

[root@controller ~]# . adminrc

8.21 Request an authentication token:

[root@controller ~]# openstack token issue

9. Configure the Glance image service

Run on the controller node

9.1 Use the database access client to connect to the database server as the root user:

[root@controller ~]# mysql -u root -p123456

- 9.1.1 Create the glance database:

MariaDB [(none)]> CREATE DATABASE glance;

Query OK, 1 row affected (0.00 sec)

- 9.1.2 Grant proper access to the glance database:

MariaDB [(none)]> GRANT ALL PRIVILEGES ON glance.* TO 'glance'@'localhost' \

-> IDENTIFIED BY 'GLANCE_DBPASS';

MariaDB [(none)]> GRANT ALL PRIVILEGES ON glance.* TO 'glance'@'%' \

-> IDENTIFIED BY 'GLANCE_DBPASS';

MariaDB [(none)]> exit

9.2 Source the admin credentials to gain access to admin-only CLI commands:

[root@controller ~]# . adminrc

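9.3 To create the service credentials, complete these steps:

- 9.3.1 Create the glance user:

[root@controller ~]# openstack user create --domain default --password-prompt glance #prompts for a password; enter GLANCE_PASS

- 9.3.2 Add the admin role to the glance user and the service project: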

[root@controller ~]# openstack role add --project service --user glance admin

- 9.3.3 Create the glance service entity:

[root@controller ~]# openstack service create --name glance \

--description "OpenStack Image" image

- 9.3.4 Create the image service API endpoints:

[root@controller ~]# openstack endpoint create --region RegionOne \

image public http://controller:9292

[root@controller ~]# openstack endpoint create --region RegionOne \

image internal http://controller:9292

[root@controller ~]# openstack endpoint create --region RegionOne \

image admin http://controller:9292

9.4 Install the package:

[root@controller ~]# yum install openstack-glance -y

9.5 Edit the glance-api.conf file and complete the following actions:

[root@controller ~]# vim /etc/glance/glance-api.conf

#In the [database] section, configure database access:

[database]

connection = mysql+pymysql://glance:GLANCE_DBPASS@controller/glance

#In the [keystone_authtoken] and [paste_deploy] sections, configure identity service access:

[keystone_authtoken]

www_authenticate_uri = http://controller:5000

auth_url = http://controller:5000

memcached_servers = controller:11211

auth_type = password

project_domain_name = Default

user_domain_name = Default

project_name = service

username = glance

password = GLANCE_PASS

[paste_deploy]

flavor = keystone

#In the [glance_store] section, configure the local file system store and the location of image files:

[glance_store]

stores = file,http

default_store = file

filesystem_store_datadir = /var/lib/glance/images/

9.6 Edit the glance-registry.conf file and complete the following actions:

[root@controller ~]# vim /etc/glance/glance-registry.conf

#In the [database] section, configure database access:

[database]

connection = mysql+pymysql://glance:GLANCE_DBPASS@controller/glance

#In the [keystone_authtoken] and [paste_deploy] sections, configure identity service access:

[keystone_authtoken]

www_authenticate_uri = http://controller:5000

auth_url = http://controller:5000

memcached_servers = controller:11211

auth_type = password

project_domain_name = Default

user_domain_name = Default

project_name = service

username = glance

password = GLANCE_PASS

[paste_deploy]

flavor = keystone

9.7 Populate the image service database:

[root@controller ~]# su -s /bin/sh -c "glance-manage db_sync" glance

9.8 Start the image services and configure them to start at boot:

[root@controller ~]# systemctl enable openstack-glance-api.service \

openstack-glance-registry.service

[root@controller ~]# systemctl start openstack-glance-api.service \

openstack-glance-registry.service

9.9 Check the Glance logs

[root@controller ~]# cat /var/log/glance/registry.log

[root@controller ~]# cat /var/log/glance/api.log

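Verify operation

9.10 Source the admin credentials to gain access to admin-only CLI commands:

[root@controller ~]# . adminrc

9.11 Download the source image:

[root@controller ~]# wget http://download.cirros-cloud.net/0.4.0/cirros-0.4.0-x86_64-disk.img

9.12 Upload the image to the image service using the QCOW2 disk format, bare container format, and public visibility so that all projects can access it: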

[root@controller ~]# openstack image create "cirros" \

--file cirros-0.4.0-x86_64-disk.img \

--disk-format qcow2 --container-format bare \

--public

9.13 Confirm the upload and validate the attributes:

[root@controller ~]# openstack image list

+--------------------------------------+--------+--------+

| ID | Name | Status |

+--------------------------------------+--------+--------+

| d1eca54c-3ade-4bb3-9b8a-43b3c6f3ed0d | cirros | active |

+--------------------------------------+--------+--------+
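Reading the table by eye works for a single image; a script can check the same thing. The sketch below assumes the image name contains no spaces and that the list is produced with the client's value formatter; image_is_active is a hypothetical name:

```shell
#!/usr/bin/env bash
# image_is_active NAME
# Reads "name status" pairs on stdin and succeeds when NAME is active.
image_is_active() {
    local name=$1
    awk -v name="$name" '
        $1 == name && $2 == "active" { ok = 1 }
        END { exit (ok ? 0 : 1) }
    '
}
```

Usage on the controller, after sourcing adminrc: openstack image list -f value -c Name -c Status | image_is_active cirros && echo "cirros is ready".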

10. Compute service

Run on the controller node

10.1 Use the database access client to connect to the database server as the root user:

[root@controller ~]# mysql -u root -p

- 10.1.1 Create the nova_api, nova, nova_cell0, and placement databases:

MariaDB [(none)]> CREATE DATABASE nova_api;

MariaDB [(none)]> CREATE DATABASE nova;

MariaDB [(none)]> CREATE DATABASE nova_cell0;

MariaDB [(none)]> CREATE DATABASE placement;

- 10.1.2 Grant proper access to the databases:

MariaDB [(none)]> GRANT ALL PRIVILEGES ON nova_api.* TO 'nova'@'localhost' \

-> IDENTIFIED BY 'NOVA_DBPASS';

MariaDB [(none)]> GRANT ALL PRIVILEGES ON nova_api.* TO 'nova'@'%' \

-> IDENTIFIED BY 'NOVA_DBPASS';

MariaDB [(none)]> GRANT ALL PRIVILEGES ON nova.* TO 'nova'@'localhost' \

-> IDENTIFIED BY 'NOVA_DBPASS';

MariaDB [(none)]> GRANT ALL PRIVILEGES ON nova.* TO 'nova'@'%' \

-> IDENTIFIED BY 'NOVA_DBPASS';

MariaDB [(none)]> GRANT ALL PRIVILEGES ON nova_cell0.* TO 'nova'@'localhost' \

-> IDENTIFIED BY 'NOVA_DBPASS';

MariaDB [(none)]> GRANT ALL PRIVILEGES ON nova_cell0.* TO 'nova'@'%' \

-> IDENTIFIED BY 'NOVA_DBPASS';

MariaDB [(none)]> GRANT ALL PRIVILEGES ON placement.* TO 'placement'@'localhost' \

-> IDENTIFIED BY 'PLACEMENT_DBPASS';

MariaDB [(none)]> GRANT ALL PRIVILEGES ON placement.* TO 'placement'@'%' \

-> IDENTIFIED BY 'PLACEMENT_DBPASS';

MariaDB [(none)]> exit
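The statements for each database follow one pattern: create the database, then grant the service user access from localhost and from any host. A small generator can print them for review before they are piped into the client. This is a sketch only; grant_sql is a hypothetical name, and it adds IF NOT EXISTS, which the original statements do not use:

```shell
#!/usr/bin/env bash
# grant_sql DB USER PASSWORD
# Prints the CREATE DATABASE and the two GRANT statements for one database.
grant_sql() {
    local db=$1 user=$2 pass=$3 host
    printf 'CREATE DATABASE IF NOT EXISTS %s;\n' "$db"
    for host in localhost '%'; do
        printf "GRANT ALL PRIVILEGES ON %s.* TO '%s'@'%s' IDENTIFIED BY '%s';\n" \
            "$db" "$user" "$host" "$pass"
    done
}
```

For example, the three nova databases could be handled with: for db in nova_api nova nova_cell0; do grant_sql "$db" nova NOVA_DBPASS; done | mysql -u root -p, and the placement database with grant_sql placement placement PLACEMENT_DBPASS | mysql -u root -p.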

10.2 Source the admin credentials to gain access to admin-only CLI commands:

[root@controller ~]# . adminrc

10.3 Create the Compute service credentials:

- 10.3.1 Create the nova user:

[root@controller ~]# openstack user create --domain default --password-prompt nova #prompts for a password; enter NOVA_PASS

- 10.3.2 Add the admin role to the nova user:

[root@controller ~]# openstack role add --project service --user nova admin

- 10.3.3 Create the nova service entity:

[root@controller ~]# openstack service create --name nova \

--description "OpenStack Compute" compute

- 10.3.4 Create the Compute API service endpoints:

[root@controller ~]# openstack endpoint create --region RegionOne \

compute public http://controller:8774/v2.1

[root@controller ~]# openstack endpoint create --region RegionOne \

compute internal http://controller:8774/v2.1

[root@controller ~]# openstack endpoint create --region RegionOne \

compute admin http://controller:8774/v2.1

- 10.3.5 Create a Placement service user using your chosen PLACEMENT_PASS:

[root@controller ~]# openstack user create --domain default --password-prompt placement #prompts for a password; enter PLACEMENT_PASS

- 10.3.6 Add the Placement user to the service project with the admin role:

[root@controller ~]# openstack role add --project service --user placement admin

- 10.3.7 Create the Placement API entry in the service catalog:

[root@controller ~]# openstack service create --name placement \

--description "Placement API" placement

- 10.3.8 Create the Placement API service endpoints:

[root@controller ~]# openstack endpoint create --region RegionOne \

placement public http://controller:8778

[root@controller ~]# openstack endpoint create --region RegionOne \

placement internal http://controller:8778

[root@controller ~]# openstack endpoint create --region RegionOne \

placement admin http://controller:8778
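Each service registers the same URL three times, once per interface. The repetition can be expressed as a loop that prints the commands so they can be inspected before being run. A minimal sketch with a hypothetical function name, endpoint_cmds:

```shell
#!/usr/bin/env bash
# endpoint_cmds SERVICE_TYPE URL [REGION]
# Prints the three "openstack endpoint create" commands for one service.
endpoint_cmds() {
    local service=$1 url=$2 region=${3:-RegionOne} iface
    for iface in public internal admin; do
        printf 'openstack endpoint create --region %s %s %s %s\n' \
            "$region" "$service" "$iface" "$url"
    done
}
```

For example, endpoint_cmds placement http://controller:8778 prints the three commands shown in step 10.3.8; appending "| sh" executes them, assuming adminrc has been sourced in that shell.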

10.4 Install the packages:

[root@controller ~]# yum install openstack-nova-api openstack-nova-conductor \

openstack-nova-console openstack-nova-novncproxy \

openstack-nova-scheduler openstack-nova-placement-api -y

10.5 Edit the nova.conf file and complete the following actions:

[root@controller ~]# vim /etc/nova/nova.conf

#In the [DEFAULT] section, enable only the compute and metadata APIs:

[DEFAULT]

enabled_apis = osapi_compute,metadata

#In the [api_database], [database], and [placement_database] sections, configure database access:

[api_database]

connection = mysql+pymysql://nova:NOVA_DBPASS@controller/nova_api

[database]

connection = mysql+pymysql://nova:NOVA_DBPASS@controller/nova

[placement_database]

connection = mysql+pymysql://placement:PLACEMENT_DBPASS@controller/placement

#In the [DEFAULT] section, configure RabbitMQ message queue access:

[DEFAULT]

transport_url = rabbit://openstack:RABBIT_PASS@controller

#In the [api] and [keystone_authtoken] sections, configure identity service access:

[api]

auth_strategy = keystone

[keystone_authtoken]

auth_url = http://controller:5000/v3

memcached_servers = controller:11211

auth_type = password

project_domain_name = Default

user_domain_name = Default

project_name = service

username = nova

password = NOVA_PASS

#In the [DEFAULT] section, set my_ip to the management interface IP address of the controller node (change it to your controller IP):

[DEFAULT]

my_ip = 172.31.100.22

#In the [DEFAULT] section, enable support for the networking service:

[DEFAULT]

use_neutron = true

firewall_driver = nova.virt.firewall.NoopFirewallDriver

#In the [vnc] section, configure the VNC proxy to use the management interface IP address of the controller node:

[vnc]

enabled = true

server_listen = $my_ip

server_proxyclient_address = $my_ip

#In the [glance] section, configure the location of the image service API:

[glance]

api_servers = http://controller:9292

#In the [oslo_concurrency] section, configure the lock path:

[oslo_concurrency]

lock_path = /var/lib/nova/tmp

#In the [placement] section, configure the Placement API:

[placement]

region_name = RegionOne

project_domain_name = Default

project_name = service

auth_type = password

user_domain_name = Default

auth_url = http://controller:5000/v3

username = placement

password = PLACEMENT_PASS

10.6 Due to a packaging bug, you must enable access to the Placement API by adding the following configuration to 00-nova-placement-api.conf

[root@controller ~]# vim /etc/httpd/conf.d/00-nova-placement-api.conf #add the following content

<Directory /usr/bin>

<IfVersion >= 2.4>

Require all granted

</IfVersion>

<IfVersion < 2.4>

Order allow,deny

Allow from all

</IfVersion>

</Directory>

10.7 Restart the httpd service:

[root@controller ~]# systemctl restart httpd

10.8 Populate the nova-api and placement databases:

[root@controller ~]# su -s /bin/sh -c "nova-manage api_db sync" nova

10.9 Register the cell0 database:

[root@controller ~]# su -s /bin/sh -c "nova-manage cell_v2 map_cell0" nova

10.10 Create the cell1 cell:

[root@controller ~]# su -s /bin/sh -c "nova-manage cell_v2 create_cell --name=cell1 --verbose" nova

10.11 Populate the nova database:

[root@controller ~]# su -s /bin/sh -c "nova-manage db sync" nova #if it reports an error, run it again

10.12 Verify that nova cell0 and cell1 are registered correctly:

[root@controller ~]# su -s /bin/sh -c "nova-manage cell_v2 list_cells" nova

10.13 Start the Compute services and configure them to start at boot:

[root@controller ~]# systemctl enable openstack-nova-api.service \

openstack-nova-consoleauth openstack-nova-scheduler.service \

openstack-nova-conductor.service openstack-nova-novncproxy.service

[root@controller ~]# systemctl start openstack-nova-api.service \

openstack-nova-consoleauth openstack-nova-scheduler.service \

openstack-nova-conductor.service openstack-nova-novncproxy.service

11. Compute service

Run on the compute1 node

11.1 Install the package:

[root@compute1 ~]# yum install openstack-nova-compute -y

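11.2 Check whether hardware virtualization is enabled. It must be enabled; a result of 0 means it is not

[root@compute1 ~]# egrep -c '(vmx|svm)' /proc/cpuinfo

2 #a value greater than 0 means virtualization is enabled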
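The count can also drive the virt_type setting used in the [libvirt] section later on. The sketch below follows the usual rule of thumb (no vmx or svm flag means QEMU emulation, otherwise KVM), which is an assumption on top of this walkthrough: the configuration shown here sets qemu regardless of the count. pick_virt_type is a hypothetical name:

```shell
#!/usr/bin/env bash
# pick_virt_type [CPUINFO_FILE]
# Prints "kvm" when the CPU flags include vmx or svm, otherwise "qemu".
pick_virt_type() {
    local cpuinfo=${1:-/proc/cpuinfo} count
    # grep -c exits non-zero when nothing matches but still prints 0.
    count=$(grep -Ec '(vmx|svm)' "$cpuinfo" || true)
    if [ "${count:-0}" -gt 0 ]; then
        echo kvm
    else
        echo qemu
    fi
}
```

Running pick_virt_type on compute1 prints the value that could be placed after "virt_type =" in /etc/nova/nova.conf.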

11.3 Edit the nova.conf file and complete the following actions:

[root@compute1 ~]# vim /etc/nova/nova.conf

#In the [DEFAULT] section, enable only the compute and metadata APIs:

[DEFAULT]

enabled_apis = osapi_compute,metadata

#In the [DEFAULT] section, configure RabbitMQ message queue access:

[DEFAULT]

transport_url = rabbit://openstack:RABBIT_PASS@controller

#In the [api] and [keystone_authtoken] sections, configure identity service access:

[api]

auth_strategy = keystone

[keystone_authtoken]

auth_url = http://controller:5000/v3

memcached_servers = controller:11211

auth_type = password

project_domain_name = Default

user_domain_name = Default

project_name = service

username = nova

password = NOVA_PASS

#In the [DEFAULT] section, set the my_ip option to the IP address of the compute node:

[DEFAULT]

my_ip = 172.31.100.23

#In the [DEFAULT] section, enable support for the networking service:

[DEFAULT]

use_neutron = true

firewall_driver = nova.virt.firewall.NoopFirewallDriver

#In the [vnc] section, enable and configure remote console access:

[vnc]

enabled = true

server_listen = 0.0.0.0

server_proxyclient_address = $my_ip

#Use the IP address of the controller node in this URL

novncproxy_base_url = http://172.31.100.22:6080/vnc_auto.html

#In the [glance] section, configure the location of the image service API:

[glance]

api_servers = http://controller:9292

#In the [oslo_concurrency] section, configure the lock path:

[oslo_concurrency]

lock_path = /var/lib/nova/tmp

#In the [placement] section, configure the Placement API:

[placement]

region_name = RegionOne

project_domain_name = Default

project_name = service

auth_type = password

user_domain_name = Default

auth_url = http://controller:5000/v3

username = placement

password = PLACEMENT_PASS

#Edit the [libvirt] section of /etc/nova/nova.conf as follows:

[libvirt]

virt_type = qemu

11.4 Start the Compute service together with its dependencies and configure them to start automatically at boot:

[root@compute1 ~]# systemctl enable libvirtd.service openstack-nova-compute.service

[root@compute1 ~]# systemctl start libvirtd.service openstack-nova-compute.service

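Return to the controller to verify

Run on the controller node

11.4 Source the admin credentials to enable admin-only CLI commands, then confirm that the compute host is in the database:

[root@controller ~]# . adminrc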

[root@controller ~]# openstack compute service list --service nova-compute

+----+--------------+----------+------+---------+-------+----------------------------+

| ID | Binary | Host | Zone | Status | State | Updated At |

+----+--------------+----------+------+---------+-------+----------------------------+

| 7 | nova-compute | compute1 | nova | enabled | up | 2020-05-07T09:13:44.000000 |

+----+--------------+----------+------+---------+-------+----------------------------+

11.5 Discover the compute hosts:

[root@controller ~]# su -s /bin/sh -c "nova-manage cell_v2 discover_hosts --verbose" nova

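11.6 Whenever you add new compute nodes, you must run nova-manage cell_v2 discover_hosts on the controller node to register them. Alternatively, you can set an appropriate discovery interval in nova.conf: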

[root@controller ~]# vim /etc/nova/nova.conf

[scheduler]

discover_hosts_in_cells_interval = 300

11.7 Check that everything is in a normal state

[root@controller ~]# openstack compute service list

[root@controller ~]# openstack catalog list

[root@controller ~]# openstack image list

[root@controller ~]# openstack compute service list

+----+------------------+------------+----------+---------+-------+----------------------------+

| ID | Binary | Host | Zone | Status | State | Updated At |

+----+------------------+------------+----------+---------+-------+----------------------------+

| 1 | nova-scheduler | controller | internal | enabled | up | 2020-05-07T09:17:04.000000 |

| 3 | nova-conductor | controller | internal | enabled | up | 2020-05-07T09:17:02.000000 |

| 4 | nova-consoleauth | controller | internal | enabled | up | 2020-05-07T09:17:08.000000 |

| 7 | nova-compute | compute1 | nova | enabled | up | 2020-05-07T09:17:04.000000 |

+----+------------------+------------+----------+---------+-------+----------------------------+

[root@controller ~]#

[root@controller ~]# openstack catalog list

+-----------+-----------+-----------------------------------------+

| Name | Type | Endpoints |

+-----------+-----------+-----------------------------------------+

| placement | placement | RegionOne |

| | | internal: http://controller:8778 |

| | | RegionOne |

| | | public: http://controller:8778 |

| | | RegionOne |

| | | admin: http://controller:8778 |

| | | |

| keystone | identity | RegionOne |

| | | internal: http://controller:5000/v3/ |

| | | RegionOne |

| | | public: http://controller:5000/v3/ |

| | | RegionOne |

| | | admin: http://controller:5000/v3/ |

| | | |

| nova | compute | RegionOne |

| | | public: http://controller:8774/v2.1 |

| | | RegionOne |

| | | admin: http://controller:8774/v2.1 |

| | | RegionOne |

| | | internal: http://controller:8774/v2.1 |

| | | |

| glance | image | RegionOne |

| | | public: http://controller:9292 |

| | | RegionOne |

| | | internal: http://controller:9292 |

| | | RegionOne |

| | | admin: http://controller:9292 |

| | | |

| neutron | network | RegionOne |

| | | admin: http://controller:9696 |

| | | RegionOne |

| | | public: http://controller:9696 |

| | | RegionOne |

| | | internal: http://controller:9696 |

| | | |

+-----------+-----------+-----------------------------------------+

[root@controller ~]#

[root@controller ~]# openstack image list

+--------------------------------------+--------+--------+

| ID | Name | Status |

+--------------------------------------+--------+--------+

| d1eca54c-3ade-4bb3-9b8a-43b3c6f3ed0d | cirros | active |

+--------------------------------------+--------+--------+
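The State column of the service list is the one to watch. As a sketch, the check can be automated by asking the client for plain values and filtering for anything that is not up; services_down is a hypothetical name:

```shell
#!/usr/bin/env bash
# services_down
# Reads "binary host state" rows on stdin, prints the binary and host of
# every row whose state is not "up", and fails if any such row exists.
services_down() {
    awk '
        NF && $3 != "up" { print $1, $2; bad = 1 }
        END { exit (bad ? 1 : 0) }
    '
}
```

Usage on the controller: openstack compute service list -f value -c Binary -c Host -c State | services_down && echo "all compute services are up".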

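12. Install Neutron (networking service) on the controller node

12.1 To create the database, complete these steps:

- 12.1.1 Use the database access client to connect to the database server as the root user:

[root@controller ~]# mysql -uroot -p123456

- 12.1.2 Create the neutron database:

MariaDB [(none)]> CREATE DATABASE neutron;

- 12.1.3 Grant proper access to the neutron database, replacing NEUTRON_DBPASS with a suitable password: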

MariaDB [(none)]> GRANT ALL PRIVILEGES ON neutron.* TO 'neutron'@'localhost' \

-> IDENTIFIED BY 'NEUTRON_DBPASS';

MariaDB [(none)]> GRANT ALL PRIVILEGES ON neutron.* TO 'neutron'@'%' \

-> IDENTIFIED BY 'NEUTRON_DBPASS';

MariaDB [(none)]> exit

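12.2 Source the admin credentials to gain access to admin-only CLI commands:

[root@controller ~]# . adminrc

12.3 To create the service credentials, complete these steps:

- 12.3.1 Create the neutron user:

[root@controller ~]# openstack user create --domain default --password-prompt neutron #prompts for a password; enter NEUTRON_PASS

- 12.3.2 Add the admin role to the neutron user: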

[root@controller ~]# openstack role add --project service --user neutron admin

- 12.3.3 Create the neutron service entity:

[root@controller ~]# openstack service create --name neutron \

--description "OpenStack Networking" network

- 12.3.4 Create the networking service API endpoints:

[root@controller ~]# openstack endpoint create --region RegionOne \

network public http://controller:9696

[root@controller ~]# openstack endpoint create --region RegionOne \

network internal http://controller:9696

[root@controller ~]# openstack endpoint create --region RegionOne \

network admin http://controller:9696

This walkthrough installs the second networking option, Networking Option 2: Self-service networks

12.4 Install the components

[root@controller ~]# yum install openstack-neutron openstack-neutron-ml2 \

openstack-neutron-linuxbridge ebtables -y

12.5 Edit the neutron.conf file and complete the following actions:

[root@controller ~]# vim /etc/neutron/neutron.conf

#In the [database] section, configure database access:

[database]

connection = mysql+pymysql://neutron:NEUTRON_DBPASS@controller/neutron

#In the [DEFAULT] section, enable the Modular Layer 2 (ML2) plug-in, the router service, and overlapping IP addresses:

[DEFAULT]

core_plugin = ml2

service_plugins = router

allow_overlapping_ips = true

#In the [DEFAULT] section, configure RabbitMQ message queue access:

[DEFAULT]

transport_url = rabbit://openstack:RABBIT_PASS@controller

#In the [DEFAULT] and [keystone_authtoken] sections, configure identity service access:

[DEFAULT]

auth_strategy = keystone

[keystone_authtoken]

www_authenticate_uri = http://controller:5000

auth_url = http://controller:5000

memcached_servers = controller:11211

auth_type = password

project_domain_name = default

user_domain_name = default

project_name = service

username = neutron

password = NEUTRON_PASS

#In the [DEFAULT] and [nova] sections, configure networking to notify Compute of network topology changes:

[DEFAULT]

notify_nova_on_port_status_changes = true

notify_nova_on_port_data_changes = true

[nova]

auth_url = http://controller:5000

auth_type = password

project_domain_name = default

user_domain_name = default

region_name = RegionOne

project_name = service

username = nova

password = NOVA_PASS

#In the [oslo_concurrency] section, configure the lock path:

[oslo_concurrency]

lock_path = /var/lib/neutron/tmp

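Configure the Modular Layer 2 (ML2) plug-in

The ML2 plug-in uses the Linux bridge mechanism to build layer-2 (bridging and switching) virtual networking infrastructure for instances.

12.6 Edit the ml2_conf.ini file and complete the following actions:

[root@controller ~]# vim /etc/neutron/plugins/ml2/ml2_conf.ini

#In the [ml2] section, enable flat, VLAN, and VXLAN networks:

[ml2]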

type_drivers = flat,vlan,vxlan

#In the [ml2] section, enable VXLAN self-service networks:

[ml2]

tenant_network_types = vxlan

#In the [ml2] section, enable the Linux bridge and layer-2 population mechanisms:

[ml2]

mechanism_drivers = linuxbridge,l2population

#In the [ml2] section, enable the port security extension driver:

[ml2]

extension_drivers = port_security

#In the [ml2_type_flat] section, configure the provider virtual network as a flat network:

[ml2_type_flat]

flat_networks = provider

#In the [ml2_type_vxlan] section, configure the VXLAN network identifier range for self-service networks:

[ml2_type_vxlan]

vni_ranges = 1:1000

#In the [securitygroup] section, enable ipset to increase the efficiency of security group rules:

[securitygroup]

enable_ipset = true

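Configure the Linux bridge agent

The Linux bridge agent builds layer-2 (bridging and switching) virtual networking infrastructure for instances and handles security groups.

12.7 Edit the linuxbridge_agent.ini file and complete the following actions: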

[root@controller ~]# vim /etc/neutron/plugins/ml2/linuxbridge_agent.ini

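#In the [linux_bridge] section, map the provider virtual network to the provider physical network interface (ens34 is the second network interface of the controller):

[linux_bridge]

physical_interface_mappings = provider:ens34

#In the [vxlan] section, enable VXLAN overlay networks, configure the IP address of the physical network interface that handles overlay networks, and enable layer-2 population:

[vxlan]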

enable_vxlan = true

#IP address of the second network interface of the controller

local_ip = 192.168.47.128

l2_population = true

#In the [securitygroup] section, enable security groups and configure the Linux bridge iptables firewall driver:

[securitygroup]

enable_security_group = true

firewall_driver = neutron.agent.linux.iptables_firewall.IptablesFirewallDriver
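The local_ip value must match the address that is actually configured on the second interface, and it is easy to mistype. As a sketch, it can be read from the output of the ip command instead; first_ipv4 is a hypothetical name, and ens34 is the interface name used in this walkthrough:

```shell
#!/usr/bin/env bash
# first_ipv4
# Reads one-line "ip -o -4 addr show" output on stdin and prints the first
# IPv4 address without its prefix length.
first_ipv4() {
    awk '{
        for (i = 1; i < NF; i++)
            if ($i == "inet") { split($(i + 1), a, "/"); print a[1]; exit }
    }'
}
```

Usage: ip -o -4 addr show dev ens34 | first_ipv4 should print 192.168.47.128 on the controller and 192.168.47.129 on compute1.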

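Configure the layer-3 agent

The layer-3 (L3) agent provides routing and NAT services for self-service virtual networks.

12.8 Edit the l3_agent.ini file and complete the following actions:

[root@controller ~]# vim /etc/neutron/l3_agent.ini

#In the [DEFAULT] section, configure the Linux bridge interface driver:

[DEFAULT]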

interface_driver = linuxbridge

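Configure the DHCP agent

The DHCP agent provides DHCP services for virtual networks.

12.9 Edit the dhcp_agent.ini file and complete the following actions:

[root@controller ~]# vim /etc/neutron/dhcp_agent.ini

#In the [DEFAULT] section, configure the Linux bridge interface driver and the Dnsmasq DHCP driver, and enable isolated metadata so that instances on provider networks can access metadata over the network:

[DEFAULT]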

interface_driver = linuxbridge

dhcp_driver = neutron.agent.linux.dhcp.Dnsmasq

enable_isolated_metadata = true

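Configure the metadata agent

The metadata agent provides configuration information, such as credentials, to instances.

12.10 Edit the metadata_agent.ini file and complete the following actions:

[root@controller ~]# vim /etc/neutron/metadata_agent.ini

#In the [DEFAULT] section, configure the metadata host and the shared secret:

[DEFAULT]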

nova_metadata_host = controller

metadata_proxy_shared_secret = METADATA_SECRET

Configure the Compute service to use the networking service

12.11 Edit the nova.conf file and perform the following actions:

[root@controller ~]# vim /etc/nova/nova.conf

[neutron]

url = http://controller:9696

auth_url = http://controller:5000

auth_type = password

project_domain_name = default

user_domain_name = default

region_name = RegionOne

project_name = service

username = neutron

password = NEUTRON_PASS

service_metadata_proxy = true

metadata_proxy_shared_secret = METADATA_SECRET

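12.12 The networking service initialization scripts expect a symbolic link /etc/neutron/plugin.ini that points to the ML2 plug-in configuration file, /etc/neutron/plugins/ml2/ml2_conf.ini. If this symbolic link does not exist, create it with the following command: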

[root@controller ~]# ln -s /etc/neutron/plugins/ml2/ml2_conf.ini /etc/neutron/plugin.ini

12.13 Populate the database:

[root@controller ~]# su -s /bin/sh -c "neutron-db-manage --config-file /etc/neutron/neutron.conf \

--config-file /etc/neutron/plugins/ml2/ml2_conf.ini upgrade head" neutron

12.14 Restart the Compute API service:

[root@controller ~]# systemctl restart openstack-nova-api.service

12.15 Start the networking services and configure them to start at boot.

For both networking options:

[root@controller ~]# systemctl enable neutron-server.service \

neutron-linuxbridge-agent.service neutron-dhcp-agent.service \

neutron-metadata-agent.service

[root@controller ~]# systemctl start neutron-server.service \

neutron-linuxbridge-agent.service neutron-dhcp-agent.service \

neutron-metadata-agent.service

12.16 For networking option 2, also enable and start the layer-3 service:

[root@controller ~]# systemctl enable neutron-l3-agent.service

[root@controller ~]# systemctl start neutron-l3-agent.service

13. Install Neutron (networking service) on compute1

Run on the compute1 node

13.1 Install the components

[root@compute1 ~]# yum install openstack-neutron-linuxbridge ebtables ipset -y

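Configure the common component

The networking common component configuration includes the authentication mechanism, message queue, and plug-in.

13.2 Edit the neutron.conf file and complete the following actions:

[root@compute1 ~]# vim /etc/neutron/neutron.conf

#In the [DEFAULT] section, configure RabbitMQ message queue access:

[DEFAULT]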

transport_url = rabbit://openstack:RABBIT_PASS@controller

#In the [DEFAULT] and [keystone_authtoken] sections, configure identity service access:

[DEFAULT]

auth_strategy = keystone

[keystone_authtoken]

www_authenticate_uri = http://controller:5000

auth_url = http://controller:5000

memcached_servers = controller:11211

auth_type = password

project_domain_name = default

user_domain_name = default

project_name = service

username = neutron

password = NEUTRON_PASS

# In the [oslo_concurrency] section, configure the lock path:

[oslo_concurrency]

lock_path = /var/lib/neutron/tmp

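Configure networking options

Choose the second networking option

Networking Option 2: Self-service networks

Configure the Linux bridge agent

The Linux bridge agent builds the layer-2 (bridging and switching) virtual networking infrastructure for instances and handles security groups.

13.2 Edit the linuxbridge_agent.ini file and complete the following:

[root@compute1 ~]# vim /etc/neutron/plugins/ml2/linuxbridge_agent.ini # edit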

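# In the [linux_bridge] section, map the provider virtual network to the provider physical network interface.

# ens34 is the name of the second network interface.

[linux_bridge]

physical_interface_mappings = provider:ens34

# In the [vxlan] section, enable VXLAN overlay networks, configure the IP address of the physical network interface that handles overlay networks, and enable layer-2 population.

# local_ip is the IP address of the second network interface.

[vxlan]

enable_vxlan = true

local_ip = 192.168.47.129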

l2_population = true

# In the [securitygroup] section, enable security groups and configure the Linux bridge iptables firewall driver:

[securitygroup]

enable_security_group = true

firewall_driver = neutron.agent.linux.iptables_firewall.IptablesFirewallDriver
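
The local_ip value has to be the address that is actually configured on the second interface, and it is easy to mistype. A small sketch for reading it from the system instead is below. parse_ipv4 and iface_ipv4 are hypothetical helper names, and the parsing assumes the one-line output format of ip -o -4 addr show.

```shell
#!/bin/sh
# parse_ipv4: read "ip -o -4 addr show" output on stdin and print the first
# IPv4 address without its prefix length. Hypothetical helper.
parse_ipv4() {
    awk '{
        for (i = 1; i < NF; i++) {
            if ($i == "inet") {
                addr = $(i + 1)
                sub(/\/.*/, "", addr)   # drop the /24 style suffix
                print addr
                exit
            }
        }
    }'
}

# iface_ipv4 IFACE: print the first IPv4 address configured on IFACE.
iface_ipv4() {
    ip -o -4 addr show dev "$1" | parse_ipv4
}
```

On compute1, iface_ipv4 ens34 should print the same address you put in local_ip.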

Configure the Compute service to use the Networking service

13.3 Edit the nova.conf file and complete the following:

[root@compute1 ~]# vim /etc/nova/nova.conf

# In the [neutron] section, configure access parameters:

[neutron]

url = http://controller:9696

auth_url = http://controller:5000

auth_type = password

project_domain_name = default

user_domain_name = default

region_name = RegionOne

project_name = service

username = neutron

password = NEUTRON_PASS

13.4 Restart the Compute service:

[root@compute1 ~]# systemctl restart openstack-nova-compute.service

13.5 Start the Linux bridge agent and configure it to start at system boot:

[root@compute1 ~]# systemctl enable neutron-linuxbridge-agent.service

[root@compute1 ~]# systemctl start neutron-linuxbridge-agent.service

Verify operation

13.6 Return to the controller node and test neutron

Run on the controller node

[root@controller ~]# . adminrc

[root@controller ~]# openstack network agent list

+--------------------------------------+--------------------+------------+-------------------+-------+-------+---------------------------+
| ID                                   | Agent Type         | Host       | Availability Zone | Alive | State | Binary                    |
+--------------------------------------+--------------------+------------+-------------------+-------+-------+---------------------------+
| 0075474c-2e99-4ce5-94a2-e6b97c3d2edf | Metadata agent     | controller | None              | :-)   | UP    | neutron-metadata-agent    |
| 24fbdf5b-e066-4104-ac37-af5ad6b263dc | Linux bridge agent | compute1   | None              | :-)   | UP    | neutron-linuxbridge-agent |
| a9982336-5979-46b3-b663-0cb2a3d4b1e6 | DHCP agent         | controller | nova              | :-)   | UP    | neutron-dhcp-agent        |
| b5fd6eab-c8a7-4dd1-a60b-f44439a26610 | Linux bridge agent | controller | None              | :-)   | UP    | neutron-linuxbridge-agent |
| bf65de64-4949-4545-8560-4ba811508faf | L3 agent           | controller | nova              | :-)   | UP    | neutron-l3-agent          |
+--------------------------------------+--------------------+------------+-------------------+-------+-------+---------------------------+
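
All five agents should show :-) in the Alive column. With more nodes the table gets long, so here is a rough sketch that filters it for you. dead_agents is a hypothetical helper name. It assumes the table layout shown above and treats anything other than :-) in the Alive column as not alive.

```shell
#!/bin/sh
# dead_agents: read the "openstack network agent list" table on stdin and
# print "host binary" for every agent whose Alive column is not ":-)".
# Hypothetical helper; relies on the column order shown in the table above.
dead_agents() {
    awk -F'|' '
        function trim(s) { gsub(/^[ \t]+|[ \t]+$/, "", s); return s }
        # Border and blank lines contain no pipes, so NF stays below 8.
        NF >= 8 && trim($2) != "ID" {
            if (trim($6) != ":-)") print trim($4), trim($8)
        }
    '
}
```

Usage on the controller: openstack network agent list | dead_agents. No output means every agent reported in.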

14. Dashboard

Run on the controller node

14.1 Install the package:

yum install openstack-dashboard -y

14.2 Edit the local_settings file and complete the following:

vim /etc/openstack-dashboard/local_settings

# Configure the dashboard to use OpenStack services on the controller node:

OPENSTACK_HOST = "controller"

# Allow hosts to access the dashboard:

ALLOWED_HOSTS = ['*']  # hosts allowed to access the dashboard; '*' allows every host

# Configure the memcached session storage service:

SESSION_ENGINE = 'django.contrib.sessions.backends.cache'

CACHES = {
    'default': {
        'BACKEND': 'django.core.cache.backends.memcached.MemcachedCache',
        'LOCATION': 'controller:11211',
    }
}

# Enable the Identity API version 3:

OPENSTACK_KEYSTONE_URL = "http://%s:5000/v3" % OPENSTACK_HOST

# Enable support for domains:

OPENSTACK_KEYSTONE_MULTIDOMAIN_SUPPORT = True

# Configure API versions:

OPENSTACK_API_VERSIONS = {
    "identity": 3,
    "image": 2,
    "volume": 2,
}

# Configure Default as the default domain for users created through the dashboard:

OPENSTACK_KEYSTONE_DEFAULT_DOMAIN = "Default"

# Configure user as the default role for users created through the dashboard:

OPENSTACK_KEYSTONE_DEFAULT_ROLE = "user"
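
local_settings is a long file, and a setting left commented out is a common reason the login page misbehaves. The sketch below lists which of the given setting names have no uncommented assignment in the file. missing_settings is a hypothetical helper name of mine.

```shell
#!/bin/sh
# missing_settings FILE NAME...: print each NAME that has no uncommented
# "NAME = ..." assignment at the start of a line in FILE. Hypothetical helper.
missing_settings() {
    file=$1
    shift
    for name in "$@"; do
        grep -Eq "^${name}[[:space:]]*=" "$file" || echo "$name"
    done
}
```

For this step it could be called as: missing_settings /etc/openstack-dashboard/local_settings OPENSTACK_HOST ALLOWED_HOSTS SESSION_ENGINE CACHES OPENSTACK_KEYSTONE_URL OPENSTACK_KEYSTONE_MULTIDOMAIN_SUPPORT OPENSTACK_API_VERSIONS OPENSTACK_KEYSTONE_DEFAULT_DOMAIN OPENSTACK_KEYSTONE_DEFAULT_ROLE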

14.3 If openstack-dashboard.conf does not already contain it, add the following line.

vim /etc/httpd/conf.d/openstack-dashboard.conf

# Add this line

WSGIApplicationGroup %{GLOBAL}

14.4 Restart the web server and the session storage service:

systemctl restart httpd.service memcached.service

Verify access and log in to the dashboard

14.5 Source and view adminrc

[root@controller ~]# . adminrc

[root@controller ~]# cat adminrc

export OS_PROJECT_DOMAIN_NAME=Default

export OS_USER_DOMAIN_NAME=Default

export OS_PROJECT_NAME=admin

export OS_USERNAME=admin

export OS_PASSWORD=ADMIN_PASS

export OS_AUTH_URL=http://controller:5000/v3

export OS_IDENTITY_API_VERSION=3

export OS_IMAGE_API_VERSION=2
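
If an openstack command complains about missing authentication parameters, the usual cause is that adminrc was not sourced in the current shell. A quick sketch to check is below. missing_env is a hypothetical helper name. It prints each variable that is unset or empty in the environment.

```shell
#!/bin/sh
# missing_env NAME...: print each environment variable that is unset or empty.
# Hypothetical helper; only sees exported variables, which adminrc provides.
missing_env() {
    for name in "$@"; do
        [ -n "$(printenv "$name")" ] || echo "$name"
    done
}
```

After sourcing adminrc, missing_env OS_PROJECT_DOMAIN_NAME OS_USER_DOMAIN_NAME OS_PROJECT_NAME OS_USERNAME OS_PASSWORD OS_AUTH_URL OS_IDENTITY_API_VERSION OS_IMAGE_API_VERSION should print nothing.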

14.6 Access the dashboard

> Authenticate using the admin or demo user and the default domain credentials.

http://172.31.100.22/dashboard/

Domain: Default

User name: admin

Password: ADMIN_PASS

Login succeeds.
