Nan(not a number )在pandas表示缺失值

import pandas as pd

import numpy as np

string_data= pd. Series( [ 'aardvark' , 'artichoke' , np. nan, 'avocado' ] )

string_data

0 aardvark

1 artichoke

2 NaN

3 avocado

dtype: object

string_data. isnull( )

0 False

1 False

2 True

3 False

dtype: bool

string_data[ 0 ] = None

string_data. isnull( )

0 True

1 False

2 True

3 False

dtype: bool

dropna:删除缺失数据 fillna:插值方法填充缺失数据 isnull: 返回布尔值,表明哪些是缺失值 notnull :isnull的反面 from numpy import nan as NA

data= pd. Series( [ 1 , NA, 3.5 , NA, 7 ] )

data. dropna( )

0 1.0

2 3.5

4 7.0

dtype: float64

data[ data. notnull( ) ]

0 1.0

2 3.5

4 7.0

dtype: float64

data = pd. DataFrame( [ [ 1 . , 6.5 , 3 . ] , [ 1 . , NA, NA] ,

[ NA, NA, NA] , [ NA, 6.5 , 3 . ] ] )

data

0 1 2 0 1.0 6.5 3.0 1 1.0 NaN NaN 2 NaN NaN NaN 3 NaN 6.5 3.0

cleaned= data. dropna( )

cleaned

data. dropna( how= 'all' )

0 1 2 0 1.0 6.5 3.0 1 1.0 NaN NaN 3 NaN 6.5 3.0

data[ 4 ] = NA

data

0 1 2 4 0 1.0 6.5 3.0 NaN 1 1.0 NaN NaN NaN 2 NaN NaN NaN NaN 3 NaN 6.5 3.0 NaN

data. dropna( axis= 1 , how= 'all' )

0 1 2 0 1.0 6.5 3.0 1 1.0 NaN NaN 2 NaN NaN NaN 3 NaN 6.5 3.0

df= pd. DataFrame( np. random. randn( 7 , 3 ) )

df. iloc[ : 4 , 1 ] = NA

df. iloc[ : 2 , 2 ] = NA

df

0 1 2 0 1.230124 NaN NaN 1 -0.671868 NaN NaN 2 -0.596658 NaN 0.002418 3 -1.061044 NaN -0.246041 4 -0.677290 -1.394329 -1.870510 5 -0.313459 0.133874 -1.172282 6 -0.495465 -0.954127 0.150156

df. dropna( )

0 1 2 4 -0.677290 -1.394329 -1.870510 5 -0.313459 0.133874 -1.172282 6 -0.495465 -0.954127 0.150156

df. dropna( thresh= 2 )

0 1 2 2 -0.596658 NaN 0.002418 3 -1.061044 NaN -0.246041 4 -0.677290 -1.394329 -1.870510 5 -0.313459 0.133874 -1.172282 6 -0.495465 -0.954127 0.150156

df. fillna( 0 )

0 1 2 0 1.230124 0.000000 0.000000 1 -0.671868 0.000000 0.000000 2 -0.596658 0.000000 0.002418 3 -1.061044 0.000000 -0.246041 4 -0.677290 -1.394329 -1.870510 5 -0.313459 0.133874 -1.172282 6 -0.495465 -0.954127 0.150156

df. fillna( { 1 : 0.5 , 2 : 0 } )

0 1 2 0 1.230124 0.500000 0.000000 1 -0.671868 0.500000 0.000000 2 -0.596658 0.500000 0.002418 3 -1.061044 0.500000 -0.246041 4 -0.677290 -1.394329 -1.870510 5 -0.313459 0.133874 -1.172282 6 -0.495465 -0.954127 0.150156

_= df. fillna( 0 , inplace= True )

df

0 1 2 0 1.230124 0.000000 0.000000 1 -0.671868 0.000000 0.000000 2 -0.596658 0.000000 0.002418 3 -1.061044 0.000000 -0.246041 4 -0.677290 -1.394329 -1.870510 5 -0.313459 0.133874 -1.172282 6 -0.495465 -0.954127 0.150156

df= pd. DataFrame( np. random. randn( 6 , 3 ) )

df. iloc[ 2 : , 1 ] = NA

df. iloc[ 4 : , 2 ] = NA

df

0 1 2 0 0.536292 -0.231305 -0.944116 1 -0.216595 1.808402 1.086082 2 -0.457510 NaN -0.617013 3 -0.163709 NaN 0.450099 4 0.969959 NaN NaN 5 1.136978 NaN NaN

df. fillna( method= 'ffill' )

0 1 2 0 0.536292 -0.231305 -0.944116 1 -0.216595 1.808402 1.086082 2 -0.457510 1.808402 -0.617013 3 -0.163709 1.808402 0.450099 4 0.969959 1.808402 0.450099 5 1.136978 1.808402 0.450099

df. fillna( method= 'ffill' , limit= 2 )

0 1 2 0 0.536292 -0.231305 -0.944116 1 -0.216595 1.808402 1.086082 2 -0.457510 1.808402 -0.617013 3 -0.163709 1.808402 0.450099 4 0.969959 NaN 0.450099 5 1.136978 NaN 0.450099

data= pd. Series( [ 1 . , NA, 3.5 , NA, 7 ] )

data

0 1.0

1 NaN

2 3.5

3 NaN

4 7.0

dtype: float64

data. fillna( data. mean( ) )

0 1.000000

1 3.833333

2 3.500000

3 3.833333

4 7.000000

dtype: float64

fillna参数:

value:用于填充缺失值的标量值或字典对象 method:插值方式,未指定方式为ffill axis:默认为axis=0 inplace:如果为true,对原件更改 limit:向前/后可以连续填充最大数目 data = pd. DataFrame( { 'k1' : [ 'one' , 'two' ] * 3 + [ 'two' ] ,

'k2' : [ 1 , 1 , 2 , 3 , 3 , 4 , 4 ] } )

data

k1 k2 0 one 1 1 two 1 2 one 2 3 two 3 4 one 3 5 two 4 6 two 4

data. duplicated( )

0 False

1 False

2 False

3 False

4 False

5 False

6 True

dtype: bool

data. drop_duplicates( )

k1 k2 0 one 1 1 two 1 2 one 2 3 two 3 4 one 3 5 two 4

data[ 'v1' ] = range ( 7 )

data

k1 k2 v1 0 one 1 0 1 two 1 1 2 one 2 2 3 two 3 3 4 one 3 4 5 two 4 5 6 two 4 6

data. drop_duplicates( [ 'k1' ] )

data. drop_duplicates( [ 'k1' , 'k2' ] , keep= 'last' )

k1 k2 v1 0 one 1 0 1 two 1 1 2 one 2 2 3 two 3 3 4 one 3 4 6 two 4 6

data = pd. DataFrame( { 'food' : [ 'bacon' , 'pulled pork' , 'bacon' ,

'Pastrami' , 'corned beef' , 'Bacon' ,

'pastrami' , 'honey ham' , 'nova lox' ] ,

'ounces' : [ 4 , 3 , 12 , 6 , 7.5 , 8 , 3 , 5 , 6 ] } )

data

food ounces 0 bacon 4.0 1 pulled pork 3.0 2 bacon 12.0 3 Pastrami 6.0 4 corned beef 7.5 5 Bacon 8.0 6 pastrami 3.0 7 honey ham 5.0 8 nova lox 6.0

meat_to_animal = {

'bacon' : 'pig' ,

'pulled pork' : 'pig' ,

'pastrami' : 'cow' ,

'corned beef' : 'cow' ,

'honey ham' : 'pig' ,

'nova lox' : 'salmon'

}

meat_to_animal

{'bacon': 'pig',

'pulled pork': 'pig',

'pastrami': 'cow',

'corned beef': 'cow',

'honey ham': 'pig',

'nova lox': 'salmon'}

lowercased= data[ 'food' ] . str . lower( )

lowercased

0 bacon

1 pulled pork

2 bacon

3 pastrami

4 corned beef

5 bacon

6 pastrami

7 honey ham

8 nova lox

Name: food, dtype: object

data[ 'animal' ] = lowercased. map ( meat_to_animal)

data

food ounces animal 0 bacon 4.0 pig 1 pulled pork 3.0 pig 2 bacon 12.0 pig 3 Pastrami 6.0 cow 4 corned beef 7.5 cow 5 Bacon 8.0 pig 6 pastrami 3.0 cow 7 honey ham 5.0 pig 8 nova lox 6.0 salmon

data[ 'food' ]

0 bacon

1 pulled pork

2 bacon

3 Pastrami

4 corned beef

5 Bacon

6 pastrami

7 honey ham

8 nova lox

Name: food, dtype: object

data[ 'food' ] . str . lower( ) . map ( meat_to_animal)

0 pig

1 pig

2 pig

3 cow

4 cow

5 pig

6 cow

7 pig

8 salmon

Name: food, dtype: object

data[ 'food' ] . map ( lambda x: meat_to_animal[ x. lower( ) ] )

0 pig

1 pig

2 pig

3 cow

4 cow

5 pig

6 cow

7 pig

8 salmon

Name: food, dtype: object

data= pd. Series( [ 1 , - 999 , 2 , - 999 , - 1000 , 3 ] )

data

0 1

1 -999

2 2

3 -999

4 -1000

5 3

dtype: int64

data. replace( - 999 , np. nan)

0 1.0

1 NaN

2 2.0

3 NaN

4 -1000.0

5 3.0

dtype: float64

data. replace( [ - 999 , - 1000 ] , np. nan)

0 1.0

1 NaN

2 2.0

3 NaN

4 NaN

5 3.0

dtype: float64

data. replace( [ - 999 , - 1000 ] , [ np. nan, 0 ] )

0 1.0

1 NaN

2 2.0

3 NaN

4 0.0

5 3.0

dtype: float64

data. replace( { - 999 : np. nan, - 1000 : 0 } )

0 1.0

1 NaN

2 2.0

3 NaN

4 0.0

5 3.0

dtype: float64

data = pd. DataFrame( np. arange( 12 ) . reshape( ( 3 , 4 ) ) ,

index= [ 'Ohio' , 'Colorado' , 'New York' ] ,

columns= [ 'one' , 'two' , 'three' , 'four' ] )

data

one two three four Ohio 0 1 2 3 Colorado 4 5 6 7 New York 8 9 10 11

data. index

Index(['Ohio', 'Colorado', 'New York'], dtype='object')

transform= lambda x: x[ : 4 ] . upper( )

data. index. map ( transform)

Index(['OHIO', 'COLO', 'NEW '], dtype='object')

data. rename( index= str . title, columns= str . upper)

ONE TWO THREE FOUR Ohio 0 1 2 3 Colorado 4 5 6 7 New York 8 9 10 11

data. rename( index= { 'Ohio' : 'INDIANA' } ,

columns= { 'three' : 'peekaboo' } )

one two peekaboo four INDIANA 0 1 2 3 Colorado 4 5 6 7 New York 8 9 10 11

data. rename( index= { 'Ohio' : 'INDIANA' } , inplace= True )

data

one two three four INDIANA 0 1 2 3 Colorado 4 5 6 7 New York 8 9 10 11

假设有一组数据划分到不同的年龄组,如何操作?

ages = [ 20 , 22 , 25 , 27 , 21 , 23 , 37 , 31 , 61 , 45 , 41 , 32 ]

bins= [ 18 , 25 , 35 , 60 , 100 ]

cats= pd. cut( ages, bins)

cats

[(18, 25], (18, 25], (18, 25], (25, 35], (18, 25], ..., (25, 35], (60, 100], (35, 60], (35, 60], (25, 35]]

Length: 12

Categories (4, interval[int64]): [(18, 25] < (25, 35] < (35, 60] < (60, 100]]

cats. codes

array([0, 0, 0, 1, 0, 0, 2, 1, 3, 2, 2, 1], dtype=int8)

cats. categories

IntervalIndex([(18, 25], (25, 35], (35, 60], (60, 100]],

closed='right',

dtype='interval[int64]')

pd. value_counts( cats)

(18, 25] 5

(35, 60] 3

(25, 35] 3

(60, 100] 1

dtype: int64

pd. cut( ages, [ 18 , 26 , 36 , 61 , 100 ] , right= False )

[[18, 26), [18, 26), [18, 26), [26, 36), [18, 26), ..., [26, 36), [61, 100), [36, 61), [36, 61), [26, 36)]

Length: 12

Categories (4, interval[int64]): [[18, 26) < [26, 36) < [36, 61) < [61, 100)]

group_names = [ 'Youth' , 'YoungAdult' , 'MiddleAged' , 'Senior' ]

pd. cut( ages, bins, labels= group_names)

['Youth', 'Youth', 'Youth', 'YoungAdult', 'Youth', ..., 'YoungAdult', 'Senior', 'MiddleAged', 'MiddleAged', 'YoungAdult']

Length: 12

Categories (4, object): ['Youth' < 'YoungAdult' < 'MiddleAged' < 'Senior']

data= np. random. rand( 20 )

pd. cut( data, 4 , precision= 2 )

[(0.7, 0.92], (0.7, 0.92], (0.065, 0.28], (0.7, 0.92], (0.065, 0.28], ..., (0.7, 0.92], (0.49, 0.7], (0.28, 0.49], (0.7, 0.92], (0.065, 0.28]]

Length: 20

Categories (4, interval[float64]): [(0.065, 0.28] < (0.28, 0.49] < (0.49, 0.7] < (0.7, 0.92]]

data= np. random. randn( 1000 )

cats= pd. qcut( data, 4 )

cats

[(-3.5669999999999997, -0.673], (-3.5669999999999997, -0.673], (-0.673, -0.039], (-3.5669999999999997, -0.673], (-0.039, 0.631], ..., (-3.5669999999999997, -0.673], (-3.5669999999999997, -0.673], (-0.039, 0.631], (-3.5669999999999997, -0.673], (-3.5669999999999997, -0.673]]

Length: 1000

Categories (4, interval[float64]): [(-3.5669999999999997, -0.673] < (-0.673, -0.039] < (-0.039, 0.631] < (0.631, 3.121]]

pd. value_counts( cats)

(0.631, 3.121] 250

(-0.039, 0.631] 250

(-0.673, -0.039] 250

(-3.5669999999999997, -0.673] 250

dtype: int64

pd. qcut( data, [ 0 , 0.1 , 0.5 , 0.9 , 1 ] )

[(-1.294, -0.039], (-1.294, -0.039], (-1.294, -0.039], (-3.5669999999999997, -1.294], (-0.039, 1.256], ..., (-1.294, -0.039], (-1.294, -0.039], (-0.039, 1.256], (-1.294, -0.039], (-1.294, -0.039]]

Length: 1000

Categories (4, interval[float64]): [(-3.5669999999999997, -1.294] < (-1.294, -0.039] < (-0.039, 1.256] < (1.256, 3.121]]

import pandas as pd

import numpy as np

data= pd. DataFrame( np. random. randn( 1000 , 4 ) )

data. describe( )

0 1 2 3 count 1000.000000 1000.000000 1000.000000 1000.000000 mean 0.007752 -0.032028 -0.037349 -0.036083 std 0.976770 0.974456 0.983429 1.015825 min -3.357288 -3.298192 -2.813273 -3.235629 25% -0.663744 -0.662803 -0.703169 -0.758496 50% 0.011468 -0.026073 -0.087028 -0.063262 75% 0.662235 0.581248 0.639237 0.642911 max 3.320222 2.833708 3.536139 2.816898

col= data[ 2 ]

col[ np. abs ( col) > 3 ]

992 3.536139

Name: 2, dtype: float64

data[ ( np. abs ( data) > 3 ) . any ( 1 ) ]

0 1 2 3 61 0.502113 -3.298192 -1.445427 0.728776 643 0.430629 -3.060744 0.731826 -1.039144 678 0.115404 0.017918 0.058429 -3.235629 718 3.320222 0.486255 0.686823 0.966785 750 -3.206397 -1.836857 1.102002 -0.180903 813 -3.357288 -0.662363 -1.293561 -1.962479 824 -0.950342 2.208761 -0.203996 -3.059786 992 0.920673 -0.688196 3.536139 0.528149

data[ np. abs ( data) > 3 ] = np. sign( data) * 3

data. describe( )

0 1 2 3 count 1000.000000 1000.000000 1000.000000 1000.000000 mean 0.007996 -0.031669 -0.037885 -0.035788 std 0.973907 0.973313 0.981623 1.014933 min -3.000000 -3.000000 -2.813273 -3.000000 25% -0.663744 -0.662803 -0.703169 -0.758496 50% 0.011468 -0.026073 -0.087028 -0.063262 75% 0.662235 0.581248 0.639237 0.642911 max 3.000000 2.833708 3.000000 2.816898

np. sign( data) . head( )

0 1 2 3 0 1.0 1.0 1.0 -1.0 1 -1.0 -1.0 -1.0 -1.0 2 1.0 -1.0 -1.0 -1.0 3 1.0 1.0 -1.0 1.0 4 1.0 1.0 -1.0 1.0

df= pd. DataFrame( np. arange( 5 * 4 ) . reshape( 5 , 4 ) )

df

0 1 2 3 0 0 1 2 3 1 4 5 6 7 2 8 9 10 11 3 12 13 14 15 4 16 17 18 19

sampler= np. random. permutation( 5 )

sampler

array([2, 1, 3, 0, 4])

df. take( sampler)

0 1 2 3 2 8 9 10 11 1 4 5 6 7 3 12 13 14 15 0 0 1 2 3 4 16 17 18 19

df. sample( n= 3 )

choices = pd. Series( [ 5 , 7 , - 1 , 6 , 4 ] )

draws= choices. sample( n= 10 , replace= True )

draws

0 5

1 7

4 4

1 7

0 5

2 -1

3 6

0 5

2 -1

4 4

dtype: int64

将分类变量转化为“哑变量”/指标矩阵

df = pd. DataFrame( { 'key' : [ 'b' , 'b' , 'a' , 'c' , 'a' , 'b' ] ,

'data1' : range ( 6 ) } )

df

key data1 0 b 0 1 b 1 2 a 2 3 c 3 4 a 4 5 b 5

pd. get_dummies( df[ 'key' ] )

a b c 0 0 1 0 1 0 1 0 2 1 0 0 3 0 0 1 4 1 0 0 5 0 1 0

pd.get_dummies:pandas 实现one hot encode的方式

one-hot的基本思想:将离散型特征的每一种取值都看成一种状态,若你的这一特征中有N个不相同的取值,那么我们就可以将该特征抽象成N种不同的状态,one-hot编码保证了每一个取值只会使得一种状态处于“激活态”,也就是说这N种状态中只有一个状态位值为1,其他状态位都是0。

dummies = pd. get_dummies( df[ 'key' ] , prefix= 'key' )

dummies

key_a key_b key_c 0 0 1 0 1 0 1 0 2 1 0 0 3 0 0 1 4 1 0 0 5 0 1 0

如果输入字符串’data1’,得到结果位series

df[ 'data1' ]

0 0

1 1

2 2

3 3

4 4

5 5

Name: data1, dtype: int64

如果输入列表[‘data1’],则返回DataFrame

df[ [ 'data1' ] ]

df_with_dummy= df[ [ 'data1' ] ] . join( dummies)

df_with_dummy

data1 key_a key_b key_c 0 0 0 1 0 1 1 0 1 0 2 2 1 0 0 3 3 0 0 1 4 4 1 0 0 5 5 0 1 0

mnames = [ 'movie_id' , 'title' , 'genres' ]

movies = pd. read_table( 'pydata-book/datasets/movielens/movies.dat' , sep= '::' ,

header= None , names= mnames)

movies

E:\Anaconda\lib\site-packages\pandas\io\parsers.py:765: ParserWarning: Falling back to the 'python' engine because the 'c' engine does not support regex separators (separators > 1 char and different from '\s+' are interpreted as regex); you can avoid this warning by specifying engine='python'.

return read_csv(**locals())

movie_id title genres 0 1 Toy Story (1995) Animation|Children's|Comedy 1 2 Jumanji (1995) Adventure|Children's|Fantasy 2 3 Grumpier Old Men (1995) Comedy|Romance 3 4 Waiting to Exhale (1995) Comedy|Drama 4 5 Father of the Bride Part II (1995) Comedy ... ... ... ... 3878 3948 Meet the Parents (2000) Comedy 3879 3949 Requiem for a Dream (2000) Drama 3880 3950 Tigerland (2000) Drama 3881 3951 Two Family House (2000) Drama 3882 3952 Contender, The (2000) Drama|Thriller

3883 rows × 3 columns

movies. shape

(3883, 3)

all_genres= [ ]

for x in movies. genres:

all_genres. extend( x. split( '|' ) )

all_genres

['Animation',

"Children's",

'Comedy',

'Adventure',

"Children's",

'Fantasy',

'Comedy',

'Romance',

'Comedy',

'Drama',

'Comedy',

'Action',

'Crime',

'Thriller',

'Comedy',

'Romance',

'Adventure',

"Children's",

'Action',

'Action',

'Adventure',

'Thriller',

'Comedy',

'Drama',

'Romance',

'Comedy',

'Horror',

'Animation',

"Children's",

'Drama',

'Action',

'Adventure',

'Romance',

'Drama',

'Thriller',

'Drama',

'Romance',

'Thriller',

'Comedy',

'Action',

'Action',

'Comedy',

'Drama',

'Crime',

'Drama',

'Thriller',

'Thriller',

'Drama',

'Sci-Fi',

'Drama',

'Romance',

'Drama',

'Drama',

'Romance',

'Adventure',

'Sci-Fi',

'Drama',

'Drama',

'Drama',

'Sci-Fi',

'Adventure',

'Romance',

"Children's",

'Comedy',

'Drama',

'Drama',

'Romance',

'Drama',

'Documentary',

'Comedy',

'Comedy',

'Romance',

'Drama',

'Drama',

'War',

'Action',

'Crime',

'Drama',

'Drama',

'Action',

'Adventure',

'Comedy',

'Drama',

'Drama',

'Romance',

'Crime',

'Thriller',

'Animation',

"Children's",

'Musical',

'Romance',

'Drama',

'Romance',

'Crime',

'Thriller',

'Action',

'Drama',

'Thriller',

'Comedy',

'Drama',

"Children's",

'Comedy',

'Drama',

'Adventure',

"Children's",

'Fantasy',

'Drama',

'Drama',

'Romance',

'Drama',

'Mystery',

'Adventure',

"Children's",

'Fantasy',

'Drama',

'Thriller',

'Drama',

'Comedy',

'Comedy',

'Romance',

'Comedy',

'Sci-Fi',

'Thriller',

'Drama',

'Comedy',

'Romance',

'Comedy',

'Action',

'Comedy',

'Crime',

'Horror',

'Thriller',

'Action',

'Comedy',

'Drama',

'Drama',

'Musical',

'Drama',

'Romance',

'Comedy',

'Drama',

'Sci-Fi',

'Thriller',

'Documentary',

'Drama',

'Drama',

'Thriller',

'Drama',

'Crime',

'Drama',

'Romance',

'Drama',

'Drama',

'Comedy',

'Drama',

'Drama',

'Romance',

'Adventure',

'Drama',

"Children's",

'Comedy',

'Comedy',

'Action',

'Thriller',

'Drama',

'Drama',

'Thriller',

'Comedy',

'Romance',

'Drama',

'Action',

'Thriller',

'Comedy',

'Drama',

'Action',

'Thriller',

'Documentary',

'Drama',

'Thriller',

'Comedy',

'Comedy',

'Thriller',

'Comedy',

'Drama',

'Romance',

'Comedy',

'Drama',

'Adventure',

"Children's",

'Comedy',

'Musical',

'Documentary',

'Comedy',

'Action',

'Drama',

'War',

'Drama',

'Thriller',

'Action',

'Adventure',

'Crime',

'Drama',

'Mystery',

'Drama',

'Comedy',

'Documentary',

'Crime',

'Comedy',

'Romance',

'Comedy',

'Drama',

'Drama',

'Comedy',

'Romance',

'Drama',

'Mystery',

'Romance',

'Drama',

'Comedy',

'Adventure',

"Children's",

'Fantasy',

'Drama',

'Documentary',

'Comedy',

'Romance',

'Drama',

'Drama',

'Romance',

'Thriller',

'Comedy',

'Drama',

'Documentary',

'Comedy',

'Documentary',

'Documentary',

'Drama',

'Action',

'Drama',

'Drama',

'Romance',

'Comedy',

'Drama',

'Drama',

'Comedy',

'Action',

'Adventure',

"Children's",

'Drama',

'Drama',

'Crime',

'Drama',

'Thriller',

'Drama',

'Drama',

'Romance',

'War',

'Horror',

'Action',

'Adventure',

'Comedy',

'Crime',

'Drama',

'Drama',

'War',

'Comedy',

'Comedy',

'War',

'Adventure',

"Children's",

'Drama',

'Action',

'Adventure',

'Mystery',

'Sci-Fi',

'Drama',

'Thriller',

'War',

'Documentary',

'Action',

'Romance',

'Thriller',

'Crime',

'Film-Noir',

'Mystery',

'Thriller',

'Action',

'Thriller',

'Comedy',

'Drama',

'Drama',

'Action',

'Adventure',

'Drama',

'Romance',

'Adventure',

"Children's",

'Drama',

'Action',

'Crime',

'Thriller',

'Comedy',

'Action',

'Sci-Fi',

'Thriller',

'Action',

'Adventure',

'Sci-Fi',

'Comedy',

'Drama',

'Comedy',

'Horror',

'Comedy',

'Drama',

'Romance',

'Comedy',

'Action',

"Children's",

'Drama',

'Romance',

'Thriller',

'Drama',

'Sci-Fi',

'Thriller',

'Comedy',

'Comedy',

'Horror',

'Comedy',

'Thriller',

'Drama',

'Documentary',

'Drama',

'Drama',

'Comedy',

'Drama',

'Romance',

'Horror',

'Sci-Fi',

'Drama',

'Action',

'Crime',

'Sci-Fi',

'Drama',

'Musical',

'Thriller',

'Drama',

'Drama',

'Romance',

'Comedy',

'Action',

'Comedy',

'Drama',

'Documentary',

'Drama',

'Romance',

'Action',

'Adventure',

'Drama',

'Western',

'Drama',

'Comedy',

'Drama',

'Drama',

'Drama',

'Romance',

'Comedy',

'Drama',

'Thriller',

'Comedy',

'Drama',

'Drama',

'Horror',

'Drama',

'Romance',

'Comedy',

'Comedy',

'Drama',

'Romance',

'Drama',

'Thriller',

'Thriller',

'Action',

'Comedy',

'Drama',

'Thriller',

'Drama',

'Thriller',

'Comedy',

'Comedy',

'Drama',

'Drama',

'Comedy',

'Comedy',

'Drama',

'Comedy',

'Romance',

'Comedy',

'Romance',

'Adventure',

"Children's",

'Animation',

"Children's",

'Comedy',

'Romance',

'Thriller',

"Children's",

'Drama',

'Drama',

'Musical',

'Comedy',

'Animation',

"Children's",

'Crime',

'Drama',

'Documentary',

'Drama',

'Fantasy',

'Romance',

'Thriller',

'Comedy',

'Drama',

'Romance',

"Children's",

'Comedy',

'Action',

'Comedy',

'Romance',

'Drama',

'Horror',

'Drama',

'Comedy',

'Comedy',

'Sci-Fi',

'Mystery',

'Thriller',

'Adventure',

"Children's",

'Comedy',

'Fantasy',

'Romance',

'Crime',

'Drama',

'Thriller',

'Action',

'Adventure',

'Fantasy',

'Sci-Fi',

'Drama',

"Children's",

'Drama',

'Drama',

'Drama',

'Drama',

'Romance',

'Drama',

'Romance',

'War',

'Western',

'Comedy',

'Drama',

'Drama',

'Drama',

'Romance',

'Drama',

'Drama',

'Drama',

'Horror',

'Comedy',

'Comedy',

'Comedy',

'Romance',

'Drama',

'Comedy',

'Drama',

'Drama',

'Thriller',

'Drama',

'Drama',

'Crime',

'Drama',

'Action',

'Crime',

'Drama',

'Horror',

'Action',

'Sci-Fi',

'Thriller',

'Comedy',

'Romance',

'Action',

'Thriller',

'Comedy',

'Romance',

'Crime',

'Drama',

'Thriller',

'Action',

'Drama',

'Thriller',

'Crime',

'Drama',

'Romance',

'Thriller',

'Comedy',

'Romance',

'Comedy',

'Romance',

'Crime',

'Drama',

'Drama',

'Comedy',

'Drama',

'Drama',

'Drama',

'Romance',

'Drama',

'Romance',

'Action',

'Adventure',

'Western',

'Comedy',

'Drama',

'Comedy',

'Drama',

'Drama',

'Drama',

'Drama',

'Comedy',

'Horror',

'Thriller',

'Comedy',

'Animation',

"Children's",

'Drama',

'Action',

'Action',

'Adventure',

'Sci-Fi',

"Children's",

'Comedy',

'Fantasy',

'Drama',

'Thriller',

'Film-Noir',

'Thriller',

'Drama',

'Comedy',

'Drama',

'Comedy',

'Comedy',

'Drama',

'Action',

'Comedy',

'Musical',

'Sci-Fi',

'Horror',

'Action',

'Adventure',

'Sci-Fi',

'Comedy',

'Horror',

'Drama',

'Horror',

'Sci-Fi',

'Comedy',

'Drama',

'Mystery',

'Thriller',

'Drama',

'War',

'Drama',

'Sci-Fi',

'Thriller',

'Comedy',

'Romance',

'Adventure',

'Drama',

'Drama',

'Comedy',

'Romance',

"Children's",

'Comedy',

'Comedy',

'Drama',

'Drama',

'Musical',

'Drama',

'Comedy',

'Action',

'Adventure',

'Thriller',

'Drama',

'Mystery',

'Thriller',

'Comedy',

'Drama',

'Romance',

'Comedy',

'Action',

'Romance',

'Thriller',

'Drama',

"Children's",

'Comedy',

'Comedy',

'Romance',

'War',

'Comedy',

'Romance',

'Drama',

'Comedy',

'Drama',

'Romance',

'Action',

'Comedy',

'Drama',

'Romance',

'Adventure',

"Children's",

'Romance',

'Documentary',

'Animation',

"Children's",

'Musical',

'Drama',

'Horror',

'Comedy',

'Crime',

'Fantasy',

'Action',

'Comedy',

'Western',

'Drama',

'Comedy',

'Comedy',

'Drama',

'Comedy',

'Drama',

'Thriller',

"Children's",

'Comedy',

'Drama',

'Action',

'Thriller',

'Action',

'Romance',

'Thriller',

'Comedy',

'Romance',

'Action',

'Sci-Fi',

'Action',

'Adventure',

'Comedy',

'Romance',

'Drama',

'Drama',

'Horror',

'Western',

'Action',

'Drama',

'Drama',

'Action',

'Comedy',

'Drama',

'Drama',

'Romance',

'War',

'Action',

'Comedy',

'Drama',

'Crime',

'Drama',

'Adventure',

"Children's",

'Action',

'Action',

'Drama',

'Drama',

'Horror',

'Documentary',

'Drama',

'Drama',

'Action',

'Thriller',

'Comedy',

'Comedy',

'Crime',

'Drama',

'Documentary',

'Action',

'Sci-Fi',

'Drama',

'Horror',

'Thriller',

'Drama',

'Drama',

'Comedy',

'Comedy',

'Drama',

'Comedy',

'Comedy',

'Comedy',

'Thriller',

'Western',

'Comedy',

'Romance',

'Drama',

'Comedy',

'Action',

'Comedy',

'Adventure',

"Children's",

'Thriller',

'Action',

'Thriller',

'Drama',

'Drama',

'Romance',

'Horror',

'Sci-Fi',

'Thriller',

'Mystery',

'Romance',

'Thriller',

'Drama',

'Comedy',

'Drama',

'Crime',

'Drama',

'Comedy',

'Western',

'Comedy',

'Action',

'Adventure',

'Crime',

'Comedy',

'Sci-Fi',

'Drama',

'Thriller',

'Comedy',

'Action',

'Comedy',

'Drama',

'Comedy',

'Romance',

'Comedy',

'Action',

'Sci-Fi',

'Documentary',

'Comedy',

'Romance',

'Comedy',

'Drama',

'Romance',

'Comedy',

'Romance',

'Drama',

'Comedy',

'Comedy',

'Drama',

'Drama',

'Mystery',

'Romance',

'Drama',

'Comedy',

'Drama',

'Thriller',

'Adventure',

"Children's",

'Drama',

'Drama',

'Action',

'Thriller',

'Drama',

'Western',

'Action',

'Comedy',

'Drama',

'Romance',

'Action',

'Adventure',

'Crime',

'Drama',

'Thriller',

'Action',

'Adventure',

'Crime',

'Thriller',

'Action',

'Drama',

'War',

'Action',

'Comedy',

'War',

'Comedy',

'Comedy',

'Romance',

'Drama',

'Romance',

'Comedy',

'Comedy',

'Romance',

'Comedy',

'Drama',

'Comedy',

'War',

'Action',

'Thriller',

'Drama',

'Comedy',

'Drama',

'Drama',

'Comedy',

'Action',

'Action',

'Adventure',

'Sci-Fi',

'Drama',

'Thriller',

'Thriller',

'Drama',

'Adventure',

"Children's",

'Action',

'Comedy',

'Comedy',

'Comedy',

'Western',

'Drama',

'Comedy',

'Thriller',

'Drama',

'Comedy',

'Mystery',

'Action',

'Crime',

'Drama',

'Action',

'Thriller',

'Drama',

'Comedy',

'Drama',

'Romance',

'Comedy',

'Romance',

'Drama',

'Romance',

'Comedy',

'Romance',

'Comedy',

'Drama',

'Action',

"Children's",

'Drama',

'Action',

'Sci-Fi',

'Comedy',

'Drama',

'Action',

'Drama',

'Drama',

'Drama',

'Romance',

'Drama',

'Action',

'Drama',

'Horror',

'Sci-Fi',

'Comedy',

'Mystery',

'Romance',

'Comedy',

'Drama',

'Comedy',

'Drama',

'War',

'Action',

'Drama',

'Mystery',

'Comedy',

'Sci-Fi',

'Thriller',

'Comedy',

'Crime',

'Thriller',

'Action',

'Drama',

'Drama',

'Drama',

'Drama',

'Drama',

'Drama',

'War',

'Drama',

'Drama',

'Drama',

"Children's",

'Drama',

'Comedy',

'Crime',

'Horror',

'Action',

'Drama',

'Romance',

'Drama',

'Drama',

'Comedy',

'Drama',

'Drama',

'Comedy',

'Romance',

'Thriller',

'Film-Noir',

'Sci-Fi',

'Comedy',

'Comedy',

'Romance',

'Thriller',

'Action',

'Drama',

'Action',

'Adventure',

"Children's",

'Sci-Fi',

'Action',

'Adventure',

'Thriller',

'Action',

'Documentary',

'Comedy',

'Romance',

"Children's",

'Comedy',

'Musical',

'Action',

'Adventure',

'Comedy',

'Western',

'Thriller',

'Action',

'Crime',

'Romance',

'Documentary',

'Drama',

'Action',

'Adventure',

'Animation',

"Children's",

'Fantasy',

'Comedy',

'Drama',

'Thriller',

'Comedy',

'Drama',

'Drama',

'Comedy',

'Horror',

'Comedy',

'Romance',

'Drama',

'Comedy',

'Drama',

"Children's",

'Comedy',

'Comedy',

'Drama',

'Drama',

'Drama',

'Drama',

'Comedy',

'Drama',

"Children's",

'Comedy',

'Comedy',

'Adventure',

"Children's",

'Drama',

'Mystery',

'Thriller',

'Drama',

'Documentary',

'Comedy',

'Comedy',

'Drama',

'Drama',

'Comedy',

"Children's",

'Comedy',

'Comedy',

'Romance',

'Thriller',

'Animation',

"Children's",

'Comedy',

'Musical',

'Action',

'Sci-Fi',

'Thriller',

'Adventure',

...]

extend函数:向列表尾部追加一个列表,将列表中的每个元素都追加进来,在原有列表上增加。

genres= pd. unique( all_genres)

genres

array(['Animation', "Children's", 'Comedy', 'Adventure', 'Fantasy',

'Romance', 'Drama', 'Action', 'Crime', 'Thriller', 'Horror',

'Sci-Fi', 'Documentary', 'War', 'Musical', 'Mystery', 'Film-Noir',

'Western'], dtype=object)

zero_matrix= np. zeros( ( len ( movies) , len ( genres) ) )

dummies= pd. DataFrame( zero_matrix, columns= genres)

dummies

Animation Children's Comedy Adventure Fantasy Romance Drama Action Crime Thriller Horror Sci-Fi Documentary War Musical Mystery Film-Noir Western 0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 1 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 2 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 3 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 4 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 ... ... ... ... ... ... ... ... ... ... ... ... ... ... ... ... ... ... ... 3878 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 3879 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 3880 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 3881 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 3882 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0

3883 rows × 18 columns

gen = movies. genres[ 0 ]

gen

"Animation|Children's|Comedy"

gen. split( '|' )

['Animation', "Children's", 'Comedy']

dummies. columns. get_indexer( gen. split( '|' ) )

array([0, 1, 2], dtype=int64)

pandas.index.get_indexer(target, method=None, limit=None, tolerance=None)

作用:确定target的值在给的pandas的index的位置 target:输入的索引 method:选的方法,包括ffill,bfill

returns:从0到n - 1的整数表示这些位置处的索引与相应的target值匹配。

for i , gen in enumerate ( movies. genres) :

indices= dummies. columns. get_indexer( gen. split( '|' ) )

dummies. iloc[ i, indices] = 1

dummies

Animation Children's Comedy Adventure Fantasy Romance Drama Action Crime Thriller Horror Sci-Fi Documentary War Musical Mystery Film-Noir Western 0 1.0 1.0 1.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 1 0.0 1.0 0.0 1.0 1.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 2 0.0 0.0 1.0 0.0 0.0 1.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 3 0.0 0.0 1.0 0.0 0.0 0.0 1.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 4 0.0 0.0 1.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 ... ... ... ... ... ... ... ... ... ... ... ... ... ... ... ... ... ... ... 3878 0.0 0.0 1.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 3879 0.0 0.0 0.0 0.0 0.0 0.0 1.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 3880 0.0 0.0 0.0 0.0 0.0 0.0 1.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 3881 0.0 0.0 0.0 0.0 0.0 0.0 1.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 3882 0.0 0.0 0.0 0.0 0.0 0.0 1.0 0.0 0.0 1.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0

3883 rows × 18 columns

movies_windic= movies. join( dummies. add_prefix( 'Genre_' ) )

movies_windic

movie_id title genres Genre_Animation Genre_Children's Genre_Comedy Genre_Adventure Genre_Fantasy Genre_Romance Genre_Drama ... Genre_Crime Genre_Thriller Genre_Horror Genre_Sci-Fi Genre_Documentary Genre_War Genre_Musical Genre_Mystery Genre_Film-Noir Genre_Western 0 1 Toy Story (1995) Animation|Children's|Comedy 1.0 1.0 1.0 0.0 0.0 0.0 0.0 ... 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 1 2 Jumanji (1995) Adventure|Children's|Fantasy 0.0 1.0 0.0 1.0 1.0 0.0 0.0 ... 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 2 3 Grumpier Old Men (1995) Comedy|Romance 0.0 0.0 1.0 0.0 0.0 1.0 0.0 ... 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 3 4 Waiting to Exhale (1995) Comedy|Drama 0.0 0.0 1.0 0.0 0.0 0.0 1.0 ... 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 4 5 Father of the Bride Part II (1995) Comedy 0.0 0.0 1.0 0.0 0.0 0.0 0.0 ... 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 ... ... ... ... ... ... ... ... ... ... ... ... ... ... ... ... ... ... ... ... ... ... 3878 3948 Meet the Parents (2000) Comedy 0.0 0.0 1.0 0.0 0.0 0.0 0.0 ... 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 3879 3949 Requiem for a Dream (2000) Drama 0.0 0.0 0.0 0.0 0.0 0.0 1.0 ... 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 3880 3950 Tigerland (2000) Drama 0.0 0.0 0.0 0.0 0.0 0.0 1.0 ... 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 3881 3951 Two Family House (2000) Drama 0.0 0.0 0.0 0.0 0.0 0.0 1.0 ... 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 3882 3952 Contender, The (2000) Drama|Thriller 0.0 0.0 0.0 0.0 0.0 0.0 1.0 ... 0.0 1.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0 0.0

3883 rows × 21 columns

movies_windic. iloc[ 0 ]

movie_id 1

title Toy Story (1995)

genres Animation|Children's|Comedy

Genre_Animation 1

Genre_Children's 1

Genre_Comedy 1

Genre_Adventure 0

Genre_Fantasy 0

Genre_Romance 0

Genre_Drama 0

Genre_Action 0

Genre_Crime 0

Genre_Thriller 0

Genre_Horror 0

Genre_Sci-Fi 0

Genre_Documentary 0

Genre_War 0

Genre_Musical 0

Genre_Mystery 0

Genre_Film-Noir 0

Genre_Western 0

Name: 0, dtype: object

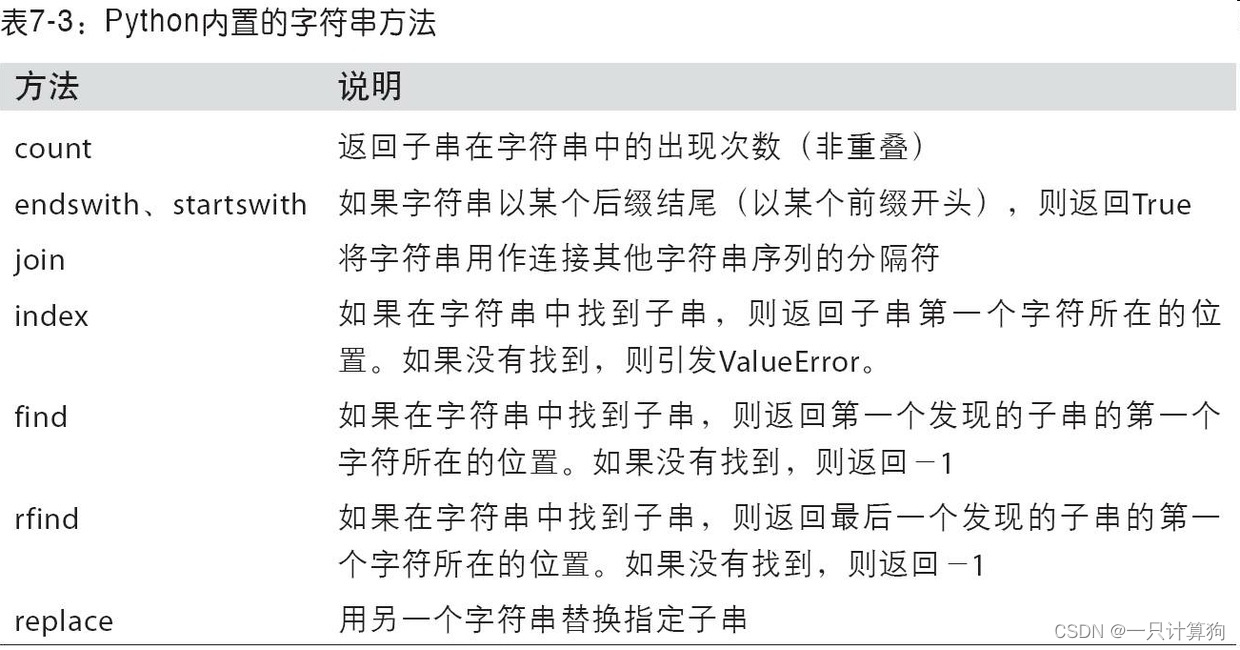

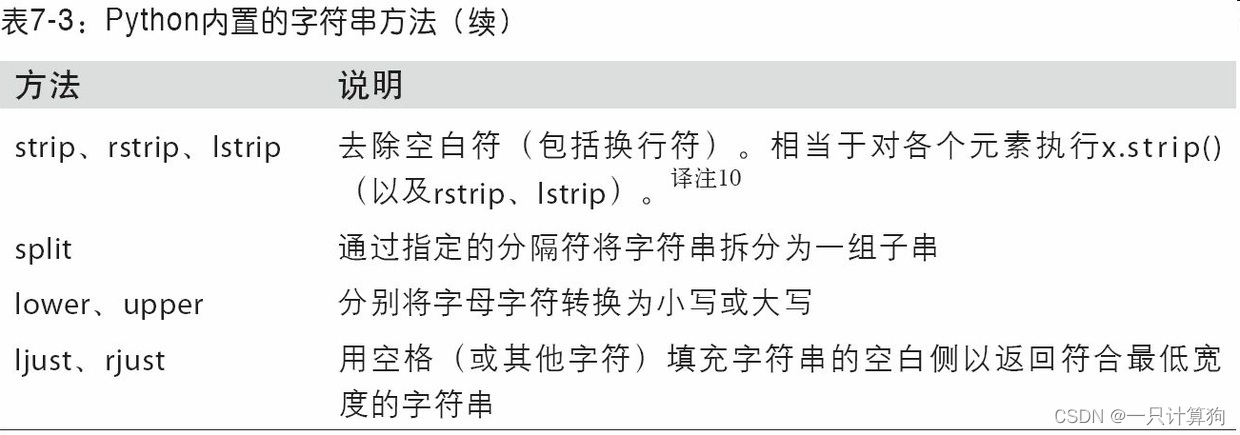

val = 'a,b, guido'

val. split( ',' )

['a', 'b', ' guido']

val= 'a,b, guido '

pieces= [ x. strip( ) for x in val. split( ',' ) ]

pieces

['a', 'b', 'guido']

first, second, third= pieces

first+ '::' + second+ '::' + third

'a::b::guido'

语法: ‘sep’.join(seq)

参数说明

返回值:返回一个以分隔符sep连接各个元素后生成的字符串

'::' . join( pieces)

'a::b::guido'

'guido' in val

True

index() 方法查找指定值的首次出现。

如果找不到该值,index() 方法将引发异常。

index() 方法与 find() 方法几乎相同,唯一的区别是,如果找不到该值,则 find() 方法将返回 -1。

val. index( ',' )

1

val. find( ':' )

-1

val. count( ',' )

2

val. replace( ',' , '::' )

'a::b:: guido '

import re

text = "foo bar\t baz \tqux"

re. split( '\s+' , text)

['foo', 'bar', 'baz', 'qux']

regex= re. compile ( '\s+' )

regex. split( text)

['foo', 'bar', 'baz', 'qux']

regex. findall( text)

[' ', '\t ', ' \t']

data = { 'Dave' : 'dave@google.com' , 'Steve' : 'steve@gmail.com' ,

'Rob' : 'rob@gmail.com' , 'Wes' : np. nan}

data= pd. Series( data)

data

Dave dave@google.com

Steve steve@gmail.com

Rob rob@gmail.com

Wes NaN

dtype: object

data. isnull( )

Dave False

Steve False

Rob False

Wes True

dtype: bool

data. str . contains( 'gmail' )

Dave False

Steve True

Rob True

Wes NaN

dtype: object

556

556

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?