项目地址:

github:Nana-nn/web-final-project (github.com)

知乎:新闻爬虫及爬取结果的查询网站 - 知乎 (zhihu.com)

视频请见:web demo(基于nodejs)_哔哩哔哩_bilibili

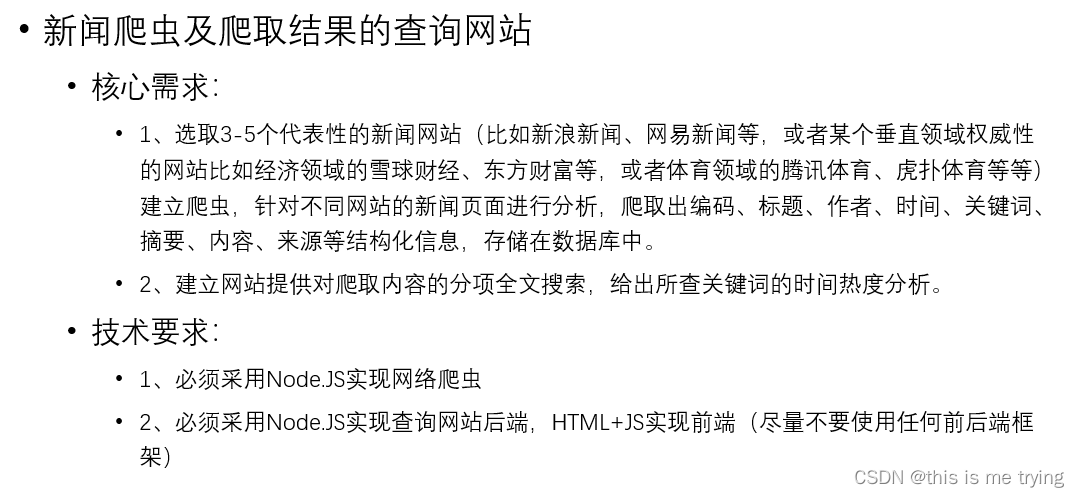

本篇博文是web编程期末大作业专栏的第二篇博文,详细介绍了开发过程中爬虫、前端、后端的设计思路以及重要代码。

二、开发过程

1. 新闻爬虫:

本次爬取了4个网页,新浪新闻,网易新闻,网易体育以及中国新闻网。

首先详细分析新浪新闻的页面爬取,其余3个页面的新闻爬取同新浪新闻大体类似,爬取的时候class或者id或者正则表达式可能对应不同,稍加修改即可。

新浪新闻爬取:

采用Node.JS实现网络爬虫

- 编写sina.js,首先引入必须的模块:

这里request主要是向被爬取的url发送请求,cheerio是为了解析并提取html中指定内容,iconv-lite是为了进行字符编码转换,date-utils是为了后续写入数据库时日期时间格式化,另外连接数据库还需要mysql包,连接数据库并执行sql语句,这在mysql.js中引入。

数据库中需要提前创建fetches的表,所用的sql如下:

DROP TABLE IF EXISTS `fetches`;

CREATE TABLE `fetches` (

`id_fetches` int(11) NOT NULL AUTO_INCREMENT,

`url` varchar(200) DEFAULT NULL,

`source` varchar(200) DEFAULT NULL,

`source_encoding` varchar(45) DEFAULT NULL,

`source_name` varchar(200) DEFAULT NULL,

`title` varchar(200) DEFAULT NULL,

`keywords` varchar(200) DEFAULT NULL,

`author` varchar(200) DEFAULT NULL,

`publish_date` date DEFAULT NULL,

`crawler_time` datetime DEFAULT NULL,

`content` longtext,

`description` longtext,

PRIMARY KEY (`id_fetches`),

UNIQUE KEY `id_fetches_UNIQUE` (`id_fetches`),

UNIQUE KEY `url_UNIQUE` (`url`)

) ENGINE=InnoDB DEFAULT CHARSET=utf8;

- 定义要访问的网站, 定义新闻页面里具体的元素的读取方式,定义哪些url可以作为新闻页面

进入新浪新闻首页:

主页中包含很多新闻连接,通过检查,可以发现在a标签中包含该链接,故但是可能a标签里还包含其他的链接,所以需要url_reg这个正则表达式来进行提取,进而提取出所有的新闻页面。

可以看到我们需要爬取的新闻页面的网址格式类似于:https://news.sina.com.cn/c/2022-07-16/doc-imizmscv1838099.shtml,即一开始是4个数字,中间-,2个数字,中间-,2个数字,之后必须是doc-,再跟15个数字字母,最后.shtml结尾。

之后便是确定keywords,title,date,author,source,description和content在页面中的哪个元素、标签、类、id

查看页面源代码:

可以看到title就是对应title标签,keywords对应meta name="keywords"这一标签的content,description对应meta name="description"这一标签的content,source对应meta name="mediaid"这一标签的content,date对应class="date"的类对应的标签内容

author对应class="show_author"类的文本内容,content对应class="article"类的文本内容

- 构造一个模仿浏览器的request并设置定时执行

-

读取种子页面:

首先用iconv转换编码,用cheerio解析html:

遍历种子页面里所有的a链接,新闻网页url是否符合url命名格式,如果数据库中不存在该新闻,则读取新闻页面。

-

读取新闻页面:

首先用iconv转换编码,用cheerio解析html,动态执行format字符串,构建json对象准备写入数据库,读取新闻页面中的元素并保存到fetch对象中。

-

写入数据库:

可以看到最后爬取到的数据:

综上,爬虫的总体思路如下:

•读取种子页面

•分析出种子页面里的所有新闻链接

•爬取所有新闻链接的内容

•分析新闻页面内容,解析出结构化数据

•将结构化数据保存到数据库

其他网页:

过程同新浪新闻的爬取类似,只不过新闻页面里具体的元素的读取方式,以及定义哪些url可以作为新闻页面不同

网易新闻:

title就是对应title标签,keywords对应meta name="keywords"这一标签的content,description对应meta name="description"这一标签的content,date对应id="ne_wrap"这一标签下data-publishtime

source对应class="post_info"下的第一个子元素的文本内容,content对应class="post_body"的文本内容

网易体育:

跟网易新闻的爬取很类似:

中国新闻网:

2. 前端:

采用express框架,根据菜单的点击来触发页面内容的更换,这里html和css的具体细节请见代码,这里主要详细介绍javascript的编写:

1. 点击查询按钮,触发search,返回搜索结果和图表

代码:

传入要搜索的类型,如’title’或者’content’或者’author’或者’publish_date’或者’title,content’,如果是复合查询,需要传入type,info,info1,symbol,这4个参数的含义分别代表类型,第一个输入框的输入内容,第二个输入框的输入内容,连接类型,为了书写简便,单个查询和复合查询就写在一个函数,统一传4个参数,只不过单个查询的时候info1和symbol不会用到。

数据拿到以后,就需要将原来位置的新闻信息删除,将新的数据内容append到指定位置,并且进行菜单颜色转变以及页面内容改变,构造图表所需要的dom元素,添加到指定位置即可。这里图表的绘制采用Echarts,绘制了2张图表。

function search(type) {

var info = "";

var info1 = "";

var symbol = "";

if (type == 'title') {

info = $('input#title').val();

} else if (type == 'content') {

info = $('input#content').val();

} else if (type == 'author') {

info = $('input#author').val();

} else if (type == 'publish_date') {

info = $('input#publish_date').val();

} else if (type == 'title,content') {

// 复合查询

info = $('input#title1').val();

info1 = $('input#content1').val();

symbol = $("#symbol").val();

}else if(type=='title,source_name'){

// 复合查询

info = $('input#title2').val();

info1 = $('input#content2').val();

symbol = $("#symbol1").val();

}

if (info == "") {

alert("请输入搜索内容");

} else {

var url = "/search";

$.ajax({

type: "GET",

url: url,

data: {

type: type,

info: info,

info1: info1,

symbol: symbol

},

success: function (data) {

origin_data = data;

// 将原来的元素清空

$(".del").remove();

$(".page-item").remove();

$("#delete").remove();

if (currentImageId == 1) {

// 菜单颜色转变

$(`.tm-nav-item:eq(${1})`).removeClass("active");

$(`.tm-nav-item:eq(${3})`).addClass("active");

// 页面内容转变

$(`.site-section-${1}`).fadeOut(function (e) {

$(`.site-section-${3}`).fadeIn();

});

previousImageId = 1;

currentImageId = 3;

bgCycle.cycleToNextImage(previousImageId, currentImageId);

}

// 可能有的长度小于10

var len = 0;

if (data.n_list.length < data.news_per_page) {

len = data.n_list.length;

} else {

len = data.news_per_page;

}

for (var i = 0; i < len; i++) {

// 控制长度,否则可能会溢出

if (data.n_list[i].title.length >= 35) {

data.n_list[i].title = data.n_list[i].title.slice(0, 35) + "......";

}

var title = "<td><a href='" + data.n_list[i].url + "'>" + data.n_list[i].title + "</a></td>";

var author = "<td>" + data.n_list[i].author + "</td>";

var publish_date = "<td>" + data.n_list[i].publish_date.slice(0, 10) + "</td>";

var source = "<td>" + data.n_list[i].source + "</td>";

var keywords = "<td>" + data.n_list[i].keywords + "</td>";

var info = "<td class='right'><input type='submit'class='search_button1'value='查看' οnclick='search_information(" + data.n_list[i].id_fetches + ")' /></td>";

var del = "<tr class='del' id='" + data.n_list[i].id_fetches + "'>";

$("#tbody-title").after($(del + title + author + publish_date + source + keywords + info + "</tr>"));

}

var addCode = "";

if (data.type == "publish_date") {

addCode = '<div class="table-background" id="delete">' +

'<div class="picture_part">' +

'<h5 class="table_title" style="margin-left:20px;padding-top:15px">' + data.n_list[i].publish_date.slice(0, 10) + '爬取的不同网页的新闻数量 搜索类型' + data.type + '</h5>' +

'<div id="date-num" style="width: 500px; height: 400px;margin-left:200px">' +

'</div>' +

'<div id="date-num1" style="width: 500px; height: 400px;margin-top:-400px;margin-left:800px">' +

'</div>' +

'</div>' +

'</div>';

} else {

addCode = '<div class="table-background" id="delete">' +

'<div class="picture_part">' +

'<h5 class="table_title"style="margin-left:20px;padding-top:15px">' + '时间热度分析 搜索类型' + data.type + '</h5>' +

'<div id="date-num" style="width: 500px; height: 400px;margin-left:200px">' +

'</div>' +

'<div id="date-num1" style="width: 500px; height: 400px;margin-top:-400px;margin-left:800px">' +

'</div>' +

'</div>' +

'</div>';

}

$("#picture").after($(addCode));

// 第一页是active

var nav_str = "<li class='page-item active' > <a class='page-link' οnclick='goto_page(" + 1 + ")'>" + 1 + "</a></li>";

$("#navs").append($(nav_str));

// // 从第二页开始

for (var i = 1; i < data.page_num; i++) {

var j = i + 1;

var nav_str = "<li class='page-item' > <a class='page-link' οnclick='goto_page(" + j + ")'>" + j + "</a></li>";

$("#navs").append($(nav_str));

}

var data_x = [];

var data_y = [];

if (data.type == "publish_date") {

for (var i = 0; i < data.charts.length; i++) {

data_x.push(data.charts[i].source_name);

data_y.push(data.charts[i].cnt);

}

} else {

for (var i = 0; i < data.charts.length; i++) {

data_x.push(data.charts[i].publish_date.slice(0, 10));

data_y.push(data.charts[i].cnt);

}

}

data_x.reverse();

data_y.reverse();

// 准备图表

var chart_date_num = echarts.init(document.getElementById('date-num'));

var option = {

legend: {

data: ['新闻数量']

},

xAxis: {

data: data_x

},

yAxis: {},

tooltip: {

trigger: 'item',

},

series: [{

name: '新闻数量',

type: 'bar',

data: data_y,

itemStyle: {

normal: {

color: ' #1e3c72',

lineStyle: {

color: ' #e9ecef'

}

}

},

}],

};

chart_date_num.setOption(option);

var chart_date_num1 = echarts.init(document.getElementById('date-num1'));

var option1 = {

legend: {

data: ['新闻数量']

},

xAxis: {

data: data_x

},

yAxis: {},

tooltip: {

trigger: 'item',

},

series: [{

name: '新闻数量',

type: 'line',

data: data_y,

itemStyle: {

normal: {

color: ' #f7b924',

lineStyle: {

color: ' #f7b924'

}

}

},

}],

};

chart_date_num1.setOption(option1);

},

error: function (XMLHttpRequest, textStatus, errorThrown) {

alert("无法获得search");

window.location = "/index.html";

}

});

}

}

这里search函数搜索的结果展现的是第一页的内容,后面当点击页码的时候,会触发goto_page(page)从而修改页面内容为第page页的内容。

2. 点击页码展示搜索结果新闻或者所有新闻的某一页

function goto_page(page_num) {

$(".del").remove();

$(".page-item").remove();

var select_data = origin_data.n_list.slice((page_num - 1) * origin_data.news_per_page, page_num * origin_data.news_per_page);

for (var i=select_data.length-1; i>=0; i--) {

// 控制长度,否则可能会溢出

if (select_data[i].title.length >= 35) {

select_data[i].title = select_data[i].title.slice(0, 35) + "......";

}

var title = "<td><a href='" + select_data[i].url + "'>" + select_data[i].title + "</a></td>";

var author = "<td>" + select_data[i].author + "</td>";

var publish_date = "<td>" + select_data[i].publish_date.slice(0, 10) + "</td>";

var source = "<td>" + select_data[i].source + "</td>";

var keywords = "<td>" + select_data[i].keywords + "</td>";

var info = "<td class='right'><input type='submit' class='search_button1'value='查看' οnclick='search_information(" + select_data[i].id_fetches + ")' /></td>";

var del = "<tr class='del' id='" + select_data[i].id_fetches + "'>";

$("#tbody-title").after($(del + title + author + publish_date + source + keywords + info + "</tr>"));

}

for (var i = 0; i < origin_data.page_num; i++) {

var j = i + 1;

var nav_str = "<li class='page-item' > <a class='page-link' οnclick='goto_page(" + j + ")'>" + j + "</a></li>";

if (j == page_num) {

var nav_str = "<li class='page-item active' > <a class='page-link' οnclick='goto_page(" + j + ")'>" + j + "</a></li>";

}

$("#navs").append($(nav_str));

}

}

这里的origin_data是一个全局变量,每次点击菜单中的新闻或者点击搜索中的查询按钮的时候都会对origin_data进行一个赋值,这样在触发goto_page或者goto_page1的时候就可以根据传入的page_num,对origin_data进行slice。

goto_page和goto_page1的逻辑一样,只不过goto_page针对搜索结果的分页,goto_page1是对数据库的所有新闻的分页,都是先将页面上已有的表格内容清空,然后再添加页面信息进去。

3. 展现数据库中的所有新闻的第一页

不需要传入参数,先将页面上已有的表格内容清空,然后再添加页面信息进去。

function all_news() {

var url = "/all_news";

$.ajax({

type: "GET",

url: url,

data: {

},

success: function (data) {

origin_data = data;

$(".del1").remove();

$(".page-item1").remove();

// $("#delete").remove();

// $("#head").html("新闻");

for (var i = 0; i < data.news_per_page; i++) {

var title = "<td><a href='" + data.n_list[i].url + "'>" + data.n_list[i].title + "</a></td>";

var author = "<td>" + data.n_list[i].author + "</td>";

var publish_date = "<td>" + data.n_list[i].publish_date.slice(0, 10) + "</td>";

var source = "<td>" + data.n_list[i].source + "</td>";

var keywords = "<td>" + data.n_list[i].keywords + "</td>";

var info = "<td class='right'><input type='submit'class='search_button1'value='查看' οnclick='news_information(" + data.n_list[i].id_fetches + ")' /></td>";

var del = "<tr class='del1' id='" + data.n_list[i].id_fetches + "'>";

$("#tbody-title1").after($(del + title + author + publish_date + source + keywords + info + "</tr>"));

}

// // 第一页是active

var nav_str = "<li class='page-item1 active' > <a class='page-link' οnclick='goto_page1(" + 1 + ")'>" + 1 + "</a></li>";

$("#navs1").append($(nav_str));

// // 从第二页开始

for (var i = 1; i < data.page_num; i++) {

var j = i + 1;

var nav_str = "<li class='page-item1' > <a class='page-link' οnclick='goto_page1(" + j + ")'>" + j + "</a></li>";

$("#navs1").append($(nav_str));

}

},

error: function (XMLHttpRequest, textStatus, errorThrown) {

alert("无法获得all_news");

window.location = "/index.html";

}

});

}

4. 查看新闻的详细信息:

分为两个函数,分别对应着查看所有新闻详细信息和查看搜索结果的详细信息,逻辑都是一样的,这里以查看搜索结果的详细信息为例:

function search_information(id_fetches) {

var url = "/news_information";

document.getElementById("back-pop1").style.display = "block";

$.ajax({

type: "GET",

url: url,

data: {

id_fetches: id_fetches

},

success: function (data) {

$("#url_pop1").html("url: " + "<a href='" + data.new_info.url + "'>" + data.new_info.url + "</a>");

$("#source1").html("来源: " + data.new_info.source);

$("#title_pop1").html("标题: " + data.new_info.title);

$("#keywords1").html("关键词: " + data.new_info.keywords);

$("#author_pop1").html("作者: " + data.new_info.author);

$("#date1").html("发表日期: " + data.new_info.publish_date);

$("#crawler_time1").html("爬取日期: " + data.new_info.crawler_time);

$("#summary1").html("摘要: " + data.new_info.description);

$("#content_pop1").html("内容: " + data.new_info.content);

},

error: function (XMLHttpRequest, textStatus, errorThrown) {

alert("无法获得news_information");

window.location = "/index.html";

}

});

}

传入参数是id_fetches,这里是要有弹窗出现的,所以需要

document.getElementById("back-pop1").style.display = "block";

然后将对应元素的内容添加即可。

5. 按照发表时间升序:

其实同all_news()逻辑一样,只是后端的sql语句中修改一下:

function top_order(){

var url = "/all_news_order";

$.ajax({

type: "GET",

url: url,

data: {

},

success: function (data) {

origin_data = data;

$(".del1").remove();

$(".page-item1").remove();

// $("#delete").remove();

// $("#head").html("新闻");

for (var i = data.news_per_page-1; i >=0; i--) {

var title = "<td><a href='" + data.n_list[i].url + "'>" + data.n_list[i].title + "</a></td>";

var author = "<td>" + data.n_list[i].author + "</td>";

var publish_date = "<td>" + data.n_list[i].publish_date.slice(0, 10) + "</td>";

var source = "<td>" + data.n_list[i].source + "</td>";

var keywords = "<td>" + data.n_list[i].keywords + "</td>";

var info = "<td class='right'><input type='submit'class='search_button1'value='查看' οnclick='news_information(" + data.n_list[i].id_fetches + ")' /></td>";

var del = "<tr class='del1' id='" + data.n_list[i].id_fetches + "'>";

$("#tbody-title1").after($(del + title + author + publish_date + source + keywords + info + "</tr>"));

}

// // 第一页是active

var nav_str = "<li class='page-item1 active' > <a class='page-link' οnclick='goto_page1(" + 1 + ")'>" + 1 + "</a></li>";

$("#navs1").append($(nav_str));

// // 从第二页开始

for (var i = 1; i < data.page_num; i++) {

var j = i + 1;

var nav_str = "<li class='page-item1' > <a class='page-link' οnclick='goto_page1(" + j + ")'>" + j + "</a></li>";

$("#navs1").append($(nav_str));

}

},

error: function (XMLHttpRequest, textStatus, errorThrown) {

alert("无法获得all_news");

window.location = "/index.html";

}

});

}

6. 按照发表时间降序排列:

其实同all_news()逻辑一样,只是后端的sql语句中修改一下:

function down(){

var url = "/all_news_desc";

$.ajax({

type: "GET",

url: url,

data: {

},

success: function (data) {

origin_data = data;

$(".del1").remove();

$(".page-item1").remove();

// $("#delete").remove();

// $("#head").html("新闻");

for (var i = 0; i < data.news_per_page; i++) {

var title = "<td><a href='" + data.n_list[i].url + "'>" + data.n_list[i].title + "</a></td>";

var author = "<td>" + data.n_list[i].author + "</td>";

var publish_date = "<td>" + data.n_list[i].publish_date.slice(0, 10) + "</td>";

var source = "<td>" + data.n_list[i].source + "</td>";

var keywords = "<td>" + data.n_list[i].keywords + "</td>";

var info = "<td class='right'><input type='submit'class='search_button1'value='查看' οnclick='news_information(" + data.n_list[i].id_fetches + ")' /></td>";

var del = "<tr class='del1' id='" + data.n_list[i].id_fetches + "'>";

$("#tbody-title1").after($(del + title + author + publish_date + source + keywords + info + "</tr>"));

}

// // 第一页是active

var nav_str = "<li class='page-item1 active' > <a class='page-link' οnclick='goto_page1(" + 1 + ")'>" + 1 + "</a></li>";

$("#navs1").append($(nav_str));

// // 从第二页开始

for (var i = 1; i < data.page_num; i++) {

var j = i + 1;

var nav_str = "<li class='page-item1' > <a class='page-link' οnclick='goto_page1(" + j + ")'>" + j + "</a></li>";

$("#navs1").append($(nav_str));

}

},

error: function (XMLHttpRequest, textStatus, errorThrown) {

alert("无法获得all_news");

window.location = "/index.html";

}

});

}

3. 后端:

采用Node.JS实现后端

为了更好地模块化,有利于开发和维护,利用express框架中的route方法,创建route文件夹,在这里面专门放路由的代码index.js。

后端路由主要是5个逻辑:

1. 返回查询的新闻:

这里包含单个的查询以及复合查询

router.get('/search', function (req, res) {

var n_list = [];

// 查询数据库

var type = req.query.type;

var info1 = req.query.info1;

var info = req.query.info;

var symbol = req.query.symbol;

var s_sql = "";

var c_sql = "";

// 单个的查询:查询标题、内容、作者、发表日期

if (type == 'title') {

s_sql = "select distinct id_fetches, url, keywords,source, title, author, publish_date from fetches where title like '%" + info + "%'";

// 最近7天

c_sql = "select count(*) as cnt, publish_date from fetches where title like'%" + info + "%' group by publish_date order by publish_date desc limit 7";

} else if (type == 'content') {

s_sql = "select distinct id_fetches, url, keywords,source, title, author, publish_date from fetches where content like '%" + info + "%'";

c_sql = "select count(*) as cnt, publish_date from fetches where content like'%" + info + "%' group by publish_date order by publish_date desc limit 7";

} else if (type == 'author') {

s_sql = "select distinct id_fetches, url, keywords,source, title, author, publish_date from fetches where author like '%" + info + "%'";

c_sql = "select count(*) as cnt, publish_date from fetches where author like'%" + info + "%' group by publish_date order by publish_date desc limit 7";

} else if (type == 'publish_date') {

s_sql = "select distinct id_fetches, url,keywords, source, title, author, publish_date from fetches where publish_date like '%" + info + "%'";

// 如果是时间,那么展现在这一天发表的不同来源的新闻个数,分别用柱状体和折线图表现

c_sql = "select count(*) as cnt, source_name from fetches where publish_date like '%" + info + "%' group by source_name order by cnt desc;";

} else if (type == 'title,content') {

if (symbol == "and") {

s_sql = "select distinct id_fetches, url, keywords, source, title, author, publish_date from fetches where title like '%" + info + "%' and " + "content like '%" + info1 + "%'";

c_sql = "select count(*) as cnt, publish_date from fetches where title like '%" + info + "%' and " + "content like '%" + info1 + "%' group by publish_date order by publish_date desc limit 7";

} else {

s_sql = "select distinct id_fetches, url, keywords, source, title, author, publish_date from fetches where title like '%" + info + "%' or " + "content like '%" + info1 + "%'";

c_sql = "select count(*) as cnt, publish_date from fetches where title like '%" + info + "%' or " + "content like '%" + info1 + "%' group by publish_date order by publish_date desc limit 7";

}

}

my_sql.query(c_sql, function (err, result1, fields) {

c_list = result1;

my_sql.query(s_sql, function (err, result, fields) {

if (err) {

console.log(err);

} else {

// 只把前news_per_page * page_num条新闻传过去

n_list = result;

if (n_list.length <= news_per_page * page_num) {

var page = Math.ceil(n_list.length / news_per_page);

// 不足news_per_page * page_num条的新闻,直接传

res.json({

info: info, type: type,

n_list: n_list, page_num: page, news_per_page: news_per_page, charts: c_list

});

} else {

// 超过news_per_page * page_num条的新闻,传前面的news_per_page * page_num条

res.json({

info: info, type: type,

n_list: n_list.slice(0, news_per_page * page_num),

page_num: page_num, news_per_page: news_per_page, charts: c_list

});

}

}

});

});

})

2. 展示数据库中所有的新闻:

router.get('/all_news', function (req, res) {

var s_sql = "select distinct id_fetches, url, keywords,source, title, author, publish_date from fetches";

my_sql.query(s_sql, function (err, result, fields) {

if (err) {

console.log(err);

} else {

// 只把前news_per_page *page_num条新闻传过去

n_list = result;

if (n_list.length <= news_per_page * page_num) {

var page = Math.ceil(n_list.length / news_per_page);

// 不足news_per_page *page_num条的新闻,直接传

res.json({n_list: n_list, page_num: page, news_per_page: news_per_page });

} else {

// 超过news_per_page *page_num条的新闻

res.json({n_list: n_list.slice(0, news_per_page * page_num), page_num: page_num, news_per_page: news_per_page });

}

}

});

})

3. 查看新闻的详细信息:

router.get('/news_information', function (req, res) {

var id_fetches = req.query.id_fetches;

var s_sql = "select distinct url, source, title, keywords, author, publish_date, crawler_time, description, content from fetches where id_fetches=?";

my_sql.query(s_sql, id_fetches, function (err, result, fields) {

res.json({ new_info: result[0] });

});

})

4. 按照发表时间升序排列:

router.get('/all_news', function (req, res) {

var s_sql = "select distinct id_fetches, url, keywords,source, title, author, publish_date from fetches where keywords!='' and title!='' and author!='' and source!=''";

my_sql.query(s_sql, function (err, result, fields) {

if (err) {

console.log(err);

} else {

// 只把前news_per_page *page_num条新闻传过去

n_list = result;

if (n_list.length <= news_per_page * page_num) {

var page = Math.ceil(n_list.length / news_per_page);

// 不足news_per_page *page_num条的新闻,直接传

res.json({n_list: n_list, page_num: page, news_per_page: news_per_page });

} else {

// 超过news_per_page *page_num条的新闻

res.json({n_list: n_list.slice(0, news_per_page * page_num), page_num: page_num, news_per_page: news_per_page });

}

}

});

})

5. 按照发表时间降序排列:

router.get('/all_news_desc', function (req, res) {

var s_sql = "select distinct id_fetches, url, keywords,source, title, author, publish_date from fetches where keywords!='' and title!='' and author!='' and source!='' order by publish_date desc ";

my_sql.query(s_sql, function (err, result, fields) {

if (err) {

console.log(err);

} else {

// 只把前news_per_page *page_num条新闻传过去

n_list = result;

if (n_list.length <= news_per_page * page_num) {

var page = Math.ceil(n_list.length / news_per_page);

// 不足news_per_page *page_num条的新闻,直接传

res.json({n_list: n_list, page_num: page, news_per_page: news_per_page });

} else {

// 超过news_per_page *page_num条的新闻,

res.json({n_list: n_list.slice(0, news_per_page * page_num), page_num: page_num, news_per_page: news_per_page });

}

}

});

})

194

194

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?