/usr/lib/jvm/default-java/bin/java: 没有那个文件或目录

遇到的问题

[hadoop@hadoop100 ~]$ cd /usr/local/spark

[hadoop@hadoop100 spark]$ bin/pyspark

/usr/local/spark/bin/spark-class:行71: /usr/lib/jvm/default-java/bin/java: 没有那个文件或目录

解决办法

大概率是环境变量问题

#指向 JDK 的安装位置

export JAVA_HOME=/usr/lib/jvm/java-1.8.0-openjdk

#java环境

export JAVA_HOME=/usr/lib/jvm/jdk1.8.0_162

export JRE_HOME=${JAVA_HOME}/jre

export CLASSPATH=.:${JAVA_HOME}/lib:${JRE_HOME}/lib

export PATH=${JAVA_HOME}/bin:$PATH

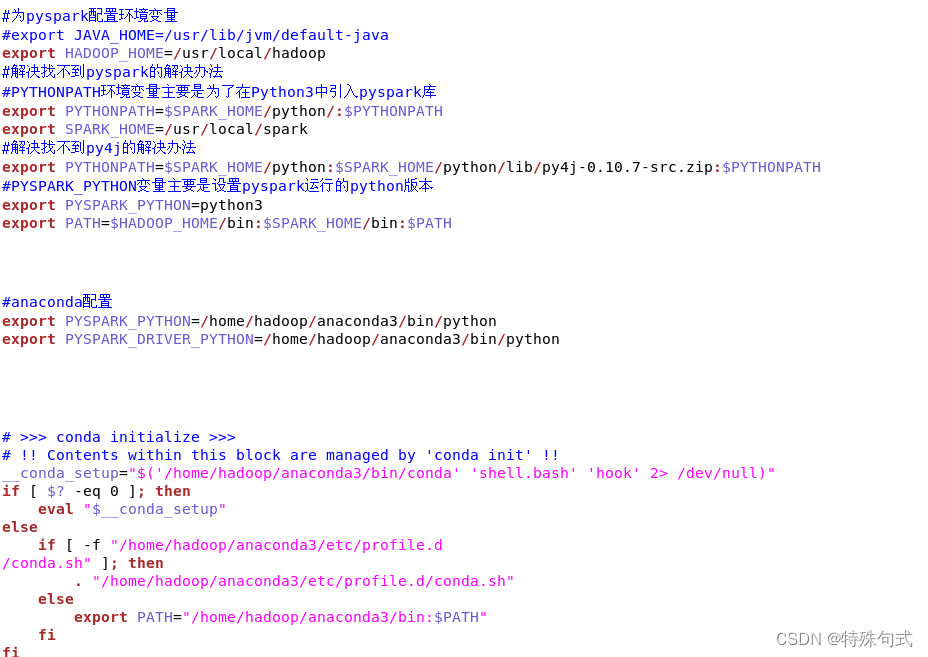

放一个完整环境变量的图,留个纪念,以便于自己下次改错,目前为止,运行时没有问题了

代码:

# .bashrc

export PATH=$PATH:/usr/local/sbin:/usr/local/bin:/sbin:/bin:/usr/

# Source global definitions

if [ -f /etc/bashrc ]; then

. /etc/bashrc

fi

# Uncomment the following line if you don't like systemctl's auto-paging feature:

# export SYSTEMD_PAGER=

# User specific aliases and functions

#指向 JDK 的安装位置

export JAVA_HOME=/usr/lib/jvm/java-1.8.0-openjdkS

#java环境

export JAVA_HOME=/usr/lib/jvm/jdk1.8.0_162

export JRE_HOME=${JAVA_HOME}/jre

export CLASSPATH=.:${JAVA_HOME}/lib:${JRE_HOME}/lib

export PATH=${JAVA_HOME}/bin:$PATH

export PATH=${JAVA_HOME}/bin:$PATH/bin:/home/hadoop/anaconda3/bin

# Hadoop Environment Variables

export HADOOP_HOME=/usr/local/hadoop

export HADOOP_INSTALL=$HADOOP_HOME

export HADOOP_MAPRED_HOME=$HADOOP_HOME

export HADOOP_COMMON_HOME=$HADOOP_HOME

export HADOOP_HDFS_HOME=$HADOOP_HOME

export YARN_HOME=$HADOOP_HOME

export HADOOP_COMMON_LIB_NATIVE_DIR=$HADOOP_HOME/lib/native

export PATH=$PATH:$HADOOP_HOME/sbin:$HADOOP_HOME/bin

#为pyspark配置环境变量

#export JAVA_HOME=/usr/lib/jvm/default-java

export HADOOP_HOME=/usr/local/hadoop

#解决找不到pyspark的解决办法

#PYTHONPATH环境变量主要是为了在Python3中引入pyspark库

export PYTHONPATH=$SPARK_HOME/python/:$PYTHONPATH

export SPARK_HOME=/usr/local/spark

#解决找不到py4j的解决办法

export PYTHONPATH=$SPARK_HOME/python:$SPARK_HOME/python/lib/py4j-0.10.7-src.zip:$PYTHONPATH

#PYSPARK_PYTHON变量主要是设置pyspark运行的python版本

export PYSPARK_PYTHON=python3

export PATH=$HADOOP_HOME/bin:$SPARK_HOME/bin:$PATH

#anaconda配置

export PYSPARK_PYTHON=/home/hadoop/anaconda3/bin/python

export PYSPARK_DRIVER_PYTHON=/home/hadoop/anaconda3/bin/python

# >>> conda initialize >>>

# !! Contents within this block are managed by 'conda init' !!

__conda_setup="$('/home/hadoop/anaconda3/bin/conda' 'shell.bash' 'hook' 2> /dev/null)"

if [ $? -eq 0 ]; then

eval "$__conda_setup"

else

if [ -f "/home/hadoop/anaconda3/etc/profile.d

/conda.sh" ]; then

. "/home/hadoop/anaconda3/etc/profile.d/conda.sh"

else

export PATH="/home/hadoop/anaconda3/bin:$PATH"

fi

fi

大三spark实验中遇到的问题留念而作,如果有问题,请指出

4969

4969

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?