Parsing: XPath

XPath

Using XPath:

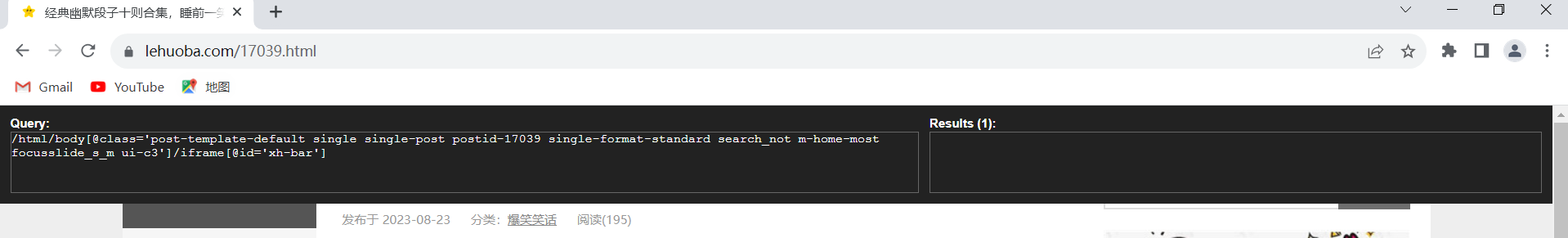

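Note: install the XPath browser extension first.

(1) Open Chrome.

(2) Click the three-dot menu in the top-right corner.

(3) Choose More tools.

(4) Choose Extensions.

(5) Drag the XPath extension (.crx file) onto the Extensions page.

(6) If the .crx file does not work, change its file extension to .zip.

(7) Drag it in again.

(8) Close the browser and reopen it.

(9) Press Ctrl + Shift + X.

(10) A small black box (the extension's query panel) appears.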

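1. Install the lxml library

pip install lxml -i https://pypi.douban.com/simple

2. Import lxml.etree

from lxml import etree

3. etree.parse() parses a local file. The default parser is an XML parser, so the file must be well-formed. For ordinary HTML, pass a parser: etree.parse('xx.html', etree.HTMLParser())

html_tree = etree.parse('xx.html')

4. etree.HTML() parses a server response

html_tree = etree.HTML(response.read().decode('utf-8'))

5. Query with html_tree.xpath('XPath expression'). The return value is always a list.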
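The sketch below shows the entry points above side by side, without a network connection. It makes two assumptions. First, a hypothetical inline HTML string stands in for a real server response. Second, a hypothetical `xx.html` is written to a temporary directory. The file is read with `etree.HTMLParser()` so that an unclosed tag such as `<meta charset="UTF-8">` does not break parsing.

```python
import os
import tempfile

from lxml import etree

# Hypothetical page content standing in for response.read().decode('utf-8')
html_text = '<html><body><h1 id="title">Hello</h1></body></html>'

# etree.HTML() parses a string (e.g. a decoded server response) with the HTML parser
html_tree = etree.HTML(html_text)
print(html_tree.xpath('//h1/text()'))  # xpath() always returns a list

# etree.parse() reads a file; etree.HTMLParser() tolerates HTML that is not well-formed XML
with tempfile.TemporaryDirectory() as tmp_dir:
    path = os.path.join(tmp_dir, 'xx.html')  # hypothetical local file
    with open(path, 'w', encoding='utf-8') as f:
        f.write('<html><head><meta charset="UTF-8"></head>'
                '<body><h1>Local</h1></body></html>')
    file_tree = etree.parse(path, etree.HTMLParser(encoding='utf-8'))
    print(file_tree.xpath('//h1/text()'))
```

Without the `HTMLParser` argument, the unclosed `<meta>` tag in the file would make the default XML parser raise an `XMLSyntaxError`.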

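Basic XPath syntax:

1. Path queries

//: selects all descendant nodes, at any depth

/ : selects direct children only

2. Predicate queries

//div[@id] (div elements that have an id attribute)

//div[@id="maincontent"] (div elements whose id is "maincontent")

3. Attribute queries

//@class (the values of all class attributes)

4. Fuzzy queries

//div[contains(@id,"he")] (id contains "he")

//div[starts-with(@id,"he")] (id starts with "he")

5. Content queries

//div/h1/text() (the text of h1 elements that are direct children of a div)

6. Logical operators

//div[@id="head" and @class="s down"] (both conditions must hold)

//title | //price (union: all title and all price elements)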
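You can try each of the expressions above against a small page. The markup below is hypothetical and made up for illustration. The ids `head` and `maincontent` and the class `s down` mirror the examples. The id `theme` was added so that `contains()` and `starts-with()` give different answers. Because the snippet is well-formed, it is parsed with `etree.fromstring()`, which lets a literal `<price>` element be used.

```python
from lxml import etree

# Hypothetical, well-formed sample page; ids and classes mirror the expressions above
SAMPLE = '''<html>
  <head><title>Book list</title></head>
  <body>
    <h1>Top</h1>
    <div id="head" class="s down"><h1>Header</h1></div>
    <div id="maincontent"><h1>Main</h1><p class="intro">Text</p></div>
    <div id="theme"><price>9.99</price></div>
    <div><h1>No id</h1></div>
  </body>
</html>'''

# The snippet is well-formed, so the XML parser can read it directly
root = etree.fromstring(SAMPLE)

# 1. Path queries: // matches at any depth, / matches direct children only
all_h1 = root.xpath('//h1/text()')
direct_h1 = root.xpath('//body/h1/text()')

# 2. Predicates: filter by attribute presence or attribute value
div_ids = root.xpath('//div[@id]/@id')
main_h1 = root.xpath('//div[@id="maincontent"]/h1/text()')

# 3. Attribute query: returns attribute values, not elements
classes = root.xpath('//@class')

# 4. Fuzzy matching on attribute values
contains_he = root.xpath('//div[contains(@id, "he")]/@id')
starts_he = root.xpath('//div[starts-with(@id, "he")]/@id')

# 5. Text of h1 elements that are direct children of a div
div_h1 = root.xpath('//div/h1/text()')

# 6. Logical operators: "and" inside a predicate, "|" for the union of two paths
head_h1 = root.xpath('//div[@id="head" and @class="s down"]/h1/text()')
title_or_price = [el.text for el in root.xpath('//title | //price')]

for label, value in [('//h1', all_h1), ('//body/h1', direct_h1),
                     ('div ids', div_ids), ('maincontent h1', main_h1),
                     ('//@class', classes), ('contains he', contains_he),
                     ('starts-with he', starts_he), ('//div/h1', div_h1),
                     ('and', head_h1), ('union', title_or_price)]:
    print(label, value)
```

Compare `all_h1` with `direct_h1`: `//h1` also finds the h1 elements nested inside divs, while `//body/h1` only finds the one placed directly in body.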

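```python
from lxml import etree

# Parse a local file with XPath
# (the default parser is an XML parser, so the file must be well-formed)
tree = etree.parse('spider_解析_xpath的基本使用.html')

# tree.xpath('XPath expression')

# li elements under ul
# li_list = tree.xpath('//body/ul/li/text()')

# All li elements that have an id attribute
# text() returns the text content of the element
# li_list = tree.xpath('//ul/li[@id]/text()')

# The li element whose id is "l1" (mind the nesting of single and double quotes)
# li_list = tree.xpath('//ul/li[@id="l1"]/text()')

# The class attribute value of the li element whose id is "l1"
# li = tree.xpath('//ul/li[@id="l1"]/@class')

# li elements whose id contains "l"
# li_list = tree.xpath('//ul/li[contains(@id, "l")]/text()')

# li elements whose id starts with "l"
# li_list = tree.xpath('//ul/li[starts-with(@id, "l")]/text()')

# The li element whose id is "l1" and whose class is "c1"
# li_list = tree.xpath('//ul/li[@id="l1" and @class="c1"]/text()')

# li elements whose id is "l1" or "l2" (| takes the union of two paths)
li_list = tree.xpath('//ul/li[@id="l1"]/text() | //ul/li[@id="l2"]/text()')

# Print the result and check the length of the list
print(li_list)
print(len(li_list))
```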
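The original `spider_解析_xpath的基本使用.html` is not included. The sketch below therefore writes a hypothetical, well-formed stand-in file; the `li` ids, classes and city names are invented. It then runs each query from the block above and prints the result with its length. The id `cl3` is there so that `contains()` and `starts-with()` return different lists.

```python
import os
import tempfile

from lxml import etree

# Hypothetical stand-in for the tutorial's local HTML file (every tag is closed)
SAMPLE = '''<html>
<head><meta charset="UTF-8"/><title>demo</title></head>
<body>
<ul>
    <li id="l1" class="c1">Beijing</li>
    <li id="l2">Shanghai</li>
    <li id="cl3">Shenzhen</li>
    <li>Wuhan</li>
</ul>
</body>
</html>'''

with tempfile.TemporaryDirectory() as tmp_dir:
    path = os.path.join(tmp_dir, 'sample.html')
    with open(path, 'w', encoding='utf-8') as f:
        f.write(SAMPLE)
    # The default XML parser works here because the file is well-formed (note <meta .../>)
    tree = etree.parse(path)

queries = {
    'has id': '//ul/li[@id]/text()',
    'id is l1': '//ul/li[@id="l1"]/text()',
    'class of l1': '//ul/li[@id="l1"]/@class',
    'id contains l': '//ul/li[contains(@id, "l")]/text()',
    'id starts with l': '//ul/li[starts-with(@id, "l")]/text()',
    'id l1 and class c1': '//ul/li[@id="l1" and @class="c1"]/text()',
    'id l1 or l2': '//ul/li[@id="l1"]/text() | //ul/li[@id="l2"]/text()',
}

results = {label: tree.xpath(expr) for label, expr in queries.items()}
for label, value in results.items():
    print(f'{label}: {value} (len={len(value)})')
```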

Using XPath to get the search-button label "百度一下" from the Baidu homepage

```python
import urllib.request

from lxml import etree

url = "https://www.baidu.com/"

headers = {
    'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/116.0.0.0 Safari/537.36'
}

request = urllib.request.Request(url=url, headers=headers)
response = urllib.request.urlopen(request)
content = response.read().decode('utf-8')

# Parse the page source and extract the data we want
# etree.HTML() parses the server response
tree = etree.HTML(content)

# xpath() returns a list, so take the first match
# (this raises IndexError if the page has no element with id "su")
result = tree.xpath('//*[@id="su"]/@value')[0]

print(result)
```
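The live page can differ from what a browser shows; scripted clients may get a reduced page. In that case the `[0]` lookup above raises `IndexError`. Below is a minimal offline sketch that avoids the exception. It assumes a hypothetical snippet shaped like the search button: an `input` with id `su` whose `value` holds the label. `first_match()` is a hypothetical helper that returns a default when nothing matches.

```python
from lxml import etree


def first_match(tree, expr, default=None):
    """Hypothetical helper: first XPath result, or `default` if the result list is empty."""
    results = tree.xpath(expr)
    return results[0] if results else default


# Hypothetical snippet shaped like the search button; not fetched from the live site
snippet = '<form><input type="submit" id="su" value="百度一下" class="bg s_btn"></form>'
tree = etree.HTML(snippet)

label = first_match(tree, '//*[@id="su"]/@value')
missing = first_match(tree, '//*[@id="kw"]/@value', default='')  # no such element here
print(label)
print(repr(missing))
```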

Scraping landscape images from Chinaz (站长素材网)

The code:

```python
import os
import urllib.request

from lxml import etree

# Listing page URL pattern:
# page 1: https://sc.chinaz.com/tupian/fengjing.html
# page 2: https://sc.chinaz.com/tupian/fengjing_2.html
# page 3: https://sc.chinaz.com/tupian/fengjing_3.html


def create_request(page):
    """Build the request for one listing page."""
    if page == 1:
        url = 'https://sc.chinaz.com/tupian/fengjing.html'
    else:
        url = 'https://sc.chinaz.com/tupian/fengjing_' + str(page) + '.html'
    headers = {
        'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/116.0.0.0 Safari/537.36'
    }
    request = urllib.request.Request(url=url, headers=headers)
    return request


def get_content(request):
    """Fetch the page and decode it as UTF-8."""
    response = urllib.request.urlopen(request)
    content = response.read().decode('utf-8')
    return content


def download(content):
    """Extract image names and addresses with XPath and save each image."""
    tree = etree.HTML(content)
    # The image address is read from data-original (the lazy-load attribute), not src
    name_list = tree.xpath('//div[@class="container"]//img/@alt')
    src_list = tree.xpath('//div[@class="container"]//img/@data-original')
    os.makedirs('./fengjing', exist_ok=True)  # urlretrieve does not create the folder
    for name, src in zip(name_list, src_list):
        url = 'https:' + src  # data-original starts with // (no scheme)
        urllib.request.urlretrieve(url=url, filename='./fengjing/' + name + '.png')


if __name__ == '__main__':
    start_page = int(input('Enter the start page: '))
    end_page = int(input('Enter the end page: '))
    for page in range(start_page, end_page + 1):
        request = create_request(page)
        content = get_content(request)
        download(content)
```
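The `download()` function pairs the `alt` list with the `data-original` list by position. This assumes every image has both attributes; if one is missing, the two lists drift out of step. Here is a minimal offline sketch of an alternative, assuming hypothetical listing markup and an invented image host. It selects the `img` elements once and reads both attributes from each element. It then resolves the address with `urllib.parse.urljoin`, which also handles `//...` links without a scheme. `page_url()` and `extract_images()` are hypothetical helper names.

```python
from urllib.parse import urljoin

from lxml import etree


def page_url(page):
    """Hypothetical helper: listing URL for a page, following create_request()."""
    if page == 1:
        return 'https://sc.chinaz.com/tupian/fengjing.html'
    return f'https://sc.chinaz.com/tupian/fengjing_{page}.html'


def extract_images(content, base_url):
    """Hypothetical helper: (name, absolute URL) pairs read per img element."""
    tree = etree.HTML(content)
    pairs = []
    for img in tree.xpath('//div[@class="container"]//img'):
        name = img.get('alt')
        src = img.get('data-original')
        if name and src:  # skip images missing either attribute
            pairs.append((name, urljoin(base_url, src)))
    return pairs


# Hypothetical listing markup; the real page structure may differ
sample = '''
<div class="container">
  <div class="item"><img alt="lake" data-original="//scpic.example.com/lake.jpg"></div>
  <div class="item"><img alt="no-src"></div>
  <div class="item"><img alt="hill" data-original="/files/hill.jpg"></div>
</div>
'''

for name, url in extract_images(sample, page_url(1)):
    print(name, url)
```

With two parallel lists, the image without `data-original` would shift every later name onto the wrong address. Reading both attributes from the same element keeps each pair together.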
