Setting up a Redis cluster on k8s


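This post is excerpted from the article "K8S搭建Redis集群" ("Setting up a Redis cluster on K8S").

The mirror source comes from the Alibaba Cloud developer community.

The DNS resolver file comes from the Alibaba Cloud DNS mirror site.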


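I. Plan

- Decide where Redis will store its persistent data;

- Mount those paths on the worker nodes;

- Write and create the StatefulSet, ConfigMap, Service and other YAML files (the mount path of the DNS resolver file has to be changed);

- Verify the headless Redis cluster;

- Other steps.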

二、 创建NFS存储

- 共享路径的服务器节点上做检查

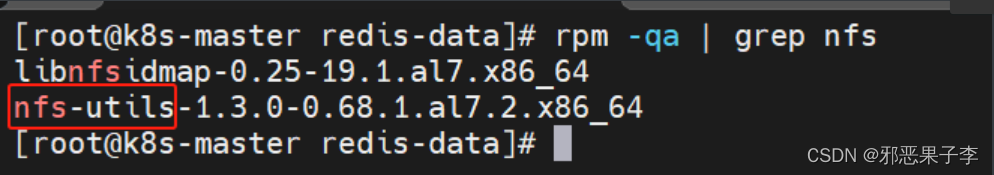

# 前置检查步骤(检查nfs工具包是否存在)

rpm -qa | grep nfs

# If it is not installed, look up which packages provide it

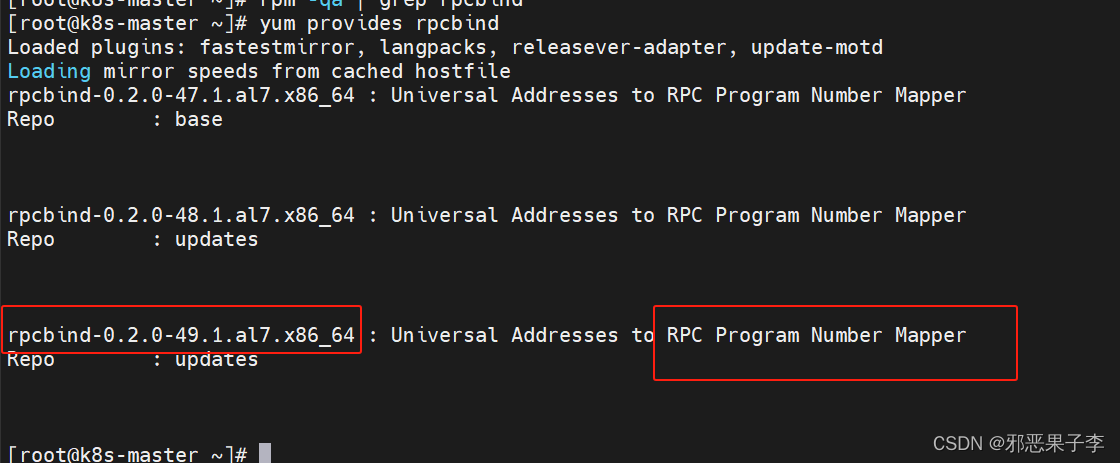

yum provides nfs-utils

# Pick a suitable version and install it

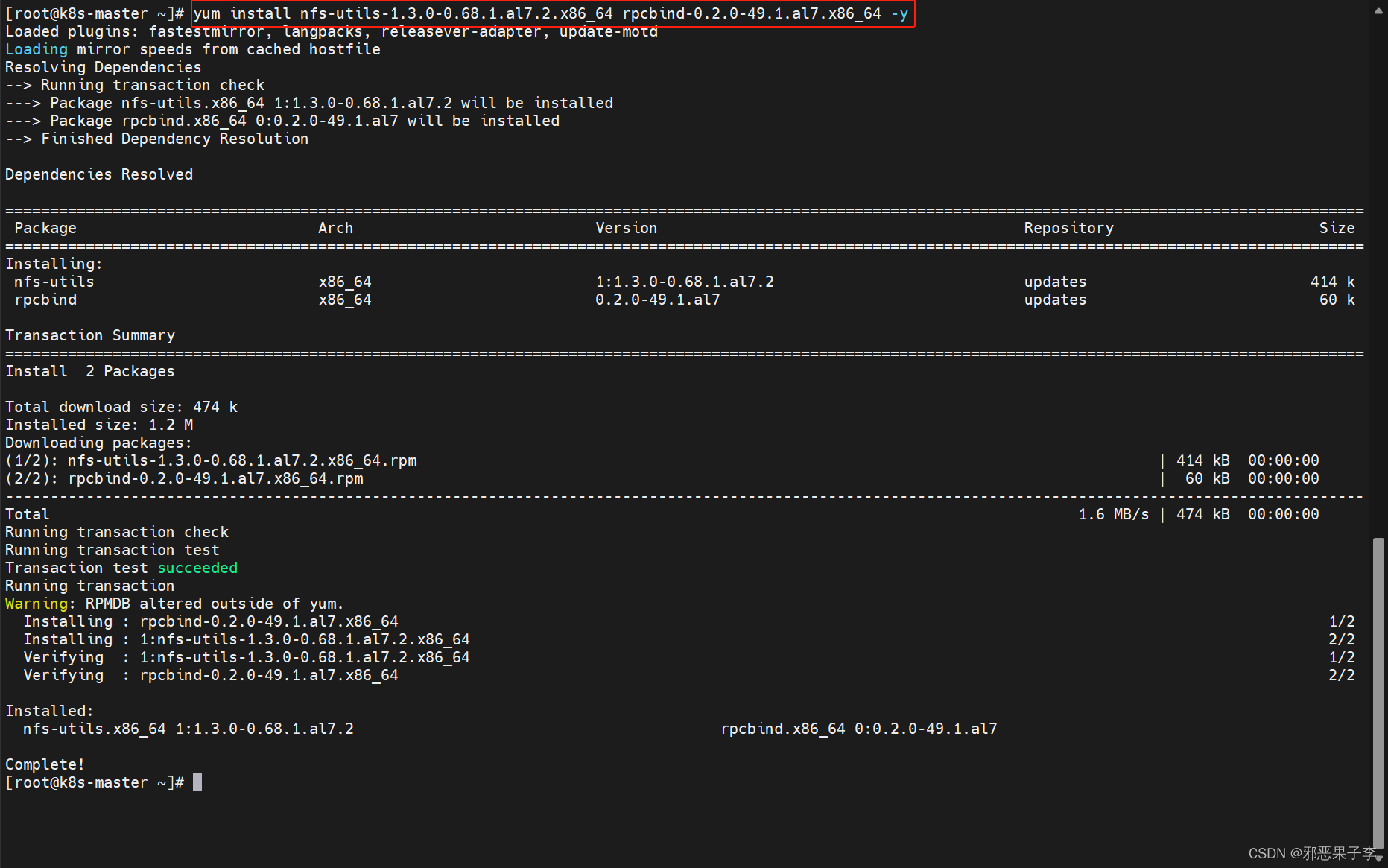

yum install -y nfs-utils-1.3.0-0.68.1.al7.2.x86_64 rpcbind-0.2.0-49.1.al7.x86_64
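The check-then-install steps above can be folded into one small helper. This is only a minimal sketch: `ensure_pkg` is a hypothetical name, not part of the original post, and it assumes an rpm/yum based system like the one used here.

```shell
# Hypothetical helper: install a package only if rpm does not already know it.
# `rpm -q` exits non-zero when the package is not installed.
ensure_pkg() {
  local pkg="$1"
  if rpm -q "$pkg" >/dev/null 2>&1; then
    echo "$pkg already installed"
  else
    echo "installing $pkg"
    yum install -y "$pkg"
  fi
}

# Usage on the NFS server (run as root):
#   ensure_pkg nfs-utils
#   ensure_pkg rpcbind
```

Passing a full name-version-release string such as the pinned ones above should also work, since both `rpm -q` and `yum install` accept that form.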

III. Shared paths (these must be visible to, and mounted by, the worker nodes)

1. On the master node, create the planned shared paths

# Create

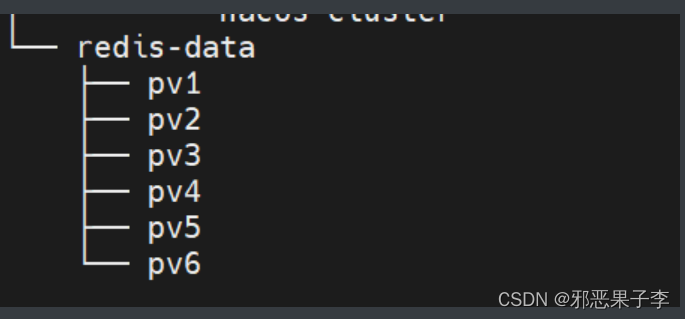

mkdir -p /data/redis-data/{pv1,pv2,pv3,pv4,pv5,pv6}

# Verify

tree -l

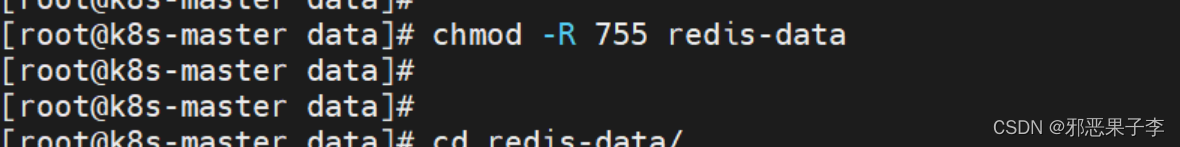

2. Recursively open up the permissions on the directory tree

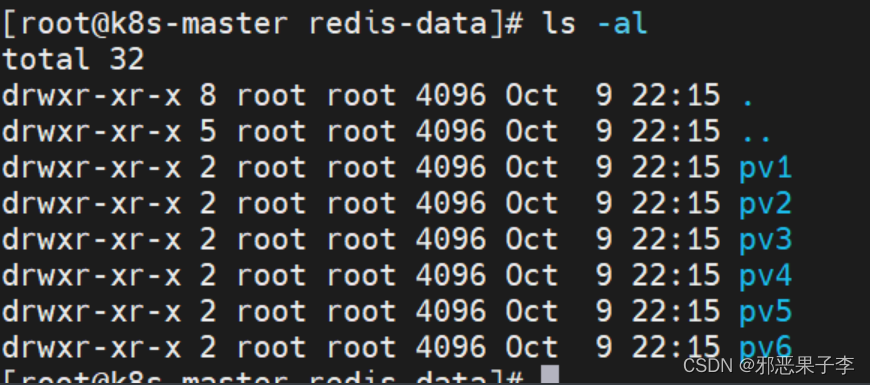

# Verify

ls -al

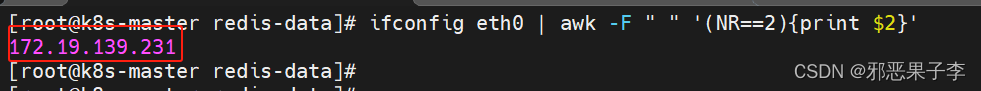

# Print the IP address of eth0

ifconfig eth0 | awk -F " " '(NR==2){print $2}'

3. 把持久化存储路径写进/etc/exports

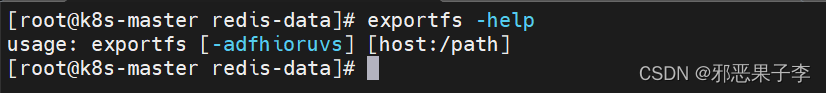

查看刷新共享路径指令

exportfs -a

vim /etc/exports

---------------------------------------------------------

/data/redis-data/pv1 172.19.0.0/16(sync,wdelay,hide,no_subtree_check,sec=sys,rw,secure,no_root_squash,no_all_squash)

/data/redis-data/pv2 172.19.0.0/16(sync,wdelay,hide,no_subtree_check,sec=sys,rw,secure,no_root_squash,no_all_squash)

/data/redis-data/pv3 172.19.0.0/16(sync,wdelay,hide,no_subtree_check,sec=sys,rw,secure,no_root_squash,no_all_squash)

/data/redis-data/pv4 172.19.0.0/16(sync,wdelay,hide,no_subtree_check,sec=sys,rw,secure,no_root_squash,no_all_squash)

/data/redis-data/pv5 172.19.0.0/16(sync,wdelay,hide,no_subtree_check,sec=sys,rw,secure,no_root_squash,no_all_squash)

/data/redis-data/pv6 172.19.0.0/16(sync,wdelay,hide,no_subtree_check,sec=sys,rw,secure,no_root_squash,no_all_squash)

exportfs -help
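Since the six export entries differ only in the directory number, they can also be generated rather than typed by hand. A minimal sketch, assuming the same base path, client network and option string as the entries above; `gen_exports` is a hypothetical helper name.

```shell
# Hypothetical helper: print one /etc/exports entry per PV directory.
# The option string is copied from the entries shown above.
gen_exports() {
  local base="$1" network="$2" count="$3" i
  local opts="sync,wdelay,hide,no_subtree_check,sec=sys,rw,secure,no_root_squash,no_all_squash"
  for i in $(seq 1 "$count"); do
    printf '%s/pv%d %s(%s)\n' "$base" "$i" "$network" "$opts"
  done
}

# Preview the entries first, and append them only after reviewing the output:
#   gen_exports /data/redis-data 172.19.0.0/16 6
#   gen_exports /data/redis-data 172.19.0.0/16 6 >> /etc/exports
```

Printing to standard output by default keeps the helper harmless; nothing touches /etc/exports until the output is redirected explicitly.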

四、路径挂载

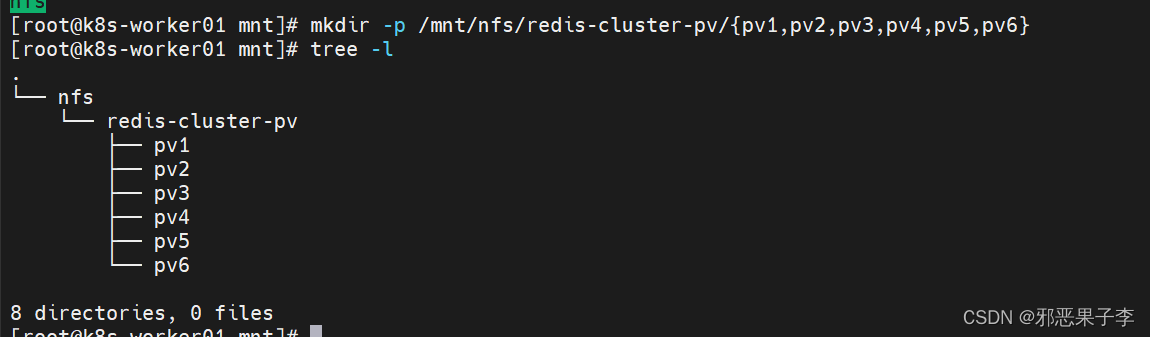

1.创建需要被挂载的共享路径

# 至从节点创建需要被挂载出来的共享路径

mkdir -p /mnt/nfs/redis-cluster-pv/{pv1,pv2,pv3,pv4,pv5,pv6}

chmod -R 777 /mnt/nfs

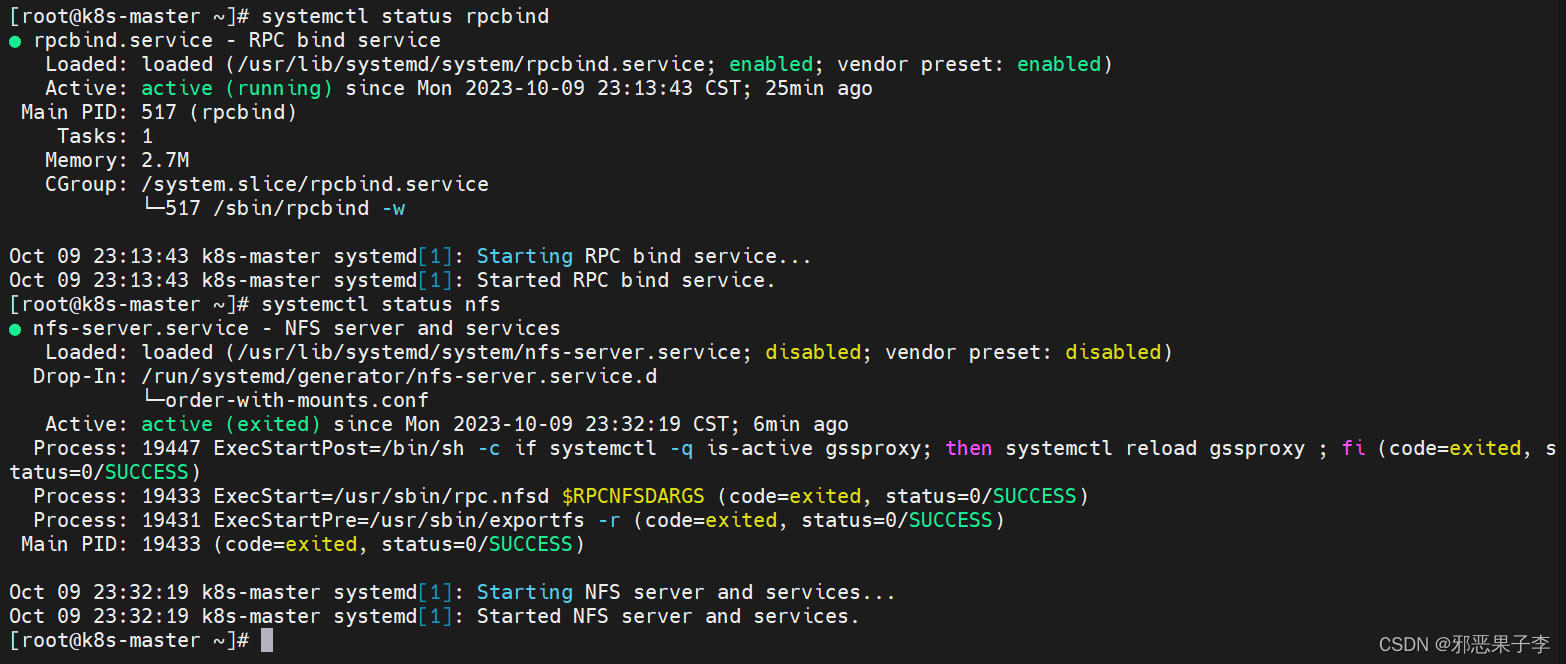

2. Check that the rpcbind and nfs services are running

systemctl status rpcbind

systemctl status nfs

# If they are not active, enable and start them

systemctl enable rpcbind

systemctl enable nfs

systemctl start rpcbind

systemctl start nfs

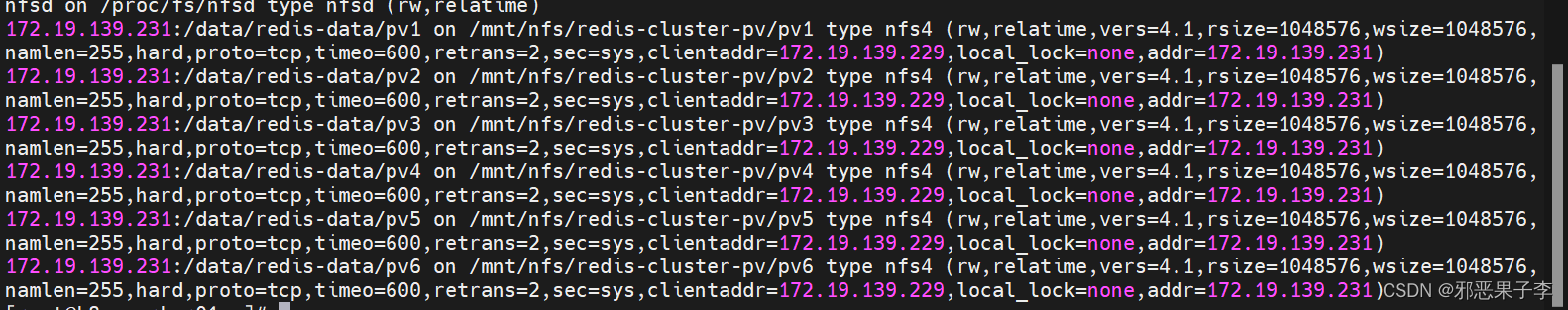
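The status check and the enable/start commands can be combined so that a service is only touched when it is not already running. A minimal sketch, assuming systemd; `ensure_service` is a hypothetical helper name.

```shell
# Hypothetical helper: enable and start a unit only if it is not active yet.
# `systemctl is-active --quiet` exits 0 when the unit is running.
ensure_service() {
  local unit="$1"
  if systemctl is-active --quiet "$unit"; then
    echo "$unit is active"
  else
    echo "starting $unit"
    systemctl enable "$unit"
    systemctl start "$unit"
  fi
}

# Usage (run as root):
#   ensure_service rpcbind
#   ensure_service nfs
```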

3. Mount the paths

# vi /etc/fstab

172.19.139.231:/data/redis-data/pv1 /mnt/nfs/redis-cluster-pv/pv1 nfs defaults 0 0

172.19.139.231:/data/redis-data/pv2 /mnt/nfs/redis-cluster-pv/pv2 nfs defaults 0 0

172.19.139.231:/data/redis-data/pv3 /mnt/nfs/redis-cluster-pv/pv3 nfs defaults 0 0

172.19.139.231:/data/redis-data/pv4 /mnt/nfs/redis-cluster-pv/pv4 nfs defaults 0 0

172.19.139.231:/data/redis-data/pv5 /mnt/nfs/redis-cluster-pv/pv5 nfs defaults 0 0

172.19.139.231:/data/redis-data/pv6 /mnt/nfs/redis-cluster-pv/pv6 nfs defaults 0 0

# Mount everything listed in /etc/fstab

mount -a

# Verify

mount

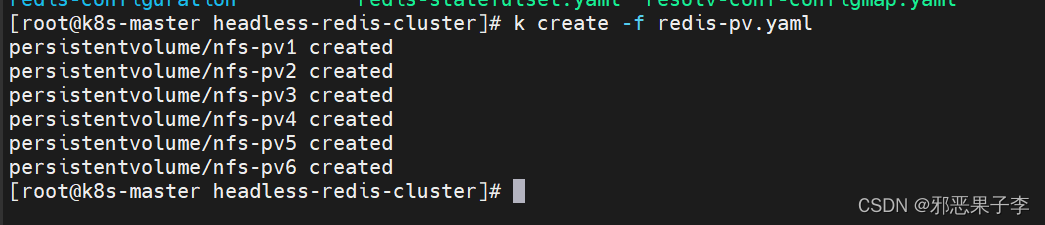
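Scanning the full `mount` output for six entries by eye is error-prone, so the check can be scripted. A minimal sketch, assuming the usual Linux `mount` line format (`<source> on <target> type <fstype> (...)`); `missing_mounts` is a hypothetical helper name.

```shell
# Hypothetical helper: read `mount` output on stdin and print every expected
# mount point (pv1..pvN under the given base) that has no NFS mount entry.
missing_mounts() {
  local base="$1" count="$2" i listing
  listing="$(cat)"
  for i in $(seq 1 "$count"); do
    case "$listing" in
      *" on $base/pv$i type nfs"*) ;;   # matches both nfs and nfs4
      *) echo "$base/pv$i" ;;
    esac
  done
}

# Usage on a worker node; no output means all six shares are mounted:
#   mount | missing_mounts /mnt/nfs/redis-cluster-pv 6
```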

V. Write the YAML files

1. Write redis-pv.yaml to create the persistent volumes

vim redis-pv.yaml

---
apiVersion: v1
kind: PersistentVolume
metadata:
  name: nfs-pv1
spec:
  storageClassName: redis
  capacity:
    storage: 1Gi
  accessModes:
    - ReadWriteMany
  nfs:
    server: 172.19.139.231
    path: "/data/redis-data/pv1"
---
apiVersion: v1
kind: PersistentVolume
metadata:
  name: nfs-pv2
spec:
  storageClassName: redis
  capacity:
    storage: 1Gi
  accessModes:
    - ReadWriteMany
  nfs:
    server: 172.19.139.231
    path: "/data/redis-data/pv2"
---
apiVersion: v1
kind: PersistentVolume
metadata:
  name: nfs-pv3
spec:
  storageClassName: redis
  capacity:
    storage: 1Gi
  accessModes:
    - ReadWriteMany
  nfs:
    server: 172.19.139.231
    path: "/data/redis-data/pv3"
---
apiVersion: v1
kind: PersistentVolume
metadata:
  name: nfs-pv4
spec:
  storageClassName: redis
  capacity:
    storage: 1Gi
  accessModes:
    - ReadWriteMany
  nfs:
    server: 172.19.139.231
    path: "/data/redis-data/pv4"
---
apiVersion: v1
kind: PersistentVolume
metadata:
  name: nfs-pv5
spec:
  storageClassName: redis
  capacity:
    storage: 1Gi
  accessModes:
    - ReadWriteMany
  nfs:
    server: 172.19.139.231
    path: "/data/redis-data/pv5"
---
apiVersion: v1
kind: PersistentVolume
metadata:
  name: nfs-pv6
spec:
  storageClassName: redis
  capacity:
    storage: 1Gi
  accessModes:
    - ReadWriteMany
  nfs:
    server: 172.19.139.231
    path: "/data/redis-data/pv6"
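The six PersistentVolume documents are identical apart from the index, so the file can be produced by a loop instead of copy and paste. A minimal sketch using the same field values as the manifest above; `gen_pv_manifests` is a hypothetical helper name.

```shell
# Hypothetical helper: print N PersistentVolume documents that differ only
# in their index, using the same fields as the hand-written manifest.
gen_pv_manifests() {
  local server="$1" base="$2" count="$3" i
  for i in $(seq 1 "$count"); do
    cat <<EOF
---
apiVersion: v1
kind: PersistentVolume
metadata:
  name: nfs-pv$i
spec:
  storageClassName: redis
  capacity:
    storage: 1Gi
  accessModes:
    - ReadWriteMany
  nfs:
    server: $server
    path: "$base/pv$i"
EOF
  done
}

# Usage:
#   gen_pv_manifests 172.19.139.231 /data/redis-data 6 > redis-pv.yaml
#   kubectl apply -f redis-pv.yaml
```

Generating the file keeps the PV names and NFS paths in step, which is the easiest place for a typo to slip in when editing six near-identical blocks.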

2. Create the resolv.conf ConfigMap object for Redis

vim resolv-conf-configmap.yaml

# ----------------------------------------------------------------------------

---
apiVersion: v1
kind: ConfigMap
metadata:
  name: resolv-conf
data:
  resolv.conf: |
    search default.svc.cluster.local svc.cluster.local cluster.local
    nameserver 223.5.5.5
    nameserver 223.6.6.6
    options ndots:5

# ----------------------------------------------------------------------------

kubectl apply -f resolv-conf-configmap.yaml

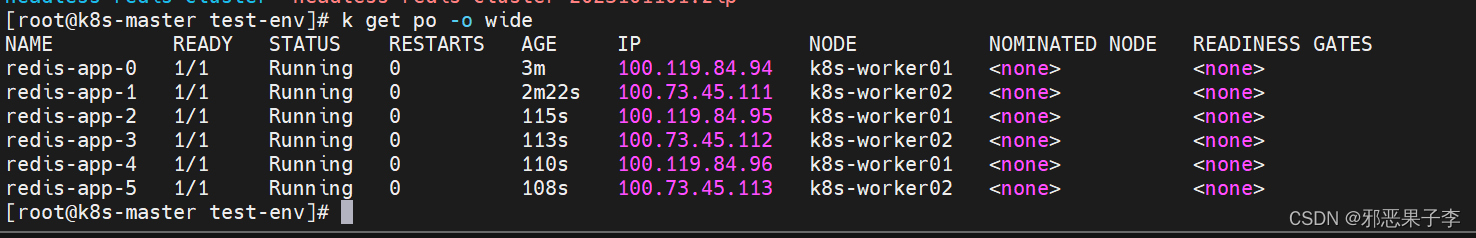
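A resolv.conf file has two limits that are easy to trip over: the glibc resolver honors only the last `search` line when several are present, and it reads at most three `nameserver` lines. A minimal sketch of a pre-flight check for a standalone copy of the file; `check_resolv_conf` is a hypothetical helper name.

```shell
# Hypothetical helper: warn about resolv.conf contents that the glibc
# resolver would silently ignore. Returns non-zero if any warning is printed.
check_resolv_conf() {
  local file="$1" searches nameservers status=0
  # grep -c prints 0 but exits non-zero when nothing matches, hence "|| true".
  searches="$(grep -c '^search[[:space:]]' "$file" || true)"
  nameservers="$(grep -c '^nameserver[[:space:]]' "$file" || true)"
  if [ "$searches" -gt 1 ]; then
    echo "warning: $searches search lines; only the last one takes effect"
    status=1
  fi
  if [ "$nameservers" -gt 3 ]; then
    echo "warning: $nameservers nameserver lines; at most 3 are used"
    status=1
  fi
  return "$status"
}

# Usage, with the file contents saved locally as ./resolv.conf:
#   check_resolv_conf ./resolv.conf && echo "resolv.conf looks fine"
```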

- Check for bare pods (pods not managed by any controller)

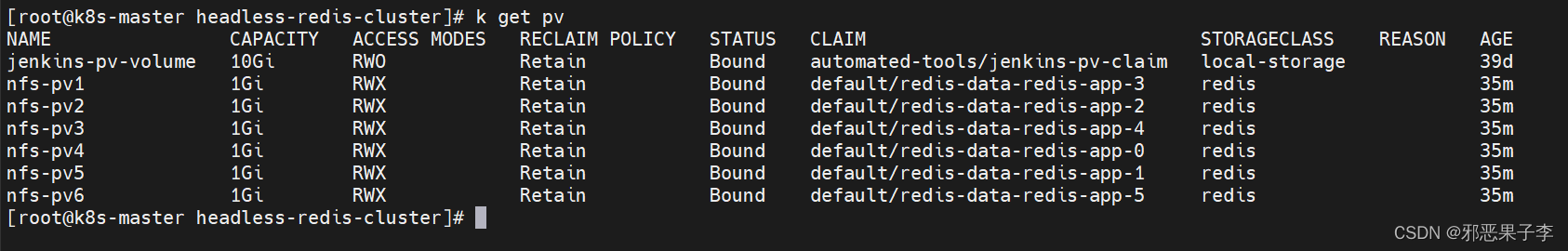

- Check the PV persistent volumes

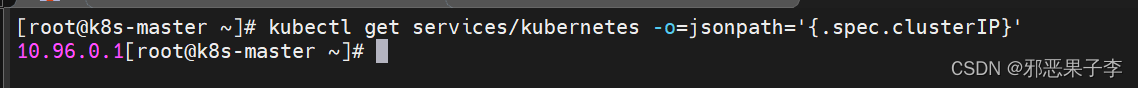
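The PV check can also be scripted so that only problem volumes are reported. A minimal sketch, assuming the input is a two-column "name phase" listing; `unhealthy_pvs` is a hypothetical helper name, and the kubectl invocation in the usage comment relies on the standard `custom-columns` output format.

```shell
# Hypothetical helper: read "NAME PHASE" pairs on stdin and print every PV
# whose phase is neither Available nor Bound (for example Released or Failed).
unhealthy_pvs() {
  awk '$2 != "Available" && $2 != "Bound" { print $1 " " $2 }'
}

# Usage; no output means every PV is usable:
#   kubectl get pv --no-headers \
#     -o custom-columns=NAME:.metadata.name,PHASE:.status.phase | unhealthy_pvs
```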

# Look up the cluster IP of the API server

kubectl get services/kubernetes -o=jsonpath='{.spec.clusterIP}'
