1. Introduction

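As its name suggests, MobileNet V3 improves on MobileNet V1 and MobileNet V2. Its architecture was not designed purely by hand, though. It was found with the help of neural architecture search (NAS). For more details, see the paper Searching for MobileNetV3.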

2. Model Structure

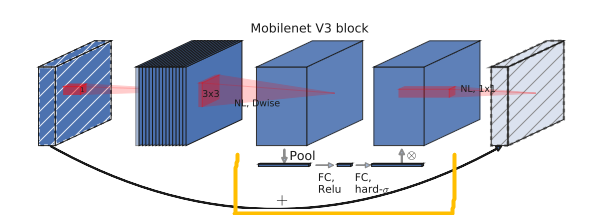

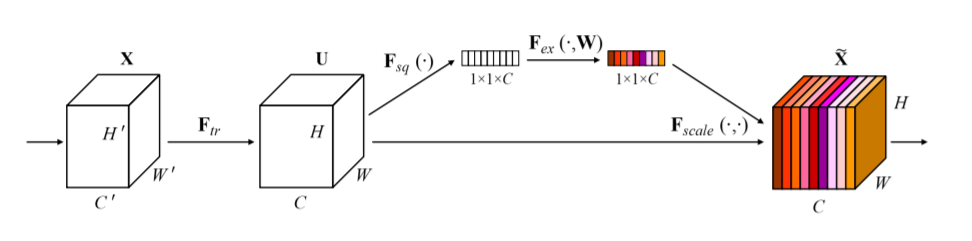

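MobileNet V3 combines the depthwise separable convolutions of MobileNet V1 with the inverted residual blocks of MobileNet V2. I covered both in earlier posts ("MobileNets V1: Introduction and Code Walkthrough" and "MobileNets V2: Introduction and Code Walkthrough"). This post therefore focuses on the SE (Squeeze-and-Excitation) structure used in the MnasNet model. SE is a lightweight attention module, shown in the yellow box in the figure.

Expanding the yellow region gives the figure below. The core idea of SENet is to let the network learn a weight for each feature map (channel) from the loss. Useful feature maps receive large weights, and ineffective or less useful ones receive small weights. Training the model this way gives better results.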
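The squeeze, excitation, and scale steps can be made concrete with a minimal pure-Python sketch on a toy feature map. It is illustrative only, and several details are assumptions:

- The function names `hard_sigmoid` and `squeeze_excite` are hypothetical.
- The weights `w1` and `w2` are hand-picked.
- Biases and BatchNorm are omitted, although the PyTorch `SeModule` in Section 4 does use BatchNorm.
- The gate is the hard sigmoid used by that code, not the standard sigmoid of the original SENet.

```python
def hard_sigmoid(v):
    # ReLU6(v + 3) / 6, the same gate as hsigmoid in the PyTorch code
    return min(max(v + 3.0, 0.0), 6.0) / 6.0


def squeeze_excite(fmap, w1, w2):
    """fmap: C channels, each an H x W list of lists.
    w1: (C // r) rows of length C; w2: C rows of length C // r. Biases omitted."""
    # Squeeze: global average pooling, one scalar per channel
    z = [sum(sum(row) for row in ch) / (len(ch) * len(ch[0])) for ch in fmap]
    # Excitation: FC -> ReLU -> FC -> hard sigmoid, one weight in [0, 1] per channel
    hidden = [max(0.0, sum(w * zi for w, zi in zip(row, z))) for row in w1]
    s = [hard_sigmoid(sum(w * h for w, h in zip(row, hidden))) for row in w2]
    # Scale: multiply every value of channel c by s[c]
    scaled = [[[v * s[c] for v in row] for row in ch] for c, ch in enumerate(fmap)]
    return scaled, s


# Toy input: C = 2 channels of size 2 x 2, reduction r = 2 -> 1 hidden unit
fmap = [[[1.0, 1.0], [1.0, 1.0]],
        [[2.0, 2.0], [2.0, 2.0]]]
w1 = [[1.0, 1.0]]     # 1 x 2, hand-picked for illustration
w2 = [[1.0], [-0.5]]  # 2 x 1, hand-picked for illustration
scaled, s = squeeze_excite(fmap, w1, w2)
print(s)  # [1.0, 0.25]
```

With these weights the first channel keeps its full magnitude and the second is scaled down to a quarter. This is the "large weight for useful maps, small weight for less useful maps" behavior described above.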

3. Model Features

Compared with MobileNet V1 and MobileNet V2, MobileNet V3 has the following three features:

1. The SE structure is introduced.

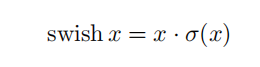

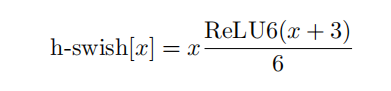

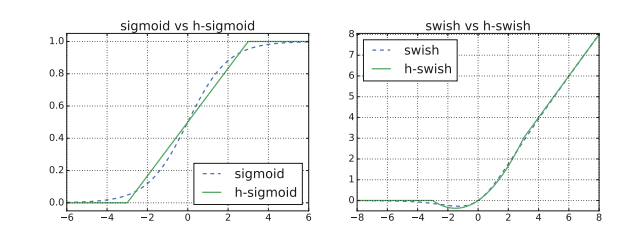

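2. The h-swish activation function is introduced. The authors found that the swish activation, swish(x) = x · sigmoid(x), effectively improves accuracy. However, swish is expensive to compute because of the sigmoid, so they replaced it with the piecewise-linear h-swish(x) = x · ReLU6(x + 3) / 6.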

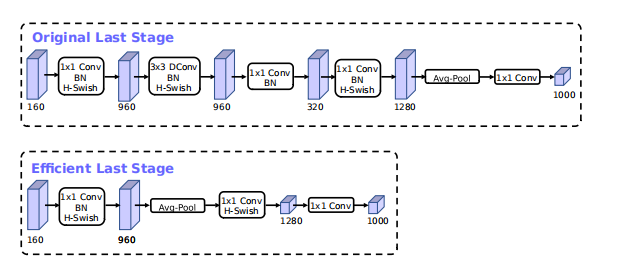

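3. Compared with the last stage of MobileNet V2 ("Original Last Stage"), two layers in the middle are removed: the 3×3 (depthwise) convolution block and the 1×1 (projection) convolution block. This reduces computation without losing accuracy, because the high-dimensional feature space is still kept.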
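A plain-Python sketch of the two activations shows where the cost difference in item 2 comes from. Swish needs an exponential. H-swish uses only an add, a clamp (ReLU6), a multiply, and a divide. The helper names below are hypothetical, and the grid over [-6, 6] is an arbitrary choice for comparing the two curves.

```python
import math


def relu6(x):
    return min(max(x, 0.0), 6.0)


def swish(x):
    # swish(x) = x * sigmoid(x): requires an exponential
    return x / (1.0 + math.exp(-x))


def h_sigmoid(x):
    # ReLU6(x + 3) / 6: piecewise-linear stand-in for sigmoid
    return relu6(x + 3.0) / 6.0


def h_swish(x):
    # h-swish(x) = x * ReLU6(x + 3) / 6
    return x * h_sigmoid(x)


# Compare the two curves on a grid over [-6, 6]
xs = [i / 100 for i in range(-600, 601)]
max_gap = max(abs(swish(x) - h_swish(x)) for x in xs)
print(round(max_gap, 3))  # 0.142
```

The largest gap, about 0.14, occurs at x = ±3. Those are the points where the hard sigmoid's linear segment meets its clamped regions. Elsewhere the two curves stay closer.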

4. Code Implementation (PyTorch)

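```python
import torch
import torch.nn as nn
import torch.nn.functional as F
from torch.nn import init


class hswish(nn.Module):
    """h-swish(x) = x * ReLU6(x + 3) / 6"""
    def forward(self, x):
        # x + 3 is a new tensor, so the in-place ReLU6 does not modify x
        out = x * F.relu6(x + 3, inplace=True) / 6
        return out


class hsigmoid(nn.Module):
    """h-sigmoid(x) = ReLU6(x + 3) / 6, a piecewise-linear approximation of sigmoid"""
    def forward(self, x):
        out = F.relu6(x + 3, inplace=True) / 6
        return out
```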

```python
class SeModule(nn.Module):
    """Squeeze-and-Excitation: reweights channels with a learned gate in [0, 1]."""
    def __init__(self, in_size, reduction=4):
        super(SeModule, self).__init__()
        self.se = nn.Sequential(
            nn.AdaptiveAvgPool2d(1),  # squeeze: (N, C, H, W) -> (N, C, 1, 1)
            nn.Conv2d(in_size, in_size // reduction, kernel_size=1, stride=1, padding=0, bias=False),
            nn.BatchNorm2d(in_size // reduction),
            nn.ReLU(inplace=True),
            nn.Conv2d(in_size // reduction, in_size, kernel_size=1, stride=1, padding=0, bias=False),
            nn.BatchNorm2d(in_size),
            hsigmoid()  # excitation: one weight per channel
        )

    def forward(self, x):
        # scale: the (N, C, 1, 1) weights broadcast over H and W
        return x * self.se(x)
```
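To see how much an SE branch adds on top of a block, the hypothetical helper `se_module_params` counts the learnable parameters of `SeModule` exactly as written here. That is two bias-free 1×1 convs plus two BatchNorm layers. BatchNorm running statistics are buffers, not parameters, so they are not counted.

```python
def se_module_params(in_size, reduction=4):
    """Hypothetical helper: learnable parameters of the SeModule above."""
    hidden = in_size // reduction
    reduce_conv = in_size * hidden  # 1x1 conv, bias=False
    reduce_bn = 2 * hidden          # BatchNorm2d weight + bias
    expand_conv = hidden * in_size  # 1x1 conv, bias=False
    expand_bn = 2 * in_size         # BatchNorm2d weight + bias
    return reduce_conv + reduce_bn + expand_conv + expand_bn


# Channel counts that appear with SeModule in MobileNetV3_Large
for c in (40, 112, 160):
    print(c, se_module_params(c))
```

If PyTorch is installed, `sum(p.numel() for p in SeModule(40).parameters())` should match the value for 40.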

```python
class Block(nn.Module):
    '''expand + depthwise + pointwise'''
    def __init__(self, kernel_size, in_size, expand_size, out_size, nolinear, semodule, stride):
        super(Block, self).__init__()
        self.stride = stride
        self.se = semodule
        # 1x1 expansion conv: in_size -> expand_size
        self.conv1 = nn.Conv2d(in_size, expand_size, kernel_size=1, stride=1, padding=0, bias=False)
        self.bn1 = nn.BatchNorm2d(expand_size)
        self.nolinear1 = nolinear
        # k x k depthwise conv (groups == channels); padding k//2 keeps H, W when stride == 1
        self.conv2 = nn.Conv2d(expand_size, expand_size, kernel_size=kernel_size, stride=stride,
                               padding=kernel_size // 2, groups=expand_size, bias=False)
        self.bn2 = nn.BatchNorm2d(expand_size)
        self.nolinear2 = nolinear  # the same stateless activation module is reused
        # 1x1 linear projection: expand_size -> out_size (no activation afterwards)
        self.conv3 = nn.Conv2d(expand_size, out_size, kernel_size=1, stride=1, padding=0, bias=False)
        self.bn3 = nn.BatchNorm2d(out_size)

        # Residual path, used only when stride == 1; a 1x1 conv matches channels if needed
        self.shortcut = nn.Sequential()
        if stride == 1 and in_size != out_size:
            self.shortcut = nn.Sequential(
                nn.Conv2d(in_size, out_size, kernel_size=1, stride=1, padding=0, bias=False),
                nn.BatchNorm2d(out_size),
            )

    def forward(self, x):
        out = self.nolinear1(self.bn1(self.conv1(x)))
        out = self.nolinear2(self.bn2(self.conv2(out)))
        out = self.bn3(self.conv3(out))
        # This code applies SE to the projected output, so SeModule is sized with out_size.
        # The paper places SE after the depthwise conv instead.
        if self.se is not None:
            out = self.se(out)
        out = out + self.shortcut(x) if self.stride == 1 else out
        return out
```
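To see why the expand + depthwise + pointwise structure is cheap, the sketch below gives a rough estimate of convolution multiply-accumulates (MACs). It counts one `Block` and, for comparison, a single standard k×k convolution with the same input/output channels and stride. The estimate makes several simplifying assumptions:

- It ignores BatchNorm, activations, SE, and the shortcut.
- The function names are hypothetical.
- The example is the fourth `Block` of `MobileNetV3_Large` (5×5, 24 → 72 → 40, stride 2). That block sees a 56×56 input when the network is fed 224×224 images.

```python
def conv_out(size, k, stride):
    # Output size with padding = k // 2, as in Block.conv2
    return (size + 2 * (k // 2) - k) // stride + 1


def block_macs(k, in_size, expand_size, out_size, stride, h, w):
    ho, wo = conv_out(h, k, stride), conv_out(w, k, stride)
    expand = h * w * in_size * expand_size      # 1x1 conv at input resolution
    depthwise = ho * wo * expand_size * k * k   # one k x k filter per channel
    project = ho * wo * expand_size * out_size  # 1x1 conv at output resolution
    return expand + depthwise + project


def standard_conv_macs(k, in_size, out_size, stride, h, w):
    ho, wo = conv_out(h, k, stride), conv_out(w, k, stride)
    return ho * wo * in_size * out_size * k * k


print(block_macs(5, 24, 72, 40, 2, 56, 56))       # Block(5, 24, 72, 40, ..., 2)
print(standard_conv_macs(5, 24, 40, 2, 56, 56))   # plain 5x5 conv, 24 -> 40
```

For this block the estimate is about 9.1M MACs versus 18.8M for the plain 5×5 convolution, even though the block widens internally to 72 channels. The depthwise step alone costs 1/expand_size of a full 5×5 convolution over the same 72 channels.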

```python
class MobileNetV3_Large(nn.Module):
    def __init__(self, num_classes=1000):
        super(MobileNetV3_Large, self).__init__()
        # Stem: 3x3 conv, stride 2
        self.conv1 = nn.Conv2d(3, 16, kernel_size=3, stride=2, padding=1, bias=False)
        self.bn1 = nn.BatchNorm2d(16)
        self.hs1 = hswish()

        # Block(kernel_size, in_size, expand_size, out_size, nolinear, semodule, stride)
        self.bneck = nn.Sequential(
            Block(3, 16, 16, 16, nn.ReLU(inplace=True), None, 1),
            Block(3, 16, 64, 24, nn.ReLU(inplace=True), None, 2),
            Block(3, 24, 72, 24, nn.ReLU(inplace=True), None, 1),
            Block(5, 24, 72, 40, nn.ReLU(inplace=True), SeModule(40), 2),
            Block(5, 40, 120, 40, nn.ReLU(inplace=True), SeModule(40), 1),
            Block(5, 40, 120, 40, nn.ReLU(inplace=True), SeModule(40), 1),
            Block(3, 40, 240, 80, hswish(), None, 2),
            Block(3, 80, 200, 80, hswish(), None, 1),
            Block(3, 80, 184, 80, hswish(), None, 1),
            Block(3, 80, 184, 80, hswish(), None, 1),
            Block(3, 80, 480, 112, hswish(), SeModule(112), 1),
            Block(3, 112, 672, 112, hswish(), SeModule(112), 1),
            Block(5, 112, 672, 160, hswish(), SeModule(160), 1),
            Block(5, 160, 672, 160, hswish(), SeModule(160), 2),
            Block(5, 160, 960, 160, hswish(), SeModule(160), 1),
        )

        # Head: 1x1 conv to 960 channels, global pooling, then two fully connected layers
        self.conv2 = nn.Conv2d(160, 960, kernel_size=1, stride=1, padding=0, bias=False)
        self.bn2 = nn.BatchNorm2d(960)
        self.hs2 = hswish()
        self.linear3 = nn.Linear(960, 1280)
        self.bn3 = nn.BatchNorm1d(1280)  # requires batch size > 1 in training mode
        self.hs3 = hswish()
        self.linear4 = nn.Linear(1280, num_classes)
        self.init_params()

    def init_params(self):
        for m in self.modules():
            if isinstance(m, nn.Conv2d):
                init.kaiming_normal_(m.weight, mode='fan_out')
                if m.bias is not None:
                    init.constant_(m.bias, 0)
            elif isinstance(m, nn.BatchNorm2d):
                init.constant_(m.weight, 1)
                init.constant_(m.bias, 0)
            elif isinstance(m, nn.Linear):
                init.normal_(m.weight, std=0.001)
                if m.bias is not None:
                    init.constant_(m.bias, 0)

    def forward(self, x):
        out = self.hs1(self.bn1(self.conv1(x)))
        out = self.bneck(out)
        out = self.hs2(self.bn2(self.conv2(out)))
        # Global average pooling. F.avg_pool2d(out, 7) assumes the 7x7 map of a 224x224 input;
        # adaptive pooling gives the same result there and also handles other input sizes.
        out = F.adaptive_avg_pool2d(out, 1)
        out = out.view(out.size(0), -1)
        out = self.hs3(self.bn3(self.linear3(out)))
        out = self.linear4(out)
        return out
```
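The stride values in `bneck` determine the size of the final feature map. This plain-Python trace applies the usual output-size formula floor((n + 2p - k) / s) + 1 with p = k // 2, matching the code's `padding=kernel_size//2`. The helper names are hypothetical, and the (kernel, stride) pairs are copied from `MobileNetV3_Large` above.

```python
# (kernel_size, stride) of each Block in MobileNetV3_Large.bneck
LARGE = [(3, 1), (3, 2), (3, 1), (5, 2), (5, 1), (5, 1), (3, 2), (3, 1),
         (3, 1), (3, 1), (3, 1), (3, 1), (5, 1), (5, 2), (5, 1)]


def conv_out(size, k, stride):
    # Output size of a k x k conv with padding k // 2
    return (size + 2 * (k // 2) - k) // stride + 1


def final_resolution(input_size, blocks):
    size = conv_out(input_size, 3, 2)  # stem: 3x3 conv, stride 2, padding 1
    for k, s in blocks:
        size = conv_out(size, k, s)    # the 1x1 convs inside a Block keep the size
    return size


print(final_resolution(224, LARGE))  # 7
print(final_resolution(448, LARGE))  # 14
```

A 224×224 input ends at 7×7, which is exactly what a fixed `F.avg_pool2d(out, 7)` assumes. A 448×448 input ends at 14×14. A 7×7 pool on that map would leave a 2×2 output, and the flattened vector would no longer match `nn.Linear(960, 1280)`. Global pooling with `F.adaptive_avg_pool2d(out, 1)` avoids that dependency on input size.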

```python
class MobileNetV3_Small(nn.Module):
    def __init__(self, num_classes=1000):
        super(MobileNetV3_Small, self).__init__()
        # Stem: 3x3 conv, stride 2
        self.conv1 = nn.Conv2d(3, 16, kernel_size=3, stride=2, padding=1, bias=False)
        self.bn1 = nn.BatchNorm2d(16)
        self.hs1 = hswish()

        # Block(kernel_size, in_size, expand_size, out_size, nolinear, semodule, stride)
        self.bneck = nn.Sequential(
            Block(3, 16, 16, 16, nn.ReLU(inplace=True), SeModule(16), 2),
            Block(3, 16, 72, 24, nn.ReLU(inplace=True), None, 2),
            Block(3, 24, 88, 24, nn.ReLU(inplace=True), None, 1),
            Block(5, 24, 96, 40, hswish(), SeModule(40), 2),
            Block(5, 40, 240, 40, hswish(), SeModule(40), 1),
            Block(5, 40, 240, 40, hswish(), SeModule(40), 1),
            Block(5, 40, 120, 48, hswish(), SeModule(48), 1),
            Block(5, 48, 144, 48, hswish(), SeModule(48), 1),
            Block(5, 48, 288, 96, hswish(), SeModule(96), 2),
            Block(5, 96, 576, 96, hswish(), SeModule(96), 1),
            Block(5, 96, 576, 96, hswish(), SeModule(96), 1),
        )

        # Head: 1x1 conv to 576 channels, global pooling, then two fully connected layers
        self.conv2 = nn.Conv2d(96, 576, kernel_size=1, stride=1, padding=0, bias=False)
        self.bn2 = nn.BatchNorm2d(576)
        self.hs2 = hswish()
        self.linear3 = nn.Linear(576, 1280)
        self.bn3 = nn.BatchNorm1d(1280)  # requires batch size > 1 in training mode
        self.hs3 = hswish()
        self.linear4 = nn.Linear(1280, num_classes)
        self.init_params()

    def init_params(self):
        for m in self.modules():
            if isinstance(m, nn.Conv2d):
                init.kaiming_normal_(m.weight, mode='fan_out')
                if m.bias is not None:
                    init.constant_(m.bias, 0)
            elif isinstance(m, nn.BatchNorm2d):
                init.constant_(m.weight, 1)
                init.constant_(m.bias, 0)
            elif isinstance(m, nn.Linear):
                init.normal_(m.weight, std=0.001)
                if m.bias is not None:
                    init.constant_(m.bias, 0)

    def forward(self, x):
        out = self.hs1(self.bn1(self.conv1(x)))
        out = self.bneck(out)
        out = self.hs2(self.bn2(self.conv2(out)))
        # Global average pooling. F.avg_pool2d(out, 7) assumes the 7x7 map of a 224x224 input;
        # adaptive pooling gives the same result there and also handles other input sizes.
        out = F.adaptive_avg_pool2d(out, 1)
        out = out.view(out.size(0), -1)
        out = self.hs3(self.bn3(self.linear3(out)))
        out = self.linear4(out)
        return out
```
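Both variants end with the same kind of classifier head: a 1×1 conv, BatchNorm, h-swish, pooling, and then two `nn.Linear` layers. The hypothetical helper below counts the head's learnable parameters. It follows the layer sizes in the two classes above and assumes `num_classes=1000`.

```python
def head_params(last_channels, exp_channels, hidden=1280, num_classes=1000):
    """Hypothetical helper: learnable parameters of conv2/bn2/linear3/bn3/linear4."""
    conv2 = last_channels * exp_channels          # 1x1 conv, bias=False
    bn2 = 2 * exp_channels                        # BatchNorm2d weight + bias
    linear3 = exp_channels * hidden + hidden      # nn.Linear with bias
    bn3 = 2 * hidden                              # BatchNorm1d weight + bias
    linear4 = hidden * num_classes + num_classes  # nn.Linear with bias
    return conv2 + bn2 + linear3 + bn3 + linear4


print(head_params(160, 960))  # MobileNetV3_Large head
print(head_params(96, 576))   # MobileNetV3_Small head
```

The two `nn.Linear` layers account for most of these parameters. The 1280 → 1000 classifier is identical in both models.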
