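Let me start by introducing myself: I graduated from Zhejiang University, have worked at big tech companies including Huawei and ByteDance, and am currently a P7 at Alibaba.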

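I know that most programmers who want to improve their skills end up figuring things out on their own. Unsystematic self-study is slow and inefficient, and it is easy to hit a ceiling where your skills stop progressing!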

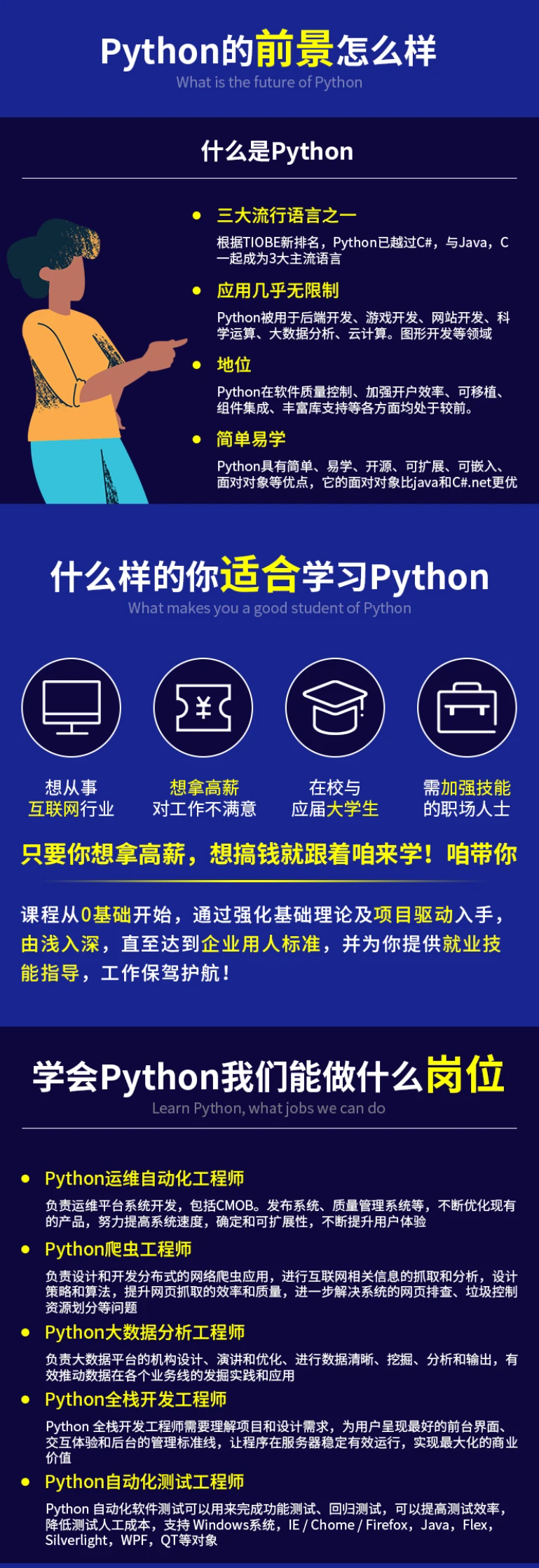

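So I collected and organized a set of "2024 Latest Complete Python Learning Materials". The goal is simple: to help people who want to teach themselves and improve but don't know where to start.

It includes zero-background material for beginners as well as advanced courses for those with 3+ years of experience who want to go deeper. It covers more than 95% of Python topics and is truly systematic!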

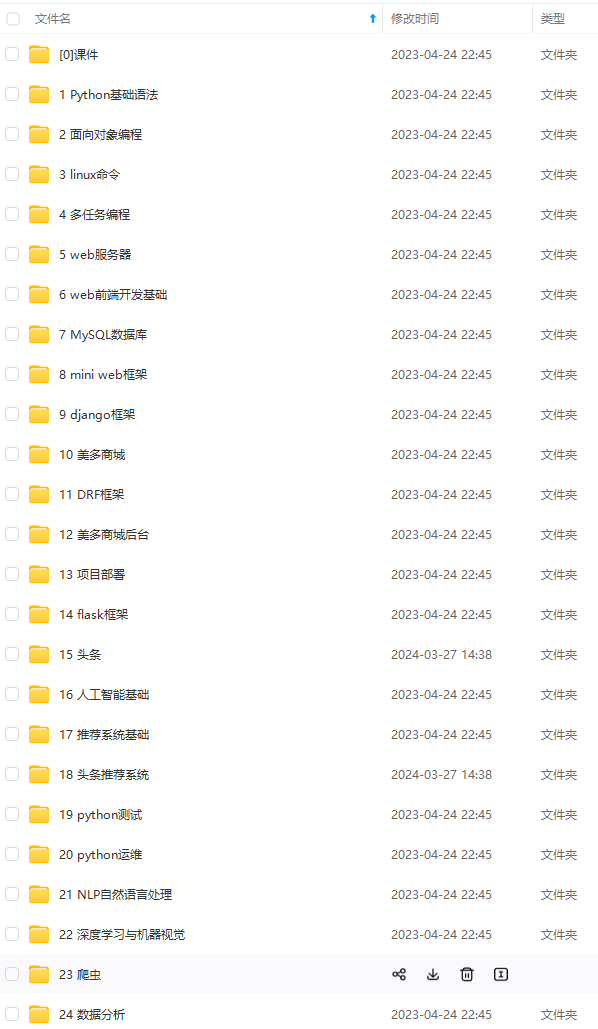

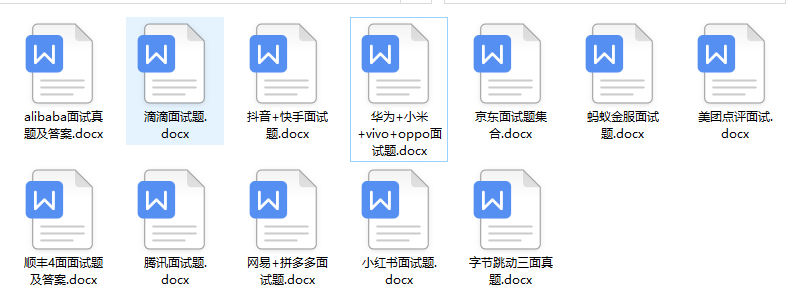

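Since there are a lot of files, only part of the table of contents is shown in the screenshots here. The full set includes big-tech interview experiences, study notes, source-code lecture notes, hands-on projects, a learning roadmap, and lecture videos, and it will keep being updated.

If you need these materials, add me on WeChat to get them: vip1024c (mention Python)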

Main text

```python
            norm_layer=None, cl_norm_layer=None, cross_stage=False):
        super().__init__()

        # Downsample (norm -> strided conv) only when the channel count or resolution changes
        if in_chs != out_chs or stride > 1:
            self.downsample = nn.Sequential(
                norm_layer(in_chs),
                nn.Conv2d(in_chs, out_chs, kernel_size=stride, stride=stride),
            )
        else:
            self.downsample = nn.Identity()

        # Per-block stochastic depth rates; default to no drop-path
        dp_rates = dp_rates or [0.] * depth
        self.blocks = nn.Sequential(*[ConvNeXtBlock(
            dim=out_chs, drop_path=dp_rates[j], ls_init_value=ls_init_value, conv_mlp=conv_mlp,
            norm_layer=norm_layer if conv_mlp else cl_norm_layer)
            for j in range(depth)]
        )

    def forward(self, x):
        x = self.downsample(x)
        x = self.blocks(x)
        return x
```
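A quick way to see what a single `ConvNeXtStage` does to tensor shapes is the pure-Python sketch below. It ignores the batch dimension and models only the downsample branch, because the residual `ConvNeXtBlock`s keep (C, H, W) unchanged. `conv_out_size` and `stage_output_shape` are hypothetical helpers written for illustration, not timm functions.

```python
def conv_out_size(size, kernel, stride, padding=0):
    """Output length of a Conv2d along one spatial axis (dilation 1)."""
    return (size + 2 * padding - kernel) // stride + 1


def stage_output_shape(in_chs, out_chs, stride, height, width):
    """Hypothetical helper: (C, H, W) produced by one ConvNeXtStage.

    Mirrors the branch in ConvNeXtStage.__init__: a norm + Conv2d(kernel_size=stride,
    stride=stride) downsample exists only when the channel count changes or
    stride > 1; otherwise the downsample is nn.Identity. The residual blocks
    that follow preserve the shape, so they are not modelled here.
    """
    if in_chs != out_chs or stride > 1:
        height = conv_out_size(height, kernel=stride, stride=stride)
        width = conv_out_size(width, kernel=stride, stride=stride)
        return (out_chs, height, width)
    return (in_chs, height, width)


# Stage 0 of convnext_tiny: 96 -> 96 channels, stride 1, so the shape is unchanged
print(stage_output_shape(96, 96, 1, 56, 56))
# Stage 1: 96 -> 192 channels, stride 2, so the resolution halves
print(stage_output_shape(96, 192, 2, 56, 56))
```

Because the downsample conv uses `kernel_size == stride` with no padding, it tiles the feature map into non-overlapping patches, so each spatial side is simply divided by the stride.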

```python
class ConvNeXt(nn.Module):
    r""" ConvNeXt
        A PyTorch impl of : A ConvNet for the 2020s - https://arxiv.org/pdf/2201.03545.pdf

    Args:
        in_chans (int): Number of input image channels. Default: 3
        num_classes (int): Number of classes for classification head. Default: 1000
        depths (tuple(int)): Number of blocks at each stage. Default: [3, 3, 9, 3]
        dims (tuple(int)): Feature dimension at each stage. Default: [96, 192, 384, 768]
        drop_rate (float): Head dropout rate
        drop_path_rate (float): Stochastic depth rate. Default: 0.
        ls_init_value (float): Init value for Layer Scale. Default: 1e-6.
        head_init_scale (float): Init scaling value for classifier weights and biases. Default: 1.
    """

    def __init__(
            self, in_chans=3, num_classes=1000, global_pool='avg', output_stride=32, patch_size=4,
            depths=(3, 3, 9, 3), dims=(96, 192, 384, 768), ls_init_value=1e-6, conv_mlp=False,
            head_init_scale=1., head_norm_first=False, norm_layer=None, drop_rate=0., drop_path_rate=0.,
    ):
        super().__init__()
        assert output_stride == 32
        if norm_layer is None:
            norm_layer = partial(LayerNorm2d, eps=1e-6)
            cl_norm_layer = norm_layer if conv_mlp else partial(nn.LayerNorm, eps=1e-6)
        else:
            assert conv_mlp,\
                'If a norm_layer is specified, conv MLP must be used so all norm expect rank-4, channels-first input'
            cl_norm_layer = norm_layer

        self.num_classes = num_classes
        self.drop_rate = drop_rate
        self.feature_info = []

        # NOTE: this stem is a minimal form of ViT PatchEmbed, as used in SwinTransformer w/ patch_size = 4
        self.stem = nn.Sequential(
            nn.Conv2d(in_chans, dims[0], kernel_size=patch_size, stride=patch_size),
            norm_layer(dims[0])
        )

        self.stages = nn.Sequential()
        dp_rates = [x.tolist() for x in torch.linspace(0, drop_path_rate, sum(depths)).split(depths)]
        curr_stride = patch_size
        prev_chs = dims[0]
        stages = []
        # 4 feature resolution stages, each consisting of multiple residual blocks
        for i in range(4):
            stride = 2 if i > 0 else 1
            # FIXME support dilation / output_stride
            curr_stride *= stride
            out_chs = dims[i]
            stages.append(ConvNeXtStage(
                prev_chs, out_chs, stride=stride,
                depth=depths[i], dp_rates=dp_rates[i], ls_init_value=ls_init_value, conv_mlp=conv_mlp,
                norm_layer=norm_layer, cl_norm_layer=cl_norm_layer)
            )
            prev_chs = out_chs
            # NOTE feature_info use currently assumes stage 0 == stride 1, rest are stride 2
            self.feature_info += [dict(num_chs=prev_chs, reduction=curr_stride, module=f'stages.{i}')]
        self.stages = nn.Sequential(*stages)

        self.num_features = prev_chs
        if head_norm_first:
            # norm -> global pool -> fc ordering, like most other nets (not compat with FB weights)
            self.norm_pre = norm_layer(self.num_features)  # final norm layer, before pooling
            self.head = ClassifierHead(self.num_features, num_classes, pool_type=global_pool, drop_rate=drop_rate)
        else:
            # pool -> norm -> fc, the default ConvNeXt ordering (pretrained FB weights)
            self.norm_pre = nn.Identity()
            self.head = nn.Sequential(OrderedDict([
                ('global_pool', SelectAdaptivePool2d(pool_type=global_pool)),
                ('norm', norm_layer(self.num_features)),
                ('flatten', nn.Flatten(1) if global_pool else nn.Identity()),
                ('drop', nn.Dropout(self.drop_rate)),
                ('fc', nn.Linear(self.num_features, num_classes) if num_classes > 0 else nn.Identity())
            ]))

        named_apply(partial(_init_weights, head_init_scale=head_init_scale), self)

    def get_classifier(self):
        return self.head.fc

    def reset_classifier(self, num_classes=0, global_pool='avg'):
        if isinstance(self.head, ClassifierHead):
            # norm -> global pool -> fc
            self.head = ClassifierHead(
                self.num_features, num_classes, pool_type=global_pool, drop_rate=self.drop_rate)
        else:
            # pool -> norm -> fc
            self.head = nn.Sequential(OrderedDict([
                ('global_pool', SelectAdaptivePool2d(pool_type=global_pool)),
                ('norm', self.head.norm),
                ('flatten', nn.Flatten(1) if global_pool else nn.Identity()),
                ('drop', nn.Dropout(self.drop_rate)),
                ('fc', nn.Linear(self.num_features, num_classes) if num_classes > 0 else nn.Identity())
            ]))

    def forward_features(self, x):
        x = self.stem(x)
        x = self.stages(x)
        x = self.norm_pre(x)
        return x

    def forward(self, x):
        x = self.forward_features(x)
        x = self.head(x)
        return x
```
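The stride bookkeeping in `ConvNeXt.__init__` is easy to check by hand. The pure-Python sketch below replays the loop that fills `self.feature_info`, assuming the default `patch_size=4` and the `convnext_tiny` widths. `convnext_feature_info` is a hypothetical helper for illustration, not part of timm.

```python
def convnext_feature_info(dims=(96, 192, 384, 768), patch_size=4):
    """Hypothetical helper replaying the feature_info loop in ConvNeXt.__init__.

    The stem is a patch_size x patch_size conv with stride patch_size,
    stage 0 keeps that stride, and stages 1-3 each downsample by 2.
    """
    info = []
    curr_stride = patch_size
    for i, out_chs in enumerate(dims):
        stride = 2 if i > 0 else 1
        curr_stride *= stride
        info.append(dict(num_chs=out_chs, reduction=curr_stride, module=f'stages.{i}'))
    return info


for entry in convnext_feature_info():
    # spatial size of each stage's output for a 224 x 224 input
    print(entry, 224 // entry['reduction'])
```

With these defaults the reductions come out as 4, 8, 16 and 32, so a 224 x 224 input yields 56, 28, 14 and 7 pixel feature maps, and the final reduction of 32 is what `assert output_stride == 32` expects.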

```python
def _init_weights(module, name=None, head_init_scale=1.0):
    if isinstance(module, nn.Conv2d):
        trunc_normal_(module.weight, std=.02)
        nn.init.constant_(module.bias, 0)
    elif isinstance(module, nn.Linear):
        trunc_normal_(module.weight, std=.02)
        nn.init.constant_(module.bias, 0)
        # Scale the classifier weights and biases by head_init_scale
        if name and 'head.' in name:
            module.weight.data.mul_(head_init_scale)
            module.bias.data.mul_(head_init_scale)
```

```python
def checkpoint_filter_fn(state_dict, model):
    """ Remap FB checkpoints -> timm """
    if 'model' in state_dict:
        state_dict = state_dict['model']
    out_dict = {}
    import re
    for k, v in state_dict.items():
        k = k.replace('downsample_layers.0.', 'stem.')
        k = re.sub(r'stages.([0-9]+).([0-9]+)', r'stages.\1.blocks.\2', k)
        k = re.sub(r'downsample_layers.([0-9]+).([0-9]+)', r'stages.\1.downsample.\2', k)
        k = k.replace('dwconv', 'conv_dw')
        k = k.replace('pwconv', 'mlp.fc')
        k = k.replace('head.', 'head.fc.')
        if k.startswith('norm.'):
            k = k.replace('norm', 'head.norm')
        if v.ndim == 2 and 'head' not in k:
            # Reshape 2-D (Linear-style) weights to whatever shape the model's parameter has
            model_shape = model.state_dict()[k].shape
            v = v.reshape(model_shape)
        out_dict[k] = v
    return out_dict
```
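To see what the renaming rules in `checkpoint_filter_fn` produce, the sketch below applies the same string replacements to a few key names in the style of the FB checkpoints. The keys are illustrative, not read from a real checkpoint, and `remap_key` is a hypothetical helper that leaves out the tensor-reshaping step.

```python
import re


def remap_key(k):
    """Hypothetical helper: the key renames from checkpoint_filter_fn, without the reshape."""
    k = k.replace('downsample_layers.0.', 'stem.')
    k = re.sub(r'stages.([0-9]+).([0-9]+)', r'stages.\1.blocks.\2', k)
    k = re.sub(r'downsample_layers.([0-9]+).([0-9]+)', r'stages.\1.downsample.\2', k)
    k = k.replace('dwconv', 'conv_dw')
    k = k.replace('pwconv', 'mlp.fc')
    k = k.replace('head.', 'head.fc.')
    if k.startswith('norm.'):
        k = k.replace('norm', 'head.norm')
    return k


# Illustrative FB-style key names (not read from a real checkpoint)
fb_keys = [
    'downsample_layers.0.0.weight',  # stem conv
    'downsample_layers.1.1.weight',  # strided conv in front of stage 1
    'stages.2.5.dwconv.weight',      # depthwise conv of block 5 in stage 2
    'stages.2.5.pwconv1.bias',       # first pointwise layer of that block
    'norm.weight',                   # final norm
    'head.weight',                   # classifier
]
for k in fb_keys:
    print(k, '->', remap_key(k))
```

For example, `stages.2.5.dwconv.weight` becomes `stages.2.blocks.5.conv_dw.weight`, and `downsample_layers.1.1.weight` becomes `stages.1.downsample.1.weight`, because timm folds each FB downsample layer into the stage that follows it. The reshape step in the original function appears to cover the `conv_mlp=True` case, where the pointwise layers are 1x1 convs rather than `nn.Linear`.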

```python
def _create_convnext(variant, pretrained=False, **kwargs):
    model = build_model_with_cfg(
        ConvNeXt, variant, pretrained,
        default_cfg=default_cfgs[variant],
        pretrained_filter_fn=checkpoint_filter_fn,
        feature_cfg=dict(out_indices=(0, 1, 2, 3), flatten_sequential=True),
        **kwargs)
    return model
```

```python
@register_model
def convnext_tiny(pretrained=False, **kwargs):
    model_args = dict(depths=(3, 3, 9, 3), dims=(96, 192, 384, 768), **kwargs)
    model = _create_convnext('convnext_tiny', pretrained=pretrained, **model_args)
    return model


@register_model
def convnext_tiny_hnf(pretrained=False, **kwargs):
    model_args = dict(depths=(3, 3, 9, 3), dims=(96, 192, 384, 768), head_norm_first=True, **kwargs)
    model = _create_convnext('convnext_tiny_hnf', pretrained=pretrained, **model_args)
    return model


@register_model
def convnext_small(pretrained=False, **kwargs):
    model_args = dict(depths=[3, 3, 27, 3], dims=[96, 192, 384, 768], **kwargs)
    model = _create_convnext('convnext_small', pretrained=pretrained, **model_args)
    return model


@register_model
def convnext_base(pretrained=False, **kwargs):
    model_args = dict(depths=[3, 3, 27, 3], dims=[128, 256, 512, 1024], **kwargs)
    model = _create_convnext('convnext_base', pretrained=pretrained, **model_args)
    return model


@register_model
def convnext_large(pretrained=False, **kwargs):
    model_args = dict(depths=[3, 3, 27, 3], dims=[192, 384, 768, 1536], **kwargs)
    model = _create_convnext('convnext_large', pretrained=pretrained, **model_args)
    return model


@register_model
def convnext_base_in22ft1k(pretrained=False, **kwargs):
    model_args = dict(depths=[3, 3, 27, 3], dims=[128, 256, 512, 1024], **kwargs)
    model = _create_convnext('convnext_base_in22ft1k', pretrained=pretrained, **model_args)
    return model


@register_model
def convnext_large_in22ft1k(pretrained=False, **kwargs):
    model_args = dict(depths=[3, 3, 27, 3], dims=[192, 384, 768, 1536], **kwargs)
    model = _create_convnext('convnext_large_in22ft1k', pretrained=pretrained, **model_args)
    return model


@register_model
def convnext_xlarge_in22ft1k(pretrained=False, **kwargs):
    model_args = dict(depths=[3, 3, 27, 3], dims=[256, 512, 1024, 2048], **kwargs)
    model = _create_convnext('convnext_xlarge_in22ft1k', pretrained=pretrained, **model_args)
    return model


@register_model
def convnext_base_384_in22ft1k(pretrained=False, **kwargs):
    model_args = dict(depths=[3, 3, 27, 3], dims=[128, 256, 512, 1024], **kwargs)
    model = _create_convnext('convnext_base_384_in22ft1k', pretrained=pretrained, **model_args)
    return model


@register_model
def convnext_large_384_in22ft1k(pretrained=False, **kwargs):
    model_args = dict(depths=[3, 3, 27, 3], dims=[192, 384, 768, 1536], **kwargs)
    model = _create_convnext('convnext_large_384_in22ft1k', pretrained=pretrained, **model_args)
    return model


@register_model
def convnext_xlarge_384_in22ft1k(pretrained=False, **kwargs):
    model_args = dict(depths=[3, 3, 27, 3], dims=[256, 512, 1024, 2048], **kwargs)
    model = _create_convnext('convnext_xlarge_384_in22ft1k', pretrained=pretrained, **model_args)
    return model


@register_model
def convnext_base_in22k(pretrained=False, **kwargs):
    model_args = dict(depths=[3, 3, 27, 3], dims=[128, 256, 512, 1024], **kwargs)
    model = _create_convnext('convnext_base_in22k', pretrained=pretrained, **model_args)
    return model


@register_model
def convnext_large_in22k(pretrained=False, **kwargs):
    model_args = dict(depths=[3, 3, 27, 3], dims=[192, 384, 768, 1536], **kwargs)
    model = _create_convnext('convnext_large_in22k', pretrained=pretrained, **model_args)
    return model


@register_model
def convnext_xlarge_in22k(pretrained=False, **kwargs):
    model_args = dict(depths=[3, 3, 27, 3], dims=[256, 512, 1024, 2048], **kwargs)
    model = _create_convnext('convnext_xlarge_in22k', pretrained=pretrained, **model_args)
    return model
```
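To get a feel for how `depths` and `dims` scale the registered variants, the sketch below counts parameters analytically. It assumes the default `conv_mlp=False` block layout (7x7 depthwise conv, LayerNorm, a 4x MLP and a layer-scale vector, all with biases), the pool -> norm -> fc head and a 1000-class classifier. The function names and the `VARIANTS` table are hypothetical, and no PyTorch is needed.

```python
def block_params(dim, mlp_ratio=4):
    """Parameter count of one ConvNeXt block (assumes conv_mlp=False, layer scale on)."""
    hidden = mlp_ratio * dim
    dw_conv = dim * 7 * 7 + dim      # depthwise 7x7 conv: one 7x7 filter per channel + bias
    norm = 2 * dim                   # LayerNorm weight + bias
    fc1 = dim * hidden + hidden      # pointwise expansion dim -> 4 * dim
    fc2 = hidden * dim + dim         # pointwise projection 4 * dim -> dim
    gamma = dim                      # layer-scale vector
    return dw_conv + norm + fc1 + fc2 + gamma


def convnext_params(depths, dims, in_chans=3, num_classes=1000, patch_size=4):
    """Total parameters: stem + (downsample + blocks) per stage + pool -> norm -> fc head."""
    total = in_chans * dims[0] * patch_size ** 2 + dims[0]  # stem conv weight + bias
    total += 2 * dims[0]                                     # stem norm
    for i, (depth, dim) in enumerate(zip(depths, dims)):
        if i > 0:  # stage 0 has an Identity downsample, so no parameters there
            prev = dims[i - 1]
            total += 2 * prev + prev * dim * 2 * 2 + dim     # downsample norm + 2x2 conv
        total += depth * block_params(dim)
    total += 2 * dims[-1] + dims[-1] * num_classes + num_classes  # head norm + fc
    return total


VARIANTS = {  # (depths, dims) as passed by the register_model functions above
    'tiny': ((3, 3, 9, 3), (96, 192, 384, 768)),
    'small': ((3, 3, 27, 3), (96, 192, 384, 768)),
    'base': ((3, 3, 27, 3), (128, 256, 512, 1024)),
    'large': ((3, 3, 27, 3), (192, 384, 768, 1536)),
    'xlarge': ((3, 3, 27, 3), (256, 512, 1024, 2048)),
}

for name, (depths, dims) in VARIANTS.items():
    print(f'{name:7} {convnext_params(depths, dims) / 1e6:.1f}M')
```

Under those assumptions `convnext_tiny` works out to 28,589,128 parameters (about 28.6M). Variants that share `depths` and `dims` (for example `convnext_base`, `convnext_base_in22ft1k` and `convnext_base_384_in22ft1k`) have the same count for a given `num_classes`; they differ in pretrained weights and default configs.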

Another version:

```python
# Copyright (c) Meta Platforms, Inc. and affiliates.

# All rights reserved.

# This source code is licensed under the license found in the
# LICENSE file in the root directory of this source tree.

import torch
import torch.nn as nn
import torch.nn.functional as F
from timm.models.layers import trunc_normal_, DropPath
from timm.models.registry import register_model


class Block(nn.Module):
    r""" ConvNeXt Block. There are two equivalent implementations:
    (1) DwConv -> LayerNorm (channels_first) -> 1x1 Conv -> GELU -> 1x1 Conv; all in (N, C, H, W)
    (2) DwConv -> Permute to (N, H, W, C); LayerNorm (channels_last) -> Linear -> GELU -> Linear; Permute back
    We use (2) as we find it slightly faster in PyTorch

    Args:
        dim (int): Number of input channels.
        drop_path (float): Stochastic depth rate. Default: 0.0
        layer_scale_init_value (float): Init value for Layer Scale. Default: 1e-6.
    """
    def __init__(self, dim, drop_path=0., layer_scale_init_value=1e-6):
        super().__init__()
        self.dwconv = nn.Conv2d(dim, dim, kernel_size=7, padding=3, groups=dim)  # depthwise conv
        # LayerNorm is the custom class from the same file that also supports data_format="channels_first"
        self.norm = LayerNorm(dim, eps=1e-6)
        self.pwconv1 = nn.Linear(dim, 4 * dim)  # pointwise/1x1 convs, implemented with linear layers
        self.act = nn.GELU()
        self.pwconv2 = nn.Linear(4 * dim, dim)
        self.gamma = nn.Parameter(layer_scale_init_value * torch.ones((dim)),
                                  requires_grad=True) if layer_scale_init_value > 0 else None
        self.drop_path = DropPath(drop_path) if drop_path > 0. else nn.Identity()

    def forward(self, x):
        input = x
        x = self.dwconv(x)
        x = x.permute(0, 2, 3, 1)  # (N, C, H, W) -> (N, H, W, C)
        x = self.norm(x)
        x = self.pwconv1(x)
        x = self.act(x)
        x = self.pwconv2(x)
        if self.gamma is not None:
            x = self.gamma * x
        x = x.permute(0, 3, 1, 2)  # (N, H, W, C) -> (N, C, H, W)

        x = input + self.drop_path(x)
        return x
```
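The Block docstring says the channels-first and channels-last layouts are equivalent. The crux is that LayerNorm (and likewise the 1x1 conv / Linear layers) acts on the channel vector of each pixel independently, so permuting to (H, W, C), normalizing the last axis and permuting back gives the same numbers as normalizing over C in place. The pure-Python sketch below checks this for the normalization step only, assuming no batch dimension and no learnable affine weights; all function names are hypothetical.

```python
import math


def layer_norm(vec, eps=1e-6):
    """Normalize a 1-D list to zero mean / unit variance (no affine weights)."""
    mean = sum(vec) / len(vec)
    var = sum((v - mean) ** 2 for v in vec) / len(vec)
    return [(v - mean) / math.sqrt(var + eps) for v in vec]


def to_channels_last(x):
    """(C, H, W) nested lists -> (H, W, C), like x.permute(0, 2, 3, 1) without the batch dim."""
    C, H, W = len(x), len(x[0]), len(x[0][0])
    return [[[x[c][h][w] for c in range(C)] for w in range(W)] for h in range(H)]


def to_channels_first(x):
    """(H, W, C) -> (C, H, W), like x.permute(0, 3, 1, 2) without the batch dim."""
    H, W, C = len(x), len(x[0]), len(x[0][0])
    return [[[x[h][w][c] for w in range(W)] for h in range(H)] for c in range(C)]


def norm_channels_first(x):
    """Variant (1): normalize over C at every pixel, staying in (C, H, W)."""
    C, H, W = len(x), len(x[0]), len(x[0][0])
    out = [[[0.0] * W for _ in range(H)] for _ in range(C)]
    for h in range(H):
        for w in range(W):
            normed = layer_norm([x[c][h][w] for c in range(C)])
            for c in range(C):
                out[c][h][w] = normed[c]
    return out


def norm_channels_last(x):
    """Variant (2): permute, normalize the last axis, permute back."""
    y = to_channels_last(x)
    y = [[layer_norm(pixel) for pixel in row] for row in y]
    return to_channels_first(y)


x = [[[1.0, 2.0], [3.0, 4.0]],      # channel 0, 2 x 2 pixels
     [[0.5, -1.0], [2.0, 8.0]],     # channel 1
     [[3.0, 3.0], [-2.0, 1.0]]]     # channel 2
a, b = norm_channels_first(x), norm_channels_last(x)
print(all(math.isclose(p, q) for pc, qc in zip(a, b) for pr, qr in zip(pc, qc) for p, q in zip(pr, qr)))
```

Which variant is faster is an implementation detail of the framework; the docstring's choice of (2) is based on the authors' measurement in PyTorch, not on any difference in the math.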

```python
class ConvNeXt(nn.Module):
    r""" ConvNeXt
        A PyTorch impl of : A ConvNet for the 2020s -
          https://arxiv.org/pdf/2201.03545.pdf

    Args:
        in_chans (int): Number of input image channels. Default: 3
        num_classes (int): Number of classes for classification head. Default: 1000
        depths (tuple(int)): Number of blocks at each stage. Default: [3, 3, 9, 3]
        dims (tuple(int)): Feature dimension at each stage. Default: [96, 192, 384, 768]
        drop_path_rate (float): Stochastic depth rate. Default: 0.
        layer_scale_init_value (float): Init value for Layer Scale. Default: 1e-6.
        head_init_scale (float): Init scaling value for classifier weights and biases. Default: 1.
    """
    def __init__(self, in_chans=3, num_classes=1000,
                 depths=[3, 3, 9, 3], dims=[96, 192, 384, 768], drop_path_rate=0.,
                 layer_scale_init_value=1e-6, head_init_scale=1.,
                 ):
        super().__init__()

        self.downsample_layers = nn.ModuleList()  # stem and 3 intermediate downsampling conv layers
        stem = nn.Sequential(
            nn.Conv2d(in_chans, dims[0], kernel_size=4, stride=4),
            LayerNorm(dims[0], eps=1e-6, data_format="channels_first")
        )
        self.downsample_layers.append(stem)
        for i in range(3):
            downsample_layer = nn.Sequential(
                LayerNorm(dims[i], eps=1e-6, data_format="channels_first"),
                nn.Conv2d(dims[i], dims[i+1], kernel_size=2, stride=2),
            )
            self.downsample_layers.append(downsample_layer)

        self.stages = nn.ModuleList()  # 4 feature resolution stages, each consisting of multiple residual blocks
        dp_rates = [x.item() for x in torch.linspace(0, drop_path_rate, sum(depths))]
        cur = 0
        for i in range(4):
            stage = nn.Sequential(
                *[Block(dim=dims[i], drop_path=dp_rates[cur + j],
                        layer_scale_init_value=layer_scale_init_value) for j in range(depths[i])]
            )
            self.stages.append(stage)
            cur += depths[i]

        self.norm = nn.LayerNorm(dims[-1], eps=1e-6)  # final norm layer
        self.head = nn.Linear(dims[-1], num_classes)

        self.apply(self._init_weights)
        self.head.weight.data.mul_(head_init_scale)
        self.head.bias.data.mul_(head_init_scale)

    def _init_weights(self, m):
```
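Both versions give every block a stochastic-depth rate that grows linearly from 0 to `drop_path_rate` across all `sum(depths)` blocks. timm splits the linspace into per-stage chunks with `.split(depths)`, while the Meta version keeps one flat list and indexes it with the running offset `cur`. The pure-Python sketch below shows that the two indexing schemes yield the same schedule; `linspace` is a hypothetical stand-in for `torch.linspace` so the example has no dependencies, and 0.1 is just an illustrative rate (the code's default is 0).

```python
def linspace(start, end, steps):
    """Pure-Python stand-in for torch.linspace (both ends included)."""
    if steps == 1:
        return [float(start)]
    step = (end - start) / (steps - 1)
    return [start + i * step for i in range(steps)]


def dp_rates_meta(depths, drop_path_rate):
    """Meta version: one flat list, read per stage with a running offset `cur`."""
    flat = linspace(0, drop_path_rate, sum(depths))
    rates, cur = [], 0
    for depth in depths:
        rates.append([flat[cur + j] for j in range(depth)])
        cur += depth
    return rates


def dp_rates_timm(depths, drop_path_rate):
    """timm-style: the same flat list cut into per-stage chunks, like Tensor.split(depths)."""
    flat = linspace(0, drop_path_rate, sum(depths))
    rates, start = [], 0
    for depth in depths:
        rates.append(flat[start:start + depth])
        start += depth
    return rates


depths = (3, 3, 9, 3)
rates = dp_rates_meta(depths, 0.1)
print(rates == dp_rates_timm(depths, 0.1))   # same schedule either way
print(rates[0][0], round(rates[-1][-1], 6))  # 0.0 at the first block, 0.1 at the last
```

Deeper blocks are therefore dropped more often during training, while the first block of stage 0 is never dropped.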

最后

不知道你们用的什么环境,我一般都是用的Python3.6环境和pycharm解释器,没有软件,或者没有资料,没人解答问题,都可以免费领取(包括今天的代码),过几天我还会做个视频教程出来,有需要也可以领取~

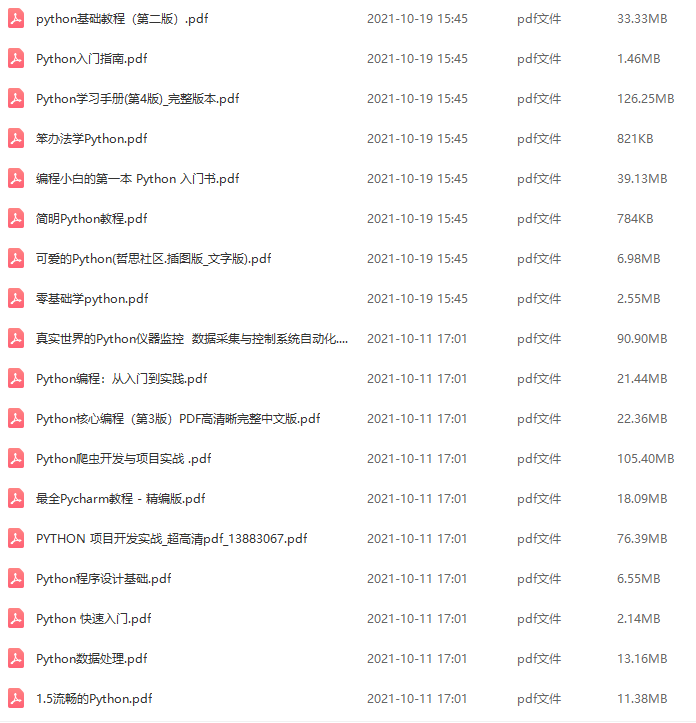

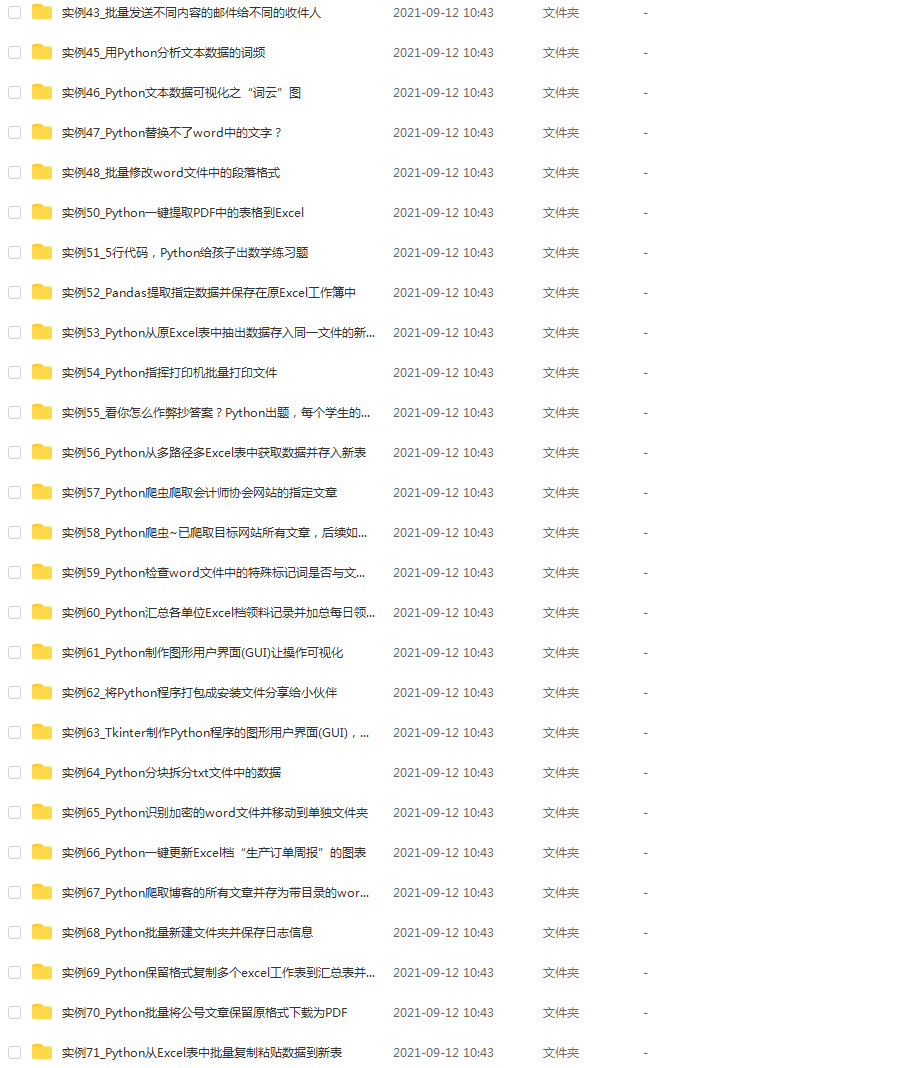

给大家准备的学习资料包括但不限于:

Python 环境、pycharm编辑器/永久激活/翻译插件

python 零基础视频教程

Python 界面开发实战教程

Python 爬虫实战教程

Python 数据分析实战教程

python 游戏开发实战教程

Python 电子书100本

Python 学习路线规划

网上学习资料一大堆,但如果学到的知识不成体系,遇到问题时只是浅尝辄止,不再深入研究,那么很难做到真正的技术提升。

需要这份系统化的资料的朋友,可以添加V获取:vip1024c (备注python)

一个人可以走的很快,但一群人才能走的更远!不论你是正从事IT行业的老鸟或是对IT行业感兴趣的新人,都欢迎加入我们的的圈子(技术交流、学习资源、职场吐槽、大厂内推、面试辅导),让我们一起学习成长!
