FPFH(Fast Point Feature Histogram)是由PFH(Point Feature Histogram)改进而来。

以下代码调用PCL库版本为1.12.1。

基于FPFH进行粗配准:

#include <iostream>

#include <pcl/io/pcd_io.h>

#include <pcl/point_types.h>

#include <pcl/features/normal_3d.h>

#include <pcl/features/impl/normal_3d.hpp>

#include <pcl/features/fpfh.h>

#include <pcl/features/impl/fpfh.hpp>

#include <pcl/registration/icp.h>

#include <pcl/filters/voxel_grid.h>

#include <pcl/visualization/pcl_visualizer.h>

#include <pcl/registration/sample_consensus_prerejective.h>

#include <pcl/point_representation.h>

int main(int argc, char** argv) {

if (argc != 3) {

std::cout << "Usage: feature_extraction_and_registration <source_cloud.pcd> <target_cloud.pcd>" << std::endl;

return -1;

}

// 加载源点云和目标点云

pcl::PointCloud<pcl::PointXYZ>::Ptr source_cloud(new pcl::PointCloud<pcl::PointXYZ>);

pcl::PointCloud<pcl::PointXYZ>::Ptr target_cloud(new pcl::PointCloud<pcl::PointXYZ>);

pcl::io::loadPCDFile(argv[1], *source_cloud);

pcl::io::loadPCDFile(argv[2], *target_cloud);

// 体素化降采样

pcl::PointCloud<pcl::PointXYZ>::Ptr source_downsampled(new pcl::PointCloud<pcl::PointXYZ>);

pcl::PointCloud<pcl::PointXYZ>::Ptr target_downsampled(new pcl::PointCloud<pcl::PointXYZ>);

pcl::VoxelGrid<pcl::PointXYZ> voxel_grid;

voxel_grid.setLeafSize(0.05f, 0.05f, 0.05f);

voxel_grid.setInputCloud(source_cloud);

voxel_grid.filter(*source_downsampled);

voxel_grid.setInputCloud(target_cloud);

voxel_grid.filter(*target_downsampled);

// 计算表面法向量

pcl::NormalEstimation<pcl::PointXYZ, pcl::Normal> normal_estimation;

pcl::search::KdTree<pcl::PointXYZ>::Ptr tree(new pcl::search::KdTree<pcl::PointXYZ>);

normal_estimation.setSearchMethod(tree);

normal_estimation.setRadiusSearch(0.1);

pcl::PointCloud<pcl::Normal>::Ptr source_normals(new pcl::PointCloud<pcl::Normal>);

normal_estimation.setInputCloud(source_downsampled);

normal_estimation.compute(*source_normals);

pcl::PointCloud<pcl::Normal>::Ptr target_normals(new pcl::PointCloud<pcl::Normal>);

normal_estimation.setInputCloud(target_downsampled);

normal_estimation.compute(*target_normals);

// 计算FPFH特征

pcl::FPFHEstimation<pcl::PointXYZ, pcl::Normal, pcl::FPFHSignature33> fpfh_estimation;

fpfh_estimation.setSearchMethod(tree);

fpfh_estimation.setRadiusSearch(0.2);

pcl::PointCloud<pcl::FPFHSignature33>::Ptr source_features(new pcl::PointCloud<pcl::FPFHSignature33>);

fpfh_estimation.setInputCloud(source_downsampled);

fpfh_estimation.setInputNormals(source_normals);

fpfh_estimation.compute(*source_features);

pcl::PointCloud<pcl::FPFHSignature33>::Ptr target_features(new pcl::PointCloud<pcl::FPFHSignature33>);

fpfh_estimation.setInputCloud(target_downsampled);

fpfh_estimation.setInputNormals(target_normals);

fpfh_estimation.compute(*target_features);

// 使用样本共识预射进行粗配准

pcl::SampleConsensusPrerejective<pcl::PointXYZ, pcl::PointXYZ, pcl::FPFHSignature33> sac_pr;

sac_pr.setInputSource(source_downsampled);

sac_pr.setSourceFeatures(source_features);

sac_pr.setInputTarget(target_downsampled);

sac_pr.setTargetFeatures(target_features);

sac_pr.setMaximumIterations(1000);

sac_pr.setNumberOfSamples(3);

sac_pr.setCorrespondenceRandomness(5);

sac_pr.setSimilarityThreshold(0.9);

sac_pr.setMaxCorrespondenceDistance(0.2);

pcl::PointCloud<pcl::PointXYZ>::Ptr coarse_registered_cloud(new pcl::PointCloud<pcl::PointXYZ>);

sac_pr.align(*coarse_registered_cloud);

if (sac_pr.hasConverged()) {

std::cout << "Coarse registration has converged. Transformation matrix:" << std::endl;

std::cout << sac_pr.getFinalTransformation() << std::endl;

}

else {

std::cout << "Coarse registration did not converge." << std::endl;

return -1;

}

// 可视化结果

pcl::visualization::PCLVisualizer viewer("Cloud Registration");

viewer.addPointCloud(source_cloud, "original_source");

viewer.setPointCloudRenderingProperties(pcl::visualization::PCL_VISUALIZER_COLOR, 1, 0, 0, "original_source");

viewer.addPointCloud(target_cloud, "target");

viewer.setPointCloudRenderingProperties(pcl::visualization::PCL_VISUALIZER_COLOR, 0, 1, 0, "target");

viewer.addPointCloud(transformed_original_source, "transformed_original");

viewer.setPointCloudRenderingProperties(pcl::visualization::PCL_VISUALIZER_COLOR, 0, 0, 1, "transformed_original");

while (!viewer.wasStopped()) {

viewer.spinOnce(100);

}

return 0;

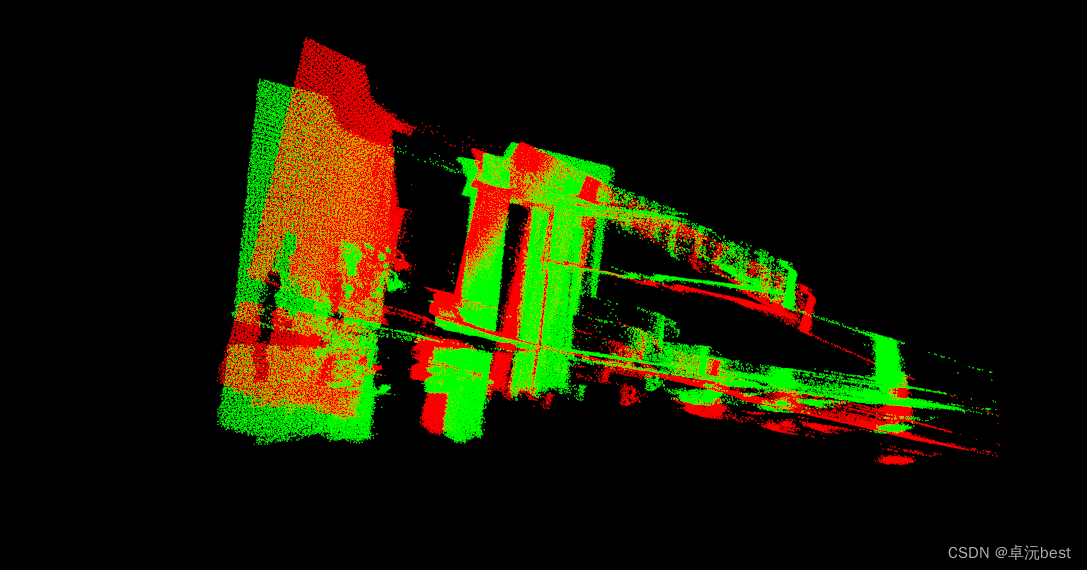

}配准前:

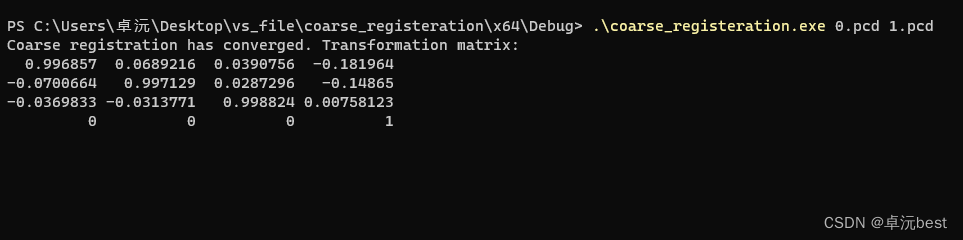

运行程序:

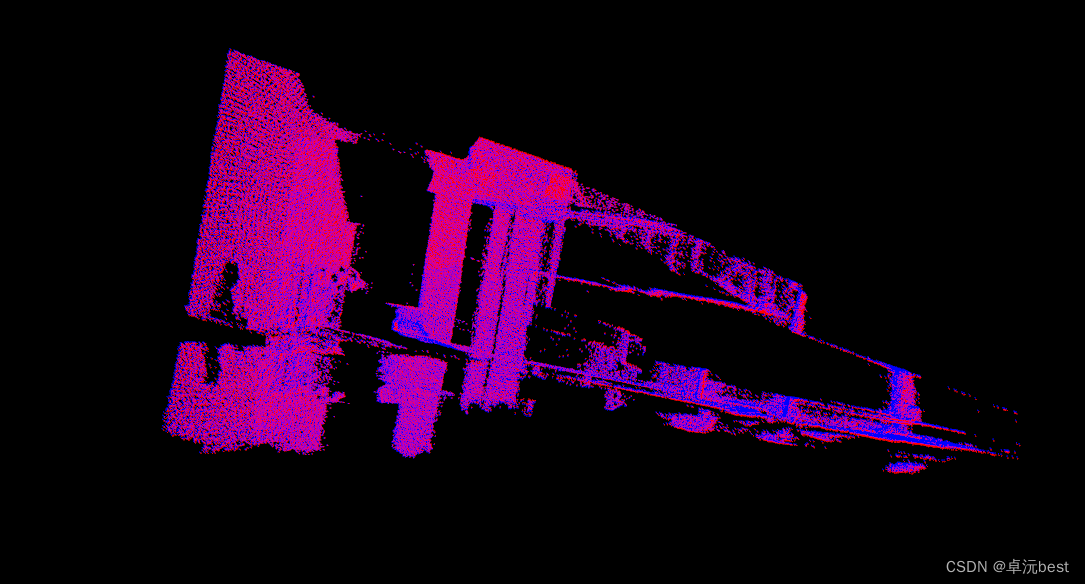

.\coarse_registeration.exe 0.pcd 1.pcd配准后:

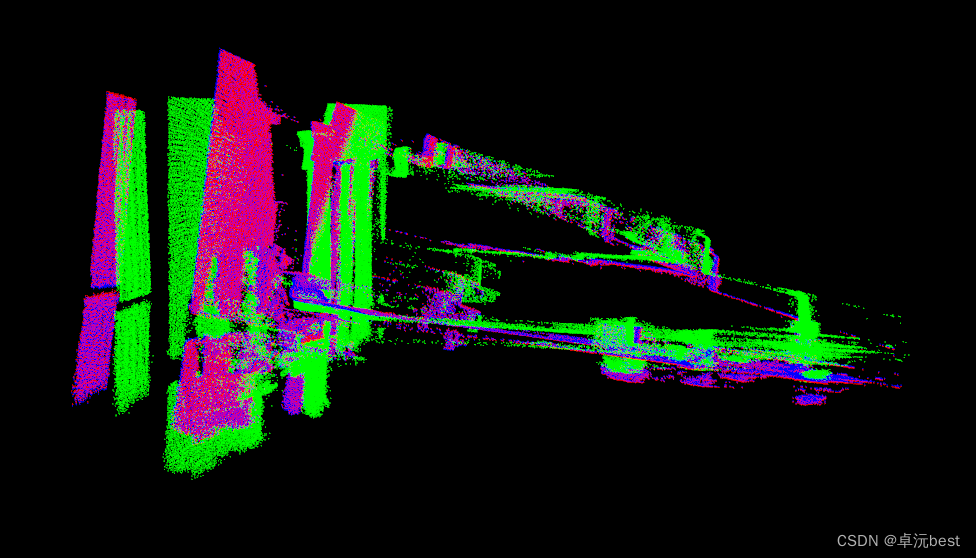

前后对比:

这种一般是需要两段配准,粗配准为精配准提供有利条件,提高后续精配准精度,下面加一个ICP(Iterative Closest Point)精配准看看 。

//插到粗配准程序下面

// Perform registration using ICP (Iterative Closest Point) algorithm(精配准)

pcl::IterativeClosestPoint<pcl::PointXYZ, pcl::PointXYZ> icp;

icp.setInputSource(coarse_registered_cloud);

icp.setInputTarget(target_downsampled);

icp.setMaxCorrespondenceDistance(0.2);

icp.setMaximumIterations(50);

icp.setTransformationEpsilon(1e-8);

icp.setEuclideanFitnessEpsilon(1);

pcl::PointCloud<pcl::PointXYZ>::Ptr registered_cloud(new pcl::PointCloud<pcl::PointXYZ>);

icp.align(*registered_cloud);

if (icp.hasConverged()) {

std::cout << "ICP has converged. Score: " << icp.getFitnessScore() << std::endl;

std::cout << "ICP transformation: " << std::endl << icp.getFinalTransformation() << std::endl;

}

else {

std::cout << "ICP did not converge." << std::endl;

return -1;

}结果对比:

467

467

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?