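Machine translation is the automatic translation of a piece of text from one language into another. Because a text sequence does not necessarily have the same length in different languages, we use machine translation as an example to introduce applications of the encoder-decoder architecture and the attention mechanism.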

1 读取和预处理数据

1.1 实验介绍

机器翻译所需数据集d2lzh_pytorch.tar,使用代码:

!tar -xf d2lzh_pytorch.tar对数据集进行解压。

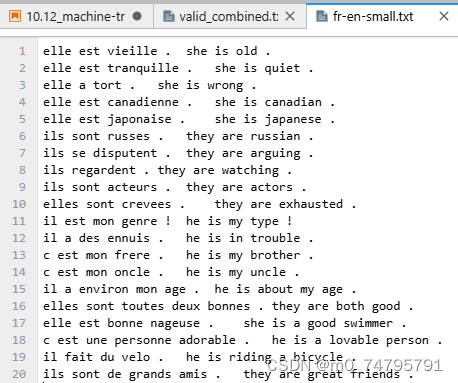

同时使用一个小型的法语-英语数据集 fr-en-small.txt,

1.2 数据预处理

我们先定义一些特殊符号。其中“<pad>”(padding)符号用来添加在较短序列后,直到每个序列等长,而“<bos>”和“<eos>”符号分别表示序列的开始和结束。

    import collections
    import os
    import io
    import math
    import sys

    import torch
    from torch import nn
    import torch.nn.functional as F
    import torchtext.vocab as Vocab
    import torch.utils.data as Data

    # sys.path.append("..")
    import d2lzh_pytorch as d2l

    PAD, BOS, EOS = '<pad>', '<bos>', '<eos>'
    os.environ["CUDA_VISIBLE_DEVICES"] = "0"
    device = torch.device('cuda' if torch.cuda.is_available() else 'cpu')

    print(torch.__version__, device)

Output:

    1.5.0 cpu

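Next we define two helper functions that preprocess the data read later.

    # Record all tokens of a sequence in all_tokens (used later to build the vocabulary),
    # append EOS and then PAD until the sequence has length max_seq_len,
    # and store the padded sequence in all_seqs.
    def process_one_seq(seq_tokens, all_tokens, all_seqs, max_seq_len):
        all_tokens.extend(seq_tokens)
        seq_tokens += [EOS] + [PAD] * (max_seq_len - len(seq_tokens) - 1)
        all_seqs.append(seq_tokens)

    # Build the vocabulary from all tokens, convert every sequence to token indices,
    # and pack the result into a Tensor.
    # Note: Vocab(counter, specials=...) is the older torchtext API that matches the
    # torch 1.5.0 environment printed above.
    def build_data(all_tokens, all_seqs):
        vocab = Vocab.Vocab(collections.Counter(all_tokens),
                            specials=[PAD, BOS, EOS])
        indices = [[vocab.stoi[w] for w in seq] for seq in all_seqs]
        return vocab, torch.tensor(indices)

In the fr-en-small.txt dataset, each line holds a French sentence and its English counterpart, separated by '\t'. When reading the data, we append the "<eos>" token to the end of each sentence and, where necessary, add "<pad>" tokens so that every sequence has length max_seq_len. We build separate vocabularies for the French and the English words, so French indices and English indices are independent of each other.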
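The padding step described above can be tried in isolation. The following is a minimal sketch using only plain Python lists; the helper name `pad_tokens` and the sentence pair are illustrative assumptions, not part of the original code or necessarily of the dataset.

```python
PAD, EOS = '<pad>', '<eos>'

def pad_tokens(tokens, max_seq_len):
    """Return tokens + EOS, followed by PAD until the length is max_seq_len.

    Hypothetical helper: unlike process_one_seq it returns a new list
    instead of modifying its argument in place.
    """
    return tokens + [EOS] + [PAD] * (max_seq_len - len(tokens) - 1)

# One tab-separated line in the same layout as fr-en-small.txt (illustrative pair).
line = "elle est vieille .\tshe is old ."
src, tgt = line.rstrip().split('\t')

src_padded = pad_tokens(src.split(' '), 7)
tgt_padded = pad_tokens(tgt.split(' '), 7)
print(src_padded)
print(tgt_padded)
```

Both sequences end up with exactly 7 entries: the original 4 tokens, one "<eos>", and two "<pad>" tokens.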

    def read_data(max_seq_len):
        # "in" and "out" are short for input and output
        in_tokens, out_tokens, in_seqs, out_seqs = [], [], [], []
        with io.open('fr-en-small.txt', encoding='utf-8') as f:
            lines = f.readlines()
        for line in lines:
            in_seq, out_seq = line.rstrip().split('\t')
            in_seq_tokens, out_seq_tokens = in_seq.split(' '), out_seq.split(' ')
            if max(len(in_seq_tokens), len(out_seq_tokens)) > max_seq_len - 1:
                continue  # skip samples that would exceed max_seq_len once EOS is added
            process_one_seq(in_seq_tokens, in_tokens, in_seqs, max_seq_len)
            process_one_seq(out_seq_tokens, out_tokens, out_seqs, max_seq_len)
        in_vocab, in_data = build_data(in_tokens, in_seqs)
        out_vocab, out_data = build_data(out_tokens, out_seqs)
        return in_vocab, out_vocab, Data.TensorDataset(in_data, out_data)

We set the maximum sequence length to 7 and look at the first sample that was read. It contains a sequence of French token indices and a sequence of English token indices.

    max_seq_len = 7
    in_vocab, out_vocab, dataset = read_data(max_seq_len)
    dataset[0]

Output:

    (tensor([ 5, 4, 45, 3, 2, 0, 0]), tensor([ 8, 4, 27, 3, 2, 0, 0]))

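2 Encoder-Decoder with Attention

We use an encoder-decoder with an attention mechanism to translate a short piece of French into English. The implementation of the model is described below.

2.1 Encoder

In the encoder, the token indices of the input language are passed through an embedding layer to obtain token representations, which are then fed into a multi-layer gated recurrent unit (GRU). As mentioned in the concise implementation of recurrent neural networks, a PyTorch nn.GRU instance returns both the output and the multi-layer hidden state of the final time step after the forward computation. The output here is the hidden state of the last hidden layer at every time step; it does not involve any output-layer computation. The attention mechanism uses these outputs as keys and values.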

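    class Encoder(nn.Module):
        def __init__(self, vocab_size, embed_size, num_hiddens, num_layers,
                     drop_prob=0, **kwargs):
            super(Encoder, self).__init__(**kwargs)
            self.embedding = nn.Embedding(vocab_size, embed_size)
            self.rnn = nn.GRU(embed_size, num_hiddens, num_layers, dropout=drop_prob)

        def forward(self, inputs, state):
            # inputs has shape (batch size, time steps). After the embedding, swap the
            # batch and time-step dimensions: (seq_len, batch, input_size)
            embedding = self.embedding(inputs.long()).permute(1, 0, 2)
            return self.rnn(embedding, state)

        def begin_state(self):
            return None

Below we create a minibatch of sequence inputs with batch size 4 and 7 time steps. The GRU has 2 hidden layers and 16 hidden units. After the forward computation, the encoder returns an output of shape (time steps, batch size, hidden units). The multi-layer hidden state of the GRU at the final time step has shape (hidden layers, batch size, hidden units). For a GRU, state is a single element, the hidden state; with a long short-term memory (LSTM), state is a tuple of two elements, the hidden state and the memory cell.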

    encoder = Encoder(vocab_size=10, embed_size=8, num_hiddens=16, num_layers=2)
    output, state = encoder(torch.zeros((4, 7)), encoder.begin_state())
    output.shape, state.shape  # the GRU state is h, while the LSTM state is a tuple (h, c)

Output:

    (torch.Size([7, 4, 16]), torch.Size([2, 4, 16]))

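2.2 Attention Mechanism

We implement the function $a$ defined in the section on attention mechanisms: the inputs are concatenated and passed through a multilayer perceptron with a single hidden layer. The input of the hidden layer is the concatenation of the decoder hidden state with the encoder hidden state at each time step, and tanh is used as the activation function. The output layer has a single output. Neither Linear instance uses a bias, so the model computes $a(\boldsymbol{s}, \boldsymbol{h}) = \boldsymbol{v}^\top \tanh(\boldsymbol{W}_s \boldsymbol{s} + \boldsymbol{W}_h \boldsymbol{h})$. The length of the vector $\boldsymbol{v}$ in the definition of $a$ is a hyperparameter, namely attention_size.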

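    def attention_model(input_size, attention_size):
        model = nn.Sequential(nn.Linear(input_size, attention_size, bias=False),
                              nn.Tanh(),
                              nn.Linear(attention_size, 1, bias=False))
        return model

The inputs of the attention mechanism are the query, the keys, and the values. We assume the encoder and the decoder have the same number of hidden units. The query is the decoder hidden state at the previous time step, with shape (batch size, hidden units). The keys and the values are both the encoder hidden states at all time steps, with shape (time steps, batch size, hidden units). The attention mechanism returns the context variable for the current time step, with shape (batch size, hidden units).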

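    def attention_forward(model, enc_states, dec_state):
        """
        enc_states: (time steps, batch size, hidden units)
        dec_state: (batch size, hidden units)
        """
        # Broadcast the decoder hidden state to the shape of the encoder hidden states,
        # then concatenate the two along the feature dimension
        dec_states = dec_state.unsqueeze(dim=0).expand_as(enc_states)
        enc_and_dec_states = torch.cat((enc_states, dec_states), dim=2)
        e = model(enc_and_dec_states)  # shape: (time steps, batch size, 1)
        alpha = F.softmax(e, dim=0)  # softmax over the time-step dimension
        return (alpha * enc_states).sum(dim=0)  # return the context variable

In the example below, the encoder has 10 time steps, the batch size is 4, and both the encoder and the decoder have 8 hidden units. The attention mechanism returns a minibatch of context vectors, each as long as the number of encoder hidden units, so the output has shape (4, 8).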

    seq_len, batch_size, num_hiddens = 10, 4, 8
    model = attention_model(2*num_hiddens, 10)
    enc_states = torch.zeros((seq_len, batch_size, num_hiddens))
    dec_state = torch.zeros((batch_size, num_hiddens))
    attention_forward(model, enc_states, dec_state).shape

Output:

    torch.Size([4, 8])
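To see what the softmax-weighted sum does for a single example, here is a small sketch in plain Python, without PyTorch. The function names `softmax` and `context_vector` and the toy numbers are assumptions made for illustration; the scores stand in for the outputs of the attention model at each time step.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def context_vector(scores, enc_states):
    """Weighted average of encoder states.

    scores: one attention score per time step.
    enc_states: one hidden-state vector (list of floats) per time step.
    """
    alpha = softmax(scores)
    dim = len(enc_states[0])
    return [sum(a * h[j] for a, h in zip(alpha, enc_states)) for j in range(dim)]

# Three time steps, two hidden units (toy values).
enc_states = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]

uniform = context_vector([0.0, 0.0, 0.0], enc_states)   # equal scores: plain average
peaked = context_vector([0.0, 0.0, 20.0], enc_states)   # one dominant score
print(uniform, peaked)
```

With equal scores the context vector is the plain average of the encoder states; when one score dominates, the context vector is almost identical to the corresponding encoder state.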

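2.3 Decoder with Attention

We use the encoder hidden state at the final time step directly as the initial hidden state of the decoder. This requires the recurrent networks of the encoder and the decoder to have the same number of hidden layers and hidden units.

In the forward computation of the decoder, we first compute the context vector for the current time step with the attention mechanism introduced above. Because the decoder input consists of token indices of the output language, we pass the input through an embedding layer and concatenate the resulting representation with the context vector along the feature dimension. The concatenated result and the hidden state of the previous time step are fed to the GRU to compute the output and hidden state of the current time step. Finally, a fully connected layer transforms the output into predictions over the output vocabulary, with shape (batch size, output vocabulary size).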

    class Decoder(nn.Module):
        def __init__(self, vocab_size, embed_size, num_hiddens, num_layers,
                     attention_size, drop_prob=0):
            super(Decoder, self).__init__()
            self.embedding = nn.Embedding(vocab_size, embed_size)
            self.attention = attention_model(2*num_hiddens, attention_size)
            # The GRU input is the attention output c concatenated with the embedded
            # input, so its size is num_hiddens + embed_size
            self.rnn = nn.GRU(num_hiddens + embed_size, num_hiddens,
                              num_layers, dropout=drop_prob)
            self.out = nn.Linear(num_hiddens, vocab_size)

        def forward(self, cur_input, state, enc_states):
            """
            cur_input shape: (batch, )
            state shape: (num_layers, batch, num_hiddens)
            """
            # Compute the context vector with the attention mechanism,
            # using the hidden state of the top layer as the query
            c = attention_forward(self.attention, enc_states, state[-1])
            # Concatenate the embedded input and the context vector along the feature
            # dimension: (batch size, num_hiddens + embed_size)
            input_and_c = torch.cat((self.embedding(cur_input), c), dim=1)
            # Add a time-step dimension of size 1 to the concatenated input
            output, state = self.rnn(input_and_c.unsqueeze(0), state)
            # Remove the time-step dimension; output shape: (batch size, output vocabulary size)
            output = self.out(output).squeeze(dim=0)
            return output, state

        def begin_state(self, enc_state):
            # Use the encoder hidden state at the final time step directly as the
            # initial hidden state of the decoder
            return enc_state

3 Training the Model

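We first implement the batch_loss function, which computes the loss of a minibatch. The decoder input at the first time step is the special token BOS. After that, the decoder input at each time step is the token of the sample's output sequence at the previous time step, that is, teacher forcing. In addition, as in the implementation in Section 10.3 (implementation of word2vec), we use a mask variable here to keep padding tokens from affecting the loss.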
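The effect of the mask can be sketched for a single sequence in plain Python. This is an illustrative assumption-laden sketch, not the tensor code used for training: the function name `masked_mean_loss` and the per-step loss values are made up, and the mask is a single float rather than a vector over the batch.

```python
PAD, EOS = '<pad>', '<eos>'

def masked_mean_loss(step_losses, labels):
    """Average the per-step losses up to and including EOS, ignoring PAD steps."""
    mask, total, count = 1.0, 0.0, 0.0
    for loss_t, y in zip(step_losses, labels):
        total += mask * loss_t   # padded steps contribute 0
        count += mask            # count only unmasked steps
        if y == EOS:             # everything after EOS is padding
            mask = 0.0
    return total / count

labels = ['they', 'are', 'watching', '.', EOS, PAD, PAD]
losses = [0.5, 0.25, 0.25, 0.5, 0.5, 9.0, 9.0]   # large losses on PAD are ignored
result = masked_mean_loss(losses, labels)
print(result)
```

The step whose label is EOS still counts toward the loss because the mask is switched off only after that step has been accumulated, so the average here is taken over five steps.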

    def batch_loss(encoder, decoder, X, Y, loss):
        batch_size = X.shape[0]
        enc_state = encoder.begin_state()
        enc_outputs, enc_state = encoder(X, enc_state)
        # Initialize the decoder hidden state
        dec_state = decoder.begin_state(enc_state)
        # The decoder input at the first time step is BOS
        dec_input = torch.tensor([out_vocab.stoi[BOS]] * batch_size)
        # The mask ignores the loss on padding labels (PAD); it starts as all ones
        mask, num_not_pad_tokens = torch.ones(batch_size,), 0
        l = torch.tensor([0.0])
        for y in Y.permute(1, 0):  # Y shape: (batch, seq_len)
            dec_output, dec_state = decoder(dec_input, dec_state, enc_outputs)
            l = l + (mask * loss(dec_output, y)).sum()
            dec_input = y  # teacher forcing: feed the ground-truth token
            # Exercise variant without teacher forcing:
            # dec_input = dec_output.argmax(dim=-1)
            num_not_pad_tokens += mask.sum().item()
            # Everything after EOS is PAD, so once EOS is seen the mask stays 0
            mask = mask * (y != out_vocab.stoi[EOS]).float()
        return l / num_not_pad_tokens

In the training function, we need to update the model parameters of the encoder and the decoder together.

def train(encoder, decoder, dataset, lr, batch_size, num_epochs):

enc_optimizer = torch.optim.Adam(encoder.parameters(), lr=lr)

dec_optimizer = torch.optim.Adam(decoder.parameters(), lr=lr)

loss = nn.CrossEntropyLoss(reduction='none')

data_iter = Data.DataLoader(dataset, batch_size, shuffle=True)

for epoch in range(num_epochs):

l_sum = 0.0

for X, Y in data_iter:

enc_optimizer.zero_grad()

dec_optimizer.zero_grad()

l = batch_loss(encoder, decoder, X, Y, loss)

l.backward()

enc_optimizer.step()

dec_optimizer.step()

l_sum += l.item()

if (epoch + 1) % 10 == 0:

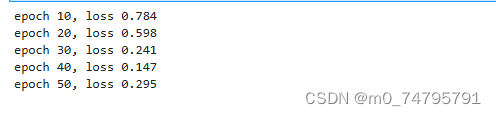

print("epoch %d, loss %.3f" % (epoch + 1, l_sum / len(data_iter)))接下来,创建模型实例并设置超参数。然后,我们就可以训练模型了。

    embed_size, num_hiddens, num_layers = 64, 64, 2
    attention_size, drop_prob, lr, batch_size, num_epochs = 10, 0.5, 0.01, 2, 50
    encoder = Encoder(len(in_vocab), embed_size, num_hiddens, num_layers,
                      drop_prob)
    decoder = Decoder(len(out_vocab), embed_size, num_hiddens, num_layers,
                      attention_size, drop_prob)
    train(encoder, decoder, dataset, lr, batch_size, num_epochs)

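4 Predicting Variable-Length Sequences

In the section on beam search we introduced three methods for generating the decoder output at each time step. Here we implement the simplest one, greedy search.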

    def translate(encoder, decoder, input_seq, max_seq_len):
        in_tokens = input_seq.split(' ')
        in_tokens += [EOS] + [PAD] * (max_seq_len - len(in_tokens) - 1)
        enc_input = torch.tensor([[in_vocab.stoi[tk] for tk in in_tokens]])  # batch=1
        enc_state = encoder.begin_state()
        enc_output, enc_state = encoder(enc_input, enc_state)
        dec_input = torch.tensor([out_vocab.stoi[BOS]])
        dec_state = decoder.begin_state(enc_state)
        output_tokens = []
        for _ in range(max_seq_len):
            dec_output, dec_state = decoder(dec_input, dec_state, enc_output)
            pred = dec_output.argmax(dim=1)
            pred_token = out_vocab.itos[int(pred.item())]
            if pred_token == EOS:  # the output sequence is complete once EOS is predicted
                break
            else:
                output_tokens.append(pred_token)
                dec_input = pred
        return output_tokens

Let us run a quick test of the model. For the French input "ils regardent .", the English translation should be "they are watching .".

    input_seq = 'ils regardent .'
    translate(encoder, decoder, input_seq, max_seq_len)

Output:

    ['they', 'are', 'watching', '.']
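The greedy loop inside translate can also be looked at without a neural network. The sketch below is an illustration under stated assumptions: `greedy_decode` is a hypothetical helper, and `toy_table` is a hand-written table of next-token probabilities that stands in for the decoder.

```python
EOS = '<eos>'

def greedy_decode(step_fn, start_token, max_len):
    """Pick the most probable next token at every step until EOS or max_len.

    step_fn(prev_token) must return a dict mapping tokens to probabilities.
    """
    tokens, prev = [], start_token
    for _ in range(max_len):
        probs = step_fn(prev)
        prev = max(probs, key=probs.get)   # greedy choice: argmax over the vocabulary
        if prev == EOS:
            break
        tokens.append(prev)
    return tokens

# Hand-written next-token distributions (toy values, not model outputs).
toy_table = {
    '<bos>': {'they': 0.6, 'we': 0.3, EOS: 0.1},
    'they': {'are': 0.7, 'were': 0.2, EOS: 0.1},
    'are': {'watching': 0.5, 'here': 0.4, EOS: 0.1},
    'watching': {'.': 0.8, EOS: 0.2},
    '.': {EOS: 0.9, '.': 0.1},
}

result = greedy_decode(lambda prev: toy_table[prev], '<bos>', max_len=7)
print(result)
```

Greedy search commits to the single best token at each step, which is cheap but does not guarantee the most probable sequence overall.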

5 评价翻译结果

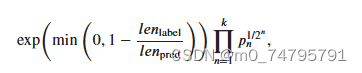

评价机器翻译结果通常使用BLEU(Bilingual Evaluation Understudy)[1]。对于模型预测序列中任意的子序列,BLEU考察这个子序列是否出现在标签序列中。

具体来说,设词数为𝑛𝑛的子序列的精度为𝑝𝑛𝑝𝑛。它是预测序列与标签序列匹配词数为𝑛𝑛的子序列的数量与预测序列中词数为𝑛𝑛的子序列的数量之比。举个例子,假设标签序列为𝐴𝐴、𝐵𝐵、𝐶𝐶、𝐷𝐷、𝐸𝐸、𝐹𝐹,预测序列为𝐴𝐴、𝐵𝐵、𝐵𝐵、𝐶𝐶、𝐷𝐷,那么𝑝1 = 4/5, 𝑝2 = 3/4, 𝑝3 = 1/3, 𝑝4 = 0。设𝑙𝑒𝑛_label和𝑙𝑒𝑛_pred分别为标签序列和预测序列的词数,那么,BLEU的定义为

其中𝑘是我们希望匹配的子序列的最大词数。可以看到当预测序列和标签序列完全一致时,BLEU为1。

因为匹配较长子序列比匹配较短子序列更难,BLEU对匹配较长子序列的精度赋予了更大权重。例如,当𝑝_𝑛固定在0.5时,随着𝑛的增大,0.5^(1/2) ≈ 0.7, 0.5^(1/4) ≈ 0.84, 0.5^(1/8) ≈ 0.92, 0.5^(1/16) ≈ 0.960。另外,模型预测较短序列往往会得到较高𝑝_𝑛值。因此,上式中连乘项前面的系数是为了惩罚较短的输出而设的。举个例子,当𝑘=2时,假设标签序列为𝐴、𝐵、𝐶、𝐷、𝐸、𝐹,而预测序列为𝐴、𝐵。虽然𝑝1=𝑝2=1,但惩罚系数exp(1−6/2) ≈ 0.14 ,因此BLEU也接近0.14。
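The precisions quoted for the $A$, $B$, $B$, $C$, $D$ example and the penalty factor can be checked with a few lines of plain Python. This is a small sketch; the helper name `ngram_precision` is an assumption introduced for illustration.

```python
import collections
import math

def ngram_precision(pred, label, n):
    """Return (matches, total) for n-grams of pred matched against label.

    Each label n-gram can be matched at most as often as it occurs in label.
    """
    label_counts = collections.Counter(
        tuple(label[i:i + n]) for i in range(len(label) - n + 1))
    total = len(pred) - n + 1
    matches = 0
    for i in range(total):
        gram = tuple(pred[i:i + n])
        if label_counts[gram] > 0:
            matches += 1
            label_counts[gram] -= 1
    return matches, total

label = list('ABCDEF')
pred = list('ABBCD')
precisions = [ngram_precision(pred, label, n) for n in range(1, 5)]
print(precisions)

# Penalty factor for the short prediction A, B against the six-word label.
penalty = math.exp(min(0, 1 - 6 / 2))
print(round(penalty, 2))
```

The second $B$ in the prediction is not counted for $p_1$ because the label contains only one $B$, which is why $p_1$ is 4/5 rather than 5/5.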

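The BLEU computation is implemented below.

    def bleu(pred_tokens, label_tokens, k):
        len_pred, len_label = len(pred_tokens), len(label_tokens)
        # Penalty factor for predictions shorter than the label
        score = math.exp(min(0, 1 - len_label / len_pred))
        for n in range(1, k + 1):
            num_matches, label_subs = 0, collections.defaultdict(int)
            # Count every n-gram of the label. Joining with a space keeps token
            # boundaries, so ('ab', 'c') and ('a', 'bc') are not confused.
            for i in range(len_label - n + 1):
                label_subs[' '.join(label_tokens[i: i + n])] += 1
            # Each label n-gram can be matched at most as often as it occurs
            for i in range(len_pred - n + 1):
                if label_subs[' '.join(pred_tokens[i: i + n])] > 0:
                    num_matches += 1
                    label_subs[' '.join(pred_tokens[i: i + n])] -= 1
            score *= math.pow(num_matches / (len_pred - n + 1), math.pow(0.5, n))
        return score

Next, we define a helper function that prints the result.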

    def score(input_seq, label_seq, k):
        pred_tokens = translate(encoder, decoder, input_seq, max_seq_len)
        label_tokens = label_seq.split(' ')
        print('bleu %.3f, predict: %s' % (bleu(pred_tokens, label_tokens, k),
                                          ' '.join(pred_tokens)))

A correct prediction gets a score of 1.

    score('ils regardent .', 'they are watching .', k=2)

Output:

    bleu 1.000, predict: they are watching .

    score('ils sont canadienne .', 'they are canadian .', k=2)

Output:

    bleu 0.658, predict: they are actors .

实例:

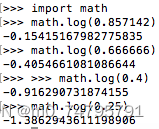

译文(Candidate) Going to play basketball this afternoon ?

参考答案(Reference) Going to play basketball in the afternoon ?

译文gram长度:7 参考答案gram长度:8

先看1-gram,除了this这个单词没有命中,其他都命中了,因此:

P1 = 6/7 = 0.85714...

其他gram以此类推:

P2 = 4/6 = 0.6666..

P3 = 2/5 = 0.4

P4 = 1/4 = 0.25

再计算logPn,这里用python自带的:

BLEU = 0.867 * e^((P1 + P2 + P3 + P4)/4) = 0.867*0.4889 = 0.4238
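The arithmetic of this worked example can be reproduced with the standard library. The sketch below assumes whitespace tokenization and uniform 1/4 weights, as in the example; the helper name `precision` is hypothetical.

```python
import math

candidate = 'Going to play basketball this afternoon ?'.split()
reference = 'Going to play basketball in the afternoon ?'.split()

def precision(cand, ref, n):
    """Fraction of candidate n-grams found in the reference (each used once)."""
    ref_grams = [tuple(ref[i:i + n]) for i in range(len(ref) - n + 1)]
    total = len(cand) - n + 1
    hits = 0
    for i in range(total):
        gram = tuple(cand[i:i + n])
        if gram in ref_grams:
            ref_grams.remove(gram)   # a reference n-gram can be matched only once
            hits += 1
    return hits / total

precisions = [precision(candidate, reference, n) for n in range(1, 5)]
brevity_penalty = math.exp(min(0, 1 - len(reference) / len(candidate)))
bleu_score = brevity_penalty * math.exp(sum(math.log(p) for p in precisions) / 4)

print([round(p, 4) for p in precisions])
print(round(brevity_penalty, 3), round(bleu_score, 4))
```

Taking the exponential of the mean log precision is the same as taking the geometric mean of P1 to P4, which is why a single zero precision would drive the whole score to zero.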

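6 Summary

- The encoder-decoder architecture and the attention mechanism can be applied to machine translation.

- BLEU can be used to evaluate translation results.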

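7 Exercises

- If the encoder and the decoder differ in the number of hidden units or layers, how can we improve the initialization of the decoder hidden state?

We can still initialize the decoder from the encoder's final hidden state, but the state must first be mapped to the decoder's number of layers and hidden units, for example with an additional linear layer (optionally followed by a nonlinearity) or another suitable transformation.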
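One possible way to realize such a mapping is sketched below. It is only an illustration under assumptions: the class name `StateBridge`, the choice of a single linear layer with tanh, and the layer sizes are all hypothetical and not part of the original code.

```python
import torch
from torch import nn

class StateBridge(nn.Module):
    """Hypothetical helper: maps the encoder's final state to a decoder initial state."""

    def __init__(self, enc_layers, enc_hiddens, dec_layers, dec_hiddens):
        super().__init__()
        self.dec_layers, self.dec_hiddens = dec_layers, dec_hiddens
        self.proj = nn.Linear(enc_layers * enc_hiddens, dec_layers * dec_hiddens)

    def forward(self, enc_state):
        # enc_state: (enc_layers, batch, enc_hiddens)
        batch = enc_state.shape[1]
        # Flatten all encoder layers per sample: (batch, enc_layers * enc_hiddens)
        flat = enc_state.permute(1, 0, 2).reshape(batch, -1)
        out = torch.tanh(self.proj(flat))
        # Reshape to (dec_layers, batch, dec_hiddens), the layout nn.GRU expects
        out = out.view(batch, self.dec_layers, self.dec_hiddens)
        return out.permute(1, 0, 2).contiguous()

# Encoder: 2 layers x 16 units; decoder: 3 layers x 32 units (toy sizes).
bridge = StateBridge(enc_layers=2, enc_hiddens=16, dec_layers=3, dec_hiddens=32)
dec_state = bridge(torch.zeros(2, 4, 16))
print(dec_state.shape)
```

In the decoder, begin_state could call such a module instead of returning enc_state unchanged; its parameters would then be trained together with the rest of the model.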

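- In training, replace teacher forcing with using the decoder output at the previous time step as the decoder input at the current time step. Does the result change?

The decoder is autoregressive in both cases; what changes is the input it sees during training. Without teacher forcing, the decoder is trained on its own predictions, which matches the conditions at prediction time and reduces the mismatch between training and inference. However, early in training most predictions are wrong, so errors compound across time steps and training usually converges more slowly and less stably. A common compromise is to choose between the two at each step with some probability.

- Try training the model on a larger translation dataset, such as WMT [2] or the Tatoeba Project [3].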

Download and extract the WMT news-commentary corpus:

    !mkdir -p WMT  # tar -C needs the target directory to exist
    !wget http://www.statmt.org/wmt14/training-parallel-nc-v9.tgz
    !tar -xf training-parallel-nc-v9.tgz -C WMT  # extracted under WMT/training/

Output (abridged):

    --2024-06-16 07:47:34-- http://www.statmt.org/wmt14/training-parallel-nc-v9.tgz
    HTTP request sent, awaiting response... 301 Moved Permanently
    Location: https://www.statmt.org/wmt14/training-parallel-nc-v9.tgz [following]
    HTTP request sent, awaiting response... 200 OK
    Length: 80418416 (77M) [application/x-gzip]
    Saving to: ‘training-parallel-nc-v9.tgz.1’
    2024-06-16 07:47:46 (7.11 MB/s) - ‘training-parallel-nc-v9.tgz.1’ saved [80418416/80418416]

Download and extract the Tatoeba Project sentence pairs:

    !wget http://www.manythings.org/anki/fra-eng.zip
    !unzip fra-eng.zip -d "Tatoeba Project"  # extracted into "Tatoeba Project/"

Output (abridged):

    --2024-06-16 07:52:43-- http://www.manythings.org/anki/fra-eng.zip
    HTTP request sent, awaiting response... 200 OK
    Length: 7943074 (7.6M) [application/zip]
    Saving to: ‘fra-eng.zip’
    2024-06-16 07:52:46 (2.66 MB/s) - ‘fra-eng.zip’ saved [7943074/7943074]
    Archive: fra-eng.zip
    inflating: Tatoeba Project/_about.txt
    inflating: Tatoeba Project/fra.txt

Install spaCy and the tokenizer models:

    !pip install spacy
    !python -m spacy download en_core_web_sm
    !python -m spacy download fr_core_news_sm  # needed for the French tokenizer loaded below

Output (abridged):

    Installing collected packages: en-core-web-sm
    Successfully installed en-core-web-sm-3.6.0
    ✔ Download and installation successful
    You can now load the package via spacy.load('en_core_web_sm')

    import spacy

    # Load the English model
    try:
        spacy_en = spacy.load('en_core_web_sm')
        print("English model loaded successfully")
    except OSError:
        print("Failed to load English model")

    # Load the French model
    try:
        spacy_fr = spacy.load('fr_core_news_sm')
        print("French model loaded successfully")
    except OSError:
        print("Failed to load French model")

Output:

    English model loaded successfully
    French model loaded successfully

Next, train a model on the WMT translation dataset:

    import os
    import random

    import torch
    from torch import nn
    from torch.optim import Adam
    # Legacy torchtext API: these imports work with torchtext <= 0.8. In 0.9 to 0.11 the
    # same classes live under torchtext.legacy, and later releases removed them.
    from torchtext.datasets import TranslationDataset
    from torchtext.data import Field, BucketIterator

    # Tokenizers (spacy_en and spacy_fr were loaded above)
    def tokenize_en(text):
        return [tok.text for tok in spacy_en.tokenizer(text)]

    def tokenize_fr(text):
        return [tok.text for tok in spacy_fr.tokenizer(text)]

    # Fields: English is the source and French the target. <sos>/<eos> are added so
    # that trg[0] is a start token, which Seq2Seq.forward below relies on.
    SRC = Field(tokenize=tokenize_en, lower=True, include_lengths=True,
                init_token='<sos>', eos_token='<eos>')
    TRG = Field(tokenize=tokenize_fr, lower=True, include_lengths=True,
                init_token='<sos>', eos_token='<eos>')

    # Data paths
    data_path = "WMT/training/news-commentary-v9.fr-en"
    train_file_en = f"{data_path}.en"
    train_file_fr = f"{data_path}.fr"

    # Check that the dataset files exist
    if not (os.path.exists(train_file_en) and os.path.exists(train_file_fr)):
        raise FileNotFoundError(f"Dataset files not found in the specified path: {data_path}")

    # Split the data into train, valid, and test files
    def split_data(en_file, fr_file, split_ratio=(0.8, 0.1, 0.1)):
        with open(en_file, 'r', encoding='utf-8') as f:
            en_lines = f.readlines()
        with open(fr_file, 'r', encoding='utf-8') as f:
            fr_lines = f.readlines()

        data = list(zip(en_lines, fr_lines))
        random.shuffle(data)
        total_len = len(data)
        train_end = int(total_len * split_ratio[0])
        valid_end = train_end + int(total_len * split_ratio[1])

        def write_split(pairs, en_output, fr_output):
            with open(en_output, 'w', encoding='utf-8') as en_out:
                en_out.writelines([pair[0] for pair in pairs])
            with open(fr_output, 'w', encoding='utf-8') as fr_out:
                fr_out.writelines([pair[1] for pair in pairs])

        write_split(data[:train_end], 'train.en', 'train.fr')
        write_split(data[train_end:valid_end], 'valid.en', 'valid.fr')
        write_split(data[valid_end:], 'test.en', 'test.fr')

    split_data(train_file_en, train_file_fr)

    # Load the datasets
    train_data, valid_data, test_data = TranslationDataset.splits(
        path="", train='train', validation='valid', test='test',
        exts=('.en', '.fr'), fields=(SRC, TRG))

    # Build the vocabularies
    SRC.build_vocab(train_data, min_freq=2)
    TRG.build_vocab(train_data, min_freq=2)

    class Encoder(nn.Module):
        def __init__(self, input_dim, emb_dim, hidden_dim, n_layers, dropout):
            super().__init__()
            self.embedding = nn.Embedding(input_dim, emb_dim)
            self.rnn = nn.LSTM(emb_dim, hidden_dim, n_layers, dropout=dropout)
            self.dropout = nn.Dropout(dropout)

        def forward(self, src):
            embedded = self.dropout(self.embedding(src))
            outputs, (hidden, cell) = self.rnn(embedded)
            return hidden, cell

    class Decoder(nn.Module):
        def __init__(self, output_dim, emb_dim, hidden_dim, n_layers, dropout):
            super().__init__()
            self.embedding = nn.Embedding(output_dim, emb_dim)
            self.rnn = nn.LSTM(emb_dim, hidden_dim, n_layers, dropout=dropout)
            self.fc_out = nn.Linear(hidden_dim, output_dim)
            self.dropout = nn.Dropout(dropout)

        def forward(self, input, hidden, cell):
            input = input.unsqueeze(0)  # add a time-step dimension of size 1
            embedded = self.dropout(self.embedding(input))
            output, (hidden, cell) = self.rnn(embedded, (hidden, cell))
            prediction = self.fc_out(output.squeeze(0))
            return prediction, hidden, cell

    class Seq2Seq(nn.Module):
        def __init__(self, encoder, decoder, device):
            super().__init__()
            self.encoder = encoder
            self.decoder = decoder
            self.device = device

        def forward(self, src, trg, teacher_forcing_ratio=0.5):
            batch_size = trg.shape[1]
            trg_len = trg.shape[0]
            trg_vocab_size = self.decoder.fc_out.out_features
            outputs = torch.zeros(trg_len, batch_size, trg_vocab_size).to(self.device)
            hidden, cell = self.encoder(src)
            input = trg[0, :]  # the <sos> tokens
            for t in range(1, trg_len):
                output, hidden, cell = self.decoder(input, hidden, cell)
                outputs[t] = output
                # Use teacher forcing with probability teacher_forcing_ratio
                teacher_force = random.random() < teacher_forcing_ratio
                top1 = output.argmax(1)
                input = trg[t] if teacher_force else top1
            return outputs

    # Model components
    INPUT_DIM = len(SRC.vocab)
    OUTPUT_DIM = len(TRG.vocab)
    ENC_EMB_DIM = 256
    DEC_EMB_DIM = 256
    HID_DIM = 512
    N_LAYERS = 2
    ENC_DROPOUT = 0.5
    DEC_DROPOUT = 0.5

    enc = Encoder(INPUT_DIM, ENC_EMB_DIM, HID_DIM, N_LAYERS, ENC_DROPOUT)
    dec = Decoder(OUTPUT_DIM, DEC_EMB_DIM, HID_DIM, N_LAYERS, DEC_DROPOUT)

    # Initialize the model and the optimizer
    device = torch.device("cuda" if torch.cuda.is_available() else "cpu")
    model = Seq2Seq(enc, dec, device).to(device)
    optimizer = Adam(model.parameters())

    # Loss function; padding positions are ignored
    criterion = nn.CrossEntropyLoss(ignore_index=TRG.vocab.stoi[TRG.pad_token])

    # Data iterators
    BATCH_SIZE = 32
    train_iterator, valid_iterator, test_iterator = BucketIterator.splits(
        (train_data, valid_data, test_data),
        batch_size=BATCH_SIZE, device=device,
        sort_within_batch=True, sort_key=lambda x: len(x.src))

    # Training function
    def train(model, iterator, optimizer, criterion, clip):
        model.train()
        epoch_loss = 0
        for i, batch in enumerate(iterator):
            src, src_len = batch.src  # include_lengths=True yields (tensor, lengths)
            trg, trg_len = batch.trg
            optimizer.zero_grad()
            output = model(src, trg)
            output_dim = output.shape[-1]
            output = output[1:].view(-1, output_dim)  # drop the <sos> position
            trg = trg[1:].view(-1)
            loss = criterion(output, trg)
            loss.backward()
            torch.nn.utils.clip_grad_norm_(model.parameters(), clip)
            optimizer.step()
            epoch_loss += loss.item()
        return epoch_loss / len(iterator)

    # Train the model
    N_EPOCHS = 10
    CLIP = 1
    for epoch in range(N_EPOCHS):
        train_loss = train(model, train_iterator, optimizer, criterion, CLIP)
        print(f"Epoch: {epoch+1:02} | Train Loss: {train_loss:.3f}")

    # Save the model
    torch.save(model.state_dict(), "model.pt")

Then train a model on the Tatoeba Project dataset:

    # The imports, tokenizers, Encoder, Decoder, Seq2Seq, train, device, and the
    # hyperparameters (ENC_EMB_DIM ... CLIP) are reused from the WMT script above.

    # Fields; include_lengths=True keeps batch.src a (tensor, lengths) pair,
    # which is what train and evaluate unpack.
    SRC = Field(tokenize=tokenize_en, lower=True, include_lengths=True,
                init_token='<sos>', eos_token='<eos>')
    TRG = Field(tokenize=tokenize_fr, lower=True, include_lengths=True,
                init_token='<sos>', eos_token='<eos>')

    # Load the data
    data_path = 'Tatoeba Project/fra.txt'

    def load_data(file_path, split_ratio=(0.8, 0.1, 0.1)):
        with open(file_path, 'r', encoding='utf-8') as f:
            lines = f.readlines()
        # Columns are tab-separated; only the first two (English, French) are used
        pairs = [line.strip().split('\t') for line in lines]
        random.shuffle(pairs)
        total_len = len(pairs)
        train_end = int(total_len * split_ratio[0])
        valid_end = train_end + int(total_len * split_ratio[1])
        return pairs[:train_end], pairs[train_end:valid_end], pairs[valid_end:]

    train_pairs, valid_pairs, test_pairs = load_data(data_path)

    # Save the splits as parallel .en/.fr files
    def save_data(data, file_prefix):
        with open(f'{file_prefix}.en', 'w', encoding='utf-8') as en_file:
            en_file.writelines([pair[0] + '\n' for pair in data])
        with open(f'{file_prefix}.fr', 'w', encoding='utf-8') as fr_file:
            fr_file.writelines([pair[1] + '\n' for pair in data])

    save_data(train_pairs, 'train')
    save_data(valid_pairs, 'valid')
    save_data(test_pairs, 'test')

    # Load the splits. TranslationDataset reads the .en/.fr pairs written above;
    # the original TabularDataset call looked for a single file named 'train'.
    train_data, valid_data, test_data = TranslationDataset.splits(
        path='', train='train', validation='valid', test='test',
        exts=('.en', '.fr'), fields=(SRC, TRG))

    # Build the vocabularies
    SRC.build_vocab(train_data, min_freq=2)
    TRG.build_vocab(train_data, min_freq=2)

    # Model, optimizer, and loss for the new vocabularies
    INPUT_DIM = len(SRC.vocab)
    OUTPUT_DIM = len(TRG.vocab)
    enc = Encoder(INPUT_DIM, ENC_EMB_DIM, HID_DIM, N_LAYERS, ENC_DROPOUT)
    dec = Decoder(OUTPUT_DIM, DEC_EMB_DIM, HID_DIM, N_LAYERS, DEC_DROPOUT)
    model = Seq2Seq(enc, dec, device).to(device)
    optimizer = Adam(model.parameters())
    criterion = nn.CrossEntropyLoss(ignore_index=TRG.vocab.stoi[TRG.pad_token])

    # Data iterators
    train_iterator, valid_iterator, test_iterator = BucketIterator.splits(
        (train_data, valid_data, test_data),
        batch_size=BATCH_SIZE, device=device,
        sort_within_batch=True, sort_key=lambda x: len(x.src))

    # Evaluation function
    def evaluate(model, iterator, criterion):
        model.eval()
        epoch_loss = 0
        with torch.no_grad():
            for i, batch in enumerate(iterator):
                src, src_len = batch.src
                trg, trg_len = batch.trg
                output = model(src, trg, 0)  # turn off teacher forcing
                output_dim = output.shape[-1]
                output = output[1:].view(-1, output_dim)
                trg = trg[1:].view(-1)
                loss = criterion(output, trg)
                epoch_loss += loss.item()
        return epoch_loss / len(iterator)

    # Train the model and keep the checkpoint with the best validation loss
    best_valid_loss = float('inf')
    for epoch in range(N_EPOCHS):
        train_loss = train(model, train_iterator, optimizer, criterion, CLIP)
        valid_loss = evaluate(model, valid_iterator, criterion)
        if valid_loss < best_valid_loss:
            best_valid_loss = valid_loss
            torch.save(model.state_dict(), 'tatoeba-model.pt')
        print(f"Epoch: {epoch+1:02} | Train Loss: {train_loss:.3f} | Val. Loss: {valid_loss:.3f}")

Because the 希冀 platform does not support GPUs, the training process cannot be shown here.

8 References

[1] Papineni, K., Roukos, S., Ward, T., & Zhu, W. J. (2002, July). BLEU: a method for automatic evaluation of machine translation. In Proceedings of the 40th annual meeting on association for computational linguistics (pp. 311-318). Association for Computational Linguistics.

[2] WMT. Translation Task - ACL 2014 Ninth Workshop on Statistical Machine Translation

[3] Tatoeba Project. Tab-delimited Bilingual Sentence Pairs from the Tatoeba Project (Good for Anki and Similar Flashcard Applications)
