References: https://blog.csdn.net/mn_kw/article/details/79913786

https://blog.csdn.net/weixin_37051000/article/details/78587370

How the Gini importance of features reported by a decision tree is computed: https://blog.csdn.net/DKY10/article/details/84843864, https://www.jianshu.com/p/cfd7e2d385da

- Principle

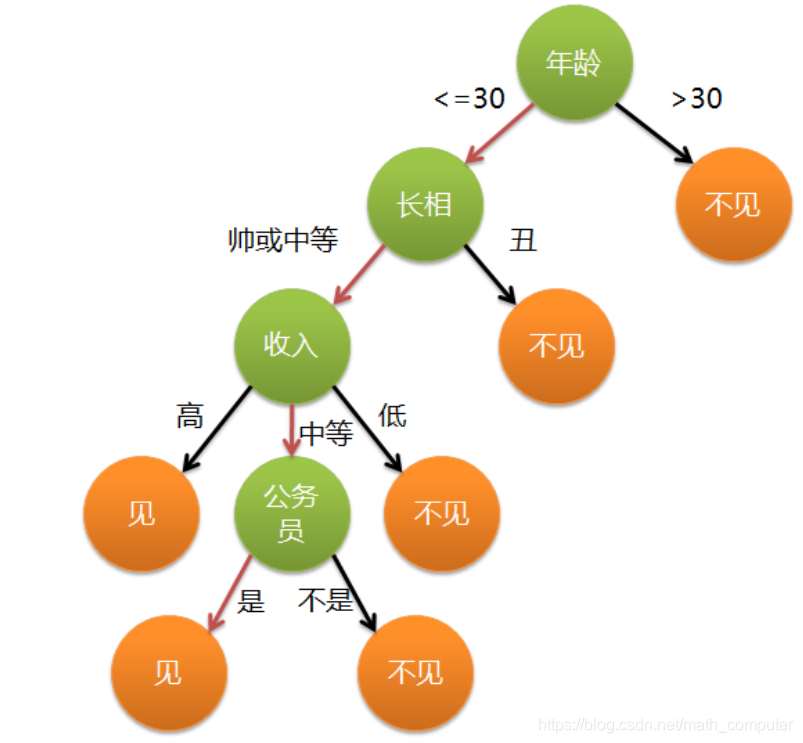

Feature selection: split first on the feature with the largest information gain.

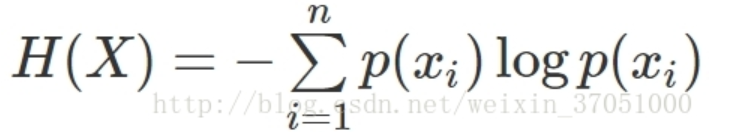

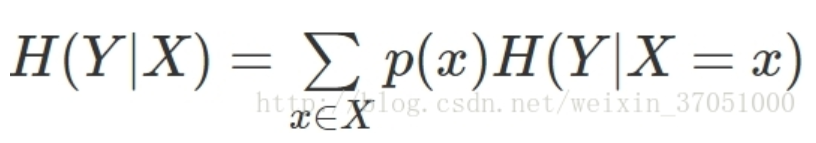

Information gain: the entropy of the labels minus their conditional entropy given the feature.
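As a minimal sketch of the definition above, the snippet below computes information gain as entropy minus conditional entropy. The function names and the toy data are illustrative assumptions, not taken from the referenced articles.

```python
from collections import Counter
from math import log2


def entropy(labels):
    """Shannon entropy (in bits) of a sequence of class labels."""
    total = len(labels)
    return -sum((n / total) * log2(n / total) for n in Counter(labels).values())


def conditional_entropy(feature_values, labels):
    """Entropy of the labels after partitioning the samples by feature value."""
    total = len(labels)
    groups = {}
    for value, label in zip(feature_values, labels):
        groups.setdefault(value, []).append(label)
    # Weight each partition's entropy by the share of samples it holds
    return sum(len(g) / total * entropy(g) for g in groups.values())


def information_gain(feature_values, labels):
    """Information gain = entropy - conditional entropy."""
    return entropy(labels) - conditional_entropy(feature_values, labels)


# Hypothetical toy data: one feature separates the classes, the other does not
labels = ["yes", "yes", "no", "no"]
perfect = ["a", "a", "b", "b"]
useless = ["a", "b", "a", "b"]

gain_perfect = information_gain(perfect, labels)
gain_useless = information_gain(useless, labels)
print(gain_perfect, gain_useless)
```

A tree builder that uses this criterion would evaluate the gain of every candidate feature and split on the one with the highest value, which here is `perfect`.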

- Gini importance of features

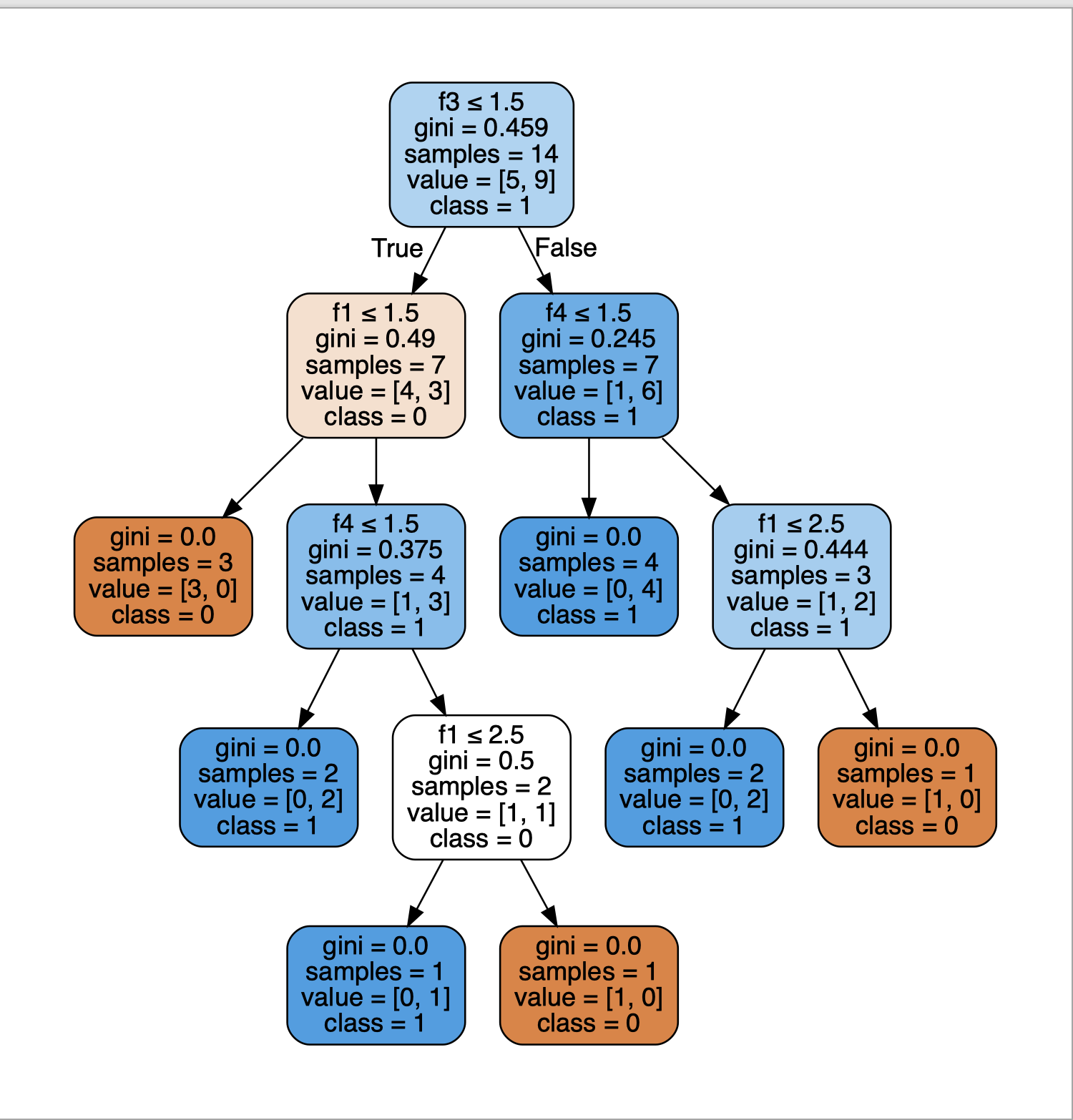

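The `feature_importances_` attribute of `sklearn.tree.DecisionTreeClassifier` returns the importance of each feature; the higher the value, the more important the feature. The official scikit-learn documentation explains it as follows: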

The importance of a feature is computed as the (normalized) total reduction of the criterion brought by that feature. It is also known as the Gini importance.
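To make the quoted definition concrete, here is a hedged sketch that recomputes the importances by hand from a fitted tree and compares them with `feature_importances_`. The iris dataset, `random_state=0`, and the variable name `manual` are assumptions chosen for illustration; the loop is one reading of the documented definition, not scikit-learn's own source code.

```python
import numpy as np
from sklearn.datasets import load_iris
from sklearn.tree import DecisionTreeClassifier

X, y = load_iris(return_X_y=True)
clf = DecisionTreeClassifier(criterion="gini", random_state=0).fit(X, y)

tree = clf.tree_
manual = np.zeros(X.shape[1])
for node in range(tree.node_count):
    left, right = tree.children_left[node], tree.children_right[node]
    if left == -1:  # leaf node: no split, so no contribution
        continue
    # Weighted impurity decrease produced by the split at this node
    decrease = (
        tree.weighted_n_node_samples[node] * tree.impurity[node]
        - tree.weighted_n_node_samples[left] * tree.impurity[left]
        - tree.weighted_n_node_samples[right] * tree.impurity[right]
    )
    manual[tree.feature[node]] += decrease

manual /= manual.sum()  # normalize so the importances sum to 1

print(manual)
print(clf.feature_importances_)
```

If the two printed arrays agree, the attribute is behaving as the documentation describes: a per-feature sum of sample-weighted impurity decreases, normalized over all features.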

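1. Compute the importance of each feature (each term below is a node's Gini impurity multiplied by the number of samples at that node)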

f1 = 0.49*7 - 0.375*4 + 0.5*2 + 0.444*3 = 4.262

f2 = 0

f3 = 0.459*14 - 0.49*7 - 0.245*7 = 1.281

f4 = 0.245*7 - 0.444*3 + 0.375*4 - 0.5*2 = 0.883

2. The total impurity reduction of this tree is 4.262 + 1.281 + 0.883 = 6.426

3. After normalization, the importance of each feature is:

f1_importance = 4.262/6.426=0.663

f2_importance = 0

f3_importance = 1.281/6.426=0.199

f4_importance = 0.883/6.426=0.137
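The three steps above can be checked with a short script. This is only a sketch: the signed `(sign, gini, n_samples)` terms are copied from the formulas in step 1, and the names `terms`, `raw`, and `importance` are illustrative.

```python
# Signed terms (sign, gini, n_samples) copied from the worked example in step 1
terms = {
    "f1": [(+1, 0.49, 7), (-1, 0.375, 4), (+1, 0.5, 2), (+1, 0.444, 3)],
    "f2": [],  # f2 is never used for a split, so it contributes nothing
    "f3": [(+1, 0.459, 14), (-1, 0.49, 7), (-1, 0.245, 7)],
    "f4": [(+1, 0.245, 7), (-1, 0.444, 3), (+1, 0.375, 4), (-1, 0.5, 2)],
}

# Step 1: unnormalized impurity reduction per feature
raw = {name: sum(s * g * n for s, g, n in ts) for name, ts in terms.items()}

# Step 2: total impurity reduction of the tree
total = sum(raw.values())

# Step 3: normalize so the importances sum to 1
importance = {name: value / total for name, value in raw.items()}

for name in terms:
    print(f"{name}: raw={raw[name]:.3f}  normalized={importance[name]:.3f}")
print(f"total={total:.3f}")
```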
